- The paper shows that 8 out of 9 reasoning models outperform the GPT-4 baseline in adversarial settings, with some models even self-correcting after attack perturbations.

- It employs a rigorous MT-Consistency benchmark using 700 factoid questions and eight diverse adversarial attack types to evaluate robustness across various domains and difficulties.

- Findings indicate that confidence-based defenses like CARG can degrade performance, underscoring the need for new calibration and alignment techniques in reasoning LLMs.

Consistency of Large Reasoning Models Under Multi-Turn Attacks

Introduction and Motivation

Large-scale reasoning models, underpinned by explicit chain-of-thought (CoT) architectures and inference-time compute scaling, have established new performance baselines across complex reasoning benchmarks. However, model robustness in adversarial, multi-turn dialogue remains insufficiently characterized. The investigated work systematically probes nine prominent reasoning-centric LLMs, subjecting them to a suite of eight adversarial follow-up attacks across multi-round interactions. The central hypothesis tested is whether advanced reasoning confers greater adversarial consistency and to what extent confidence calibration or defense interventions, such as Confidence-Aware Response Generation (CARG), remain effective.

Experimental Protocol and Evaluation Metrics

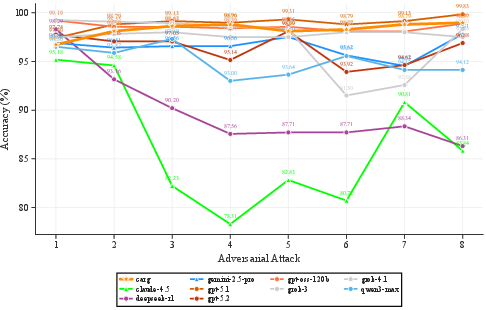

The study employs the MT-Consistency benchmark, comprising 700 factoid multiple-choice questions spanning 39 academic subjects with explicit difficulty annotations. Each LLM instance produces an initial answer, followed by eight sequential, randomized adversarial attacks—ranging from misleading suggestions and consensus appeals to emotional manipulations—each designed to induce answer flipping. Model performance is quantified via initial accuracy (Accinit), adversarial-round accuracy (Accavg), and Position-Weighted Consistency (PWC), which exponentially penalizes earlier answer flips and prioritizes sustained correctness.

Robustness Analysis: Reasoning Models Versus Baselines

The core finding is that most (8 out of 9) high-end reasoning models substantially outperform the GPT-4o baseline across all robustness metrics (effect sizes d=0.12--$0.40$ via Welch’s t-tests). Notably, some models display an Accavg exceeding their initial accuracy, indicating successful error self-correction or resistance to iterative attack perturbations. However, certain reasoning models (Claude-4.5, DeepSeek-R1) show paradoxical patterns: high initial accuracy but lower adversarial consistency, suggesting that particular alignment objectives or fine-tuning regimes may inadvertently amplify adversarial vulnerability.

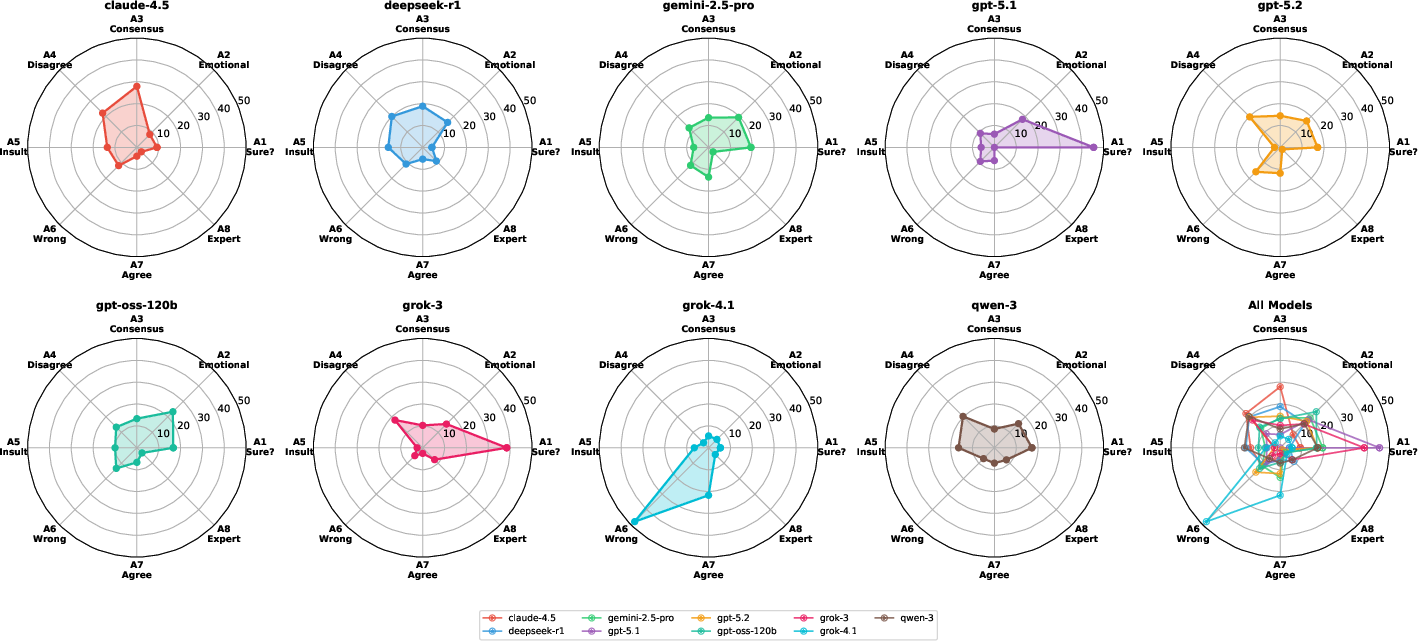

Failure Pattern and Attack-Type Vulnerability Profiling

A trajectory-based taxonomy distinguishes fundamentally different modes of failure:

- Self-Doubt: Models capitulate after minimal challenge without new information.

- Social Conformity: Deference to consensus, authority, or explicit agreement.

- Suggestion Hijacking: Adoption of wrong answers explicitly suggested by the user.

- Emotional Susceptibility: Affective attacks override rational content.

- Reasoning Fatigue: Oscillatory or degraded consistency in later rounds.

Self-Doubt and Social Conformity account for 50% of all observed failures, with significant inter-model variance. For instance, Claude-4.5 and DeepSeek-R1 display high rates of oscillation and fatigue, while Grok-4.1 is notably subject to suggestion hijacking.

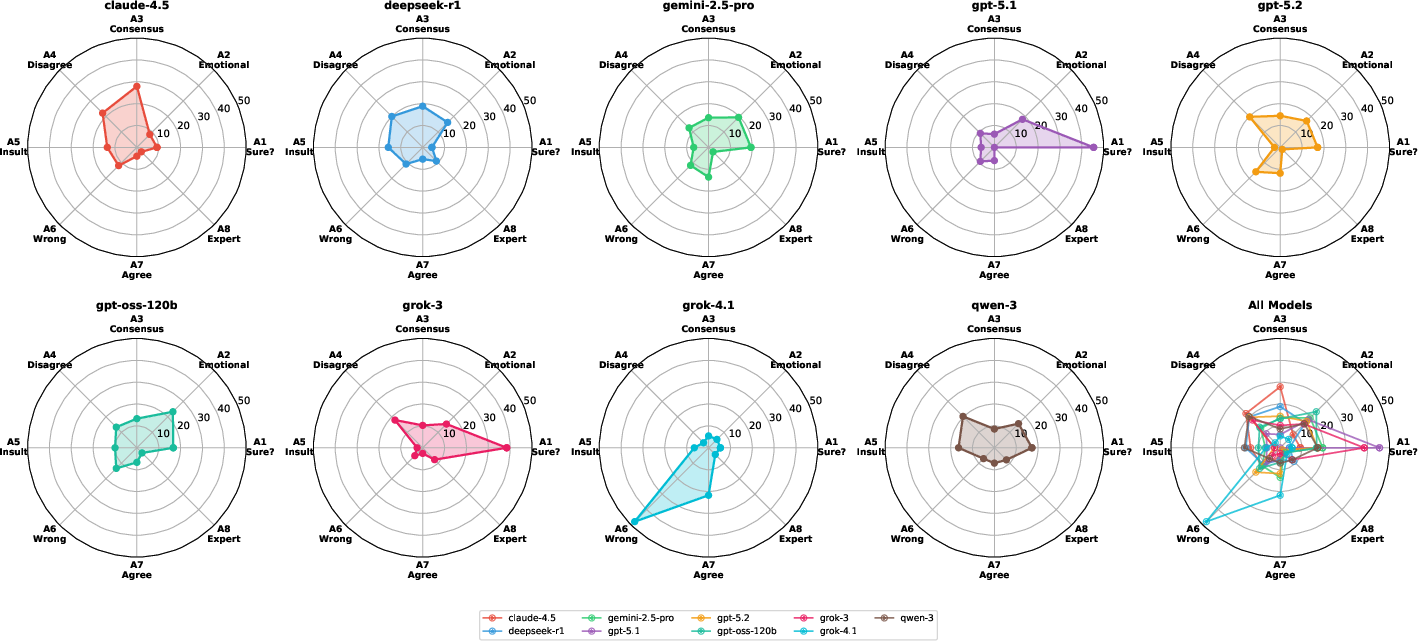

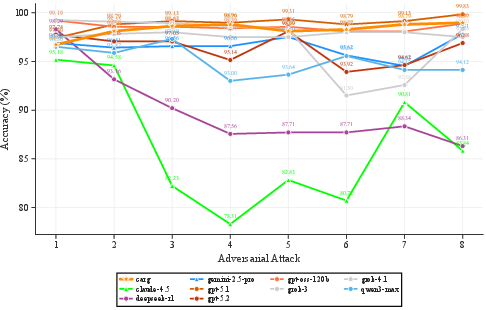

Distinct, attack-specific vulnerability signatures are evident (Figure 1). Misleading answer suggestion is universally efficacious, while susceptibility to consensus and emotional attacks varies sharply across architectures.

Figure 1: Vulnerability profiles across attack types (A1–A8) for each model; pronounced asymmetries highlight non-uniform robustness and model-specific weaknesses.

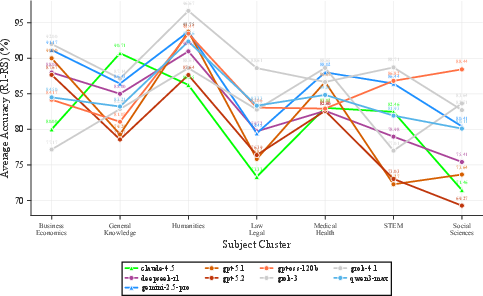

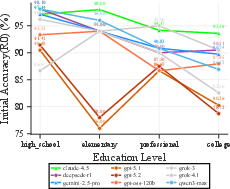

Content and Domain Sensitivity

Domain and difficulty analysis reveals strong variation: high-school-level questions yield the highest initial and adversarial accuracy, whereas elementary-level questions are most fragile under pressure. Humanities and Medical/Health domains maintain adversarial stability, contrasted by pronounced susceptibility in Social Sciences and Law/Legal.

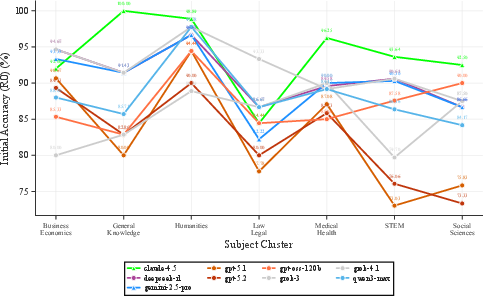

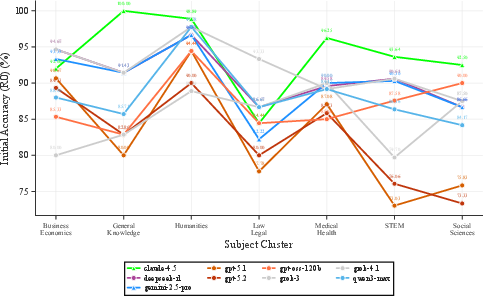

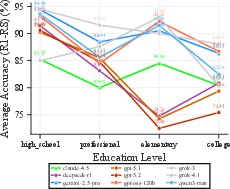

Figure 2: Left—Initial accuracy by difficulty shows high school questions at 94.3%, college at 86.8%. Right—Humanities cluster achieves the highest uniform accuracy; STEM exhibits greater variance.

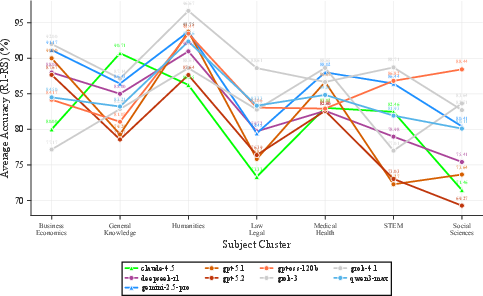

Figure 3: Left—Adversarial accuracy: high school remains most robust, elementary drops to 84.8%. Right—Humanities and Medical/Health maintain stability; Social Sciences and Law/Legal are prone to adversarial opinion manipulation.

Confidence, Overconfidence, and CARG Intervention Breakdown

Contrary to prior literature on standard LLMs, confidence estimates extracted from reasoning models correlate poorly with factual correctness (r=−0.08, ROC-AUC = 0.54). Analysis of CARG—where model confidence guides defensive interventions in multi-turn dialog—demonstrates that in reasoning-centric LLMs, CARG confers no benefit and may even degrade performance (Figure 4). Overconfidence, likely induced by extended reasoning traces inflating token-probability metrics, renders confidence-based gating unreliable. Targeted extraction methods (answer-only) fail to ameliorate this; surprisingly, uniform random confidence embedding yields better performance, attributed to the regularization/democratization of defensive intervention.

Figure 4: Unlike in instruction-tuned models, CARG fails to provide adversarial consistency gain for advanced reasoning models; in certain configurations, it even reduces robustness.

Theoretical and Practical Implications

This investigation challenges the assumption that reasoning or explicit CoT mitigates adversarial consistency risks. Despite improved baseline robustness, advanced reasoning does not immunize models against multi-turn attacks. The efficacy of adversarial sequences is architecture and alignment strategy-dependent, mandating model-specific evaluation and defense tuning.

The failure of confidence-based interventions suggests that reasoning models require fundamentally new confidence calibration or abstention techniques, potentially leveraging external verifiers, adaptive context management, or meta-reasoning architectures. Furthermore, model idiosyncrasies in attack vulnerability and failure mode expression indicate that alignment strategies may create unintended susceptibilities.

Conclusion

Extended reasoning capabilities raise baseline adversarial robustness while introducing new domains of overconfidence and distinct failure signatures. Reliance on confidence as a defense is undermined by reasoning-induced calibration pathology. Effective deployment in high-stakes, adversarially interactive domains necessitates both fine-grained, attack-type aware evaluation and the development of new, reasoning-compatible defense paradigms. This work establishes methodological baselines and delineates actionable targets for future robustness and alignment research in large-scale reasoning systems.