Gaussian Mesh Renderer for Lightweight Differentiable Rendering

Abstract: 3D Gaussian Splatting (3DGS) has enabled high-fidelity virtualization with fast rendering and optimization for novel view synthesis. On the other hand, triangle mesh models still remain a popular choice for surface reconstruction but suffer from slow or heavy optimization in traditional mesh-based differentiable renderers. To address this problem, we propose a new lightweight differentiable mesh renderer leveraging the efficient rasterization process of 3DGS, named Gaussian Mesh Renderer (GMR), which tightly integrates the Gaussian and mesh representations. Each Gaussian primitive is analytically derived from the corresponding mesh triangle, preserving structural fidelity and enabling the gradient flow. Compared to the traditional mesh renderers, our method achieves smoother gradients, which especially contributes to better optimization using smaller batch sizes with limited memory. Our implementation is available in the public GitHub repository at https://github.com/huntorochi/Gaussian-Mesh-Renderer.

Explain it Like I'm 14

Overview

This paper introduces a new way to make and improve 3D models from images, called the Gaussian Mesh Renderer (GMR). It blends two worlds:

- Triangle meshes (the classic way to represent 3D surfaces),

- And “Gaussian splatting” (a newer, fast method that renders scenes as lots of soft, fuzzy blobs).

The main idea: turn every triangle in a mesh into a thin, flat “Gaussian” (think of a very flat, smooth paint splat) so rendering stays fast and provides smooth feedback for learning. This helps computers adjust a 3D shape to match pictures, even on devices with limited memory.

Goals and Questions

The paper asks:

- How can we make mesh-based rendering “differentiable” (easy to learn from mistakes) without heavy, slow computation?

- Can we get smoother, more useful learning signals (called gradients) across the whole triangle, not just at its edges?

- Can this work well with small batch sizes (processing only a few images at a time), which is important for phones or small GPUs?

How It Works (in simple terms)

Think of a 3D model as a set of tiny, flat triangles (like a puzzle). Traditional rendering decides which pixels each triangle covers using sharp, binary decisions: inside or outside. That’s fast to display but not great for learning because the sharp edges don’t tell you how to move the triangle smoothly to improve a picture.
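
To make the contrast concrete, here is a minimal sketch that compares a hard inside/outside test with a smooth Gaussian falloff for a single pixel. The function names, the 1D setup, and the bandwidth `sigma` are illustrative assumptions and do not come from the paper or from any particular renderer.

```python
import math

def hard_coverage(x, edge):
    """Binary test: 1.0 if the pixel center lies left of the edge, else 0.0."""
    return 1.0 if x < edge else 0.0

def soft_coverage(x, center, sigma):
    """Gaussian falloff around a primitive center (sigma is an assumed bandwidth)."""
    return math.exp(-0.5 * ((x - center) / sigma) ** 2)

def finite_diff(f, p, h=1e-4):
    """Central finite difference of f with respect to its parameter p."""
    return (f(p + h) - f(p - h)) / (2.0 * h)

pixel = 0.3  # pixel center well inside the primitive, far from its edge at 1.0

# How does the coverage at this pixel react when the primitive moves slightly?
hard_grad = finite_diff(lambda e: hard_coverage(pixel, e), 1.0)
soft_grad = finite_diff(lambda c: soft_coverage(pixel, c, sigma=0.5), 0.0)

print("hard gradient:", hard_grad)  # 0.0: no learning signal away from the edge
print("soft gradient:", soft_grad)  # nonzero: indicates which way to move
```

With the hard test, only pixels that an edge actually crosses can change, so most pixels give no hint about how to move the triangle. The smooth falloff gives every nearby pixel a small, usable signal.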

This paper’s trick is to turn each triangle into a “Gaussian”—a smooth blob that:

- Lives exactly on the triangle’s plane,

- Has the right size and orientation to match the triangle,

- Is very thin in the direction perpendicular to the triangle (almost like a paper-thin jelly pancake),

- Carries the triangle’s color.

Key steps (explained like everyday actions):

- Find a local coordinate system for each triangle (like choosing “left” and “up” directions on that triangle).

- Compute how the triangle’s area is spread in that local space (its “covariance,” which you can imagine as how the paint splat spreads out).

- Build a flat 3D Gaussian using that shape, keeping it aligned with the triangle.

- Render all these Gaussians using a fast “splatting” engine that is already good at handling lots of blobs efficiently.

- During training, compare the rendered image to the real photo, and use the “gradient” (a gentle nudge telling you how to change the model) to adjust the mesh.
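
The conversion steps above can be sketched in plain Python. This is a hedged illustration, not the authors' code: it assumes the in-plane covariance is the second moment of a uniformly filled triangle, rescaled so that the area of the 1-sigma ellipse equals the triangle area, and it uses the thickness `s_z = 1e-6` mentioned later on this page. The helper names are hypothetical, and the sketch omits any stabilization for degenerate triangles.

```python
import math

def sub(a, b): return [a[i] - b[i] for i in range(3)]
def dot(a, b): return sum(a[i] * b[i] for i in range(3))
def mul(a, s): return [x * s for x in a]
def cross(a, b):
    return [a[1]*b[2] - a[2]*b[1], a[2]*b[0] - a[0]*b[2], a[0]*b[1] - a[1]*b[0]]
def normalize(a): return mul(a, 1.0 / math.sqrt(dot(a, a)))

def triangle_to_gaussian(v0, v1, v2, s_z=1e-6):
    """Return (mean, axes, scales) of a flat Gaussian for one triangle.

    Assumed recipe (not confirmed by the paper): uniform-triangle covariance,
    rescaled so that pi * s1 * s2 equals the triangle area.
    """
    mean = [(v0[i] + v1[i] + v2[i]) / 3.0 for i in range(3)]

    # Local orthonormal frame on the triangle plane via Gram-Schmidt.
    e1, e2 = sub(v1, v0), sub(v2, v0)
    u = normalize(e1)
    w = normalize(sub(e2, mul(u, dot(e2, u))))
    n = cross(u, w)  # unit normal, completes a right-handed frame
    nvec = cross(e1, e2)
    area = 0.5 * math.sqrt(dot(nvec, nvec))

    # 2D covariance [[a, b], [b, c]] of a uniform triangle in (u, w) coordinates.
    pts = [(dot(sub(v, mean), u), dot(sub(v, mean), w)) for v in (v0, v1, v2)]
    a = sum(p[0] * p[0] for p in pts) / 12.0
    b = sum(p[0] * p[1] for p in pts) / 12.0
    c = sum(p[1] * p[1] for p in pts) / 12.0

    # Closed-form eigen decomposition of the symmetric 2x2 matrix.
    half_tr = 0.5 * (a + c)
    root = math.sqrt((0.5 * (a - c)) ** 2 + b * b)
    lam1, lam2 = half_tr + root, half_tr - root
    theta = 0.5 * math.atan2(2.0 * b, a - c)  # angle of the major axis

    # Rescale so the 1-sigma ellipse area matches the triangle area.
    k = area / (math.pi * math.sqrt(lam1 * lam2))
    s1, s2 = math.sqrt(k * lam1), math.sqrt(k * lam2)

    # Rotate the in-plane axes by theta; the third axis is the normal.
    ct, st = math.cos(theta), math.sin(theta)
    axis1 = [ct * u[i] + st * w[i] for i in range(3)]
    axis2 = [-st * u[i] + ct * w[i] for i in range(3)]
    return mean, (axis1, axis2, n), (s1, s2, s_z)

mean, axes, scales = triangle_to_gaussian([0, 0, 0], [1, 0, 0], [0, 1, 0])
print(mean, axes[2], scales)
```

The three axes form the columns of a rotation matrix R, and the usual 3DGS covariance would then be R diag(s1², s2², s_z²) Rᵀ. Because every step is a smooth function of the vertex positions (for non-degenerate triangles), gradients from the rendered image can reach the mesh vertices.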

Helpful analogies:

- Differentiable rendering: like getting soft, continuous feedback instead of “yes/no” so you can make fine, steady improvements.

- Gaussian splatting: like painting with many small, soft airbrush dots that blend smoothly.

- Gradient: a hint telling you which direction to move triangle corners to make the rendered picture look more like the real one.

Main Findings

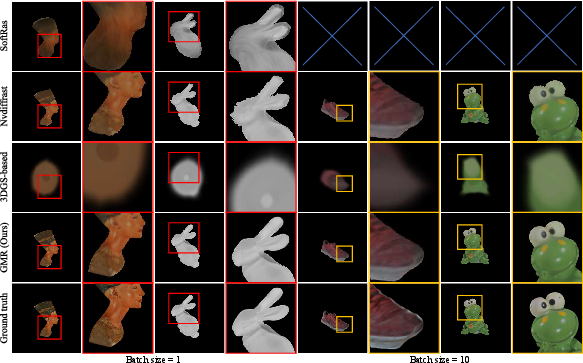

In tests across 17 different 3D objects and two training setups (using 1 image at a time vs. 10 images at a time), GMR:

- Reconstructed shapes more accurately than popular alternatives (both classic mesh renderers like SoftRas and Nvdiffrast, and simple Gaussian-based baselines).

- Produced smoother gradients across triangles, which made learning more stable—especially when using small batches, which is common on memory-limited devices.

- Used less memory than Nvdiffrast in the mini-batch setup and was faster than SoftRas when using batch size 1.

- Stayed robust even when the starting mesh was off-center (it still converged to the right shape).

Why this matters:

- Smoother gradients mean the model can reliably improve from image comparisons, reducing artifacts like jagged edges or broken shapes.

- Better performance with small batches makes this suitable for mobile or low-memory setups.

Limitations:

- For very large batch sizes, a highly optimized GPU renderer like Nvdiffrast can still be faster and even more accurate. The authors suggest moving some parts of their method to the GPU (CUDA) in the future to speed things up.

Implications and Impact

GMR makes it easier and lighter to train 3D mesh models from images:

- It can help create accurate 3D shapes for apps like AR/VR, games, and digital twins on everyday hardware.

- Because meshes are standard and work well with physics and editing tools, this approach bridges the gap between fast, modern rendering (Gaussian splatting) and practical, editable 3D geometry.

- With smoother learning and lower memory use, it’s promising for mobile devices and real-time applications.

The authors have shared their implementation on GitHub, which can help researchers and developers build on this idea quickly.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of what remains missing, uncertain, or unexplored in the paper, phrased to guide concrete follow-up research:

- GPU implementation and throughput: The mesh-to-Gaussian conversion runs on the CPU; no CUDA/fused kernels are provided. Quantify per-iteration time breakdown (conversion vs. rasterization), implement a GPU kernel, and re-benchmark throughput and scalability.

- Large-batch regime performance: The method underperforms Nvdiffrast for batch sizes >50; the trade-off curve across batch sizes is not characterized. Provide systematic batch-scaling experiments (e.g., 1–256) and optimize for high-throughput training.

- Fixed topology assumption: GMR assumes fixed mesh connectivity. Explore differentiable remeshing, adaptive refinement/coarsening, and topology-changing operations (splits/merges) compatible with the Gaussian representation.

- Appearance model minimality: All Gaussians are fully opaque (o=1) and colored by per-triangle averaged RGB under a Lambertian assumption. Integrate and evaluate:

  - UV texture sampling (to avoid losing high-frequency detail),

  - view-dependent BRDFs via spherical harmonics,

  - PBR material parameters (normal/roughness/metallic maps),

  - and shadows/reflections under differentiable lighting.

- Visibility and occlusion fidelity for opaque surfaces: 3DGS’s alpha compositing is not analyzed for z-buffer equivalence; potential blending artifacts near occluding boundaries are unexamined. Compare against strict z-buffer ground truth and investigate depth-testing-like compositing within GMR.

- Silhouette sharpness and edge leakage: Matching the triangle area to the Gaussian 1-sigma ellipse may extend support beyond the triangle boundary, potentially blurring silhouettes or bleeding colors across edges. Ablate the scaling choice (e.g., k-sigma area matching, per-triangle bandwidth control) and measure effects on edge accuracy.

- Sensitivity to s_z and bandwidth choices: The flatness parameter is fixed at s_z=1e-6 without justification. Analyze stability and gradient quality versus s_z and in-plane bandwidths, especially for glancing angles and thin structures.

- Numerical robustness for degenerate/sliver triangles and non-manifold meshes: Although ε-stabilization is used, failure modes under extreme aspect ratios, zero-area facets, and non-manifold connectivity are not evaluated. Provide stress tests and preprocessing recommendations.

- Scalability with mesh resolution and scene complexity: Experiments use ~40K facets and single-object scenes. Benchmark accuracy, memory, and speed at 10×–100× triangle counts, multi-object scenes, and large indoor/outdoor environments.

- Real-world evaluation and generalization: All views are synthetically rendered with known cameras and clean silhouettes. Evaluate on real images with:

  - unknown/estimated lighting,

  - pose noise,

  - background clutter and segmentation errors,

  - and photometric noise; consider joint optimization of cameras and lighting.

- Texture seams and UV continuity: Per-triangle constant color discards texture detail and may ignore seam handling. Develop texture-aware GMR that samples UV per-pixel/splat and preserves seam continuity without bleeding.

- Backface culling and two-sided materials: The paper does not specify how backfaces are handled (culled vs. rendered). Study the impact on visibility, gradients, and convergence for thin shells and two-sided materials.

- Support beyond triangles: The conversion is defined for triangles only. Extend and validate GMR for quads/ngons, subdivision surfaces, and implicit/spline surfaces via appropriate Gaussian approximations.

- Gradient field analysis: The claim of “smoother gradients” is empirical; no theory or diagnostic analysis is provided. Characterize the gradient distribution (e.g., spatial extent, magnitude spectra), convergence basins, and compare with SoftRas/Nvdiffrast analytically and via controlled experiments.

- Baseline breadth and fairness: Comparisons omit stronger mesh-aligned 3DGS methods (e.g., SuGaR, MeshGS) in an optimization setting and do not explore hyperparameter tuning of SoftRas/Nvdiffrast (e.g., softness, coverage). Add these baselines and tuned variants for a fuller picture.

- Multi-object and complex visibility: Experiments focus on isolated objects. Test scenes with multiple inter-occluding objects, depth sorting across instances, and per-object compositing.

- Dynamic/animated meshes: GMR is evaluated on static geometry only. Extend to time-varying meshes with temporal regularization and assess stability and drift across frames.

- Profiling and memory analysis: Only headline memory reductions are reported. Provide detailed memory/time profiles (conversion, rasterization, autograd), and explore memory-saving strategies (e.g., mixed precision, checkpointing).

- Camera model generality: The camera model and projection specifics are not detailed. Validate GMR with orthographic, fisheye, rolling-shutter, and panoramic cameras; differentiate w.r.t. intrinsics/extrinsics.

- Anti-aliasing and prefiltering: How GMR handles aliasing across scales and high-resolution rendering is not studied. Compare prefiltering strategies, splat footprint control, and multi-scale rendering quality.

- Robustness to silhouette supervision noise: Silhouette BCE assumes clean masks. Quantify sensitivity to mask errors and investigate robust silhouette losses or uncertainty-aware masks.

- Regularization ablation: Edge-length and Laplacian losses are fixed across experiments; their influence on detail preservation vs. over-smoothing is not analyzed. Perform ablations and explore curvature-aware or feature-preserving regularizers.

- Reproducibility scope: While code is released, the paper lacks details on dataset splits, exact object list, and training scripts/configs for baselines. Provide complete reproducibility materials and seeds for fair verification.
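
The visibility and silhouette items in the list above can be illustrated with the standard front-to-back compositing rule used in Gaussian splatting, where each splat contributes its color weighted by its alpha and by the transmittance left over from the splats in front of it. The sketch below is a simplified single-pixel, single-channel version with hypothetical function names. Real rasterizers such as gsplat may add details, such as alpha clamping or thresholds, that are not modeled here.

```python
import math

def gaussian_alpha(opacity, mahalanobis_dist):
    """Per-pixel alpha of one splat: opacity times the Gaussian falloff."""
    return opacity * math.exp(-0.5 * mahalanobis_dist ** 2)

def composite(splats, background):
    """Front-to-back alpha compositing of depth-sorted (color, alpha) pairs."""
    color, transmittance = 0.0, 1.0
    for c, alpha in splats:
        color += transmittance * alpha * c
        transmittance *= 1.0 - alpha
    return color + transmittance * background

# One fully opaque (o = 1) white splat in front of a black background,
# sampled at the center, at the 1-sigma contour, and at the 2-sigma contour.
for k in (0.0, 1.0, 2.0):
    alpha = gaussian_alpha(1.0, k)
    pixel = composite([(1.0, alpha)], background=0.0)
    print(f"{k:.0f}-sigma: alpha={alpha:.3f}, pixel={pixel:.3f}")
# 0-sigma: alpha=1.000, pixel=1.000
# 1-sigma: alpha=0.607, pixel=0.607
# 2-sigma: alpha=0.135, pixel=0.135
```

Even with opacity 1, the splat only blocks what lies behind it completely at its center. At the 1-sigma contour about 39% of the background still shows through, and the splat keeps contributing beyond that contour. A strict z-buffer has neither effect, which is why the equivalence and the edge-leakage questions above are worth measuring.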

Practical Applications

Immediate Applications

The following applications can be deployed with the current GMR implementation and commodity GPUs. Each item notes sector(s), potential tools/workflows, and key assumptions or dependencies impacting feasibility.

- Lightweight inverse rendering for mesh optimization on small GPUs

  - Sectors: software/ML research, computer vision, graphics

  - What: Drop-in replacement for SoftRas-style differentiable mesh renderers in PyTorch pipelines; use GMR’s smoother gradients to stabilize optimization with small batch sizes (1–10) and limited memory

  - Tools/workflows: PyTorch + gsplat-based training loops; existing losses (color, silhouette, edge length, Laplacian); start-from-sphere initialization; export to standard mesh formats (OBJ/GLTF)

  - Assumptions/dependencies: Fixed mesh topology; accurate camera intrinsics/extrinsics; Lambertian base color; opaque surfaces; CPU-side mesh-to-Gaussian conversion (current implementation), GPU preferred for throughput

- On-device or edge-friendly photogrammetry for e-commerce and content creation

  - Sectors: retail/e-commerce, media/entertainment, AR/VR

  - What: Memory-efficient mesh reconstruction from multi-view images or short capture sessions, suitable for laptops and high-end phones/tablets; generate product meshes for catalogs, virtual try-on, and XR assets

  - Tools/workflows: Capture video/images → camera calibration → GMR-based optimization → texture baking/export; integrate as a plug-in in Blender or Unity asset pipelines

  - Assumptions/dependencies: Adequate multi-view coverage; stable calibration; good lighting and limited translucency/specularity; performance depends on device GPU; current speed may be slower than Nvdiffrast for large batches

- Robotics and autonomous systems: object-level reconstruction for manipulation and perception

  - Sectors: robotics

  - What: Offline reconstruction of object meshes from robot camera logs using small batches, enabling accurate shape models for grasp planning, collision checking, and simulation

  - Tools/workflows: Dataset ingestion → GMR inverse rendering → export to simulation engines (Gazebo, Isaac, PyBullet)

  - Assumptions/dependencies: Fixed topology meshes suffice for most manipulation targets; visibility constraints and occlusions handled via silhouette/color losses; real-time operation requires further optimization

- Visual effects and match-move: rapid asset recovery from plates

  - Sectors: media/entertainment

  - What: Fast, memory-efficient mesh recovery for scene elements from limited camera viewpoints; improves boundary quality and reduces jagged artifacts versus traditional rasterizers

  - Tools/workflows: Plate selection → camera solve → GMR optimization → mesh export and lookdev

  - Assumptions/dependencies: Opaque objects with Lambertian approximation are preferred initially; for glossy/metallic assets, view-dependent materials require extension (see long-term)

- Education and prototyping in differentiable rendering and inverse graphics

  - Sectors: education, academia

  - What: A simple, open-source renderer to teach and prototype differentiable mesh optimization methods; demonstrate gradient behavior improvements compared to SoftRas/Nvdiffrast

  - Tools/workflows: Classroom notebooks and assignments; reproducible experiments on common 3D test models; rapid ablations of loss terms and batch sizes

  - Assumptions/dependencies: Students require basic GPU access; familiarity with PyTorch; fixed topology constraints in initial exercises

- Digital twin calibration and asset alignment

  - Sectors: industrial simulation, manufacturing

  - What: Adjust existing CAD or mesh assets to match real imagery (e.g., site photos), refining geometry under limited hardware and small batches

  - Tools/workflows: Initial CAD mesh → multi-view capture → GMR optimization → alignment validation with NC/CD metrics

  - Assumptions/dependencies: Fixed topology meshes; rigid components favored; complex materials may need PBR integration later

- Privacy-preserving local 3D reconstruction

  - Sectors: policy/privacy, consumer apps

  - What: Perform on-device reconstruction to avoid uploading imagery to cloud services; leverage GMR’s lower memory footprint to reduce energy and data transfer

  - Tools/workflows: Mobile app local pipeline; export/share meshes without raw image uploads

  - Assumptions/dependencies: Sufficient device GPU; minimal data retention; battery/thermal constraints
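
Several of the workflows above rely on the same small set of losses. As a rough illustration, the sketch below implements two of them in dependency-free Python: a silhouette binary cross-entropy and an edge-length regularizer. The exact formulations and weights used by the paper are not given on this page, so the function names and the choice of mean squared edge length are assumptions. In a real pipeline these would be tensor operations inside a PyTorch training loop.

```python
import math

def silhouette_bce(pred_mask, gt_mask, eps=1e-7):
    """Mean binary cross-entropy between a rendered mask and a ground-truth mask.

    Both masks are flat lists of per-pixel values in [0, 1].
    """
    total = 0.0
    for p, g in zip(pred_mask, gt_mask):
        p = min(max(p, eps), 1.0 - eps)  # clamp so that log() stays finite
        total += -(g * math.log(p) + (1.0 - g) * math.log(1.0 - p))
    return total / len(pred_mask)

def edge_length_loss(vertices, faces):
    """Mean squared length over the unique edges of a triangle mesh."""
    edges = set()
    for i, j, k in faces:
        for a, b in ((i, j), (j, k), (k, i)):
            edges.add((min(a, b), max(a, b)))  # store each shared edge once
    squared = [
        sum((vertices[a][d] - vertices[b][d]) ** 2 for d in range(3))
        for a, b in edges
    ]
    return sum(squared) / len(squared)

# Toy data: a unit square split into two triangles, and a two-pixel mask.
verts = [[0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 0]]
faces = [(0, 1, 2), (1, 3, 2)]
sil = silhouette_bce([0.9, 0.1], [1.0, 0.0])
reg = edge_length_loss(verts, faces)
print(sil, reg)
```

A total objective would typically be a weighted sum of the color loss, the silhouette loss, and the regularizers. The weights are tuning choices that this page does not specify.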

Long-Term Applications

The following applications require further research, engineering, or scaling—especially GPU-side acceleration of mesh-to-Gaussian conversion, support for complex materials, or real-time streaming.

- Real-time, on-device 3D capture and reconstruction for XR

  - Sectors: AR/VR, mobile software

  - What: Streamed multi-view capture with online GMR optimization to produce meshes in near real-time; enable live object insertion in AR scenes

  - Tools/products: “GMR Lite” mobile SDK; ARKit/ARCore integration; Unity/Unreal plugins

  - Dependencies: CUDA/Metal/Vulkan kernels for conversion and rasterization; incremental/streaming optimizers; thermal management on mobile; camera tracking robustness

- Differentiable physics and control with mesh-based gradients

  - Sectors: robotics, engineering design

  - What: Use GMR to bridge image-based objectives to mesh geometry, then propagate gradients into physical simulation (e.g., shape optimization for manipulability, aerodynamic properties)

  - Tools/workflows: GMR + differentiable physics engines; joint optimization of geometry and control parameters

  - Dependencies: Accurate physical models; stable differentiable visibility across occlusions; tight integration of rendering and physics; potential need for topology changes (not supported yet)

- Healthcare: reconstruction from endoscopy or limited-view medical imaging

  - Sectors: healthcare

  - What: Optimize anatomical surface meshes from sparse, noisy views for surgical planning or navigation, leveraging small-batch stability and lower memory usage

  - Tools/workflows: Endoscope video + calibration → GMR optimization → mesh export to surgical planning systems

  - Dependencies: Regulatory compliance; handling specular highlights, translucency, and non-Lambertian tissues; domain-specific losses and priors; real-time constraints in OR settings

- Industrial inspection and metrology from multi-view imagery

  - Sectors: manufacturing, quality assurance

  - What: Reconstruct precise meshes of parts to detect defects or deviations from CAD using image-based pipelines and GMR’s smoother gradients

  - Tools/workflows: Multi-view capture rigs → GMR reconstruction → automated comparison against CAD models (CD/NC metrics)

  - Dependencies: High geometric fidelity; error bounds and certification; materials with complex reflectance require PBR support and spherical harmonics color modeling

- Full PBR/material modeling and view-dependent appearance within GMR

  - Sectors: graphics, gaming, VFX

  - What: Extend GMR’s color handling to spherical harmonics and PBR material parameters for realistic novel-view rendering and inverse material estimation

  - Tools/workflows: Material parameter optimization; shader integration; texture/material baking workflows

  - Dependencies: Accurate lighting models; differentiable BSDFs; robust visibility handling; potential performance trade-offs

- Large-scale batch training and dataset generation for learned geometry models

  - Sectors: ML, dataset curation

  - What: Use accelerated GMR in large-batch regimes to generate training data (meshes, renderings, normals) or to supervise geometry networks with image-based losses

  - Tools/workflows: Distributed pipelines; cloud GPU clusters; curriculum learning for inverse graphics

  - Dependencies: GPU-side conversion; scheduler-aware rasterization; improved throughput (currently Nvdiffrast is faster at large batches)

- Consumer-grade 3D printing and personalization from phone captures

  - Sectors: consumer apps, maker ecosystem

  - What: Capture an object with a phone and reconstruct a watertight mesh suitable for 3D printing; perform lightweight refinements on-device

  - Tools/workflows: Phone capture → GMR reconstruction → mesh repair/simplification → slicer export

  - Dependencies: Watertightness and manufacturability checks; topology editing (not in scope yet); support for thin structures and non-opaque materials

- Energy-efficient edge AI standards and privacy policy alignment

  - Sectors: policy/standards, sustainability

  - What: Promote local 3D reconstruction standards that reduce compute, bandwidth, and data sharing; define benchmarks for energy savings with lightweight differentiable rendering

  - Tools/workflows: Policy frameworks; green AI metrics; secure on-device pipelines

  - Dependencies: Industry buy-in; standardized evaluation suites; device capability baselines

- CAD-aware inverse design and shape optimization

  - Sectors: engineering, product design

  - What: Iteratively update design meshes to meet image-based criteria (aesthetics/visibility) or measured appearance, while integrating CAD constraints

  - Tools/workflows: CAD → mesh → GMR optimization → parameter updates back to CAD

  - Dependencies: Differentiable link to CAD parameters; topology changes; multi-object constraints; high-fidelity photometric modeling

Notes on cross-cutting assumptions and dependencies:

- Current GMR assumes fixed mesh topology and nearly planar Gaussians (s_z ≈ 0); topology changes and volumetric effects are out of scope.

- Opaque, Lambertian approximation is used; complex reflectance needs spherical harmonics/PBR extensions.

- Camera calibration quality and sufficient multi-view coverage are critical for reliable reconstruction.

- The mesh-to-Gaussian conversion is not yet GPU-accelerated; large-batch performance lags behind Nvdiffrast.

- Mobile and edge deployments must consider thermal limits, memory caps, and real-time latency requirements.

Glossary

- 1-sigma ellipse: The contour enclosing one standard deviation of a 2D Gaussian, used to match area with a triangle. "the area of the Gaussian's $1$-sigma ellipse, "

- 3D Gaussian Splatting (3DGS): A rendering technique that represents scenes with 3D Gaussians for efficient, differentiable rasterization. "3D Gaussian Splatting (3DGS) has enabled high-fidelity virtualization with fast rendering and optimization for novel view synthesis."

- anisotropic Gaussians: Gaussians whose spread varies with direction, modeled by non-uniform covariance. "By representing a scene as a collection of anisotropic Gaussians, 3DGS supports parallel rasterization and provides analytical gradients"

- barycentric coordinates: A coordinate system expressing any point inside a triangle as a weighted combination of its vertices. "we can represent any point inside the triangle using barycentric coordinates with "

- chamfer distance (CD): A metric measuring geometric discrepancy between two surfaces or point sets. "We compare geometric accuracy after optimization using chamfer distance (CD) between the predicted and the ground-truth surfaces"

- covariance matrix: A matrix capturing the variance and covariance of a Gaussian’s spread. "The 2D covariance matrix in the local coordinate system is then constructed as:"

- DC component: The constant (direct) term of a signal; here, the view-independent RGB color of a Gaussian. "represented as its RGB direct component (DC) and view-dependent spherical harmonics coefficients."

- differentiable rendering: Rendering that allows gradient computation through the image formation process for optimization. "Differentiable rendering, 3D reconstruction, Gaussian splatting."

- edge-crossing: A coverage computation technique in rasterization based on detecting crossings of triangle edges between pixels. "computing coverage based on edge-crossing between neighboring pixels."

- eigen decomposition: Factoring a matrix into eigenvalues and eigenvectors to analyze principal directions. "denote the eigen decomposition of the 2D covariance matrix as"

- Gaussian Mesh Renderer (GMR): The proposed lightweight differentiable mesh renderer integrating Gaussian and mesh representations. "named Gaussian Mesh Renderer (GMR)"

- Gaussian primitive: An individual Gaussian entity used as a rendering primitive in 3DGS/GMR. "Each Gaussian primitive is analytically derived from the corresponding mesh triangle"

- Gram-Schmidt orthogonalization: A method to produce orthonormal vectors from a set of vectors. "in practice, it can be computed via Gram-Schmidt orthogonalization using ."

- gradient flow: The propagation of gradients from rendered outputs back to model parameters during optimization. "preserving structural fidelity and enabling the gradient flow."

- gsplat: An open-source rasterizer library used for Gaussian splatting. "uses the gsplat rasterizer"

- hard step function: A non-differentiable binary function used in traditional rasterization edge tests. "commonly implemented using a hard step function"

- Laplacian smoothing loss: A mesh regularization loss that promotes smooth surfaces by minimizing local geometric distortion. "Laplacian smoothing loss promotes mesh smoothness by minimizing local geometric distortion."

- Lambertian surfaces: Surfaces that reflect light diffusely, independent of view direction. "assuming Lambertian surfaces for simplicity"

- LPIPS: A learned perceptual image similarity metric comparing patches for visual quality assessment. "i.e, PSNR, SSIM, and LPIPS."

- local orthonormal coordinate system: A right-handed frame with orthonormal axes defined on a mesh facet. "we define a local orthonormal coordinate system embedded on the facet plane."

- mesh topology: The fixed connectivity structure of vertices and faces in a mesh. "which assumes a fixed mesh topology"

- normal consistency (NC): A metric that measures alignment of surface normals between predicted and ground-truth geometry. "and normal consistency (NC) measuring the angle deviation between corresponding surface normals."

- novel view synthesis: Generating images of a scene from unseen camera viewpoints. "optimization for novel view synthesis."

- Nvdiffrast: A GPU-efficient differentiable rasterization library by NVIDIA. "Nvdiffrast, computing coverage based on edge-crossing between neighboring pixels."

- opacity: A scalar controlling transparency; 1 denotes fully opaque. "we set the opacity for all Gaussians"

- physically-based rendering (PBR): Rendering models based on physical light-material interactions. "using mesh's physically-based rendering (PBR) materials"

- positive semi-definite: A matrix property indicating all eigenvalues are non-negative. "is guaranteed to be positive semi-definite."

- PSNR: Peak Signal-to-Noise Ratio, an image reconstruction fidelity metric. "i.e, PSNR, SSIM, and LPIPS."

- rasterization: Converting geometric primitives into pixel coverage for image formation. "leveraging the efficient rasterization process of 3DGS"

- silhouette loss: A loss measuring mismatch between rendered and ground-truth object masks via binary cross-entropy. "Silhouette loss measures binary cross-entropy between the rendered mask and the ground-truth object silhouette."

- Soft Rasterizer (SoftRas): A differentiable mesh renderer that replaces hard edges with smooth probabilities. "Soft Rasterizer (SoftRas) replaces hard step functions of mesh boundary with smooth probability functions"

- SO(3): The group of 3D rotation matrices (special orthogonal group).

- spherical harmonics: A basis for representing view-dependent appearance in Gaussians. "view-dependent spherical harmonics coefficients."

- splatting: Projecting and blending primitives (e.g., Gaussians) onto the image plane during rendering. "in a differentiable splatting process"

- SSIM: Structural Similarity Index, an image quality metric assessing structural fidelity. "i.e, PSNR, SSIM, and LPIPS."

- tessellated: Subdivided into smaller polygons (e.g., triangles) to approximate a surface. "tessellated into approximately 40K triangular facets."

- VectorAdam: An optimizer variant tailored for rotation-equivariant geometry optimization. "We adopt VectorAdam as the default optimizer for all experiments."

- view-dependent: Appearance that varies with the camera viewpoint. "view-dependent spherical harmonics coefficients."
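
Two of the metrics in the glossary are simple enough to sketch directly. The code below gives a brute-force Chamfer distance between two point sets and a PSNR for flat lists of pixel values. Conventions differ between papers (squared or unsquared distances, sums or means), and this page does not state which variant the authors use, so treat the definitions here as one common choice rather than the paper's exact metric.

```python
import math

def chamfer_distance(points_a, points_b):
    """Symmetric Chamfer distance using mean squared nearest-neighbor distances.

    Brute force, O(len(a) * len(b)); fine for small point sets only.
    """
    def one_way(src, dst):
        return sum(
            min(sum((p[d] - q[d]) ** 2 for d in range(3)) for q in dst)
            for p in src
        ) / len(src)
    return one_way(points_a, points_b) + one_way(points_b, points_a)

def psnr(img_a, img_b, max_val=1.0):
    """Peak Signal-to-Noise Ratio in dB for two equally sized pixel lists."""
    mse = sum((a - b) ** 2 for a, b in zip(img_a, img_b)) / len(img_a)
    return float("inf") if mse == 0 else 10.0 * math.log10(max_val ** 2 / mse)

cd = chamfer_distance([[0, 0, 0]], [[1, 0, 0]])
quality = psnr([0.0, 0.0], [0.1, 0.1])
print(cd, quality)
```

For meshes, the point sets would usually be sampled from the predicted and ground-truth surfaces before the distance is computed.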
