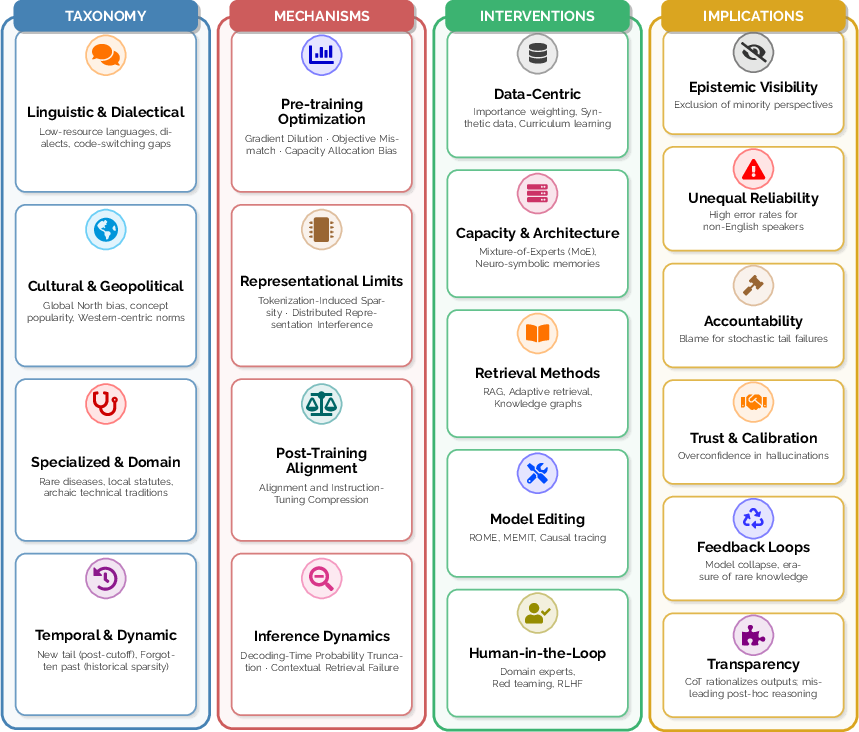

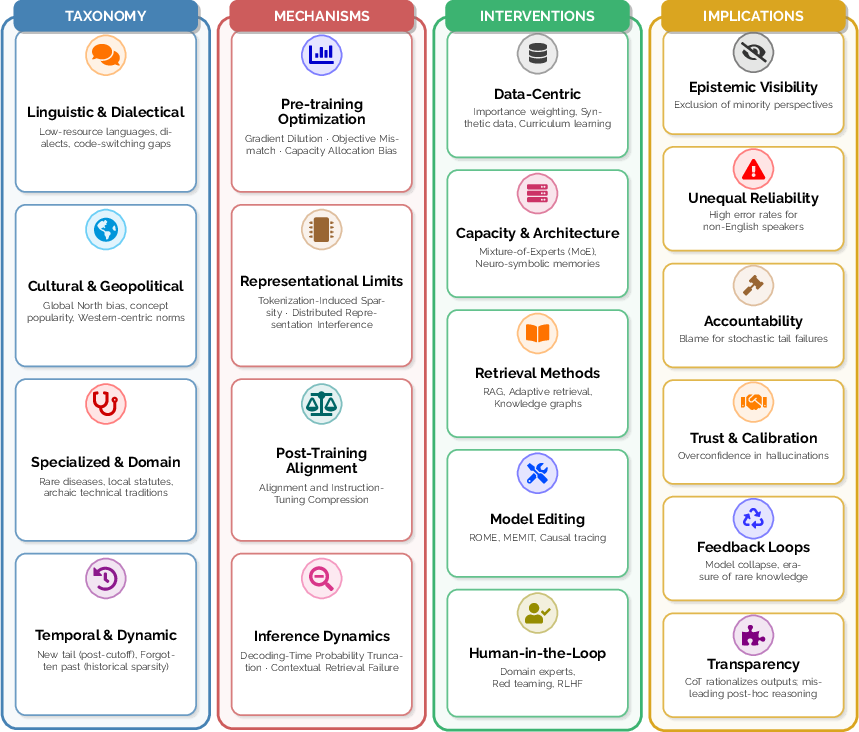

- The paper introduces a taxonomy for long-tail knowledge in LLMs, detailing linguistic, cultural, specialized, and temporal deficiencies.

- It analyzes mechanisms impacting rare fact encoding, such as gradient dilution, tokenization challenges, and filtering effects during inference.

- The paper surveys interventions like data augmentation, architectural tweaks, and retrieval-augmented generation to mitigate tail knowledge failures.

Structured Analysis of Long-Tail Knowledge in LLMs

Introduction and Motivation

The paper "Long-Tail Knowledge in LLMs: Taxonomy, Mechanisms, Interventions and Implications" (2602.16201) systematically examines the under-explored phenomenon of Long-Tail Knowledge (LTK) in LLMs. The motivation rests on empirical evidence that, despite increasing model scale and data volume, LLMs persistently underperform on low-frequency, domain-specific, cultural, and temporal knowledge. These failures, often overlooked in standard LLM evaluation and development, have both technical and sociotechnical ramifications that current research has failed to synthesize coherently.

The paper addresses this fragmentation by introducing a conceptual framework for LTK: articulating its taxonomy, analyzing mechanisms of loss, reviewing mitigation interventions, and mapping downstream implications for reliability, accountability, and fairness.

Figure 1: Overview of Long-Tail Knowledge in LLMs: Taxonomy, Mechanisms, Interventions, and Implications.

Taxonomy of Long-Tail Knowledge

The taxonomy differentiates LTK along four non-exclusive axes, each reflecting distinct sources of empirical sparsity in training data:

- Linguistic and Dialectical Sparsity: LLMs degrade sharply on low-resource languages and dialects due to tokenization artifacts and limited data presence— performance collapse is observed for African languages like Amharic, with failures compounded by morphological complexity and suboptimal token segmentation [stanford2025mindgap; petrov2023tokenization; adebara2025enhancing]. Even within high-resource languages, dialects (e.g., AAVE) and code-switching pose significant robustness and safety failures [hofmann2024dialects; groenwold2024one; yong2023multilingual].

- Cultural and Geopolitical Peripheries: Cultural salience imbalances emerge as high accuracy for Western and Global North entities, but frequent hallucination or omission for underrepresented regions. LLMs trained on dominant discourse encode a Western-centric worldview, with notable failures in subjective global opinion alignment and local concept retrieval [jiang2023cpopqa; naous2024measuring; santurkar2023whose].

- Specialized and Domain-Specific Tails: Rare medical diagnoses, legal statutes, and technical tradition are minimally represented in web-scale data, resulting in high hallucination rates and low recall in rare disease prediction and specialized legal citation [chen2025mimic; dahl2024hallucinating; nay2024law].

- Temporal and Dynamic Knowledge: The "new tail" (post-training facts) and the "forgotten past" (historical knowledge with low digital presence) both suffer from recency or temporal misalignment, producing outdated answers, hallucinations, and representational gaps [luu2021time; jang2023temporal].

Mechanisms of Knowledge Loss

The analysis identifies compound failures across model pipeline stages:

- Pre-training Optimization: Standard loss functions induce gradient dilution for rare facts— expected gradient magnitude is proportional to fact frequency, causing tail knowledge to be sparsely and unreliably encoded [pezeshki2021gradient; kandpal2023struggle]. Maximum Likelihood Estimation objectives further incentivize high-probability generic patterns over rare, accurate facts, increasing hallucination rates [ji2023hallucination; mallen2023trust].

- Representational Constraints: Tokenization-induced sparsity inflates sequence length and semantic fragmentation for rare tokens, especially affecting low-resource languages and entity names [petrov2023tokenization; ali2024tokenization]. Superposition effects in Transformer representations force rare features into fragile, non-orthogonal spaces, susceptible to interference and catastrophic forgetting [elhage2022toy; henighan2025superposition].

- Post-Training Alignment: RLHF and other instruction tuning methods introduce "alignment tax"—the model learns to hedge or abstain on tail distributions, compressing outputs toward generic, head-biased answers. Instruction tuning can degrade calibration on low-frequency queries and overgeneralize refusal or uninformative responses [ouyang2022training; lin2024mitigating].

- Inference Dynamics: Nucleus and top-k decoding explicitly filter low-probability tail tokens, making rare fact retrieval during generation highly improbable. Retrieval failures are exacerbated by weak attention keys for tail knowledge and context-mismatch between prompts and training data [holtzman2019curious; mallen2023trust].

Survey of Mitigation Strategies

Intervention strategies are categorized by model lifecycle locus:

- Data-Centric Approaches: Importance weighting (e.g., GradTail [chen2022gradtail]), synthetic tail data generation (LLM-AutoDA [wang2024llmautoda]), and curriculum learning with focused synthetic textbooks enhance tail representation. However, aggressive reweighting risks head catastrophic forgetting, and recursive synthetic training precipitates model collapse and further tail erosion [shumailov2024curse].

- Architectural Schemes: Sparse Mixture-of-Experts architectures (e.g., Mixtral 8x7B [jiang2024mixtral]) expand potential memory capacity without activating all parameters per token. Empirically, however, routing mechanisms often collapse to super-experts, undertraining tail-specific experts [dai2024unveiling; li2024meid]. Neuro-symbolic hybrids incorporating explicit key-value memories offer tail fact retrieval, but suffer latency and synchronization issues [khandelwal2020generalization].

- Retrieval-Augmented Generation (RAG): Externalizes knowledge by querying an index at inference, effectively patching "new" tail gaps without retraining [lewis2020rag; guu2020retrieval]. Adaptive RAG selectively retrieves when tail knowledge is detected (using metrics like GECE) but is bound by retrieval quality and context window limitations [zhang2024role].

- Model Editing: Local parameter updates (ROME, MEMIT) enable surgical correction of tail facts but are not scalable to lifelong editing due to ripple effects and parameter drift [meng2022rome; meng2023memit; cohеn2023evaluating].

- Human-in-the-Loop and Institutional Oversight: Integration of expert auditing and pluralistic feedback reduces high-stakes hallucination, but remains inherently unscalable, with limitations in panel diversity and sustainment [AuditLLM; barman2025pluralistic].

Sociotechnical Implications

LTK deficiencies manifest in concrete ecosystem risks:

- Epistemic Visibility: Models amplify digitally dominant perspectives, making rare or marginalized facts progressively invisible. Empirical audits show a strong correlation between document frequency and answerability, raising concerns about knowledge pluralism and structural epistemic exclusion [kandpal2023struggle].

- Unequal Reliability: Users in linguistic, cultural, or regional peripheries experience systematically higher error and hallucination rates, particularly in languages with low data presence or for local concepts not covered in standard benchmarks [stanford2025mindgap; lee2024blend].

- Accountability Vacuum: Failure attribution becomes ambiguous for tail failures since generation is probabilistic. Hallucinated legal citations have led to real-world sanctions, yet error responsibility diffuses across the system pipeline [dahl2024hallucinating; ho2025free].

- Trust and Calibration: Confidence remains high even when factual accuracy collapses in the tail, leading to systematic user overreliance and critical risk in scenarios where users cannot verify rare facts [zhang2025law].

- Feedback Loops: LLM output is recursively incorporated into training corpora, exacerbating tail erosion. Model collapse studies show that repeated synthetic data finetuning erases distribution diversity, systematically compounding LTK loss [shumailov2024aimc].

- Explainability Limits: Post-hoc explanations are unreliable for tail knowledge; models rationalize outputs even when lacking robust internal representations, undermining faithfulness guarantees [turpin2023unfaithful].

- Measurement/Audit Gaps: Standard evaluation suites omit tail knowledge, and single-metric aggregation conceals stratified, high-impact errors [oakden2020hidden; liang2023holistic]. Closed-source evaluation further blurs transparency and accountability [widder2023limits; casper2024blackbox].

Open Challenges and Directions

The convergence of technical and sociotechnical limitations highlights several open problems:

- Tail-Aware Evaluation: Need for operational LTK definitions that stratify by domain relevance, not just frequency. Static benchmarks must be supplemented by slice-based and domain-specific measures that explicitly surface worst-group error and head/tail performance divergence.

- Accountability and Governance: Black-box audits are insufficient for tail failure discovery; regulatory frameworks must incorporate knowledge uncertainty and the stochastic, context-dependent nature of generation errors.

- Privacy and Sustainability: Aggressively reweighting or amplifying tail data raises unique privacy risks (rare fact leakage [carlini2021extracting]) and calls into question the scalability of parametric memorization as the sole strategy for rare knowledge retention, especially given the high economic and carbon costs of ever-larger models [strubell2019energy; patterson2021carbon].

- Dynamic and Sociotechnical Focus: The tail is not static—feedback loops between LLMs, users, and digital information environments recursively reshape what is "rare". RAG-systems’ dependency on commercial indices adds layers of external bias and fragility to knowledge access.

Conclusion

The paper presents a unified analytical framework for understanding LTK limitations as a fundamental, systemic property of LLM architectures, optimization paradigms, and data pipelines (2602.16201). Contrary to the view that long-tail failures are isolated technical anomalies, the synthesis reveals these are deeply interwoven with sociotechnical infrastructure and the epistemics of digital knowledge aggregation. Isolated interventions are at best partial: improvements in scale and architectural heterogeneity do not resolve foundational sparsity or representational exclusions; retrieval strategies, while pragmatic, inherit and amplify new dimensions of external dependency. Robust measurement and conscientious deployment necessitate transparent, tail-aware evaluation practices, explicit demarcation of reliability bounds, and ongoing institutional oversight. Future LLM development must reconsider both the technical and systemic logics by which rare, marginalized, or domain-critical knowledge is encoded, accessed, and governed.