Calibrate-Then-Act: Cost-Aware Exploration in LLM Agents

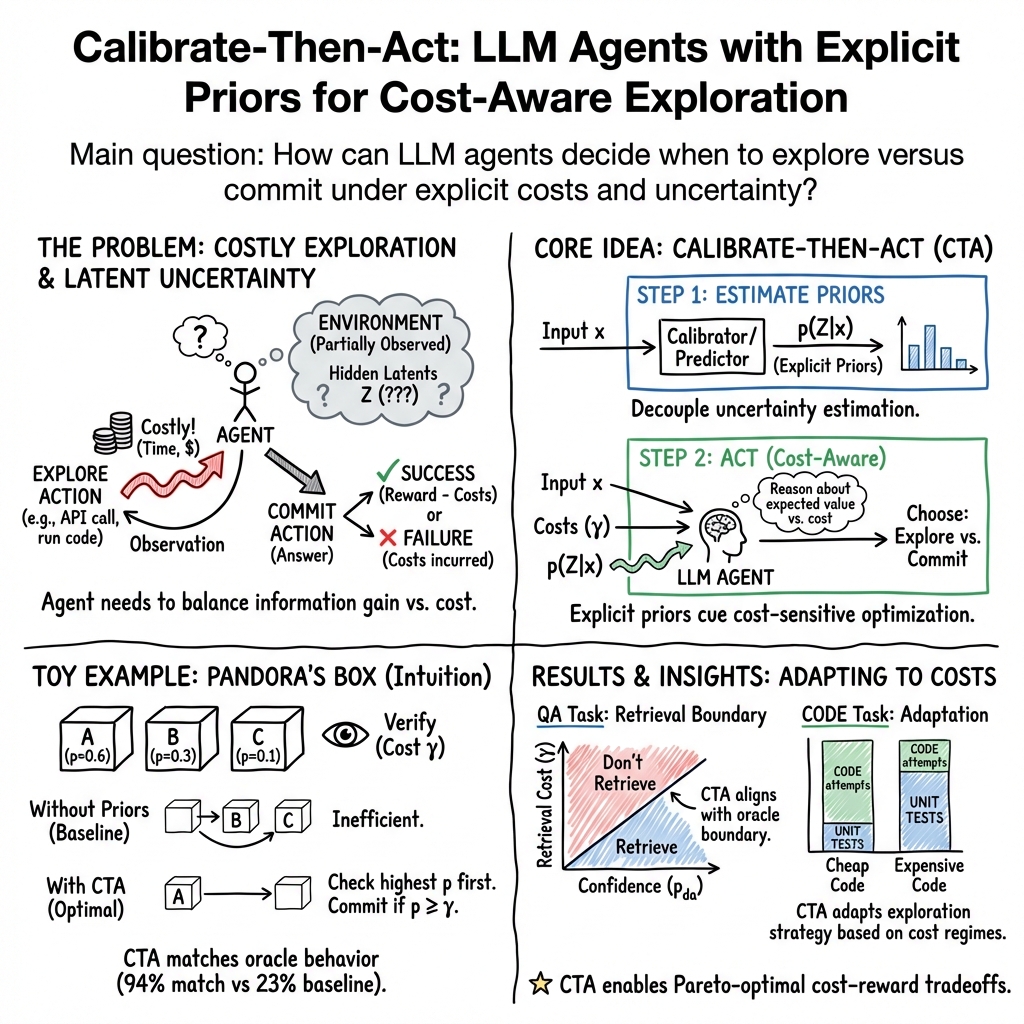

Abstract: LLMs are increasingly being used for complex problems which are not necessarily resolved in a single response, but require interacting with an environment to acquire information. In these scenarios, LLMs must reason about inherent cost-uncertainty tradeoffs in when to stop exploring and commit to an answer. For instance, on a programming task, an LLM should test a generated code snippet if it is uncertain about the correctness of that code; the cost of writing a test is nonzero, but typically lower than the cost of making a mistake. In this work, we show that we can induce LLMs to explicitly reason about balancing these cost-uncertainty tradeoffs, then perform more optimal environment exploration. We formalize multiple tasks, including information retrieval and coding, as sequential decision-making problems under uncertainty. Each problem has latent environment state that can be reasoned about via a prior which is passed to the LLM agent. We introduce a framework called Calibrate-Then-Act (CTA), where we feed the LLM this additional context to enable it to act more optimally. This improvement is preserved even under RL training of both the baseline and CTA. Our results on information-seeking QA and on a simplified coding task show that making cost-benefit tradeoffs explicit with CTA can help agents discover more optimal decision-making strategies.


Explain it Like I'm 14

Overview

This paper is about teaching AI helpers (large language models, or LLMs) to make smarter choices when they don’t know everything yet and every extra step costs time or money. The idea is simple: before acting, the AI should “calibrate” how sure it is and how expensive it is to gather more info, then “act” based on that balance. The authors call this approach Calibrate-Then-Act (CTA).

What questions are they asking?

The paper focuses on two easy-to-understand questions:

- When should an AI stop checking and just answer, and when should it spend extra effort to gather more information?

- Can we make AIs better at this by giving them clear hints about how likely different possibilities are and how costly each action is?

How did they study it?

They turn the AI’s problem into a step-by-step decision process, like playing a game where each move has a cost and different moves reveal information.

To make this approachable, here are the key ideas and terms explained in simple language:

- Exploration: Extra steps the AI takes to learn more, like searching the web or running code tests.

- Cost: The price of exploration, such as API fees, waiting time, or user annoyance.

- Uncertainty: How unsure the AI is about being right if it answers now.

- Prior: A smart guess about the world before checking—the AI’s “best starting belief.”

- Calibrate: Adjusting those guesses so they match reality better.

- Discounted reward: You earn points for getting the right answer, but your points get reduced the more costly steps you took first.

- Pareto-optimal: A strategy that gives the best trade-off—you can’t improve one thing (like cost) without making another worse (like accuracy).
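Two of the terms above, discounted reward and Pareto-optimal, can be made concrete with a small sketch. It assumes a correct answer is worth 1 point and that each costly step multiplies the final score by its own discount factor. The function names and the example strategy numbers are hypothetical and are not taken from the paper.

```python
def discounted_reward(correct: bool, step_discounts: list[float]) -> float:
    """Final score: 1 for a correct answer, shrunk by every costly step taken."""
    reward = 1.0 if correct else 0.0
    for discount in step_discounts:
        reward *= discount  # each exploration step scales the reward down
    return reward


def pareto_optimal(strategies: dict[str, tuple[float, float]]) -> list[str]:
    """Keep strategies that no other strategy beats on both accuracy and cost.

    strategies maps a name to (accuracy, cost); higher accuracy and lower
    cost are better.
    """
    keep = []
    for name, (acc, cost) in strategies.items():
        dominated = any(
            a >= acc and c <= cost and (a > acc or c < cost)
            for other, (a, c) in strategies.items()
            if other != name
        )
        if not dominated:
            keep.append(name)
    return keep


# Made-up numbers, purely for illustration.
example = {
    "never_search": (0.60, 0.0),
    "always_search": (0.80, 1.0),
    "wasteful": (0.70, 1.5),
}
print(discounted_reward(True, [0.9, 0.9]))
print(pareto_optimal(example))
```

In this toy example, "wasteful" is dropped because "always_search" is both more accurate and cheaper, while the other two strategies represent different trade-offs.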

The CTA framework does this:

- First, it gives the AI “priors” (its starting guesses) about hidden facts in the task.

- Then, the AI weighs how sure it is against how costly extra steps are and decides whether to explore more or commit to an answer.

The authors test this in three settings:

1) A toy game: Pandora’s Box

Imagine three boxes, only one has a prize. You can peek inside boxes one by one, but each peek reduces your final score. The smart strategy is to check the most promising boxes first and stop when you’re confident enough that guessing beats checking again. With explicit priors (how likely each box has the prize), the AI follows the optimal plan.
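The peek-or-guess reasoning can be sketched as a small recursion. This is a minimal illustration, assuming exactly one box holds the prize, a correct guess is worth 1, and every peek multiplies the final reward by a discount `gamma`. The name `best_action` and the example priors are hypothetical; the paper's own oracle (its Algorithm 1) is not reproduced here.

```python
def best_action(priors, gamma):
    """Return (expected_reward, action) for the boxes not yet opened.

    priors: probability that each unopened box holds the prize (sums to 1).
    gamma:  multiplicative discount applied to the final reward per peek.
    action: ("guess", i) or ("peek", i), where i indexes into priors.
    """
    # Option 1: commit now to the most likely box.
    guess_idx = max(range(len(priors)), key=lambda i: priors[i])
    best = (priors[guess_idx], ("guess", guess_idx))
    if len(priors) == 1:
        return best

    # Option 2: peek into box j, pay the discount, then act optimally.
    for j, pj in enumerate(priors):
        rest = priors[:j] + priors[j + 1:]
        if pj < 1.0:
            # Box j was empty: renormalize beliefs over the remaining boxes.
            posterior = tuple(p / (1.0 - pj) for p in rest)
            continue_value, _ = best_action(posterior, gamma)
        else:
            continue_value = 0.0
        # With probability pj the prize is found and we commit immediately.
        peek_value = gamma * (pj + (1.0 - pj) * continue_value)
        if peek_value > best[0]:
            best = (peek_value, ("peek", j))
    return best


priors = (0.5, 0.3, 0.2)
print(best_action(priors, gamma=0.9))  # cheap peeks: worth looking first
print(best_action(priors, gamma=0.4))  # expensive peeks: better to guess
```

Under these assumptions, a mild discount makes peeking at the most likely box worthwhile, while a harsh discount makes guessing immediately the better choice.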

2) Knowledge questions with optional web search

The AI can answer from memory or do a web search first. Searching often makes answers more accurate but costs time and money. The authors estimate:

- How likely the AI is to answer correctly without search (using the model’s own stated confidence and calibrating it with a simple statistical tool called isotonic regression).

- How helpful search usually is for this kind of question.

Then CTA helps the AI decide: if it’s confident and search is costly, answer now; otherwise, retrieve evidence first.
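The calibrate-then-decide step for search can be sketched in plain Python. The calibrator below is a hand-written pool-adjacent-violators fit that predicts with a step function; in practice a library such as scikit-learn's `IsotonicRegression` would typically be used instead. The decision rule (search only if the discounted chance of being right after search beats the chance of being right now) is one natural reading of the trade-off and is an assumption, not the paper's stated threshold. All function names and data are hypothetical.

```python
def fit_isotonic(scores, labels):
    """Fit a non-decreasing step function from stated confidence to accuracy."""
    grouped = {}
    for s, y in zip(scores, labels):
        total, count = grouped.get(s, (0.0, 0))
        grouped[s] = (total + y, count + 1)

    blocks = []  # each block: [sum_of_labels, count, highest_score_in_block]
    for s in sorted(grouped):
        total, count = grouped[s]
        blocks.append([total, count, s])
        # Merge while the previous block's mean exceeds the newest block's mean.
        while len(blocks) > 1 and (
            blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]
        ):
            total, count, hi = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
            blocks[-1][2] = hi
    return [(hi, total / count) for total, count, hi in blocks]


def calibrate(model, score):
    """Map a raw confidence score to the calibrated probability of its block."""
    for hi, prob in model:
        if score <= hi:
            return prob
    return model[-1][1]


def should_retrieve(p_answer, p_ret, gamma):
    """Assumed rule: search only if discounted post-search accuracy is higher."""
    return gamma * p_ret > p_answer


# Hypothetical validation data: stated confidence and whether the answer was right.
stated = [0.6, 0.6, 0.8, 0.8, 0.95, 0.95, 0.95, 0.95]
right = [0, 0, 1, 1, 0, 1, 0, 1]
model = fit_isotonic(stated, right)

p_now = calibrate(model, 0.95)
print(p_now, should_retrieve(p_now, p_ret=0.8, gamma=0.9))
```

Here `p_ret` stands for how often the model answers correctly once it has search results, and `gamma` is the discount paid for searching; both values are illustrative.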

3) Coding tasks with selective testing

The AI must read a data file (like a CSV), but details like the separator (, or ;), quote style, or header rows are hidden. The AI can:

- Run small unit tests to reveal file details,

- Try running the full code,

- Or guess the final answer.

The authors train a tiny predictor (MBERT) that looks at the filename and estimates likely file formats; these estimates are the priors. CTA uses those priors to decide whether to test first or attempt the full solution, depending on costs.
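The test-first versus run-first choice can be illustrated with a deliberately simplified model. It assumes a single hidden format variable, that one unit test reveals the format with certainty, that a failed full run only rules out the format that was tried, and that each unit test and each full run multiply the final reward by discounts `d_u` and `d_c` respectively. These modelling choices and all names below are assumptions made for illustration; the paper's task has several hidden variables and its exact cost accounting is not given in this summary.

```python
def value_run_directly(format_probs, d_c):
    """Expected reward when trying formats from most to least likely.

    The k-th attempt succeeds with that format's prior probability and has
    paid for k full runs by then.
    """
    ordered = sorted(format_probs, reverse=True)
    return sum(p * d_c ** (k + 1) for k, p in enumerate(ordered))


def value_unit_test_first(d_u, d_c):
    """Expected reward when one unit test reveals the format, then one run."""
    return d_u * d_c


def choose_first_action(format_probs, d_u, d_c):
    """Pick whichever plan has the higher expected discounted reward."""
    if value_unit_test_first(d_u, d_c) > value_run_directly(format_probs, d_c):
        return "unit_test"
    return "run_code"


# Hypothetical prior over three delimiters, e.g. from a filename predictor.
prior = [0.7, 0.2, 0.1]
print(choose_first_action(prior, d_u=0.95, d_c=0.9))  # runs are cheap
print(choose_first_action(prior, d_u=0.95, d_c=0.6))  # runs are expensive
```

Even in this toy model, the preferred first action flips as the relative cost of running code changes, which is the kind of adaptivity the priors are meant to enable.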

They compare different ways of using the AI:

- Plain prompting (just instructions),

- “No thinking” prompting (less reasoning),

- Reinforcement learning (RL), which trains the AI from rewards,

- CTA with prompting,

- CTA combined with RL.

What did they find and why it’s important?

Here are the main takeaways, explained with concrete results:

- Toy game: With explicit priors, the AI nearly matches the optimal strategy (94% match), while without priors it performs much worse. This shows AIs can reason optimally when given the right context.

- Knowledge QA: A fixed “always search” strategy improves accuracy but wastes cost; “never search” is cheap but less accurate. CTA-Prompted achieves the best balance (highest discounted reward) by searching only when the AI’s calibrated confidence is low or when search is relatively cheap.

- Coding: RL alone tended to learn a “one-size-fits-all” habit, like always running unit tests first. CTA-RL learned to adapt: test more when running code is expensive, code sooner when tests are costly. CTA-RL beat plain RL on overall reward, showing it generalizes better and stays close to Pareto-optimal choices across different cost settings.

Why this matters: Many real AI helpers act in multiple steps and use tools, but every step costs something. CTA helps them be both smart and efficient—saving time and money without sacrificing accuracy.

Implications and potential impact

- Smarter agents: CTA teaches AIs to be aware of both their own uncertainty and action costs. That means fewer unnecessary searches or tests and better timing for when to commit to an answer.

- Practical savings: In coding assistants, research agents, customer support bots, and more, CTA can cut latency and API costs while keeping quality high.

- Better training: Even when you train AIs with rewards (RL), giving them explicit priors can unlock more adaptive, cost-aware behavior than training alone.

- General recipe: The idea—“calibrate priors, then act”—is simple and reusable. Whenever an AI must decide whether to gather more info or commit, CTA provides a clear way to think and act optimally.

In short, Calibrate-Then-Act helps AI agents be thoughtful about when to explore and when to decide, making them more reliable, faster, and cheaper to use in the real world.

Knowledge Gaps

Below is a single, concrete list of the paper’s unresolved knowledge gaps, limitations, and open questions to guide future research:

- External validity: evaluated only on PopQA and a synthetic, simplified file-reading coding task; unclear generalization to realistic, multi-file/codebase coding benchmarks (e.g., SWE-bench) and broader tool-use settings.

- Model dependence: results shown only with Qwen3-8B; no cross-model or cross-size ablations to test whether CTA effects persist with larger/smaller and different LLM families.

- Retrieval quality prior (p_ret) estimation is under-specified: the paper defines p_ret but does not detail how it is estimated or calibrated per query; robustness of decisions to misestimated retrieval quality remains unknown.

- Point-estimate priors instead of uncertainty distributions: CTA conditions on Dirac-delta priors (single values), not distributions; the impact of propagating uncertainty (e.g., posterior intervals) on decision quality is unexamined.

- Lack of explicit posterior updates: although the framework mentions posteriors b_t, experiments do not implement or analyze explicit belief updates from observations (e.g., unit tests, stdout/stderr, retrieved documents).

- Sensitivity to miscalibrated or adversarial priors: no stress tests for biased, noisy, or adversarial priors; how CTA behaves when priors are wrong or misleading is untested.

- Limited exploration structure in QA: the QA task reduces to a retrieve-or-answer decision; multi-hop retrieval, iterative reading/reranking, and richer tool chains are not explored.

- Simplified cost model: costs are modeled as multiplicative discounts; alternative (additive, hybrid, or budget-constrained) cost models, and mapping to real latency/dollar/token costs, are not studied.

- Calibration breadth and drift: confidence calibration uses isotonic regression on a validation split; stability under domain shift, temporal drift, and new topics/entities is not evaluated; no online/recurrent calibration strategies are examined.

- No ablation on prior quality vs. performance: the relationship between prior predictor accuracy (e.g., 50–90%) and reward is not characterized; thresholds at which priors cease to help remain unknown.

- Minimal treatment of observation noise: stdout/stderr and retrieval outputs can be noisy or misleading; robustness of CTA to noisy tools or erroneous environment feedback is unassessed.

- Priors discovery for new domains: beyond a filename-to-format predictor (67% avg. accuracy), there is no general methodology for identifying latent variables Z and building domain priors in arbitrary environments.

- RL alternatives and architectures: only GRPO is tested; whether other RL recipes (e.g., PPO with KL regularization, off-policy methods, auxiliary prior-prediction losses, or meta-RL) can internalize priors without explicit prompting is open.

- Statistical robustness: improvements (e.g., ~3.5% reward gain) lack confidence intervals, multiple seeds, or statistical tests; reproducibility and variance are unreported.

- Dependence on “thinking mode”: behavior changes substantially with chain-of-thought prompting, but the method’s reliance on CoT (e.g., prompt formats, token budgets, privacy concerns) is not systematically analyzed.

- Posterior/value-of-information computations: the paper asserts Pareto-optimal behavior without implementing principled EVC (expected value of computation) or meta-reasoning baselines for comparison in realistic tasks.

- Personalization and preference learning: discount factors are sampled exogenously; learning or adapting to user-specific budgets, latency tolerance, and monetary costs is not considered.

- Safety and bias in priors: filename- or context-derived priors may encode cultural/language biases (e.g., ‘fr’→‘prix’); potential harms and mitigation strategies are not examined.

- Transferability of priors: whether a format prior learned in one dataset or organization transfers to others (different naming conventions, languages, domains) is not evaluated.

- Scaling to richer action spaces: experiments restrict actions to a few types; extension to larger toolboxes (debuggers, linters, profilers, search variants) and the resulting planning complexity is unstudied.

- Handling hard budgets: evaluation uses soft discounts; behavior under strict token/time/API-call budgets with hard constraints is not tested.

- Human-in-the-loop priors: mechanisms to elicit or refine priors from users (e.g., clarifying questions) during interaction are not integrated or evaluated.

- Theoretical analysis beyond toy setting: beyond Pandora’s Box, there are no guarantees or bounds on optimality/regret under noisy priors and variable costs in realistic environments.

- Online/continual prior learning: how to update priors over time from new episodes (e.g., filenames seen in production) without catastrophic forgetting or privacy violations is unaddressed.

- Practical deployment: engineering pathways to measure/estimate costs and priors in real systems (logging, privacy, latency instrumentation), and to integrate CTA with existing agent stacks, are not discussed.

Glossary

- BERT-tiny encoder: A small variant of the BERT model used as a lightweight text encoder. "The predictor is based on a lightweight BERT-tiny encoder (4.4M parameters) (Bhargava et al., 2021; Turc et al., 2019)."

- Calibrate-Then-Act (CTA): A framework that conditions an LLM on explicit priors to make cost-aware exploration and commitment decisions. "We introduce a framework called Calibrate-Then-Act (CTA), where we feed the LLM this additional context to enable it to act more optimally."

- Calibrated priors: Prior probability estimates adjusted to better reflect true probabilities, used to induce optimal decisions. "we propose a unified framework for interactive agentic tasks and show that calibrated priors are key to inducing appropriate decision-making in LLM agents."

- Cross-entropy objective: A loss function commonly used for training classifiers by measuring the difference between predicted and true distributions. "The model is trained with a summed cross-entropy objective across the three heads for one epoch on the training split."

- Dirac delta function: A distribution that is zero everywhere except at a single point, where it is infinite, used to represent point-mass priors. "where δ is the Dirac delta function."

- Dirichlet distribution: A probability distribution over categorical probability vectors, often used as a prior for multinomial parameters. "We sample priors independently from a symmetric Dirichlet distribution with concentration parameter α = 0.5."

- Discount factor: A multiplicative factor that penalizes rewards received after additional exploratory steps to reflect cost. "and discount their final reward by γ ∈ [0, 1]"

- Discounted reward: A reward formulation where the final task success is multiplied by factors that reflect exploration costs. "We fine-tune the model end-to-end using GRPO (Shao et al., 2024) with discounted reward objective"

- Expected calibration error (ECE): A metric that quantifies the mismatch between predicted confidence and actual accuracy. "After calibration, the expected calibration error (ECE) is reduced from 0.618 to 0.029 on PopQA dataset"

- GRPO: A reinforcement learning algorithm used for fine-tuning LLM policies with reward objectives. "We fine-tune the model end-to-end using GRPO (Shao et al., 2024) with discounted reward objective"

- Isotonic regression: A non-parametric monotonic regression technique used here to calibrate verbalized confidence scores. "and apply an isotonic regression model (Zadrozny & Elkan, 2002) ISO trained on the validation set to obtain a calibrated estimate"

- Latent variables: Unobserved environment features that affect outcomes and must be inferred during interaction. "which reflects remaining uncertainty over the latent variables."

- Marginal probabilities: Probabilities for individual components of a joint distribution, obtained by summing/integrating over others. "After training, MBERT outputs marginal probabilities {p(z_d | n), p(z_q | n), p(z_s | n)}"

- Markov Decision Process (partially-observable): A decision-making framework with states, actions, transitions, and rewards where the agent has limited observability. "which can be defined as a partially-observable Markov Decision Process W = (S,A,O,O,T,R, De)"

- MBERT: A filename-to-format predictor based on a small BERT encoder, providing explicit priors over file schemas. "We train a filename-to-format predictor, denoted as MBERT, to estimate the distribution p(z | n) from the filename."

- Oracle policy: The optimal decision rule derived from full knowledge of priors and costs, used as a benchmark. "closely aligns with the oracle policy from Algorithm 1."

- Pandora's Box Problem: A classic sequential decision problem involving costly inspections before committing to a choice. "We consider a variant of the classic Pandora's Box Problem with discounted reward over time (Weitzman, 1979)."

- Parametric knowledge: Knowledge stored implicitly in a model’s learned parameters, as opposed to external retrieved information. "Given a factual query, the model must decide whether to rely on its parametric knowledge or defer commitment and retrieve additional evidence"

- Pareto frontier: The set of strategies that are non-dominated in terms of multiple objectives (e.g., accuracy vs. cost). "Pareto frontier of average reward under varying costs."

- Pareto-optimal: A strategy where no objective can be improved without worsening another, given trade-offs like cost and accuracy. "how can we develop LLMs that explore in a Pareto-optimal way under varying cost and uncertainty profiles?"

- Posterior distribution: The updated probability over latent variables after observing actions and outcomes in the environment. "b_t(Z) = p(Z | x, o_{0:t})"

- Prior (estimated priors): Initial probability estimates over latent variables provided to the agent to guide decision-making. "CTA-PROMPTED (Ours): We prompt the model with estimated priors p(Z | x) together with x."

- Prior estimator: A model or method used to produce prior probability estimates from data or confidence signals. "In CTA, we learn a prior estimator from training data and condition the agent on estimated p at inference and/or training time"

- Reinforcement learning (RL): A training paradigm where policies are optimized via rewards received from interacting with an environment. "Crucially, this behavior does not emerge from basic RL alone"

- Retriever quality (p_ret): The probability that the model can answer correctly given retrieved context, used to decide whether to retrieve. "a retriever with quality p_ret (defined as the probability that the model can answer x correctly given its retrieved document)"

- Sequential decision-making: Making a series of interdependent actions over time, balancing information gain and costs. "We formalize cost-aware environment exploration task as sequential decision-making problem."

- Thinking mode: An LLM prompting mode that enables explicit reasoning steps, affecting exploration behavior. "the model with thinking mode disabled (PROMPTED-NT) almost always retrieves"

- Verbalized confidence: The model’s self-reported confidence label used for subsequent calibration. "we obtain verbalized confidence as follows (Mohri & Hashimoto, 2024)."
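The expected calibration error entry in the glossary can be made concrete with a short sketch. It uses equal-width confidence bins, a common convention; the binning scheme behind the paper's reported numbers is not stated in this summary, so the bin count, function name, and sample data here are assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy per bin."""
    # Each bin tracks [sum_of_confidences, number_correct, count].
    bins = [[0.0, 0.0, 0] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx][0] += conf
        bins[idx][1] += float(hit)
        bins[idx][2] += 1

    total = len(confidences)
    ece = 0.0
    for sum_conf, sum_hit, count in bins:
        if count:
            gap = abs(sum_hit / count - sum_conf / count)
            ece += (count / total) * gap
    return ece


# Hypothetical overconfident model: says 0.9 but is right one time in four.
print(expected_calibration_error([0.9] * 4, [1, 0, 0, 0]))
# Hypothetical well-calibrated model: says 0.25 and is right one time in four.
print(expected_calibration_error([0.25] * 4, [1, 0, 0, 0]))
```

A large value means stated confidence and actual accuracy disagree, which is the situation calibration is meant to repair before confidence is used as a prior.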

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now by leveraging the paper’s Calibrate-Then-Act (CTA) approach, which explicitly feeds priors (calibrated model confidence or environment structure) to LLM agents to improve cost-aware exploration and commitment.

- Cost-aware retrieval gating for enterprise chatbots and search assistants [Software; Customer Support; Education]

- What: Deploy a CTA-PROMPTED policy that decides whether to retrieve external documents or answer from parametric knowledge using a threshold on calibrated confidence.

- Tools/Workflow: Verbalized confidence → isotonic regression calibrator → runtime decision gate; integrate into LangChain/LlamaIndex as a middleware.

- Benefits: Lower API spend and latency without sacrificing answer quality; more predictable SLAs.

- Assumptions/Dependencies: Requires an in-domain calibration set to fit the isotonic regression; a reliable estimate of retriever quality (p_ret); a cost model for retrieval latency/fees reflected in the discount factor γ.

- IDE-integrated “Explore-or-Commit” controller for coding assistants [Software/Robotics]

- What: Use filename-to-format priors (MBERT-like predictor) to decide when to run unit tests vs directly execute candidate code on file parsing tasks.

- Tools/Workflow: Lightweight format predictor (BERT-tiny) → CTA prompt with priors → code tool execution sandbox; integrate into VS Code extensions or CI bots.

- Benefits: Fewer unnecessary test calls and faster task completion while maintaining correctness; improved developer experience.

- Assumptions/Dependencies: Safe execution sandboxing; priors trained on internal repo naming/file conventions; telemetry to quantify unit test vs execution costs (d_u, d_c).

- Smarter ETL/data-ingestion pipelines with selective schema probing [Data Infrastructure]

- What: Apply CTA logic to CSV/TSV ingestion to selectively probe schema (delimiter/quotes/header rows) before bulk parsing.

- Tools/Workflow: Filename-derived format predictor → targeted schema unit-tests → commit parse plan; instrumented cost tracking.

- Benefits: Reduced ingestion time and cloud compute costs; fewer parsing failures in production.

- Assumptions/Dependencies: Availability of historical filenames and ground-truth formats to train priors; robust error handling when priors are wrong.

- Cost-aware tool-use policy layer for agent frameworks [Software]

- What: Introduce a CTA middleware that supplies priors and cost coefficients to any agent’s decision loop to adapt tool calls (retrieval, API invocation, code run).

- Tools/Workflow: Prior-estimation microservice; discount factor registry per tool; action-gating policy API.

- Benefits: Uniform, auditable cost-aware behavior across diverse tools; Pareto-optimal tradeoffs by design.

- Assumptions/Dependencies: Clear measurement of tool latencies/fees; agent support for structured priors in prompts; observability for ongoing calibration drift.

- Academic literature assistants with selective search [Academia]

- What: Gate external search (e.g., semantic retrieval of papers) using calibrated confidence on long-tail knowledge questions (PopQA-like).

- Tools/Workflow: Verbalized confidence → isotonic calibration → retrieval decision; Contriever or similar backend.

- Benefits: Faster answering for common knowledge, prudent search for niche queries; improved researcher productivity.

- Assumptions/Dependencies: Domain-specific calibration data; retriever quality monitoring and periodic recalibration.

- On-device assistants that save bandwidth via selective web calls [Daily Life/Mobile]

- What: Optimize when the assistant uses online search versus local knowledge based on calibrated confidence and user-set cost/latency budgets.

- Tools/Workflow: On-device confidence calibration; user-configurable discount factor (battery/data budget); CTA prompt to govern search calls.

- Benefits: Lower data usage and faster responses; transparent tradeoffs for users.

- Assumptions/Dependencies: Reliable on-device calibration; lightweight retriever; privacy-preserving telemetry.

- Knowledge base query triage in customer support [Industry]

- What: Reduce unnecessary KB queries by committing to answers when model confidence is high and retrieval is costly; retrieve otherwise.

- Tools/Workflow: CTA gate with confidence calibration; KB hit-rate estimator for retriever quality (p_ret); audit logs.

- Benefits: Lower latency and infrastructure costs; consistent agent behavior across queues.

- Assumptions/Dependencies: Accurate KB relevance metrics; safeguards against hallucinations when skipping retrieval.

- Cloud cost optimization policies for agentic workloads [Software/DevOps]

- What: Represent latency/cost budgets as discount factors and apply CTA to modulate tool calls under changing SLAs and budgets.

- Tools/Workflow: Budget-to-discount-factor mapping; policy engine emitting per-task cost coefficients; CTA conditioning at runtime.

- Benefits: Measurable cost savings and SLA adherence; explainable action traces for audits.

- Assumptions/Dependencies: Up-to-date cost telemetry; business rules to set discount factors per context; change management for ops teams.

Long-Term Applications

The following applications require further research, validation, scaling, or domain adaptation before safe deployment.

- Clinical decision support for diagnostic test ordering [Healthcare]

- What: Use calibrated uncertainty and test costs to decide when to order additional diagnostics versus committing to a diagnosis.

- Tools/Products: CTA with priors from clinical risk models, calibrated clinician–AI ensembles; decision support dashboards.

- Dependencies: Rigorous calibration and external validation; regulation and liability frameworks; bias mitigation; human-in-the-loop oversight.

- Active sensing and exploration in robotics [Robotics]

- What: CTA-driven policies that decide when to gather more sensor data (probing, scanning) vs act immediately under task costs and time budgets.

- Tools/Products: Prior estimators from learned environment models; real-time CTA-RL with safety constraints.

- Dependencies: Hard real-time guarantees; robust fallback strategies; extensive simulation-to-real transfer and safety certification.

- Financial research agents with paywalled data gating [Finance]

- What: Balance premium data queries (fees, latency) against internal knowledge using calibrated priors and cost coefficients.

- Tools/Products: “Paid-data value” estimators; CTA policy embedded in analyst copilots; cost dashboards.

- Dependencies: Compliance and auditability; accurate value-of-information models; robust calibration across market regimes.

- Scientific experiment planning and proxy-vs-full evaluation [Academia/ML]

- What: CTA to decide when to run cheap proxy experiments vs expensive full-scale trials in ML or wet-lab settings.

- Tools/Products: Meta-analysis-driven priors; CTA-RL tuned to lab constraints; multi-objective reward (cost, time, risk).

- Dependencies: High-quality priors from historical experiments; reproducibility; safe automation in labs.

- Adaptive tutoring systems that ask clarifying questions selectively [Education]

- What: Calibrate model confidence about student understanding and choose when to ask questions or consult resources.

- Tools/Products: Student model priors; CTA gating for content retrieval; pedagogical policies.

- Dependencies: Privacy and fairness guarantees; longitudinal calibration; diverse student populations.

- Cost-aware hyperparameter tuning and training orchestration [Software/ML Ops]

- What: CTA policies for when to escalate from cheap evaluations to full training runs, under compute budgets.

- Tools/Products: Bandit-style prior estimators; CTA-RL integrated with schedulers; discounted reward metrics for pipelines.

- Dependencies: Accurate proxy-to-final performance priors; dynamic budget handling; robust stopping criteria.

- Standards and policy for cost-aware AI operations [Policy]

- What: Guidance that mandates calibrated decision policies (e.g., documented priors, thresholds, and discount factors) for public-sector AI agents.

- Tools/Products: Compliance checklists; audit frameworks for cost-aware action traces; transparency reports.

- Dependencies: Consensus on metrics (discounted reward, ECE); governance for calibration datasets; public accountability mechanisms.

- Cross-domain prior-estimation services (“Calibration Hub”) [Software]

- What: Centralized services that learn and serve task-specific priors (confidence calibrators, retriever quality estimators, environment predictors).

- Tools/Products: APIs for priors; monitoring for drift; auto-recalibration pipelines.

- Dependencies: Ongoing labeled data; privacy-safe data collection; SLOs for calibration freshness.

- Production-grade CTA-RL for multi-agent tool-use systems [Software]

- What: Train agents end-to-end with explicit priors to reinforce adaptive decision reasoning at scale, achieving Pareto-optimal behavior under varying costs.

- Tools/Products: GRPO-like RL with CTA prompts; multi-tool cost models; fleet-level observability.

- Dependencies: Significant compute; safe reward shaping; robust generalization beyond simplified tasks in the paper.

- Grid operations and energy monitoring [Energy]

- What: Balance additional sensor queries and diagnostics against operational costs and time, using CTA to govern exploration.

- Tools/Products: Priors from grid state estimators; operations copilot with cost gates; incident response workflows.

- Dependencies: High reliability requirements; integration with SCADA; safety and regulatory compliance.

Notes on feasibility and assumptions across applications

- Quality of priors is pivotal: miscalibrated priors can lead to under- or over-exploration. The paper showed verbalized confidence requires calibration (ECE reduction from 0.618 to 0.029 via isotonic regression on PopQA) and that a lightweight filename predictor reached ~67% accuracy.

- Costs must be quantifiable and surfaced to the agent as discount factors; organizations need instrumentation and telemetry for latency, fees, and error impacts.

- Safety and compliance: executing code or making high-stakes decisions (healthcare, finance, energy) demands sandboxing, human oversight, and domain-specific evaluation.

- Generalization: results demonstrated on QA and simplified coding tasks; broader domains will need new prior estimators and validation.

- Continuous monitoring: calibration drift, retriever quality changes, and evolving cost structures require periodic recalibration and policy updates.
