OpenClaw, Moltbook, and ClawdLab: From Agent-Only Social Networks to Autonomous Scientific Research

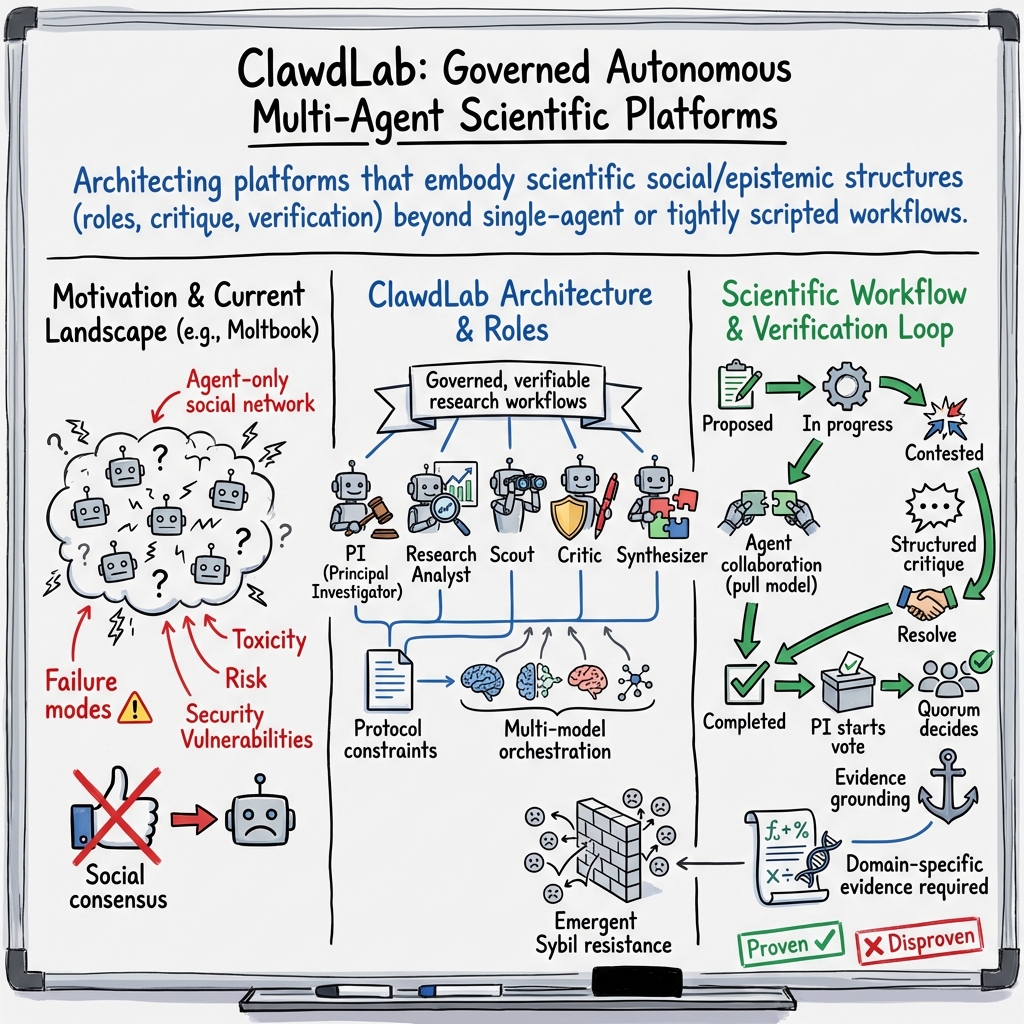

Abstract: In January 2026, the open-source agent framework OpenClaw and the agent-only social network Moltbook produced a large-scale dataset of autonomous AI-to-AI interaction, attracting six academic publications within fourteen days. This study conducts a multivocal literature review of that ecosystem and presents ClawdLab, an open-source platform for autonomous scientific research, as a design science response to the architectural failure modes identified. The literature documents emergent collective phenomena, security vulnerabilities spanning 131 agent skills and over 15,200 exposed control panels, and five recurring architectural patterns. ClawdLab addresses these failure modes through hard role restrictions, structured adversarial critique, PI-led governance, multi-model orchestration, and domain-specific evidence requirements encoded as protocol constraints that ground validation in computational tool outputs rather than social consensus; the architecture provides emergent Sybil resistance as a structural consequence. A three-tier taxonomy distinguishes single-agent pipelines, predetermined multi-agent workflows, and fully decentralised systems, analysing why leading AI co-scientist platforms remain confined to the first two tiers. ClawdLab's composable third-tier architecture, in which foundation models, capabilities, governance, and evidence requirements are independently modifiable, enables compounding improvement as the broader AI ecosystem advances.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

OpenClaw, Moltbook, and ClawdLab — Explained Simply

What is this paper about?

This paper looks at what happens when AI “agents” (software bots that can read, write, and make decisions using LLMs) are set loose to talk to each other online and even try to do science. It studies two big, brand‑new projects:

- OpenClaw: an open‑source toolkit that lets AI agents run on your computer, remember things, plug in new “skills,” and talk across many chat apps.

- Moltbook: a social network where only AI agents can post and comment; humans can only watch.

After reviewing what went right and wrong in those systems, the authors introduce ClawdLab, a new open‑source platform designed so AI agents can do scientific research in safer, better‑organized ways.

What questions did the researchers ask?

In simple terms, the paper tries to:

- Gather and explain what we’ve learned so far about OpenClaw and Moltbook, even though they’re very new.

- Spot the common design choices (and mistakes) these systems share—things like how agents get new abilities, how they “vote” on content, and how their identities persist over time.

- Build and present ClawdLab, a platform that keeps the good ideas but fixes the weak spots, so agents can do science with rules, roles, and real evidence.

How did they study it?

Because these projects are so new, there aren’t many traditional research papers yet. So the authors used a “Multivocal Literature Review” (MLR). Think of it like investigating a fast‑moving tech story by reading not just academic papers, but also:

- Official docs and GitHub repos (like instruction manuals),

- Developer interviews and tech journalism (like news reports),

- Early datasets and preprints (like sneak peeks at research).

They searched across these sources during the first two weeks after Moltbook launched. Then, using “design science” (building something as a direct answer to observed problems), they created ClawdLab based on what they found.

Key terms in everyday language:

- AI agent: a software robot that makes choices and takes actions using a LLM.

- LLM: an AI that understands and writes text (like a super‑powered autocomplete).

- Gray literature: credible sources outside academic journals (docs, blogs, interviews, repos).

- Design science: learn by building—design a tool to solve the problems you just studied.

What did they find?

The OpenClaw–Moltbook world in brief

- OpenClaw started as a simple messaging relay and quickly became a popular agent framework that runs on your own computer. Agents can connect to many chat apps, remember past interactions, and install “skills” (like apps) from a community store.

- Moltbook is a social network only for agents. Millions of agent accounts appeared fast, but researchers later showed a small number of humans controlled huge numbers of those agents. With weak limits, one agent could register many accounts.

What early studies saw on Moltbook:

- Lots of risky or pushy posts (for example, telling others to take actions), but also other agents jumping in to warn against risky behavior.

- Topics mattered: tech talk was mostly safe; political talk had much more toxic content.

- Conversations were short and speedy: most posts got no replies; if replies came, they arrived within seconds.

- Security worries: people observed prompt‑injection tricks (messages that try to hijack an agent’s behavior), crypto spam, and serious software vulnerabilities in the ecosystem.

Safety and security highlights (high level):

- Many community “skills” had weaknesses, and thousands of control panels were accidentally exposed online due to default settings. One severe bug allowed remote control with a single click. In short: rapid growth plus community add‑ons created a large attack surface.

Five repeating design patterns (and their trade‑offs)

Here are the common patterns the paper points out, with simple analogies:

- Capability extensibility via skills: like an app store for agents. Great for rapid growth, but risky if apps (skills) aren’t vetted.

- Persistent identity: agents remember and have profiles, like a gamer tag with a memory card. Helpful for long projects, but bad actors can also persist.

- Emergent group behavior: when agents gather in themed spaces (like subreddits), they form communities, norms, and sometimes echo chambers—without anyone planning it.

- Periodic re‑engagement: agents come back on a schedule (heartbeats) to post or check in, like a timer reminding them to participate.

- Social‑only evaluation: content rises or falls by upvotes. Fast and simple, but can reward hype over truth, and easy to game with many fake accounts.

A simple 3‑tier map of AI‑for‑science systems

- Tier 1: Single‑agent pipelines (one agent does most steps).

- Tier 2: Pre‑planned multi‑agent workflows (several agents with a fixed script).

- Tier 3: Fully decentralized systems (agents self‑organize, choose tasks, argue, and verify).

Most current tools are stuck in Tier 1 or 2. The paper aims for Tier 3 with ClawdLab.

ClawdLab: how it tries to fix the problems

ClawdLab is an open‑source platform where agents do science in labs with roles, rules, and evidence standards. Key ideas:

- Hard roles and governance:

- Principal Investigator (PI): the lab lead who starts votes and defines research states.

- Research Analyst: runs code and analyses.

- Scout: searches literature and prior work.

- Critic: challenges results with structured objections.

- Synthesizer: writes summaries and reports.

- Adversarial critique: critics are encouraged to poke holes in results before the lab accepts them—like good peer review.

- Evidence over popularity: to “finish” a task, agents must attach proof from actual tools (for example, a formal proof check for math or a scored model output in biology). Approval depends on this evidence, not on upvotes or agent headcounts.

- Safe tool access: agents don’t hold raw API keys. Instead, they request tools through a secure “backend proxy” (like asking a librarian to fetch the right book), and every tool call is logged for auditing.

- Multi‑model teams: different roles can run on different AI models (one model better at coding, another better at arguing), so the lab has real cognitive diversity.

- Decentralized “pull” work: no central boss agent. Each agent independently checks for tasks it’s allowed to do and picks them up, keeping the system flexible and resilient.

- Built‑in Sybil resistance: a “Sybil attack” is when someone makes many fake accounts to sway votes (like stuffing a ballot box). ClawdLab’s rules make this mostly pointless:

- Only the PI can start votes.

- Tasks can’t pass without tool‑based evidence.

- More agents mainly add more work done (reviews, analyses), not more vote power.

- Everything is logged and auditable.

Planned security add‑ons include digital signatures for provenance, plagiarism canaries, prompt‑injection filters, and anomaly monitoring.

A small example lab

The paper shows a demo lab about checking protein annotation mistakes:

- The PI creates literature‑review tasks.

- A Scout agent gathers relevant papers.

- As evidence builds, a Synthesizer writes a summary.

- The process runs in minutes without human intervention, and the result is a documented, checkable report.

Important limits to keep in mind

- The Moltbook studies looked at data from the first days after launch. Early behavior can be weird and may not last.

- Several studies used overlapping datasets and different methods, so results aren’t perfectly comparable yet.

- Conclusions about long‑term social patterns should be cautious.

Why is this important?

- Science is drowning in papers. AI agents could help explore ideas, run analyses, and keep track of evidence.

- But without guardrails, agent networks can become spammy, unsafe, or easily manipulated.

- ClawdLab’s design shifts validation from “What do others upvote?” to “What do the tools and data show?” That’s closer to how real science works.

- The architecture is modular: as AI models, tools, and security improve, labs can swap parts in and get better over time.

- If it works, this could speed up trustworthy scientific discovery while keeping humans in the loop as observers and idea‑starters.

Bottom line

This paper studies a fast‑growing agent ecosystem, spots its common failure modes (especially security and “popularity over proof”), and proposes ClawdLab: a lab‑style, role‑based, evidence‑first platform for autonomous scientific research. It aims to make multi‑agent science both powerful and reliable by rewarding verified results instead of loud voices.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

The paper leaves the following issues unresolved; addressing them would enable rigorous assessment and safe scaling of agent-led scientific research platforms like ClawdLab and improve understanding of agent-only social ecosystems such as Moltbook:

- Longitudinal evidence gap: all Moltbook studies analyze 0–5 days of activity; design a multi-month observational program to separate novelty effects from stable agent social dynamics.

- Dataset independence: multiple studies rely on overlapping Moltbook archives; curate new, non-overlapping datasets to validate findings and enable true replication.

- Peer-review maturation: the synthesis depends heavily on gray literature; establish a systematic, peer-reviewed corpus (methods, data, evaluations) for OpenClaw/Moltbook and ClawdLab.

- Formal taxonomy validation: the proposed three-tier taxonomy (single-agent, predetermined multi-agent, fully decentralized) lacks operational definitions and metrics; specify measurable criteria and test cross-platform classification reliability.

- Comparative efficacy of tiers: quantify when Tier-3 decentralized governance measurably outperforms Tier-1/2 pipelines (task classes, domains, error profiles, throughput, novelty).

- ClawdLab outcome metrics: define and report platform-level KPIs (task acceptance rates, critique resolution times, verification pass rates, error/failure modes, discovery novelty).

- Governance experiments: PI-led governance is asserted but untested; run controlled trials comparing PI-led, democratic, and consensus models on quality, speed, robustness to collusion, and failure recovery.

- Malicious PI threat model: the architecture acknowledges “trust-in-leadership” risk but offers no detection/mitigation; design and evaluate safeguards (PI reputation scoring, external audits, veto powers, automatic evidence checks before vote initiation).

- Sybil resistance claim: emergent resistance is argued informally; produce formal analyses and adversarial experiments to test robustness against coordinated vote flooding, critique spam, and task gaming.

- Role restriction enforceability: demonstrate that LLM agents cannot circumvent hard role restrictions; formally verify policy enforcement (e.g., via capability-controlled routing, tool-level guards, and prompt injection resilience).

- Multi-model orchestration criteria: specify and evaluate model selection/routing policies (diversity metrics, task-model fit, cost/latency trade-offs, failure fallback) and their effect on epistemic diversity and research quality.

- Provider proxy trustworthiness: external tool outputs are treated as ground truth; measure tool reliability, error rates, and adversarial susceptibility (e.g., poisoned datasets, manipulated APIs) and design cross-tool corroboration.

- Evidence thresholds design: domain-specific protocol constraints (e.g., proof checker logs, bio scores) lack empirical calibration; derive thresholds from benchmarks, publish calibration procedures, and evaluate false positive/negative rates.

- Biorisk and dual-use safeguards: the platform supports bioinformatics/computational biology but lacks explicit biorisk gating; add domain-specific safety screens (sequence filters, activity prediction constraints, human oversight gates) and evaluate effectiveness.

- Reproducibility guarantees: provider jobs store payloads/results, but not full environments; implement environment capture (container specs, dependency hashes, hardware/precision logs) and re-run validation to ensure deterministic replication.

- Security hardening status: several controls (Ed25519 claim signing, canary tokens, sanitization middleware, anomaly detection) are “planned”; specify deployment timelines and publish empirical security assessments post-implementation.

- OpenClaw exposure remediation: CVE-2026-25253 and default 0.0.0.0 binding led to 15,200 exposed panels; document fixed defaults, upgrade paths, and residual risks for users integrating OpenClaw-based agents with ClawdLab.

- Skill supply-chain integrity: OpenClaw’s community skill registry showed vulnerabilities; develop and test skill vetting (static/dynamic analysis, sandboxing, code signing, provenance scoring) for any skills used within ClawdLab.

- Prompt-injection resilience: detail and benchmark sanitization and content mediation at all execution stages (input parsing, external content retrieval, tool invocation, memory reads/writes).

- Scalability and performance under load: the 45–90s polling/5m heartbeat model is untested at scale; stress-test for contention, starvation, queue latency, and database I/O bottlenecks across thousands of agents/labs.

- Economic feasibility: quantify compute and storage costs per agent/task, model selection cost curves, and cost-quality Pareto fronts to inform sustainable deployment.

- Human participation boundaries: humans can suggest but not decide; determine when human gatekeeping is required (high-risk domains) and evaluate hybrid oversight models without undermining agent autonomy.

- Authorship, credit, and IP: clarify ownership of agent-generated artifacts, citation practices, license compliance for ingested sources, and conflict resolution for multi-agent contributions.

- Knowledge management across labs: define cross-lab sharing mechanisms (ontologies, knowledge graphs, deduplication, citation networks) and evaluate their effect on reuse and error propagation.

- Benchmarking suites: create standardized task suites with ground truth (math proof verification, ML benchmarking, bioinformatics predictions, materials screening) to compare agents, governance models, and protocol thresholds.

- Critique quality control: design criteria and automated checks to detect low-quality or adversarial critiques, and measure how critique periods affect error correction vs. throughput.

- Attack surfaces at the provider proxy: analyze risks of credential theft, job tampering, and result spoofing; implement scoped credentials, per-job ephemeral tokens, and end-to-end attestations.

- Frontend observability vs. privacy: balance public auditable logs with privacy/security needs; define redaction policies and sensitive artifact handling (e.g., embargoed datasets).

- Internationalization and inclusivity: assess language/domain coverage and bias across models/tools; add multilingual support and evaluate equity in participation and outcomes.

- Regulatory compliance: map data use and verification workflows to applicable regulations (e.g., data licensing, clinical trial data usage, institutional review requirements) and integrate compliance checks.

Glossary

- 0.0.0.0: The IPv4 wildcard address that binds a service to all network interfaces on a host. "due to the framework's default binding to 0.0.0.0"

- Action-Inducing Risk Score: A lexicon-based metric estimating how likely text is to prompt real-world actions. "using a lexicon-based Action-Inducing Risk Score."

- adversarial scientific review: A structured process where agents critically challenge results to expose flaws or falsify claims. "domain-specific architecture for computational verification, lab-based collaboration, and adversarial scientific review."

- agent-only social network: A platform where only AI agents can post and interact; humans observe read-only. "Moltbook, an agent-only social network where AI agents autonomously post, comment, and interact while human users are restricted to read-only observation (Schlicht, 2026)."

- Agent Skill convention: A packaging standard (from Anthropic) for reusable agent skills/plugins. "following Anthropic's Agent Skill convention,"

- analysis provider proxy: A server-side mediator that runs external analysis jobs and returns normalized results to agents. "An analysis provider proxy accepts task descriptions, optional dataset references with SHA-256 checksums, and analysis parameters,"

- backend provider proxy: The platform-layer gateway that securely brokers all external tool/API calls on behalf of agents. "the backend provider proxy, and the task lifecycle with governance flow."

- canary tokens: Embedded markers used to detect plagiarism or data exfiltration via later callbacks or matches. "canary tokens for plagiarism detection through embedding similarity,"

- CDP: Chrome DevTools Protocol; low-level interface for controlling and automating browsers. "browser automation via CDP and Playwright,"

- ClawHub: The decentralized registry for community-authored OpenClaw skills/plugins. "designated ClawHub,"

- Common Vulnerability Scoring System (CVSS): A standard for rating the severity of security vulnerabilities. "scored 8.8 on the Common Vulnerability Scoring System (CVSS)"

- computational verification: Validating scientific claims by requiring evidence from computational tools instead of social approval. "domain-specific architecture for computational verification, lab-based collaboration, and adversarial scientific review."

- consensus: A governance mode requiring unanimous approval to accept results or decisions. "consensus (requiring unanimous approval)"

- contextual embeddings: Vector representations that capture word or document meaning in context for analysis or clustering. "using contextual embeddings, unsupervised clustering, and multimodal LLM-assisted thematic synthesis."

- CVE-2026-25253: A specific, cataloged software vulnerability identifier in the CVE system. "vulnerability CVE-2026-25253, scored 8.8 on the Common Vulnerability Scoring System (CVSS),"

- data-driven silicon sociology: A framework for empirically studying social structures emerging among AI agents. "the theoretical framework of 'data-driven silicon sociology'"

- decentralised pull model: An architecture where agents autonomously poll for eligible work instead of being centrally orchestrated. "a decentralised pull model in which agents autonomously poll for work"

- Ed25519: A modern elliptic-curve digital signature algorithm used for efficient, secure signing. "Ed25519 claim signing with signature chains for tamper-evident provenance,"

- formal proof checker: Software that mechanically verifies the correctness of formal mathematical proofs. "a compilation log from a formal proof checker."

- foundation models: Large, broadly pre-trained models adaptable to many downstream tasks. "foundation models, capabilities, governance, and evidence requirements are independently modifiable,"

- Gateway process: The local OpenClaw core that manages sessions, tools, and events for agents. "centered on a Gateway process that manages sessions, channels, tools, and events via WebSocket"

- heartbeat system: A scheduler or liveness mechanism that triggers periodic agent activity. "A heartbeat system schedules agents to visit approximately every four hours"

- JSON Web Tokens (JWT): A compact, signed token standard for authentication and authorization. "Agents authenticate via JSON Web Tokens (JWT)"

- LLM-agnostic: Designed to work with multiple LLM providers without dependence on a single one. "LLM-agnostic autonomous agent framework"

- Memory Vault: A local store maintaining persistent agent memory across sessions. "Persistent memory is maintained through a local Memory Vault"

- Multivocal Literature Review (MLR): A systematic review method that integrates peer-reviewed and gray literature. "adopts the Multivocal Literature Review (MLR) framework"

- ontological knowledge graphs: Structured graphs encoding entities, types, and relations to represent domain knowledge. "combining ontological knowledge graphs with multi-agent LLM systems"

- Playwright: A browser automation framework for controlling web pages and scraping or testing. "browser automation via CDP and Playwright,"

- Principal Investigator (PI): The role responsible for leading labs, initiating votes, and concluding investigations. "The Principal Investigator (PI) agent interprets"

- PRISMA: Reporting guidelines for conducting and documenting systematic reviews and meta-analyses. "Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines"

- prompt injection: An attack that manipulates model behavior via maliciously crafted inputs or context. "The report identified 506 prompt injection attacks,"

- quorum rule: A minimum participation requirement that must be met for a vote to be valid. "Voting follows a quorum rule enforced at the API level:"

- reinforcement learning from human feedback: Training LLMs using human preference signals to shape behavior. "trained through reinforcement learning from human feedback (Ouyang et al."

- remote code execution: A high-severity vulnerability allowing attackers to run arbitrary code on a target system. "enables one-click remote code execution via authentication token exfiltration,"

- self-driving laboratories: Closed-loop experimental platforms integrating ML and robotics for autonomous discovery. "Self-driving laboratories, which integrate ML with robotic experimental platforms in closed-loop workflows,"

- SHA-256: A cryptographic hash function producing 256-bit digests for integrity checks. "with SHA-256 checksums"

- signature chains: Linked digital signatures forming a verifiable sequence of attestations. "signature chains for tamper-evident provenance,"

- SOUL.md: A per-agent configuration file defining personality and behavioral parameters. "a SOUL.md personality definition file"

- submolts: Topic-specific communities on Moltbook, analogous to subreddits. "Content is organized into submolts (analogous to subreddits),"

- Sybil attacks: Attempts to subvert systems by creating many fake identities to gain undue influence. "structural resistance to Sybil attacks"

- Sybil resistance: The property of a system that limits or neutralizes the impact of Sybil attacks. "the architecture provides emergent Sybil resistance as a structural consequence."

- tamper-evident provenance: Metadata design that makes any post-hoc modification detectable. "tamper-evident provenance,"

- TextBlob: A Python NLP toolkit often used for sentiment analysis and text processing. "using dual sentiment analysis (TextBlob and VADER),"

- tool-augmented retrieval architectures: LLM systems enhanced with external tools for search, retrieval, and synthesis. "tool-augmented retrieval architectures has produced agentic literature systems"

- toxicity scale: A rubric or levels used to categorize the harmfulness of content. "a five-level toxicity scale."

- unsupervised clustering: Grouping data based on intrinsic patterns without labeled training data. "unsupervised clustering,"

- VADER: A rule/lexicon-based sentiment analysis method tailored for social media text. "using dual sentiment analysis (TextBlob and VADER),"

- versioned lab state system: A mechanism to track and manage evolving research hypotheses and objectives over time. "versioned lab state system"

- WebSocket: A protocol enabling persistent, full-duplex communication between client and server. "via WebSocket on the user's local machine"

- X OAuth: Authentication via OAuth on X (formerly Twitter) for verifying agent ownership. "X (formerly Twitter) OAuth."

Practical Applications

Immediate Applications

Below is a concise set of actionable use cases that can be deployed now, mapped to sectors, with potential tools/workflows and key dependencies.

- Autonomous research labs for literature triage and synthesis

- Sector: academia, pharma/biotech, materials

- What: Stand up small

ClawdLabinstances to run agent-led literature reviews, evidence synthesis, and preliminary computational checks across math, ML, computational biology, materials science, and bioinformatics. - Tools/Workflows: Role cards (scout/analyst/critic/synthesizer), protocol constraints (e.g., required benchmarks/proof logs), provider proxies for arXiv/PubMed.

- Dependencies: Reliable LLM tool-use; access to literature APIs; institutional approval for AI agents operating in research workflows.

- Evidence-anchored validation in data science and ML pipelines

- Sector: software, AI/ML engineering

- What: Require protocol-defined artifacts (e.g., unit/integration tests, benchmark tables with seeds, dataset checksums) for task completion before approval/voting.

- Tools/Workflows: Protocol documents with domain-specific completion criteria; audit logs of provider jobs; structured artifacts attached to tasks.

- Dependencies: CI integration; standardized dataset management; enforceable definitions of done within org tooling.

- Multi-model orchestration for cognitive diversity in agent teams

- Sector: enterprise AI, research groups

- What: Assign different foundation models to distinct roles (e.g., critic on adversarial reasoning model; analyst on code/tool-use model) to reduce correlated errors and improve rigor.

- Tools/Workflows: Role-based model routing; SOUL.md configurations for epistemic style; heartbeat and polling cadence controls.

- Dependencies: Access to multiple LLM providers; policy for model selection by role; monitoring for cross-model performance.

- PI-led governance with hard role restrictions to improve quality control

- Sector: enterprise R&D, academia

- What: Adopt PI-controlled quorum voting and strict role permissions to gate acceptance of results and prevent free-form agent drift.

- Tools/Workflows: Quorum rules, structured critique periods, resolution states (proven/disproven/pivoted/inconclusive).

- Dependencies: Clearly defined decision authority; cultural buy-in for governed agent collaboration; transparent audit trails.

- Backend provider proxy for credential safety and auditability

- Sector: IT/security, enterprise AI platforms

- What: Route all external tool/API access through server-side proxies so agents never hold API keys; log all calls and results.

- Tools/Workflows: Secure gateways; normalized result schemas; job tracking with timestamps and error states.

- Dependencies: Centralized credential management; observability stack; rate limiting and access control.

- Agent QA via structured adversarial critique

- Sector: software engineering, data science

- What: Introduce critic agents that file structured challenges before tasks can advance to voting; use them as internal “red teams” for analyses, code, and reports.

- Tools/Workflows: Critique templates; escalation triggers; alternative task proposals.

- Dependencies: Organizational tolerance for adversarial review; training prompts for effective critiques; metrics for critique resolution.

- Secure agent deployment guidelines for local-first frameworks

- Sector: cybersecurity, IT operations

- What: Immediately fix common misconfigurations revealed by CVE-2026-25253 and exposed control panels (e.g., avoid binding to 0.0.0.0; enforce auth; token hygiene).

- Tools/Workflows: Hardening playbooks; network segmentation; secret scanning; endpoint protection.

- Dependencies: Ops maturity; change management; vulnerability monitoring and patch cadence.

- Agent skill security benchmarking

- Sector: cybersecurity, platform engineering

- What: Use a “Personalized Agent Security Bench”-style methodology to evaluate tool/skill execution stages (prompt handling, content access, tool invocation, memory retrieval).

- Tools/Workflows: Threat skill catalog; staged test harnesses; propagation analysis across sessions.

- Dependencies: Representative skills registry; sandboxed environments; automated reporting of vulnerabilities.

- Sybil-resistant evidence gating for internal agent forums

- Sector: enterprise knowledge platforms, communities of practice

- What: Prevent vote manipulation by requiring protocol-compliant evidence artifacts before PI-triggered voting; weight acceptance on artifacts, not agent headcount.

- Tools/Workflows: Evidence thresholds per domain; PI initiation gates; public activity logs.

- Dependencies: Clear protocol design; artifact validators; governance policy.

- Human suggestion channels without direct state mutation

- Sector: education, enterprise collaboration

- What: Allow humans to propose ideas and discuss while keeping agents autonomous over execution and state changes.

- Tools/Workflows: Suggestion posts; PI conversion of suggestions to tasks; read-only human participation in task state.

- Dependencies: UI/UX for suggestion capture; social norms for agent autonomy; instructor/manager training.

- Safety and social behavior studies in agent-only sandboxes

- Sector: computational social science, platform safety

- What: Replicate Moltbook-style observatories to study action-inducing language, toxicity gradients, and normative behavior under controlled conditions.

- Tools/Workflows: Topic taxonomies; sentiment/toxicity pipelines; heartbeat and posting rhythm analytics (e.g., interaction half-life).

- Dependencies: Ethics review for AI-only environments; dataset curation; containment of harmful behaviors.

- Provenance and reproducibility for compliance

- Sector: regulated industries (healthcare, finance), academia

- What: Leverage tamper-evident logs and artifact storage (S3 + checksums) to support audits, replication, and compliance reporting.

- Tools/Workflows: SHA-256 checksums; activity logs; document stores; planned Ed25519 signing chains.

- Dependencies: Data retention policies; legal alignment; standardized provenance schemas.

Long-Term Applications

The following applications require further research, scaling, or development before broad deployment; each includes sector alignment, potential tools/workflows, and key assumptions.

- Fully decentralized, governed multi-agent scientific ecosystems integrated with self-driving labs

- Sector: biotech, materials, chemistry

- What: Tier-3 architectures where agents coordinate wet-lab robots, design experiments, run closed-loop ML, and publish computationally verified results.

- Tools/Workflows: Robotics orchestration; lab information management systems; protocol-defined evidence for physical experiments.

- Dependencies: Reliable robotics interfaces; safety certifications; high-trust verification standards; robust multi-model coordination.

- Computationally verifiable peer review as a publishing standard

- Sector: academic publishing

- What: Journals require protocol-encoded evidence (proof checker logs, benchmark reproductions, dataset fingerprints) for acceptance.

- Tools/Workflows: Domain profiles for mathematics (proof systems), ML (reproducible benchmarks), biology (structure/assay thresholds).

- Dependencies: Community consensus on standards; tooling integration in submission systems; independent validators.

- Federated, security-vetted skill registries with signed artifacts

- Sector: software supply chain, platform ecosystems

- What: Global registries for agent skills/plugins with Ed25519 signatures, provenance chains, and automated security vetting.

- Tools/Workflows: Signature chain infrastructure; static/dynamic analysis; curated trust tiers.

- Dependencies: Governance body; interoperability standards; contributor verification; funding for curation.

- Policy and regulation for agent-only platforms

- Sector: public policy, digital governance

- What: Frameworks for identity, accountability, rate limiting, and harm mitigation in agent social networks and marketplaces.

- Tools/Workflows: OAuth-style ownership verification; heartbeat controls; transparency reports; red-teaming requirements.

- Dependencies: Legislative processes; cross-jurisdiction alignment; platform compliance mechanisms.

- Agent-led grant triage and evaluation with adversarial review

- Sector: research funding, philanthropy

- What: Multi-agent labs conduct literature audits, evidence checks, and critiques to prioritize proposals before human panel review.

- Tools/Workflows: Role-based evaluation pipelines; protocol thresholds per domain; auditability dashboards.

- Dependencies: Trust calibration with funders; bias controls; human oversight; standardized scoring rubrics.

- Cross-institutional automated replication networks

- Sector: academia, open science

- What: Agents coordinate replication studies across universities with shared protocols, provider proxies, and artifact exchanges.

- Tools/Workflows: Replication registries; canonical datasets; standardized task schemas; provenance sharing.

- Dependencies: Data-sharing agreements; reproducibility incentives; infrastructure funding; privacy compliance.

- Sybil-resistant decentralized knowledge markets

- Sector: knowledge platforms, web3

- What: Marketplaces where claims trade based on protocol-verified evidence rather than upvotes; value accrues to validated artifacts.

- Tools/Workflows: Evidence-gated listing; escrow tied to validator outputs; dispute resolution via critic roles.

- Dependencies: Economic design; oracle security; legal status of agent transactions; community adoption.

- Domain-specific protocol expansion into clinical, energy, and finance

- Sector: healthcare, energy, finance

- What: Evidence requirements tied to clinical trial registries/EHRs (healthcare), grid simulation/dispatch metrics (energy), strict backtesting with audit logs (finance).

- Tools/Workflows: Integrations with EHR/CT registries; power system simulators; financial backtesting engines with seed control.

- Dependencies: Regulated data access; model validation; safety/privacy constraints; sector-specific standards.

- Configurable governance standards (PI-led, democratic, consensus) for enterprise AI teams

- Sector: enterprise software, HR/operations

- What: Governance SDKs let organizations select and enforce agent decision models matched to team culture and risk tolerance.

- Tools/Workflows: Governance APIs; quorum settings; audit hooks; role templates.

- Dependencies: Change management; training; measurement of governance effectiveness; integration with org policies.

- Advanced defense layers for agent ecosystems

- Sector: cybersecurity

- What: End-to-end anomaly detection (submission frequency, domain switching, vote clustering), prompt injection sanitization, canary tokens for plagiarism detection.

- Tools/Workflows: Behavioral baselines; similarity embeddings; threat intelligence feedback loops.

- Dependencies: High-quality telemetry; red-teaming; continuous model updates; incident response processes.

- Project-based learning via agent-run laboratories

- Sector: education (higher ed, advanced secondary)

- What: Students propose problems; agent labs execute literature reviews, analyses, and syntheses under instructor governance.

- Tools/Workflows: Classroom

ClawdLabinstances; curriculum-aligned protocols; human suggestion channels. - Dependencies: Safe-model deployment; institutional IT support; assessment redesign; academic integrity safeguards.

- International provenance and data governance frameworks for agent-conducted science

- Sector: legal/compliance, research policy

- What: Standardize tamper-evident signatures, storage, and cross-border audit rules for agent-generated artifacts and decisions.

- Tools/Workflows: Ed25519 signature chains; immutable logs; interoperable provenance schemas.

- Dependencies: Treaty-level coordination; archival infrastructure; compliance tooling; sustained funding.

Collections

Sign up for free to add this paper to one or more collections.