From Statics to Dynamics: Physics-Aware Image Editing with Latent Transition Priors

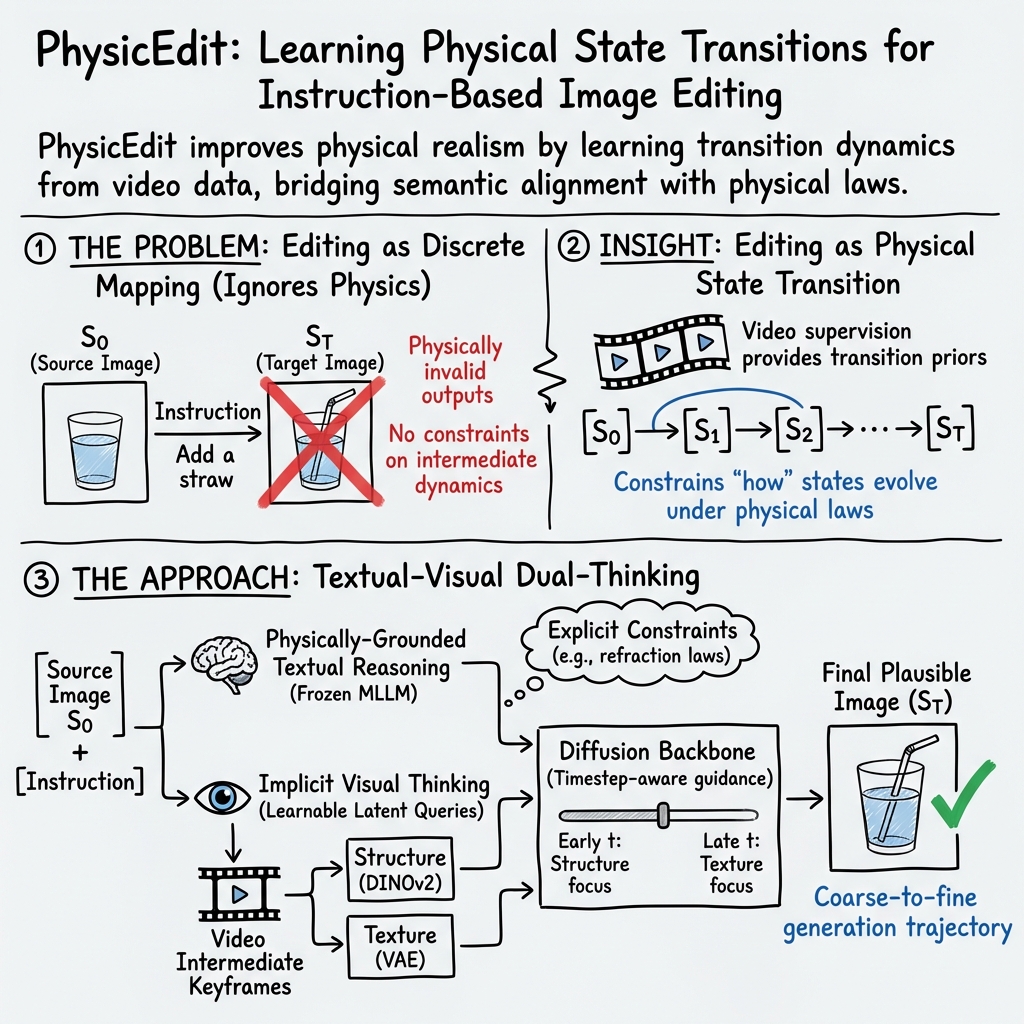

Abstract: Instruction-based image editing has achieved remarkable success in semantic alignment, yet state-of-the-art models frequently fail to render physically plausible results when editing involves complex causal dynamics, such as refraction or material deformation. We attribute this limitation to the dominant paradigm that treats editing as a discrete mapping between image pairs, which provides only boundary conditions and leaves transition dynamics underspecified. To address this, we reformulate physics-aware editing as predictive physical state transitions and introduce PhysicTran38K, a large-scale video-based dataset comprising 38K transition trajectories across five physical domains, constructed via a two-stage filtering and constraint-aware annotation pipeline. Building on this supervision, we propose PhysicEdit, an end-to-end framework equipped with a textual-visual dual-thinking mechanism. It combines a frozen Qwen2.5-VL for physically grounded reasoning with learnable transition queries that provide timestep-adaptive visual guidance to a diffusion backbone. Experiments show that PhysicEdit improves over Qwen-Image-Edit by 5.9% in physical realism and 10.1% in knowledge-grounded editing, setting a new state-of-the-art for open-source methods, while remaining competitive with leading proprietary models.

Explain it Like I'm 14

Overview

This paper is about making image edits that follow the real rules of physics. Today’s AI image editors are good at matching what you ask for (like “add a straw”), but they often break physics (like forgetting that a straw looks bent in water because of refraction). The authors propose a new way: treat image editing as a step-by-step physical change over time, not just a before-and-after swap. They also build a big video dataset and a new model to help AI “think” about physics while editing.

Key Questions and Goals

The paper sets out to:

- Reframe image editing as a “physical state transition,” meaning the scene changes over time following physical laws.

- Build a large, physics-focused video dataset (PhysicTran38K) that shows real transitions like melting, bending, or light refraction.

- Create a new editing system (PhysicEdit) that combines text-based reasoning about physics with visual guidance learned from videos.

- Test whether this approach produces more realistic, physics-faithful edits than existing models.

How They Did It (Methods)

Idea: From Static to Dynamic

Most editors learn a direct jump from the starting image to the final image. That’s like saying “I start here, end there” without showing the journey. The authors argue you need the journey—how things change step by step—to respect physics. Videos naturally show these steps, so they use videos to teach the model how scenes evolve.

Building the PhysicTran38K Dataset

Think of this dataset as a teaching library of “how things change in the real world.” It includes 38,000 video clips covering five big physics areas: Mechanical, Thermal, Material, Optical, and Biological. Each clip has:

- A start state (e.g., a mirror sitting on a desk),

- A trigger (e.g., rotate the mirror),

- A transition (what happens over time),

- A final state.
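Each clip's four-part structure can be pictured as a small record. The sketch below assumes that representation. The class name `TransitionClip` and the `to_prompt` layout are hypothetical; the dataset's actual prompt template wording is not given in this summary. Only the five domain names come from the text.

```python
from dataclasses import dataclass

# The five physical domains named in the text.
DOMAINS = ("Mechanical", "Thermal", "Material", "Optical", "Biological")


@dataclass
class TransitionClip:
    """Hypothetical record for one clip: start -> trigger -> transition -> final."""

    domain: str
    start_state: str
    trigger: str
    transition: str
    final_state: str

    def __post_init__(self) -> None:
        # Reject domains outside the five-domain taxonomy.
        if self.domain not in DOMAINS:
            raise ValueError(f"unknown domain: {self.domain}")

    def to_prompt(self) -> str:
        # Hypothetical prompt layout, not the real template used to generate the videos.
        return (
            f"Start: {self.start_state}. "
            f"Trigger: {self.trigger}. "
            f"Transition: {self.transition}. "
            f"Final: {self.final_state}."
        )


# Illustrative example built from the mirror case above.
clip = TransitionClip(
    domain="Optical",
    start_state="a mirror sitting on a desk",
    trigger="rotate the mirror",
    transition="the reflected patch of light sweeps across the wall",
    final_state="the reflection rests at a new angle",
)
print(clip.to_prompt())
```

Writing the trigger separately from the transition makes it explicit that the model is asked to predict what happens *because of* an action, not just what the end image looks like.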

To make and clean this dataset:

- They generate videos with a text-to-video model using structured prompts.

- They filter out clips with camera shakes (so changes are about the scene, not the camera).

- They check the physics using simple rules (like the law of reflection: “angle in equals angle out”) with an AI helper. Clips that break the rules get rejected or flagged.

- They annotate each video with clear instructions and a “physics reasoning” summary that explains why the changes follow physical laws.

- They also save a few “in-between frames” (keyframes) to teach the model the mid-way steps, not just the start and end.
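The two filtering steps (drop clips with camera motion, then keep clips that pass the physics check) can be sketched as below. This is a minimal illustration under stated assumptions: the camera-motion number is assumed to come from some camera-pose estimator, each principle is assumed to receive a pass/fail judgment, and the verification score is assumed to be the fraction of principles judged satisfied. The 0.5 retention threshold matches the one cited in the knowledge-gaps list; `max_camera_motion=0.05` is a made-up placeholder.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CandidateClip:
    clip_id: str
    # Assumed: scalar camera-motion estimate from a pose estimator (stage 1 input).
    camera_motion: float
    # Assumed: one pass/fail judgment per proposed physical principle (stage 2 input).
    principle_checks: List[bool] = field(default_factory=list)


def verification_score(checks: List[bool]) -> float:
    """Assumed definition: fraction of principles judged satisfied (0.0 if none)."""
    return sum(checks) / len(checks) if checks else 0.0


def filter_clips(clips, max_camera_motion=0.05, retain_threshold=0.5):
    """Two-stage filter: drop moving-camera clips, then keep clips scoring >= threshold."""
    kept = []
    for clip in clips:
        if clip.camera_motion > max_camera_motion:  # stage 1: static-camera check
            continue
        if verification_score(clip.principle_checks) >= retain_threshold:  # stage 2
            kept.append(clip)
    return kept


clips = [
    CandidateClip("a", 0.01, [True, True, False]),  # static, 2/3 principles hold -> kept
    CandidateClip("b", 0.20, [True]),                # camera moves -> dropped
    CandidateClip("c", 0.00, [False, False, True]),  # static, 1/3 -> dropped
]
print([c.clip_id for c in filter_clips(clips)])  # ['a']
```

Running the cheap geometric check first means the more expensive AI-based physics judgment is only spent on clips that could survive anyway.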

The PhysicEdit Model: Two Ways of Thinking

PhysicEdit combines two “minds” to edit images correctly:

- Physically-Grounded Reasoning (Text-based)
  - A smart text-and-vision AI (kept “frozen,” meaning its parameters aren’t changed) writes down the physics rules and a plan for the edit.
  - Example: “Turning off a lamp should reduce brightness gradually, change shadows, and keep the scene’s geometry consistent.”

- Implicit Visual Thinking (Learned visual guidance)
  - The model learns “transition queries,” which you can imagine as small internal hints that represent how a scene should change over time.
  - It trains these hints using the video keyframes:
    - One visual encoder focuses on structure and shape (think outlines, positions, and geometry).
    - Another encoder focuses on texture and details (think color, patterns, and surface look).
  - During generation, the model mixes structure-first and texture-later guidance in a time-aware way:
    - Early steps focus more on getting the overall shape right.
    - Later steps refine the look and details.
    - This fits how “diffusion” models work: they start with noise and gradually “paint” the image from rough to detailed, like sketching before shading.
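The time-aware mixing can be written as a linear blend t·F_DINO + (1 − t)·F_VAE of structure features (DINOv2) and texture features (VAE). The sketch below assumes t runs from 1 (pure noise, earliest step) down to 0 (clean image), the usual rectified-flow convention, so early steps lean on structure. It also assumes both feature sets have already been projected to the same shape, for example 64 transition queries by d channels. The function name `mix_guidance` is hypothetical.

```python
import numpy as np


def mix_guidance(f_dino: np.ndarray, f_vae: np.ndarray, t: float) -> np.ndarray:
    """Timestep-aware blend t * F_DINO + (1 - t) * F_VAE.

    Assumes t in [0, 1] with t = 1 at the noisiest (earliest) step, and that
    both feature arrays share a shape, e.g. (K queries, d channels).
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    if f_dino.shape != f_vae.shape:
        raise ValueError("feature shapes must match")
    return t * f_dino + (1.0 - t) * f_vae


k, d = 64, 8
f_dino = np.ones((k, d))   # stand-in structure features
f_vae = np.zeros((k, d))   # stand-in texture features
early = mix_guidance(f_dino, f_vae, t=0.9)  # mostly structure
late = mix_guidance(f_dino, f_vae, t=0.1)   # mostly texture
```

A fixed linear schedule is the simplest choice that realizes "structure first, texture later"; the knowledge-gaps list notes that learned or adaptive gating has not been explored.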

A note on terms:

- “Diffusion model”: An AI that creates images by starting with random noise and repeatedly improving it, adding structure and details step by step.

- “Latent transition priors”: Learned patterns inside the model about how scenes usually change, used to guide edits.

- “LoRA” training: A lightweight way to fine-tune large models without retraining everything.
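LoRA keeps the original weight matrix W frozen and learns only a small low-rank correction B·A, so the layer computes W·x + (α/r)·B·A·x. The NumPy sketch below shows the generic technique from Hu et al. (2022); the rank, scaling, and initialization values are illustrative defaults, not PhysicEdit's reported settings.

```python
import numpy as np


class LoRALinear:
    """Frozen linear layer plus a trainable low-rank update: y = W x + (alpha / r) * B A x."""

    def __init__(self, weight: np.ndarray, rank: int = 4, alpha: float = 8.0, seed: int = 0):
        rng = np.random.default_rng(seed)
        out_dim, in_dim = weight.shape
        self.weight = weight                                 # frozen, never updated
        self.A = rng.normal(0.0, 0.01, size=(rank, in_dim))  # trainable down-projection
        self.B = np.zeros((out_dim, rank))                   # trainable up-projection, zero-initialized
        self.scale = alpha / rank

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # x has shape (batch, in_dim); the result has shape (batch, out_dim).
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def num_trainable(self) -> int:
        return self.A.size + self.B.size


rng = np.random.default_rng(1)
W = rng.standard_normal((16, 32))
layer = LoRALinear(W, rank=4)
x = rng.standard_normal((2, 32))
print(layer.num_trainable(), W.size)  # 192 512
```

Because B starts at zero, the adapted layer initially behaves exactly like the original one, and training only touches 192 numbers here instead of all 512; at the scale of a large diffusion model the savings are far larger.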

Why this design matters

- During training, the model sees videos (so it learns the steps).

- During use (inference), you only give it one image and an instruction. The model uses its learned “transition queries” and physics reasoning to fill in the missing steps, producing a physically believable final image.

Main Findings and Why They Matter

The authors test PhysicEdit on two benchmarks:

- PICABench: Checks whether edits obey physics (like light behavior, refraction, deformation, and causal changes).

- KRISBench: Checks whether edits reflect correct knowledge and time-based understanding.

Key results:

- PhysicEdit beats leading open-source editors and is competitive with top proprietary models.

- Compared to its base model (Qwen-Image-Edit), it improves physical realism by 5.9% and knowledge-grounded editing by 10.1%.

- Biggest gains show up in tasks that need dynamic understanding:
  - Light source effects (how brightness and shadows change),
  - Deformation (bending, stretching),
  - Causality (what follows from a trigger),
  - Refraction (how things look through transparent materials like water or glass).

- On KRISBench, PhysicEdit improves time perception and natural science tasks, suggesting it “understands” how scenes evolve and respects physical laws.

In simple terms: PhysicEdit doesn’t just add or remove things; it changes the scene in ways that look and feel physically real.

What This Means Going Forward

- Better tools: Editors that follow physics can help artists, designers, educators, and engineers create believable images, test ideas, and teach science concepts.

- Trustworthy visuals: It reduces weird, unrealistic results, making edited images more consistent with how the real world works.

- Caution: More realistic editing can also make misinformation harder to spot. The authors encourage responsible use and research into ways to detect synthetic content.

- Next steps: This approach pushes image editing toward real-world simulation—future models might handle even more complex physics, like fluid flows or material breakage, with higher precision.

Overall, the paper shows that teaching AI “how things change” (not just “what things are”) is a key ingredient of physics-aware image editing.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps that remain unresolved in the paper and could guide future research:

- Synthetic-data dependence: PhysicTran38K is primarily generated by Wan2.2-T2V with LLM-based verification; the extent to which learned priors transfer to real-world, camera-captured scenes remains untested.

- Verification reliability: Principle-driven filtering relies on GPT-5-mini judgments with a 0.5 retention threshold; the rate of false positives/negatives and their downstream impact on learned priors is not quantified.

- Generator bias: The model may inherit artifacts or biases specific to the Wan video generator; no analysis isolates and measures generator-induced biases in training data.

- Limited physics coverage: The taxonomy omits complex domains like turbulent fluids, multiphase flows, electromagnetism beyond basic optics, plasticity/viscoelasticity, and thermodynamics with coupled heat–mass transfer.

- Static-camera constraint: Training videos enforce static cameras; the method’s ability to honor physics under viewpoint changes, parallax, and multi-view consistency is untested.

- Real-video supervision: No experiments with real or hybrid (real+synthetic) video supervision to assess robustness to real noise, calibration errors, and natural variability.

- Temporal generalization: Although trained on videos, the system is not evaluated on producing or maintaining temporally coherent video edits; its ability to generate full transition sequences remains open.

- Intermediate-state controllability: Users cannot steer or inspect intermediate states (e.g., adjust “time” or physical parameters like gravity, refractive index); mechanisms for interactive or parameterized control are absent.

- Handling impossible or contradictory edits: The system’s behavior on instructions that violate physical laws (e.g., “water flows upward without support”) is not characterized—reject, reinterpret, or comply?

- Reasoning correctness: The frozen Qwen2.5-VL produces physics “reasoning,” but its factual accuracy and consistency with the image content are not evaluated; failure cases and their effects on outputs are unclear.

- Hallucination containment: No mechanisms ensure that incorrect textual constraints from the MLLM do not misguide the diffusion model; detecting and mitigating erroneous reasoning remains unaddressed.

- No explicit physics constraints: The framework learns priors but does not incorporate differentiable physics, symbolic constraints, or conservation laws (e.g., energy, mass), limiting guarantees of physical validity.

- Feature choice limitations: Transition supervision uses DINOv2 (structure) and a VAE (texture); geometry-aware signals (depth, normals), optical flow, or material-property estimators are not explored.

- Fixed mixing schedule: The timestep-aware linear mixing $t \cdot F_{\text{DINO}} + (1 - t) \cdot F_{\text{VAE}}$ is hand-designed; learned or adaptive gating strategies and their potential benefits are unstudied.

- Query design sensitivity: The number, dimensionality, and placement of transition queries (K=64) are fixed; sensitivity analyses and minimal/optimal configurations are missing.

- Training regime underexplored: Only one epoch of LoRA fine-tuning is reported; effects of longer training, different adapters, or full finetuning on stability and performance are not assessed.

- Decoupled optimization: Transition queries are updated only by the transition loss, and the diffusion backbone only by $\mathcal{L}_{\text{diff}}$; whether joint or partially coupled training improves synergy is untested.

- Backbone dependence: The approach is built on Qwen-Image-Edit; portability to other diffusion backbones (e.g., Flux, SDXL variants) and to non-diffusion editors is unverified.

- Multi-turn and compositional edits: The capability to compose multiple sequential edits with consistent physics across steps remains unexamined.

- Multi-object interactions: Contact mechanics, frictional stacking, granular media, and soft–rigid couplings are scarcely addressed; dataset and model handling of these interactions is unclear.

- Illumination complexity: Cases with multiple light sources, caustics, participating media, and global illumination effects are not systematically evaluated with physics-based metrics.

- Evaluation metrics: Benchmarks rely heavily on LLM-based scoring; quantitative, law-specific metrics (e.g., measuring Snell’s law compliance, shadow geometry consistency) are absent.

- Robustness to occlusion and partial observability: How the model infers unobserved causes (hidden forces, occluded interactions) is not analyzed.

- Domain shift to scientific/industrial use: The framework’s precision under strict physical tolerances (e.g., optical systems, mechanical stress scenarios) beyond visual plausibility is not evaluated.

- Data noise handling: Retained videos may include contradictions (logged as constraints); the effect of noisy or conflicting supervision on learned priors and model robustness is not quantified.

- Failure-mode catalog: Systematic analysis of failure patterns (by transition type, materials, lighting, or instruction phrasing) is missing.

- Efficiency and latency: Relative inference/training compute, memory overhead from reasoning and transition queries, and runtime comparisons to baselines are not reported.

- Security and safety: No watermarking, provenance, or edit-trace mechanisms are proposed despite increased realism; detection of physically plausible yet semantically deceptive edits is left open.

- Licensing and reproducibility: Long-term accessibility of the video generator, LLMs, and their versions used in dataset construction is not ensured; reproducibility under different generator/LLM versions is untested.

- Dataset balance: While overall counts are given, per-transition balance and coverage of edge cases (extreme parameters, rare materials) are not detailed; potential class imbalance effects are unmeasured.
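As an illustration of the law-specific metrics the list calls for, a Snell's-law check compares a refraction angle measured in an edited image against the angle predicted by n₁·sin θ₁ = n₂·sin θ₂. The sketch assumes the incidence and refraction angles have already been measured from the image (the hard part, not shown) and uses 1.33 as a typical refractive index for water. Function names are hypothetical.

```python
import math


def refraction_angle(theta_in_deg: float, n1: float, n2: float):
    """Snell's law n1 sin(theta_in) = n2 sin(theta_out); None under total internal reflection."""
    s = n1 * math.sin(math.radians(theta_in_deg)) / n2
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))


def snell_error(theta_in_deg: float, theta_out_measured_deg: float,
                n1: float = 1.0, n2: float = 1.33) -> float:
    """Absolute gap (degrees) between a measured refraction angle and Snell's prediction."""
    predicted = refraction_angle(theta_in_deg, n1, n2)
    if predicted is None:
        return math.inf  # no refracted ray should exist at all
    return abs(predicted - theta_out_measured_deg)


# Hypothetical measurements from an edited "straw in water" image (air -> water).
print(refraction_angle(30.0, 1.0, 1.33))
print(snell_error(30.0, 30.0))  # an unbent straw is off by about 8 degrees
```

Unlike an LLM-assigned score, a metric like this has a physical unit and a known zero, which makes failures directly interpretable.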

Glossary

- Ablation study: A controlled experiment that removes or alters components to assess their contribution to performance. "Ablation Study on physically-grounded reasoning and implicit visual thinking effectiveness."

- Boundary conditions: Known initial/final states used to constrain a system without specifying the full dynamics. "which provides only boundary conditions and leaves transition dynamics underspecified."

- Buoyancy: An upward force exerted by a fluid that opposes the weight of an immersed object. "Transitions: Translation, Rotation, Oscillation, Gravity, Buoyancy."

- Causality: The relationship between causes and effects governing how changes propagate in a system. "their reasoning modules operate primarily at the semantic level rather than physical causality."

- Coarse-to-fine generative trajectory: The process by which diffusion models form global structure before refining local details. "Since diffusion models exhibit coarse-to-fine generative trajectory"

- Constraint-aware annotation: An annotation process that incorporates explicit constraints (including negatives) to guide correctness. "constructed via a two-stage filtering and constraint-aware annotation pipeline."

- DINOv2: A self-supervised vision transformer used to extract robust structural features from images. "DINOv2 (Oquab et al., 2023) for structural semantics"

- Diffusion backbone: The core diffusion-based generative network that produces edited images. "timestep-adaptive visual guidance to a diffusion backbone."

- Dual projection heads: Two separate mapping modules aligning embeddings to distinct target feature spaces (e.g., structure and texture). "via dual projection heads."

- Flow-matching loss: A training objective that regresses the model's predicted velocity onto the target velocity along a path between data and noise; used to train rectified-flow diffusion models. "$\mathcal{L}_{\text{diff}}$ is the standard flow-matching loss applied to the diffusion backbone."

- Frozen (model): A model whose parameters are kept fixed and are not updated during training. "the frozen Qwen2.5-VL generates a structured physics reasoning trace"

- Geometric stability filter: A tool that filters out samples with undesired camera/viewpoint changes by analyzing geometry. "We therefore apply ViPE (Huang et al., 2025) as a geometric stability filter."

- Hierarchical physics categories: A taxonomy organizing phenomena into domains, sub-domains, and transition types. "We design hierarchical physics categories covering five major physical domains"

- Implicit Visual Thinking: A learned latent mechanism that encodes transition dynamics without explicitly generating full intermediate frames. "we introduce Implicit Visual Thinking, as illustrated in Figure 1."

- Instruction-based image editing: Editing images according to natural-language instructions while preserving relevant content. "Instruction-based image editing, which aims to generate a new image following the given instruction"

- Keyframes: Selected frames from a video that represent important intermediate states for supervision or analysis. "intermediate keyframes provide supervision through two complementary encoders"

- Latent space: A compact feature space where high-level attributes are represented and manipulated. "to implicitly reconstruct transition priors in the latent space."

- Law of reflection: An optical principle stating that the angle of incidence equals the angle of reflection. "Principle1: Law of reflection, Angle of incidence equals angle of reflection."

- LoRA: Low-Rank Adaptation; a parameter-efficient fine-tuning method that injects low-rank updates into large models. "using LoRA (Hu et al., 2022)."

- Maillard reaction: A heat-driven chemical reaction between amino acids and sugars that causes browning in foods. "... Maillard reaction ... browning ..."

- MMDiT: A rectified-flow diffusion transformer architecture used for image synthesis and editing. "which conditions an MMDiT (Esser et al., 2024) on frozen Qwen2.5-VL"

- Multimodal LLM (MLLM): An LLM that processes and reasons over multiple modalities like text and images. "Multimodal LLM (MLLMs)"

- Optical refraction: The bending of light as it passes between media of different refractive indices. "render the optical refraction phenomenon"

- Physical realism: The degree to which generated content adheres to physical laws and appears physically plausible. "improves over Qwen-Image-Edit by 5.9% in physical realism"

- Physical State Transition: Reformulation of editing as the time-evolution of a scene’s state under physical laws. "Our approach reformulates editing as a Physical State Transition"

- Principle-driven verification: A validation process that checks generated sequences against explicit physical principles. "Candidate videos conduct principle-driven verification by GPT-5-mini"

- Pseudo ground-truth embeddings: Target feature representations treated as ground truth for supervision, derived from teacher encoders. "where $F_{\text{DINO}}$ and $F_{\text{VAE}}$ denote the pseudo ground-truth embeddings"

- Semantic alignment: The consistency between the instruction’s meaning and the generated visual content. "Instruction-based image editing has achieved remarkable success in semantic alignment"

- Specular glint: A bright highlight caused by mirror-like reflection on a glossy surface. "the specular glint shifts across the face"

- State transition dynamics: The rules or operator describing how a system’s state changes over time. "where + denotes the state transition dynamics"

- Structured Generation: A controlled synthesis process guided by templated prompts delineating start, trigger, transition, and final states. "Structured Generation."

- Teacher branch: A supervisory pathway providing target features or guidance signals for student components to learn from. "we supervise these embeddings with a teacher branch derived from PhysicTran38K."

- Timestep-Aware Dynamic Modulation: A strategy that adapts guidance signals according to diffusion timesteps, emphasizing structure early and texture later. "Timestep-Aware Dynamic Modulation."

- Transition priors: Learned knowledge about typical intermediate evolutions between states, used to guide edits. "learn transition priors from video"

- Transition queries: Learnable tokens that encode and inject latent transition information into the generation process. "learnable transition queries"

- Unified multi-modal models (UMMs): Architectures that integrate reasoning and generation across text and vision within a single framework. "unified multi-modal models (UMMs)"

- Variational Autoencoder (VAE): A probabilistic generative model that encodes images into a latent distribution and decodes them back. "a VAE (Wu et al., 2025a) for fine-grained appearance"

- Verification score: A numeric measure summarizing how well a video adheres to proposed physical principles. "We then calculate a verification score $S_{\text{verify}}$"

- ViPE: Video Pose Engine; a system for 3D geometric perception used here to assess camera stability. "We therefore apply ViPE (Huang et al., 2025) as a geometric stability filter."

- Wan Prompt: A fixed prompt template specifying start, trigger, transition, and final states for video generation. "a fixed Wan Prompt template"

- Wan2.2-T2V-A14B: A text-to-video generative model used to synthesize candidate transition clips. "Using Wan2.2-T2V-A14B (Wan et al., 2025)"
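The flow-matching entry above can be made concrete with a generic rectified-flow loss. The sketch assumes the convention x_t = (1 − t)·x₀ + t·ε, so t = 1 is pure noise and the target velocity is ε − x₀; `velocity_fn` is a hypothetical stand-in for the diffusion backbone. It illustrates the standard objective only, not PhysicEdit's training code.

```python
import numpy as np


def flow_matching_loss(velocity_fn, x0: np.ndarray, rng: np.random.Generator) -> float:
    """Monte Carlo estimate of a rectified-flow (flow-matching) loss.

    Assumed convention: x_t = (1 - t) * x0 + t * noise, so t = 1 is pure noise and the
    target velocity dx_t/dt is (noise - x0).
    """
    noise = rng.standard_normal(x0.shape)
    # One t per sample, broadcast over the remaining axes.
    t = rng.uniform(0.0, 1.0, size=(x0.shape[0],) + (1,) * (x0.ndim - 1))
    x_t = (1.0 - t) * x0 + t * noise
    target = noise - x0
    pred = velocity_fn(x_t, t)
    return float(np.mean((pred - target) ** 2))


def zero_model(x_t, t):
    # A model that always predicts zero velocity, used as a trivial baseline.
    return np.zeros_like(x_t)


rng = np.random.default_rng(0)
x0 = np.zeros((2000, 4))  # toy "clean data" fixed at the origin
print(flow_matching_loss(zero_model, x0, rng))  # close to 1.0, the noise variance
```

With the clean data fixed at zero, x_t is exactly t·ε, so a model that returns x_t / t recovers the target and drives the loss to zero, which is a handy sanity check for an implementation like this.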

Practical Applications

Overview

The paper introduces PhysicEdit, an image editing framework that targets physical plausibility by reformulating edits as predictive physical state transitions, and PhysicTran38K, a 38K-video dataset covering five physical domains (Mechanical, Thermal, Material, Optical, Biological). The framework pairs a frozen multimodal LLM (Qwen2.5-VL) for physics reasoning with learnable latent “transition queries” distilled from videos, and uses a timestep-aware modulation that guides a diffusion backbone from structure to texture. Relative to its Qwen-Image-Edit base, it improves physical realism by 5.9% and knowledge-grounded editing by 10.1%.

Below are practical applications derived from the paper’s methods and findings, organized by deployment horizon and linked to sectors. Each item notes potential tools/workflows and assumptions/dependencies that affect feasibility.

Immediate Applications

These applications can be deployed now using the released code, checkpoints, and dataset, with standard engineering effort.

- Creative production and advertising (software, media/entertainment)
  - Use case: Physics-consistent still-image compositing and retouching (e.g., correcting refractions in glass, realistic shadows when turning off/on lights, plausible deformations of materials).
  - Tools/workflows: Plugin or API integration into Photoshop, GIMP, Procreate, or web editors; “Physics-aware fill” and “Lighting & Optics Assistant” modes powered by PhysicEdit and its textual reasoning.
  - Assumptions/dependencies: Access to GPU inference; licensing compatibility with Qwen2.5-VL/Qwen-Image-Edit; dataset’s synthetic-to-real generalization holds for typical studio imagery.

- E-commerce product imagery and catalogs (retail, marketing tech)
  - Use case: Batch edits that preserve optical and mechanical plausibility (e.g., removing a display stand without breaking shadows; simulating condensation on cans; realistic deformation of fabrics).
  - Tools/workflows: Image processing pipelines with a “physical plausibility pass” using PhysicEdit; quality gates using the reasoning trace to flag edits that violate constraints.
  - Assumptions/dependencies: Throughput constraints and latency (A100-class GPUs or optimized inference); domain gap for niche materials or rare optical effects.

- Visual design QA and compliance (media integrity, policy-adjacent)
  - Use case: “Physics plausibility checker” to audit edits for optical/mechanical inconsistencies before publication.
  - Tools/workflows: Use the physically grounded reasoning output to generate constraint reports; add a plausibility score to CI pipelines; human-in-the-loop review.
  - Assumptions/dependencies: Heuristics for pass/fail thresholds; coverage of physical categories relevant to brand guidelines; does not replace forensic techniques.

- STEM education and outreach (education)
  - Use case: Classroom demonstrations of optics/mechanics via image edits that must obey laws (e.g., refraction/bending in water, shadow propagation when a lamp turns off).
  - Tools/workflows: Interactive teaching apps where students propose a trigger and observe the physically grounded edited outcome; auto-generated textual explanations from the reasoning branch.
  - Assumptions/dependencies: Educator-friendly UI; careful curation to avoid reinforcing incorrect intuitions in edge cases; age-appropriate scaffolding.

- Scientific visualization and lab documentation (academia, R&D)
  - Use case: Annotative edits to still images that preserve physical constraints (e.g., illustrating likely deformation under load; adjusting lighting to highlight structures without breaking realism).
  - Tools/workflows: PhysicEdit-backed add-ons for lab notebooks and figure preparation; structured reasoning captured as captions or supplemental material.
  - Assumptions/dependencies: Not a substitute for quantitative simulation; synthetic dataset bias—must be used as illustrative, not evidentiary.

- Synthetic training data augmentation for computer vision (software/AI)
  - Use case: Generating physically plausible edited pairs for tasks sensitive to optics and deformation (e.g., segmentation under refraction, recognition with lighting changes).
  - Tools/workflows: Data pipelines that use PhysicTran38K categories to target hard phenomena; PhysicEdit for paired edits that resemble realistic transitions.
  - Assumptions/dependencies: Maintain distributional similarity to downstream domains; label consistency checks; careful validation to avoid amplifying biases.

- AR filters and mobile photo apps (consumer software)
  - Use case: More realistic single-image filters that respect optical laws (e.g., adding objects behind glass, turning light sources off/on with correct global illumination changes).
  - Tools/workflows: Cloud-backed inference via lightweight endpoints; presets for common physical triggers (insert/remove object, deform, change illumination).
  - Assumptions/dependencies: Latency constraints; simplified presets may be needed for on-device UX; edge-case failures should degrade gracefully.

Long-Term Applications

These require further research, scaling, optimization, or broader validation (e.g., real-world video supervision, safety certifications).

- Physics-aware video editing and animation (media/entertainment, software)
  - Use case: Extend state-transition priors from single images to short sequences for physically consistent edits across frames (e.g., gradual melting, progressive deformation).
  - Tools/workflows: Hybrid of PhysicEdit with temporal modules or video diffusion; latent transition queries that evolve over time; timeline-aware reasoning.
  - Assumptions/dependencies: Compute cost, error accumulation across frames; richer supervision from real videos with ground-truth physical measurements.

- CAD/CAM and digital twin prototyping (manufacturing, industrial design)
  - Use case: Rapid ideation by editing product renders or photos with plausible physical outcomes (e.g., material deformation under tension, lighting changes in environments).
  - Tools/workflows: Integration with CAD viewers and digital-twin platforms; coupled pipelines that hand off from PhysicEdit to physics solvers for verification.
  - Assumptions/dependencies: Alignment between appearance-based edits and engineering-grade simulations; standardized interfaces to FEA/optical simulators; domain-specific material models.

- Robotics and world-modeling data generation (robotics, autonomy)
  - Use case: Physically plausible scene edits to enrich training for perception under changing physical conditions (illumination shifts, occlusions via refractions, deformable objects).
  - Tools/workflows: Synthetic dataset programs that combine PhysicEdit edits with simulators; curriculum focusing on causal triggers and outcomes.
  - Assumptions/dependencies: Transferability to robotic perception; tight coupling with physics engines for dynamics; safety validation in downstream policies.

- Architecture, interior, and lighting design planning (AEC, energy)
  - Use case: Single-photo “what-if” edits of lighting and materials to preview plausible outcomes (e.g., lamp placement effects, daylight changes, reflective surfaces).
  - Tools/workflows: Design platforms with physics-aware image adjustments as a fast previsualization step before ray-traced rendering.
  - Assumptions/dependencies: Calibration against physically based renderers (PBR); accurate material properties; site-specific constraints.

- Content authenticity and policy tooling (platform governance, media forensics)
  - Use case: Physics-based heuristics in forensic detectors to flag manipulated images with inconsistencies (e.g., impossible refraction or shadow behavior).
  - Tools/workflows: Train detectors on counterexamples curated via PhysicTran38K; fuse physics-consistency features with other forensic signals.
  - Assumptions/dependencies: Robustness to adversarial counterforensics; coverage of diverse physical interactions; governance and standards for thresholds and appeals.

- Healthcare imaging augmentation and education (healthcare, medical education)
  - Use case: Teaching aids that illustrate plausible physical interpretations of anatomical imaging (e.g., elastic deformation) without altering clinical evidence.
  - Tools/workflows: Non-diagnostic educational overlays; strict labeling that distinguishes illustrative edits from clinical data.
  - Assumptions/dependencies: Strong guardrails to prevent misuse; domain-specific validation; likely limited to education rather than clinical workflows.

- Smartphone “physics-aware camera” modes (consumer hardware/software)
  - Use case: On-device guidance for edits that respect optics/mechanics, aiding novice users in producing realistic composites.
  - Tools/workflows: Edge-optimized variants of PhysicEdit; heuristic presets; hybrid cloud/offline processing.
  - Assumptions/dependencies: Model compression and quantization; privacy constraints; battery/latency trade-offs.

Cross-cutting Assumptions and Dependencies

- Data realism and coverage: PhysicTran38K is largely synthesized via Wan T2V and filtered/annotated by LLMs; real-world edge cases or rare phenomena may be underrepresented. For critical applications, additional real-video supervision and domain-specific validation are needed.

- Model stack and licensing: PhysicEdit depends on a frozen Qwen2.5-VL and a Qwen-Image-Edit diffusion backbone, plus DINOv2 and VAE encoders. Deployment depends on licensing, model availability, and ongoing support.

- Compute and latency: Training used 4×A100 GPUs and LoRA; production workloads will require GPU inference or robust CPU/edge optimization. Real-time or mobile scenarios need aggressive model distillation/quantization.

- Safety and misuse: Increased realism of edited images heightens deepfake risks. Any rollout should pair generation tools with detection, watermarking, or audit trails; educational contexts require careful scaffolding.

- Domain transfer: The framework excels in optics, mechanics, and state transitions common in everyday imagery. Specialized domains (e.g., medical, aerospace materials) need custom object pools, physics constraints, and validation.

- Human oversight: For compliance and forensics, physics-based checks complement—not replace—expert review. Thresholds, false positives/negatives, and appeals must be operationalized.
