MT-PingEval: Evaluating Multi-Turn Collaboration with Private Information Games

Abstract: We present a scalable methodology for evaluating LLMs in multi-turn interactions, using a suite of collaborative games that require effective communication about private information. This enables an interactive scaling analysis, in which a fixed token budget is divided over a variable number of turns. We find that in many cases, LLMs are unable to use interactive collaboration to improve over the non-interactive baseline scenario in which one agent attempts to summarize its information and the other agent immediately acts -- despite substantial headroom. This suggests that state-of-the-art models still suffer from significant weaknesses in planning and executing multi-turn collaborative conversations. We analyze the linguistic features of these dialogues, assessing the roles of sycophancy, information density, and discourse coherence. While there is no single linguistic explanation for the collaborative weaknesses of contemporary LLMs, we note that humans achieve comparable task success at superior token efficiency by producing dialogues that are more coherent than those produced by most LLMs. The proactive management of private information is a defining feature of real-world communication, and we hope that MT-PingEval will drive further work towards improving this capability.

Explain it Like I'm 14

What is this paper about?

This paper introduces a new way to test how well AI chat assistants work together over several back-and-forth messages (multi-turn conversations). The test is called MT-PingEval. It uses small cooperative games where each AI has some private (secret) information, like a picture or a table, and they must talk to combine what they know to solve a task. The big idea is to see whether extra turns of conversation actually help, especially when there’s a strict “word budget” for how much they can say.

What were the researchers trying to find out?

They focused on a few simple questions:

- Can today’s AI models use conversation to do better than a one-shot approach where one partner dumps info and the other immediately answers?

- If both partners have a fixed total “word budget,” does splitting it into more turns help or hurt?

- Which language habits help or harm teamwork (for example, being overly agreeable, being concise, staying on-topic)?

- How do AIs compare to humans when both try to solve these teamwork puzzles?

How did they test it?

They built “private information games” where two players each see something the other doesn’t, and must talk to succeed. The private info is hard to just copy into text (like images or structured tables), so the players need to choose what to say carefully.

They used five types of games:

- Chess: Each player sees a chessboard from the same game at different times. Together, they must decide which board came earlier.

- COVR (image reasoning): Each player sees one of two images and together they answer a detailed question about the pair.

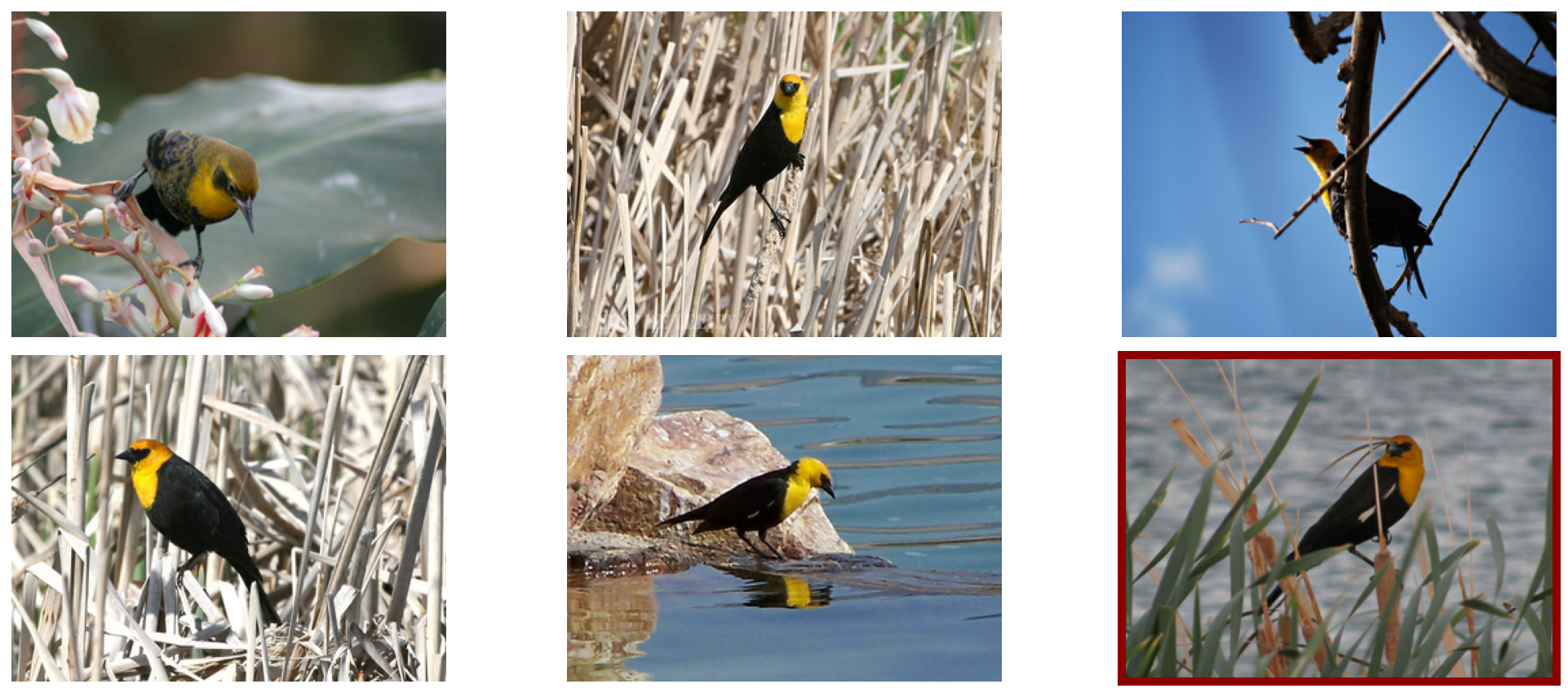

- MD3 (photo guessing): One “describer” sees one photo; the “guesser” sees six photos. The describer describes, the guesser picks the match.

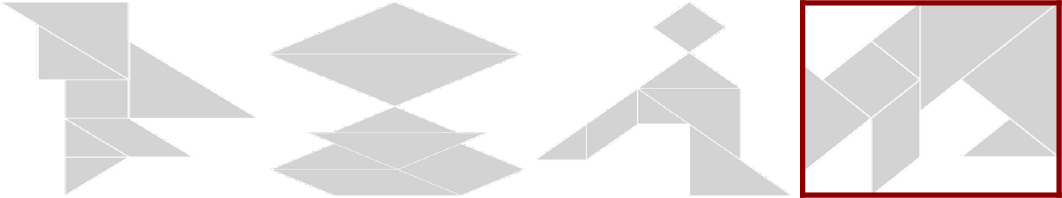

- Tangram (shape guessing): Same idea as MD3, but with abstract black-and-white shapes.

- Name-game (table matching): Each player has a list (a table) of people with features (like job, favorite musician). They must find the single person who appears in both lists.
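The chess game rewards compact messages. The findings below mention models counting pieces, and “piece count + pawn rank” is later cited as an efficient chess encoding. The sketch illustrates that idea under stated assumptions: each board is available as a FEN string (an assumption, since the paper's input format is not given here), and `encode_board` and `guess_earlier` are hypothetical names. Piece count is a sound signal because the number of pieces on the board never increases during a game. Pawn advancement is only a heuristic tiebreak, because a promotion removes an advanced pawn.

```python
def placement_pieces(fen: str) -> list[tuple[str, int]]:
    """Return (piece letter, rank) for every piece in a FEN placement field."""
    placement = fen.split()[0]
    pieces = []
    for row_index, row in enumerate(placement.split("/")):
        rank = 8 - row_index  # FEN lists rank 8 first and rank 1 last
        for ch in row:
            if ch.isalpha():  # digits encode runs of empty squares
                pieces.append((ch, rank))
    return pieces


def encode_board(fen: str) -> tuple[int, int]:
    """Compact message: (piece count, total pawn advancement for both sides)."""
    pieces = placement_pieces(fen)
    white_adv = sum(rank - 2 for ch, rank in pieces if ch == "P")
    black_adv = sum(7 - rank for ch, rank in pieces if ch == "p")
    return len(pieces), white_adv + black_adv


def guess_earlier(mine: tuple[int, int], theirs: tuple[int, int]) -> str:
    """Compare my encoding with my partner's and guess whose board came first."""
    if mine[0] != theirs[0]:
        # Piece count never increases, so more pieces means earlier.
        return "mine" if mine[0] > theirs[0] else "theirs"
    if mine[1] != theirs[1]:
        # Heuristic only: less pawn advancement usually means earlier.
        return "mine" if mine[1] < theirs[1] else "theirs"
    return "undecided"


start = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1"
after_e4 = "rnbqkbnr/pppppppp/8/8/4P3/8/PPPP1PPP/RNBQKBNR b KQkq e3 0 1"
print(encode_board(start), encode_board(after_e4))  # (32, 0) (32, 2)
print(guess_earlier(encode_board(start), encode_board(after_e4)))  # mine
```

Each player sends only two integers instead of a full board description, which is the kind of compression a fixed token budget rewards.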

They evaluated multiple modern AI models from different labs. For the Gemini 2.5 models, they also tested a “thinking mode,” in which the model writes hidden scratch notes that don’t count against its word budget.

The key testing idea was “isotoken evaluation”:

- Give each player the same total word budget (think of tokens like words or chunks of words).

- Change how many turns they have, but keep the total budget the same. Example: 128 total words could be 2 turns of ~64 words each, or 16 turns of ~8 words each.

- In principle, more turns should never make things worse, because you can always ignore extra turns if they’re not helpful. If conversation truly helps, more turns should help the team do better.
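The isotoken idea reduces to simple arithmetic: hold one player's total budget fixed and divide it evenly over the allowed turns. A minimal sketch, assuming equal per-turn caps with leftover tokens simply unused; `isotoken_schedule` is a hypothetical helper name, and the paper's actual budgets are in subword tokens rather than words.

```python
def isotoken_schedule(total_tokens: int, turn_budgets: list[int]) -> dict[int, int]:
    """Per-turn token cap for each turn budget, holding one player's total fixed.

    Uses equal per-turn caps (floor division), so any remainder tokens go unused.
    """
    schedule = {}
    for turns in turn_budgets:
        if turns < 1:
            raise ValueError("turn budget must be positive")
        schedule[turns] = total_tokens // turns
    return schedule


print(isotoken_schedule(128, [2, 4, 8, 16]))
# {2: 64, 4: 32, 8: 16, 16: 8}
```

Every row of the schedule spends the same total, so any change in accuracy across rows reflects how well the players use interaction rather than how much they are allowed to say.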

What did they find?

Overall result: Extra turns usually did not help. In many cases, performance stayed flat or even got worse with more turns, even though the total word budget was the same.

Highlights by task:

- Chess: A few stronger models did better, sometimes using smart shortcuts (like counting how many pieces are left to guess which board is earlier). But many models hovered near chance (basically guessing).

- COVR (image reasoning): Performance was mostly flat as turns increased. For one strong model, the benefits of “thinking mode” and of extra turns seemed to overlap: adding more turns didn’t improve much beyond what careful thinking already gave.

- MD3 and Tangram (image guessing): More turns often made things worse. Models tended to stop early instead of using all their turns to double-check and narrow down the answer. They also wasted words on fluff (like repeating greetings) instead of packing in useful details.

- Name-game (table matching): Scores went up with more turns—but often for the wrong reason. Many models just guessed a row each turn. With more turns, random guessing becomes more likely to hit the right answer, so accuracy rises even without smart teamwork. A simple “guess one new row each turn” strategy did similarly well.
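Why blind guessing looks like progress in the name-game can be checked with a few lines. A minimal sketch, assuming one guess per turn, no repeated guesses, no use of the partner's table, and a shared row that is equally likely to be any of the guesser's rows; the function names are hypothetical. Under these assumptions the success rate is min(turns, rows) / rows, which rises with the turn budget without any collaboration. The table sizes 9, 16, and 25 are the ones mentioned for the name-game experiments.

```python
import random


def blind_guess_accuracy(num_rows: int, num_turns: int) -> float:
    """Exact success rate of guessing one new row per turn, ignoring the partner."""
    return min(num_turns, num_rows) / num_rows


def simulate_blind_guessing(num_rows: int, num_turns: int,
                            trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo check: uniform hidden row, guesses drawn without repetition."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        target = rng.randrange(num_rows)
        guesses = rng.sample(range(num_rows), min(num_turns, num_rows))
        hits += target in guesses
    return hits / trials


for rows in (9, 16, 25):
    print(rows, [round(blind_guess_accuracy(rows, t), 2) for t in (2, 4, 8, 16)])
```

Any model that merely matches this curve is not demonstrating useful teamwork, which is why a "guess one new row each turn" baseline is a useful reference line.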

Language habits that hurt:

- Sycophancy (being overly agreeable): Models sometimes accepted the partner’s claims too easily instead of checking against their own private info.

- Low information density: They used up words without conveying enough helpful details.

- Weak discourse coherence: They didn’t keep the conversation tightly focused on the goal or the most useful next question.

- Compared to humans: Humans reached similar success with fewer words by staying focused, sharing the most relevant info, asking sharper questions, and verifying before finishing.
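Low information density can be approximated, very crudely, by how much of a message is greetings and function words. This is not the paper's measure (its exact method is not described here); it is a hypothetical proxy, and the `FILLER` word list in particular is an illustrative assumption that would need calibration for real use.

```python
import string

# Hypothetical filler/function-word list; a real meter would need calibration.
FILLER = {
    "hi", "hello", "great", "thanks", "sure", "okay", "ok", "so",
    "i", "you", "we", "my", "your", "the", "a", "an", "is", "it",
    "to", "and", "of", "that", "this", "let's",
}


def content_ratio(message: str) -> float:
    """Share of whitespace tokens that are not in the filler list (0.0 to 1.0)."""
    tokens = [t.strip(string.punctuation).lower() for t in message.split()]
    tokens = [t for t in tokens if t]
    if not tokens:
        return 0.0
    content = [t for t in tokens if t not in FILLER]
    return len(content) / len(tokens)


print(content_ratio("Hi! Great, thanks."))                    # 0.0
print(content_ratio("Hello! The barn is red."))               # 0.4
print(content_ratio("red barn, two horses, snowy field"))     # 1.0
```

A turn spent on greetings scores near zero, while a turn of discriminative details scores near one; the same budget can carry very different amounts of useful content.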

Bottom line: Today’s models often fail to plan a multi-turn strategy, ask the right clarifying questions, share the most useful bits of private info, and verify their final answer.

Why does this matter?

Real conversations—like planning a trip, fixing a bug, or making a joint decision—depend on back-and-forth teamwork, asking the right questions, and sharing just the important parts of what you know. This study shows that current AI models struggle with those skills. MT-PingEval gives researchers a clear, repeatable way to measure and compare multi-turn collaboration so they can improve it.

If future models learn to:

- manage private information carefully,

- pack more useful info into fewer words,

- ask targeted follow-up questions,

- and verify before ending,

then AI teammates will become much more reliable in real-world, multi-step tasks.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of what remains missing, uncertain, or unexplored in the paper, phrased to guide follow-up research.

- Lack of instance-level grounding of “interactivity levels”: tasks are not labeled or constructed with provable minimal turn requirements; no oracle protocols to verify that specific items are truly level‑k interactive.

- No oracle upper bounds: there is no single‑agent baseline that sees both players’ private information (and no oracle “compressed encoding” protocol) to quantify absolute headroom and the gap attributable to interaction.

- Confounded compute budgets: “thinking” does not count against the token budget for Gemini models but is unavailable or uncounted for other models, undermining fairness in isotoken comparisons.

- Tokenization mismatch: budgets are enforced in subword tokens but communicated and mentally tracked by models in whitespace tokens; chess and domain-specific tokens exacerbate this, potentially biasing outcomes.

- Rigid per‑turn token allocation: enforcing equal per‑turn caps prevents adaptive token budgeting across turns; experiments do not test a fixed total budget with flexible per‑turn usage.

- Premature termination is noted but not systematically quantified: no taxonomy of failure modes (e.g., early guessing, non‑verification, off‑task chatter) or measurement of their frequency across tasks/models/turn budgets.

- Prompt sensitivity unexamined: no ablations on system instructions, end‑of‑turn reminders, few‑shot examples, or style constraints to assess robustness of the isotoken findings to prompt design.

- Name‑game metric design permits guess‑and‑check success: accuracy inflates with more turns without penalizing wrong guesses; lacks cost‑sensitive scoring (e.g., penalties per incorrect attempt or verification requirements).

- Limited human baselines: only a narrow human comparison is mentioned (MD3); missing systematic human‑human and human‑LM baselines across all tasks with matched token/turn budgets and efficiency measures.

- Modality coverage is narrow: focuses on images and small structured tables; excludes long documents, audio, video, and dynamic or stateful environments where private information management is critical.

- Only two‑player settings are evaluated: no extension to 3+ participants, subgroup information, or more complex turn‑taking—key aspects of real collaborative dialogue.

- Questionable distribution realism: chess positions sampled via uniform legal moves may be unnatural; image distractor selection difficulty is not calibrated or stratified; implications for external validity are unclear.

- Statistical reporting details are thin: limited discussion of confidence intervals, significance tests, and effect sizes for the observed (often small) differences across turn budgets.

- No difficulty stratification: tasks are not broken down by instance difficulty (e.g., minimal turns needed, compressibility), impeding analysis of where interactivity should help most.

- Training interventions are not explored: no attempts at supervised fine‑tuning, self‑play RL, or instruction‑tuning to learn better question‑asking, verification, or compression strategies.

- Interplay between internal “thinking” and external dialogue is not disentangled: when (and how much) does chain‑of‑thought substitute for multi‑turn interaction? No controlled study with matched “thinking” budgets.

- Homogeneous self‑play only: interactions are between identical models; no cross‑model pairings (heterogeneous agents) or human‑AI interactions to assess generality and synergy/misalignment effects.

- Robustness to partner noise or deception is untested: all settings assume cooperative, truthful interlocutors; no evaluation under misunderstandings, errors, or adversarial behavior.

- Exact‑match answer evaluation may mis-score valid outputs: reliance on strict formatting (“ANSWER: …”) risks false negatives; no semantic or robust parsing evaluation variants are provided.

- Information density is discussed but not measured in bits: there is no quantitative estimate of per‑turn information transfer (e.g., mutual information, compression ratios) or its correlation with success.

- Turn cost and latency are ignored: real deployments face latency trade‑offs per turn; no experiments that constrain wall‑clock time or penalize excessive turns.

- Truncation policy may alter semantics: outputs exceeding token limits are truncated; frequency and performance impact are not analyzed or mitigated via safer stopping strategies.

- Aggregated results obscure task size effects: name‑game experiments span 9/16/25 records, but results are not broken down by table size to analyze scaling with difficulty.

- Reproducibility and release details are unclear: it is not specified whether generators, prompts, seeds, and evaluation scripts are publicly released for replication.

- Model coverage is limited: evaluation skews toward a few vendors (Gemini, GPT‑4o, Qwen‑VL8B, Gemma3‑12B); broader coverage (Llama, Claude, Mixtral, etc.) could test generality.

- Asymmetric task role analysis is missing: in describer‑guesser settings, there is no role‑specific assessment of question quality, attribute selection, redundancy, or confirmation behavior.

- No controlled “verification required” variants: tasks do not enforce explicit check‑backs before final answers, limiting diagnosis of non‑verification vs. other failure modes.

- Linguistic overhead is not systematically measured or reduced: no quantification of per‑turn preamble/filler and no experiments with overhead‑minimizing instruction‑tuning.

- Perception vs. communication not cleanly isolated: no ablations with vision or structure “oracles” to separate failures of seeing/reading from failures of dialogue planning.

- Safety and ethical implications of sycophancy are not evaluated: no experiments on how sycophancy affects human‑AI collaboration quality or mitigations that reduce harmful agreement.

- Missing tasks with known optimal policies: no benchmarks where optimal dialogue policies are analytically known, preventing regret analyses of learned policies.

- Open training question: can information‑gain–based rewards (e.g., expected entropy reduction) or curiosity‑driven RL improve question‑asking and verification in these settings?

- Multilingual generalization is unstudied: all tasks are in English; it is unknown whether interactivity weaknesses persist across languages or cross‑lingual dialogues.

- Context‑length scaling remains unexplored: results are reported at 256 tokens per player; it is unclear how performance changes with much larger budgets or memory across long conversations.
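The name-game scoring gap above suggests one concrete fix: charge for every wrong guess. A minimal sketch of such a cost-sensitive metric, assuming +1 for naming the shared row and a fixed penalty per incorrect attempt; the penalty of 0.25 and both function names are hypothetical choices, not the paper's.

```python
def cost_sensitive_score(guesses: list[str], target: str, penalty: float = 0.25) -> float:
    """+1 for naming the shared row, minus `penalty` per wrong guess made first."""
    wrong = 0
    for guess in guesses:
        if guess == target:
            return 1.0 - penalty * wrong
        wrong += 1
    return -penalty * wrong  # never found: only the penalties remain


def expected_blind_score(num_rows: int, penalty: float = 0.25) -> float:
    """Expected score when guessing every row in random order (uniform target)."""
    # The target is equally likely to be found on guess 1, 2, ..., num_rows.
    return sum(1.0 - penalty * i for i in range(num_rows)) / num_rows


print(cost_sensitive_score(["r3"], "r3"))              # 1.0
print(cost_sensitive_score(["r1", "r2", "r3"], "r3"))  # 0.5
print(expected_blind_score(16))                        # -0.875
```

With 16 rows, exhaustive guess-and-check averages 1 - 0.25 * 15 / 2 = -0.875, while a single verified guess scores 1.0, so the metric separates collaboration from brute force.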

Practical Applications

Immediate Applications

The paper introduces MT-PingEval (a suite of multi-turn “private information games”) and an isotoken scaling methodology (fixed total tokens, varying turns) to diagnose whether LLMs can use interaction to improve task performance. The following applications can be deployed now.
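An isotoken curve (accuracy at each turn budget, total tokens fixed) can be turned into a simple regression alert. A minimal sketch, assuming accuracy is summarized by a least-squares slope against log2 of the turn budget and flagged when it falls faster than a tolerance per doubling of turns; the tolerance value and function names are hypothetical, and the example numbers are illustrative only, not results from the paper.

```python
import math


def isotoken_slope(curve: dict[int, float]) -> float:
    """Least-squares slope of accuracy against log2(turn budget)."""
    turns = sorted(curve)
    if len(turns) < 2:
        raise ValueError("need at least two turn budgets")
    xs = [math.log2(t) for t in turns]
    ys = [curve[t] for t in turns]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def flags_inverse_scaling(curve: dict[int, float], tolerance: float = 0.01) -> bool:
    """True when accuracy drops by more than `tolerance` per doubling of turns."""
    return isotoken_slope(curve) < -tolerance


# Illustrative numbers only, not results from the paper.
declining = {2: 0.60, 4: 0.55, 8: 0.50, 16: 0.45}
flat = {2: 0.50, 4: 0.50, 8: 0.50, 16: 0.50}
print(flags_inverse_scaling(declining), flags_inverse_scaling(flat))  # True False
```

Using log2 of the turn budget treats each doubling of turns as one step, which matches turn budgets that grow geometrically. A production check would also need confidence intervals, since small differences across turn budgets can be noise.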

- CI/CD benchmark for multi-agent and assistant products (software, customer support, developer tools)

  - Use MT-PingEval and isotoken curves to A/B test agents, prompts, and workflows that rely on multi-turn collaboration (e.g., tool-use agents, co-pilots, customer support bots).

  - Tools/workflows: integrate an “isotoken-eval” harness into LLMOps dashboards; track accuracy vs turns; regression alerts when performance degrades with more turns.

  - Assumptions/dependencies: ability to run two-model self-play; access to model “thinking” modes (if applicable); reliable token counting; automated verification for selected tasks.

- Guardrails to prevent premature termination (healthcare, finance, safety-critical support)

  - The paper shows agents often end dialogues early; implement verification gates requiring evidence exchange before “ANSWER” is allowed.

  - Tools/workflows: “final-turn reminders” and checklists; “answer-blocker” that enforces confirm-then-commit patterns.

  - Assumptions/dependencies: control over prompts/system policies; alignment with compliance rules; minimal latency overhead.

- Model/vendor selection under fixed cost (procurement, platform teams)

  - Use isotoken tests to choose between “few long turns” vs “many short turns” and to decide whether to pay for “thinking” tokens or more interactive turns.

  - Tools/workflows: procurement scorecards including isotoken performance and inverse-scaling risk.

  - Assumptions/dependencies: access to comparable models and pricing; stable tokenization across vendors.

- Dialogue quality telemetry: information density and coherence meters (LLMOps, QA)

  - Monitor conversations for filler, topic drift, and low-density messaging; correlate with task failures.

  - Tools/workflows: lightweight “coherence score” (e.g., entity-grid signals), “information-per-token” metrics, sycophancy probes; plug-ins for observability stacks.

  - Assumptions/dependencies: availability of transcripts; calibrations for domain-specific discourse norms.

- Prompt/policy redesign for interactive planning (cross-sector)

  - Encode “efficient summaries” (e.g., minimal sufficient statistics) and targeted questioning strategies; reduce verbose introductions and enforce turn discipline.

  - Tools/workflows: prompt libraries that prioritize high-density encodings and structured questions; auto-suggest questions for disambiguation.

  - Assumptions/dependencies: maintainability of prompt assets; model adherence to system policies.

- Human training and onboarding with information-gap games (education, call centers, sales)

  - Use PING-style tasks (e.g., MD3, tangram, name-game) to teach concise descriptions, discriminative questioning, and verification.

  - Tools/workflows: micro-sims embedded in LMS tools; scoring based on token efficiency and correctness.

  - Assumptions/dependencies: adapted content for learner skill levels; simple UI for turn-taking and budgets.

- Data reconciliation drills with structured records (data engineering, CRM ops)

  - Use “name-game”-style exercises to stress-test dedup and record-linking assistants that must ask/answer to find matches across silos.

  - Tools/workflows: synthetic table generators with one shared row and distractors; auto-grading.

  - Assumptions/dependencies: domain-adapted schemas; privacy-safe synthetic data.

- Agent debugging via inverse-scaling detectors (software, multi-agent platforms)

  - Flag workflows where performance drops as turns increase; triage causes (premature stop, sycophancy, low-density turns).

  - Tools/workflows: isotoken “health checks” in pre-production; failure-mode labeling.

  - Assumptions/dependencies: reproducible seeds; sufficient task volume for stable estimates.

- User-facing guidelines for better assistant interactions (daily life, knowledge work)

  - Provide templates that state private constraints up front, ask pinpoint questions, and agree on verification before finalizing.

  - Tools/workflows: quick-reference prompt cards; IDE/office-suite extensions that insert verification steps.

  - Assumptions/dependencies: minimal user training; product UI affordances for turn markers and checklists.
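The “answer-blocker” guardrail can be expressed as a small gate in front of each turn. A minimal sketch, assuming answers are committed with an `ANSWER:` line (the format mentioned in the knowledge-gaps list) and that confirmation is signaled by a marker in the partner's latest reply; the `CONFIRMED` marker, the two-reply minimum, and the function names are hypothetical policy choices.

```python
import re
from typing import Optional

# Matches a line such as "ANSWER: 3"; the exact answer format is task-specific.
ANSWER_LINE = re.compile(r"^[ \t]*ANSWER:[ \t]*(.+?)[ \t]*$", re.MULTILINE)


def extract_answer(message: str) -> Optional[str]:
    """Return the committed answer, or None if this turn does not answer."""
    match = ANSWER_LINE.search(message)
    return match.group(1) if match else None


def allow_turn(partner_messages: list[str], message: str,
               min_partner_turns: int = 2, confirm_marker: str = "CONFIRMED") -> bool:
    """Confirm-then-commit gate: block an ANSWER until the partner has replied
    at least `min_partner_turns` times and its latest reply has the marker."""
    if extract_answer(message) is None:
        return True  # ordinary dialogue turns pass through untouched
    if len(partner_messages) < min_partner_turns:
        return False
    return confirm_marker in partner_messages[-1]


print(allow_turn([], "ANSWER: 3"))  # False: nothing has been verified yet
print(allow_turn(["It shows a red barn.", "CONFIRMED: red barn, two horses."],
                 "ANSWER: 3"))      # True
```

When the gate returns False, a harness could send the agent a reminder to verify instead of accepting the answer, which targets the premature-termination failure directly.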

Long-Term Applications

The findings (models underuse interactivity, suffer from sycophancy, and lack coherent multi-turn planning) suggest product and research directions requiring further development.

- Training curricula for multi-turn collaboration (foundation model R&D)

  - Self-play RL and supervised fine-tuning on PINGs to reward high information density, targeted questions, non-sycophantic disagreement handling, and delayed commitment until verification.

  - Tools/products: “interaction reward models,” dialogue-coherence critics, and anti-sycophancy calibrators.

  - Assumptions/dependencies: access to training loops; scalable generation of automatically verifiable multi-agent traces.

- Standardized “AI handshake” protocols for cross-silo collaboration (enterprise software in healthcare, finance, supply chain)

  - Protocols defining minimal, high-value summaries of private state (e.g., sufficient statistics) and turn-taking rules to converge efficiently.

  - Tools/products: domain schemas; “interactive codecs” for images, tables, and logs under token budgets.

  - Assumptions/dependencies: interop standards; privacy/governance frameworks; secure multi-agent messaging.

- Certification and policy standards for multi-turn capability (public sector, regulated industries)

  - Procurement and safety guidelines mandating isotoken evaluations, verification gates, and thresholds for premature termination and sycophancy.

  - Tools/products: evaluation suites for audits; third-party test labs; model cards with isotoken curves.

  - Assumptions/dependencies: regulator buy-in; harmonized metrics and pass/fail criteria.

- Multimodal collaborative agents for perception-heavy workflows (manufacturing QA, robotics, media)

  - Agents that reconcile views from different cameras/sensors or image sets via concise multi-turn exchanges before acting.

  - Tools/products: “collaborative vision” modules tuned on high-interactivity tasks (e.g., MD3, tangram variants).

  - Assumptions/dependencies: robust on-device multimodal models; latency-tolerant turn budgets; real-time verification signals.

- Conversational middleware for budget-aware interaction (agent frameworks, cloud platforms)

  - Dynamic turn-budget managers that allocate tokens over turns based on observed information gain and stop only after verification.

  - Tools/products: cost–performance controllers; APIs for adaptive token allotment and “request-more-info” escalations.

  - Assumptions/dependencies: fine-grained API controls; reliable information-gain estimates; cost governance.

- Education technology for communication skills and L2 learning (edtech)

  - Rich information-gap curricula that auto-generate tasks and assess clarity, coherence, and strategic questioning.

  - Tools/products: teacher dashboards; student feedback on token efficiency and discourse moves.

  - Assumptions/dependencies: age-appropriate content; fairness and accessibility checks.

- Research expansion: higher interactivity levels and multi-party tasks (academia, open benchmarks)

  - Extend the “levels of interactivity” framework to 3+ agents and real-world domains (e.g., EHR + imaging + lab systems).

  - Tools/products: new benchmarks with automatic verification; competitions emphasizing isotoken improvements (not just static accuracy).

  - Assumptions/dependencies: novel automatic-checking designs; community adoption.

- Safety features for conflict-sensitive domains (compliance, legal review, risk ops)

  - Disagreement detection and escalation policies to humans when agents’ private info conflicts; reduce sycophancy-driven errors.

  - Tools/products: “conflict sentinel” that tracks claims vs private evidence before finalization.

  - Assumptions/dependencies: robust contradiction detection; clear human-on-the-loop pathways.

- Consumer-grade multi-device orchestrators (productivity, personal knowledge bases)

  - Assistants that coordinate across calendar, email, photos, and documents via disciplined multi-turn exchanges to avoid misinterpretation.

  - Tools/products: on-device privacy-preserving agents with budget-aware turn planning and verification steps.

  - Assumptions/dependencies: consented data access; strong privacy guarantees; smooth UX for turn-based flows.

- Domain-specific “efficient encoding” libraries (cross-sector)

  - Catalogs of task-specific minimal summaries (akin to “piece count + pawn rank” in chess) to speed convergence in repeated workflows.

  - Tools/products: encoding recipe libraries for IT tickets, medical summaries, financial reconciliations, etc.

  - Assumptions/dependencies: expert-crafted or learned encodings; continuous evaluation against task outcomes.
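Several of these directions (information-gain rewards, budget managers driven by observed information gain) rest on choosing the most informative next question. A minimal sketch of standard expected-entropy-reduction question selection for a guesser, assuming a uniform prior over candidates, truthful noiseless yes/no answers, and candidates annotated with hypothetical attribute sets. Real MD3 or tangram guessers see images, not attribute labels, so this only illustrates the selection rule.

```python
import math


def expected_information_gain(candidates: dict[str, set[str]], attribute: str) -> float:
    """Expected entropy reduction (bits) from asking "does it have `attribute`?".

    With a uniform prior and noiseless answers, the gain equals the entropy
    of the yes/no answer.
    """
    total = len(candidates)
    yes = sum(1 for attrs in candidates.values() if attribute in attrs)
    gain = 0.0
    for count in (yes, total - yes):
        if count:
            p = count / total
            gain -= p * math.log2(p)
    return gain


def best_question(candidates: dict[str, set[str]]) -> str:
    """Pick the attribute whose yes/no question is most informative."""
    attributes = sorted({a for attrs in candidates.values() for a in attrs})
    return max(attributes, key=lambda a: expected_information_gain(candidates, a))


def filter_candidates(candidates: dict[str, set[str]], attribute: str,
                      answer_yes: bool) -> dict[str, set[str]]:
    """Keep only candidates consistent with the partner's answer."""
    return {name: attrs for name, attrs in candidates.items()
            if (attribute in attrs) == answer_yes}


# Hypothetical candidate photos described by attribute sets.
photos = {
    "photo_1": {"red", "car"},
    "photo_2": {"red", "bike"},
    "photo_3": {"blue", "car"},
    "photo_4": {"green", "car"},
}
question = best_question(photos)
print(question, expected_information_gain(photos, question))  # red 1.0
print(sorted(filter_candidates(photos, question, answer_yes=True)))  # ['photo_1', 'photo_2']
```

Asking about “red” splits the four candidates evenly (1 bit), whereas “car” splits them 3 to 1 (about 0.81 bits), so a gain-maximizing guesser narrows the set faster per turn.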

These applications leverage the paper’s core innovations—private information games, levels of interactivity, and isotoken scaling—to improve how we evaluate, design, and govern multi-turn, multi-agent AI systems in the real world.

Glossary

- Automatic verification: Automated checking that task outputs are correct without human annotation. "To enable comparative analysis at scale, we design the games to enable automatic verification and generation of new instances."

- COVR: A compositional visual reasoning benchmark involving natural language and images. "Multimodal reasoning benchmarks such as NLVR and COVR require answering natural questions or evaluating sentences about sets of images."

- Discourse coherence: The degree to which a dialogue is logically connected and goal-directed across turns. "We analyze the linguistic features of these dialogues, assessing the roles of sycophancy, information density, and discourse coherence."

- Encoding function: A function that maps a player’s private information and the conversation state to a length-constrained textual message. "Assume each player […] has an encoding function […]"

- Exact match evaluation: An evaluation criterion where the model’s answer must exactly match the required format to be counted as correct. "it was necessary to instruct the LLMs on the desired answer format to enable exact match evaluation."

- Goal-directedness: The property of a dialogue being oriented toward achieving the task objective. "goal-directedness (via discourse coherence)."

- Information density: The amount of task-relevant information conveyed per token or unit of text. "We analyze the linguistic features of these dialogues, assessing the roles of sycophancy, information density, and discourse coherence."

- Information gap: A task type where participants must share private information to jointly accomplish a goal. "This basic idea echoes much earlier work on 'information gap' tasks in second-language instruction"

- Information modalities: Distinct forms of data (e.g., images, structured knowledge) that are hard to efficiently convert into text. "we use information modalities that are not easy to convert into text (under reasonable length constraints): images and structured knowledge."

- Interactive scaling analysis: Studying performance when a fixed token budget is partitioned across varying numbers of turns. "This enables an interactive scaling analysis, in which a fixed token budget is divided over a variable number of turns."

- Isotoken evaluation: An evaluation method with a fixed token budget per player while varying the number of turns. "we propose a new form of evaluation, called isotoken evaluation"

- Level of interactivity: A conceptual categorization of how many alternating encodings/turns are needed to solve a collaborative game. "we propose a framework for quantifying the level of interactivity for a collaborative private information game."

- MD3: An asymmetric image selection dialogue task with natural photographs, where a describer and guesser collaborate. "The MD3 and tangram tasks are asymmetric image selection tasks."

- Multimodal reasoning: Reasoning that integrates multiple data types, typically text and images. "Multimodal reasoning benchmarks such as NLVR and COVR require answering natural questions or evaluating sentences about sets of images."

- NLVR: Natural Language Visual Reasoning benchmark for evaluating consistency of language with visual inputs. "Multimodal reasoning benchmarks such as NLVR and COVR require answering natural questions or evaluating sentences about sets of images."

- Pragmatics: The study of language use in context, including implied meanings and conversational norms. "The example also highlights the questionable pragmatics of even relatively strong models"

- Private information: Information accessible only to one participant that must be communicated to solve the task. "The key feature is that each participant has access to private information, some of which is essential to the successful completion of the task."

- Private information games (PINGs): Collaborative tasks where each player holds private, hard-to-transmit information and must communicate to solve the task. "we present a new form of multi-turn evaluation through collaborative private information games (PINGs)."

- Sharding: Partitioning a message across multiple turns, here used to distribute a two-turn message over more turns. "the players can achieve the same communication by ignoring each other and sharding their message from the two-turn variant into however many turns are permitted."

- Subword tokens: Tokenization units smaller than word-level tokens, typically learned byte-pair or sentencepiece units. "The token budget is implemented in subword tokens, but the LLMs appear to be capable of reasoning only about whitespace-delimited tokens"

- Sycophancy: A tendency of models to agree or defer unduly to an interlocutor, potentially harming accuracy. "We analyze the linguistic features of these dialogues, assessing the roles of sycophancy, information density, and discourse coherence."

- Thinking: A model mode that performs internal reasoning steps that do not count against the external token budget. "The Gemini 2.5 models are evaluated with and without thinking, which does not count against the token budget."

- Thinking budgets: Limits or settings governing how much internal “thinking” a model can perform. "These negative results hold broadly across model scales and thinking budgets"

- Token budget: The maximum number of tokens allowed per player or per dialogue interaction. "both players are given a fixed total token budget"

- Token efficiency: Achieving comparable or better task performance with fewer tokens. "humans achieve comparable task success at superior token efficiency"

- Turn budget: The maximum allowed number of conversational turns in an evaluation. "performance significantly decreases with higher turn budgets"

- User simulator: A model-driven agent that simulates a human user for controlled evaluation. "a user simulator that is backed by another LLM"

- Whitespace-delimited tokens: Token units defined by splitting text on whitespace, used by models for counting. "the LLMs appear to be capable of reasoning only about whitespace-delimited tokens"
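The sharding argument behind isotoken evaluation (more turns can always replicate the two-turn exchange) can be checked mechanically. A minimal sketch, assuming whitespace-delimited words stand in for the subword tokens that the real budget uses, and that the player simply ignores its partner's intermediate replies; `shard` is a hypothetical helper name.

```python
def shard(message: str, per_turn_cap: int) -> list[str]:
    """Split a message into consecutive chunks of at most `per_turn_cap` words."""
    if per_turn_cap < 1:
        raise ValueError("per-turn cap must be positive")
    words = message.split()  # whitespace tokens, not the subword tokens of a real budget
    return [" ".join(words[i:i + per_turn_cap]) for i in range(0, len(words), per_turn_cap)]


# A 64-word first-turn message, re-sent as eight 8-word turns.
long_turn = " ".join(f"w{i}" for i in range(64))
shards = shard(long_turn, 8)
print(len(shards))  # 8
```

Because joining the shards recovers the original message, a higher turn budget can always match the two-turn variant; any drop in accuracy with more turns therefore reflects how the models use those turns, not a loss of capacity.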
