Do LLMs Benefit From Their Own Words?

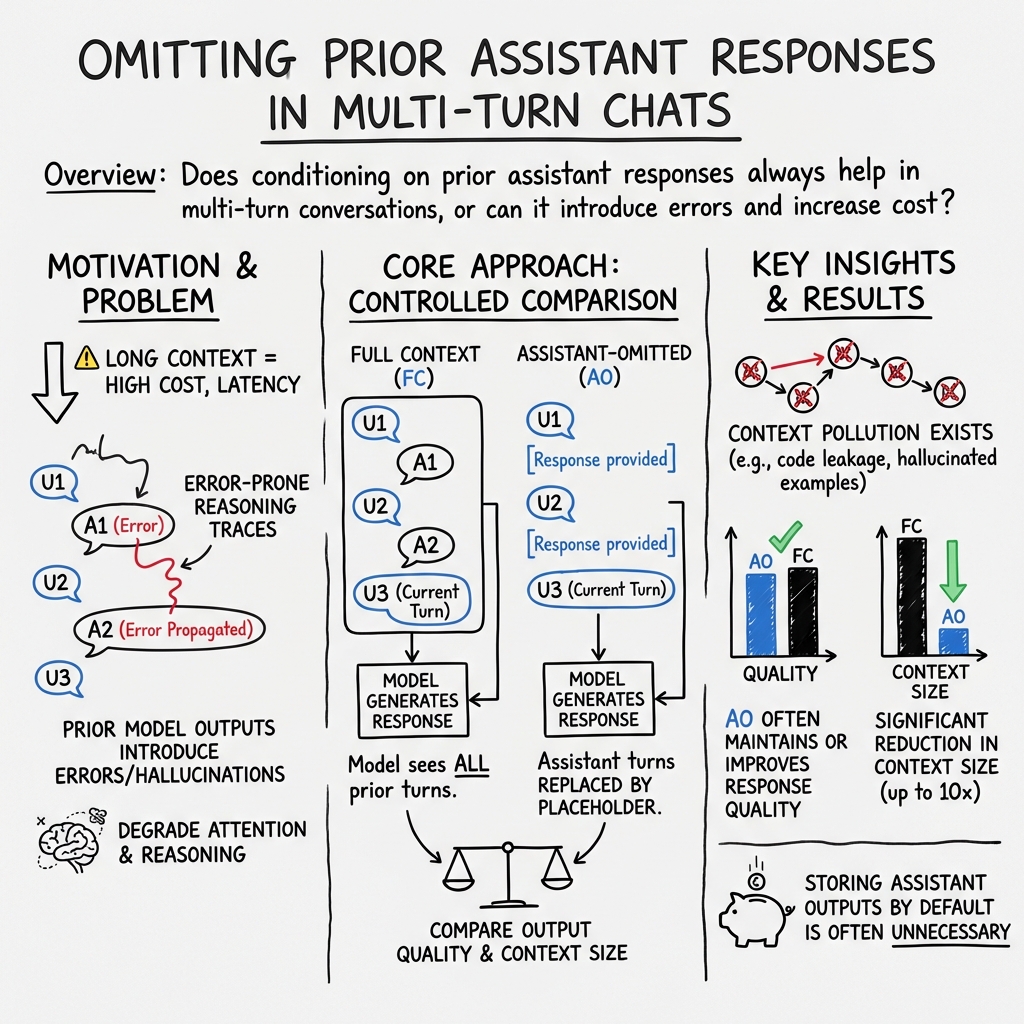

Abstract: Multi-turn interactions with LLMs typically retain the assistant's own past responses in the conversation history. In this work, we revisit this design choice by asking whether LLMs benefit from conditioning on their own prior responses. Using in-the-wild, multi-turn conversations, we compare standard (full-context) prompting with a user-turn-only prompting approach that omits all previous assistant responses, across three open reasoning models and one state-of-the-art model. To our surprise, we find that removing prior assistant responses does not affect response quality on a large fraction of turns. Omitting assistant-side history can reduce cumulative context lengths by up to 10x. To explain this result, we find that multi-turn conversations consist of a substantial proportion (36.4%) of self-contained prompts, and that many follow-up prompts provide sufficient instruction to be answered using only the current user turn and prior user turns. When analyzing cases where user-turn-only prompting substantially outperforms full context, we identify instances of context pollution, in which models over-condition on their previous responses, introducing errors, hallucinations, or stylistic artifacts that propagate across turns. Motivated by these findings, we design a context-filtering approach that selectively omits assistant-side context. Our findings suggest that selectively omitting assistant history can improve response quality while reducing memory consumption.

Explain it Like I'm 14

What is this paper about?

This paper asks a simple but important question about AI chatbots: When an AI is chatting with you over multiple messages, does it really need to see its own earlier replies to do well on the next message? The authors test what happens if you hide the AI’s previous answers and only show it what the user said. They find that, surprisingly often, the AI still does fine—and sometimes even does better—while using much less memory.

What questions did the researchers ask?

They focused on three easy-to-understand questions:

- Do AI models give better answers when they can read their own past replies, or can they do just as well with only the user’s past messages?

- In what kinds of chat turns is the AI’s past writing helpful, and when is it unnecessary or harmful?

- Can we build a simple system that decides, turn by turn, whether to include the AI’s past replies or leave them out?

How did they study it?

They compared two ways of feeding conversation history to AI models:

- Full Context (FC): The model sees everything—both the user’s past messages and the AI’s past replies.

- Assistant-Omitted (AO): The model sees only the user’s past messages. The AI’s past replies are replaced with a simple placeholder like “[Response provided]” so the model knows a response happened, but can’t read it.
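The two conditions above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the message format (a list of role/content dictionaries) and the function name `build_context` are assumptions; only the placeholder wording comes from the description above.

```python
from typing import Dict, List

# Placeholder wording quoted in the description above.
PLACEHOLDER = "[Response provided]"

# Assumed message format: {"role": "user" | "assistant", "content": "..."}
Message = Dict[str, str]


def build_context(history: List[Message], mode: str = "FC") -> List[Message]:
    """Return the history to send to the model under FC or AO."""
    if mode == "FC":
        # Full Context: keep every user and assistant turn unchanged.
        return [dict(m) for m in history]
    if mode == "AO":
        # Assistant-Omitted: keep user turns, blank out assistant turns.
        return [
            {"role": m["role"], "content": PLACEHOLDER}
            if m["role"] == "assistant"
            else dict(m)
            for m in history
        ]
    raise ValueError(f"unknown mode: {mode!r}")


# Hypothetical three-message history for illustration.
history = [
    {"role": "user", "content": "Write UMAP clustering code."},
    {"role": "assistant", "content": "import umap  # ...long reply..."},
    {"role": "user", "content": "Use t-SNE instead."},
]
ao = build_context(history, "AO")
fc = build_context(history, "FC")
```

Keeping a placeholder rather than deleting the assistant turn preserves the alternating user/assistant structure, so the model can still tell that a reply was given.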

They used real multi-turn chats from public datasets (like WildChat and ShareLM), focusing on technical topics such as coding and math. They tested several AI models (three open models and one state-of-the-art model) and generated answers for each chat turn using both FC and AO.

To judge which answer was better, they used another strong AI as a “referee” (an AI judge). Think of it like having a neutral teacher grade both versions of the answer and choose which one is clearer, more accurate, and more on-topic.
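A pairwise comparison like this is usually run with the two answers shown in random order, so the referee cannot favor a position (the paper's glossary entry on position bias mentions this randomization). The sketch below assumes a hypothetical `judge` callable that returns `"1"`, `"2"`, or `"tie"`; the toy `longer_wins` judge is only a stand-in for an LLM call.

```python
import random
from typing import Callable

# Assumed interface: judge(prompt, response_1, response_2) -> "1", "2", or "tie".
Judge = Callable[[str, str, str], str]


def compare(prompt: str, fc_answer: str, ao_answer: str,
            judge: Judge, rng: random.Random) -> str:
    """Return "FC", "AO", or "tie" after showing the answers in random order."""
    fc_first = rng.random() < 0.5
    first, second = (fc_answer, ao_answer) if fc_first else (ao_answer, fc_answer)
    verdict = judge(prompt, first, second)
    if verdict == "tie":
        return "tie"
    picked_first = verdict == "1"
    # Map the positional verdict back to the condition that produced it.
    return "FC" if picked_first == fc_first else "AO"


def longer_wins(prompt: str, r1: str, r2: str) -> str:
    """Toy stand-in judge (hypothetical): prefers the longer response."""
    if len(r1) == len(r2):
        return "tie"
    return "1" if len(r1) > len(r2) else "2"


result = compare("q", "long answer", "short", longer_wins, random.Random(0))
```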

Key terms in plain language:

- Context: The chat’s memory—the messages the AI can read before answering.

- Conditioning on context: The AI “studies” that memory before writing its reply.

- Context window: A limit on how much text the AI can read at once (longer context costs more time and memory).

- Hallucinations: When the AI confidently makes up facts or details that aren’t true.

What did they find, and why does it matter?

Here are the main takeaways:

- Many chat turns are self-contained. About 36% of messages in real conversations are “new asks”—fresh questions that don’t depend on older AI replies. For these, hiding the AI’s past answers usually doesn’t hurt.

- Even some follow-ups can be answered without the AI’s old replies. If the user gives clear instructions (“Use Python instead of Java,” “Switch from UMAP to t-SNE”), the model can often start from scratch using only the user’s messages.

- Less context means big savings. Hiding AI replies shrank the average context size by about 5–10x by turn 8. That means faster, cheaper, and more efficient chats.

- Past AI replies can sometimes hurt—this is “context pollution.” The AI may copy its old mistakes, carry over wrong assumptions, or keep a style that no longer fits. Example: The user first asked for UMAP (a data plotting method) with a special “Jaccard” setting, then later said “switch to t-SNE.” When the AI could see its old reply, it accidentally reused the UMAP-only setting and produced broken t-SNE code. When its old reply was hidden, it generated correct t-SNE code.

- Results vary by model and by judging criterion (overall quality versus staying on-topic). Across several models, removing the AI’s past replies often kept quality the same, and sometimes even improved it. In general, answers stayed on-topic even without the AI’s past messages.

- A smart filter works best. The authors trained a small classifier (a simple predictive tool) that looks at the current turn and decides whether to keep or skip the AI’s past replies. This adaptive strategy kept over 95% of the full-context performance while using only about 70% of the context tokens—beating a simple rule like “omit on new asks only.”
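To see where the context savings come from, it helps to count what the model has to read before each turn. The sketch below uses made-up message lengths (300-character prompts, 2,000-character replies), not figures from the paper, and measures size in characters; the function name is an assumption.

```python
from typing import List, Tuple

PLACEHOLDER_LEN = len("[Response provided]")


def context_lengths(user_chars: List[int],
                    assistant_chars: List[int]) -> List[Tuple[int, int]]:
    """Per-turn (FC, AO) context size in characters, measured before the reply."""
    sizes = []
    user_total = 0
    assistant_total = 0
    for t, u in enumerate(user_chars):
        user_total += u
        # FC: all user turns so far plus every earlier assistant reply.
        fc = user_total + assistant_total
        # AO: all user turns so far plus one placeholder per earlier reply.
        ao = user_total + t * PLACEHOLDER_LEN
        sizes.append((fc, ao))
        assistant_total += assistant_chars[t]
    return sizes


# Hypothetical 8-turn conversation (illustrative numbers only).
sizes = context_lengths([300] * 8, [2000] * 8)
fc8, ao8 = sizes[-1]
print(f"turn 8: FC={fc8} chars, AO={ao8} chars, ratio={fc8 / ao8:.1f}x")
```

Because assistant replies are typically much longer than user prompts, the gap between the two conditions widens with every turn.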

Why this matters:

- It challenges a common default: always keeping every past AI reply. The study shows you can often skip them and still get high-quality answers.

- It shows a path to cleaner, less error-prone conversations by avoiding “context pollution.”

- It saves memory and cost, which is important for longer chats and real apps.

What methods did they use, in everyday terms?

- Head-to-head testing: For each turn in a conversation, they generated two answers: one with full memory (FC) and one with only user-side memory (AO). Then an “AI referee” compared the two and picked a winner for quality and for staying on-topic.

- Turn types: They grouped user messages into three kinds:

  - New Ask: a fresh, self-contained request mid-conversation.

  - Follow-up with Feedback: a clear change like “use Python” or “make the intro friendlier.”

  - Follow-up without Feedback: vague references like “the second one is not working” without details.

- Smart chooser (adaptive filter): They trained a lightweight model that looks at the current situation and predicts whether full memory will help. If it likely helps, keep it; if not, skip it.
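The "smart chooser" step can be sketched as a logistic model followed by a threshold. This is only the decision step under stated assumptions: the weights, bias, and two features below are hypothetical, whereas the paper's classifier is described as an L1-regularized logistic regression over PCA-reduced embeddings plus metadata.

```python
import math
from typing import Sequence


def p_fc_better(features: Sequence[float],
                weights: Sequence[float],
                bias: float) -> float:
    """Logistic model: estimated probability that full context beats AO."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def route(features: Sequence[float], weights: Sequence[float],
          bias: float, threshold: float = 0.5) -> str:
    """Keep assistant history ("FC") only when the model expects it to help."""
    return "FC" if p_fc_better(features, weights, bias) >= threshold else "AO"


# Hypothetical features: [round_number, user_refers_to_earlier_reply].
# Hypothetical sparse weights: L1 regularization tends to zero out unhelpful features.
WEIGHTS = [0.0, 2.0]
BIAS = -1.0

new_ask = route([4.0, 0.0], WEIGHTS, BIAS)      # no reference to earlier replies
vague_follow_up = route([4.0, 1.0], WEIGHTS, BIAS)  # refers back to a reply
```

Raising `threshold` sends more turns to AO (cheaper, riskier); lowering it keeps full context more often.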

Think of it like an editor who decides what parts of your old notes to bring to the next assignment. Sometimes your old notes help; other times they just clutter your mind and cause mistakes.

What does this mean going forward?

- Better design for chat systems: Don’t automatically keep every past AI reply. Instead, selectively include only what’s likely to help. This can:

  - Reduce costs and speed up answers.

  - Avoid copying past mistakes.

  - Keep the model focused on the user’s current request.

- Smarter tools for developers: Use simple classifiers to decide when to include AI history. Over time, build finer-grained filters that keep only the specific past reply that’s relevant, not the whole history.

- Improved benchmarks and research: Since many real chats don’t truly need long memory, future tests should carefully measure when long context is actually necessary.

- A note of caution: The study relied on an AI referee for grading. The authors checked the referee's verdicts against human judgments and report high agreement (at least 90%), but larger human studies would make the results even stronger.

In short: AI chatbots don’t always need to read their own previous messages. Skipping them can often keep answers just as good, make them cleaner, and save a lot of memory and time. A simple “when-to-keep” filter can get you most of the benefits of full memory with much lower cost.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of what remains missing, uncertain, or unexplored in the paper, framed as concrete, actionable gaps for future work.

- External validity: Only 300 real-world conversations (technical, English, 5–10 rounds) were analyzed; generalization to other domains (e.g., creative writing, open-ended planning, education, customer support) and to longer dialogues (>30 turns) is untested.

- Multilingual coverage: Findings were not evaluated beyond primarily English data; robustness across languages, scripts, and code-switching remains unknown.

- Dataset biases: WildChat/ShareLM logs are ChatGPT-era, platform-specific, and keyword-filtered; distributional shift to current usage patterns or enterprise settings is unexamined.

- Evaluation dependency on LLM-as-judge: GPT-5 served as the primary judge; potential family/style bias (especially when GPT-5.2 is also a generator) and cross-judge robustness (vs. Claude, Gemini, open judges) were not systematically quantified.

- Human evaluation scale: Human–LLM alignment was only briefly referenced; large-scale, blinded human assessment across tasks and domains is needed to validate LLM-judge conclusions.

- Judge susceptibility to context: The judge sometimes sees full histories; whether judges themselves suffer from the same “context pollution” and how this affects verdicts is not rigorously controlled.

- Subjective metrics: Quality/on-topic judgments were used; task-grounded metrics (e.g., unit-test pass rates for code, numerical correctness in math, factual accuracy with citations) were not systematically measured.

- Safety and compliance: Effects of omitting assistant history on safety guardrails, consistency of disclaimers, and policy adherence were not evaluated.

- User experience: Impacts on perceived coherence, persona continuity, memory of user preferences, and satisfaction are unmeasured.

- Long-horizon effects: How AO vs. FC choices early in a conversation affect success or failure many turns later remains unexplored.

- Token/cost savings: Context savings were reported in characters; end-to-end token counts, latency, GPU memory, throughput, and dollar costs on real systems were not quantified.

- Sensitivity to decoding/prompting: Effects of temperature, top-p, prompt templates, and system prompts (including the AO placeholder) on outcomes were not ablated.

- AO design choice: The AO condition inserts “[Response provided]”; ablations removing assistant turns entirely or replacing them with structured summaries were not tested.

- Granularity of filtering: The study contrasts only binary FC vs. full AO; selective retention of specific assistant turns/sentences/tokens (or retrieval-based assistant-turn selection) is left for future work.

- Comparative baselines: No head-to-head comparison against strong, practical baselines (e.g., summarization, top-k turn selection, recency windows, entropy/attention-based filtering, ERGO on real data) is provided.

- Tool-augmented agents: The impact of omitting assistant history when additional artifacts (tool outputs, code execution logs, retrieved docs, images) are present was not evaluated.

- “Context pollution” quantification: Identification relied on LLM scoring and manual inspection; a reproducible, quantitative metric for prevalence, severity, and categories of pollution is missing.

- Causal analysis of over-conditioning: Architectural or training-time factors that cause over-conditioning (e.g., attention patterns, RLHF incentives) were not investigated; no interventions were tested.

- Handling references to prior assistant content: Strategies for resolving user pointers to specific assistant outputs (e.g., “line 12 of your code”, “the second example”) without full FC were not developed.

- Detection from user-side signals: While proposed, there is no validated method to predict from user-side behavior alone when assistant history will be needed.

- Adaptive policy limitations: The classifier uses PCA-reduced proprietary embeddings and L1 logistic regression; stronger models (e.g., transformers) and open embeddings were not compared.

- Offline vs. online optimization: The adaptive policy was evaluated offline; online learning, contextual bandits, or A/B tests with real users and latency/cost constraints remain to be done.

- Threshold selection: There is no principled method to set thresholds that jointly optimize utility and cost under different application constraints.

- Cross-model generality: Only four models were tested; replication across more families, sizes, and training paradigms (e.g., no-CoT vs. CoT, long-context-tuned models) is needed.

- Chain-of-thought exposure: Some models expose thinking traces and others do not; the effect of visible/hidden CoT on FC vs. AO performance was not isolated.

- Domain/task diversity: Beyond coding/math, tasks like retrieval QA with citations, legal/medical guidance, dialogue-based tutoring, and multi-document synthesis were not assessed.

- Robustness to adversarial inputs: Whether AO reduces or increases susceptibility to prompt injection, jailbreaks, or persistence of malicious content is unknown.

- Memory consistency trade-offs: AO may degrade stylistic consistency or role continuity; explicit measurement and mitigation (e.g., lightweight persona summaries) were not explored.

- Interaction with long-context mechanisms: Effects of varying context window sizes, KV cache strategies, and efficient attention implementations on FC vs. AO trade-offs were not measured.

- Benchmarking gap: The paper calls for benchmarks with true multi-turn dependence but does not provide construction criteria, datasets, or validation procedures.

- Reproducibility details: Hyperparameters, random seeds, and evaluation harness artifacts sufficient for exact reproducibility are not fully specified in the main text.

- Ethical and privacy considerations: Policies for storing vs. discarding assistant outputs under privacy regulations and audit requirements are not discussed.

- Generalization to multi-party or multimodal chats: Scenarios involving multiple users, images, tables, or code files were not examined.

- Lifecycle impact: How AO/FC choices interact with conversation export, search, and later resumption (e.g., “continue this chat next week”) is unaddressed.

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage the paper’s findings on omitting past assistant responses (AO), detecting/mitigating context pollution, and adaptively routing turns between full context (FC) and AO to cut costs, improve latency, and sometimes improve quality.

- Industry – Software/Developer Tools (IDE copilots, coding chat)

  - Use case: Context-light coding assistance in IDEs (e.g., Cursor-/Claude Code–style agents) that omit prior assistant messages unless necessary to avoid “carrying over” wrong APIs, parameters, or styles.

  - Tools/workflows:

    - Add an “assistant-output garbage collection” step that replaces past assistant replies with a placeholder (e.g., “[Response provided]”) and a system instruction that clarifies omissions.

    - Heuristic routing: omit assistant turns by default on “New Ask” detections, keep for follow-ups referencing assistant output (“the second one is broken”).

  - Assumptions/dependencies:

    - Models can answer many turns from user prompts alone; performance varies by model (e.g., GPT‑5.2 benefited from adaptive use rather than AO-only).

    - Technical chats were the primary evaluation domain; validate on your codebase/dev workflows.

- Industry – Customer Support/CRM

  - Use case: Token- and cost-efficient live support chat where many mid-conversation queries are standalone (36.4% “New Ask”), enabling 5–10x smaller contexts by round 8 without hurting quality for many turns.

  - Tools/workflows:

    - AO prompting by default with a keyword/regex filter for dependency cues (“that one”, “earlier answer”, “your previous step”) to fall back to FC.

    - Topic-shift detector that resets assistant memory automatically to reduce style drift and hallucination carry-over.

  - Assumptions/dependencies:

    - Some follow-ups need specific assistant turns; include a “retrieve last referenced assistant turn” option or quick FC fallback.

    - LLM-as-judge in the paper; confirm with task-specific human QA.

- Industry – Agentic Systems (software automation, test-time scaling)

  - Use case: Slimmer trajectories by pruning assistant reasoning traces and tool outputs that cause context pollution and distract future steps.

  - Tools/workflows:

    - Insert a context manager that keeps all user prompts, prunes or summarizes tool logs, and omits assistant traces unless explicitly referenced by the user/tool.

    - Build on the paper’s adaptive router that predicts P(FC > AO) per turn and chooses AO or FC.

  - Assumptions/dependencies:

    - Requires embeddings and simple classifier (e.g., L1-regularized logistic regression) or light heuristics while you train a better router.

    - Domain shifts (non-technical tasks) may need re-tuning.

- Industry – Contact Centers/Voicebots

  - Use case: Faster responses and higher throughput from reduced context size; lower risk of style contamination across turns.

  - Tools/workflows:

    - AO pipeline for most turns; FC fallback when anaphora to assistant content is detected.

    - Monitoring dashboards to track token savings, latency, and resolution rates.

  - Assumptions/dependencies:

    - Must ensure accessibility and call QA standards; verify no loss on escalations or sensitive flows.

- Industry – Enterprise Knowledge Assistants (RAG)

  - Use case: Prevent prior generated explanations from polluting later answers; rely on user queries + retrieved docs rather than past assistant text.

  - Tools/workflows:

    - Filter the memory chain to user turns + retrieved sources; index assistant turns, but only fetch a specific past turn when explicitly referenced.

  - Assumptions/dependencies:

    - Retrieval quality must be high; poor retrieval will offset AO benefits.

- Academia – Benchmarks/Evaluation

  - Use case: Add AO baselines and context-pollution diagnostics to multi-turn benchmarks; report both quality and token consumption.

  - Tools/workflows:

    - Include user-only and FC conditions; annotate “New Ask” vs. follow-ups; log win/tie rates and context length.

  - Assumptions/dependencies:

    - Human evaluation needed to complement LLM-judge; paper reports ≥90% alignment but scope-limited.

- Academia – Reproducible Context Studies

  - Use case: Study context dependence in new domains by training a lightweight AO/FC router and measuring quality-cost frontiers.

  - Tools/workflows:

    - Use embeddings + metadata (round number, context length) and a sparse classifier to route per turn; open-source scripts for plug-and-play.

- Policy/Compliance – Data Minimization

  - Use case: Reduce retention of assistant outputs in logs by default (store user turns; replace assistant turns with placeholders), lowering privacy and compliance risk.

  - Tools/workflows:

    - “Privacy-by-default” logging policy: persist user prompts fully; store assistant outputs hashed and retrievable only if referenced or for audits.

  - Assumptions/dependencies:

    - Certain regulations require auditable records; maintain secure retrieval for regulated flows.

- Daily Life – Personal Assistants

  - Use case: “Start fresh” mode that preserves only user prompts; faster, cheaper, and reduces the chance of stylistic or factual drift between tasks.

  - Tools/workflows:

    - A UI toggle “Use only my messages” + automatic topic-shift detection that clears assistant history mid-conversation.

  - Assumptions/dependencies:

    - Users may sometimes want continuity; provide explicit “resume with history” control.

- Energy/Sustainability – Cost and Carbon Reduction

  - Use case: Lower compute and energy by reducing context lengths 5–10x in longer chats; more sessions per GPU hour.

  - Tools/workflows:

    - Fleet-level policy to default to AO for new asks; monthly reports on kWh and $ saved.

  - Assumptions/dependencies:

    - Savings depend on traffic mix and average conversation depth.

- Healthcare – Non-diagnostic Triage and Navigation

  - Use case: Patient-facing navigators (appointments, insurance questions) where many turns are independent; reduce context to limit error propagation.

  - Tools/workflows:

    - AO default with strict fallback to FC when the user references earlier advice; include human oversight for safety-critical transitions.

  - Assumptions/dependencies:

    - Safety evaluation and clinician approval; avoid AO for longitudinal clinical reasoning without validation.

- Finance – Retail Banking Chatbots

  - Use case: Reduce token use and drift in long customer threads (e.g., switching from card dispute to mortgage inquiry mid-chat).

  - Tools/workflows:

    - AO default on topic shifts; keep per-turn audit trails without storing full assistant histories.

  - Assumptions/dependencies:

    - Strong compliance constraints; ensure retrieval of relevant past assistant turns for regulated disclosures when required.

- Security – Prompt-Injection Containment

  - Use case: Limit propagation of malicious tool outputs or compromised assistant turns by not persisting them in future context.

  - Tools/workflows:

    - Memory hygiene filter that quarantines tool outputs and only feeds user prompts + vetted sources forward.

  - Assumptions/dependencies:

    - Needs robust detection of injection cues; may require human-in-the-loop for high-risk actions.
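Several of the workflows above rely on a keyword or regex filter that falls back to full context when the user points at an earlier assistant reply. A minimal sketch of that heuristic follows; the cue list is illustrative (seeded with the phrases quoted in the list above), and the pattern and function names are assumptions rather than anything specified in the paper.

```python
import re

# Illustrative cue list; a real deployment would tune this on its own traffic.
DEPENDENCY_CUES = re.compile(
    r"\b("
    r"that one"
    r"|the (?:first|second|third|last) one"
    r"|earlier answer"
    r"|your previous (?:step|answer|response)"
    r")\b",
    re.IGNORECASE,
)


def choose_context(user_message: str) -> str:
    """Return "FC" if the message seems to reference assistant output, else "AO"."""
    return "FC" if DEPENDENCY_CUES.search(user_message) else "AO"


print(choose_context("The second one is broken"))
print(choose_context("Switch from UMAP to t-SNE"))
```

A filter like this is cheap and transparent, but it misses implicit references, so it is best treated as a stopgap while a learned router is trained.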

Long-Term Applications

These opportunities require further research, scaling, or productization beyond straightforward AO heuristics.

- Industry/Research – Fine-Grained Assistant-Turn Retrieval

  - Use case: Retrieve only the specific assistant turn(s) referenced by the current user, not the whole history.

  - Tools/products:

    - “Turn-level RAG” with vector indexing of assistant turns and pointer-based retrieval into context.

  - Assumptions/dependencies:

    - Reliable detection of cross-turn references and robust ranking to avoid re-introducing pollution.

- Industry – Learned Context Routers at Scale

  - Use case: Production-grade routers predicting P(FC > AO) with richer features (topic models, anaphora resolution, dialogue acts) and per-domain training.

  - Tools/products:

    - Microservice “Context Router” with online A/B testing and budget-aware policies (quality vs. tokens).

  - Assumptions/dependencies:

    - Continuous training/evaluation to accommodate domain drift; labeled data or weak supervision to learn routing.

- Model Training – Self-Conditioning Robustness

  - Use case: Pretrain/fine-tune models to de-emphasize their own prior outputs (reduce over-conditioning) and attend more to user inputs and verified evidence.

  - Tools/products:

    - Curriculum that includes AO-context examples; losses that penalize reliance on assistant-side tokens; preference optimization against pollution.

  - Assumptions/dependencies:

    - Requires access to training pipelines and sufficient data diversity; careful safety evaluation.

- Agentic Systems – Memory Garbage Collection Frameworks

  - Use case: Generalize context hygiene to tool outputs, plans, and traces; keep only minimally sufficient artifacts across long runs.

  - Tools/products:

    - “Conversation Memory Budgeter” that compacts state across tools with just-in-time retrieval; policy DSLs for retention.

  - Assumptions/dependencies:

    - Strong observability; risk of dropping necessary state if policies are too aggressive.

- Benchmarks – True Multi-Turn Dependence Datasets

  - Use case: Community benchmarks that isolate when assistant history is truly required, enabling better long-context model evaluation.

  - Tools/products:

    - Public datasets with annotated dependence types (new ask, follow-up w/ or w/o feedback), context-pollution tags, and cost/quality metrics.

  - Assumptions/dependencies:

    - Broad domain coverage and human validation to complement LLM-judges.

- Healthcare – Longitudinal Assistants

  - Use case: Safe, AO-first clinical agents that selectively retrieve necessary assistant turns for continuity (medication histories, prior advice) without style/fact drift.

  - Tools/products:

    - Verified “turn whitelist” retrieval integrated with EHRs; provenance tracking for any assistant content reintroduced.

  - Assumptions/dependencies:

    - Regulatory approval, rigorous clinical trials, and fail-safes for critical decision points.

- Finance/Legal – Compliance-Aware Context Policies

  - Use case: Dynamic retention strategies aligning with record-keeping laws while minimizing unnecessary assistant-context exposure.

  - Tools/products:

    - Policy engines that tag, hash, and selectively rehydrate assistant outputs when regulators require full reconstructions.

  - Assumptions/dependencies:

    - Jurisdiction-specific rules; secure storage and audit capabilities.

- Robotics/Automation – Drift-Resistant Planning

  - Use case: Long-horizon agents that prune past verbal plans and tool logs to avoid carrying forward wrong assumptions.

  - Tools/products:

    - Planning layers that summarize to verified state variables; AO-like constraints for language planning tokens.

  - Assumptions/dependencies:

    - Requires integration with symbolic/learned state representations; safety validation in real environments.

- Energy/Hardware – Token-Aware Scheduling

  - Use case: Cluster and device schedulers that exploit AO to pack more sessions per GPU and allocate compute dynamically to FC only when needed.

  - Tools/products:

    - “LLM Cost Optimizer” integrating routing signals with queueing and scaling policies.

  - Assumptions/dependencies:

    - Orchestration support (K8s/serverless) and accurate per-turn predictions of context size and quality impact.

- Security – Architecture for Ephemeral Assistant State

  - Use case: Default-ephemeral assistant outputs with cryptographic linking for verifiable recovery; reduces risk of latent compromise persisting across turns.

  - Tools/products:

    - Ephemeral stores with expiration policies; integrity proofs and access-controlled rehydration for audits.

  - Assumptions/dependencies:

    - Enterprise key management and secure operations; policy alignment with internal governance.

- Education – Tutor Design for Independent Problem Solving

  - Use case: Tutors that encourage fresh reasoning per question by default, pulling in only essential prior feedback when explicitly referenced.

  - Tools/products:

    - AO-first tutoring flows; analytics on dependence types to tailor pedagogy and reduce carry-over misconceptions.

  - Assumptions/dependencies:

    - Requires experiments on learning outcomes; domain-specific guardrails to avoid losing beneficial scaffolding.

- Tooling – Context Hygiene Analyzer

  - Use case: Static/interactive analyzer that flags turns likely polluted by prior assistant content and recommends omissions.

  - Tools/products:

    - DevSecOps-style linting for conversations; plug-ins for chat platforms and IDEs.

  - Assumptions/dependencies:

    - Needs labeled examples of pollution and calibration for different models/domains.

- Governance – Standards for Context Management

  - Use case: Industry-wide guidelines defining default omission of assistant outputs, minimal necessary retrieval, and transparency on context composition.

  - Tools/products:

    - Certification checklists; disclosure formats showing what past context influenced a response.

  - Assumptions/dependencies:

    - Multi-stakeholder alignment and evidence that quality is maintained for target use cases.

Notes on feasibility across all applications:

- Results are strongest in technical, English multi-turn chats and may not generalize uniformly (evaluate per domain and model).

- AO can reduce context usage by 5–10x at later turns; quality trade-offs depend on model (GPT‑5.2 needed adaptive routing).

- LLM-as-judge reliability is high but not perfect; validate with human review for critical domains.

- Implement safety fallbacks: automatically escalate to FC when users reference prior assistant content or when confidence is low.

Glossary

- Agentic systems: LLM-based tools that autonomously plan and execute sequences of actions in multi-turn workflows. "In response, agentic systems like Claude Code and Cursor have adopted context-editing strategies."

- Assistant-Omitted (AO) context: A prompting configuration that removes prior assistant responses from the conversation history, keeping only user turns. "Assistant-Omitted (AO) context, in which the model is prompted with only prior user turns."

- Assistant-side history: The accumulated past outputs from the assistant stored in the conversation context. "the average response quality decreases to some extent with the omission of assistant-side history"

- Binomial proportion 95% confidence intervals: Statistical intervals quantifying uncertainty around a proportion estimate. "Error bars indicate binomial proportion 95% confidence intervals."

- Context editing: Modifying and reorganizing multi-turn conversation history to improve relevance and efficiency. "Other work studies context editing of multi-turn conversation histories."

- Context filtering: Selectively omitting parts of the conversation context to reduce distraction and memory usage. "we design a context-filtering approach that selectively omits assistant-side context."

- Context management: Strategies for handling long conversation histories to control compute, memory, and relevance. "context management becomes an important challenge."

- Context pollution: A phenomenon where earlier model-generated responses inject errors, hallucinations, or stylistic artifacts that persist across turns. "We call this phenomenon context pollution."

- Context window: The maximum number of tokens a model can accept as input context. "context window 32,768 tokens"

- Conversational question answering (ConvQA): QA tasks that require understanding and leveraging prior turns in a dialogue. "An earlier line of work on conversational question answering (ConvQA)"

- Dense vector embeddings: High-dimensional numeric representations of text used for similarity and downstream modeling. "dense vector embeddings of the user prompt as well as the past conversation history"

- ERGO: A method that realigns multi-turn context by consolidating user inputs and omitting assistant responses. "ERGO (Khalid et al., 2025) attempts to dynamically realign conversation context in multi-turn settings by rewriting all prior user inputs into a single prompt and omitting past assistant responses."

- Full Context (FC): A prompting setup that includes both prior user and assistant turns in the input. "Full Context (FC), in which the model is prompted with both prior user and assistant turns"

- Frontier model: A state-of-the-art, cutting-edge LLM used as a benchmark against open models. "across three open reasoning models and one frontier model."

- Hallucinations: Fabricated or incorrect content generated by an LLM that appears plausible. "introducing errors, hallucinations, or stylistic artifacts"

- Jaccard metric: A set-based similarity measure often used with clustering or graph methods. "carries over the Jaccard metric from UMAP into the t-SNE implementation"

- L1-regularized logistic regression: A classification model with L1 penalty encouraging sparsity in learned weights. "we train a classifier (specifically, an L1-regularized logistic regression model)"

- LLM-as-judge framework: An evaluation setup where an LLM assesses and compares other LLM responses. "our evaluation relies on an LLM-as-judge framework"

- LLM-judge: An LLM used to score or pick winners between model outputs on defined criteria. "we use GPT-5 as an LLM-judge."

- PCA (Principal Component Analysis): A dimensionality reduction technique that projects data onto principal axes. "Thus, we apply PCA to reduce the prompt and conversation-history embeddings to 20 components each."

- Position bias: A systematic preference for items based on their order rather than their content. "To mitigate position bias, we randomize response ordering for each comparison."

- Prompt compression: Techniques that shorten prompts while preserving essential information to reduce cost and distraction. "A line of work studies prompt compression in the context of single-turn retrieval-augmented generation (RAG)"

- Retrieval-augmented generation (RAG): Generation methods that condition on external retrieved documents. "single-turn retrieval-augmented generation (RAG)"

- t-SNE: A nonlinear dimensionality reduction algorithm for visualizing high-dimensional data. "use t-SNE instead."

- Temperature: A sampling parameter controlling randomness in token selection during generation. "temperature 0.6"

- Thinking trace: The intermediate reasoning tokens or chain-of-thought generated before the final answer. "the judge is given both the thinking trace and the final response"

- Top-k: A sampling parameter restricting choices to the k most probable next tokens. "top-k 20"

- Top-p: Nucleus sampling that selects tokens from the smallest set whose cumulative probability exceeds p. "top-p 0.95"

- Trajectory reduction: Methods for shortening agent execution histories by removing irrelevant steps or artifacts. "agent-based systems are continuing to test out new trajectory reduction strategies."

- UMAP: A nonlinear dimensionality reduction algorithm used for clustering and visualization. "the user requests UMAP clustering code."

- User-turn-only prompting: A strategy that conditions responses only on current and prior user messages, omitting assistant outputs. "user-turn-only prompting approach"

- vLLM: An efficient serving system/framework for running LLMs at scale. "This model is served via vLLM."
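As a worked example for the glossary entry on binomial proportion 95% confidence intervals: the summary does not say which interval the paper uses, so the sketch below assumes the simple normal-approximation (Wald) interval, p ± 1.96·sqrt(p(1−p)/n), applied to a hypothetical win count.

```python
import math
from typing import Tuple


def wald_interval(wins: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Normal-approximation 95% interval for a proportion (assumed method)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = wins / n
    half_width = z * math.sqrt(p * (1.0 - p) / n)
    # Clamp to [0, 1], since a proportion cannot fall outside that range.
    return max(0.0, p - half_width), min(1.0, p + half_width)


# Hypothetical counts: 50 wins out of 100 comparisons.
low, high = wald_interval(50, 100)
print(f"win rate 0.50, 95% CI [{low:.3f}, {high:.3f}]")
```

For small samples or proportions near 0 or 1, a Wilson interval is generally a better choice than the Wald form shown here.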
