- The paper introduces an overflow-safe polylog-time parallel MWPM decoder that mitigates arithmetic overflow using truncated polynomial ring arithmetic.

- It combines edge-weight perturbation without amplification scaling and a polynomial Tutte matrix construction to extract minimum-weight perfect matchings for surface codes.

- Numerical results show a 99.9% reduction in the required arithmetic bit length, making the approach viable for implementation on current FPGA-based FTQC systems.

Overflow-Safe Polylog-Time Parallel MWPM Decoder for FTQC

Motivation and Context

Fault-tolerant quantum computation (FTQC) relies on fast, accurate quantum error correction (QEC). For surface codes, the dominant classical decoding strategy is minimum-weight perfect matching (MWPM), typically solved in polynomial time with Edmonds' blossom algorithm. Recent determinant-based approaches to MWPM achieve polylogarithmic parallel runtime, offering a theoretical reduction in decoding time [takada2025doubly]. In practice, however, these methods require enormous bit lengths. On finite-bit hardware the intermediate values overflow, and the results are no longer mathematically correct.

This work constructs an overflow-safe, polylog-time parallel MWPM decoder that can be implemented at the scale of current surface-code experiments. It comes with rigorous guarantees of correctness and failure detection under finite bit length. The paper also presents strategies that reduce the required arithmetic bit length by over 99.9%, enabling a hardware-efficient realization of MWPM decoding with polylogarithmic parallel runtime.

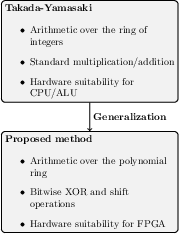

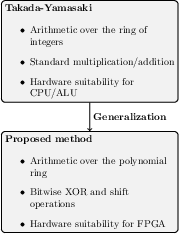

Figure 1: Conceptual comparison between Takada-Yamasaki [takada2025doubly] and the proposed bitwise algorithm.

Algorithmic Framework and Mathematical Structure

The MWPM decoding task for surface codes is cast as finding a minimum-weight perfect matching in a weighted detector graph constructed from the syndrome extraction circuit. Edge weights reflect the negative log-likelihoods of errors. They are discretized to integers because they later serve as polynomial degrees in the Tutte matrix. The decoder must find an MWPM consistent with the observed syndrome data, that is, a perfect matching of minimum total weight.
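The discretization step can be made concrete with a minimal sketch that converts an error probability into an integer edge weight. It assumes the log-likelihood-ratio weight $\ln((1-p)/p)$, which is a common choice for MWPM decoders. It also assumes fixed-point rounding to `precision_bits` binary fractional digits. The function names and the exact rounding rule are hypothetical and are not taken from the paper.

```python
import math


def edge_weight(p):
    """Log-likelihood-ratio weight ln((1-p)/p), assumed here as the MWPM edge weight."""
    return math.log((1 - p) / p)


def integer_weight(p, precision_bits):
    """Hypothetical fixed-point discretization: round(weight * 2^precision_bits).

    precision_bits is the number of binary fractional digits kept.
    """
    return round(edge_weight(p) * (1 << precision_bits))
```

With zero fractional bits, $p = 0.01$ and $p = 0.0105$ both round to weight 5 and become indistinguishable. With 4 bits they map to 74 and 73. The discretization-precision simulations measure the effect of exactly this kind of resolution loss.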

Overflow-Safe Polynomial Representation

Conventional integer arithmetic fails on real hardware because large intermediate values overflow, which makes determinant-based decoding infeasible. To fix this, the authors reformulate the arithmetic as operations in the truncated polynomial ring $\mathbb{F}_2[X]/(X^n)$. In this ring, overflow corresponds algebraically to discarding the terms of degree $n$ and higher. The reformulation permits a fully bitwise implementation using XOR and shift operations, preserves polylogarithmic runtime, and allows overflow to be detected explicitly.
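The ring operations can be sketched with Python integers used as bit vectors, where bit $k$ holds the coefficient of $X^k$. This is a minimal illustration that assumes that representation and uses hypothetical helper names `ring_add` and `ring_mul`. It shows why only XOR and shifts are needed, and how terms of degree $n$ or higher are discarded rather than carried into other bits.

```python
def ring_add(a, b):
    """Addition in F2[X]/(X^n): coefficient-wise XOR, so no carries arise."""
    return a ^ b


def ring_mul(a, b, n_bits):
    """Multiplication in F2[X]/(X^n_bits) by shift-and-XOR (carryless).

    Ints are bit vectors: bit k holds the coefficient of X^k.
    """
    mask = (1 << n_bits) - 1
    a, b = a & mask, b & mask
    result = 0
    while b:
        if b & 1:             # current coefficient of b is 1: add the shifted a
            result ^= a
        a = (a << 1) & mask   # multiply a by X and drop any X^n_bits term
        b >>= 1
    return result
```

For example, `ring_mul(0b11, 0b11, 8)` gives `0b101`, that is, $(1+X)^2 = 1+X^2$ over $\mathbb{F}_2$, whereas integer multiplication would give $3 \times 3 = 9$. With $n = 8$, the product $X^7 \cdot X$ vanishes entirely.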

Polylog-Time Parallel MWPM Algorithm

The decoding algorithm proceeds as follows:

- Edge Weight Perturbation: Random perturbations are added to the edge weights so that, with high probability, the MWPM is unique (isolated). No costly amplification scaling is applied, which prevents bit-length blowup.

- Polynomial Tutte Matrix Construction: Each edge weight $w_e$ is encoded as the degree of the monomial $X^{w_e}$ in the corresponding Tutte-matrix entry.

- Determinant Computation and MWPM Extraction: The lowest-degree nonzero term of the determinant reveals the MWPM weight. Determinants of minors identify the edges of the matching.

- Overflow-Aware Failure Signaling: If the MWPM weight exceeds the representable range, the algorithm outputs a failure indicator instead of an incorrect matching.
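The four steps above can be illustrated on a toy graph. The sketch below is a simplified stand-in and not the authors' construction. It makes three assumptions:

- The weights have already been perturbed so that the MWPM is unique.
- The matrix is symmetric with a zero diagonal and entries $X^{w_e}$ over $\mathbb{F}_2[X]/(X^n)$. In characteristic 2 this is a valid Tutte matrix, and its determinant equals the square of its Pfaffian, so all degrees double.
- Determinants are computed by brute force over permutations, which is only feasible for a handful of vertices.

All helper names are hypothetical.

```python
from itertools import permutations


def det_trunc_f2(degs, n_bits):
    """Brute-force determinant over F2[X]/(X^n_bits).

    degs[r][c] is the exponent d of a monomial entry X^d, or None for a zero
    entry. The result is an int whose bit k is the coefficient of X^k. In
    characteristic 2 every permutation sign is +1, so det equals the permanent.
    """
    total = 0
    for perm in permutations(range(len(degs))):
        deg = 0
        for row, col in enumerate(perm):
            if degs[row][col] is None:
                break  # this permutation passes through a zero entry
            deg += degs[row][col]
        else:
            if deg < n_bits:  # terms of degree >= n_bits are truncated away
                total ^= 1 << deg
    return total


def lowest_degree(poly):
    """Degree of the lowest nonzero term, or None for the zero polynomial."""
    return (poly & -poly).bit_length() - 1 if poly else None


def toy_mwpm(num_vertices, weights, n_bits):
    """Return (mwpm_weight, edges), or None as a failure indicator.

    weights maps (i, j) to an integer weight, assumed already perturbed so
    that the minimum-weight perfect matching is unique.
    """
    # Symmetric, zero-diagonal matrix of X^{w_e}: a Tutte matrix in char 2.
    degs = [[None] * num_vertices for _ in range(num_vertices)]
    for (i, j), w in weights.items():
        degs[i][j] = degs[j][i] = w
    low = lowest_degree(det_trunc_f2(degs, n_bits))
    if low is None:
        return None  # det vanished mod X^n: overflow or no isolated minimum
    w_min = low // 2  # det = Pf^2, and squaring doubles degrees in char 2
    matching = []
    for (i, j), w in weights.items():
        target = 2 * (w_min - w)
        if target < 0:
            continue
        keep = [k for k in range(num_vertices) if k not in (i, j)]
        minor = [[degs[r][c] for c in keep] for r in keep]
        # (i, j) is in the unique MWPM iff X^target appears in this minor
        if (det_trunc_f2(minor, n_bits) >> target) & 1:
            matching.append((i, j))
    # Cheap verification: edges must cover every vertex once, with weight w_min
    covered = sorted(v for edge in matching for v in edge)
    if covered != list(range(num_vertices)) or sum(weights[e] for e in matching) != w_min:
        return None
    return w_min, sorted(matching)
```

Consider the 4-cycle 0-1-2-3-0 with weights 1, 2, 1, 3. Its determinant is $X^4 + X^{10}$, so the MWPM weight is 2 and the minors select edges (0, 1) and (2, 3). With `n_bits=4` the term $X^4$ is truncated away, and the sketch returns `None`, mirroring the failure indicator. With equal weights the two matchings cancel mod 2, and the sketch also returns `None`.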

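The authors prove that the decoder achieves polylogarithmic parallel depth by computing determinants over the polynomial ring with the Samuelson–Berkowitz algorithm. Because this algorithm is division-free, it applies in a ring where $X$ has no inverse. The computation requires only bitwise operations and a sublinear number of processors.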
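The Samuelson–Berkowitz recurrence itself can be sketched sequentially. This illustration makes three assumptions:

- Matrix entries are Python integers encoding elements of $\mathbb{F}_2[X]/(X^n)$ as bit vectors.
- The helper names are hypothetical.
- The loops run serially, so the sketch shows only the division-free arithmetic, not the parallel evaluation that gives polylogarithmic depth.

```python
def ring_mul(a, b, n_bits):
    """Carryless product in F2[X]/(X^n_bits); bit k of an int is the X^k coefficient."""
    mask = (1 << n_bits) - 1
    a, b = a & mask, b & mask
    result = 0
    while b:
        if b & 1:
            result ^= a
        a = (a << 1) & mask  # multiply by X, dropping the X^n_bits term
        b >>= 1
    return result


def dot(row, col, n_bits):
    """Inner product over the ring; ring addition is XOR."""
    acc = 0
    for x, y in zip(row, col):
        acc ^= ring_mul(x, y, n_bits)
    return acc


def berkowitz_det(mat, n_bits):
    """Division-free determinant over F2[X]/(X^n_bits) via Samuelson-Berkowitz.

    For k = size-1, ..., 0 it updates the characteristic polynomial of the
    trailing block A[k:, k:] (coefficients listed from the highest degree)
    with a Toeplitz product; all signs vanish in characteristic 2.
    """
    size = len(mat)
    coeffs = [1]  # characteristic polynomial of the empty block
    for k in range(size - 1, -1, -1):
        m = size - k                                   # dimension of A[k:, k:]
        row = mat[k][k + 1:]                           # R
        col = [mat[r][k] for r in range(k + 1, size)]  # C
        block = [r[k + 1:] for r in mat[k + 1:]]       # M
        toeplitz = [1, mat[k][k]]  # becomes [1, a, RC, RMC, ..., R M^(m-2) C]
        vec = col
        for _ in range(m - 1):
            toeplitz.append(dot(row, vec, n_bits))
            vec = [dot(r, vec, n_bits) for r in block]
        new = []
        for j in range(m + 1):
            acc = 0
            for i in range(min(j, m - 1) + 1):
                acc ^= ring_mul(toeplitz[j - i], coeffs[i], n_bits)
            new.append(acc)
        coeffs = new
    # det(A) = (-1)^size times the constant term; the sign is 1 mod 2
    return coeffs[-1]
```

On the 4-cycle Tutte matrix with entries $X^1, X^2, X^1, X^3$ (as integers 2, 4, 2, 8), the function behaves as follows:

- For $n = 32$ it returns $X^4 + X^{10}$.
- For $n = 5$ it returns only $X^4$.
- For $n = 4$ it returns $0$.

These values are the determinant reduced modulo $X^n$.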

Numerical Results and Bit Length Optimization

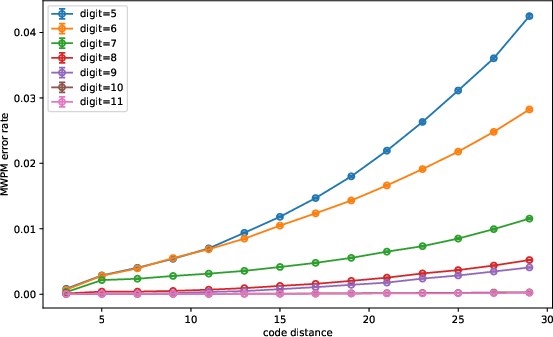

Discretization Precision and MWPM Fidelity

Simulations quantify how discretizing edge weights to integers affects the MWPM. For a range of code distances and binary precisions, the authors measure the rate at which the MWPM of the integer-weighted graph differs from that of the floating-point-weighted graph.

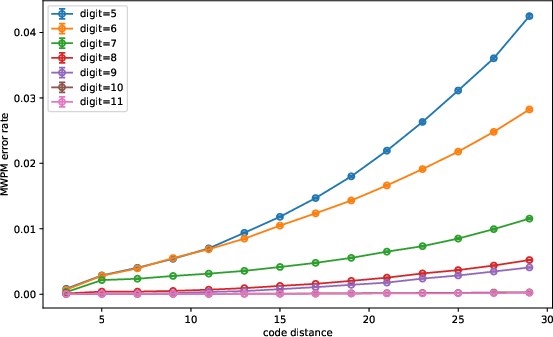

Figure 2: MWPM error rate versus code distance for varying binary precisions; $\geq 10$ bits suffice for $d \leq 9$.

Mismatch rates decay rapidly with increasing precision. For distances $d \leq 9$, 10 binary digits suffice to keep the MWPM mismatch rate below the logical error rate, confirming that decoding with integer weights is feasible.

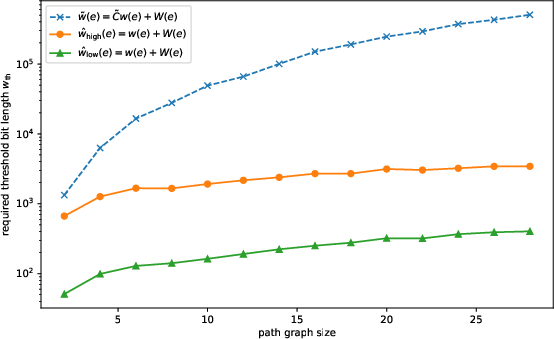

Arithmetic Bit Length Reduction

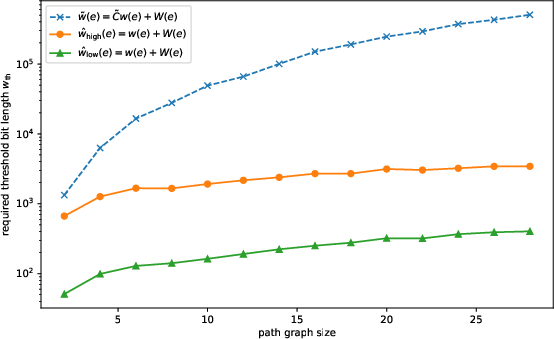

The authors benchmark the required arithmetic bit length for MWPM decoding under various perturbation and scaling strategies. Removing global amplification and employing variable precision (low for candidate generation, high for verification) yields dramatic bit length savings.

Figure 3: Required threshold bit length $w_{\mathrm{th}}$ for a correct MWPM versus path graph size; the proposed methods reach a practical, FPGA-friendly range.

For path graphs of size n=28, the required bit length drops from $\sim 6\times10^5$ bits (prior method) to $\sim 5\times10^2$ bits. This 99.9% reduction fits well within FPGA hardware capabilities and comes with negligible loss in decoding accuracy.
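The quoted percentage can be checked directly from the approximate bit lengths:

$$
1 - \frac{5\times 10^{2}}{6\times 10^{5}} = 1 - \frac{1}{1200} \approx 0.9992,
$$

which is a reduction of about 99.9%.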

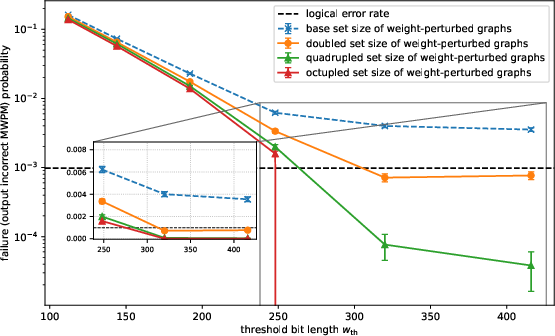

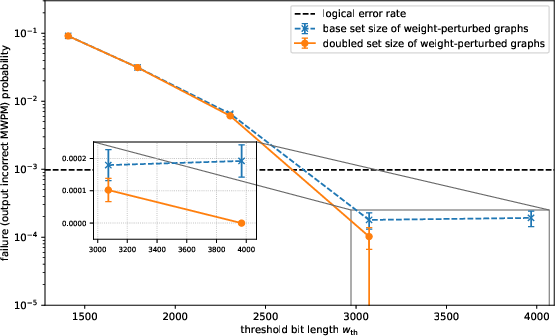

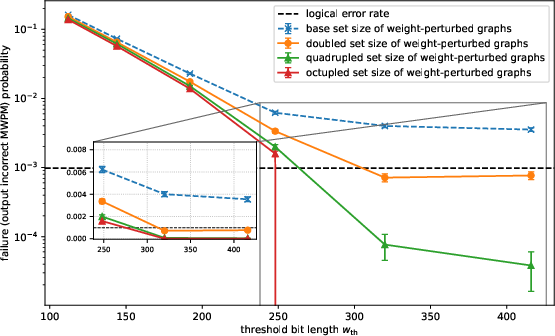

Failure Probability and Perturbation Sampling

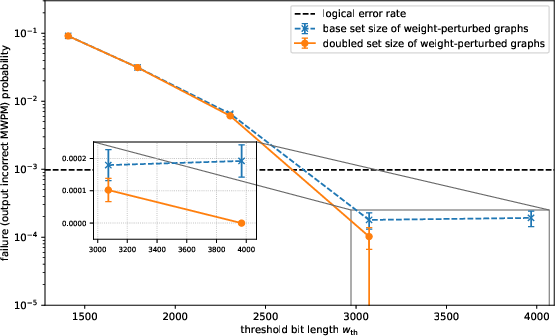

Monte Carlo simulations estimate the failure probability as a function of bit length and perturbation set size. Both the isolation-based scheme and the extended variable-precision scheme suppress decoding failures below the logical error rate. They do so at practical bit lengths ($3\times10^2$ to $5\times10^2$ bits) with moderate perturbation sampling.

Figure 4: Failure probability versus threshold bit length, showing suppression below logical error rates with practical bit lengths and augmented perturbation sampling.
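A generic Monte Carlo sketch can illustrate how isolation failure is estimated. It is not the authors' estimator. It rests on three assumptions:

- The toy graph and its perfect matchings are enumerated by hand.
- Perturbations are drawn uniformly from `1..perturb_range`.
- A trial with several perturbation sets fails only if none of them yields a unique minimum-weight matching.

All names are hypothetical.

```python
import random

# Hypothetical toy instance: the 4-cycle 0-1-2-3-0 and its two perfect
# matchings, enumerated by hand (explicit enumeration only scales to tiny graphs).
EDGES = [(0, 1), (1, 2), (2, 3), (0, 3)]
MATCHINGS = [[(0, 1), (2, 3)], [(1, 2), (0, 3)]]


def isolates(base, perturb_range, rng):
    """Draw one perturbation set; True if the minimum-weight matching is unique."""
    w = {e: base[e] + rng.randint(1, perturb_range) for e in EDGES}
    totals = sorted(sum(w[e] for e in m) for m in MATCHINGS)
    return totals[0] < totals[1]


def isolation_failure_rate(base, perturb_range, trials, num_sets=1, seed=0):
    """Monte Carlo estimate of the probability that none of `num_sets`
    independent perturbation sets isolates a unique minimum (assumed rule)."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        if not any(isolates(base, perturb_range, rng) for _s in range(num_sets)):
            failures += 1
    return failures / trials
```

When all base weights are equal and `perturb_range=1`, every trial ties, so the estimate is exactly 1.0. Widening the range, or drawing more perturbation sets per trial, makes ties and therefore failures rarer.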

Practical Implementation Guidelines

The authors propose the following parameters for real-world implementation in early FTQC:

- Arithmetic bit length: $w_{\mathrm{th}} \geq 5\times10^2$ bits

- Low binary precision: $4$ bits

- High binary precision: $\geq 8$ bits

- Perturbation range: $\geq \lceil 0.8\, n^{0.8} \rceil$

- Perturbation sets: $\geq 8 W_{\max}$

These guidelines enable direct translation to hardware architectures such as FPGAs and facilitate proof-of-principle demonstrations within current experimental constraints.
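The guideline values can be captured in a small configuration check. This hypothetical sketch makes four interpretive choices:

- $n$ is the number of vertices in the matching graph.
- $W_{\max}$ is the largest integer edge weight.
- The perturbation range is read as $\lceil 0.8\, n^{0.8} \rceil$.
- The listed low precision is an exact value, and the other entries are lower bounds.

The class and function names are invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class DecoderParams:
    """Hypothetical container for the proposed implementation parameters."""
    bit_length: int          # arithmetic bit length w_th
    low_precision: int       # binary digits for candidate generation
    high_precision: int      # binary digits for verification
    perturbation_range: int  # perturbations drawn from 1..perturbation_range (assumed)
    perturbation_sets: int   # number of independent perturbation sets


def recommended(n, w_max):
    """Smallest values meeting the guidelines under the assumed reading of n, W_max."""
    return DecoderParams(
        bit_length=500,
        low_precision=4,
        high_precision=8,
        perturbation_range=math.ceil(0.8 * n ** 0.8),
        perturbation_sets=8 * w_max,
    )


def violations(params, n, w_max):
    """Names of fields that fall short of the guidelines."""
    floor = recommended(n, w_max)
    bad = [name for name in ("bit_length", "high_precision",
                             "perturbation_range", "perturbation_sets")
           if getattr(params, name) < getattr(floor, name)]
    if params.low_precision != floor.low_precision:
        bad.append("low_precision")
    return bad
```

For example, with $n = 256$ the perturbation-range bound evaluates to $\lceil 0.8 \cdot 256^{0.8} \rceil = 68$.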

Theoretical and Practical Implications

The proposed framework rigorously addresses implementability barriers stemming from overflow and excessive bit requirements in determinant-based MWPM decoding, establishing mathematical consistency and efficient detection of computational failures. The reduction in bit length enables deployment on realistic hardware, narrowing the gap between theoretical polylog-time decoding and practical FTQC.

This approach, when combined with theoretical results linking polylog-time decoding to doubly-polylog-time FTQC overhead [takada2025doubly], strengthens prospects for experimentally viable, highly time-efficient quantum computation. It parallels recent FTQC milestones validating exponential error suppression at small code distances and supports future experiments demonstrating asymptotic decoding advantages.

Potential avenues for improvement include further tailoring determinant-based MWPM methods to exploit problem-specific structure, benchmarking against optimized blossom variants (e.g., sparse blossom [Higgott2025sparseblossom], fusion blossom [wu2023fusion]), and delineating crossover points where determinant-based decoders outperform blossom-based approaches in practice.

Conclusion

This paper delivers a formalized, overflow-safe polylog-time parallel MWPM decoder for FTQC, grounded in truncated polynomial ring arithmetic and optimized for practical hardware realization. The robust numerical analyses confirm near-optimal error suppression and dramatic resource reduction. The framework enables near-term experimental validation of asymptotic decoding speed, advancing the frontier of FTQC implementation and motivating deeper exploration of scalable, time-efficient quantum error decoding.