Minimum Weight Decoding in the Colour Code is NP-hard

Abstract: All utility-scale quantum computers will require some form of Quantum Error Correction in which logical qubits are encoded in a larger number of physical qubits. One promising encoding is known as the colour code which has broad applicability across all qubit types and can decisively reduce the overhead of certain logical operations when compared to other two-dimensional topological codes such as the surface code. However, whereas the surface code decoding problem can be solved exactly in polynomial time by finding minimum weight matchings in a graph, prior to this work, it was not known whether exact and efficient colour code decoding was possible. Optimism in this area, stemming from the colour code's significant structure and well understood similarities to the surface code, fanned this uncertainty. In this paper we resolve this, proving that exact decoding of the colour code is NP-hard -- that is, there does not exist a polynomial time algorithm unless P=NP. This highlights a notable contrast to some of the colour code's key competitors, such as the surface code, and motivates continued work in the narrower space of heuristic and approximate algorithms for fast, accurate and scalable colour code decoding.

Explain it Like I'm 14

What is this paper about?

This paper looks at a big step needed for building useful quantum computers: fixing errors. It focuses on a specific error-correcting scheme called the “colour code.” The authors prove that perfectly decoding the colour code—finding the smallest set of errors that explains what you measured—is extremely hard in the worst case. In computer science terms, they show it’s NP-hard, which means there’s almost certainly no fast algorithm that always solves it exactly.

Why this matters: colour codes are attractive because they can perform some important quantum gates more simply than other popular codes (like the surface code). But if their decoding is fundamentally hard, engineers will need smart approximate methods rather than exact ones.

What question are the authors trying to answer?

In simple terms:

- Can we decode the colour code exactly and quickly for every possible situation?

- More precisely: Is there a polynomial-time algorithm (a “fast” algorithm whose running time grows reasonably with problem size) that always finds the most likely error pattern consistent with the measurements?

- And even if we don’t need the exact errors, can we at least quickly tell whether the best correction changes the stored logical bit or not?

How did the researchers investigate this?

Think of error correction like a puzzle:

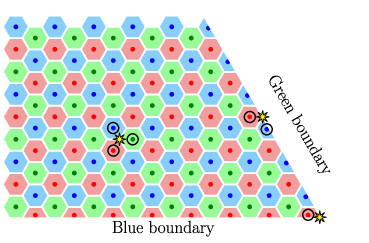

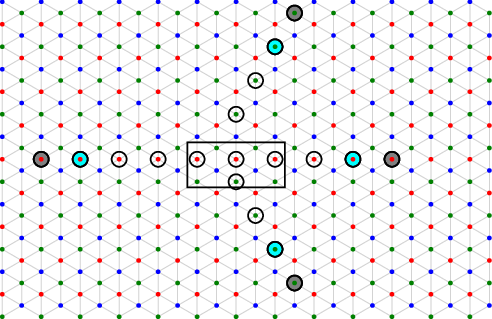

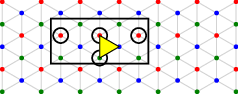

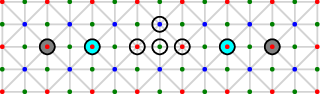

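- Imagine a giant honeycomb (hexagonal) grid. Every corner where three hexagons meet is a place an error might happen, and each hexagon has a “check” that lights up when it detects something wrong. The paper draws the flipped (“dual”) picture, in which each check becomes a dot and each possible error becomes a little triangle joining three dots.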

- When you measure, you see which checks lit up—this is the “syndrome,” a pattern of alarms. Decoding means: “Find the smallest set of triangle errors that could cause exactly this alarm pattern.”
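
To make the puzzle concrete, here is a minimal brute-force sketch in Python. It assumes the 7-qubit Steane code (the smallest colour code) with its checks written as the rows of the Hamming parity-check matrix; the qubit labelling and the function names are illustrative choices, not taken from the paper. The search tries error sets in order of increasing size, so its running time grows exponentially with the number of qubits.

```python
from itertools import combinations

# Rows are the three Z-checks of the 7-qubit Steane code (assumed labelling).
# Entry 1 means "this check watches this qubit".
H = [
    [1, 0, 1, 0, 1, 0, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

def syndrome_of(errors, checks=H):
    """A check lights up when an odd number of errors touch it."""
    return tuple(sum(row[q] for q in errors) % 2 for row in checks)

def min_weight_decode(target, checks=H):
    """Try error sets from smallest to largest; return the first that fits."""
    n = len(checks[0])
    for weight in range(n + 1):
        for errors in combinations(range(n), weight):
            if syndrome_of(errors, checks) == tuple(target):
                return set(errors)
    return None  # no error set produces this alarm pattern

print(min_weight_decode((1, 1, 0)))  # {2}
```

On seven qubits this finishes instantly, but the number of candidate error sets doubles with every extra qubit, which is why a faster exact method would be needed at scale.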

Key ideas explained with everyday analogies:

- Minimum weight decoding: “Weight” is just “how many errors.” Minimum weight means “fewest errors that explain the alarms.”

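- NP-hard: A problem is NP-hard if it is at least as hard as every problem in NP, a large class of puzzles whose solutions are easy to check. No one knows a fast algorithm that solves any NP-hard problem in all cases, and most experts believe none exists.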

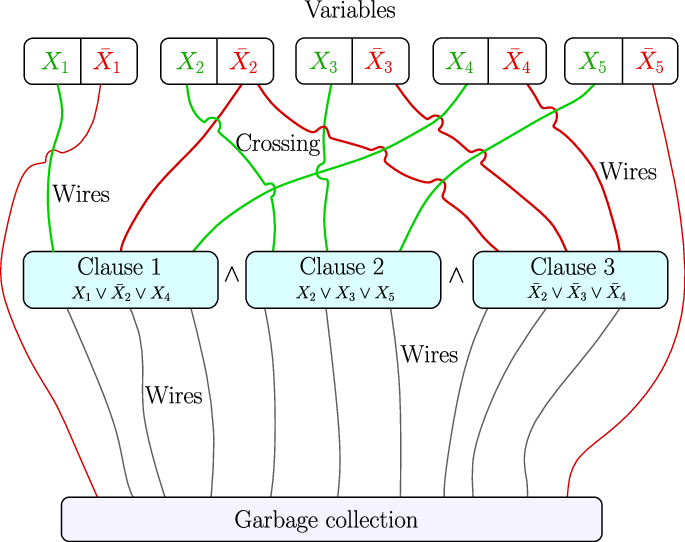

- Reduction from 3-SAT: 3-SAT is a classic “hard” puzzle. You have true/false variables and clauses like (X OR NOT Y OR Z). The question is: can you set the variables so every clause is satisfied? The authors cleverly build decoding puzzles inside the colour code that behave exactly like 3-SAT. If you could decode fast, you’d solve 3-SAT fast—so decoding must be hard too.
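
As a rough illustration of what a 3-SAT puzzle looks like, the Python sketch below encodes clauses as tuples of signed integers (a common convention, assumed here) and searches every true/false assignment. The example formula and the function names are hypothetical.

```python
from itertools import product

# +i stands for variable i, -i for NOT variable i.
# Hypothetical formula: (x1 OR NOT x2 OR x3) AND (NOT x1 OR x2 OR x3)
FORMULA = [(1, -2, 3), (-1, 2, 3)]

def clause_satisfied(clause, assignment):
    """A clause holds if at least one of its literals is true."""
    return any(assignment[abs(lit)] == (lit > 0) for lit in clause)

def satisfies(formula, assignment):
    """Checking a proposed assignment is fast: one pass over the clauses."""
    return all(clause_satisfied(c, assignment) for c in formula)

def brute_force_sat(formula):
    """Finding an assignment by exhaustive search takes up to 2^n tries."""
    variables = sorted({abs(lit) for clause in formula for lit in clause})
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if satisfies(formula, assignment):
            return assignment
    return None  # unsatisfiable

print(brute_force_sat(FORMULA))
```

Checking one assignment is cheap, while the search loop is what blows up; the reduction transfers that same gap to colour code decoding.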

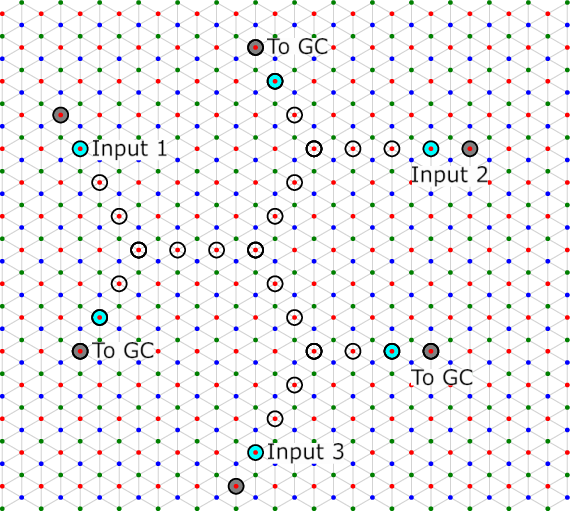

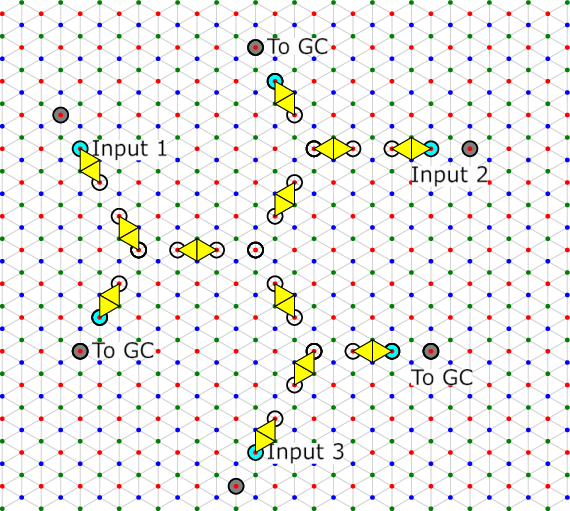

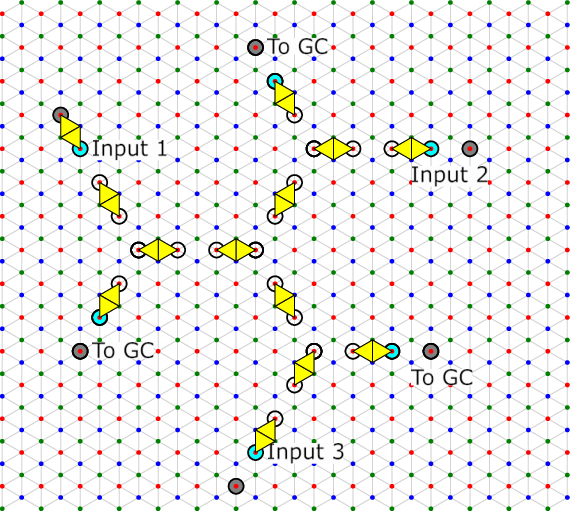

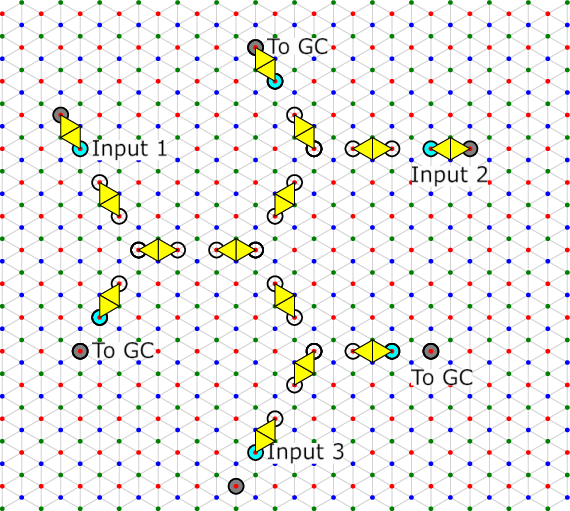

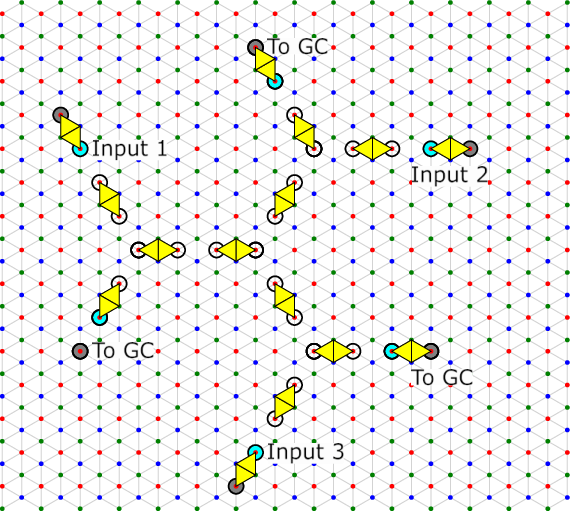

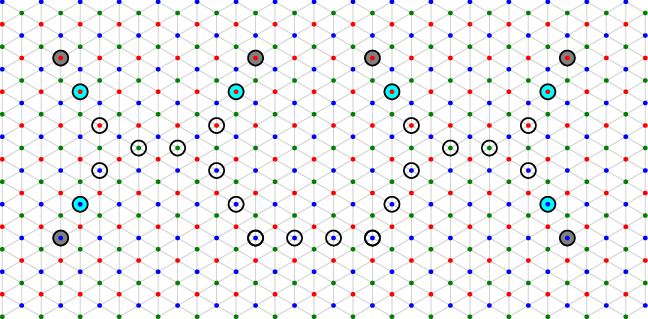

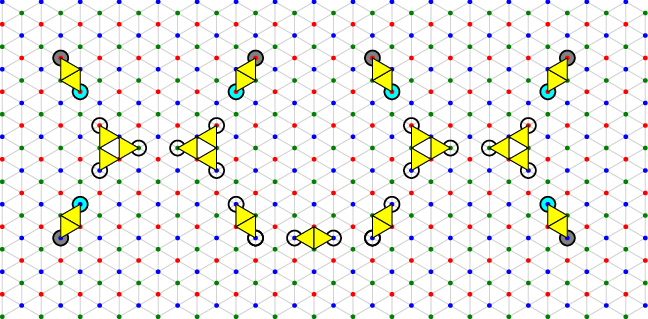

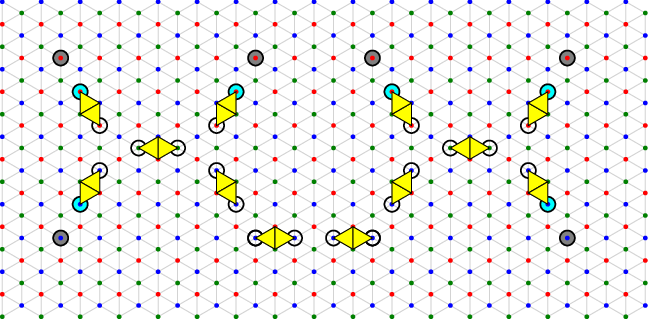

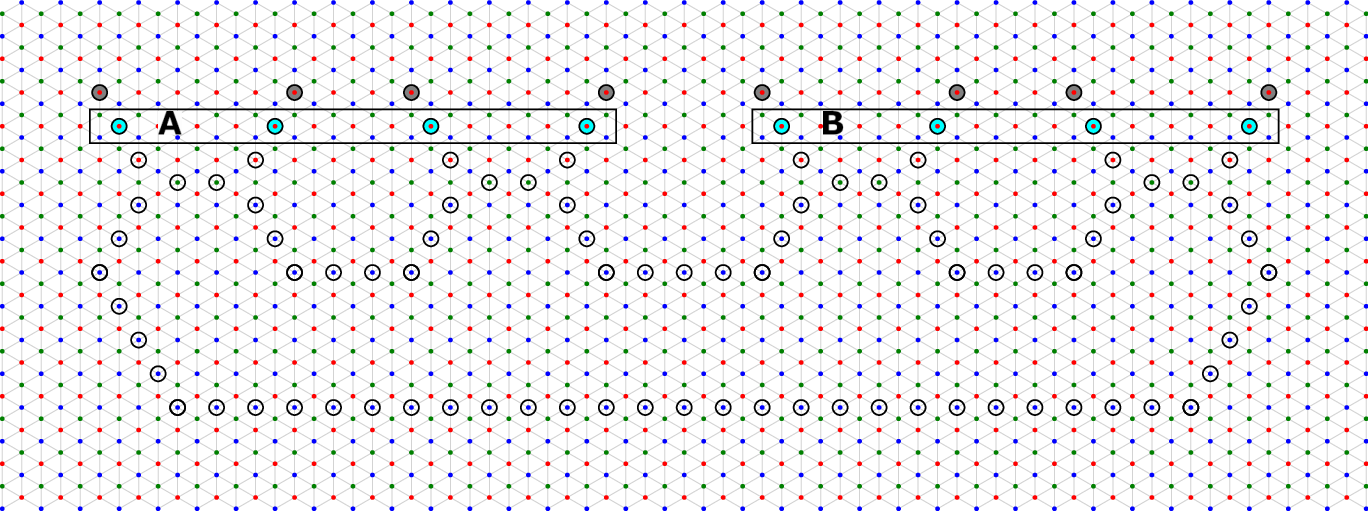

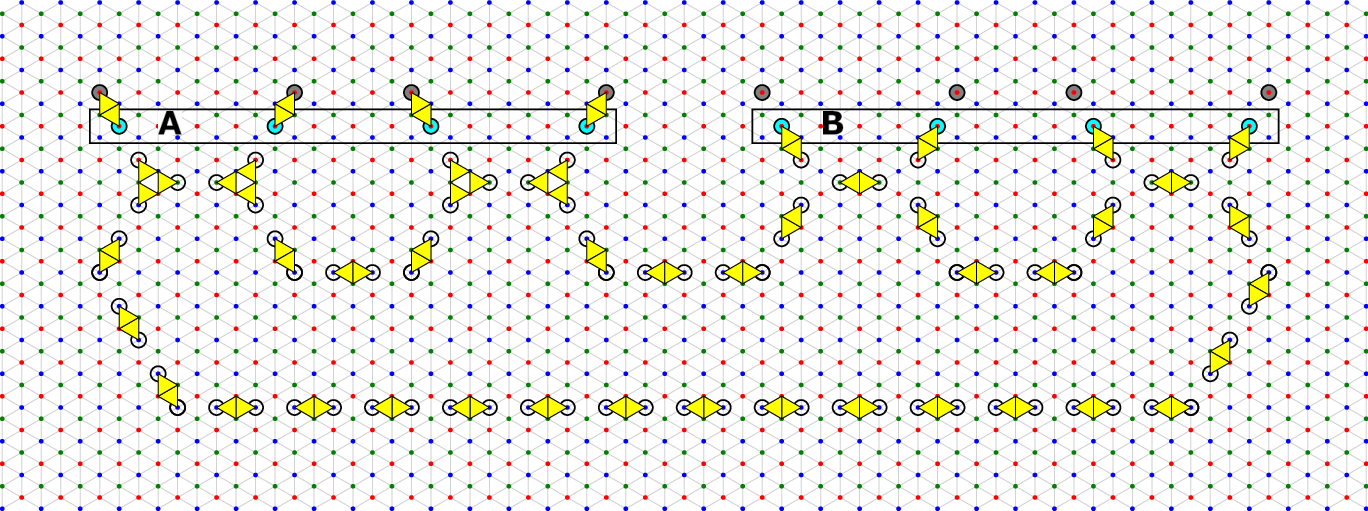

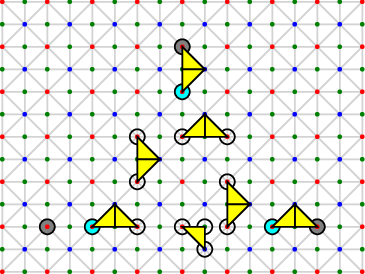

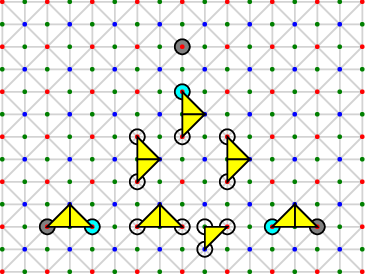

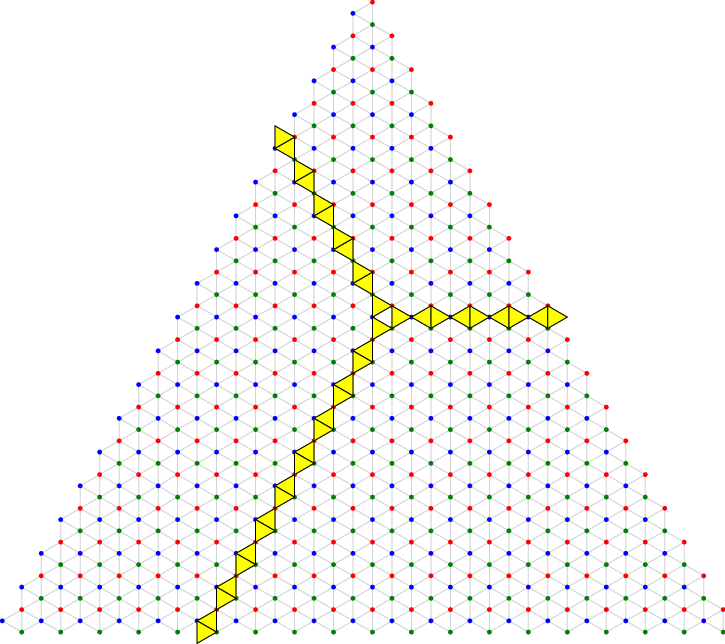

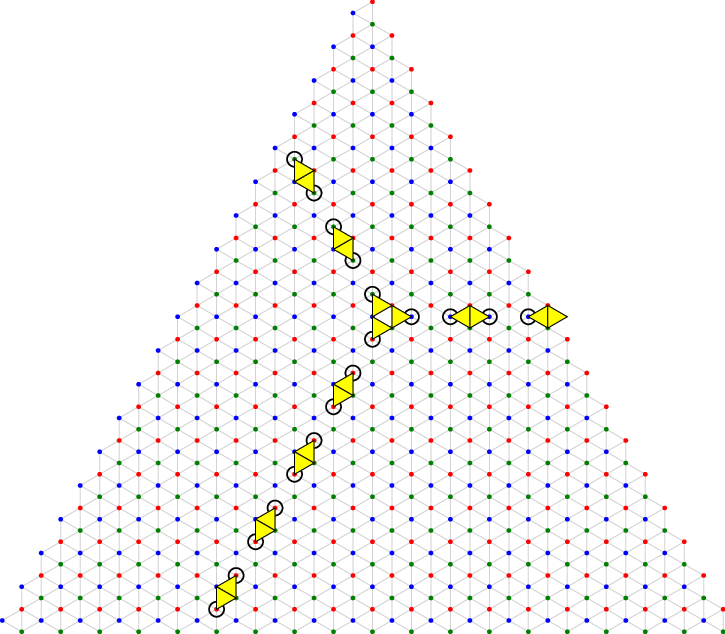

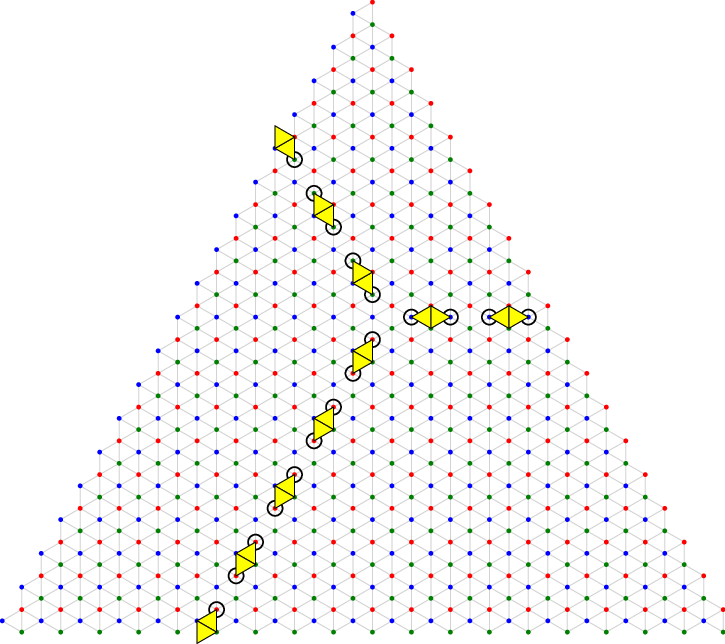

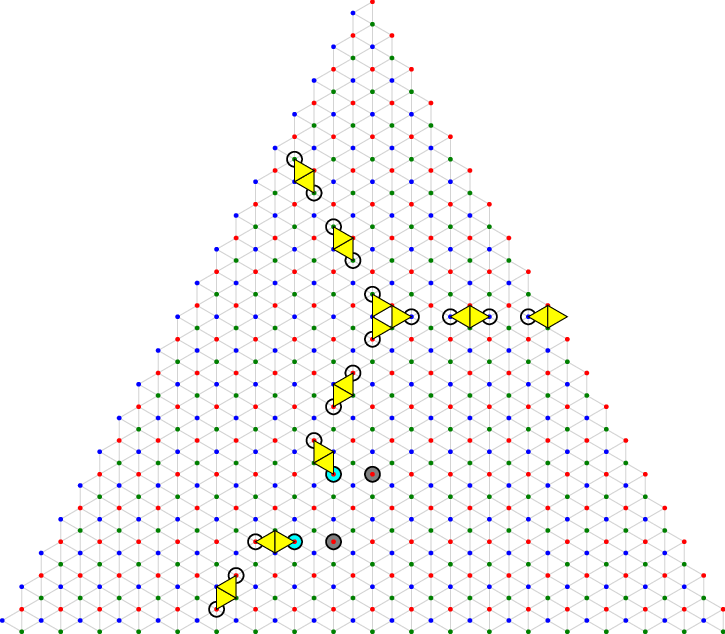

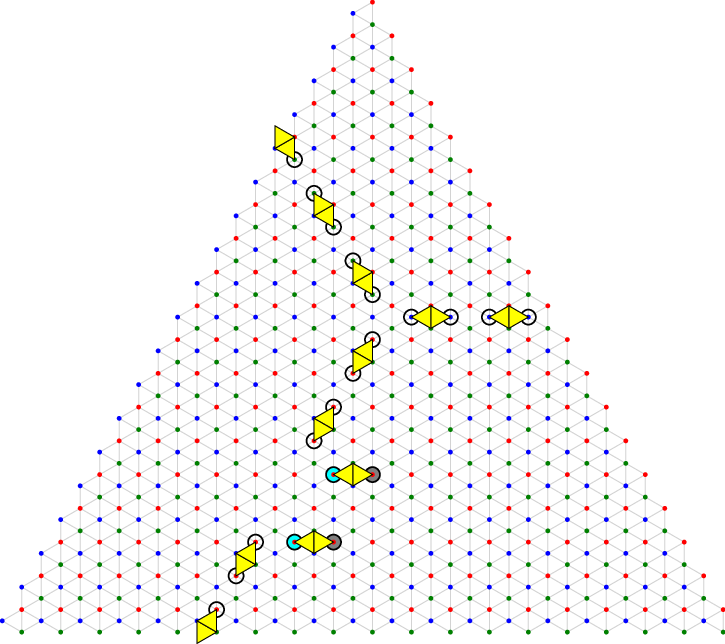

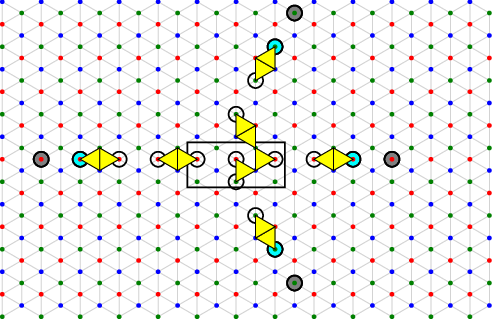

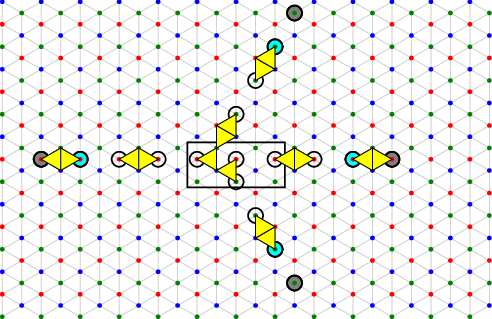

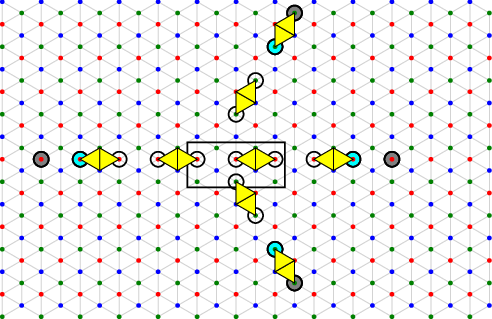

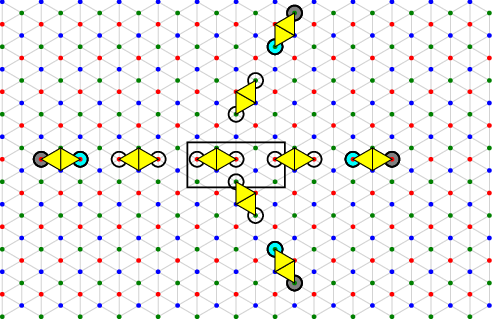

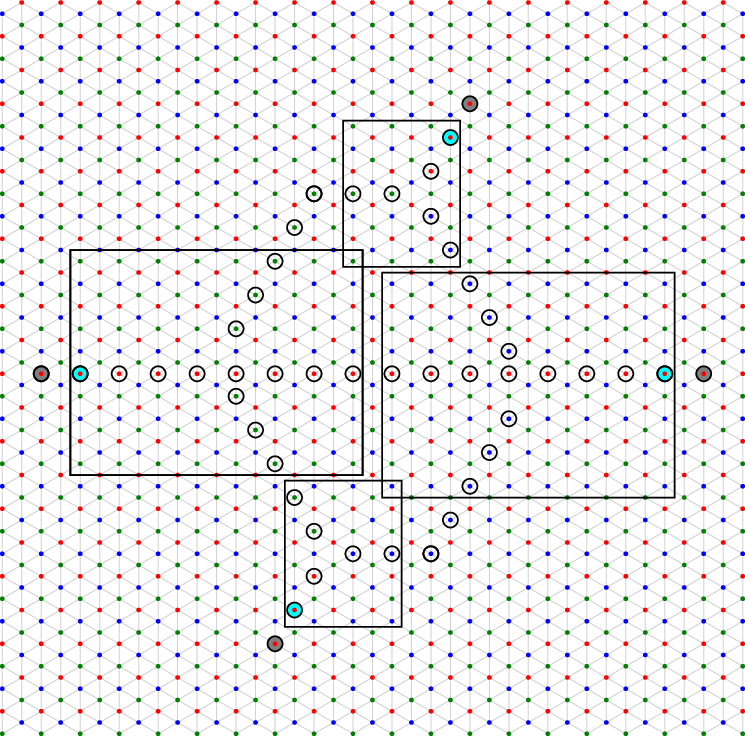

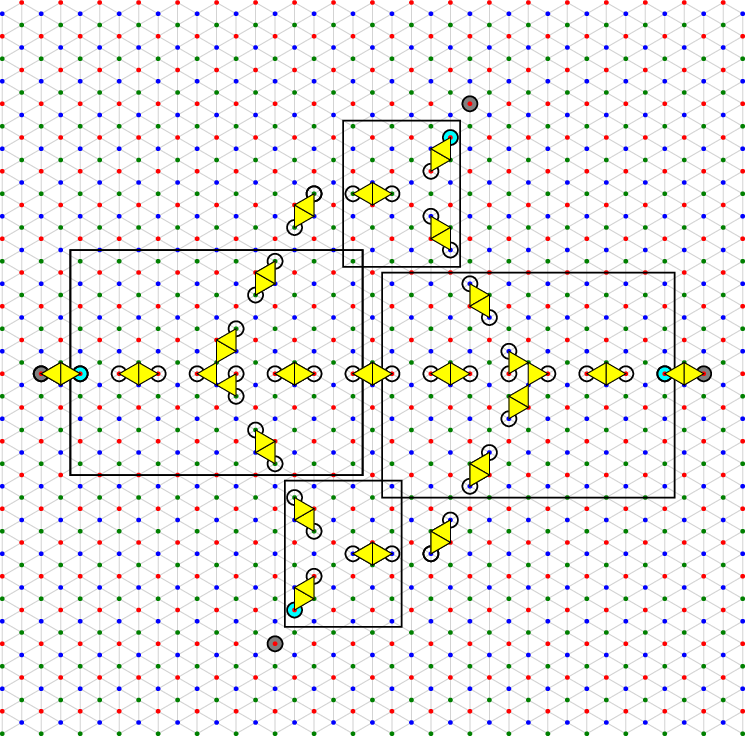

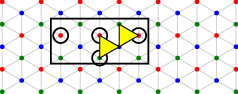

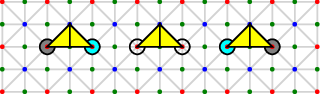

How their construction works (think LEGO gadgets wired together):

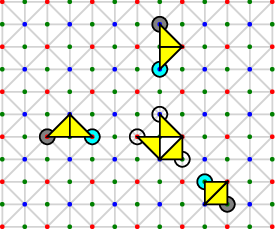

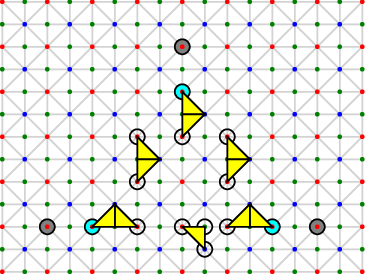

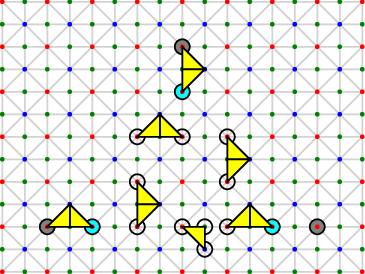

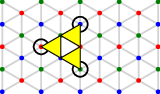

- Variable gadget: Represents a true/false variable. Choosing one of two exact covers puts it in TRUE or FALSE, and this choice is copied to multiple “output links” so it can influence several clauses.

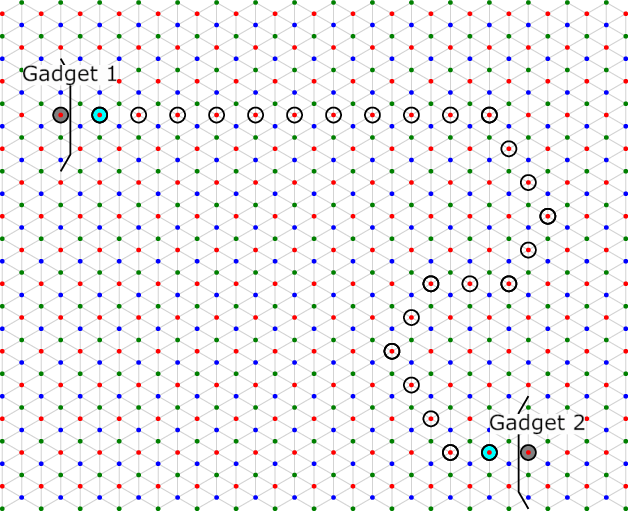

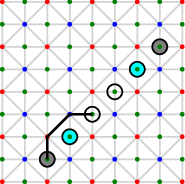

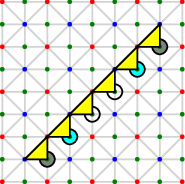

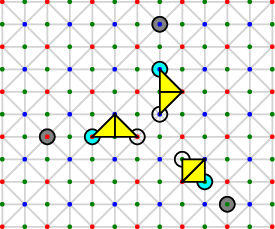

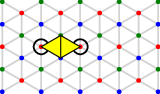

- Wire gadget: Copies a TRUE/FALSE signal from one place to another. If the wire starts TRUE, it ends TRUE; if it starts FALSE, it ends FALSE.

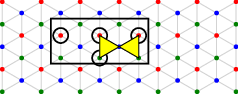

- Clause gadget: Represents an OR clause with three inputs. It can be perfectly covered if and only if at least one input is TRUE. If all inputs are FALSE, you cannot cover it exactly.

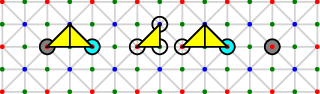

- Garbage collection gadget: Tidies up leftover parts so the whole construction can be covered exactly when the logical conditions are met. It uses simple “XOR” behavior to balance parity (even/odd counts of certain colored nodes).

- Crossing gadget: Lets wires cross without interfering (only needed in the simplest model; in more realistic models you can use time as a third dimension to avoid crossings).
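
The logical behaviour of the gadgets described above can be sketched abstractly in Python. This is only a truth-table model under stated assumptions: it ignores the lattice geometry, the garbage collection gadget and the crossing gadget, and all function names are hypothetical. Clauses use signed integers (+i for variable i, -i for its negation).

```python
def variable_outputs(value):
    """A variable gadget in state `value` offers the variable and its negation."""
    return {"pos": value, "neg": not value}

def wire(signal):
    """A wire gadget ends in the same state it starts in."""
    return signal

def clause_coverable(inputs):
    """A clause gadget can be exactly covered iff at least one input is TRUE."""
    return any(inputs)

def all_clauses_coverable(formula, assignment):
    """Wire each literal from its variable gadget into its clause gadget."""
    for clause in formula:
        inputs = []
        for lit in clause:
            outputs = variable_outputs(assignment[abs(lit)])
            inputs.append(wire(outputs["pos" if lit > 0 else "neg"]))
        if not clause_coverable(inputs):
            return False  # this clause gadget has no exact cover
    return True

formula = [(1, -2, 3)]
print(all_clauses_coverable(formula, {1: False, 2: True, 3: False}))  # False
print(all_clauses_coverable(formula, {1: False, 2: True, 3: True}))   # True
```

In this simplified model, every clause gadget is coverable exactly when the chosen TRUE/FALSE states satisfy the formula, which is the property the real construction is built to enforce.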

A crucial simplification they use:

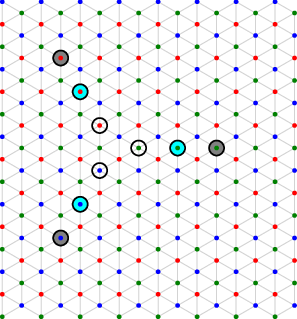

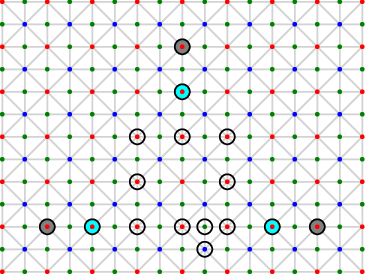

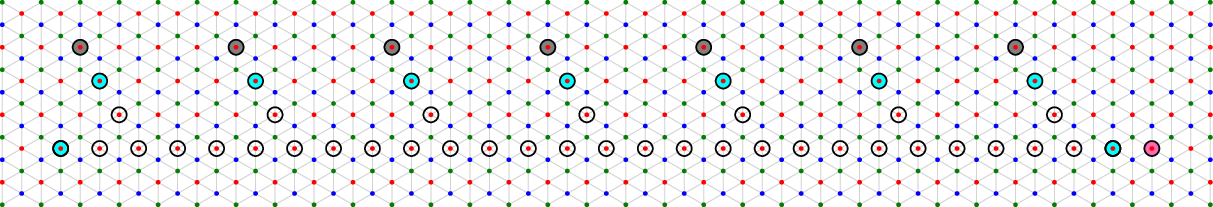

- Separated syndromes and exact covers: They space gadgets far apart. That way, each error triangle can hit at most one alarm, which forces a very neat “one-to-one” matching between errors and alarms in an exact cover. This makes reasoning about the puzzle much cleaner.
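
A small Python sketch of the "separated" condition follows. It assumes the alarms (defects) sit on an infinite triangular lattice addressed by axial coordinates `(q, r)`, a standard convention for hexagonal grids that is an assumption here rather than the paper's notation; boundaries are ignored.

```python
from itertools import combinations

def lattice_distance(a, b):
    """Graph distance between two sites of an infinite triangular lattice.

    Sites use axial coordinates (q, r); each site has the six neighbours
    (+-1, 0), (0, +-1), (1, -1) and (-1, 1).
    """
    dq, dr = b[0] - a[0], b[1] - a[1]
    return (abs(dq) + abs(dr) + abs(dq + dr)) // 2

def is_separated(defects):
    """True if no two defects sit at distance one from each other."""
    return all(lattice_distance(a, b) != 1 for a, b in combinations(set(defects), 2))

def syndrome_diameter(defects):
    """Largest distance between any two defects (0 for fewer than two)."""
    return max((lattice_distance(a, b) for a, b in combinations(set(defects), 2)), default=0)

spaced = [(0, 0), (2, 0), (0, 2)]
print(is_separated(spaced), syndrome_diameter(spaced))  # True 2
print(is_separated([(0, 0), (1, -1)]))                  # False
```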

By building these gadgets and wiring them to match any 3-SAT formula, they show: decoding that syndrome exactly is as hard as solving 3-SAT.

What did they find, and why is it important?

Main results:

- Exact minimum weight decoding in the 2D colour code is NP-hard. In other words, unless P=NP (which most experts doubt), there is no general fast algorithm that always finds the perfect correction.

- A related decision problem—“Does there exist a set of ≤ k errors that explains this syndrome?”—is NP-complete. That means it’s both hard and, if someone gives you a solution, you can check it quickly.

- Even deciding whether the best correction flips the stored logical bit (the overall meaning of the quantum information) is NP-hard.
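
The "check it quickly" half of NP-completeness can be illustrated with a short verifier. The sketch below assumes the checks are given as a 0/1 matrix (here the 7-qubit Steane code with an assumed qubit labelling); `verify_certificate` is a hypothetical name. It runs in time proportional to the size of the matrix, in contrast to the search for a solution.

```python
# Rows are the three Z-checks of the 7-qubit Steane code (assumed labelling).
H = [
    [1, 0, 1, 0, 1, 0, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

def verify_certificate(checks, syndrome, errors, k):
    """Accept iff `errors` has at most k elements and produces `syndrome`."""
    errors = set(errors)
    if len(errors) > k:
        return False
    produced = tuple(sum(row[q] for q in errors) % 2 for row in checks)
    return produced == tuple(syndrome)

print(verify_certificate(H, (1, 1, 0), {2}, k=1))     # True
print(verify_certificate(H, (1, 1, 0), {0, 1}, k=1))  # False: two errors exceed k
print(verify_certificate(H, (1, 1, 0), {0, 1}, k=2))  # True
```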

Why this matters:

- Surface codes (the main competitor) can be exactly decoded in polynomial time using a matching algorithm. Colour codes, despite having great features like easy Hadamard and S gates, don’t share this decoding advantage in the worst case.

- This directs future work: focus on heuristic and approximate decoders—methods that are fast and work well on typical cases, even if they can’t guarantee perfection for every possible syndrome.

- The results hold even in the simplest error model (only X-errors, all equally likely, no measurement errors), which strongly suggests hardness persists in more realistic noise settings.

What does this mean for the future?

- Colour codes remain appealing for practical quantum computing because they can implement certain gates very efficiently.

- However, their decoding won’t be universally fast and exact. So researchers should pour effort into:

  - Heuristic decoders: clever rules that usually find good corrections quickly.

  - Approximate methods: algorithms that are fast and very accurate on real-world error patterns, even if not guaranteed in worst-case constructions.

- This work doesn’t say colour codes are unusable—it just sets realistic expectations and guides the community toward the right kind of decoding strategies.

Short glossary

- Colour code: A 2D quantum error-correcting code laid out on a hexagonal grid with three “colors” of checks. It’s known for simple implementations of certain important gates.

- Surface code: Another leading 2D code that has highly efficient exact decoders.

- Syndrome: The pattern of checks that “complain” after measurement—your clues to what went wrong.

- Minimum weight decoding: Find the smallest set of errors that could cause the given syndrome.

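- NP-hard / NP-complete: NP-hard problems are at least as hard as every problem in NP and are believed to have no algorithm that is fast in all cases. NP-complete problems are NP-hard problems whose proposed solutions can also be checked quickly.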

- 3-SAT: A classic hard logic puzzle; used here to prove decoding is hard by building it into the code’s syndrome.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper establishes worst‑case NP‑hardness for exact minimum‑weight decoding in the 2D hexagonal colour code via a reduction from 3‑SAT. The following unresolved issues and open directions remain:

- Scope beyond the hexagonal (6.6.6) colour code

- Formal proofs for other 2D colour code lattices (e.g., 4.8.8, Union‑Jack, other trivalent tilings) are not provided; the text only “expects” generalization. Which lattice features (e.g., face degrees, 3‑colourability constraints) are necessary and sufficient for the reduction to go through?

- Extension to higher‑dimensional colour codes is not proven; which 3D and higher‑D variants admit analogous gadget constructions and with what complexity parameters?

- Noise models and syndrome extraction circuits

- Circuit‑level noise is claimed to inherit hardness “with minor caveats” that depend on the syndrome extraction circuit, but no explicit set of circuit conditions is given. What concrete circuit families and fault models guarantee the reduction’s validity (e.g., number of rounds, flagging schemes, hook error constraints)?

- For phenomenological noise (with measurement errors), a detailed 3D (space‑time) construction of gadgets is not spelled out. How to realize wire, clause, variable, and crossing gadgets robustly across time slices without increasing the decoding parameter (e.g., time diameter) beyond polynomial bounds?

- Mixed and correlated error channels (e.g., depolarizing, Y errors, biased noise, spatial/temporal correlations) are not treated explicitly. Under which correlations does NP‑hardness persist, and where does the construction break?

- Exact objective vs. practical decoding objectives

- The reduction targets exact minimum‑weight error set recovery. In quantum decoding, the practically relevant objective is often maximum‑likelihood decoding (most‑likely coset). Is maximum‑likelihood decoding (MLD) for the colour code also NP‑hard, and under which noise models?

- Degeneracy handling is not analyzed. What is the complexity of deciding the most‑likely equivalence class (coset) vs. a minimum‑weight representative, and how do these complexities differ for colour codes?

- Hardness of approximation and parity/logical inference

- Only exact minimum‑weight decoding is shown NP‑hard. Is it NP‑hard (or APX‑hard) to approximate the minimum weight within a constant or polylogarithmic factor? Are there additive‑error approximation guarantees that are tractable?

- The parity decision problem (“does the minimum‑weight correction flip the logical?”) is shown NP‑hard exactly. Is determining the logical effect under approximate decoding (e.g., within +1 weight slack) also hard? Are there efficient algorithms that decide logical parity under bounded approximation ratios?

- Average‑case and smoothed complexity

- The result is worst‑case; it remains unknown whether typical syndromes drawn from realistic noise are hard on average. For which syndrome distributions (e.g., i.i.d. depolarizing at p<p_threshold) is decoding average‑case hard, and is there evidence of a hardness threshold?

- Smoothed analysis: do small random perturbations to worst‑case gadget syndromes remain hard to decode?

- Tractable subfamilies and parameterized complexity

- The paper proves NP‑hardness with respect to the syndrome diameter D. Are there fixed‑parameter tractable (FPT) algorithms under alternative parameters (e.g., treewidth/pathwidth of the syndrome interaction graph, number of “clause gadgets,” defect density, or number of connected components)?

- Are there natural, practically relevant restrictions (e.g., no crossings, bounded clause degree, no “garbage collection” branches) under which exact decoding becomes polynomial‑time solvable?

- Gadget constructions and formal verification details

- Crossing‑wire gadget: full construction and correctness are deferred to an appendix; explicit properties (e.g., exact‑cover soundness/completeness, no unintended interactions, overhead in D) are not fully detailed in the main text. A formal specification and proof of correctness would strengthen the reduction.

- Garbage‑collection gadget: the “optional pink node” parity argument is informal. A complete proof that, for all upstream configurations, the gadget admits an exact cover if and only if global red‑parity is matched would remove ambiguity.

- Variable and duplicator gadgets: while figures illustrate behavior, a rigorous combinatorial proof that no unintended exact covers exist (beyond TRUE/FALSE states) is not provided in the excerpt. Formalizing uniqueness or characterizing all exact covers would close this gap.

- Boundary effects: gadgets are designed for “bulk” placement; explicit proofs that boundary proximity, corners, or finite‑size constraints do not introduce spurious covers or invalidate parity arguments are missing.

- Integration with realistic layouts and operations

- The impact of lattice surgery, code deformation, and time‑dependent boundary moves on decoding complexity is not analyzed. Are the corresponding decoding problems during surgery sequences also NP‑hard?

- Decoding with repeated rounds (streaming/online decoding) under phenomenological or circuit noise is not formally addressed. Does NP‑hardness persist for online decision versions (e.g., per‑round or sliding‑window decoding)?

- Relation to hypergraph matching and structural limits

- While general hypergraph matching is NP‑hard, the paper does not delineate the precise subclass of hypergraphs induced by colour code syndromes. Characterizing the boundary between tractable and intractable subclasses (e.g., 3‑uniform, bounded‑degree, planar) could guide algorithm design.

- Conditions under which MWPM‑based methods are provably optimal for colour codes (e.g., restricted syndrome structures without crossings or with tree‑like factor graphs) are not identified.

- Counting problems and stronger complexity classifications

- Counting the number of minimum‑weight solutions (or minimum‑weight cosets) is not explored. Is this #P‑hard for colour codes?

- Stronger hardness (e.g., under randomized reductions) and quantum complexity (BQP/QMA‑hardness for related decoding or inference tasks) remain open.

- Practical incidence of hard instances

- The construction uses bespoke gadget assemblies. It is unclear how frequently similar hard syndromes occur under realistic error processes at practical distances. Designing diagnostics to detect “gadget‑like” hard substructures in real syndromes is an open applied question.

- Quantitative overheads of the reduction

- While hardness is shown with respect to diameter D and O(D²) scaling is discussed, explicit constants, gadget densities, and the blow‑up from a 3‑SAT instance to a syndrome are not quantified. Tighter bounds would clarify reduction efficiency and inform benchmark design.

- Generalization to subsystem (gauge) colour codes

- The paper cites gauge colour codes but does not analyze whether decoding their stabilizer or gauge syndromes remains NP‑hard under analogous objectives and noise models.

- Incomplete sections in the provided text

- The “Combining the gadgets” section and parts of appendices (e.g., circuit‑level caveats, other colour codes, crossing‑wire details) are truncated in the provided content, preventing verification of key steps. A complete, formal presentation of those sections is needed for full reproducibility.

Practical Applications

Overview

This paper proves that exact minimum-weight decoding for 2D color codes is NP-hard—even under the simplest (code-capacity) noise model—and that deciding the logical parity of a minimum-weight correction is also NP-hard. These results have immediate implications for how quantum computing systems should be architected and how decoder research and products should be prioritized. They also introduce concrete gadget constructions that can be used to build worst-case hard syndrome instances, which are valuable for benchmarking and stress-testing decoders.

Below are practical applications grouped into immediate versus long-term opportunities, with sector links, feasible tools/products/workflows, and key assumptions or dependencies noted for each item.

Immediate Applications

The following can be acted on now without requiring major breakthroughs:

- Architecture and roadmap decisions for fault-tolerant quantum computing (hardware, software)

- Use color codes where transversal S and Hadamard gates reduce logical gate overhead, but plan for heuristic/approximate decoders rather than exact ones (hardware/software).

- Prefer surface codes for workloads requiring strict latency guarantees from polynomial-time exact decoding (MWPM), or when decoder determinism is paramount (hardware/software).

- Integrate decoder compute budgeting into system design (e.g., classical co-processors, GPUs/FPGAs) anticipating worst-case instances and fallback strategies (hardware/software).

- Assumptions/dependencies: P ≠ NP; NP-hardness is worst-case; color-code advantages still hold in practice; workload mix determines the optimal code choice.

- Decoder engineering: Benchmarking and stress-testing with hard instances (software, academia)

- Build a “Color-Code Decoding Benchmark Suite” using the paper’s gadget-based syndrome construction (variable/clause/wire/XOR/garbage-collection gadgets) to produce controlled, separated syndromes with known hardness (software).

- Evaluate decoder performance as a function of syndrome diameter D and separatedness; report accuracy/latency trade-offs and failure modes on structured hard cases (academia/software).

- Assumptions/dependencies: Syndrome generators faithfully instantiate gadget properties; real noise distributions produce typical cases easier than worst-case.

- Heuristic decoder development and deployment (software)

- Prioritize fast approximate decoders (belief propagation, machine learning, local greedy, hypergraph MWPM hybrids) and implement confidence scoring/calibration to drive correction acceptance criteria (software).

- Introduce “diameter-aware” local decoders that exploit syndrome structure to bound search regions and provide early exits under latency constraints (software).

- Assumptions/dependencies: Training covers both typical and adversarial (gadget-generated) instances; latency targets align with hardware cycles; approximate methods meet logical error suppression targets.

- Runtime workflows and failover in QEC pipelines (software, hardware)

- Implement multi-tier decoding workflows: quick approximate pass; fallback to stronger heuristics; final resort to bounded-depth search if confidence is low (software/hardware).

- Add parity decision “soft voting” with calibrated error bars, acknowledging that the logical parity of a minimum-weight correction is NP-hard to decide exactly (software).

- Assumptions/dependencies: System can tolerate probabilistic correction under managed risk; confidence metrics are well-calibrated.

- Resource estimation and cost modeling tools (software)

- Update QEC resource estimators to reflect NP-hard worst-case decoding costs for color codes; expose trade-offs between transversal-gate benefits and decoder complexity (software).

- Include syndrome diameter distributions, decoder latency budgets, and expected classical compute footprint in architectural planning (software/hardware).

- Assumptions/dependencies: Accurate workload and noise models; practical diameter statistics; stable decoder performance profiles.

- Standards, procurement, and funding guidance (policy, industry consortia)

- Encourage standards/specs to report decoder performance on both average-case and adversarial benchmark sets; include syndrome diameter and separatedness metrics (policy/industry).

- Align funding calls toward heuristic/approximate decoders, benchmark datasets, and classical accelerator integration rather than exact decoders (policy).

- Assumptions/dependencies: Community adoption of benchmark suites; clarity on performance KPIs and reporting formats.

- Curriculum and training (academia, education)

- Integrate the NP-hardness results into QEC courses to temper expectations and emphasize decoder design under complexity constraints; use gadget constructions to teach reductions and benchmarking (academia).

- Assumptions/dependencies: Educational materials and open-source syndrome generators are available.
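
The multi-tier decoding workflow listed above could be organised roughly as in the following Python sketch. Everything here is an assumption made for illustration: the decoder interface (a function returning a correction and a confidence score), the threshold value, and the two toy decoders, which stand in for real heuristic decoders and do not decode anything.

```python
from typing import Callable, Optional, Sequence, Set, Tuple

Result = Tuple[Optional[Set[int]], float]    # (correction, confidence in [0, 1])
Decoder = Callable[[Sequence[int]], Result]  # hypothetical decoder interface

def tiered_decode(syndrome: Sequence[int],
                  tiers: Sequence[Decoder],
                  min_confidence: float = 0.9) -> Result:
    """Run decoders from cheapest to strongest; stop at the first confident one.

    If no tier reaches `min_confidence`, return the most confident attempt.
    With no tiers at all, the result is (None, -1.0).
    """
    best: Result = (None, -1.0)
    for decoder in tiers:
        correction, confidence = decoder(syndrome)
        if confidence >= min_confidence:
            return correction, confidence
        if confidence > best[1]:
            best = (correction, confidence)
    return best

# Toy stand-ins with fixed outputs, for illustration only.
def quick_pass(syndrome: Sequence[int]) -> Result:
    return set(), 0.5

def stronger_heuristic(syndrome: Sequence[int]) -> Result:
    return {2}, 0.95

print(tiered_decode((1, 1, 0), [quick_pass, stronger_heuristic]))  # ({2}, 0.95)
```

Returning the best low-confidence attempt rather than failing is one possible design choice; a real pipeline might instead flag the round for a slower bounded-depth search.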

Long-Term Applications

These require additional research, scaling, or development:

- Provably-good approximation algorithms and guarantees (academia, software)

- Develop approximation algorithms with formal bounds (e.g., performance guarantees on restricted syndrome classes, average-case or smoothed analyses) for color-code decoding (academia/software).

- Assumptions/dependencies: Identification of tractable subclasses (e.g., bounded treewidth regions, structured noise), meaningful practical guarantees.

- Code/hardware co-design to mitigate decoding hardness (hardware, academia)

- Explore modified color codes, subsystem/gauge-fixing variants, or tailored boundaries/connectivity that reduce decoder complexity while preserving transversal gate benefits (hardware/academia).

- Leverage the paper’s parity constraints and separated-syndrome insights to architect decoder-friendly layouts (hardware/academia).

- Assumptions/dependencies: Manufacturable layouts; acceptable trade-offs in memory footprint and thresholds; compatibility with device constraints.

- Specialized decoder accelerators (ASICs/FPGAs/GPUs) (hardware, software)

- Design hardware accelerators for heuristic decoders (e.g., belief propagation kernels, ML inference, local-search primitives), with streaming support and tight latency budgets (hardware/software).

- Assumptions/dependencies: Stable decoder algorithms amenable to acceleration; power/latency budgets; toolchain support.

- Adaptive/hybrid QEC strategies (software, hardware)

- Combine codes dynamically: use color codes where transversal gates dominate costs and switch to surface codes for segments with tight decoding latency constraints (software/hardware).

- Integrate real-time workload-aware code selection and reconfiguration (software).

- Assumptions/dependencies: Reliable code-switching protocols; overheads don’t negate benefits; robust syndrome translation mechanisms.

- Certification and compliance frameworks for decoders (policy, industry)

- Establish certification processes defining acceptable risk profiles, reporting of worst-case vs typical error suppression, and confidence calibration for parity decisions (policy/industry).

- Assumptions/dependencies: Community consensus on metrics; reproducible benchmarks; regulators and customers understand probabilistic guarantees.

- End-to-end compiler/runtime optimization leveraging transversal gates (software)

- Build compilers and schedulers that exploit color-code transversal S/H gates to reduce circuit depth and magic-state overhead, while co-optimizing with heuristic decoder latency budgets (software).

- Assumptions/dependencies: Accurate models of decoder latency vs workload; robust integration with resource estimators; stable transversal gate implementations.

- Extended hardness results and cross-code analyses (academia)

- Generalize gadget-based reductions to other 2D/3D color codes and subsystem codes; map where efficient exact decoding is provably infeasible; inform long-term code choices (academia).

- Assumptions/dependencies: Careful adaptation to different lattices/boundaries; noise model generalization.

- Data and IP ecosystems for decoders (industry, policy)

- Create open repositories of hard/typical syndromes and standardized evaluation harnesses; define IP/licensing models that encourage innovation in heuristic decoders (industry/policy).

- Assumptions/dependencies: Community collaboration; sustainable funding; fair-use and benchmarking standards.

Notes on Assumptions and Dependencies Across Applications

- Complexity assumption: P ≠ NP; results are worst-case, not typical-case.

- Noise models: Proofs are given under the code-capacity model with independent, equally likely X errors; the arguments extend to weighted and phenomenological noise and strongly suggest hardness under circuit-level noise, where the details of the syndrome extraction circuit matter.

- Syndrome structure: Hard instances are constructed and separated; practical noise may produce more benign syndromes—benchmarks should include both.

- Hardware constraints: Classical decoding latency and power budgets are critical; transversal gate benefits must outweigh decoding complexity costs in practice.

- Confidence and risk: Approximate decoders require calibrated confidence measures and acceptance thresholds suitable for target logical error rates.

- Scalability: Tools/products must scale to utility-level qubit counts; streaming and accelerator support will be key.

Glossary

- anti-commutes: In quantum mechanics, two operators anti-commute if applying them in different orders flips the sign; here, errors trigger checks by anti-commuting with stabilizers. "A Pauli error on a bulk data qubit (the yellow star in the centre of the picture) anti-commutes with all three stabiliser checks acting on that qubit"

- Belief propagation: A message-passing inference algorithm used as a heuristic decoder in error-correcting codes. "Promising numerics have been observed with heuristic decoders based on both belief propagation~\cite{koutsioumpasColourCodesReach2025}"

- Blossom algorithm: Edmonds’ algorithm for solving minimum weight perfect matching in polynomial time on general graphs. "Minimum Weight Perfect Matching decoder (MWPM) based on Edmond's Blossom algorithm~\cite{edmondsPathsTreesFlowers1965}"

- clause gadget: A constructed set of defects that models a 3-SAT clause, having an exact cover if and only if at least one input is TRUE. "The clause gadget has three `input' link nodes."

- circuit level noise: A noise model that includes errors occurring during gates, measurements, and idle periods in the actual quantum circuits. "such as weighted models or phenomenological noise, and strongly suggests the same for circuit level noise."

- code distance: The minimum weight of a nontrivial logical operator; a code of distance d can correct all errors up to weight ⌊(d−1)/2⌋. "for a code of distance d an exact minimum weight decoder will correct all errors up to weight […]"

- code-capacity model: A simplified noise model with only independent, equally likely data-qubit errors and no measurement errors. "we will work in the simplest possible noise model (the code-capacity model)"

- colour code: A topological stabiliser code on a tricolorable lattice, notable for transversal implementations of certain logical gates. "the colour code which has broad applicability across all qubit types"

- crossing wire gadget: A construction that allows wires to cross in a planar reduction when building syndrome gadgets. "variable gadgets and crossing wire gadgets (see Section~\ref{s:crossing-wire} in the Appendix)"

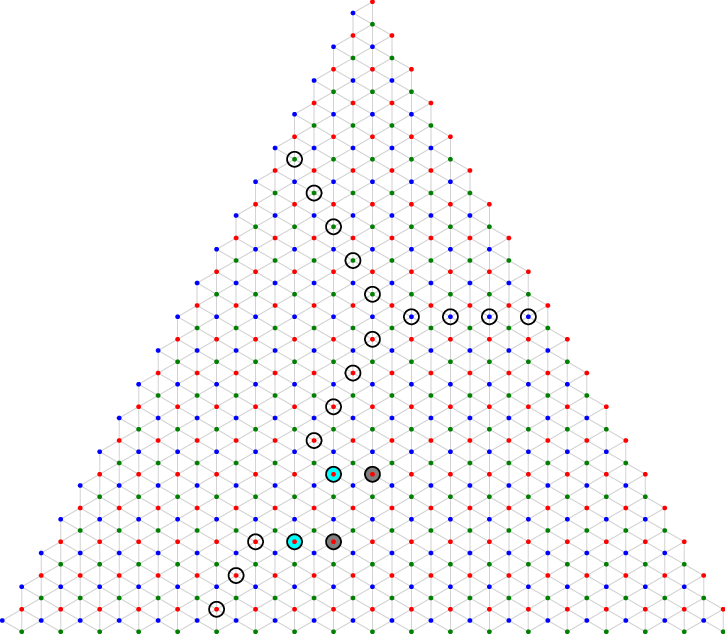

- dual lattice: The lattice whose nodes correspond to checks and faces correspond to data qubits, used for decoding. "For decoding we work on the dual lattice."

- dual lattice graph: The graph structure induced by the dual lattice used to measure distances between defects. "A syndrome is separated if it does not contain any pair of defects at distance one (measured on the dual lattice graph) from each other."

- duplicator subgadget: A subgadget that ensures multiple red link nodes share the same TRUE/FALSE state. "We call this a duplicator subgadget."

- error cluster: The union of all error components meeting a gadget’s defects; a gadget is exactly covered if its cluster size equals the number of defects it meets. "we define the error cluster of a gadget to be the union of all error components meeting any defect of the gadget."

- error component: A connected set of errors where two errors are connected if they share a vertex in the dual lattice. "Given an error-set define an error component to be a connected set of errors (where two errors are connected if they share a vertex in the dual lattice)."

- exact cover: An error-set that both generates the syndrome and uses exactly as many errors as there are defects. "An error-set E is an exact cover of a syndrome S if the number of errors in E is equal to the number of defects in S (i.e., |E| = |S|) and E generates the syndrome S."

- fold transversality: A technique enabling transversal-like logical gates by increasing connectivity or folding the layout. "or increased connectivity to enable fold transversality~\cite{moussaTransversalCliffordGates2016,chenTransversalLogicalClifford2024}."

- garbage collection gadget: A gadget that “cleans up” unused links and parity constraints so an exact cover of the whole syndrome exists. "The garbage collection gadget allows us to extend any exact cover of the variables, wires and clauses to an exact cover of the whole syndrome."

- hypergraph matching: Matching in hypergraphs (edges connecting multiple vertices), which is computationally hard. "whilst general hypergraph matching is NP-hard~\cite{karpReducibilityCombinatorialProblems1972}"

- lattice surgery: A method to implement logical operations by merging and splitting code patches via repeated syndrome measurements. "syndrome extraction to implement the necessary lattice surgery protocols~\cite{geherErrorcorrectedHadamardGate2024, gidneyInplaceAccessSurface2024}"

- link node: A designated defect node used to connect gadgets; TRUE if matched to its partner in another gadget. "we will designate a small number of its defects as link nodes."

- logical operator: An operator that acts on the encoded (logical) degree of freedom, possibly flipping the logical state. "We take a weight logical operator and partition it into two pieces"

- logical qubit: The encoded qubit represented by a subspace of multiple physical qubits in a quantum code. "logical qubits are encoded in a larger number of physical qubits."

- Minimum Weight Decoding: Finding the most likely physical error consistent with a given syndrome. "Minimum weight decoding involves finding the most likely physical error consistent with the measurements."

- Minimum Weight Perfect Matching (MWPM): A polynomial-time decoder for the surface code that finds a minimum weight matching of defects. "Minimum Weight Perfect Matching decoder (MWPM) based on Edmond's Blossom algorithm"

- Pauli error: A single-qubit error from the Pauli set (X, Y, Z); X-errors trigger Z-checks in this context. "A Pauli error on a bulk data qubit (the yellow star in the centre of the picture) anti-commutes with all three stabiliser checks"

- phenomenological noise: A simplified time-evolving noise model incorporating both data and measurement errors. "such as weighted models or phenomenological noise, and strongly suggests the same for circuit level noise."

- stabiliser checks: Measured parity constraints (stabilizers) whose outcomes indicate defects when they anti-commute with errors. "stabiliser checks acting on that qubit"

- stabiliser code: A quantum error-correcting code defined by a commuting set of stabilizer generators. "can be defined as a stabiliser code as follows."

- surface code: A leading planar topological stabiliser code implementable with nearest-neighbour hardware. "A prominent error correction code is the surface code~\cite{kitaevQuantumComputationsAlgorithms1997,fowlerSurfaceCodesPractical2012}"

- syndrome: The pattern of triggered checks (defects) produced by errors; used by the decoder. "The syndrome is the state of all the checks."

- syndrome diameter: The maximum dual-lattice distance between any two defects in a syndrome. "with respect to the diameter of the syndrome"

- syndrome extraction: The repeated measurement of stabiliser checks to gather error information. "O(d) rounds of syndrome extraction to implement the necessary lattice surgery protocols"

- transversal: A property where a logical gate is implemented by applying single-qubit gates in parallel to all data qubits. "Notably, logical~S and Hadamard gates are transversal in the colour code"

- variable gadget: A gadget with two exact covers (TRUE/FALSE) and multiple outputs reflecting the variable and its negation. "The variable gadget has two possible exact covers which we call TRUE and FALSE."

- wire gadget: A gadget that propagates a TRUE/FALSE state between two link nodes so they share the same state. "The wire gadget has two link nodes."

- XOR-subgadget: A subgadget whose output state is the XOR of its two input link states. "The garbage collection gadget and its component XOR-subgadget"

- X-error: A Pauli X (bit-flip) error; in the code-capacity model only X-errors occur. "the only errors are X-errors"

- Z-check: A Z-type stabiliser measurement that detects X-type errors; checks are triggered by an odd number of errors. "since we are only considering X-errors, these are Z-checks."
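
Several of the glossary terms (syndrome, exact cover, error component) fit together in a short Python sketch. It assumes each error is a triangle of the dual lattice, stored as a `frozenset` of its three corner sites in axial coordinates; that representation and the function names are illustrative assumptions, and boundaries are ignored.

```python
from collections import Counter

def generated_syndrome(errors):
    """Sites touched by an odd number of error triangles become defects."""
    counts = Counter(site for triangle in errors for site in triangle)
    return {site for site, c in counts.items() if c % 2 == 1}

def is_exact_cover(errors, defects):
    """As many errors as defects, and the errors generate exactly those defects."""
    errors, defects = set(errors), set(defects)
    return len(errors) == len(defects) and generated_syndrome(errors) == defects

def error_components(errors):
    """Group errors into connected sets; two errors connect if they share a site."""
    remaining = set(errors)
    components = []
    while remaining:
        stack = [remaining.pop()]
        component = set(stack)
        while stack:
            triangle = stack.pop()
            linked = {other for other in remaining if triangle & other}
            remaining -= linked
            component |= linked
            stack.extend(linked)
        components.append(component)
    return components

# Two triangles sharing an edge: the shared corners cancel, leaving two defects.
t1 = frozenset({(0, 0), (1, 0), (0, 1)})
t2 = frozenset({(1, 0), (0, 1), (1, 1)})
t3 = frozenset({(5, 5), (6, 5), (5, 6)})  # a far-away, unrelated error

print(generated_syndrome([t1, t2]) == {(0, 0), (1, 1)})  # True
print(is_exact_cover([t1, t2], {(0, 0), (1, 1)}))        # True
print(len(error_components([t1, t2, t3])))               # 2
```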
