- The paper outlines a comprehensive co-design strategy that integrates AI algorithms and hardware innovations to achieve a 1000× improvement in energy efficiency by 2035.

- It details a multi-layered approach combining advances in hardware, algorithmic sparsity, and application-specific constraints to overcome the inefficiencies of brute-force scaling.

- The roadmap advocates for cross-sector collaboration among academia, industry, and government, emphasizing standardized benchmarks and reproducibility to drive sustainable AI progress.

AI+HW 2035: Shaping the Next Decade – An Expert Analysis

Executive Vision and Motivation

This position paper presents a 10-year roadmap arguing that deep co-design and co-evolution of AI and hardware (HW) is the path to exponential scaling of intelligence per joule, and setting out how to pursue it. The rapid escalation in model size, data demands, and compute requirements has created unsustainable energy and infrastructure burdens, laying bare inherent inefficiencies in the prevailing compute-centric paradigm. The authors assert that future progress hinges on integrated research spanning materials science to systems, coupling AI algorithmic advances with radical hardware innovation and cross-layer optimization.

The central thesis is that achieving a 1000× efficiency improvement in AI training and inference by 2035 is both viable and necessary for sustainable, scalable, and globally impactful AI systems. The paper calls for a shift away from the brute-force scaling that has dominated recent progress, instead proposing energy-aware, memory-centric designs with continuous algorithm–hardware feedback and adaptation.
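A 1000× target is easier to reason about as a compound rate. The sketch below assumes a ten-year horizon and assumes that gains from different layers are independent and therefore multiply. Under those assumptions, the target requires efficiency to roughly double every year. The 10×/10×/10× split across layers is a hypothetical illustration, not the paper's allocation.

```python
import math

TARGET_GAIN = 1000.0  # end-to-end efficiency target stated in the roadmap
YEARS = 10            # assumption: roughly a 2025-2035 horizon


def required_annual_factor(target: float, years: int) -> float:
    """Constant yearly multiplier g such that g ** years == target."""
    return target ** (1.0 / years)


def compose_gains(gains: dict[str, float]) -> float:
    """Multiply per-layer gains; assumes the layers' savings are independent."""
    return math.prod(gains.values())


if __name__ == "__main__":
    g = required_annual_factor(TARGET_GAIN, YEARS)
    print(f"required improvement per year: {g:.3f}x")

    # HYPOTHETICAL split across layers, for illustration only (not from the paper).
    split = {"hardware": 10.0, "algorithms": 10.0, "application/system": 10.0}
    print(f"composed gain: {compose_gains(split):.0f}x")
```

The multiplicative framing shows why the roadmap stresses coordination across layers. Because the layer gains multiply, a shortfall in one layer has to be made up by the others.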

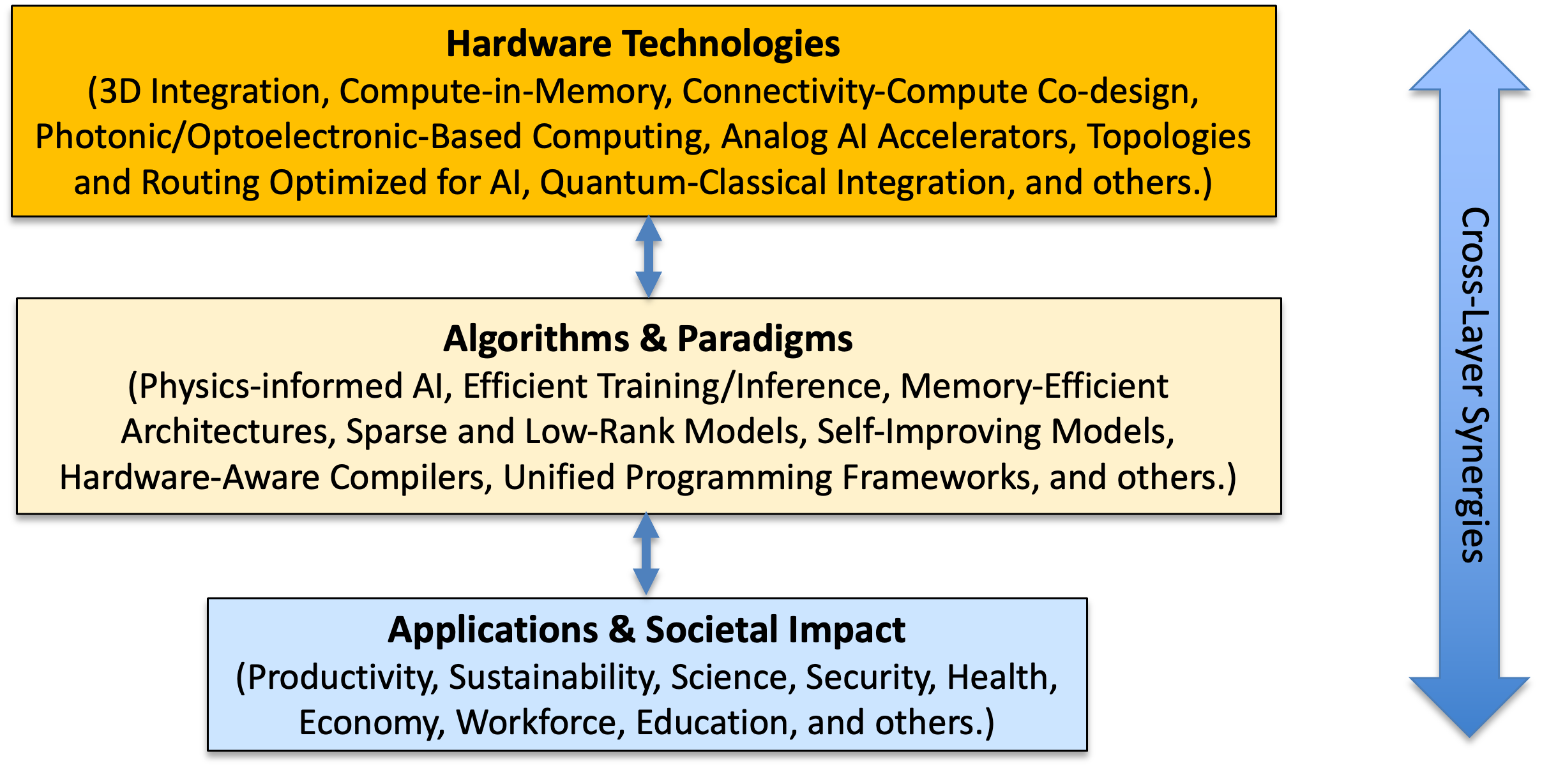

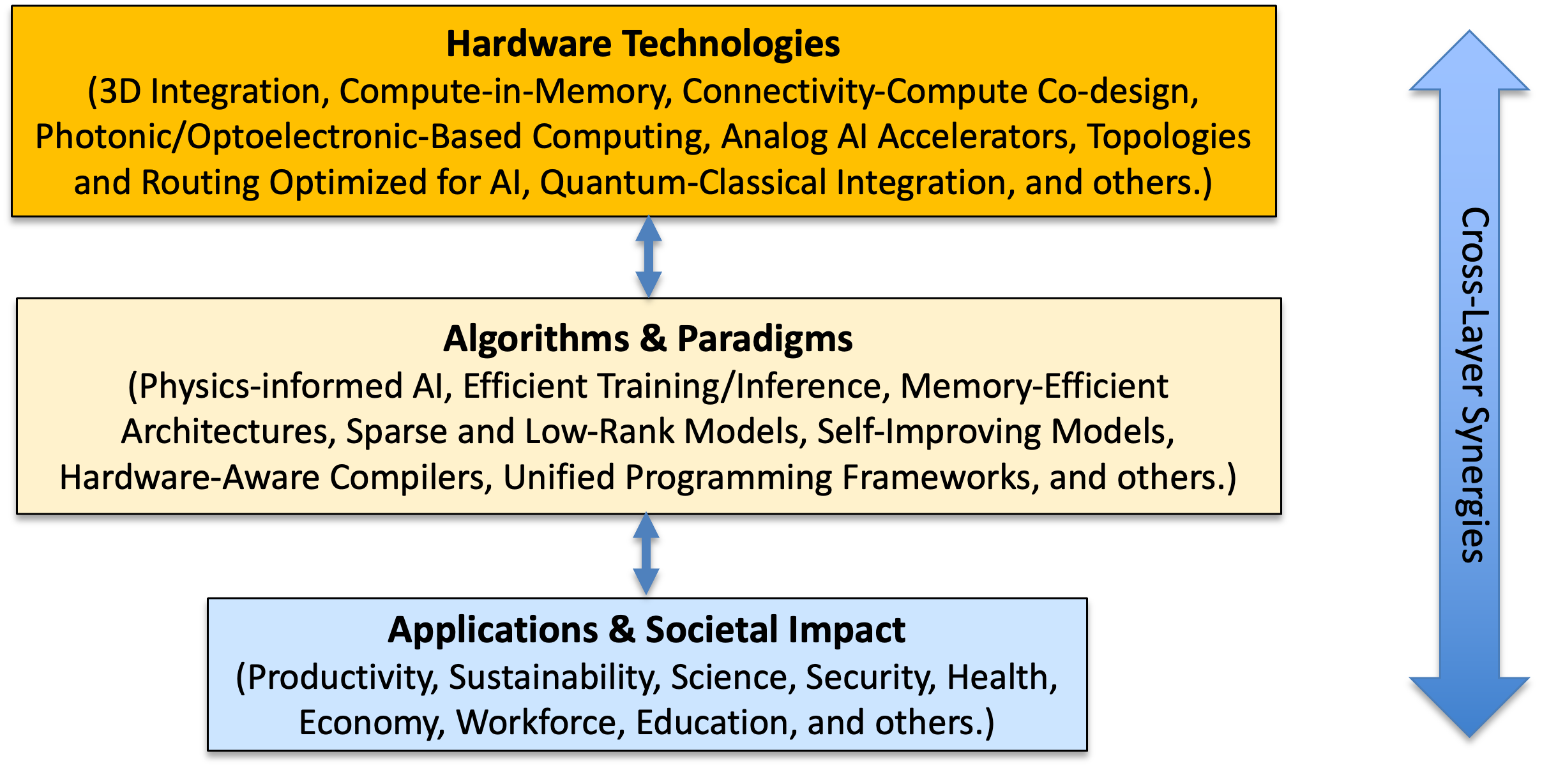

Figure 1: Multi-layered AI+HW co-design vision encapsulating hardware, algorithms, and applications in a synergistic feedback loop.

The Multi-Layered Co-Design Paradigm

The vision is formalized as a three-layer abstraction (hardware technologies, AI algorithms/paradigms, and applications). Each layer contributes new capabilities and constraints, and, critically, all layers must evolve in concert.

- Hardware Layer: Innovation domains include 3D monolithic integration, memory-centric and compute-in-memory architectures, analog/photonic accelerators, and heterogeneous packaging. The bottleneck has decisively shifted from compute throughput to data movement, connectivity, and system-level integration.

- Algorithm Layer: Prioritizes low-complexity, high-quality, hardware-aware models supporting redundancy reduction, low-rank/low-precision execution, algorithmic sparsity, quantization, and composability. The aim is to maximize efficiency without compromising model performance, facilitating real-time and edge deployment.

- Application Layer: Imposes stringent requirements (robustness, energy, latency, trustworthiness, cost) that force high-level task requirements and hardware constraints to converge. The success metric shifts to "intelligence per joule," realigning the field’s incentives towards sustainable, deployable systems.
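The low-precision item in the algorithm layer can be made concrete. The sketch below assumes symmetric per-tensor int8 quantization, which is one common scheme; the paper does not prescribe one. It shows the 4× storage reduction relative to fp32 and the per-weight error bound of half the scale factor. Real deployments typically use per-channel scales and calibration data instead of a single tensor-wide scale.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: one scale maps floats to [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # round() gives the nearest integer code; the clamp guards float edge cases.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats; per-weight error is at most scale / 2."""
    return [c * scale for c in codes]


def storage_bytes(n_weights: int, bits: int) -> int:
    """Bytes needed to store n_weights values at the given bit width."""
    return n_weights * bits // 8


if __name__ == "__main__":
    w = [0.42, -1.30, 0.07, 0.99, -0.55, 1.27]
    q, s = quantize_int8(w)
    w_hat = dequantize(q, s)
    max_err = max(abs(a - b) for a, b in zip(w, w_hat))
    print("int8 codes:", q)
    print(f"max abs error {max_err:.4f} <= scale/2 = {s / 2:.4f}")
    print("fp32:", storage_bytes(len(w), 32), "bytes; int8:", storage_bytes(len(w), 8), "bytes")
```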

The authors advocate for "cross-layer co-design," where algorithm development, system software, and hardware advancements are tightly coupled. For instance, memory-centric algorithmic patterns should inform 3D integration strategies, and analog/photonic computation must be harnessed via error-compensating, noise-tolerant models.

Trends in AI Scaling and Efficiency

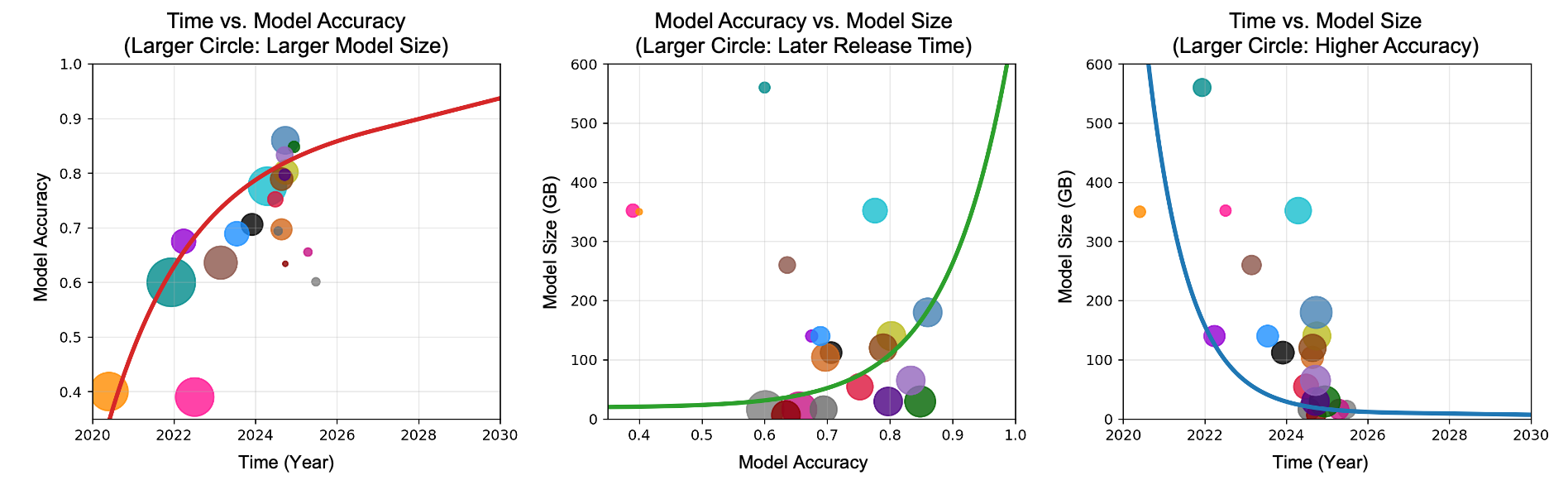

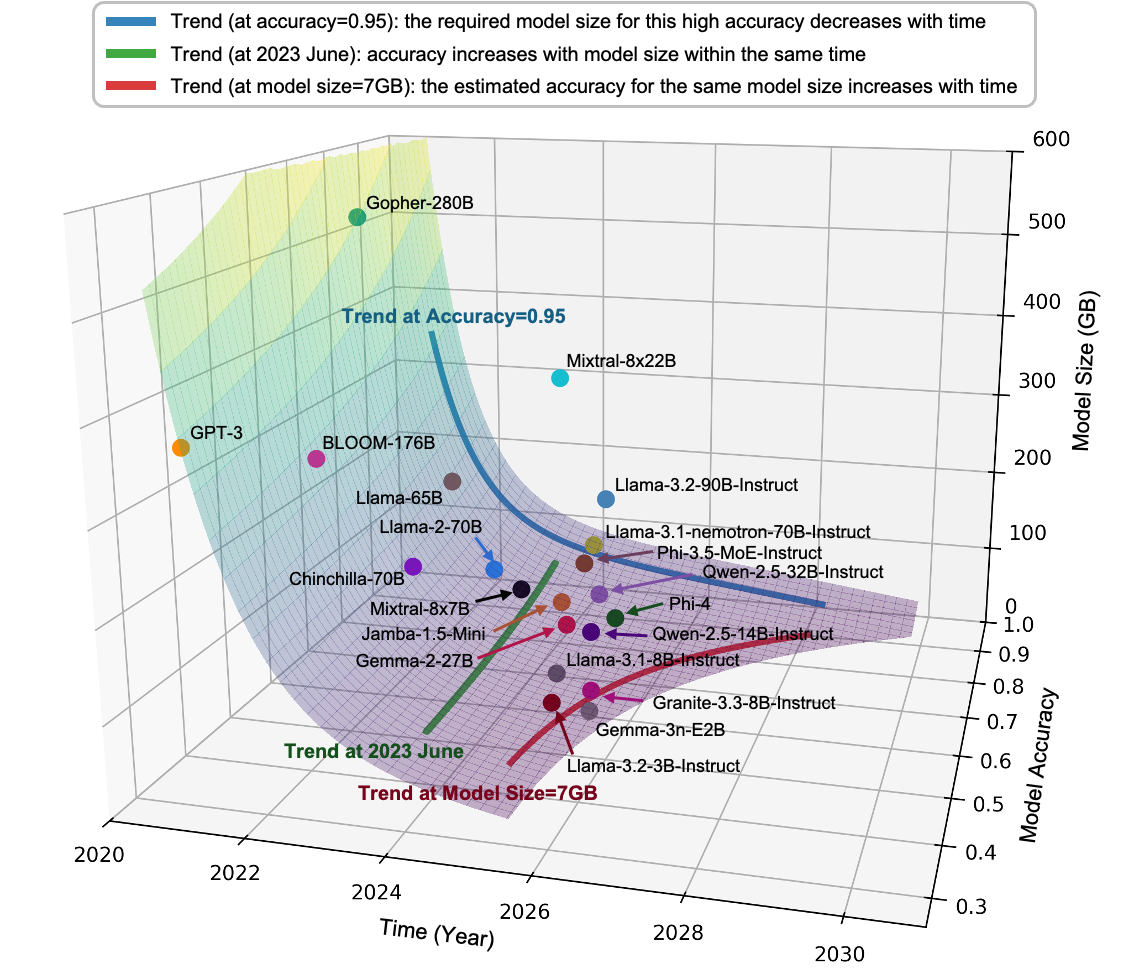

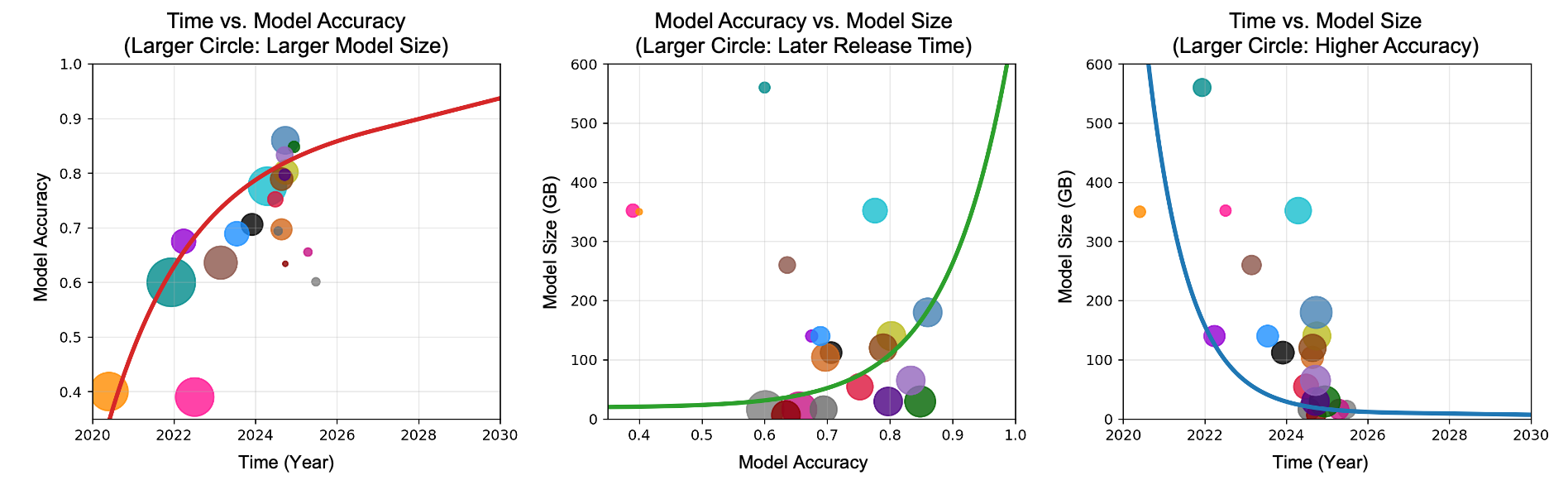

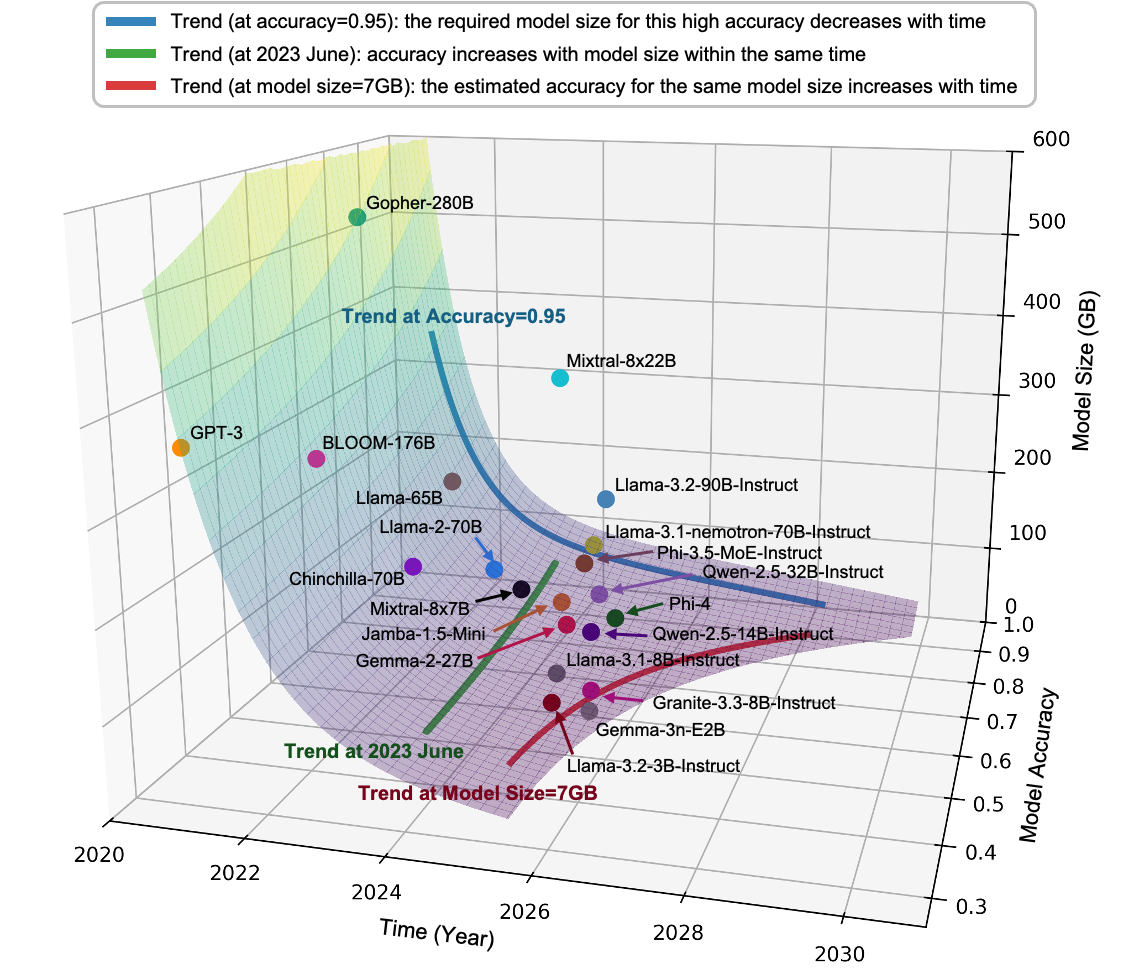

A key analytical contribution is the multi-dimensional modeling of AI system evolution, incorporating model size, accuracy, and temporal trends. The analysis draws on contemporary empirical data and is projected to 2030. It shows that accuracy has historically tracked model size, but recent efficiency gains increasingly come from algorithmic and architectural innovation, weakening the direct link between parameter count and capability.

Figure 2: Two-dimensional relationships among model size, accuracy, and time, highlighting evolving efficiency frontiers.

Figure 3: Three-dimensional trajectory showing joint evolution of model size, accuracy, and time, elucidating non-linear scaling trends and efficiency breakpoints.

The data imply an inflection point where scaling strategies pivot: smaller, domain-specialized models, co-designed with advanced hardware, will deliver the most capability per unit of energy and cost, especially in edge and physical AI deployments. The authors predict that by 2035, most real-world inference will occur on highly efficient small models, relegating massive foundation models to synthesis and orchestration roles in the cloud.

Hardware Innovation: Opportunities and Constraints

The paper details the emerging ecosystem of AI hardware, foregrounding several crucial trends and challenges:

- System-Level Constraints: Power delivery, cooling, and data movement eclipse chip-level computation as limiting factors.

- Integration Density: Advances such as dense 3D stacking compress the distance between logic and memory, enabling novel dataflows and reducing latency/energy.

- Connectivity: Optical and photonic interconnects are identified as transformative for bandwidth and energy scaling, but integration and software support remain non-trivial.

- Adaptive Hardware: The disparity in innovation speed between AI algorithms and silicon fabrication can only be mitigated via programmability, reconfigurability, and AI-in-the-loop hardware design automation.
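The claim that data movement, not arithmetic, sets the limit can be checked with a first-order model: energy equals MACs times energy per MAC, plus bytes moved times energy per byte. In the sketch below, the per-MAC and per-byte energies are hypothetical placeholders chosen for illustration only; they are not taken from the paper or from any process node. The example shows why shortening the logic-to-memory path, for instance through 3D stacking, matters most for low-reuse workloads where each weight is fetched once per use.

```python
from dataclasses import dataclass


@dataclass
class EnergyModel:
    """First-order model: E = MACs * e_mac + bytes_moved * e_byte (picojoules)."""
    pj_per_mac: float    # energy per multiply-accumulate (HYPOTHETICAL value below)
    pj_per_byte: float   # energy to move one byte from memory to compute (HYPOTHETICAL)

    def energy_pj(self, macs: int, bytes_moved: int) -> float:
        return macs * self.pj_per_mac + bytes_moved * self.pj_per_byte

    def movement_share(self, macs: int, bytes_moved: int) -> float:
        """Fraction of total energy spent on data movement."""
        return bytes_moved * self.pj_per_byte / self.energy_pj(macs, bytes_moved)


if __name__ == "__main__":
    # Hypothetical matrix-vector workload (e.g. small-batch decoding):
    # each int8 weight is read once and used in exactly one MAC.
    n_weights = 1_000_000
    macs, bytes_moved = n_weights, n_weights

    off_chip = EnergyModel(pj_per_mac=0.2, pj_per_byte=20.0)  # placeholder: off-package DRAM
    stacked = EnergyModel(pj_per_mac=0.2, pj_per_byte=2.0)    # placeholder: 3D-stacked memory

    for name, m in [("off-chip", off_chip), ("3D-stacked", stacked)]:
        print(f"{name}: {m.energy_pj(macs, bytes_moved) / 1e6:.1f} uJ, "
              f"movement share {m.movement_share(macs, bytes_moved):.0%}")
```

Raising the number of MACs per byte fetched, through data reuse such as larger batches or better dataflows, lowers the movement share. This is the other lever that memory-centric architectures aim to exploit.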

The analysis is forthright about the difficulties in analog/photonic system robustness, yield in 3D integration, and persistent fragmentation in software stacks. The roadmap emphasizes error-compensating algorithms, modular interfaces, community-driven standards, and broadening access to design/test infrastructure as immediate research and policy imperatives.

Algorithms and Paradigms: Toward Efficient, Agentic AI

Algorithmic progress is posited as being as critical to sustainability as hardware scaling. Major research frontiers include:

- Model Compression and Specialization: Methods such as pruning, quantization, distillation, and hierarchical decompositions are forecasted to enable 10–100× reductions in model size while maintaining utility for well-chosen domains.

- Beyond Attention: While transformer architectures have dominated, the authors challenge the universality of attention, advocating greater exploration of state-space models (SSMs), convolutional inductive biases, and physical priors.

- Self-Optimizing, Agentic Systems: The future of AI for both design automation and deployment is envisioned as a self-optimizing pipeline, with models dynamically compiling themselves for diverse, heterogeneous hardware substrates.

- Human–AI Interaction (HAI): The orchestration of multi-agent, multi-model pipelines is highlighted as a high-priority area for making complex systems responsive to human intent and requirement verification.
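The forecast of 10 to 100× size reductions relies on compression techniques composing with one another. The sketch below assumes simple global magnitude pruning, which is one method among several the roadmap lists, followed by int8 storage of the surviving weights. It computes the resulting compression ratio. The per-nonzero index cost is a hypothetical parameter standing in for whatever sparse format a real system would use. The ratio says nothing about accuracy, which must be measured separately.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero the smallest-magnitude fraction `sparsity` of the weights."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to zero out
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:k]:
        pruned[i] = 0.0
    return pruned


def compression_ratio(sparsity: float, base_bits: int = 32, q_bits: int = 8,
                      index_bits: int = 0) -> float:
    """Dense base_bits storage vs. nonzeros kept at q_bits plus a per-nonzero index cost."""
    kept_fraction = 1.0 - sparsity
    return base_bits / (kept_fraction * (q_bits + index_bits))


if __name__ == "__main__":
    w = [0.5, -0.01, 0.3, 0.002, -0.8, 0.04, 0.0, 0.2, -0.05, 0.6]
    print(magnitude_prune(w, 0.5))
    # 90% sparsity + int8, ignoring index overhead: 32 / (0.1 * 8) = 40x
    print(f"{compression_ratio(0.9):.0f}x")
    # Same, with a HYPOTHETICAL 8-bit index per nonzero: 32 / (0.1 * 16) = 20x
    print(f"{compression_ratio(0.9, index_bits=8):.0f}x")
```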

Deployment, Energy Crisis, and Societal Implications

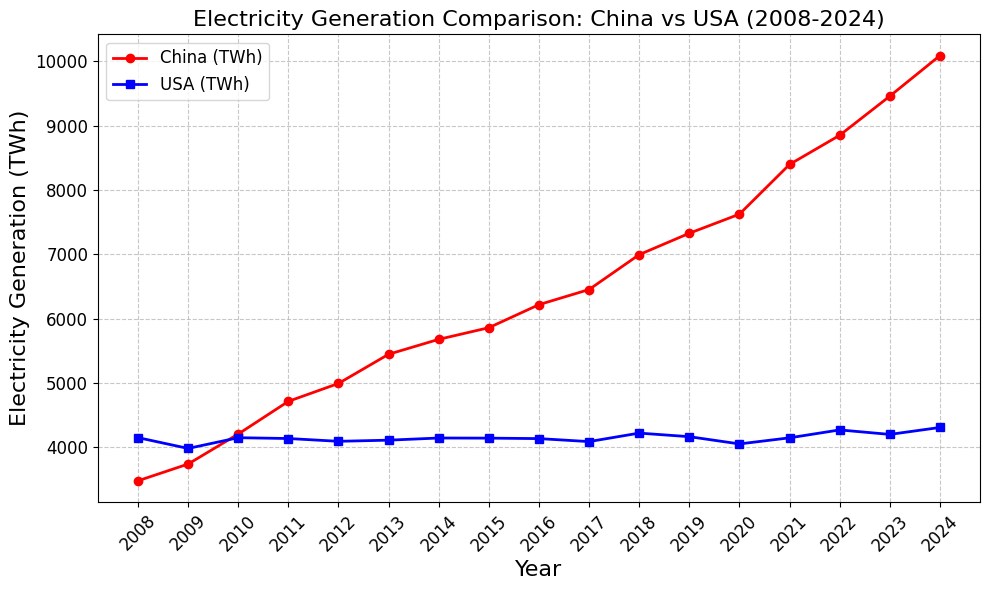

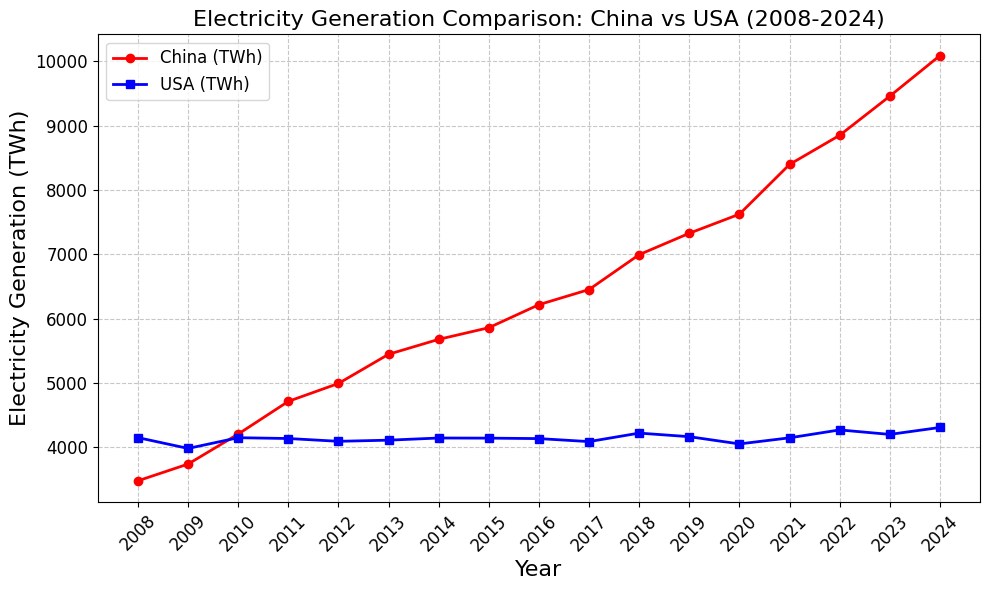

An incisive section addresses the infrastructural bottlenecks, focusing particularly on the looming datacenter power crisis and global disparities in hardware capacity. The authors forecast U.S. datacenter power constraints reaching a tipping point within five years absent major investments in generation and grid infrastructure, noting China’s comparative advantage in this domain.

Figure 4: Comparative charting of electricity sectors in China and the United States underscoring global infrastructure asymmetries.

Key application-level predictions include:

- A shift from hyperscale, cloud-only deployment to edge-optimized, energy-aware models and hardware.

- An increasing role for academia in foundational research, open-source ecosystem building, and independent validation, balanced with industrial scale in deployment and government intervention in infrastructure and access.

- Strong advocacy for standardized benchmarks and transparency in multi-metric reporting (latency, energy, utilization, robustness) to drive rational, reproducible progress.
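Multi-metric reporting is easier to enforce when every result carries the same fields. The sketch below is a hypothetical record format. Its field names and its robustness definition (accuracy drop under a declared perturbation) are assumptions, not a schema from the paper or from any existing benchmark suite. It keeps latency, energy, utilization, and robustness together and derives energy per inference, so a result cannot easily be reported on one axis alone.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class BenchmarkResult:
    """One run of one workload on one system; field names are illustrative."""
    system: str
    workload: str
    inferences: int
    latency_p99_ms: float       # tail latency, not just the mean
    energy_j: float             # total energy for the run; report where it was measured
    utilization: float          # achieved / peak compute, in [0, 1]
    accuracy_clean: float
    accuracy_perturbed: float   # same task under a declared perturbation

    def __post_init__(self) -> None:
        if self.inferences <= 0:
            raise ValueError("inferences must be positive")
        if not 0.0 <= self.utilization <= 1.0:
            raise ValueError("utilization must be in [0, 1]")

    def energy_per_inference_j(self) -> float:
        return self.energy_j / self.inferences

    def robustness_drop(self) -> float:
        """Accuracy lost under perturbation; smaller means more robust."""
        return self.accuracy_clean - self.accuracy_perturbed

    def to_json(self) -> str:
        """Serialize raw fields plus derived metrics so they are reported together."""
        record = asdict(self)
        record["energy_per_inference_j"] = self.energy_per_inference_j()
        record["robustness_drop"] = self.robustness_drop()
        return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    r = BenchmarkResult("edge-npu-a", "image-cls", 1000, 8.5, 50.0, 0.42, 0.91, 0.85)
    print(r.to_json())
```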

Action Items and Success Metrics

Concrete recommendations articulate division of labor and coordination across academia, industry, and government:

- Academia: Interdisciplinary research, open testbeds, development of curriculum spanning HW–SW–AI boundaries, and a shift toward holistic, system-level metrics.

- Industry: Prioritizing cross-layer co-design, investment in shared infrastructure, and production-scale adoption of AI-driven design flows.

- Government: Funding long-horizon research (especially in 3D, photonic, analog, quantum systems), supporting public infrastructure, aligning policy incentives, facilitating global talent acquisition, and fostering standards.

- Community: Emphasis on reproducibility, openness, interoperability, cross-benchmarking, and the cultivation of a culture centered on system-level and societal impact over isolated component metrics.

Explicit milestones for 2035 include 1000× end-to-end energy efficiency, ≥60% cluster utilization at gigawatt (GW) scale, open small language model (SLM) ecosystems, and predictable AI+HW platforms built on design-for-X principles (robustness, sustainability, adaptability).
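A utilization milestone needs a definition before it can be checked. The sketch below assumes one common choice, model FLOPs utilization: useful model FLOP/s divided by the cluster's aggregate peak FLOP/s. It uses the standard approximation of about 6 FLOPs per parameter per training token for dense transformers. The roadmap may define cluster utilization differently, for example by folding in power or network efficiency, and all numbers below are hypothetical.

```python
# HYPOTHETICAL inputs throughout; ~6 * params FLOPs/token is a standard estimate
# for dense-transformer training (forward + backward), not a figure from the paper.

def training_flops_per_token(n_params: float) -> float:
    """Approximate training FLOPs per token for a dense model."""
    return 6.0 * n_params


def model_flops_utilization(n_params: float, tokens_per_s: float,
                            n_devices: int, peak_flops_per_device: float) -> float:
    """Useful model FLOP/s divided by the cluster's aggregate peak FLOP/s."""
    achieved = training_flops_per_token(n_params) * tokens_per_s
    return achieved / (n_devices * peak_flops_per_device)


def tokens_per_s_for_target(n_params: float, n_devices: int,
                            peak_flops_per_device: float, target: float) -> float:
    """Throughput the cluster must sustain to reach a target utilization."""
    return target * n_devices * peak_flops_per_device / training_flops_per_token(n_params)


if __name__ == "__main__":
    n_params, n_devices, peak = 7e9, 1000, 1e15  # hypothetical model and cluster
    mfu = model_flops_utilization(n_params, 1e7, n_devices, peak)
    print(f"MFU at 1e7 tokens/s: {mfu:.0%}")
    need = tokens_per_s_for_target(n_params, n_devices, peak, 0.60)
    print(f"tokens/s needed for 60%: {need:.3g}")
```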

Conclusion

This paper delivers a technically rigorous, actionable framework for the AI and hardware community, mapping out both high-level goals and fine-grained research priorities. Its central claim is bold: a 1000× improvement in AI efficiency is attainable within a decade. That claim rests not on any single technological leap but on orchestrated, cross-layer innovation and the abandonment of siloed research.

Key implications include the anticipated convergence of algorithmic and hardware research trajectories, the necessity for tight feedback between edge and cloud optimizations, and the recognition of energy efficiency, modularity, and adaptability as first-class drivers of capability.

The realization of this agenda necessitates broad, sustained, cross-sectoral action: rigorous co-design initiatives, dynamic adaptation of talent and curriculum, and resource democratization. The impact is far-reaching—successful implementation will define the landscape of next-generation AI for both science and society.