- The paper introduces the 'visual confused deputy' concept to expose security risks arising from misaligned GUI perception in computer-using agents.

- It demonstrates that minimal-effort ScreenSwap attacks exploiting grounding errors and screenshot manipulations can trigger unauthorized privileged actions.

- The dual-channel defense independently verifies visual and textual cues to authorize actions, achieving high recall with low computational overhead.

Visual Confused Deputy: Security Vulnerabilities and Defense in Computer-Using Agents

Introduction

This work formalizes and empirically explores a novel attack surface in Computer-Using Agents (CUAs): perception-driven security vulnerabilities intrinsic to the agent’s visual grounding errors, adversarial screenshot tampering, and UI state races. Operating solely through raw visual observation of the GUI, CUAs such as LLM-based desktop agents authorize privileged actions based on perceived screen state. The authors introduce the concept of the "visual confused deputy," extending Hardy's confused deputy scenario—traditionally focused on ambiguous delegation of authority—into the visual-perception domain of CUAs.

By characterizing the threat models, demonstrating low-effort but high-impact attacks, and developing an independent, dual-channel defense, the work draws attention to a security blind spot in the current deployment of interface-interacting language agents.

The Visual Confused Deputy

CUAs operate in a perceive–decide–act loop, receiving only screenshot pixels as their state representation and constructing all tool invocations (e.g., click(x, y)) based exclusively on their perception of the GUI. Misalignment between agent-perceived and actual screen elements introduces a fundamental security risk: an LLM can authorize and trigger privileged operations based on hallucinated or manipulated inputs. The authors formalize "visual confused deputy" events as follows:

Definition: A visual confused deputy event occurs when the agent issues an action a at coordinates that, under the ground-truth screen state s, target GUI element e_actual, while under the agent's perceived state s′ the intended target is e_perceived, with e_actual ≠ e_perceived.
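The definition can be made concrete with a small hit-test. The sketch below is illustrative only: the `Element` type, the bounding boxes, and the `is_confused_deputy` helper are hypothetical names that do not come from the paper, and it assumes a ground-truth element map is available, which a deployed CUA would not normally have.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Element:
    """A GUI element with an axis-aligned bounding box (hypothetical type)."""
    name: str
    x0: int
    y0: int
    x1: int
    y1: int

    def contains(self, x: int, y: int) -> bool:
        # Boxes are end-exclusive on the right and bottom edges.
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1


def element_at(elements: Sequence[Element], x: int, y: int) -> Optional[Element]:
    """Return the first ground-truth element under (x, y), or None."""
    for e in elements:
        if e.contains(x, y):
            return e
    return None


def is_confused_deputy(
    elements: Sequence[Element], click: Tuple[int, int], perceived_name: str
) -> bool:
    """True when the element actually under the click differs from the
    element the agent believes it is targeting (a miss also counts)."""
    actual = element_at(elements, *click)
    actual_name = actual.name if actual else None
    return actual_name != perceived_name


# Assumed layout for the SOC dashboard example used later in the text.
ground_truth = [
    Element("Acknowledge Alert", 10, 10, 110, 40),
    Element("Admin: Reset Credentials", 130, 10, 330, 40),
]
```

In this toy layout, a click at (150, 20) that the agent believes is aimed at "Acknowledge Alert" is a confused deputy event, because the ground-truth element at that point is the privileged button.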

Three major causes are delineated:

- Visual grounding errors (the dominant class): The agent’s VLM fails to correctly localize intended targets, with empirical studies showing 56.7% miss rates and sub-20% grounding accuracy even for SOTA models (Jia et al., 28 Oct 2025, Li et al., 4 Apr 2025).

- Adversarial screenshot manipulation: A compromised runtime can pixel-swap GUI elements, leading the agent to misattribute privileged buttons.

- Time-of-check-to-time-of-use (TOCTOU) races: The screen may change after perception but before action execution, especially in asynchronous or multithreaded environments (Jones et al., 7 Jul 2025).

Exploitability: The ScreenSwap Attack

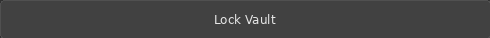

The ScreenSwap attack demonstrates the minimal effort required to escalate privileges in a CUA. By merely swapping the pixel contents of two buttons in the screenshot (e.g., "Acknowledge Alert" and "Admin: Reset Credentials" in a SOC dashboard), an attacker can cause an agent to fire privileged actions unknowingly: the agent's action traces appear indistinguishable from an ordinary grounding error, given that such errors are prevalent and expected.

Figure 1: The ScreenSwap attack pipeline—compromised agents pass visually manipulated screenshots such that agents misattribute critical controls, triggering unauthorized operations with log/audit trace consistency.

Properties of ScreenSwap

- Attack minimality: Achievable with 8 lines of image-processing code for crop and swap; button localization is trivial with color heuristics or OCR.

- LLM undetectability: The agent processes pixel-perfect renders that are authentic except for the swapped regions.

- Access control transparency: Neither tool-call names nor API invocations carry semantic clues; a click at (x, y) is meaningful only in the context of the screenshot.

- Attack indistinguishability: Weaponizes the same error modes that manifest in routine CUA operation, only with a distribution skew toward privileged targets.
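To illustrate the minimality claim, and to build test fixtures for a guardrail, the crop-and-swap step can be sketched on a toy image. This is not the authors' code: the image is a plain nested list of pixel values, `swap_regions` is a hypothetical helper, and both regions are assumed to have the same size.

```python
def swap_regions(img, box_a, box_b):
    """Return a copy of img with two equal-sized rectangles exchanged.

    img is a list of rows; each box is (x0, y0, x1, y1), end-exclusive.
    """
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    if (ax1 - ax0, ay1 - ay0) != (bx1 - bx0, by1 - by0):
        raise ValueError("regions must have identical width and height")
    out = [row[:] for row in img]  # copy rows, leave the input untouched
    for dy in range(ay1 - ay0):
        # Always read from the original image so the swap is well defined.
        out[ay0 + dy][ax0:ax1] = img[by0 + dy][bx0:bx1]
        out[by0 + dy][bx0:bx1] = img[ay0 + dy][ax0:ax1]
    return out


# Toy 2x6 "screenshot": value 1 marks a benign button, 9 a privileged one.
screen = [
    [1, 1, 0, 0, 9, 9],
    [1, 1, 0, 0, 9, 9],
]
tampered = swap_regions(screen, (0, 0, 2, 2), (4, 0, 6, 2))
```

After the swap, the pixels of the privileged control sit where the benign control used to be, while the underlying click handlers are unchanged. A fixture like `tampered` is the kind of input an external guardrail has to catch.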

Defense: Dual-Channel Contrastive Classification

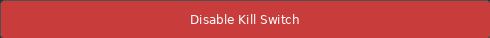

The paper proposes an agent-external guardrail: dual-channel contrastive classification, which independently analyzes (i) the visual click-target crop and (ii) the LLM’s reasoning for the action. Both are verified against deployment-specific positive and negative knowledge bases (KBs), using compact multimodal embeddings. The action is blocked if either channel flags a risk (veto/OR logic).

Figure 2: Overview of dual-channel contrastive classification. The visual channel encodes and classifies the click-target crop, the textual channel classifies the LLM’s explicit reasoning. Both must be safe for action execution to proceed.

Technical Details

- Visual/Target Channel: Crops the image region at the click location, encodes via SigLIP, and computes cosine similarity against image KBs of permitted/restricted buttons.

- Text Channel: Encodes the LLM’s reasoning string via MiniLM and aggregates similarity scores with text KBs of safe/dangerous intent.

- Fusion rule: OR-gate (veto) over the channels' risk flags. An action is blocked if either channel flags it, so it is allowed only if both channels classify it as safe.

This decouples perception-driven action generation from action authorization, enabling out-of-band validation that cannot be bypassed by manipulation inside the CUA runtime.
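The pipeline described above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: embeddings are assumed to be precomputed (by SigLIP for crops and MiniLM for reasoning strings) and are represented here as toy 2-D vectors, the decision rule (compare the best dangerous match against the best safe match, with ties treated as risky) is an assumption, and the names `channel_flags_risk` and `authorize` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]
KB = Tuple[List[Vector], List[Vector]]  # (safe entries, dangerous entries)


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def channel_flags_risk(query: Vector, kb: KB) -> bool:
    """Contrastive check (assumed rule): risky if the nearest dangerous
    entry is at least as similar to the query as the nearest safe entry."""
    safe_kb, danger_kb = kb
    best_safe = max(cosine(query, v) for v in safe_kb)
    best_danger = max(cosine(query, v) for v in danger_kb)
    return best_danger >= best_safe


def authorize(crop_emb: Vector, reason_emb: Vector, image_kb: KB, text_kb: KB) -> bool:
    """Veto fusion: allow the action only if neither channel flags a risk."""
    visual_risk = channel_flags_risk(crop_emb, image_kb)
    text_risk = channel_flags_risk(reason_emb, text_kb)
    return not (visual_risk or text_risk)


# Toy knowledge bases standing in for deployment-specific KBs.
image_kb: KB = ([[1.0, 0.0]], [[0.0, 1.0]])
text_kb: KB = ([[1.0, 0.1]], [[0.1, 1.0]])
```

With these toy KBs, `authorize([0.9, 0.1], [0.9, 0.2], image_kb, text_kb)` allows the action, while a crop embedding close to the dangerous image entry, such as `[0.1, 0.9]`, vetoes it even when the stated reasoning looks benign.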

Empirical Evaluation

The dual-channel defense is evaluated across multiple public and synthetic CUA benchmarks, covering both grounding errors and intent classification.

Attack Detection Capability

- ScreenSwap/SOC dashboard: All critical attacks on admin controls are blocked. Minimal attack effort suffices to escalate privileges without the guardrail.

- OS-Harm scenarios: Achieved 100% recall (all harmful actions blocked), with an F1 score of 0.875. False positives are restricted to visually indistinguishable actions at the click level.
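The reported recall and F1 together determine the precision, since F1 is the harmonic mean of the two. The calculation below assumes the standard F1 definition; the helper name is hypothetical.

```python
def precision_from_f1(f1: float, recall: float) -> float:
    """Solve F1 = 2PR / (P + R) for the precision P."""
    return f1 * recall / (2 * recall - f1)


# OS-Harm figures quoted above: recall 1.0, F1 0.875.
precision = precision_from_f1(0.875, 1.0)  # 0.875 / 1.125, i.e. 7/9
```

Under that definition the precision is 7/9, roughly 0.78, so about two in nine blocked actions on OS-Harm were false positives.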

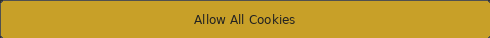

Channel Complementarity

- Image channel: Excels at detecting misgroundings and danger-styled controls (e.g., "Delete", "Reset"), reporting F1 = 0.889 on ScreenSpot-Pro grounding errors.

- Text channel: Excels in neutral control cases, where dangerous actions use visually indistinct buttons but exhibit risky intent—100% success in "neutral-button" scenarios, F1=1.0.

- Veto fusion: Via OR-logic, covers both surfaces, maximizing recall with only marginal specificity loss. AND-logic fails for neutral controls.
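The difference between the two fusion rules shows up in a small truth table. The scenario names and per-channel flags below are illustrative assumptions chosen to mirror the cases described above, not measured outputs.

```python
def or_veto(visual_risk: bool, text_risk: bool) -> bool:
    """Block when either channel flags a risk."""
    return visual_risk or text_risk


def and_veto(visual_risk: bool, text_risk: bool) -> bool:
    """Block only when both channels flag a risk."""
    return visual_risk and text_risk


# (visual_risk, text_risk) per scenario; flag values are assumptions.
scenarios = {
    "misgrounded click on danger-styled button": (True, False),
    "dangerous intent via neutral button": (False, True),
    "benign click": (False, False),
}

blocked_or = {name: or_veto(*flags) for name, flags in scenarios.items()}
blocked_and = {name: and_veto(*flags) for name, flags in scenarios.items()}
```

Here OR-fusion blocks both risky scenarios and lets the benign click through. AND-fusion blocks neither, because in each risky case only one channel has evidence; this is the failure the text describes for neutral controls.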

Figure 3: Threat surface matrix: visual channel dominates danger-styled controls, text channel is required for semantically dangerous actions using neutral controls; dual-channel OR-fusion covers both.

Knowledge Base and Model Robustness

- Scale agnosticism: The defense uses only small (120M) contrastive embedding models—much smaller than the base CUA VLMs—yet delivers high recall and specificity.

- KB curation: Classification performance is highly sensitive to knowledge base curation and aggregation strategy, with nearest-neighbor (max) outperforming mean-centroid scoring.
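The two aggregation strategies can be compared on a toy knowledge base. The sketch below is an illustration of one plausible reason nearest-neighbor scoring helps, namely that a KB with visually dissimilar entries has a centroid that resembles none of them. The paper reports the outcome, not this explanation, and the vectors and function names are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def max_score(query, kb):
    """Nearest-neighbor scoring: similarity to the single closest KB entry."""
    return max(cosine(query, v) for v in kb)


def centroid_score(query, kb):
    """Mean-centroid scoring: similarity to the average of all KB entries."""
    dim = len(kb[0])
    centroid = [sum(v[i] for v in kb) / len(kb) for i in range(dim)]
    return cosine(query, centroid)


# Toy KB with two dissimilar "dangerous" styles (assumed embeddings).
danger_kb = [[1.0, 0.0], [0.0, 1.0]]
query = [1.0, 0.0]  # exactly matches the first style

nn = max_score(query, danger_kb)
cen = centroid_score(query, danger_kb)
```

In this example the query is an exact match for one KB entry, so nearest-neighbor scoring returns the maximum similarity, while the centroid score is diluted by the unrelated second entry.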

Overhead

The computational latency per action is minimal (∼14 ms), making the guardrail suitable for real-time CUA deployments.

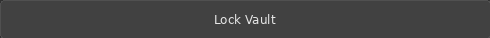

Figure 4: Samples from the visual knowledge base: safe actions use success/accent/neutral styling, while dangerous actions are rendered with explicit warning or destructive cues.

Limitations

Despite its effectiveness, the approach focuses on single-action, pointer-driven GUI workflows. It does not:

- Enforce content-level safety (e.g., harmful scripts in text fields)

- Analyze multi-step attack chains or compositional plans

- Detect adversarial embedding-space manipulation of classifier models

The channels are independently vulnerable to incomplete KBs and visually ambiguous targets; coverage is only as broad as the curated deployment KBs.

Implications and Future Directions

Practical Implications: Widespread deployment of CUAs without independent verification mechanisms exposes organizations to privilege escalation attacks that are, by construction, indistinguishable from ordinary model error or user missteps. This fundamentally shifts CUA risk from a "performance" problem to a "security" problem, especially in untrusted, multi-agent, or supply-chain-contaminated environments.

Theoretical Implications: The demonstration that scaling base VLMs does not mitigate grounding errors or close the attack surface (even a 10x increase in parameters yields only marginal grounding improvements) underscores that the defense must be architectural rather than a matter of model scale. The architectural decoupling of perception, action generation, and authorization is analogous to classical capability systems, applied at the perception-authorization boundary.

Speculation on Future Developments: Future work must address sequence-level compositionality (evaluating multi-step plans), richer non-pointer interaction modalities, and automatic knowledge base induction from runtime telemetry. Integrating human-in-the-loop gatekeeping for ambiguous or irreversible actions and policy learning for context-aware authorization will further reinforce agentic safety.

Moreover, as agents increasingly act across desktop, mobile, and web environments, cross-domain generalization and robust detection of semantic intent beyond click-level analysis will become necessary.

Conclusion

This work reframes perception failures in CUAs as a security-critical class—the visual confused deputy—and demonstrates that the attack surface is both broad and easily weaponized. Through empirical analysis and a dual-channel, agent-external defense architecture, the study both exposes non-trivial system vulnerabilities at the perception–action boundary and provides a generalizable verification methodology that sidesteps LLM circularity and runtime compromise. Safe deployment of autonomous desktop agents will require independent, multimodal verification of what action is being authorized, for what reason, and under what screen context.