Agentic Business Process Management: A Research Manifesto

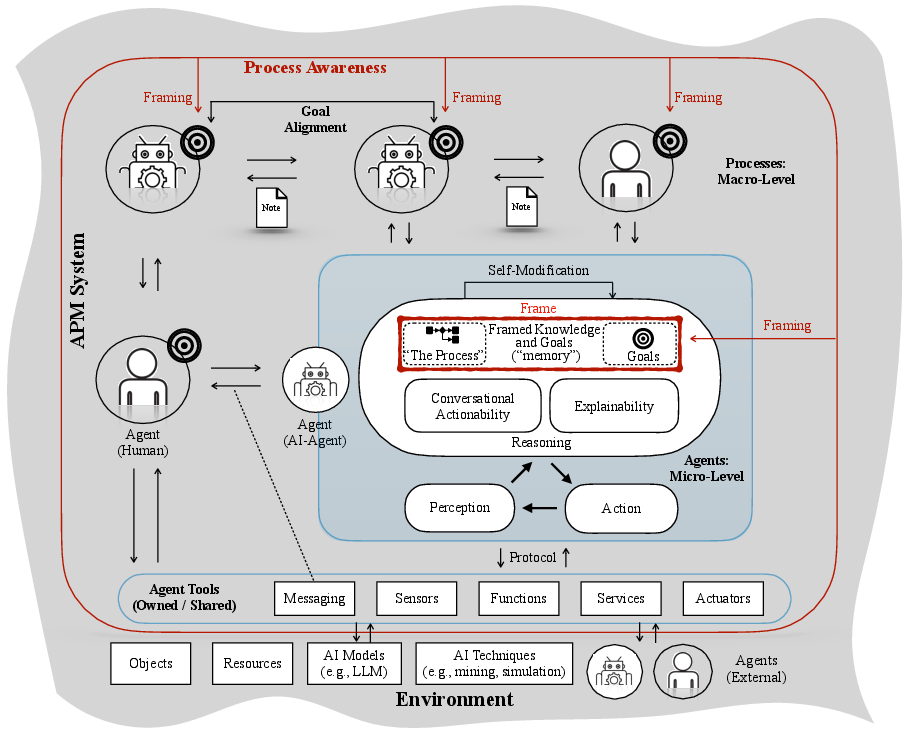

Abstract: This paper presents a manifesto that articulates the conceptual foundations of Agentic Business Process Management (APM), an extension of Business Process Management (BPM) for governing autonomous agents executing processes in organizations. From a management perspective, APM represents a paradigm shift from the traditional process view of the business process, driven by the realization of process awareness and an agent-oriented abstraction, where software and human agents act as primary functional entities that perceive, reason, and act within explicit process frames. This perspective marks a shift from traditional, automation-oriented BPM toward systems in which autonomy is constrained, aligned, and made operational through process awareness. We introduce the core abstractions and architectural elements required to realize APM systems and elaborate on four key capabilities that such APM agents must support: framed autonomy, explainability, conversational actionability, and self-modification. These capabilities jointly ensure that agents' goals are aligned with organizational goals and that agents behave in a framed yet proactive manner in pursuing those goals. We discuss the extent to which the capabilities can be realized and identify research challenges whose resolution requires further advances in BPM, AI, and multi-agent systems. The manifesto thus serves as a roadmap for bridging these communities and for guiding the development of APM systems in practice.

Explain it Like I'm 14

Agentic Business Process Management: A Simple Explanation

What is this paper about?

This paper introduces a new way for companies to manage work when smart “agents” (like AI programs, robots, or people using AI) do parts of the job on their own. The authors call this Agentic Business Process Management (APM). The big idea: let agents act autonomously, but keep them “process-aware” and inside clear boundaries so their decisions still follow the company’s rules, goals, and responsibilities.

What questions are the authors trying to answer?

The paper asks, in plain terms:

- How can organizations safely use autonomous agents to do real business work?

- What does it mean for agents to be “process-aware,” so their actions follow company goals and rules?

- What basic abilities do these agents need to work well with people and other agents?

- How should we design the overall system so everything stays aligned, explainable, and compliant?

How did the authors approach this?

This is a “manifesto,” not a lab experiment. Think of it as a roadmap or guide written by many experts after deep discussions:

- They met at a research seminar and workshops, split into groups, and debated what’s needed for agent-driven work.

- They combined ideas from business process management (BPM), AI, and multi‑agent systems (how multiple smart agents work together).

- They proposed shared concepts, a high-level system design, and a list of key agent abilities and research challenges.

To explain a few terms with everyday analogies:

- Business Process Management (BPM): Like a playbook for how work should be done in a company (who does what, in what order, to reach a goal).

- Agent: A “co-worker” that can sense what’s happening, think about goals, and act—this can be a person, an AI bot, a robot, or regular software with some autonomy.

- Process awareness: The agent doesn’t just act; it knows the company’s playbook (goals, rules, and constraints) and uses that to guide its choices.

- Framing: The “guardrails” or game rules that limit what agents can do, so they stay safe, fair, and compliant.

What are the main ideas and findings?

The paper proposes a clear structure and four must‑have abilities.

- A two-level architecture

  - Macro level (the big picture): The APM system sets shared goals and guardrails (“the frame”) for all agents. It ensures agents’ personal goals line up with the organization’s goals.

  - Micro level (the workers): Individual agents, human or AI, run a loop of Perceive → Reason → Act. They use tools (like apps, sensors, or services), talk to each other, and make choices within the frame.

- The four key capabilities agents need. To make APM work in practice, agents should have:

  - Framed autonomy: Freedom to make smart decisions, but within clear rules and constraints (the “frame”). The paper distinguishes:

    - Normative frames: “What’s allowed or required?” (like laws or company policies)

    - Operational frames: “How do we do it?” (step-by-step instructions)

  - Explainability: Agents should be able to explain why they did something. This builds trust, helps debugging, supports fairness, and shows compliance with laws (like the EU AI Act or GDPR).

  - Conversational actionability: Agents should communicate naturally and cooperate: negotiating, coordinating, and taking action through dialogue with people and other agents.

  - Self-modification: Agents should improve over time by learning from outcomes, updating plans, and adapting while still respecting the frame.

- Examples to make it concrete

  - Supplier onboarding: Buyer and supplier agents (some human, some AI) request and evaluate quotes, choose contracts, and ensure terms are met, always staying within the process rules.

  - Ride-sharing: A passenger agent books a ride, an AI agent allocates cars, a vehicle agent plans routes, and a payment agent handles billing, all coordinated under shared goals and constraints.

- Why this matters

  - Autonomy without chaos: Agents can act smartly and quickly, but stay aligned with rules and goals.

  - Safer AI in business: Explainability and framing reduce risks like breaking policies, being unfair, or making unsafe choices.

  - Better collaboration: Conversational agents help humans stay in the loop and adjust plans on the fly.

  - Continuous improvement: Self-modification lets systems get better over time without losing control.
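
To make the two-level architecture more tangible, here is a minimal Python sketch of a macro-level frame constraining a micro-level Perceive → Reason → Act loop. It is not taken from the paper: the names `Action`, `Frame`, `Agent`, `within_spend_limit`, the 1000.0 limit, and the quote-handling scenario are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Action:
    name: str
    amount: float = 0.0


@dataclass
class Frame:
    """Macro level (hypothetical): shared guardrails every agent must respect."""
    rules: list[Callable[[Action], bool]] = field(default_factory=list)

    def permits(self, action: Action) -> bool:
        return all(rule(action) for rule in self.rules)


@dataclass
class Agent:
    """Micro level (hypothetical): one agent running Perceive -> Reason -> Act."""
    frame: Frame
    log: list[str] = field(default_factory=list)

    def perceive(self, environment: dict) -> list[float]:
        # Observe the available supplier quotes, cheapest first.
        return sorted(environment.get("quotes", []))

    def reason(self, quotes: list[float]) -> list[Action]:
        # Prefer accepting a quote; keep a safe fallback as the last candidate.
        return [Action("accept_quote", q) for q in quotes] + [Action("escalate_to_human")]

    def act(self, candidates: list[Action]) -> Action:
        # Take the first candidate the frame allows and record what was blocked.
        for action in candidates:
            if self.frame.permits(action):
                self.log.append(f"did {action.name}")
                return action
            self.log.append(f"blocked {action.name} ({action.amount})")
        raise RuntimeError("the frame permits none of the candidate actions")

    def step(self, environment: dict) -> Action:
        return self.act(self.reason(self.perceive(environment)))


def within_spend_limit(action: Action) -> bool:
    # Assumed normative rule: quotes above 1000.0 may not be accepted autonomously.
    return action.name != "accept_quote" or action.amount <= 1000.0


agent = Agent(frame=Frame(rules=[within_spend_limit]))
cheap = agent.step({"quotes": [1500.0, 900.0]})    # accepts the 900.0 quote
costly = agent.step({"quotes": [1500.0, 1200.0]})  # both blocked, so it escalates
```

The agent still chooses among its own candidates (autonomy), but the frame decides which of them may actually be executed, and the log of blocked actions is a first step toward explainability.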

What’s the impact if this works?

If companies adopt APM:

- They could automate complex work more safely and responsibly, improving speed and quality while reducing errors and risk.

- Teams would trust AI agents more because actions are explainable and auditable.

- Organizations could handle changing laws, policies, and markets better by updating frames and letting agents adapt.

- Researchers in BPM and AI would have a shared roadmap to build practical, compliant, and human-aware agent systems.

In short: The paper argues that the future of using AI at work isn’t just “more automation.” It’s “guided autonomy”—agents that think and act on their own, but within clear, explainable boundaries that keep them aligned with what the organization and society expect.

Knowledge gaps, limitations, and open questions

The paper is a forward-looking manifesto and intentionally leaves many aspects underspecified. The following list distills concrete gaps and open questions that future research should address to operationalize Agentic Business Process Management (APM):

- Formal semantics for process awareness and framing: precise definitions of “process awareness,” “normative frame,” and “operational frame,” their compositional semantics, and mappings to existing BPM languages (e.g., BPMN, DECLARE, DMN).

- Engineering patterns for framing: concrete mechanisms to realize framing (orchestration vs. shared memory vs. decentralized governance), assignment of frame responsibility, runtime enforcement, and monitoring.

- Strategy synthesis under frames: algorithms for planning and decision-making with normative constraints in (i) single decision-maker, (ii) multiple decision-makers with local frames, and (iii) multiple decision-makers with process-level frames; support for partial observability and stochastic environments.

- Projection and reconciliation of norms: methods to project global normative frames to local agent-level norms, detect and resolve conflicts, and establish soundness/completeness of such projections.

- Verification and assurance: scalable static verification and runtime monitoring that agent behaviors conform to frames; conformance checking for agentic logs; techniques to cope with non-deterministic LLM-based decision modules.

- Metrics for autonomy and alignment: operational measures of “degree of process awareness,” autonomy, and goal alignment at agent and system levels; KPIs to compare APM designs and configurations.

- Reference architecture and interfaces: concrete APM reference architecture, component boundaries, and interface contracts for agents, tools, shared memory, and governance services; integration points with BPM suites.

- Interoperability and standards: how APM integrates with and extends BPMN/DMN/CMMN, FIPA/ACL, MCP/ACP; whether new notations are needed for goals/frames and how to achieve cross-vendor interoperability.

- Data and event models for observability: logging schemas connecting agent actions to process instances; extensions to process mining for multi-agent settings (e.g., agent system mining, conformance under norms); shared benchmarks and datasets.

- Explainability design: when/what/how to explain at both agent and process levels; explanation formats that combine operational and normative rationales; cross-agent explanations and aggregation of rationales.

- Explanation quality and governance: quantitative and qualitative measures of explanation usefulness, completeness, and faithfulness; triggers, recipients, escalation policies; trade-offs with autonomy and performance; regulatory adequacy (e.g., GDPR/AI Act).

- Conversational actionability protocols: standards for negotiation, coordination, and human-in-the-loop control; grounding in shared ontologies; resilience to prompt injection, social engineering, and conversation drift.

- Human–AI governance: roles, oversight models, and accountability (e.g., RACI for agents and humans); liability frameworks for agent decisions; organizational change management and practitioner training for APM design.

- Self-modification boundaries and safeguards: policies specifying what agents may change (knowledge, goals, tools, policies), approval gates, rollback/versioning, and mandatory post-change verification and auditing.

- Continual learning and concept drift: methods for agents to adapt without violating frames; synchronization of learned behaviors with process specifications; prevention and detection of specification drift.

- Secure and safe tool use: capability modeling, least-privilege access control, sandboxing, and monitoring for tool invocation; detection/mitigation of tool misuse and adversarial inputs; robustness to external service failures.

- Performance and scalability: quantifying overheads of framing, explainability, and monitoring; scaling to many agents/processes with acceptable latency and cost; performance–safety trade-offs.

- Cyber-physical integration: extending APM to embodied/robotic agents; real-time safety constraints, verification, and latency in physical environments.

- Cross-organizational APM: frames spanning organizational boundaries; choreography under contracts and regulations; data sovereignty, privacy, and secure shared memories in inter-organizational processes.

- Migration pathways: methods and tools to refactor existing BPM/RPA assets into frames and agent capabilities; incremental adoption strategies, compatibility layers, and ROI analysis.

- Decision core design: to what extent LLMs can serve as agent reasoning modules; design of hybrid architectures (symbolic planners, constraints, program synthesis + LLMs); calibration, testing, and fallback strategies.

- Pre-deployment validation: simulation and digital twins to test frames and agent interactions; scenario-based risk assessment, what-if analysis, and stress testing.

- Empirical validation: real-world case studies and controlled experiments quantifying benefits, risks, and failure modes of APM; user studies on trust, usability of explanations, and governance efficacy; ablation studies of the four capabilities’ contributions.
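
As one illustration of the observability gap listed above (logging schemas that connect agent actions to process instances), the following Python sketch shows what such an event record might look like and how per-case traces could be derived from it for process mining. The field names, the `AgentEvent` type, and the sample events are assumptions, not a schema proposed by the paper.

```python
import json
from collections import defaultdict
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AgentEvent:
    """Hypothetical log record tying one agent action to one process instance."""
    case_id: str     # process instance the action belongs to
    agent_id: str    # who acted (human or software agent)
    activity: str    # what was done
    timestamp: str   # ISO 8601 in UTC, so string order matches time order
    rationale: str   # operational reason given by the agent
    norm: str        # frame rule that permitted or required the action


def traces_by_case(events):
    """Group events into one time-ordered trace per process instance."""
    traces = defaultdict(list)
    for event in sorted(events, key=lambda e: e.timestamp):
        traces[event.case_id].append((event.activity, event.agent_id))
    return dict(traces)


EVENTS = [
    AgentEvent("case-1", "buyer-bot", "evaluate_quote", "2025-01-01T10:05:00Z",
               "lowest compliant bid", "spend_limit"),
    AgentEvent("case-1", "buyer-bot", "request_quote", "2025-01-01T10:00:00Z",
               "new supplier needed", "due_diligence"),
    AgentEvent("case-2", "payment-bot", "issue_invoice", "2025-01-01T10:02:00Z",
               "ride completed", "billing_policy"),
]

traces = traces_by_case(EVENTS)
first_event_json = json.dumps(asdict(EVENTS[0]))  # serializable for an audit store
```

Keeping the rationale and the governing norm in the same record as the action is one possible way to let explanations combine operational and normative reasons; whether that is sufficient is exactly the kind of open question the list raises.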

Glossary

- Agent Communication Protocol (ACP): A standardized protocol for agent-to-agent communication. "Examples include Model Context Protocol (MCP), Agent Communication Protocol (ACP), Resource Description Framework (RDF), and Knowledge Query and Manipulation Language (KQML)."

- Agent governance: The policies and mechanisms for regulating and overseeing autonomous agents’ behavior. "Agent governance has been a well-established line of research for several decades"

- Agent-oriented modeling: Modeling approaches that center on agents, their goals, and interactions (e.g., i*, Tropos). "agent-oriented modeling frameworks like i* and Tropos"

- agent system mining: Process mining techniques focused on extracting and analyzing agent behaviors and interactions. "the emerging research directions of agent system mining"

- agentic AI: AI approaches that frame software in terms of autonomous agents, often leveraging LLMs. "efforts to achieve greater autonomy in software systems often attempt to make use of LLMs and are summarized under the umbrella term of agentic AI"

- agentic AI process observability: Techniques and tools to observe and analyze processes executed by agentic AI. "as well as of agentic AI process observability"

- Agentic Business Process Management (APM): An extension of BPM focused on governing autonomous, process-aware agents executing processes. "This paper presents a manifesto that articulates the conceptual foundations of Agentic Business Process Management (APM)"

- agentic system: A software system composed of goal-driven agents that sense, reason, and act. "we introduce the concept of an agentic system as a collection of (one or more) individual goal-driven agents"

- AI Act: The European Union’s regulatory framework governing AI systems. "regulatory frameworks, such as the EU's GDPR and AI Act"

- AI-augmented BPM systems: BPM systems enhanced with AI capabilities but not centered on agents as primary executors. "This marks a paradigm shift from previously introduced AI-augmented BPM systems"

- BDI (Belief–Desire–Intention): An agent architecture modeling mental attitudes to drive decision-making. "classic AI constructs such as BDI and FIPA"

- Business Process Management (BPM): The discipline of managing coordinated activities to achieve organizational goals. "Business Process Management (BPM) literature and practice"

- BPMN (Business Process Model and Notation): A standard for modeling business processes with graphical notation. "classical process specification languages, such as BPMN and DECLARE"

- DECLARE: A declarative process modeling language specifying constraints instead of fixed flows. "classical process specification languages, such as BPMN and DECLARE"

- deontic requirements: Normative stipulations (obligations, permissions, prohibitions) that constrain behavior. "frames are normative: they specify deontic requirements to the process"

- DOLCE (Descriptive Ontology for Linguistic and Cognitive Engineering): A foundational ontology used to structure domain knowledge. "Drawing on foundational ontologies like DOLCE and GFO"

- eXplainable AI (XAI): Methods that make AI decisions understandable by providing explanations. "which explanation mechanisms – so-called eXplainable AI (XAI) techniques – may be employed"

- Embodied agents: Agents realized in physical form (robots) that act in the physical world. "(Physically) Embodied agents (a.k.a robots)"

- FIPA (Foundation for Intelligent Physical Agents): Standards and specifications for agent technologies. "classic AI constructs such as BDI and FIPA"

- foundation models: Large, pre-trained AI models adaptable to many tasks (e.g., LLMs). "foundation models – such as LLMs – organizations increasingly adopt"

- four-eyes approval policies: Governance rules requiring approval by at least two independent actors. "regarding four-eyes approval policies"

- framed autonomy: Autonomy that is constrained and aligned through explicit frames (rules and goals). "four key capabilities that such APM agents must support: framed autonomy, explainability, conversational actionability, and self-modification"

- GDPR (General Data Protection Regulation): The EU’s data protection and privacy regulation. "regulatory frameworks, such as the EU's GDPR and AI Act"

- GFO (General Formal Ontology): A foundational ontology for representing general categories and relations. "foundational ontologies like DOLCE and GFO"

- i*: A goal- and dependency-oriented modeling framework for requirements and organizations. "agent-oriented modeling frameworks like i* and Tropos"

- KQML (Knowledge Query and Manipulation Language): A language and protocol for exchanging information and knowledge among agents. "Resource Description Framework (RDF), and Knowledge Query and Manipulation Language (KQML)."

- LLMs (Large Language Models): Generative AI models trained on large text corpora to process and produce language. "foundation models – such as LLMs –"

- Mental model theory of reasoning: A cognitive theory explaining how humans reason using mental representations. "Mental model theory of reasoning"

- Model Context Protocol (MCP): An open protocol that standardizes how applications provide context, such as tools and data sources, to language models. "Examples include Model Context Protocol (MCP), Agent Communication Protocol (ACP), Resource Description Framework (RDF), and Knowledge Query and Manipulation Language (KQML)."

- Multi-Agent Systems (MAS): Systems composed of multiple interacting agents with possibly different goals. "promoting the notion of agents and Multi-Agent Systems (MAS) as the fundamental abstractions of software systems"

- normative frame: A frame capturing norms (obligations, permissions, prohibitions) that constrain agent behavior. "we call the two frames normative frame and operational frame"

- normative MAS: MAS that regulate agent behavior through formal norms to ensure compliance and social order. "the AI subfield of normative MAS, which focuses on regulating autonomous agent behavior through the specification of norms"

- operational frame: A frame that prescribes how processes should be executed operationally (e.g., steps, data handling). "we call the two frames normative frame and operational frame"

- orchestration: Centralized coordination of activities or agents to realize a process. "for example, by assigning collective responsibility to one or more agents, through orchestration, or via a shared memory"

- Perceive–Reason–Act loop: The continual cycle in which an agent senses, decides, and acts. "at the micro level each process-aware agent runs a Perceive–Reason–Act loop over framed knowledge and goals"

- process awareness: An agent’s awareness of process models, constraints, and goals, used to align its behavior. "We define process awareness as the assurance that agents’ inner workings conform to organizational processes and adhere to their operational constraints, regulations, and goals."

- process choreographies: Specifications of interactions among independent process participants without central control. "notably, work on process choreographies"

- process mining: Data-driven techniques to discover, monitor, and improve real processes from event logs. "within process mining"

- RDF (Resource Description Framework): A W3C framework for representing information about resources on the Web. "Agent Communication Protocol (ACP), Resource Description Framework (RDF), and Knowledge Query and Manipulation Language (KQML)."

- RPA (Robotic Process Automation): Automation technology using software robots to mimic deterministic human tasks. "Unlike traditional BPM or Robotic Process Automation (RPA) systems that follow rigid, predefined rules and workflows"

- shared memory: A shared state or storage used by agents to coordinate and maintain common context. "through orchestration, or via a shared memory (e.g., as part of the frame) that allows agents to progress along a mutual plan."

- socio-technical system: A system comprising both social (human) and technical (technological) elements. "An APM system is an agentic socio-technical system, jointly realized by a collection of agents"

- technical debt: The long-term maintenance cost incurred by expedient or suboptimal design decisions. "leading to system integration and maintenance challenges and, ultimately, to technical debt"

- Tropos: An agent-oriented software development methodology emphasizing goals and stakeholders. "agent-oriented modeling frameworks like i* and Tropos"
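
The DECLARE entry above describes constraints rather than fixed flows. As a small illustration, the Python sketch below checks two commonly used DECLARE-style templates, response and precedence, against a recorded trace. The function names and the activity labels are assumptions chosen for this example, and the checks cover only finished traces.

```python
def response(trace, a, b):
    """True if every occurrence of `a` is eventually followed by a `b`."""
    pending = False
    for activity in trace:
        if activity == a:
            pending = True       # an `a` is now waiting for a later `b`
        elif activity == b:
            pending = False      # a `b` discharges all earlier `a`s
    return not pending


def precedence(trace, a, b):
    """True if `b` never occurs before the first `a`."""
    seen_a = False
    for activity in trace:
        if activity == a:
            seen_a = True
        elif activity == b and not seen_a:
            return False
    return True


good = ["request_quote", "evaluate_quote", "sign_contract"]
bad = ["sign_contract", "request_quote"]

good_ok = response(good, "request_quote", "sign_contract") and \
    precedence(good, "request_quote", "sign_contract")
bad_response_ok = response(bad, "request_quote", "sign_contract")      # quote never answered
bad_precedence_ok = precedence(bad, "request_quote", "sign_contract")  # contract came first
```

Any ordering of activities that satisfies the constraints is acceptable, which is why declarative languages of this kind are a natural candidate for expressing frames that leave agents room to choose.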

Practical Applications

Immediate Applications

Below are deployable use cases that apply the paper’s APM principles (framed autonomy, explainability, conversational actionability) using today’s BPM, AI, and integration tooling.

- Procurement and supplier onboarding with framed AI agents

  - Sectors: manufacturing, retail, pharma

  - What it looks like: Buyer and supplier agents issue/respond to RFQs, validate documents, negotiate terms, and route approvals under a normative frame (e.g., spend limits, four‑eyes approval, due diligence).

  - Tools/products/workflows: BPM suites (BPMN/DMN/DECLARE) + policy/constraints engine, LLM-based agents with tool use (MCP/ACP), document intelligence, e-signature, ERP integration.

  - Assumptions/dependencies: Clean master data; machine-readable policies; role-based access control (RBAC); human-in-the-loop for exceptions.

- Accounts payable and order-to-cash exception handling with guardrails

  - Sectors: finance, shared services, SaaS

  - What it looks like: Agents match POs/invoices, chase missing goods receipts, explain mismatches, and propose resolution plans, bounded by frames (e.g., SOX controls, segregation of duties).

  - Tools/products/workflows: Process mining to detect deviations; AI agents orchestrated by BPM; explainability console with action traces; ERP/ITSM connectors.

  - Assumptions/dependencies: Well-defined approval hierarchies; audit logging; API coverage for core systems.

- Customer service triage and resolution under normative frames

  - Sectors: telecom, software, e-commerce

  - What it looks like: Agents classify tickets, propose next best actions, negotiate SLAs with upstream/downstream teams, and escalate per policy; provide explanations for routing/decisions.

  - Tools/products/workflows: ITSM (ServiceNow/Jira) + LLM agents with tool use; conversation-to-case frames; SLA policy engine; chatops interfaces.

  - Assumptions/dependencies: High-quality taxonomy; documented SLAs and escalation policies; conversation safety filters.

- Healthcare administrative coordination (non-clinical)

  - Sectors: healthcare, payers/providers

  - What it looks like: Agents schedule appointments, handle prior-authorization paperwork, manage discharge logistics under compliance frames (HIPAA/GDPR), and produce explanations for denials.

  - Tools/products/workflows: BPM + EHR integration; document extraction; agent guardrails for PHI; audit trails for compliance.

  - Assumptions/dependencies: Clear data-sharing agreements; strong identity and privacy controls; human oversight for high-risk cases.

- Fair and compliant HR recruiting workflows

  - Sectors: all with high-volume hiring

  - What it looks like: Agents screen applicants, schedule interviews, and communicate feedback constrained by fairness and privacy frames (e.g., anti-discrimination, data retention).

  - Tools/products/workflows: ATS integration; XAI to justify screening decisions; policy-as-code for fairness constraints; candidate-facing conversational interfaces.

  - Assumptions/dependencies: Bias-mitigation policies codified; auditable logs; consent and data minimization in place.

- Framed dispatch and routing in mobility/logistics

  - Sectors: ride-hailing, field service, last-mile delivery

  - What it looks like: AI agents allocate vehicles/technicians and optimize routes while staying within safety, zoning, and service-level frames; provide rationale for assignments.

  - Tools/products/workflows: Optimization engines + agent layer; telemetry; explainable dispatch dashboard; geofencing policies.

  - Assumptions/dependencies: Reliable location data; regulatory constraints encoded as norms; override mechanisms.

- “Process copilot” for managers and operators (conversational actionability)

  - Sectors: cross-industry

  - What it looks like: Natural language interactions to query status (“Why is this case stuck?”), trigger framed actions (“Start rework path”), and modify thresholds within authority.

  - Tools/products/workflows: Chat interfaces backed by process knowledge graphs and BPM APIs; role-aware guardrails; change request workflows.

  - Assumptions/dependencies: Up-to-date process models; robust authorization; change impact checks.

- Agentic observability and explainability for existing automations

  - Sectors: any with RPA/BPM

  - What it looks like: Wrap current automations with agent-style traces and explanations (who/what/why), enabling governance dashboards and faster incident response.

  - Tools/products/workflows: Process mining, event collection, XAI wrappers; “explain-why” endpoints exposed by agents and services.

  - Assumptions/dependencies: Sufficient event coverage; standardized logging schemas; data retention policies.

- B2B negotiation agents under shared frames

  - Sectors: manufacturing, wholesale, insurance

  - What it looks like: Buyer/seller agents negotiate terms under agreed normative frames (payment windows, risk tolerances, compliance clauses) and maintain auditable transcripts.

  - Tools/products/workflows: Agent communication protocols (MCP/ACP); contract clause libraries; e-invoicing/EDI connectors; negotiation playbooks as norms.

  - Assumptions/dependencies: Legal alignment on machine-interpretable norms; dispute resolution mechanisms; counterparty risk checks.

- Research and teaching sandboxes for APM

  - Sectors: academia, industry labs

  - What it looks like: Benchmarking framed autonomy scenarios; synthetic multi-agent processes with varying observability, frames, and explainability demands.

  - Tools/products/workflows: Open-source APM reference architecture; synthetic logs; evaluation harnesses for alignment and compliance.

  - Assumptions/dependencies: Shared datasets/ontologies; reproducible baselines; ethics approvals for user studies.
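
Several of the use cases above rely on machine-readable policies. The Python sketch below shows, under stated assumptions, how a small normative frame for procurement (spend limit, four-eyes approval, segregation of duties) might be written as policy-as-code. `PurchaseRequest`, `violations`, and the 10,000 limit are hypothetical and do not correspond to any particular policy engine.

```python
from dataclasses import dataclass, field


@dataclass
class PurchaseRequest:
    requester: str
    amount: float
    approvers: set[str] = field(default_factory=set)


def violations(req: PurchaseRequest, spend_limit: float = 10_000.0) -> list[str]:
    """Return the norms a request breaks; an empty list means it is within the frame."""
    found = []
    # Approvals by the requester do not count as independent.
    independent = req.approvers - {req.requester}
    if req.requester in req.approvers:
        found.append("segregation of duties: requester approved own request")
    if len(independent) < 2:
        found.append("four-eyes: fewer than two independent approvers")
    if req.amount > spend_limit:
        found.append("spend limit exceeded: route to human exception handling")
    return found


ok = PurchaseRequest("alice", 5_000.0, {"bob", "carol"})
self_approved = PurchaseRequest("alice", 5_000.0, {"alice", "bob"})
too_large = PurchaseRequest("alice", 20_000.0, {"bob", "carol"})

ok_result = violations(ok)                        # no violations
self_approved_result = violations(self_approved)  # two violations
too_large_result = violations(too_large)          # one violation
```

Returning the list of violated norms, rather than a bare yes/no, gives an agent or a human reviewer something to explain and act on when a request is routed to exception handling.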

Long-Term Applications

The following use cases depend on advances in multi-agent reasoning, formal verification, standards, safety, and scalable integration. They reflect the paper’s vision, including self-modification.

- End-to-end autonomous process execution across enterprises

  - Sectors: supply chain, finance, pharma

  - What it looks like: Multi-agent execution of procure-to-pay/order-to-cash with dynamic, cross-firm frames (contracts, regulations), minimal human intervention, and continuous explainability.

  - Dependencies: Interoperable normative standards; cross-organizational identity and trust; robust verification under partial observability; dispute resolution frameworks.

- Self-modifying processes with safe learning and governance

  - Sectors: cross-industry

  - What it looks like: Agents propose and implement process/frame updates (e.g., new approval path) based on outcomes; changes are verified, simulated, and rolled out with rollback plans.

  - Dependencies: Formal change control, causal/impact analysis, safe RL/continual learning, “frame diff” tooling, and certification workflows.

- Machine-readable regulation to frame agents (RegTech at scale)

  - Sectors: finance, healthcare, energy, public sector

  - What it looks like: Laws/policies encoded as normative frames consumed by APM systems for continuous compliance; regulators audit via explainable traces.

  - Dependencies: Regulatory standards for computable norms; co-regulatory sandboxes; liability and auditability schemas; legal/technical harmonization.

- Autonomous compliance and audit for high-risk domains

  - Sectors: banking, insurance, clinical operations

  - What it looks like: Agents continuously reconcile actions against dynamic norms, flag risks preemptively, and generate legally sound explanations and evidence packages.

  - Dependencies: Assurance cases; certified XAI; evidence provenance; privacy-preserving audit tech.

- Human–AI mixed-initiative process redesign

  - Sectors: cross-industry

  - What it looks like: Agents discover bottlenecks from logs, simulate alternatives, propose redesigns, and coordinate adoption, within strategic frames and constraints.

  - Dependencies: Reliable process mining across agents; simulation fidelity; organizational change management; incentive alignment.

- Safety-critical embodied APM on factory floors

  - Sectors: manufacturing, warehousing, construction

  - What it looks like: Robots and human agents coordinate tasks under safety and productivity frames, with formal verification of norms and runtime monitoring.

  - Dependencies: Verified controllers; certified human-robot interaction norms; low-latency observability; fallback/stop mechanisms.

- Personalized education orchestrated by framed agents

  - Sectors: education, edtech

  - What it looks like: Agents plan learning paths aligned with curricular frames, ensure fairness (e.g., accessibility), and explain progression decisions to students/teachers.

  - Dependencies: Trusted student models; fairness and privacy frames; content quality assurance; teacher oversight.

- Smart grid and energy markets with framed autonomy

  - Sectors: energy, utilities

  - What it looks like: Agents orchestrate demand response, storage, and trading within stability and regulatory frames; self-adapt to grid events with explainable actions.

  - Dependencies: Real-time telemetry; interoperable market norms; safety constraints; resilience against cascading failures.

- Framed algorithmic trading and treasury operations

  - Sectors: capital markets, corporate finance

  - What it looks like: Agents execute trades and liquidity moves within risk, compliance, and ethical frames; adapt strategies with self-modification under strict oversight.

  - Dependencies: Verified risk constraints; market abuse detection; auditable strategies; regulator-approved explainability.

- Public administration and permitting with APM

  - Sectors: government

  - What it looks like: Agents process permits/benefits under legal frames, coordinate across departments, and provide clear explanations to citizens.

  - Dependencies: Digitized statutes; inter-agency data sharing; transparency mandates; redress mechanisms.

- Standards and infrastructure for agent interoperability

  - Sectors: software, platforms

  - What it looks like: Broad adoption of protocols (MCP/ACP), shared ontologies, and secure tool-access policies enabling plug-and-play process-aware agents.

  - Dependencies: Industry consortia; security and isolation frameworks; certification of agent capabilities; economic incentives for standardization.

- Security-by-design for agent tool use and isolation

  - Sectors: all

  - What it looks like: Capability-based permissions, sandboxed tool execution, and continuous policy enforcement embedded in APM frames.

  - Dependencies: Strong identity/attestation for agents; fine-grained policy engines; runtime monitoring and kill-switches.
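
The self-modification scenario above (changes that are verified and rolled out with rollback plans) can be sketched in Python as a versioned frame with an approval gate. This is an assumption-laden illustration: `VersionedFrame`, the `verify` callback, the human-approval flag, and the `spend_limit` key are hypothetical, and real verification would be far richer than a single predicate.

```python
from typing import Callable

Frame = dict[str, float]  # e.g., {"spend_limit": 10000.0}


class VersionedFrame:
    """Hypothetical frame store: changes need approval and must pass verification."""

    def __init__(self, initial: Frame, verify: Callable[[Frame], bool]):
        self._versions = [dict(initial)]  # full history enables rollback
        self._verify = verify

    @property
    def current(self) -> Frame:
        return dict(self._versions[-1])

    def propose(self, changes: Frame, approved_by_human: bool) -> bool:
        """Apply an agent-proposed change only if it is approved and verified."""
        candidate = {**self._versions[-1], **changes}
        if not approved_by_human or not self._verify(candidate):
            return False
        self._versions.append(candidate)
        return True

    def rollback(self) -> Frame:
        """Return to the previous version; the initial version is never dropped."""
        if len(self._versions) > 1:
            self._versions.pop()
        return self.current


# Assumed organizational bound that no self-modification may exceed.
frame = VersionedFrame({"spend_limit": 10_000.0},
                       verify=lambda f: f["spend_limit"] <= 50_000.0)

accepted = frame.propose({"spend_limit": 20_000.0}, approved_by_human=True)
rejected_unsafe = frame.propose({"spend_limit": 99_999.0}, approved_by_human=True)
rejected_unapproved = frame.propose({"spend_limit": 30_000.0}, approved_by_human=False)
after_change = frame.current
after_rollback = frame.rollback()
```

Keeping every version makes each change auditable and reversible, which is the property the scenario depends on; how to verify candidate frames formally remains one of the open research dependencies.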

Notes on feasibility across applications:

- Many immediate applications rely on existing BPM/RPA stacks, LLM-based agents with tool use, policy-as-code engines, and strong human-in-the-loop controls.

- Long-term applications require advances in formal normative reasoning, multi-agent coordination under uncertainty, computable regulation, certified explainability, interoperability standards, and robust governance frameworks.
