Foundations of Schrödinger Bridges for Generative Modeling

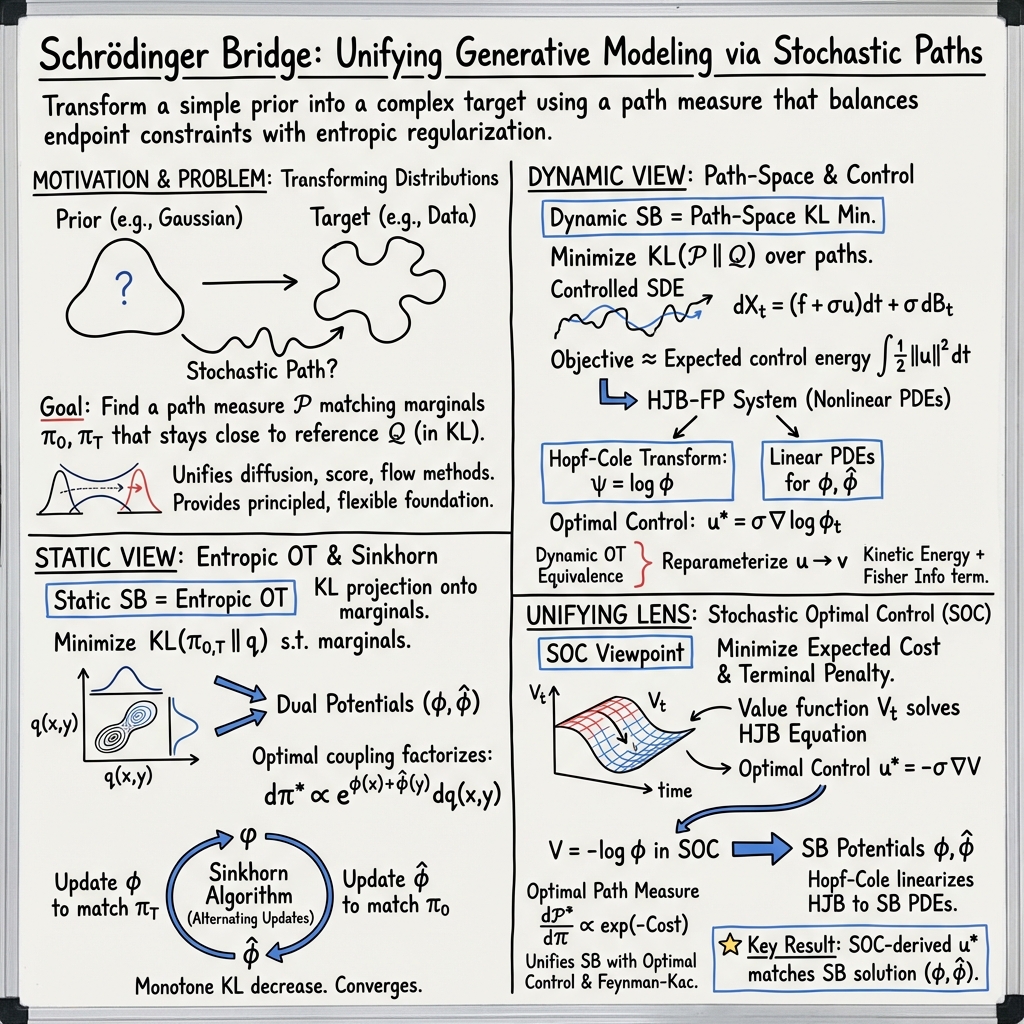

Abstract: At the core of modern generative modeling frameworks, including diffusion models, score-based models, and flow matching, is the task of transforming a simple prior distribution into a complex target distribution through stochastic paths in probability space. Schrödinger bridges provide a unifying principle underlying these approaches, framing the problem as determining an optimal stochastic bridge between marginal distribution constraints with minimal-entropy deviations from a pre-defined reference process. This guide develops the mathematical foundations of the Schrödinger bridge problem, drawing on optimal transport, stochastic control, and path-space optimization, and focuses on its dynamic formulation with direct connections to modern generative modeling. We build a comprehensive toolkit for constructing Schrödinger bridges from first principles, and show how these constructions give rise to generalized and task-specific computational methods.

Explain it Like I'm 14

Plain-language summary of “Foundations of Schrödinger Bridges for Generative Modeling”

What is this paper about?

This paper explains a powerful idea called a Schrödinger bridge and shows how it ties together many of today’s best AI image and data generators (like diffusion models, score-based models, and flow matching). The big picture: you start with simple random noise and want to transform it into complex, realistic data (like a photo) in a smart, step-by-step way. A Schrödinger bridge gives a principled recipe for doing that by asking: “What is the most likely noisy path that gets me from point A (simple noise) to point B (the target data), while staying as close as possible to some simple, known random motion?”

What questions does the paper try to answer?

In simple terms, the guide tackles these questions:

- How can we move from an easy-to-sample distribution (like pure noise) to a complicated one (like a real photo) in a careful, reliable way?

- How do ideas from probability, physics, and control (steering systems) connect to modern generative AI?

- Why do different popular methods (diffusion, score matching, flow matching) actually describe the same core idea when viewed through Schrödinger bridges?

- How can we build practical algorithms based on these ideas, and how do we adapt them to different situations (continuous data, discrete data, multiple targets, branching outcomes, etc.)?

How do they approach it? (Methods and ideas in everyday language)

The paper is a tutorial-style “toolkit” that builds up the theory step by step and shows how to compute with it. Here are the main building blocks, with simple analogies:

- Optimal Transport (moving sand): Imagine two piles of sand shaped differently. What is the cheapest way to move the grains from the first pile to form the second? This is “optimal transport.” The paper starts here because it’s the cleanest way to think about moving mass from one distribution to another.

- Entropy and KL divergence (uncertainty and mismatch): Entropy measures how spread out or “uncertain” something is. KL divergence measures how different two probability distributions are (think: how badly one model “misguesses” data from another). The paper uses KL divergence as a gentle penalty to prefer solutions that don’t stray too far from a simple, known reference behavior.

- Schrödinger bridges (most likely noisy journey): Picture a cloud of tiny particles doing random motion (like pollen bouncing in water). You take a snapshot at the start and another at the end. Among all the ways the cloud could have evolved between those snapshots, the Schrödinger bridge picks the most likely one, which is also the one that stays closest to the usual random motion. This is like choosing the “fairest” explanation for how you got from start to finish.

- Static vs. dynamic viewpoints:

  - Static: only cares about the start and end distributions and treats the problem like “move mass from here to there” with an entropy (KL) penalty. It uses an efficient algorithm called Sinkhorn that repeatedly “rebalances” the plan until it fits both ends.

  - Dynamic: cares about the entire path over time. It describes the movement using stochastic differential equations (SDEs), which are like recipes for noisy motion. It also uses equations that track how the “cloud” of probability moves forward in time (Fokker–Planck) and how the expected value of an end-of-path quantity propagates backward in time (Feynman–Kac). A key tool called Girsanov’s theorem explains how changing the drift (the “steering”) of a noisy process changes the probabilities of whole paths, and measures the difference using KL divergence.

- Control viewpoint (steering with minimal effort): Think of the reference noise as a car that drifts randomly. You can “steer” it with a control signal. The goal is to reach the target distribution at the end while spending as little “control effort” as possible. This links Schrödinger bridges to optimal control and planning (Bellman’s principle, value functions).

- Practical construction tools:

  - Time reversal: work backward from the target as well as forward from the start and make them meet.

  - Forward–backward SDEs: couple two equations that move in opposite time directions.

  - Doob’s h-transform: tilt the reference process by a special function so it naturally aims for the target.

  - Markov and reciprocal projections: project onto families of processes that are easier to compute with.

  - Stochastic interpolants: blend a simple path with noise to “morph” one distribution into another.

- Variations and extensions: The paper shows how to extend the idea to Gaussian cases (with neat formulas), multiple intermediate targets, unbalanced mass (allowing creation or removal), branching to multiple modes, long-memory noise, and more.

- Connections to modern generative modeling: It explains how score-based models (learn gradients of log-densities), diffusion models, and flow matching all fit inside the Schrödinger bridge view. It also introduces training methods that avoid simulating full paths, and strategies that work even when you don’t have direct samples from the target.

- Discrete settings: Not all data is continuous. The paper adapts everything to discrete spaces using continuous-time Markov chains (think: hopping between a finite set of states) and builds matching algorithms there too.
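
The “rebalancing” idea behind Sinkhorn in the static viewpoint can be sketched in a few lines. This is a minimal pure-Python illustration under stated assumptions, not the paper's implementation: the toy points, the squared-distance cost, the regularization strength `eps`, and the function name `sinkhorn` are all chosen here for the example.

```python
import math


def sinkhorn(mu, nu, cost, eps=1.0, n_iters=500):
    """Entropy-regularized transport between two discrete distributions.

    mu, nu : lists of probabilities (each sums to 1)
    cost   : cost[i][j] is the cost of moving mass from source i to target j
    eps    : entropy weight (hypothetical default; larger means a smoother plan)
    Returns a coupling matrix whose rows sum to ~mu and columns to ~nu.
    """
    n, m = len(mu), len(nu)
    # Gibbs kernel: cheap moves get large weights, expensive moves small ones.
    K = [[math.exp(-cost[i][j] / eps) for j in range(m)] for i in range(n)]
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        # Rebalance rows so that the plan's row sums match mu.
        u = [mu[i] / sum(K[i][j] * v[j] for j in range(m)) for i in range(n)]
        # Rebalance columns so that the plan's column sums match nu.
        v = [nu[j] / sum(K[i][j] * u[i] for i in range(n)) for j in range(m)]
    return [[u[i] * K[i][j] * v[j] for j in range(m)] for i in range(n)]


# Toy problem: three source points and three target points on a line.
xs = [0.0, 1.0, 2.0]
ys = [0.5, 1.5, 2.5]
mu = [0.5, 0.3, 0.2]
nu = [0.2, 0.3, 0.5]
cost = [[(x - y) ** 2 for y in ys] for x in xs]

plan = sinkhorn(mu, nu, cost)
row_sums = [sum(row) for row in plan]
col_sums = [sum(plan[i][j] for i in range(len(mu))) for j in range(len(nu))]
```

After enough iterations, `row_sums` approximates `mu` and `col_sums` approximates `nu`. Lowering `eps` gives a sharper plan that is closer to unregularized optimal transport, but it typically needs more iterations to converge.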

What did they find, and why does it matter?

Main takeaways:

- A unifying framework: Schrödinger bridges give a single, clean way to understand and connect many popular generative modeling methods. Instead of seeing diffusion, score matching, and flows as separate tricks, you can view them as different faces of the same core idea.

- Strong mathematical foundations: By grounding everything in probability, optimal transport, and control theory, the paper explains why these methods work and how to extend them safely.

- Practical algorithms: The guide goes beyond theory and shows how to build solutions, from Sinkhorn for static problems to forward–backward SDE methods and control-based training in dynamic cases.

- Flexibility for real-world needs: The framework naturally handles special cases like multiple goals, branching outcomes, and discrete data. It even gives ways to sample from “energy-based” models where you don’t know the exact probabilities, only relative energies.

Why it matters:

- Better understanding leads to better models: A clear, unified theory helps researchers design faster samplers, improve quality, and add constraints (like physics or fairness) without breaking the method.

- Bridges science and AI: The same tools apply to scientific problems, such as modeling how cells change over time or translating data from one condition to another.

What could this change in the future? (Implications)

- Smarter, faster generators: By using the control and bridge viewpoint, we may get more efficient generative models that produce high-quality results with fewer steps.

- More control and safety: Adding constraints (like keeping certain properties) becomes more natural, which can lead to safer, more reliable AI generations.

- New applications: Because the framework works in both continuous and discrete settings and supports branching and partial information, it could help in areas like biology, physics, and any task where we want to simulate realistic pathways between two states.

- A common language for progress: Researchers across different communities (machine learning, control theory, physics, applied math) can collaborate more easily using the Schrödinger bridge perspective.

In short, this paper builds a clear, step-by-step foundation that shows how many powerful generative AI methods are connected, provides tools to build them from first principles, and opens doors to faster, more flexible, and more scientifically grounded models.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of unresolved issues and open problems that are not fully addressed by the paper and that merit targeted investigation.

Foundations and assumptions

- Existence and uniqueness under weak regularity: Precise conditions on drifts, diffusion coefficients (including degenerate or state-dependent noise), and marginals guaranteeing existence/uniqueness of dynamic Schrödinger bridges, especially with heavy-tailed or support-mismatched distributions.

- Regularity of Schrödinger potentials: Smoothness, boundary behavior, and integrability conditions under which forward/backward potentials and their gradients (used as drifts) exist and are numerically stable in high dimensions.

- Minimal assumptions for equivalence: Formal equivalence between SB, score-based diffusion, flow matching, and stochastic optimal control under the weakest possible assumptions on data distributions and reference processes.

- Reference process design: How to choose or learn the reference process (e.g., drift and diffusion schedule) to optimize computational efficiency and sample quality; principled criteria beyond convenience (e.g., Brownian vs. Ornstein–Uhlenbeck).

- Entropy weight selection: Theory and practice for setting/adapting the entropic regularization (or bridge temperature) across time and tasks; impacts on bias, mode coverage, and convergence.

Numerical analysis and algorithms

- Convergence rates in high dimensions: Rigorous convergence and stability guarantees for Sinkhorn/IPF-like procedures and forward–backward schemes when implemented with continuous states and neural parameterizations.

- Discretization bias and error control: Quantitative error bounds induced by time discretization of SDEs/ODEs and density evolution (Fokker–Planck), and step-size selection strategies that ensure target marginal accuracy.

- Monte Carlo variance and gradient estimation: Variance analysis and variance-reduction techniques for path-space KL and control-cost estimators used in training, especially with long horizons.

- Consistency of simulation-free objectives: Conditions under which score/flow matching and adjoint matching recover the true SB in finite data and finite model capacity regimes; identifiable parameterizations and failure modes.

- Function approximation and sample complexity: Approximation error and sample complexity bounds for neural drifts/potentials (e.g., dependence on dimension, smoothness, and data size).

- FBSDE solvers at scale: Numerically stable, scalable solvers for forward–backward SDE formulations in high dimensions, with guarantees on bias, variance, and convergence.

- Likelihood evaluation and calibration: Practical and theoretically sound methods to compute or bound log-likelihoods and calibration metrics for SB-based generative models.

- Sampling acceleration: Error–speed trade-offs for deterministic ODE solvers, partial-noise samplers, and multistep integrators; theory linking step count to fidelity for SB dynamics.
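
To make the discretization question concrete, here is a small sketch of how step size affects endpoint accuracy in the simplest possible bridge: a Brownian reference pinned to a single end point `y`, whose Doob h-transform drift is `(y - x) / (T - t)`. The Euler–Maruyama scheme, the function names, and all parameter values are assumptions made for illustration. In this toy case the endpoint error shrinks roughly like `sigma * sqrt(dt)`.

```python
import math
import random


def simulate_bridge(x0, y, rng, sigma=1.0, T=1.0, n_steps=100):
    """Euler-Maruyama for dX = (y - X) / (T - t) dt + sigma dW, with X_0 = x0.

    Returns the simulated state at time T, which should land near y.
    """
    dt = T / n_steps
    x = x0
    for k in range(n_steps):
        t = k * dt
        drift = (y - x) / (T - t)  # pulls harder toward y as t approaches T
        x += drift * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
    return x


def rms_endpoint_error(n_steps, n_paths=2000, seed=0):
    """Root-mean-square distance between the simulated endpoint and the target."""
    rng = random.Random(seed)
    target = 2.0
    sq_errors = [
        (simulate_bridge(0.0, target, rng, n_steps=n_steps) - target) ** 2
        for _ in range(n_paths)
    ]
    return math.sqrt(sum(sq_errors) / n_paths)


coarse = rms_endpoint_error(n_steps=10)   # dt = 0.1
fine = rms_endpoint_error(n_steps=400)    # dt = 0.0025
```

Comparing `coarse` and `fine` shows the target being hit more accurately as the number of steps grows. Quantitative bounds of this kind for general learned drifts are what the discretization item above asks for.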

Model variants and generalizations

- Mean-field/generalized SB: Existence, uniqueness, and convergent numerical schemes when interactions are mean-field; relation to mean-field games and stability under interacting particle approximations.

- Multi-marginal SB: Complexity scaling with the number of marginals; convergence of alternating projections/dual updates; memory-efficient architectures for time-dependent potentials.

- Unbalanced SB: Identifiability and statistical properties under mass creation/destruction penalization; how to set penalties and diagnose over/under-mass bias.

- Branched SB: Formal treatment of topology changes and branch assignment; learning consistent branch allocations under partial or noisy labels.

- Fractional noise and noise with memory: Existence and computational methods for SB with fractional Brownian motion or other non-Markovian noise; handling memory costs and long-range dependence in training/inference.

Discrete state spaces

- Large-vocabulary scaling: Methods and theoretical guarantees for CTMC-based SB on very large discrete spaces (e.g., language), including sparse generators and low-rank structure.

- Irreversibility and absorbing states: Well-posedness and algorithms when the generator is non-reversible or contains absorbing/transient states; ensuring feasibility of endpoint constraints.

- Time-inhomogeneous CTMCs: Parameterization, identifiability, and training stability for time-dependent generators; discretization schemes and calibration across continuous and discrete time.
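
As background for these questions, the sketch below shows what simulating a time-homogeneous CTMC from its generator looks like, using the standard Gillespie procedure (wait an exponential time, then jump). The two-state generator `Q`, the function name `simulate_ctmc`, and the time horizon are hypothetical choices for illustration; an absorbing state shows up as a row with zero exit rate.

```python
import random


def simulate_ctmc(Q, state, t_end, rng):
    """Simulate a CTMC with generator matrix Q on [0, t_end].

    Q[i][j] (i != j) is the jump rate from i to j; Q[i][i] = -sum of the others.
    Returns the total time spent in each state.
    """
    n = len(Q)
    occupancy = [0.0] * n
    t = 0.0
    while t < t_end:
        rate_out = -Q[state][state]
        if rate_out <= 0.0:
            # Absorbing state: the chain stays here for the rest of the horizon.
            occupancy[state] += t_end - t
            break
        # Exponential holding time, truncated at the end of the horizon.
        hold = min(rng.expovariate(rate_out), t_end - t)
        occupancy[state] += hold
        t += hold
        if t >= t_end:
            break
        # Pick the next state with probability proportional to its jump rate.
        r = rng.random() * rate_out
        acc = 0.0
        nxt = state
        for j in range(n):
            if j == state or Q[state][j] <= 0.0:
                continue
            nxt = j
            acc += Q[state][j]
            if r < acc:
                break
        state = nxt
    return occupancy


# Two-state chain: rate 1 from state 0 to 1, rate 2 from state 1 to 0.
Q = [[-1.0, 1.0],
     [2.0, -2.0]]
t_end = 5000.0
occupancy = simulate_ctmc(Q, state=0, t_end=t_end, rng=random.Random(0))
fraction_in_0 = occupancy[0] / t_end
```

For this chain the long-run fraction of time in state 0 is 2/3, so `fraction_in_0` should land close to that value. A time-inhomogeneous generator would make the rates depend on `t`, which is where the parameterization and discretization questions above arise.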

Robustness, identifiability, and diagnostics

- Support mismatch and outliers: Behavior of SB when target support is disjoint or nearly disjoint from the prior; regularization or reweighting strategies to avoid pathological bridges.

- Distribution shift and OOD generalization: How SB dynamics degrade under covariate shift; diagnostics and adaptation mechanisms for robust generation.

- Gauge freedoms and potential identifiability: Characterizing non-uniqueness of potentials/drifts up to additive/transformational constants and implications for learning and interpretability.

- Hyperparameter selection: Systematic guidelines for choosing time horizon T, diffusion schedule σ(t), and discretization parameters to meet accuracy and compute constraints.

Applications and empirical gaps

- Benchmarking against alternatives: Comprehensive empirical comparisons of SB methods to diffusion/flow models on standard benchmarks (quality, speed, likelihood) with controlled ablations.

- Data translation and invertibility: Conditions ensuring cycle-consistency or invertibility of learned SB maps in domain translation tasks; failure cases and remedies.

- Single-cell dynamics: Identifiability from snapshot measurements, handling confounders/batch effects, and causal interpretation of learned drifts in biological systems.

- Energy-based sampling: Theoretical and empirical comparisons to AIS/SMC; mixing-time analysis and conditions under which SB sampling improves partition-function estimation or sampling efficiency.

Practical Applications

Below is an overview of practical, real-world applications enabled by the paper’s foundations and methods for Schrödinger Bridges (SB). Each item includes sectors, potential tools/workflows/products, and key assumptions/dependencies.

Immediate Applications

These can be prototyped or deployed with current SB tooling (e.g., Sinkhorn, forward–backward SDEs, diffusion SB matching, score/flow matching, discrete SB for CTMCs).

- Data translation and domain alignment (software, healthcare, media)

- What: Map samples from one distribution to another without paired data (e.g., image-to-image style transfer, MRI protocol harmonization, omics batch-effect correction).

- Tools/workflows/products: SB-based domain translation pipelines; stochastic interpolants; simulation-free score/flow matching for training; integration with diffusion frameworks (PyTorch/JAX).

- Assumptions/dependencies: Access to representative marginal distributions in each domain; suitable choice of reference process (often Brownian); stability in high dimensions; adequate compute (GPU).

- Faster and more controllable generative sampling (software, media)

- What: Use SB’s stochastic-control view to reduce steps (NFEs) and improve control in diffusion/flow models (text-to-image, video, audio).

- Tools/workflows/products: “SB Sampler” modules for existing diffusion stacks; adjoint matching for training without explicit target samples; forward–backward SDE controllers for guidance.

- Assumptions/dependencies: Well-specified potentials/score estimates; proper discretization of SDEs; quality of condition signals (text prompts, guidance scores).

- Sampling from unnormalized energy models (chemistry, materials, physics, ML)

- What: Bridge a simple prior to a Boltzmann distribution defined by an energy function to generate molecular conformations, crystal structures, or samples from energy-based models.

- Tools/workflows/products: SB-based Boltzmann samplers; Doob’s h-transform and FBSDE solvers; coupling with differentiable energy evaluators (e.g., ML force fields).

- Assumptions/dependencies: Access to energy gradients; calibration of diffusion coefficients; ergodicity and mixing properties; compute for high-dimensional systems.

- Single-cell state dynamics and perturbation response modeling (healthcare, life sciences)

- What: Infer stochastic population trajectories and responses to interventions from scRNA-seq or multi-omics snapshots.

- Tools/workflows/products: SB pipelines for cell fate inference; branched/unbalanced SB variants for differentiation and proliferation; trajectory simulators for in silico perturbations.

- Assumptions/dependencies: High-quality, sufficiently sampled marginals (e.g., baseline vs perturbed); appropriate noise models; validation against known biology.

- Fair and smooth allocation via entropic OT/SB (policy, urban planning, logistics)

- What: Entropy-regularized couplings for fairer, smoother assignments (e.g., rideshare matching, school placements, logistics routing) that avoid overly sparse or brittle mappings.

- Tools/workflows/products: Sinkhorn-based solvers; EOT-backed dispatch/allocation engines with tunable entropy; multi-objective cost design.

- Assumptions/dependencies: Accurate marginal estimates of supply and demand; interpretable and aligned cost design; computational safeguards for large-scale optimization.

- Stochastic trajectory planning under uncertainty (robotics, autonomy)

- What: Use the equivalence of SB and stochastic optimal control to plan paths between distributional start/goal states with minimal-entropy deviations from reference dynamics.

- Tools/workflows/products: Forward–backward SDE controllers; value/potential function parameterizations; reciprocal/Markov projections for iterative fitting.

- Assumptions/dependencies: Reasonably accurate system dynamics and noise models; real-time constraints; safety certifications for deployed controllers.

- Dataset shift correction and covariate shift adaptation (software, ML ops)

- What: Bridge training-data distributions to deployment distributions to reweight or adapt models.

- Tools/workflows/products: SB-based reweighting and density-ratio workflows; KL-regularized transport for minimal perturbation of training data.

- Assumptions/dependencies: Measurable target marginals; stability of high-dimensional transport; governance to avoid shifting labels inappropriately.

- Discrete SB for networked systems (operations, recommender systems, A/B testing)

- What: CTMC-based SB for routing, load balancing, or state transitions in discrete systems (queues, ads/click flows, workflow state-machines).

- Tools/workflows/products: Discrete SB solvers (generators, RN derivatives on CTMCs); iterative Markovian fitting for discrete chains.

- Assumptions/dependencies: A reasonable CTMC generator or learnable surrogate; data to estimate marginals; scalability to large graphs.

- Privacy-preserving synthetic data generation (policy, software)

- What: Generate data that matches target marginals while staying close to a benign reference process, aiding de-identification and sanitization.

- Tools/workflows/products: SB synth-data toolkits; explicit KL controls to restrict deviations; audit pipelines for disclosure risk.

- Assumptions/dependencies: Differential privacy (DP) add-ons may still be needed; governance for utility–privacy trade-offs; evaluation protocols.

- Efficient likelihood training for generative models (academia, ML research)

- What: Likelihood training of forward–backward SDEs and adjoint matching to avoid trajectory simulation and reduce compute.

- Tools/workflows/products: Training APIs that exploit adjoint matching/simulation-free objectives; integration with autodiff SDE libraries (torchsde, diffrax).

- Assumptions/dependencies: Correctness of continuous-time objectives; numerical stability of backward equations; careful regularization.

Long-Term Applications

These require further research, scaling, validation, or domain-specific maturation.

- Multi-stage planning with multi-marginal SB (supply chain, climate pathways, clinical pathways)

- What: Plan trajectories that must satisfy multiple intermediate constraints (e.g., inventory targets, emissions thresholds, treatment milestones).

- Tools/workflows/products: Multi-marginal SB solvers; hierarchical cost design; spatiotemporal orchestration dashboards.

- Assumptions/dependencies: Reliable intermediate targets; scalable multi-marginal optimization; robustness to model misspecification.

- Unbalanced SB for systems with mass creation/destruction (epidemiology, ecology, manufacturing)

- What: Model birth/death, churn, or attrition in stochastic flows (e.g., disease spread, population dynamics, defect rates).

- Tools/workflows/products: Unbalanced SB frameworks integrated with mechanistic simulators; inference of creation/destruction rates from data.

- Assumptions/dependencies: Identifiability of sources/sinks; domain-calibrated priors; data quality and temporal coverage.

- Branched SB for multimodal futures (developmental biology, robotics, creative generation)

- What: Represent diverging trajectories to multiple modes (cell fates, route options, narrative branches).

- Tools/workflows/products: Branched SB modules for multimodal generative planners; branch-aware regularizers and evaluators.

- Assumptions/dependencies: Annotated modes or learnable branching structures; scalable FBSDE/reciprocal projections for multi-modal splits.

- Fractional SB for long-memory processes (finance, climate, geoscience)

- What: Incorporate fractional Brownian motion to capture long-range dependencies (volatility clustering, hydrological memory, climate anomalies).

- Tools/workflows/products: Fractional SDE solvers; estimation of Hurst parameters; fractional score models.

- Assumptions/dependencies: Reliable long-memory parameter estimation; stable fractional integration; computational efficiency for long horizons.

- Mean-field/Generalized SB for interacting agents (traffic, markets, energy grids)

- What: Model collective dynamics with mean-field interactions for better control/prediction (congestion, market microstructure, grid stability).

- Tools/workflows/products: Mean-field SB controllers; scalable simulators with learned interaction kernels.

- Assumptions/dependencies: Partial observability challenges; identifiability of interactions; coordination with physical/market constraints.

- Regulatory and policy design as minimal-entropy interventions (public policy, economics)

- What: Design taxes, subsidies, or nudges as minimal-entropy deviations from baseline dynamics under distributional targets (e.g., emissions distributions, equity targets).

- Tools/workflows/products: Policy SB planners; counterfactual simulators; fairness- and welfare-aware cost functions.

- Assumptions/dependencies: Agreement on targets and cost metrics; causality and confounding controls; transparency and auditability.

- Real-time embedded SB controllers (edge robotics, AR/VR, IoT)

- What: On-device SB sampling/controls for latency-sensitive tasks (navigation, rendering).

- Tools/workflows/products: Hardware-accelerated SDE integrators; distilled SB controllers; surrogate models for potentials.

- Assumptions/dependencies: Efficient model compression/distillation; rigorous latency and safety guarantees.

- SB-enhanced recommender and reasoning systems on graphs (software, knowledge graphs)

- What: Discrete SB for controlled transitions over large graphs (user/item state evolution, curriculum sequencing, knowledge navigation).

- Tools/workflows/products: CTMC-SB layers integrated in GNNs; multi-marginal constraints for sequencing; evaluation suites.

- Assumptions/dependencies: Scalable generators for massive graphs; avoidance of bias amplification; interpretability requirements.

- Scientific discovery workflows coupling SB with simulators (materials, drug discovery)

- What: Plug SB into high-fidelity simulators to propose hypotheses conforming to terminal constraints (properties, yields) while minimally disrupting plausible dynamics.

- Tools/workflows/products: SB–simulator co-design (surrogate models, active learning loops); uncertainty calibration and Bayesian posteriors via path-space KLs.

- Assumptions/dependencies: Differentiable or well-wrapped simulators; tractable uncertainty quantification; compute budgets.

- Standards and governance for SB-based generative systems (policy, compliance)

- What: Define auditing protocols leveraging SB’s KL-based interpretability (how far a system departs from reference dynamics) for transparency and risk management.

- Tools/workflows/products: Conformance dashboards (path-space KLs, data processing checks); red-team toolkits for distributional shift stress tests.

- Assumptions/dependencies: Consensus metrics for distance/deviation; access to reference and operational data; legal frameworks.

Cross-cutting assumptions and dependencies

- Availability and quality of marginal distributions (or endpoint couplings); correct specification of reference processes (drift/noise).

- Numerical stability and scalability of Sinkhorn (static) and SDE solvers/FBSDE (dynamic), especially in high dimensions or long horizons.

- Compute resources (GPU/TPU), and robust autodiff for SDEs; integration with existing ML stacks (PyTorch/JAX).

- Domain-specific validation (e.g., biological ground-truth for cell trajectories, regulatory approval for healthcare/finance/robotics).

- Governance for fairness, privacy, and safety; when needed, augmentation with privacy mechanisms (e.g., DP) and causal safeguards.

Glossary

- Absolute continuity: A relationship between measures where one measure assigns zero to any set that another measure assigns zero to; foundational for defining Radon–Nikodym derivatives. "where means that is absolutely continuous with respect to "

- Adjoint matching: A training approach that learns optimal bridge dynamics without needing target samples by matching adjoint dynamics. "presents adjoint matching, which learns the optimal SB while avoiding explicit sampling from target distributions."

- Bellman’s Principle of Optimality: A core concept in dynamic programming stating that, whatever decisions led to the current state, the remaining decisions of an optimal policy must themselves be optimal from that state onward. "including Bellman's Principle of Optimality given a terminal constraint"

- Boltzmann distributions: Probability distributions proportional to the exponential of negative energy; common in statistical physics and energy-based models. "applies SB to sampling Boltzmann distributions, showing how the SB frameworks can generate from unnormalized energy distributions without explicit samples."

- Brownian motion: A continuous-time stochastic process with independent Gaussian increments, modeling random diffusion. "pure Brownian motion path measure with SDE "

- Chain rule for KL divergence: A decomposition of KL divergence between joint distributions into a sum of marginal and conditional divergences. "We now establish the chain rule for KL divergence, which decomposes the KL divergence of a joint measure into the divergence between its marginals and the expected conditional divergence."

- Continuous-time Markov chain (CTMC): A Markov process on a discrete state space evolving in continuous time. "introduces continuous-time Markov chains (CTMCs) as discrete analogues of stochastic processes."

- Control drift: The additional drift term applied to a reference SDE to steer the process toward constraints or objectives. "the control drift refers to added term to the reference drift which is scaled by the diffusion coefficient, generally denoted "

- Data processing inequality: States that applying the same stochastic mapping to two distributions cannot increase their divergence. "the data processing inequality, which formalizes the idea that applying the same stochastic transformation to two probability measures cannot increase their divergence."

- Doob’s h-transform: A technique to condition Markov processes by reweighting with a positive function h, yielding a new process with desired endpoint behavior. "presents Doob's h-transform, which constructs conditioned stochastic processes by tilting the reference process using h-function."

- Endpoint law: The joint distribution of initial and terminal states of a path measure or coupling. "endpoint law or coupling distribution"

- Entropic optimal transport (EOT): An entropy-regularized version of optimal transport that penalizes deviation from a reference coupling to ensure smooth, unique solutions. "introduces the entropic optimal transport (EOT) problem, which leverages entropy as a method of regularizing the OMT problem with a pre-defined reference coupling, which yields a unique solution."

- Entropy-regularized transport: Transport formulations augmented with entropy terms to promote smoothness and uniqueness. "path-space optimization and entropy-regularized transport"

- Feynman–Kac equation: A backward-time PDE linking expectations over SDEs to solutions of parabolic PDEs with potentials. "and the Feynman-Kac equation, governing how functions evaluated at the end of a stochastic process evolve backward in time."

- Fokker–Planck equation: A forward-time PDE describing the time evolution of probability densities under SDE dynamics. "derives the Fokker-Planck equation, governing how the probability density over the stochastic paths generated from an SDE evolves forward in time"

- Forward-backward stochastic differential equations (FBSDEs): Coupled SDE systems that jointly characterize forward state evolution and backward adjoint (potential) processes. "introduces forward-backward stochastic differential equations (FBSDEs), providing a coupled characterization of SB with respect to the time-dependent Schrödinger potentials."

- Fractional Brownian motion: A generalization of Brownian motion with long-range temporal dependence controlled by the Hurst parameter. "studies fractional SB problems, incorporating long-range temporal dependencies through fractional Brownian motion."

- Girsanov’s theorem: A result enabling changes of measure for SDEs by adjusting drift terms, central to defining relative entropy on path space. "derives Girsanov's theorem from first principles, which allows us to define changes in measure and KL divergences on path space."

- Iterative Markovian Fitting: A procedure that iteratively fits Markov drifts to match desired couplings or bridges. "parameterizes the Iterative Markovian Fitting procedure with a learned Markov drift."

- Kantorovich’s OMT problem: The relaxation of Monge’s problem that optimizes over couplings (joint distributions) rather than deterministic maps. "Kantorovich's OMT problem aims to find the optimal coupling "

- Kullback–Leibler (KL) divergence: A measure of relative entropy quantifying how one probability distribution diverges from another. "Illustration of Relative Entropy or Kullback-Leibler (KL) Divergence."

- Markov kernel: A mapping from states to probability measures, defining stochastic transformations between spaces. "a Markov kernel "

- Markov projection: The entropy-minimizing projection of a reciprocal measure onto the set of Markov measures. "Markov projection of a reciprocal measure"

- Markovian and reciprocal projections: Entropy-minimizing projections in path space onto Markov or reciprocal classes, yielding approximations to optimal bridges. "formalizes Markovian and reciprocal projections, which perform entropy-minimizing projections in path space that converge to the optimal SB measure."

- Mean-field interactions: Interactions where each particle/process is influenced by aggregate statistics (e.g., density), common in many-body systems. "generalizes dynamic SB to model mean-field interactions."

- Monge’s OMT problem: The original deterministic formulation of optimal transport seeking a cost-minimizing map between distributions. "Monge's OMT problem aims to find the optimal transport map that minimizes:"

- Optimal mass transport (OMT): The problem of transporting one distribution to another at minimal cost; foundational to OT theory. "optimal mass transport (OMT) problem"

- Path measure: A probability measure over trajectories (paths) in continuous time and space. "path measure in "

- Path-space optimization: Optimization over distributions of entire trajectories rather than just endpoint distributions. "path-space optimization"

- Pushforward (measure): The distribution obtained by mapping a random variable through a function; in OT, used to express transported marginals. "the pushforward of (i.e., )"

- Radon–Nikodym derivative: The density of one measure with respect to another when absolute continuity holds; central to defining KL. "Radon-Nikodym derivatives between path measures"

- Reciprocal class: The set of path measures formed as mixtures of the reference process's bridges (paths conditioned on both endpoints). "reciprocal class of containing all mixtures of bridges"

- Schrödinger bridge (SB): The stochastic process that connects given marginals while minimizing relative entropy (KL divergence) with respect to a reference process. "Schrödinger bridges provide a unifying principle underlying these approaches"

- Schrödinger potentials: Dual potentials (forward and backward) whose exponentials reweight a reference process to realize the optimal bridge. "a pair of Schrödinger potentials that uniquely yield a clean form of the optimal solution."

- Score-based generative modeling: A framework that learns gradients of log-densities (scores) to sample from complex distributions via SDEs/ODEs. "provides a primer on score-based generative modeling, which learns gradients of log-densities."

- Sinkhorn’s algorithm: An iterative scaling method to compute entropy-regularized OT/SB by alternating updates of dual potentials. "known as Sinkhorn's algorithm, which alternates between optimizing the dual Schrödinger potentials."

- Stochastic differential equation (SDE): A differential equation driven by stochastic processes (e.g., Brownian motion) modeling random dynamics. "stochastic differential equations (SDEs)"

- Stochastic interpolants: Constructions that interpolate between distributions via deterministic paths plus noise to form bridges. "introduces stochastic interpolants, providing a practical way to construct bridges between distributions as deterministic interpolants with Gaussian noise."

- Stochastic optimal control (SOC): The theory of optimizing control policies for stochastic dynamics to minimize expected costs. "introduces the general framework of stochastic optimal control"

- Time-reversal formula (of SDEs): The relationship describing backward-in-time dynamics consistent with forward SDEs, crucial for bridge sampling. "derives the time-reversal formula of SDEs, which is fundamental to backward dynamics in SB."

- Unbalanced Schrödinger bridge problem: A variant of SB allowing creation or destruction of probability mass along paths. "develops the unbalanced SB problem, allowing mass creation and destruction along the stochastic trajectories."

- Value function: The optimal expected cost-to-go in control problems, characterizing optimal control policies. "deriving the value function that defines the optimal control."
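
To make the Sinkhorn's algorithm entry above more concrete, here is a minimal sketch of the alternating scaling updates for a small discrete problem. It is an illustration under stated assumptions, not the paper's implementation: the function name `sinkhorn`, the regularization strength `eps`, the iteration count, and the toy marginals and cost matrix are all hypothetical choices. In this static, discrete setting the scaling vectors `u` and `v` play the role of exponentiated dual potentials.

```python
import math

def sinkhorn(mu, nu, cost, eps=0.1, n_iters=500):
    """Entropy-regularized coupling between discrete marginals mu and nu.

    Hypothetical helper for illustration; eps and n_iters are assumed values.
    """
    n, m = len(mu), len(nu)
    # Gibbs kernel of the reference coupling: K[i][j] = exp(-cost[i][j] / eps).
    K = [[math.exp(-cost[i][j] / eps) for j in range(m)] for i in range(n)]
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        # Rescale rows so the coupling's first marginal matches mu.
        u = [mu[i] / sum(K[i][j] * v[j] for j in range(m)) for i in range(n)]
        # Rescale columns so the coupling's second marginal matches nu.
        v = [nu[j] / sum(K[i][j] * u[i] for i in range(n)) for j in range(m)]
    # The coupling is the kernel reweighted by both scaling vectors.
    return [[u[i] * K[i][j] * v[j] for j in range(m)] for i in range(n)]

# Toy example (assumed data): two source points, two target points.
mu = [0.5, 0.5]
nu = [0.25, 0.75]
cost = [[0.0, 1.0], [1.0, 0.0]]
plan = sinkhorn(mu, nu, cost, eps=0.5)
row_sums = [sum(row) for row in plan]
col_sums = [sum(plan[i][j] for i in range(2)) for j in range(2)]
```

After enough iterations, `row_sums` should agree with `mu` and `col_sums` with `nu` up to numerical tolerance, which is the marginal-matching property the glossary entry describes. For small `eps` the kernel entries can underflow, so a practical version would usually carry out the updates in the log domain.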
