- The paper introduces a method that computes per-instance noise schedules based on the spectral properties of each input image, improving generative fidelity.

- It employs a mixed schedule formulation that blends frequency-focused and power-focused strategies so that the noise levels track both fine and coarse image structure.

- Empirical results show that the approach outperforms traditional fixed schedules, reducing denoising steps by up to 2–3× while enhancing sample quality.

Spectrally-Guided Diffusion Noise Schedules: A Principled Approach to Instance-Adaptive Pixel Diffusion

Introduction

The paper "Spectrally-Guided Diffusion Noise Schedules" (2603.19222) proposes a method for constructing per-instance noise schedules for pixel diffusion models, derived from the spectral properties of each input image. Rather than relying on the canonical globally fixed, handcrafted schedules (e.g., linear, cosine, log-normal), the authors derive theoretically motivated, spectrally conditioned schedules that align the forward diffusion process with the frequency composition of each image. This instance-adaptive approach removes the need for heuristic per-resolution tuning and improves generative fidelity, particularly when the number of denoising (sampling) steps is reduced.

Theoretical Framework and Methodology

Denoising diffusion probabilistic models (DDPMs) are built around a forward process that sequentially adds Gaussian noise to data, governed by a schedule that sets the noise level at each timestep; the reverse process is trained to generate samples by denoising step by step. The noise schedule, commonly expressed as the log signal-to-noise ratio λ(t) = log(α_t²/σ_t²), strongly influences both sample quality and sampling efficiency. This is especially true in pixel-space (non-latent) models, where no autoencoder bottleneck or learned tokenizer obscures the signal's inherent spectral structure.
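For reference, the fixed cosine baseline can be written directly in logSNR form. The sketch below is a minimal NumPy illustration using the standard variance-preserving parameterization α² = sigmoid(λ), σ² = sigmoid(−λ). The clipping range [−15, 15], the function names, and the resolution shift (a common heuristic, used for example in simple diffusion, that adds 2·log(64/d) to the logSNR at resolution d) are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def cosine_logsnr(t, logsnr_min=-15.0, logsnr_max=15.0):
    """Cosine schedule in logSNR form, lambda(t) = -2 log tan(angle(t)),
    with angle(t) chosen so that lambda(0) = logsnr_max and lambda(1) = logsnr_min."""
    a_lo = np.arctan(np.exp(-0.5 * logsnr_max))
    a_hi = np.arctan(np.exp(-0.5 * logsnr_min))
    return -2.0 * np.log(np.tan(a_lo + np.asarray(t) * (a_hi - a_lo)))

def shifted_cosine_logsnr(t, resolution, base_resolution=64):
    """Heuristic resolution shift (assumed): add 2*log(base/resolution) to the logSNR."""
    return cosine_logsnr(t) + 2.0 * np.log(base_resolution / resolution)

def forward_noise(x, logsnr, rng):
    """Variance-preserving forward process z = alpha*x + sigma*eps,
    with alpha^2 = sigmoid(logsnr) and sigma^2 = sigmoid(-logsnr)."""
    alpha = np.sqrt(1.0 / (1.0 + np.exp(-logsnr)))
    sigma = np.sqrt(1.0 / (1.0 + np.exp(logsnr)))
    eps = rng.standard_normal(np.shape(x))
    return alpha * x + sigma * eps, alpha, sigma
```

The shift is a fixed, hand-chosen function of resolution that ignores the content of any particular image; this is the kind of per-resolution tuning that spectrally-guided schedules are meant to replace.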

Crucially, the authors observe that the spectral power distribution of natural images—captured, for instance, by the radially-averaged power spectral density (RAPSD)—differs significantly across images and is resolution dependent. This motivates three major methodological advances:

- Per-instance schedule construction: For each image during training, compute its RAPSD, parameterize it (as a power law), and use it to derive tight theoretical lower and upper bounds on noise levels that avoid under- or over-noising at any denoising step.

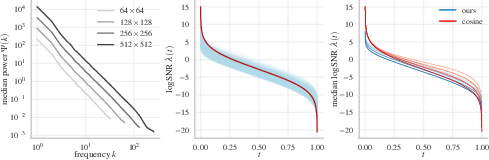

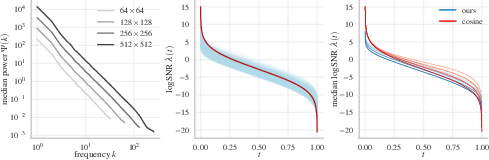

- Mixed schedule formulation: Construct schedules that interpolate between frequency-focused (uniform coverage of frequencies) and power-focused (coverage proportional to signal power density) strategies, thereby capturing both fine and coarse image structure (Figure 1).

- Conditional schedule prediction for inference: Because ground-truth spectra are unavailable at generation time, a GMM-based spectrum sampler (conditioned on the class label or prompt) draws a spectrum, from which the schedule is derived; generation is then conditioned on that schedule. The denoiser is FiLM-conditioned on both the schedule parameters and the corresponding logSNR interval.

Figure 1: Median RAPSD curves for ImageNet at varying resolutions and the corresponding cosine vs. spectrally-adaptive noise schedules, demonstrating consistent adaptation without heuristic parameter changes.
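The per-instance pipeline in the list above can be sketched in NumPy. Computing the RAPSD and fitting a power law P(f) ≈ A·f^(−β) in log-log space follow the description directly; the schedule part is a hypothetical stand-in, since the paper's exact bounds and mixing rule are not reproduced here. It assumes that at logSNR λ each Fourier coefficient has SNR e^λ·P(f), so frequency f crosses SNR = 1 at λ*(f) = β·log f − log A, which gives the interval [λ*(f_min), λ*(f_max)]. The frequency-focused variant sweeps f linearly from f_max to f_min, the power-focused variant spends time on each band in proportion to its power, and the two are blended by linear interpolation in logSNR with weight w. All function names are illustrative.

```python
import numpy as np

def rapsd(img):
    """Radially averaged power spectral density of a square grayscale image.
    Returns (frequencies in cycles/pixel, mean power per radial bin), DC excluded."""
    n = img.shape[0]
    assert img.shape == (n, n), "sketch assumes a square image"
    power = np.abs(np.fft.fft2(img - img.mean(), norm="ortho")) ** 2
    k = np.fft.fftfreq(n) * n  # integer wavenumbers
    radius = np.rint(np.hypot(k[:, None], k[None, :])).astype(int)
    sums = np.bincount(radius.ravel(), weights=power.ravel())
    counts = np.bincount(radius.ravel())
    bins = np.arange(1, n // 2 + 1)  # skip DC, stop at Nyquist
    return bins / n, sums[bins] / counts[bins]

def fit_power_law(freqs, power):
    """Least-squares fit of log P = log A - beta * log f; returns (log_a, beta)."""
    slope, intercept = np.polyfit(np.log(freqs), np.log(power), 1)
    return intercept, -slope

def crossing_logsnr(f, log_a, beta):
    """Hypothetical: logSNR at which frequency f reaches SNR = 1, i.e. -log P(f)."""
    return beta * np.log(f) - log_a

def mixed_schedule(t, log_a, beta, f_min, f_max, w, grid=2048):
    """Hypothetical mixed schedule lambda(t), t in [0, 1] (t = 0 is least noisy).
    w = 0 gives the frequency-focused variant, w = 1 the power-focused one."""
    assert beta > 0 and 0.0 <= w <= 1.0
    t = np.asarray(t, dtype=float)
    # Frequency-focused: destroy frequencies from f_max down to f_min at a uniform rate in f.
    f_freq = f_max + t * (f_min - f_max)
    # Power-focused: time spent on a band is proportional to its power P(f) = A f^-beta.
    f_grid = np.linspace(f_max, f_min, grid)
    p = np.exp(log_a - beta * np.log(f_grid))
    seg = 0.5 * (p[1:] + p[:-1]) * (f_max - f_min) / (grid - 1)
    t_grid = np.concatenate([[0.0], np.cumsum(seg)])
    t_grid /= t_grid[-1]  # cumulative power fraction, 0 at f_max and 1 at f_min
    f_pow = np.interp(t, t_grid, f_grid)
    lam_freq = crossing_logsnr(f_freq, log_a, beta)
    lam_pow = crossing_logsnr(f_pow, log_a, beta)
    return (1.0 - w) * lam_freq + w * lam_pow
```

With w = 0 most steps fall on the wide high-frequency range; with w = 1 most steps go to the low frequencies that carry most of the power. Intermediate w trades the two off, mirroring the fine-versus-coarse motivation for the mixed formulation.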

Empirical Results

Experiments on class-conditional generation across ImageNet resolutions (128, 256, 512) show that spectrally-guided schedules outperform state-of-the-art pixel diffusion models (notably SiD2, PixelFlow, PixelDiT, and PixNerd) on standard metrics (FID, sFID, Inception Score, precision, and recall) while reducing the number of function evaluations (NFEs) by up to 2–3×. The benefit is largest in the low-NFE regime, which is the critical setting for practical deployment.

Furthermore, the gap between spectrally-guided and baseline cosine/sigmoid schedules widens as the step count decreases, indicating higher sample efficiency. At very high NFE counts, however, FID degrades slightly, suggesting that the schedule's effectiveness has a resolution-dependent optimum.

(Figure 2)

Figure 2: FID comparison across number of denoising steps: spectrally-guided schedules deliver superior sample quality, especially as steps decrease.

The approach surpasses median- or dataset-level schedule baselines, and ablations indicate that both the per-image spectrum conditioning and the schedule's closed-form parameterization contribute to the performance gains. Conditioning the network on min/max logSNR further improves learning stability and guidance effectiveness.
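The conditioning described above (schedule parameters plus the logSNR interval, fed to the denoiser through FiLM) can be sketched as follows. This is a minimal NumPy illustration rather than the paper's architecture: the layout of the conditioning vector (log A, β, λ_min, λ_max, and the current λ rescaled to [0, 1] within the interval), the zero initialization, and all names are assumptions.

```python
import numpy as np

def schedule_condition(log_a, beta, logsnr_min, logsnr_max, logsnr_t):
    """Hypothetical per-sample conditioning vector: spectrum parameters,
    the logSNR interval, and the current logSNR's relative position in it."""
    rel = (logsnr_t - logsnr_min) / (logsnr_max - logsnr_min)
    return np.stack([log_a, beta, logsnr_min, logsnr_max, rel], axis=-1)

class FiLM:
    """Feature-wise linear modulation: h -> (1 + scale(c)) * h + shift(c)."""

    def __init__(self, cond_dim, channels, rng, init_std=0.0):
        # init_std = 0 makes the layer start out as the identity map.
        self.weight = init_std * rng.standard_normal((cond_dim, 2 * channels))
        self.bias = np.zeros(2 * channels)
        self.channels = channels

    def __call__(self, h, cond):
        # h: (batch, channels, height, width); cond: (batch, cond_dim)
        params = cond @ self.weight + self.bias
        scale = params[:, : self.channels, None, None]
        shift = params[:, self.channels :, None, None]
        return (1.0 + scale) * h + shift
```

Starting from the identity keeps an untrained network behaving like an unconditioned one; this is a common FiLM design choice, not something the source specifies.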

Qualitatively, with few sampling steps the spectrally-guided models produce less over-smoothing, fewer artifacts, and better-preserved fine detail than SiD2, consistent with the premise that spectrum-aware noising improves sample fidelity.

(Figure 3)

Figure 3: ImageNet 256×256 generations: spectrally-guided samples (bottom) preserve details and structure at low step counts versus SiD2 (top).

Spectrum Manipulation and Control

An additional byproduct of the instance-specific RAPSD-parameterized schedules is fine-grained control over the spectral content of generated samples. By manipulating the sampled spectrum (e.g., scaling the power law exponent, or the power at specific frequencies), the generative process can be steered to produce outputs with more (or less) high-frequency detail, effectively modulating texture and visual sharpness.

(Figure 4)

Figure 4: Varying the sampled spectral power at high frequencies modulates image detail and texture continuously within the same trained model.
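The two ingredients behind this control, sampling a spectrum at generation time and editing it before deriving the schedule, can be sketched in NumPy. Assumptions: each spectrum is summarized by the power-law pair (log A, β); the class-conditional GMM is already fitted and passed in as (weights, means, covariances); and "scaling the exponent" is implemented as β → s·β with the power held fixed at a low pivot frequency, so coarse structure is unchanged. Names are hypothetical, and the paper's sampler may parameterize spectra differently.

```python
import numpy as np

def sample_spectrum_params(gmm, rng, n=1):
    """Draw n (log_a, beta) pairs from a Gaussian mixture given as (weights, means, covs)."""
    weights, means, covs = gmm
    comps = rng.choice(len(weights), size=n, p=weights)
    return np.stack([rng.multivariate_normal(means[c], covs[c]) for c in comps])

def rescale_exponent(log_a, beta, s, f_pivot):
    """Scale the power-law exponent by s while keeping P(f_pivot) unchanged.
    s < 1 flattens the spectrum (more high-frequency power, more detail);
    s > 1 steepens it (less high-frequency power, smoother output)."""
    new_beta = s * beta
    # log P(f) = log_a - beta * log f; choose new_log_a so log P(f_pivot) is preserved.
    new_log_a = log_a + (new_beta - beta) * np.log(f_pivot)
    return new_log_a, new_beta
```

In this sketch, the edited pair is fed to the same schedule construction and conditioning as an unedited sample, so no retraining is involved, consistent with the single-model control described above.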

Implications and Theoretical Significance

The paper's approach introduces a principled connection between the spectral structure of visual data and the signal destruction imposed by the forward diffusion process. By replacing global, heuristic schedule design with per-instance optimality (in the sense of how much signal is destroyed and retained at each frequency), the method removes a longstanding source of hyperparameter sensitivity in pixel diffusion: the hand-tuned noise schedule. The ability to tune or conditionally sample spectral properties also has direct implications for controlled generation, complementing latent or token-based schemes with spectrum-level control.

The findings further suggest that the step-efficiency advantage traditionally observed for LDMs over pixel diffusion may partly be an artifact of misaligned schedule priors, and that adapting the schedule narrows this efficiency and quality gap for pixel-space models. The method is, in principle, agnostic to the pixel/latent distinction, and extensions to multi-stage or VQ-encoded models remain to be explored.

Limitations and Future Work

Spectrally-guided schedules, while successful in reducing compute for pixel diffusion, do not close the gap with state-of-the-art LDMs and distilled models at extreme low-step settings. Some design choices (loss bias, guidance intervals) still require empirical tuning. The pipeline also presumes that the spectral distribution is informative of image complexity, a property more robustly exhibited by natural images than by specialized domains, and one that may vary for text-guided or out-of-domain data. Extensions toward spectrum scheduling in latent spaces or for multimodal diffusion are promising directions.

Conclusion

This work demonstrates that spectral analysis can ground an efficient, principled rethinking of the noise schedule in denoising diffusion models. Instance-adaptive, spectrum-matched schedules deliver clear empirical gains in both generative quality and inference speed for pixel diffusion models, and enable new spectrum-level controls during generation. The theoretical framework and empirical findings invite future work on extending spectral scheduling to more general generative settings and integrating spectrum guidance with alternative acceleration and distillation techniques.