The color code, the surface code, and the transversal CNOT: NP-hardness of minimum-weight decoding

Abstract: The decoding problem is a ubiquitous algorithmic task in fault-tolerant quantum computing, and solving it efficiently is essential for scalable quantum computing. Here, we prove that minimum-weight decoding is NP-hard in three quintessential settings: (i) the color code with Pauli $Z$ errors, (ii) the surface code with Pauli $X$, $Y$ and $Z$ errors, and (iii) the surface code with a transversal CNOT gate, Pauli $Z$ and measurement bit-flip errors. Our results show that computational intractability already arises in basic and practically relevant decoding problems central to both quantum memories and logical circuit implementations, highlighting a sharp computational complexity separation between minimum-weight decoding and its approximate realizations.

Explain it Like I'm 14

Overview

This paper studies a core problem in quantum error correction: decoding. Decoding means looking at the “warning lights” from a quantum code (called a syndrome) and figuring out the smallest set of errors (an error pattern) that could have caused those warnings, so that they can be fixed. The authors prove that, in three very common and important settings, finding the absolute smallest such set is NP-hard. In simple terms, NP-hard means that, unless P = NP (which most computer scientists believe is false), no algorithm can solve every instance quickly: in the worst case, the time needed grows explosively as the problem gets large, even on the best computers.

The paper’s main questions

- Is exact minimum-weight decoding (finding the fewest total errors consistent with the warnings) computationally easy or hard for popular quantum codes and a key logical gate?

- Does this hardness show up even in basic, practical situations that people actually use?

They focus on three settings:

- The color code with only Z-type errors.

- The surface code with full Pauli errors (X, Y, and Z).

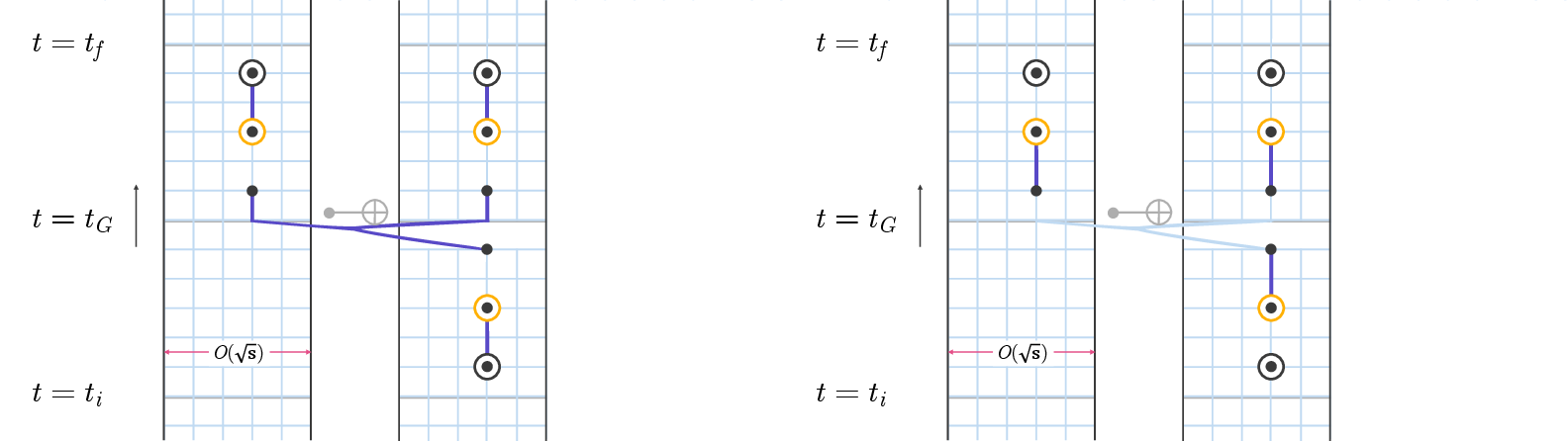

- Two surface-code patches running a transversal CNOT gate, with Z errors and “flipped” measurement readings over time.

How they studied it (methods in plain language)

Think of decoding like a puzzle:

- You see a pattern of blinking lights (the syndrome).

- You must place the fewest “patches” (errors) so the pattern makes sense and the lights turn off.

- The “fewest patches” rule is called minimum-weight decoding.
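To make “minimum-weight decoding” concrete: in the code-capacity setting with a single error type (for example Z errors on the color code), decoding asks for a lowest-weight binary vector e with He = s (mod 2), where H is the parity-check matrix and s is the syndrome. The brute-force sketch below (plain Python with NumPy; the function name is our own) checks candidates in order of increasing weight. It only works for toy codes, since the number of candidates grows exponentially; the paper's result suggests that, unless P = NP, no polynomial-time shortcut exists in general.

```python
from itertools import combinations

import numpy as np


def min_weight_decode(H, syndrome):
    """Return a lowest-weight binary error e with H @ e == syndrome (mod 2).

    Errors are enumerated in order of increasing Hamming weight, so the first
    consistent one found has minimum weight. The number of candidates grows
    exponentially with the number of qubits, so this is only for toy sizes.
    """
    H = np.asarray(H, dtype=int)
    s = np.asarray(syndrome, dtype=int) % 2
    n = H.shape[1]
    for weight in range(n + 1):
        for support in combinations(range(n), weight):
            e = np.zeros(n, dtype=int)
            for i in support:
                e[i] = 1
            if np.array_equal(H @ e % 2, s):
                return e
    return None  # no error reproduces this syndrome


# Toy example: 3-bit repetition code with checks on bits (0, 1) and (1, 2).
H = [[1, 1, 0], [0, 1, 1]]
print(min_weight_decode(H, [1, 1]))  # flips only the middle bit
```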

To prove this puzzle is NP-hard, the authors use a classic technique called a reduction:

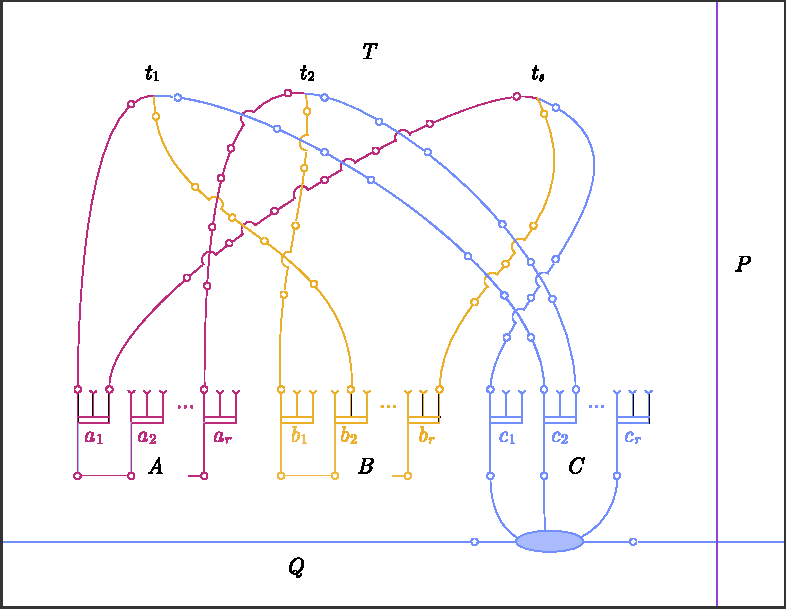

- Start with a known hard puzzle called 3-dimensional matching (3DM). In 3DM, you try to pick triples from a list so that each item is used exactly once—like forming perfect teams of three from three groups without overlap.

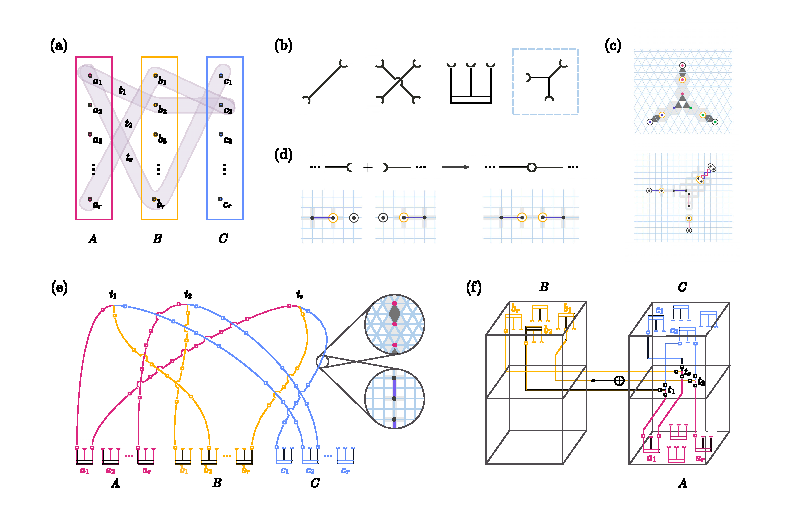

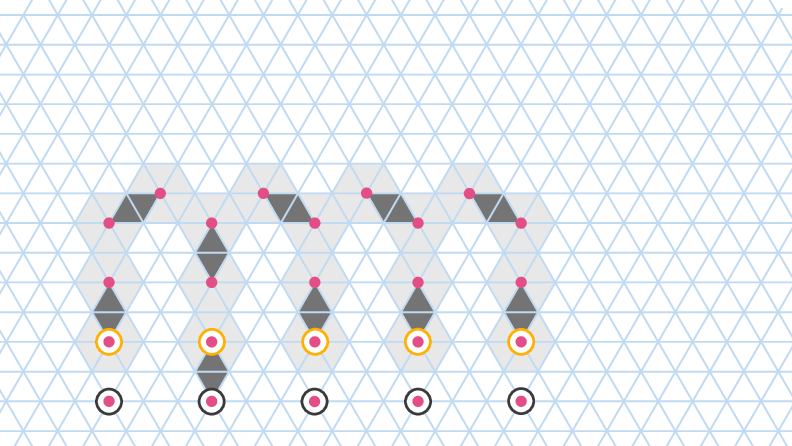

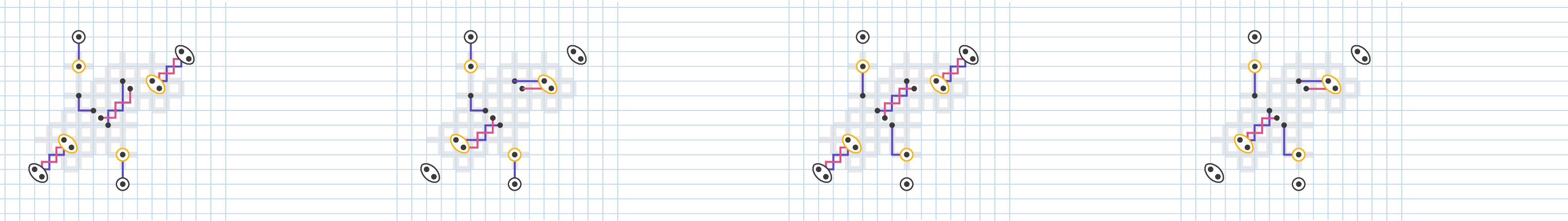

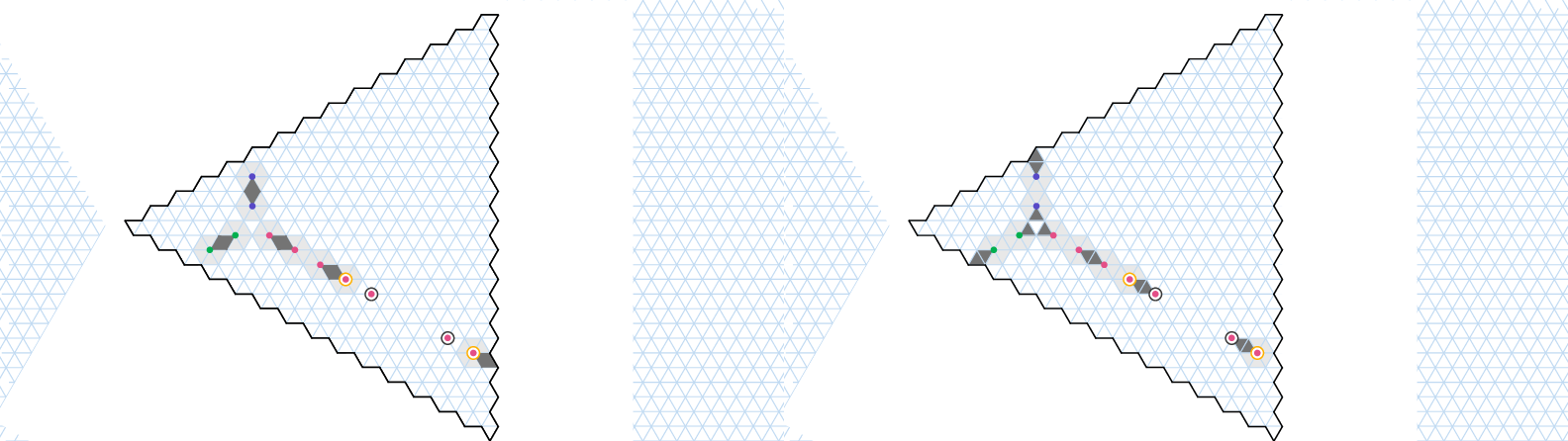

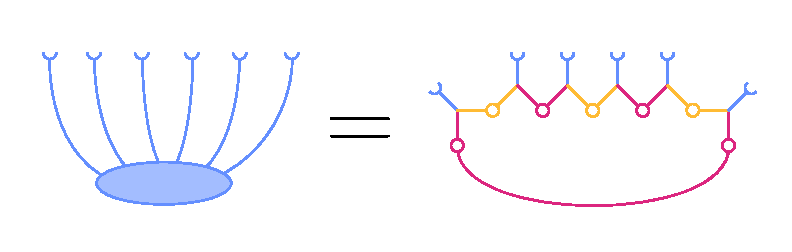

- They cleverly transform any 3DM puzzle into a decoding puzzle for a quantum code. This is done by building small, reusable structures called gadgets (like Lego pieces). Each gadget uses carefully placed warning lights (defects) so that solving the decoding puzzle exactly is the same as solving the 3DM puzzle.

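- If you could always decode exactly and quickly, you could solve 3DM quickly too. Because 3DM is NP-complete, that would mean every problem in NP could be solved quickly, which almost everyone believes is impossible. So exact decoding must also be hard.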
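For readers who want to see 3DM itself, here is a minimal backtracking solver, assuming the triples are drawn from A × B × C with |A| = |B| = |C|. It illustrates the puzzle being reduced from, not the paper's reduction, and the function name is our own; its worst-case running time is exponential, as one would expect for an NP-complete problem.

```python
def find_perfect_3dm(A, B, C, triples):
    """Return triples covering every element of A, B, C exactly once
    (a perfect 3-dimensional matching), or None if none exists.

    Plain backtracking: branch on the first still-uncovered element of A.
    Assumes every triple lies in A x B x C.
    """
    if not len(A) == len(B) == len(C):
        return None
    triples = list(triples)
    chosen = []

    def search(remaining_a, used_b, used_c):
        if not remaining_a:
            return True  # all of A covered with distinct b's and c's, so B and C are too
        a = remaining_a[0]
        for t in triples:
            _, b, c = t
            if t[0] == a and b not in used_b and c not in used_c:
                chosen.append(t)
                if search(remaining_a[1:], used_b | {b}, used_c | {c}):
                    return True
                chosen.pop()  # undo and try the next triple containing a
        return False

    return list(chosen) if search(sorted(A), frozenset(), frozenset()) else None


A, B, C = {"a1", "a2"}, {"b1", "b2"}, {"c1", "c2"}
triples = [("a1", "b1", "c1"), ("a1", "b2", "c2"), ("a2", "b2", "c2")]
print(find_perfect_3dm(A, B, C, triples))  # [('a1', 'b1', 'c1'), ('a2', 'b2', 'c2')]
```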

What are these gadgets?

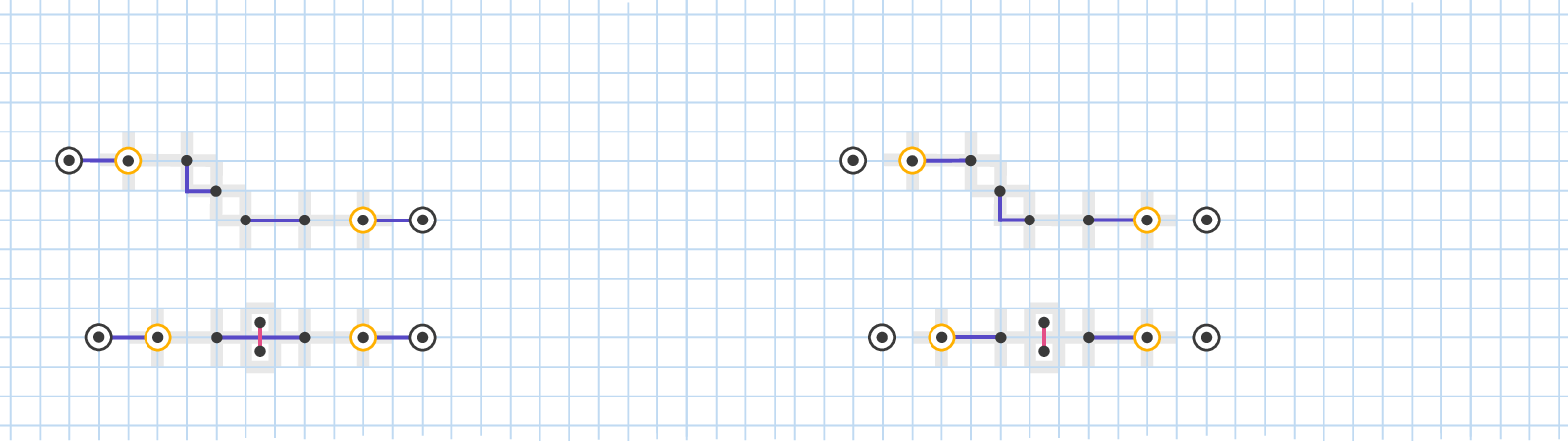

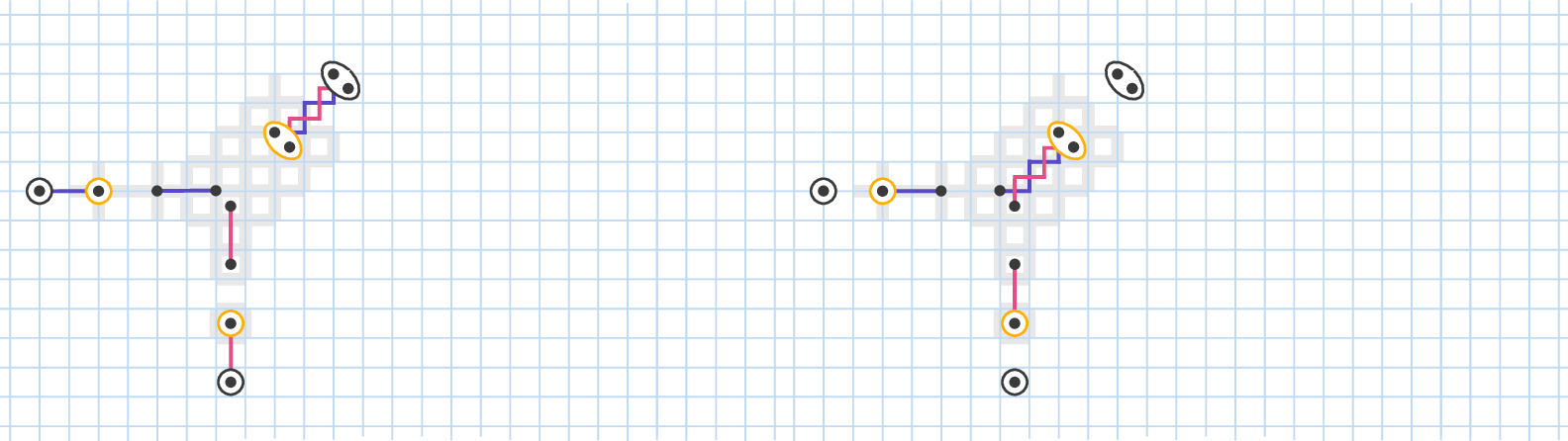

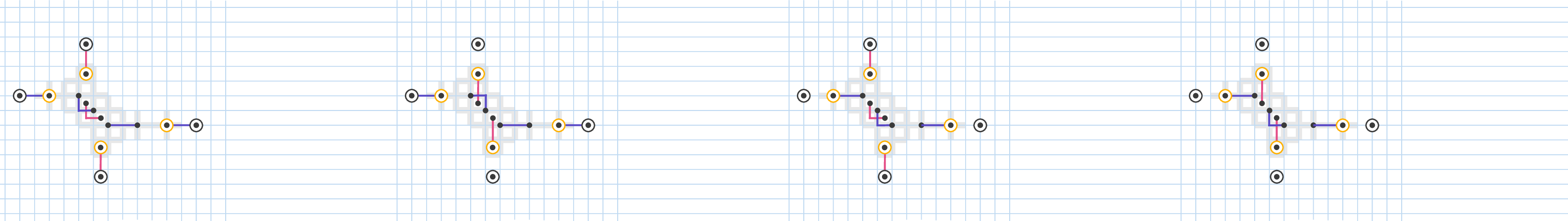

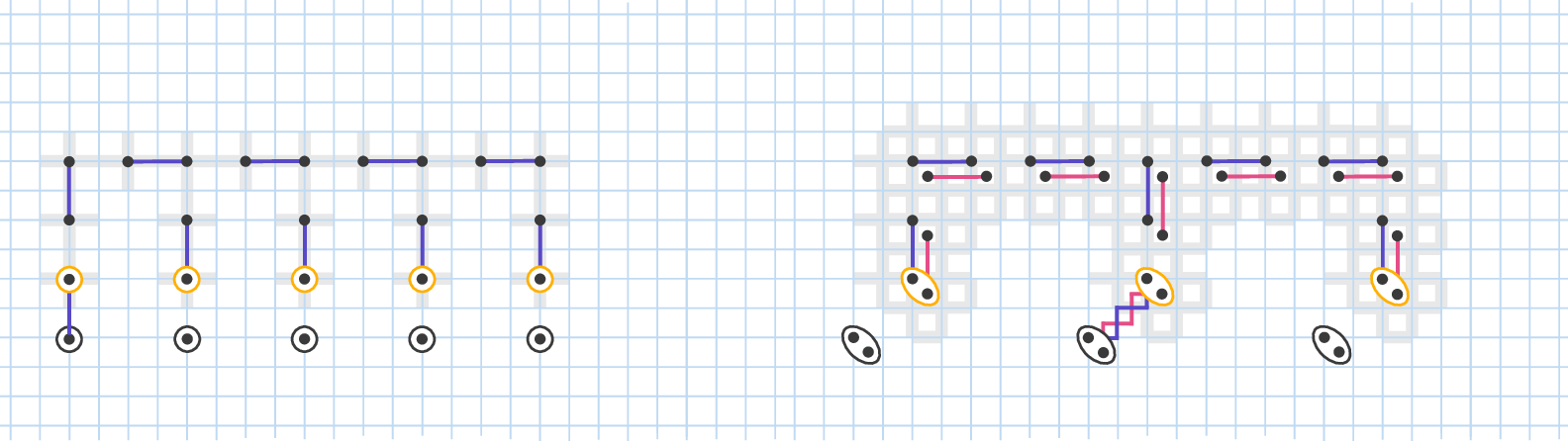

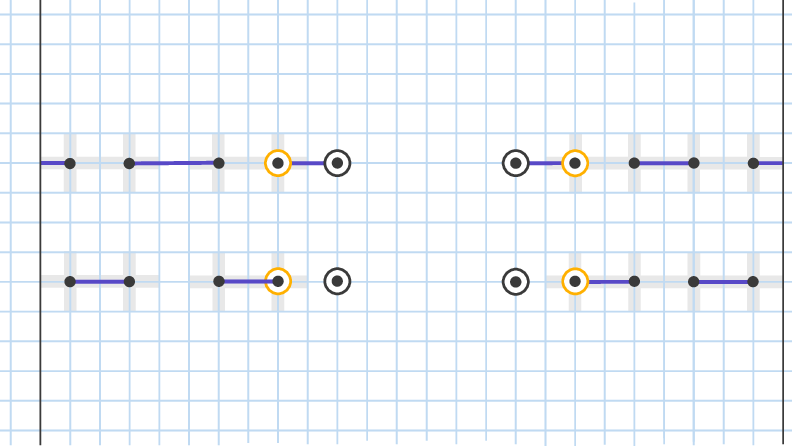

- Wire gadget: carries a “true/false” choice from one spot to another, like a straight wire carrying a signal.

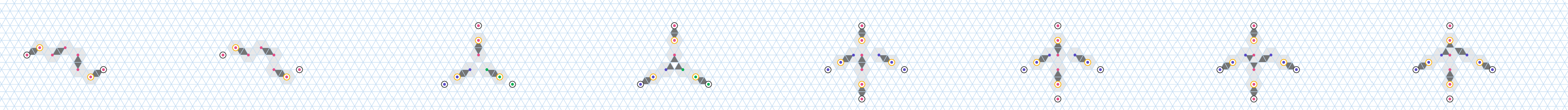

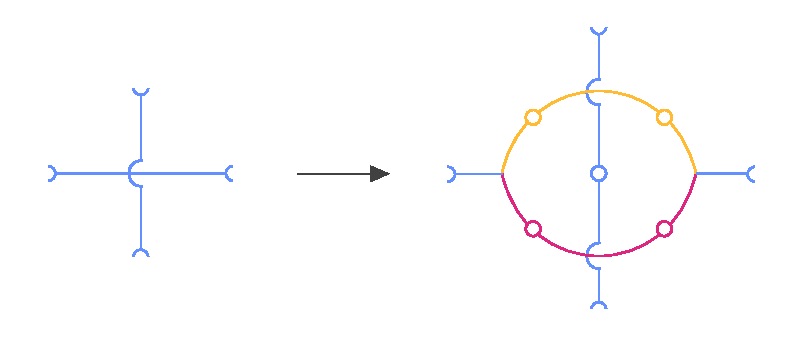

- Crossing gadget: lets two wires cross without mixing up their signals, like a bridge or an overpass.

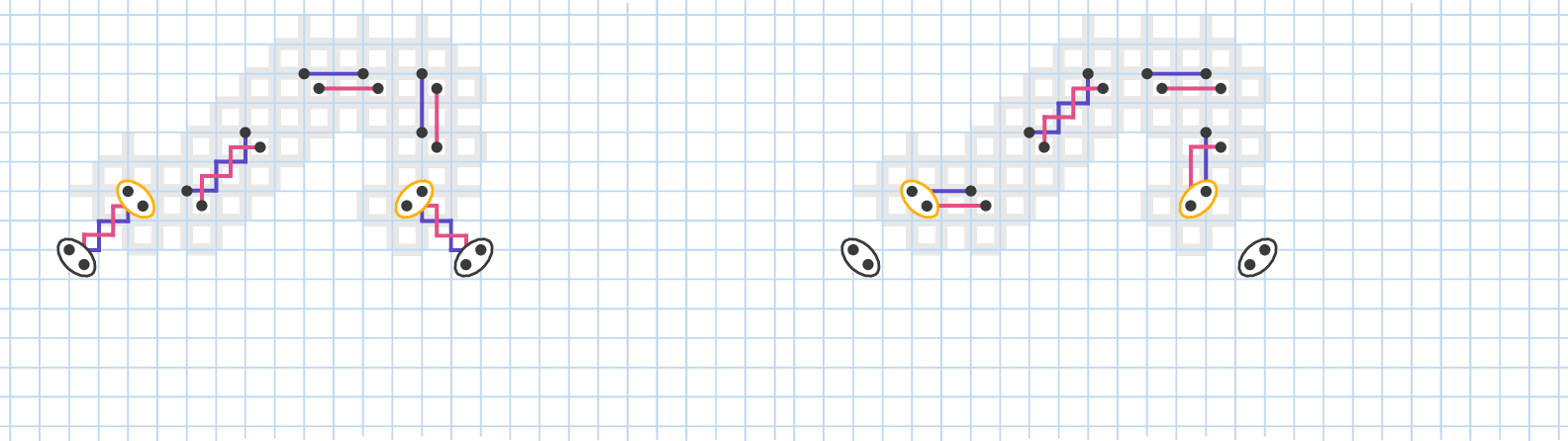

- Element gadget: enforces “pick exactly one” among several options, matching the 3DM “use each item once.”

- Splitting gadget: duplicates a signal into three parts, matching the three types in 3DM (A, B, C).

They place these gadgets far enough apart so they don’t interfere except where intended. For the transversal CNOT case, time adds a third dimension, and wires can “braid” around each other instead of needing many crossings.
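The gadget roles can be caricatured as Boolean constraints. The sketch below is a hypothetical abstraction (names and data layout are ours): it ignores geometry, defects, and error weights entirely and only checks the logical conditions the gadgets are described as enforcing, namely that a splitting gadget gives its three wires equal values, wires (and crossings) carry values unchanged, and each element gadget receives exactly one TRUE wire.

```python
from collections import defaultdict


def check_gadget_logic(triples, wire_values):
    """Toy Boolean abstraction of the gadget network (hypothetical model).

    triples[i] = (a, b, c). wire_values[(i, k)] is the truth value carried by
    the wire from triple i's splitting gadget to the element gadget of
    triples[i][k] (k = 0, 1, 2 for the A, B, C slot). Wire and crossing
    gadgets are modelled as carrying this value unchanged.
    """
    # Splitting gadget: all three wires leaving triple i carry the same value.
    for i in range(len(triples)):
        if len({wire_values[(i, k)] for k in range(3)}) != 1:
            return False
    # Element gadget: exactly one incoming wire is TRUE at every element.
    true_count = defaultdict(int)
    for i, triple in enumerate(triples):
        for k, element in enumerate(triple):
            true_count[(k, element)] += int(wire_values[(i, k)])
    return all(count == 1 for count in true_count.values())
```

Under this model an assignment passes exactly when the TRUE triples form a perfect matching (provided every element appears in at least one triple). In the paper these conditions are not checked explicitly; they emerge from which error patterns achieve the minimum weight around each gadget.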

Along the way, they use simple counting rules and distance arguments to show:

- Any valid fix must have at least a certain number of patches (errors).

- When the minimum is reached, the fixes around each gadget must look like one of a few specific patterns.

- Matching these local patterns across connected gadgets is possible if and only if the original 3DM puzzle has a perfect matching.
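One generic form of the “at least a certain number of patches” counting argument: if no single error can flip more than Δ checks (Δ is the largest column weight of the parity-check matrix H), then every correction e of a syndrome s satisfies the bound below. This is a standard bound offered for illustration; the paper's gadget-level arguments are local and sharper, and may differ in detail.

```latex
% e: binary error vector;  s = He (mod 2): its syndrome
% \Delta: largest column weight of H (most checks a single error can flip)
|s| \;\le\; \sum_{j} |H_{\cdot j}|\, e_j \;\le\; \Delta\,|e|
\quad\Longrightarrow\quad
|e| \;\ge\; \left\lceil \frac{|s|}{\Delta} \right\rceil
```

For Z errors on the color code, a bulk qubit lies on three faces, so Δ = 3 and any correction needs at least ⌈|s|/3⌉ errors.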

Main findings

- Exact minimum-weight decoding is NP-hard in all three settings: 1) Color code with Z errors. 2) Surface code with X, Y, Z errors. 3) Surface code with a transversal CNOT and repeated noisy measurements (Z errors plus measurement bit-flips).

- This shows that even very basic, practical decoding tasks can be computationally intractable when you insist on the exact minimum.

- However, there’s a silver lining: in each setting there are known efficient decoders that get very close to the minimum—within a small constant factor (like 2x or 3x the minimum). So while perfect is hard, “near-perfect” is doable.
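As an example of the kind of guarantee behind “2x or 3x”, consider the surface code with X, Y, and Z errors. One standard approach, not necessarily the paper's, decodes the X part and the Z part separately with minimum-weight perfect matching (polynomial-time for the surface code) and recombines them, counting a qubit that receives both as a single Y error. With the notation in the comments, the combined correction $\hat e$ obeys:

```latex
% e^\star: a minimum-weight Pauli correction, w^\star = |e^\star|
% x^\star, z^\star: its X-part and Z-part (a Y error counts in both)
% \hat x, \hat z: minimum-weight X- and Z-corrections found separately
|\hat e| \;\le\; |\hat x| + |\hat z| \;\le\; |x^\star| + |z^\star| \;\le\; 2\,w^\star
```

The middle step holds because $x^\star$ and $z^\star$ are themselves valid separate corrections, so the optimal separate solutions cannot be heavier; the last step holds because both are supported inside the support of $e^\star$.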

Why this is important

- Quantum computers constantly produce syndrome data that must be decoded in real time. If exact minimum-weight decoding is NP-hard, then trying to do it perfectly could become the bottleneck, slowing everything down.

- The results push the community toward:

  - Using fast, approximate decoders that are good enough in practice.

  - Designing systems and workflows that accept small suboptimalities in exchange for speed and scalability.

  - Investing in hardware acceleration and algorithms that balance accuracy and runtime.

- The paper also clarifies a key difference: exact minimum-weight decoding can be fundamentally hard, while smart approximations can remain efficient and highly effective. This helps set realistic goals for building large, fault-tolerant quantum computers.

A bit more context (helpful definitions)

- Surface code and color code: Popular “layouts” for organizing many qubits so that errors can be detected and corrected.

- X, Y, Z errors: Three basic types of quantum errors, like flipping different “directions” of a quantum bit.

- Syndrome: The pattern of warnings you get by measuring special checks; it tells you something went wrong but not exactly where.

- Transversal CNOT: A way to do a big logical CNOT gate by applying many small CNOTs in parallel across two code blocks. It’s attractive because it helps keep errors from spreading too much.

- Measurement bit-flip: The device reads a “1” instead of “0,” or vice versa—like a misread meter.
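One fact behind the transversal CNOT entry explains why this setting changes the decoding problem: a CNOT copies X errors from control to target and Z errors from target to control. The sketch below (function name hypothetical; errors as 0/1 vectors with phases ignored) applies these standard conjugation rules qubit by qubit across two blocks, showing how a single Z error can appear in both patches after the gate and so couple their syndromes.

```python
import numpy as np


def propagate_through_transversal_cnot(x_ctrl, z_ctrl, x_tgt, z_tgt):
    """Push a Pauli error through a transversal CNOT (phases ignored).

    Each argument is a 0/1 vector over the n qubits of one block; qubit i of
    the control block controls the CNOT acting on qubit i of the target block.
    Conjugation rules per pair: X_c -> X_c X_t and Z_t -> Z_c Z_t;
    X_t and Z_c are unchanged.
    """
    x_ctrl, z_ctrl, x_tgt, z_tgt = (
        np.asarray(v, dtype=np.uint8) % 2 for v in (x_ctrl, z_ctrl, x_tgt, z_tgt)
    )
    new_x_tgt = (x_tgt + x_ctrl) % 2   # X on a control copies onto its target
    new_z_ctrl = (z_ctrl + z_tgt) % 2  # Z on a target copies back onto its control
    return x_ctrl, new_z_ctrl, new_x_tgt, z_tgt


# A single Z error on target qubit 1 before the gate...
zero = [0, 0, 0]
_, z_ctrl, _, z_tgt = propagate_through_transversal_cnot(zero, zero, zero, [0, 1, 0])
print(z_ctrl, z_tgt)  # ...shows up on qubit 1 of both blocks: [0 1 0] [0 1 0]
```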

In short: The paper proves that exact minimum-weight decoding is computationally hard in three central, real-world quantum error correction scenarios—but also reassures us that fast, near-optimal decoders exist and are the practical way forward.

Knowledge Gaps

Unresolved gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps and questions that remain open based on the paper’s findings and scope.

- Average-case complexity remains unaddressed: the results establish worst-case NP-hardness, but do not analyze typical-case difficulty under realistic i.i.d. noise, smoothed analysis, or random-syndrome ensembles.

- Parameterized complexity is unexplored: no results for fixed-parameter tractability with respect to syndrome weight, code distance, treewidth of the decoding graph, or maximum defect cluster size.

- Inapproximability thresholds are not characterized: while constant-factor (2–3) approximation algorithms are noted to exist, there are no hardness-of-approximation results (e.g., APX-hardness), nor any limits on achieving better-than-2 approximations or a PTAS, EPTAS, or FPTAS.

- Maximum-likelihood (coset) decoding complexity is not addressed: the paper focuses on minimum-weight decoding; the NP-hardness or #P-hardness status of maximum-likelihood decoding in the same settings is not established.

- Boundary conditions are not treated formally: hardness is shown by embedding in the bulk of a large lattice; it remains open whether similar NP-hardness persists for syndromes constrained near specific boundaries or for small-distance codes.

- Circuit-level noise models are excluded: the reduction handles code-capacity and phenomenological noise (with a special tCNOT detector graph), leaving open whether NP-hardness holds for realistic circuit-level syndrome extraction (including hook and other correlated faults) beyond what the cited quantum LDPC results already cover.

- Noise biases and non-uniform weights are not analyzed: robustness of NP-hardness to biased Pauli noise (e.g., dephasing-dominant), spatially/temporally varying weights, or measurement-vs-data cost asymmetries is not established.

- Other operations beyond transversal CNOT are not covered: the complexity of minimum-weight decoding during lattice surgery, twist-based operations, or other transversal gates (e.g., H, S) remains open.

- Generalization to other codes is open: extensions to subsystem codes (e.g., Bacon–Shor), 3D surface codes, color codes under full Pauli noise, heavy-hex or other hardware-native lattices, and general quantum LDPC codes are not provided.

- Tight characterization for the surface code under restricted noise is missing: known polytime minimum-weight decoding for independent X/Z is contrasted with NP-hardness for XYZ; a full boundary separating easy and hard noise regimes is not given.

- Planarity/treewidth constraints are not leveraged: while the constructions use local gadgets, the resulting Tanner/detector graphs may have growing treewidth; complexity on bounded-treewidth graphs (where dynamic programming is feasible) is not resolved.

- Streaming/online decoding complexity is not discussed: the backlog issue is mentioned, but no bounds are given for online or real-time variants, e.g., amortized time or memory requirements under continuous syndrome streams.

- Robustness of gadgets is not quantified: minimal-cover arguments rely on local case analysis; tolerance to small perturbations (extra noise, missing/extra detectors, or slight geometric distortions) is not analyzed.

- Average error weight regime is not studied: hardness instances may involve dense, crafted syndromes; whether NP-hardness persists when the syndrome weight scales like o(n) or in the sparse-noise limit is unknown.

- Completeness of the logical-operator result is unclear: the section introducing NP-hardness of determining the logical effect of minimum-weight corrections is incomplete in the provided text; full statement, scope, and proof details are missing.

- Complexity with imperfect first/last measurement rounds is not treated: tCNOTZ assumes ideal first/last rounds; whether similar results hold without this simplification remains open.

- Constraints on geometry and routing are idealized: reductions rely on long wire gadgets and sorting-network-like routing; the minimal overhead, congestion limits, and constant-factor blowups in physically realistic layouts are not quantified.

- Hybrid or learned decoders are not compared: no discussion of whether practical neural/ML or heuristic decoders can avoid the worst-case hardness instances in practice, or whether they offer approximation guarantees.

- Hardness under erasures and mixed noise models is unstudied: the impact of erasure channels (where exact positions of some errors are known) or mixed Pauli+erasure models on NP-hardness is not analyzed.

- Fine-grained complexity and lower bounds are absent: there are no conditional lower bounds (e.g., SETH-based) on running time for exact or approximate decoding in these settings.

- Structural characterization of hard syndromes is missing: there is no taxonomy of which syndrome topologies are hard/easy (e.g., based on component size, separator structure, or graph minors).

- Practical limits of constant-factor decoders are not elaborated: while the appendix notes the existence of 2–3 factor approximations, their tightness, empirical performance, and trade-offs between weight optimality and logical failure are not assessed.

- Extension to non-Pauli/coherent errors is not discussed: the minimum-weight formulation is inherently Pauli; complexity under coherent or amplitude damping noise (and corresponding optimal recovery formulations) remains open.

Practical Applications

Immediate Applications

The following items translate the paper’s findings into concrete actions that can be deployed now across industry, academia, policy, and practice. Each bullet lists relevant sectors, potential tools/workflows, and key assumptions/dependencies.

- Prioritize approximate decoding over exact minimum-weight decoding

- Sectors: Quantum hardware/software, cloud quantum services, HPC

- What to do: Replace exact minimum-weight decoders with fast algorithms that have provable constant-factor guarantees (within 2×–3× of the optimal weight; such algorithms exist in all three settings studied: color code with Z noise, surface code with XYZ noise, and surface-code tCNOT with Z + measurement noise).

- Tools/workflows: Union-Find decoders; matching decoders such as PyMatching (sparse blossom), which are exact on graph-like decoding problems and act as approximations when, e.g., X and Z components are decoded separately; belief propagation variants; decoder autotuning (select decoder by code, noise, and latency constraints).

- Assumptions/dependencies: NP-hardness is worst-case. Constant-factor guarantees bound the correction weight for every syndrome, but logical performance still depends on the noise model (e.g., i.i.d. or locally structured noise) and on the code geometry.

- Establish decoder benchmarking regimes using hard-instance generators

- Sectors: Academia, software vendors, standards bodies

- What to do: Use the paper’s gadget construction (wire, crossing, element, splitting) to synthesize adversarial syndromes for surface/color code decoders, including tCNOT syndromes with 3D routing.

- Tools/workflows: Stim (detector error models), syndrome generators that place gadgets with controlled spacing; benchmark suites comparing factor-approximate decoders against baselines.

- Assumptions/dependencies: Hard-instance generators emulate worst-case structure; results should be complemented with average-case tests under physical noise models.

- Update resource estimation and runtime scheduling for QEC to avoid classical backlog

- Sectors: Quantum hardware operations, runtime systems, cloud orchestration

- What to do: Budget classical processing for worst-case decoding spikes, since exact minimum-weight decoding is NP-hard; implement load smoothing (buffering, streaming decoding, batching) and graceful-degradation paths (e.g., switching to cheaper approximations under load).

- Tools/workflows: Streaming pipelines on FPGAs/GPUs, edge co-processors near cryo-control, latency-aware schedulers, queue-length monitors triggering decoder mode-switches.

- Assumptions/dependencies: Workflows rely on reliable latency bounds for chosen approximations; hardware compatibility for accelerator integration.

- Modify compilers/runtimes to remain in decoder-friendly regimes

- Sectors: Quantum compilers, control software, algorithm design

- What to do:

  - For surface code with XYZ noise, avoid patterns that force joint non-separable decoding unless specialized approximations are in place.

  - For transversal CNOT (tCNOT), ensure decoders handle the altered detector graph across the gate time slice; consider circuit structures that reduce gadget-like crossings/braidings.

- Tools/workflows: Passes that annotate operations with decoder cost models; operation scheduling that avoids dense, adversarial syndrome layouts; runtime flags to choose decoding strategies per operation class.

- Assumptions/dependencies: The mapping passes must know the detection graph implications; success depends on noise stationarity and code layout stability.

- Standardize decoding performance metrics beyond exact optimality

- Sectors: Standards/policy, benchmarking consortia, procurement

- What to do: Define KPIs embracing approximation (e.g., approximation ratio, logical error rate inflation vs optimal, tail-latency under bursty syndromes, energy per round).

- Tools/workflows: Benchmark specs that include mixed X/Y/Z errors, tCNOT phenomenological noise, and adversarial placements; reporting templates for vendors.

- Assumptions/dependencies: Agreement on noise/interconnect models; availability of reference datasets.

- Improve QEC simulation practices and reporting

- Sectors: Academia, software tools, hardware validation

- What to do: Use approximate decoders in Monte Carlo threshold studies; report sensitivity to decoder choice and approximation ratio; explicitly state when exact min-weight is infeasible and replaced by surrogates.

- Tools/workflows: Stim + PyMatching pipelines; reproducible configs with both optimistic (exact on small patches) and realistic (approximate at scale) decoders.

- Assumptions/dependencies: Comparable noise parameterizations across studies; careful separation of code performance vs decoder performance in claims.

- Curriculum and training updates

- Sectors: Education, workforce development

- What to do: Introduce NP-hardness of minimum-weight decoding, gadget reductions, and practical decoder design in QEC courses; provide hands-on labs that implement gadget-based adversarial testcases.

- Tools/workflows: Open-source notebooks that instantiate gadget sets and run decoders; problem sets on approximation guarantees and trade-offs.

- Assumptions/dependencies: Access to simulation tooling; basic background in complexity and stabilizer codes.

- Risk management for real-time systems

- Sectors: Operations/security in quantum data centers

- What to do: Recognize that adversarially structured syndromes could trigger worst-case decoding compute; include throttling, anomaly detection on detection-event density, and fallbacks (e.g., coarser decoding or delayed frame updates).

- Tools/workflows: Runtime monitors, admission control, circuit-level guardrails.

- Assumptions/dependencies: Worst-case patterns are rare in physical noise but possible under stress or misconfiguration.
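For the benchmarking item above, a first step toward gadget-based hard instances is generating 3DM inputs with a known answer. The helper below is a hypothetical sketch: it plants a random perfect matching among random decoy triples (which may create additional matchings). Turning such an instance into a surface- or color-code syndrome requires the paper's gadget layout (wire, crossing, element, splitting), which is not reproduced here.

```python
import random


def planted_3dm_instance(q, num_decoys, seed=None):
    """Random 3DM instance that is guaranteed to contain a perfect matching.

    Hypothetical benchmark helper: a random perfect matching of size q is
    hidden among num_decoys uniformly random extra triples.
    Returns (A, B, C, triples, planted_matching).
    """
    rng = random.Random(seed)
    A = [f"a{i}" for i in range(q)]
    B = [f"b{i}" for i in range(q)]
    C = [f"c{i}" for i in range(q)]
    planted = list(zip(A, rng.sample(B, q), rng.sample(C, q)))
    decoys = [(rng.choice(A), rng.choice(B), rng.choice(C)) for _ in range(num_decoys)]
    triples = planted + decoys
    rng.shuffle(triples)  # hide which triples belong to the planted solution
    return A, B, C, triples, planted
```

Fixing `seed` makes instances reproducible across decoder comparisons, and the returned planted matching gives a ground truth against which a decoder's output can be checked.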

Long-Term Applications

The items below will likely require further research, scaling, or engineering development before broad deployment.

- Hardware decoder co-processors with provable approximation guarantees

- Sectors: Semiconductor, quantum control hardware, HPC

- What to build: ASIC/FPGA/GPU accelerators for streaming approximate decoding with 2×–3× guarantees; near-sensor compute to minimize latency; multi-accelerator load balancing.

- Dependencies: Mature detection-graph interfaces (e.g., Stim-like), power/thermal constraints, compiler hooks to exploit hardware features.

- Code/architecture co-design for decoding tractability

- Sectors: Quantum architecture, code design

- What to pursue:

  - Codes and schedules that maintain separability (e.g., bias-exploiting codes like XZZX), single-shot or algorithmic fault tolerance, or LDPC constructions with matching-friendly structure.

  - Gate synthesis/scheduling that avoids hard crossings at critical times (e.g., around transversal CNOTs).

- Dependencies: Verified logical performance under realistic noise; fault-tolerance proofs; layout constraints of specific hardware platforms.

- Adaptive, decoder-aware compilation and runtime control

- Sectors: Compiler/runtime, cloud orchestration

- What to build: Toolchains that predict decoder cost from IR/circuit features, choose operations/schedules accordingly, and adapt decoding modes at runtime based on observed syndrome statistics.

- Dependencies: Predictive models linking circuit structure to detection-graph hardness; telemetry from decoders; stable APIs between compilers and decoders.

- Standards for decoder energy/latency reporting and SLAs

- Sectors: Policy, procurement, cloud markets

- What to establish: Requirements to publish classical post-processing energy, latency distributions, and approximation guarantees as part of quantum service SLAs.

- Dependencies: Community consensus on metrics; vetted benchmark suites; regulatory engagement.

- Average-case and smoothed-analysis theory for decoding

- Sectors: Academia (theory + systems)

- What to study: When physical noise distributions yield easy instances (despite worst-case NP-hardness); guarantees for ML-based decoders; hybrid strategies that detect and short-circuit hard substructures online.

- Dependencies: Realistic noise models and datasets; theoretical frameworks linking physics and complexity.

- ML-guided decoders with certificates

- Sectors: Software, AI for systems

- What to build: Learned decoders that suggest corrections rapidly, paired with lightweight certifiers or a posteriori bounds on suboptimality; fallback to guaranteed approximations on detected hard cases.

- Dependencies: Training corpora covering both typical and adversarial syndromes; certifiers compatible with streaming constraints.

- Open challenges and shared datasets for decoder stress-testing

- Sectors: Academia, industry consortia

- What to do: Annual competitions akin to SAT/ML contests using gadget-based adversarial instances and physically realistic workloads; leaderboards tracking approximation, latency, and energy.

- Dependencies: Community coordination; standardized formats for detector graphs and syndromes.

- System-level resilience to worst-case decoding spikes

- Sectors: Data-center-scale quantum services

- What to build: Multi-tenant schedulers that anticipate and redistribute decoding load; preemption and priority rules for protected experiments; cross-node pooling of decoder accelerators.

- Dependencies: High-speed interconnects; robust telemetry; economic models for scheduling trade-offs.
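The “lightweight certifiers or a posteriori bounds on suboptimality” mentioned for ML-guided decoders can start very simply. Assuming a code-capacity model with parity-check matrix H, the hypothetical function below verifies that a proposed correction reproduces the syndrome and bounds how far its weight can be from optimal using the counting bound ⌈|s|/Δ⌉, where Δ is the largest column weight of H. The bound is cheap but often loose; tighter certificates would need problem-specific lower bounds.

```python
import math

import numpy as np


def certify_correction(H, syndrome, correction):
    """A posteriori check of a proposed correction (illustrative certifier).

    Returns (is_valid, weight, ratio_bound): is_valid says whether
    H @ correction == syndrome (mod 2); ratio_bound upper-bounds
    weight / optimal_weight via optimal_weight >= ceil(|s| / max column weight).
    The ratio bound is meaningful only when is_valid is True.
    Assumes H has at least one nonzero column.
    """
    H = np.asarray(H, dtype=int)
    s = np.asarray(syndrome, dtype=int) % 2
    e = np.asarray(correction, dtype=int) % 2
    is_valid = bool(np.array_equal(H @ e % 2, s))
    weight = int(e.sum())
    max_col_weight = int(H.sum(axis=0).max())
    lower_bound = math.ceil(int(s.sum()) / max_col_weight)
    if lower_bound == 0:  # empty syndrome: the optimum is the empty correction
        ratio_bound = 1.0 if weight == 0 else math.inf
    else:
        ratio_bound = weight / lower_bound
    return is_valid, weight, ratio_bound
```

A runtime could accept a learned decoder's output when it is valid and `ratio_bound` is below a chosen threshold, and otherwise fall back to a decoder with a guaranteed approximation factor.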

Notes on Assumptions and Dependencies (global)

- The NP-hardness shown is worst-case and applies to minimum-weight decoding in three practically relevant settings: color code under Z noise; surface code under XYZ noise; surface code with a transversal CNOT under phenomenological Z + measurement noise.

- Efficient constant-factor approximation decoders exist in all three settings (2×–3×); their practical performance depends on noise bias, code layout, and hardware latency constraints.

- Gadget-based hardness constructions produce artificial but instructive syndromes; physical noise typically yields more benign instances, but system design must handle spikes safely.

- Some recommendations (e.g., single-shot or algorithmic fault tolerance) assume access to codes/protocols and hardware that support them; migration may require architectural changes.

- Cross-layer co-design (hardware, compiler, decoder, scheduler) is essential to meet latency and energy targets while maintaining logical error rates.

Glossary

- Abelian subgroup: A group in which all elements commute, used to define the stabilizer of a quantum code. "an Abelian subgroup of the -qubit Pauli group "

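- Algorithmic fault tolerance: A framework in which fault tolerance is analyzed for a logical algorithm as a whole rather than gate by gate; for example, transversal gates can be interleaved with only a constant number of syndrome-extraction rounds, with the resulting correlated syndrome history decoded jointly. "algorithmic fault tolerance"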

- Backlog problem: A bottleneck where classical decoding cannot keep up with incoming syndrome data. "the backlog problem, in which classical post-processing becomes the bottleneck"

- Code-capacity setting: A noise model where parity-check measurements are assumed perfect. "Two canonical scenarios for the decoding problem are the code-capacity and phenomenological noise models"

- Color code: A topological quantum error-correcting code defined on (dual) triangular/hexagonal lattices. "the triangular color code~\cite{bombin2006} defined on a hexagonal lattice"

- Connected error component: A maximal connected set of error locations under adjacency in the Tanner graph. "A connected error component of is the restriction of to a connected set under this metric."

- Crossing gadget: A constructed pattern of defects that allows two “wires” (information paths) to cross while enforcing consistency constraints. "A crossing gadget has two pairs of nodes."

- Decoding hypergraph: A hypergraph whose vertices represent syndrome detectors and whose hyperedges represent elementary errors. "a decoding hypergraph, whose vertices and hyperedges correspond to, respectively, the syndrome and elementary errors."

- Degenerate (code degeneracy): The property that different physical errors can yield the same syndrome. "Since stabilizer codes are degenerate, i.e., different errors can have the same syndrome,"

- Depolarizing noise: A noise model in which each qubit independently suffers an X, Y, or Z error, each with probability p/3 (total error probability p). "depolarizing noise"

- Detector (X detector): A product of consecutive stabilizer measurement outcomes used to detect changes across time. "The constraints are the detectors"

- Detector check matrix: The matrix mapping errors to detector outcomes in the phenomenological model. "detector check matrix~\cite{Higgott2025sparseblossom}"

- Dual lattice: The lattice formed by interchanging faces and vertices of a primal lattice; used in code definitions. "In the dual lattice description, qubits are placed on the triangles of the triangular lattice."

- Element gadget: A gadget representing a 3DM element with multiple nodes of one defect type, exactly one of which must be TRUE in a minimal cover. "An element gadget has nodes, all of the same defect type."

- Equivalence class of errors: The set of errors that differ by stabilizers and thus have the same syndrome effect. "the most likely equivalence class of errors."

- Error excess: The integer offset between the number of defects in a gadget and the minimal number of errors needed to cover them. "an integer , called the error excess."

- Error syndrome: The vector of check outcomes indicating which stabilizers were violated by an error. "given an error syndrome describing which errors may have occurred in the system"

- Hamming weight: The number of nonzero entries in a binary vector; here, the number of qubits or measurements in error. "The weight of an error is the Hamming weight, i.e., the number of corrupted qubits."

- Hyperedge: A generalized edge connecting multiple vertices (here, a triple from A×B×C). "a set of hyperedges ."

- Incidence matrix: A binary matrix encoding adjacency between two types of objects (e.g., checks and qubits). "the incidence matrix between the triangles and vertices on the dual lattice."

- Maximum-likelihood decoder: A decoder that selects the most probable equivalence class of errors given the syndrome. "the maximum-likelihood decoder"

- Minimal cover: A minimal-weight error configuration that covers all defects of a gadget within its neighborhood. "is a minimal cover of ."

- Minimum-weight decoding: The problem of finding the lowest-weight error consistent with a given syndrome. "minimum-weight decoding is NP-hard"

- Pauli group: The group of n-fold tensor products of the single-qubit operators I, X, Y, Z, together with the phases ±1 and ±i. "the -qubit Pauli group "

- Parity-check matrix: The matrix H mapping candidate error vectors to syndromes in the (code-capacity) decoding problem. "parity-check matrix"

- Phenomenological noise model: A decoding model where both data qubits and measurement outcomes can be noisy. "phenomenological noise model"

- Phase-flip noise: Noise that applies Z errors to qubits independently with some probability. "phase-flip noise"

- Single-shot property: The ability to reliably decode with a single round of (noisy) measurements without repetition. "the single-shot property"

- Splitting gadget: A gadget with three nodes of different defect types whose truth values are constrained to be equal. "A splitting gadget has three nodes, each of different defect type."

- Stabilizer checks: The commuting Pauli operators measured to obtain the syndrome. "we first measure the stabilizer checks of "

- Stabilizer code: A quantum code defined as the +1 eigenspace of a commuting subgroup of the Pauli group. "A stabilizer code on qubits is defined as the -eigenspace of an Abelian subgroup of the -qubit Pauli group "

- Surface code: A topological stabilizer code defined on a square lattice with checks on vertices and faces. "We consider the surface code~\cite{Kitaev2003,Dennis2002} defined on a square lattice"

- Syndrome extraction circuits: Circuits implementing stabilizer measurements to obtain syndromes over time. "syndrome extraction circuits"

- Tanner graph: A bipartite graph representing relationships between error locations (variables) and constraints (checks). "we can create a Tanner graph , which is a bipartite graph"

- Three-dimensional matching (3DM): An NP-complete problem asking for a perfect matching in a 3-uniform hypergraph. "The 3-dimensional matching problem (3DM) is among Karp's 1972 foundational list of 21 NP-complete problems"

- Topological quantum codes: Quantum codes defined on lattices/manifolds with local checks and topological properties. "other topological quantum codes"

- Transversal logical CNOT gate: A logical entangling gate applied transversally across code blocks to implement CNOT. "a transversal logical CNOT gate is applied"

- Translationally-invariant codes: Codes whose check patterns repeat periodically across the lattice. "two-dimensional translationally-invariant codes"

- Wire gadget: A gadget that propagates a truth value between two partner nodes of the same defect type. "A wire gadget has two nodes of the same defect type"
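To connect the Detector and Phenomenological noise model entries, the sketch below (hypothetical function name; assumes 0/1 outcomes and a noiseless reference before the first round) computes detectors as XORs of consecutive measurements of the same stabilizer. A data error makes one detector fire, while a single measurement bit-flip makes two time-adjacent detectors fire. The transversal-CNOT detector graph studied in the paper, which also couples the two patches, is not modelled.

```python
import numpy as np


def detection_events(measurements):
    """Turn repeated stabilizer measurements into detector outcomes.

    measurements: 0/1 array of shape (rounds, num_stabilizers). Detector (t, j)
    is the XOR of rounds t-1 and t of stabilizer j (the product of the two
    +/-1 outcomes); for t = 0 a noiseless all-zero reference round is assumed.
    """
    m = np.asarray(measurements, dtype=np.uint8) % 2
    previous = np.vstack([np.zeros((1, m.shape[1]), dtype=np.uint8), m[:-1]])
    return previous ^ m


# Data error flipping stabilizer 0 before round 1: one detector fires.
print(detection_events([[0, 0], [1, 0], [1, 0]]).tolist())  # [[0, 0], [1, 0], [0, 0]]
# Misread of stabilizer 0 in round 1 only: two time-adjacent detectors fire.
print(detection_events([[0, 0], [1, 0], [0, 0]]).tolist())  # [[0, 0], [1, 0], [1, 0]]
```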
