- The paper introduces SDIFP, which applies a global affine transformation to convert infinite-dimensional constraints into a tractable low-dimensional root-finding problem for exact conservation.

- It employs a doubly-stochastic unbiased gradient estimator that decouples global constraint evaluation from mini-batch gradients, reducing memory usage significantly.

- Numerical experiments show that SDIFP achieves conservation errors near machine precision, outperforming traditional methods in scalability and stability for ultra-high-dimensional PDEs.

Stochastic Dimension Implicit Functional Projections for Exact Integral Conservation in High-Dimensional PINNs

Introduction

This work addresses a central problem in the physics-informed neural networks (PINNs) literature: enforcing exact macroscopic conservation laws—such as mass, momentum, and energy—in high-dimensional, mesh-free neural PDE solvers. While PINNs have demonstrated flexibility in approximating solutions to a wide range of PDEs, conventional enforcement of integral conservation via soft constraints induces only approximate satisfaction, which does not guarantee the elimination of non-physical artifacts or long-term stability. Existing hard-constraint techniques resort to explicit projection of discrete network outputs via structured deterministic quadrature. However, these approaches introduce exponential grid-dependency (the curse of dimensionality) and are fundamentally incompatible with modern mesh-free, randomly collocated PINN architectures. Additionally, implicit optimization layers and operator-splitting schemes lack convergence guarantees under the non-convex quadratic constraints characteristic of energy conservation.

The paper introduces the Stochastic Dimension Implicit Functional Projection (SDIFP) framework, which enforces exact integral constraints in a continuous, mesh-free PINN setting. It presents two main technical innovations: i) a global affine transformation applied to network outputs, transforming the intractable infinite-dimensional constraint into a tractable low-dimensional algebraic root-finding problem; ii) a doubly-stochastic unbiased gradient estimator (DS-UGE) that decouples the evaluation of global constraints from stochastic mini-batch gradients, enabling tractable memory usage even for ultra-high-dimensional systems.

Theoretical Framework

Limitations of Discrete Projections and Convex Optimization Layers

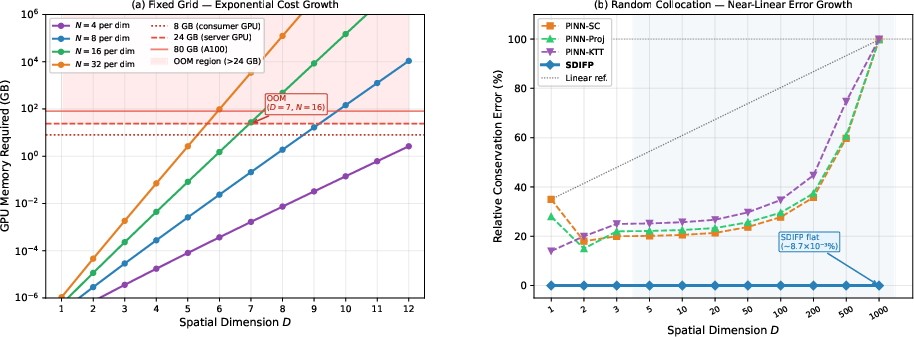

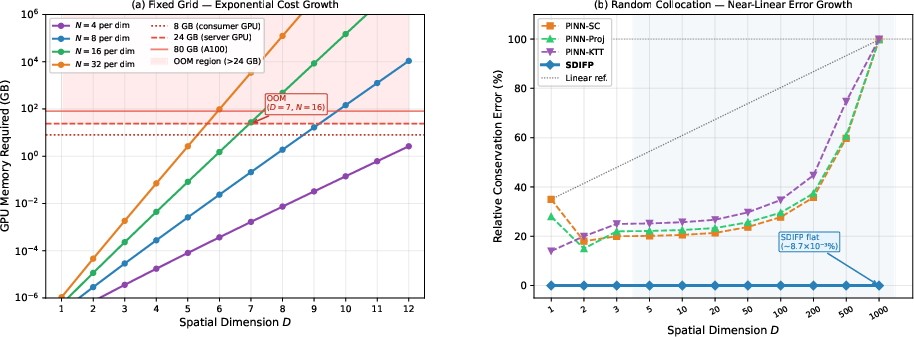

Existing projection frameworks enforce conservation by projecting the vector of neural outputs on each mesh onto the subspace defined by the intersection of the constraints. These projections are tractable over uniform grids with deterministic quadrature, which admit closed-form or iterative solutions via Lagrange multipliers. However, this grid dependency ties computational cost to the dimension and makes the approach infeasible for high-dimensional or unstructured domains, because the memory required grows exponentially with dimension (Figure 1).
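The exponential growth can be made concrete with a little arithmetic. The sketch below assumes a tensor-product grid with 32 points per axis and one float64 value per node; both numbers are illustrative choices, not settings taken from the paper.

```python
def grid_bytes(points_per_axis, dim, bytes_per_value=8):
    # A tensor-product grid stores points_per_axis ** dim values.
    return points_per_axis ** dim * bytes_per_value

for dim in (1, 2, 3, 6, 10):
    gib = grid_bytes(32, dim) / 2**30
    print(f"D={dim:2d}: {gib:.3e} GiB")
```

Under these assumptions, D = 10 already requires 2^53 bytes (8 PiB) for a single scalar field, before any gradients are stored.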

Figure 1: Fixed-grid memory scaling with spatial dimension D (left) and relative conservation error under random collocation versus D for SDIFP and baseline PINNs (right).

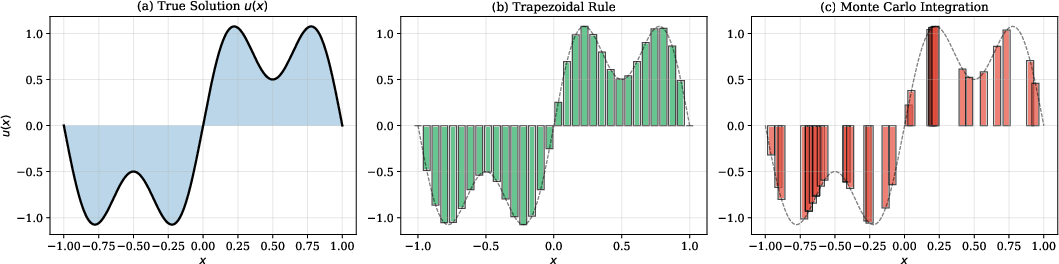

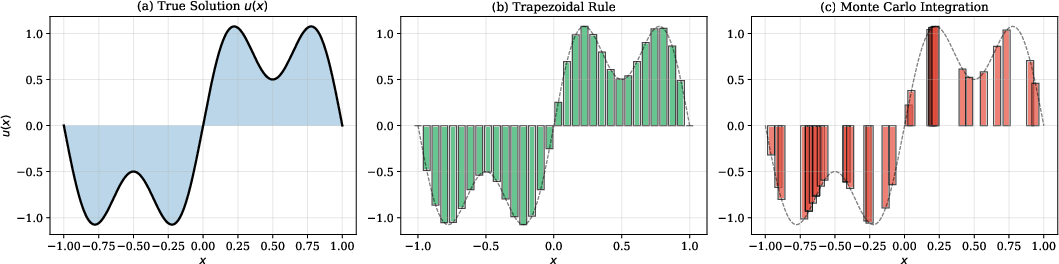

Attempts to generalize to random or mesh-free collocation using stochastic quadrature replace constant grid weights with heterogeneous Monte Carlo (MC) weights, which invalidates the analytic form of the projection and introduces persistent statistical bias (see Figure 2).

Figure 2: Conservation error comparison under fixed-grid and random collocation.

Alternative implicit optimization layers (e.g., Πnet) lift the projection operation to differentiable convex optimization, invoking the implicit function theorem to compute gradients. However, the quadratic energy constraint yields a non-convex manifold (a hyper-ellipsoid), violating the foundational convexity assumptions of these solvers, which can yield unbounded operator splitting iterates or divergence.
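The non-convexity can be seen with a two-point check, sketched here under the assumption that the energy constraint takes the form $\int_\Omega u^2\,dx = c_2$ with $c_2 > 0$ (the symbols $\Omega$ and $c_2$ are illustrative and may differ from the paper's notation). If $u$ is feasible then so is $-u$, yet their midpoint is not:

$$
\int_\Omega u^2\,dx = \int_\Omega (-u)^2\,dx = c_2,
\qquad
\int_\Omega \left(\tfrac{1}{2}u + \tfrac{1}{2}(-u)\right)^2 dx = 0 \neq c_2 .
$$

A convex set would contain that midpoint, so the feasible set cannot be convex.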

Continuous Affine Functional Projections

The core of SDIFP is to decouple constraint enforcement from the spatial discretization, acting directly on the continuous parameterization of the PINN. Rather than projecting a vector of finite outputs, an affine transformation is applied to the backbone network output:

$$\tilde{u}(x,t) = \alpha(t)\,u_{\mathrm{raw}}(x,t;\theta) + \beta(t)$$

where the scalars α(t) and β(t) are energy- and mass-projection parameters, respectively, computed to enforce the integral constraints on the entire functional family spanned by the network output over the domain.

The required system for (α,β) consists of two moment-matching equations (one linear, one quadratic) that are algebraically solved in closed-form at each time instant. Importantly, these macroscopic averages are evaluated by detached (i.e., not included in the computational graph) Monte Carlo quadrature over large unstructured batches, breaking the curse of dimensionality without mesh constraints.
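As a minimal sketch of such a closed-form solve, assume the two constraints are written as domain averages: the mean of the projected output equals c1 and the mean of its square equals c2. Substituting β = c1 − α·m1 into the quadratic equation gives α² (m2 − m1²) = c2 − c1², where m1 and m2 are the Monte Carlo estimates of the first and second moments of the raw output. The function name, the target values, the choice of the positive root, and the synthetic stand-in for the network output are all assumptions made for illustration; the paper's exact formulation may differ.

```python
import math
import random

def affine_projection_params(u_raw, c1, c2):
    """Return (alpha, beta) with mean(alpha*u + beta) = c1 and
    mean((alpha*u + beta)**2) = c2 on the given samples."""
    n = len(u_raw)
    m1 = math.fsum(u_raw) / n                 # first moment (Monte Carlo)
    m2 = math.fsum(v * v for v in u_raw) / n  # second moment (Monte Carlo)
    var = m2 - m1 * m1
    target_var = c2 - c1 * c1
    if var <= 0.0 or target_var < 0.0:
        raise ValueError("constraints are not attainable by an affine map")
    alpha = math.sqrt(target_var / var)       # positive root (assumption)
    beta = c1 - alpha * m1
    return alpha, beta

rng = random.Random(0)
# Hypothetical stand-in for raw network outputs at random collocation points.
u_raw = [math.sin(3.0 * rng.random()) + 0.2 for _ in range(10_000)]
c1, c2 = 0.5, 0.75                            # illustrative targets

alpha, beta = affine_projection_params(u_raw, c1, c2)
u_proj = [alpha * v + beta for v in u_raw]
mass = math.fsum(u_proj) / len(u_proj)
energy = math.fsum(v * v for v in u_proj) / len(u_proj)
print(abs(mass - c1), abs(energy - c2))
```

Because the parameters are recomputed from whichever batch is supplied, both moments match their targets on that batch up to floating-point rounding, with no dependence on a grid.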

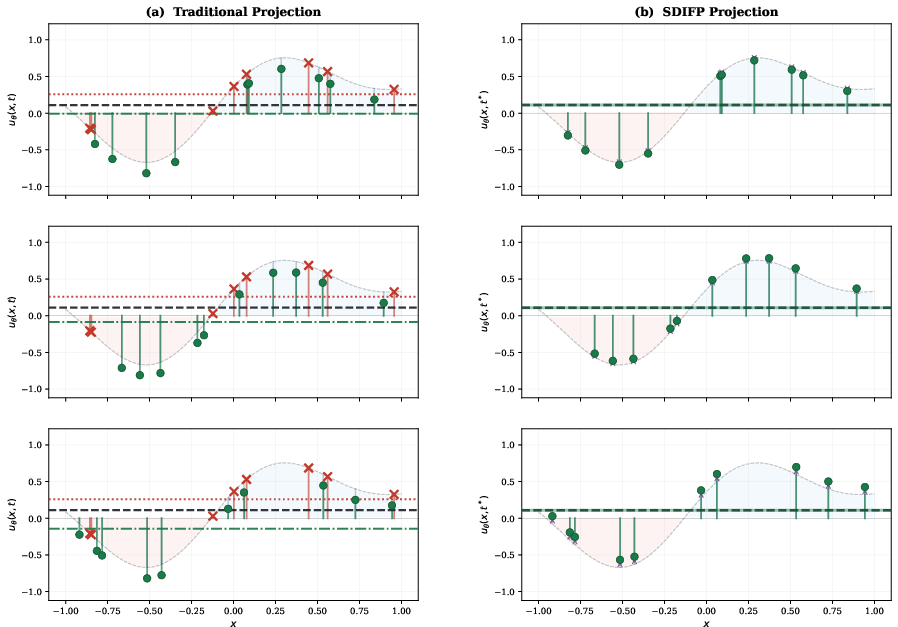

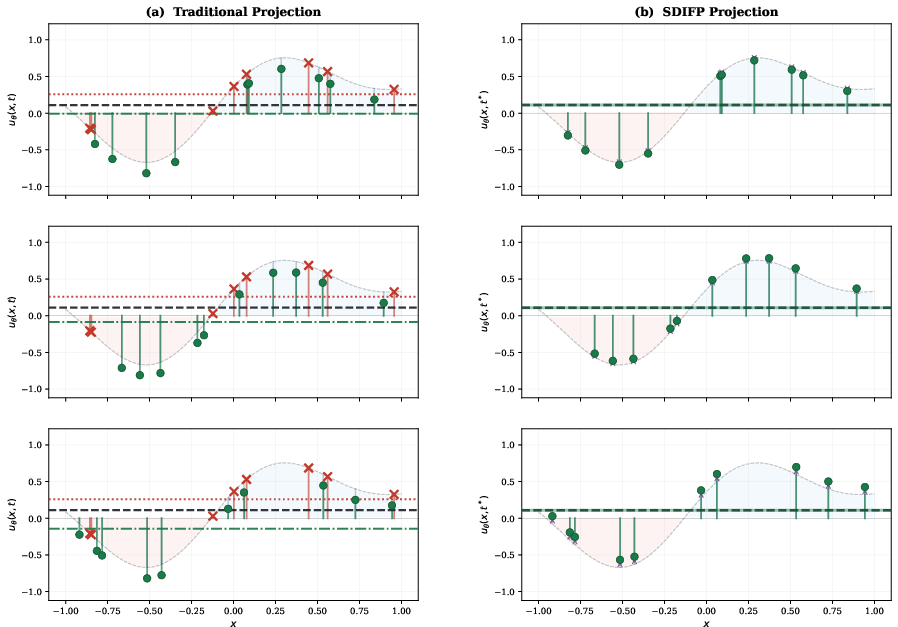

Figure 3: Comparison of two projection strategies under random collocation. (a) Traditional projection: a fixed quadrature set causes deviation from $\bar{c}_1$ when the collocation set varies. (b) SDIFP projection: adaptive shifts align the projected mean with $\bar{c}_1$ on every batch.

Doubly-Stochastic Unbiased Gradient Estimation

A principal technical challenge is computing network-parameter gradients through the affine projection step when the global moment computations are detached from the automatic-differentiation (AD) graph for scalability and efficiency. SDIFP handles this by expressing the gradients of the affine transformation parameters (with respect to the neural network weights) in terms of the Jacobians of the empirical spatial moments, which can themselves be estimated without bias from the current mini-batch.

The DS-UGE thus separates two independent sources of stochasticity: the stochastic evaluation of the PDE residuals and the stochastic estimation of the constraint gradients. This strategy dramatically reduces the memory required for backpropagation compared with the O(M⋅NL) cost of naive full tracking, without introducing bias, as theoretically proven.
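The role of independent sampling can be illustrated with a toy problem. This is not the paper's estimator, only a sketch of the general principle it relies on: a product of two expectations is estimated without bias when each factor comes from its own independent batch. The toy objective J(θ) = m(θ)² with m(θ) = E[θ·y] and y ~ N(1, 1) is an assumption chosen so that the true gradient, 2θ, is known in closed form.

```python
import random

def batch_mean(rng, n):
    # Mean of n samples y ~ N(1, 1); stands in for a Monte Carlo moment estimate.
    return sum(rng.gauss(1.0, 1.0) for _ in range(n)) / n

def coupled_estimate(rng, theta, n):
    # One batch supplies both the moment value and its Jacobian: biased,
    # because the two factors are correlated.
    y_bar = batch_mean(rng, n)
    return 2.0 * (theta * y_bar) * y_bar

def decoupled_estimate(rng, theta, n):
    # Independent batches for the value and the Jacobian: unbiased.
    value = theta * batch_mean(rng, n)  # estimate of m(theta)
    jacobian = batch_mean(rng, n)       # estimate of dm/dtheta
    return 2.0 * value * jacobian

rng = random.Random(0)
theta, n, trials = 1.5, 4, 20_000
true_grad = 2.0 * theta
coupled = sum(coupled_estimate(rng, theta, n) for _ in range(trials)) / trials
decoupled = sum(decoupled_estimate(rng, theta, n) for _ in range(trials)) / trials
print(true_grad, coupled, decoupled)
```

In this toy setting the coupled estimator overshoots by a factor of 1 + 1/n in expectation, while the decoupled one averages to the true gradient. In the detached-quadrature design described above, the moment value would come from the large detached batch and the Jacobian from the mini-batch.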

SDIFP also incorporates stochastic dimension gradient descent for differential operator estimation, employing randomized sub-selection across the principal directions of the governing linear operator while treating the affine transformation action analytically.
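One common form of randomized dimension sub-selection is sketched below for the Laplacian: sample k of the D coordinate directions, sum only their second derivatives, and rescale by D/k so the estimate is unbiased. The test function u(x) = Σ sin(x_i), whose second derivatives are known analytically, and the function names are illustrative assumptions; the paper's operator and sampling scheme may differ.

```python
import math
import random

def full_laplacian(x):
    # Exact Laplacian of u(x) = sum_i sin(x_i): each axis contributes -sin(x_i).
    return -math.fsum(math.sin(xi) for xi in x)

def sampled_laplacian(x, k, rng):
    # Draw k of the D axes without replacement and rescale by D / k so that
    # the expectation over the random subset equals the full sum.
    dims = rng.sample(range(len(x)), k)
    return -(len(x) / k) * math.fsum(math.sin(x[i]) for i in dims)

rng = random.Random(0)
D, k, draws = 100, 10, 5_000
x = [rng.uniform(0.0, math.pi) for _ in range(D)]
exact = full_laplacian(x)
average = math.fsum(sampled_laplacian(x, k, rng) for _ in range(draws)) / draws
print(exact, average)
```

Each draw touches only k second derivatives instead of D, which is where the per-step savings come from; the price is extra variance in the residual estimate.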

Numerical Validation and High-Dimensional Scaling

Low-Dimensional and Medium-Dimensional PDEs

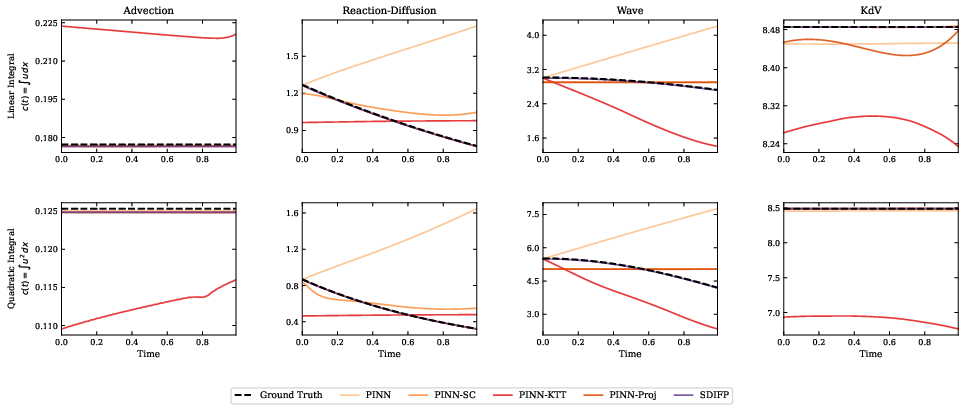

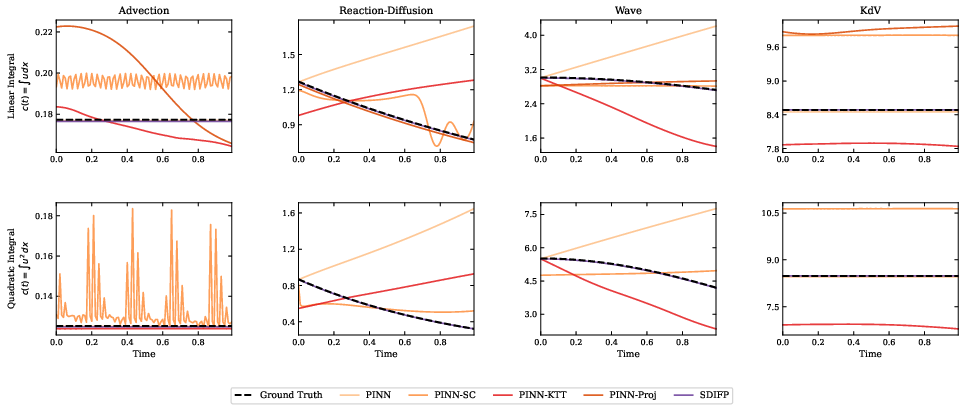

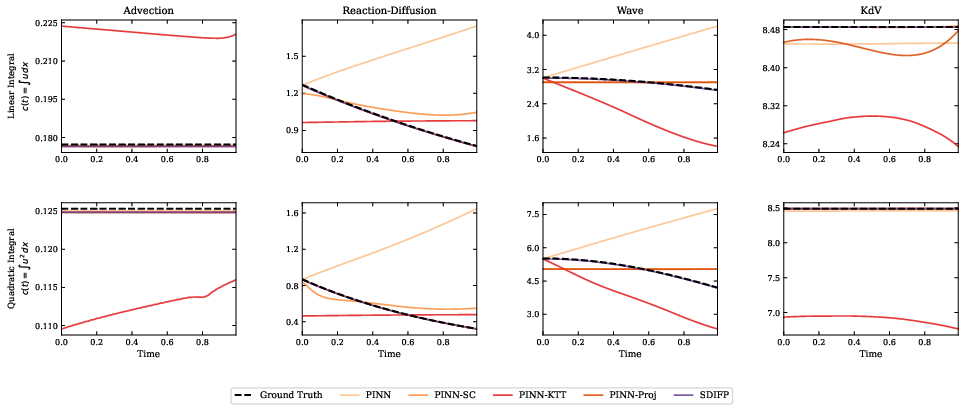

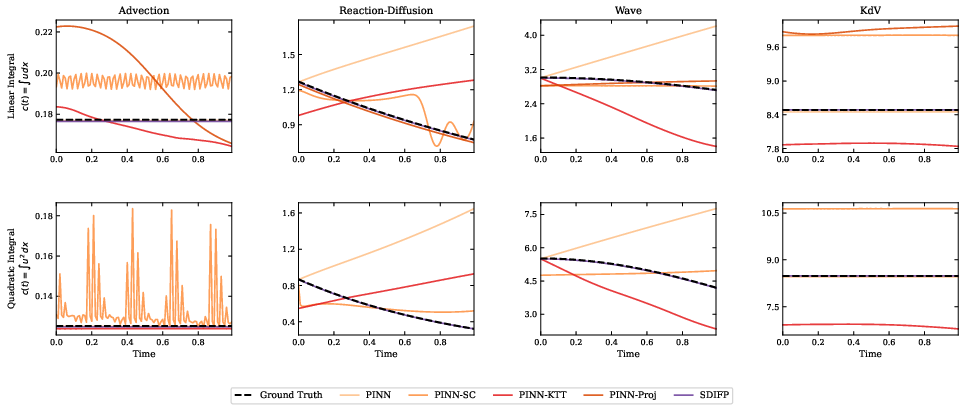

Extensive experiments on 1D, 2D, and 3D canonical problems (advection, reaction-diffusion, wave, and KdV equations) demonstrate that SDIFP maintains several orders of magnitude tighter conservation law satisfaction than baseline projection and soft constraint methods—across both uniform and mesh-free samplings.

Figure 4: Time evolution of conserved quantities under fixed grid sampling.

Figure 5: Time evolution of conserved quantities under random collocation sampling.

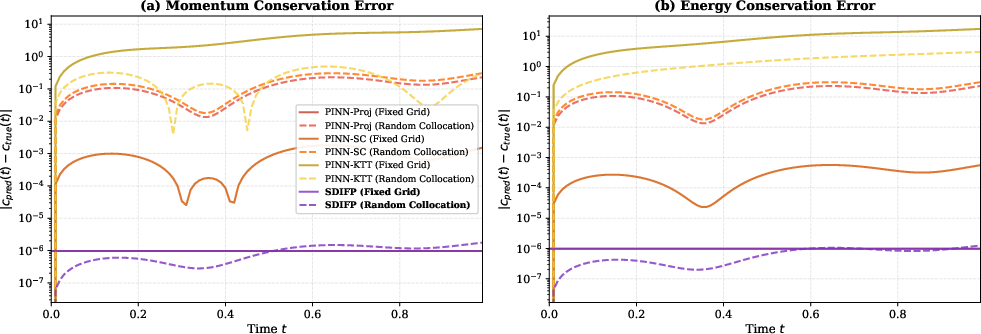

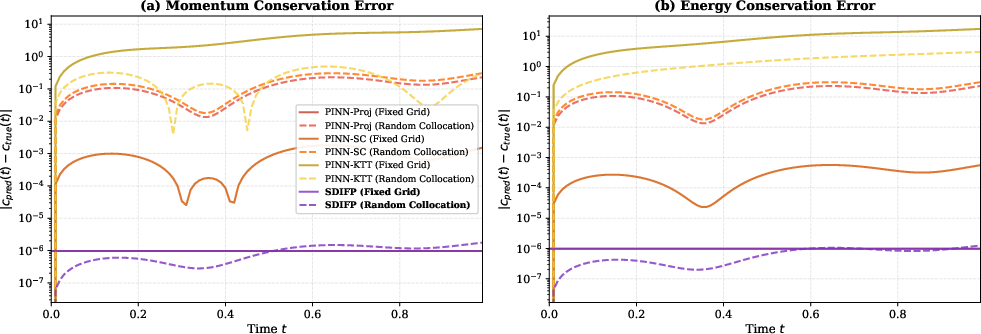

Error breakdowns show that SDIFP consistently attains absolute conservation errors on the order of machine epsilon, while mesh-based projection approaches and penalty-based PINNs exhibit error amplification under random collocation and in higher dimensions (Figures 5–7).

Figure 6: Momentum and energy conservation errors for representative PINN-based methods under fixed-grid and random collocation (1D validation).

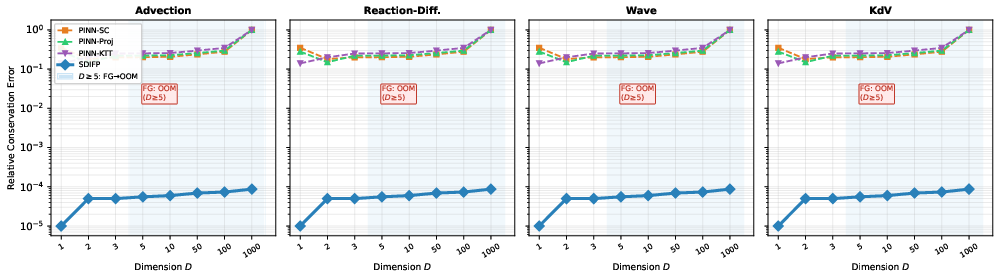

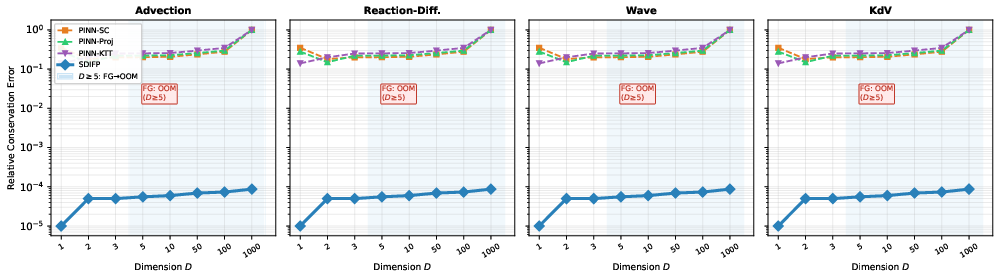

Ultra-High-Dimensional PDE Regime

A central claim is that SDIFP’s mesh-free integral enforcement enables exact conservation even as the spatial dimensionality reaches hundreds or thousands, regimes where deterministic grid-based methods are not even feasible (out-of-memory failures beyond moderate D; Figure 1 right, Figure 7). Across all tested PDEs, relative conservation error under mesh-free sampling remains nearly flat as dimension increases, in stark contrast to discrete projection and penalty approaches.

Figure 7: Relative conservation error versus D under random collocation for four PDE families; FG indicates fixed-grid OOM beyond moderate D.

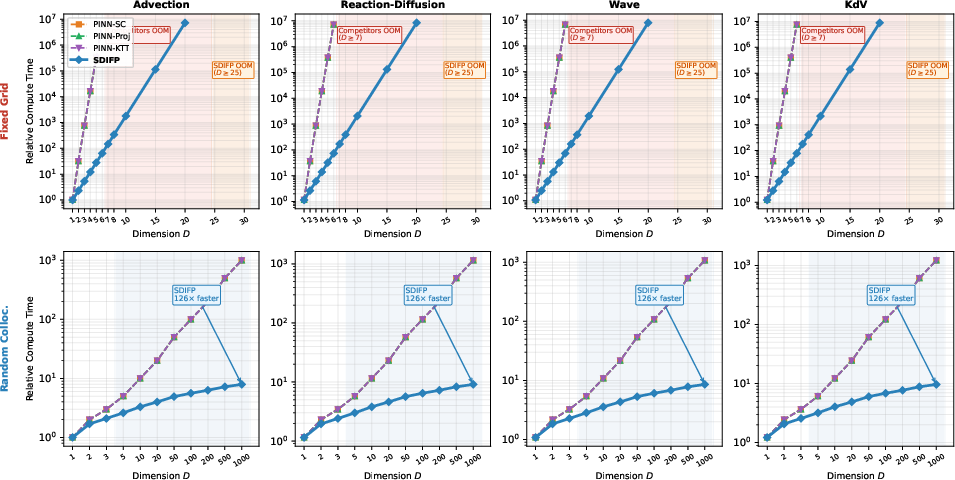

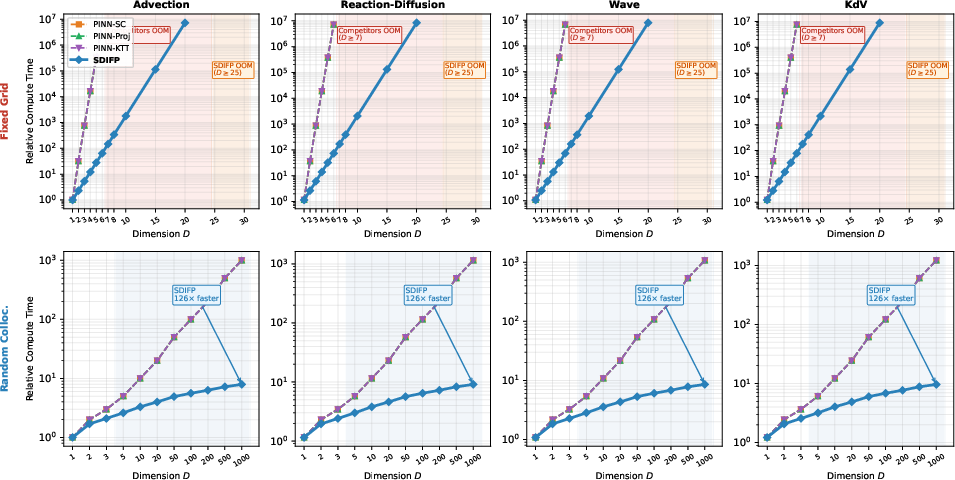

Compute time measurements highlight an additional major advantage: as dimensionality grows, the enforcement cost for discrete projections becomes prohibitive, and memory/compute requirements for differentiable implicit layers diverge, while SDIFP’s detached-quadrature and stochastic-dimension architecture keeps the cost scalable (Figure 9).

Figure 9: Relative compute time for integral-constraint enforcement versus D: fixed grid (top) and random collocation (bottom), for four PDE families.

Practical and Theoretical Implications

By enforcing conservation in function space via an analytic closed-form affine projection, SDIFP removes the grid-dependency and non-convexity bottlenecks that limit hard-constraint enforcement in mesh-free PINNs. The formalism ensures global invariants are satisfied by construction, regardless of collocation topology or domain dimension, establishing a new baseline for physical fidelity in neural PDE solvers. In practice, this yields theoretically justified, memory-efficient, and scalable algorithms with potential applications ranging from quantum many-body systems to high-dimensional kinetic theory, climate modeling, and other extreme-dimensional uncertainty quantification settings.

A limitation is the incompatibility of the affine enforcement with forced Dirichlet boundary conditions, as constant translation violates prescribed boundary values; addressing this is an avenue for future research.
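To see the conflict concretely, suppose (as an illustrative assumption) that the raw network already satisfies a Dirichlet condition $u_{\mathrm{raw}} = g$ on the boundary $\partial\Omega$. The projected output on the boundary is then

$$
\tilde{u}(x,t)\big|_{\partial\Omega} = \alpha(t)\,g(x,t) + \beta(t),
$$

which, for boundary data that is not constant in $x$, equals $g$ only when $\alpha(t) = 1$ and $\beta(t) = 0$, that is, only when no projection is applied.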

Conclusion

SDIFP provides a theoretically rigorous and scalable mechanism to enforce exact conservation in high-dimensional, mesh-free PINNs. By shifting the projection paradigm to the affine functional level and decoupling constraint satisfaction from the discretization, it simultaneously resolves two longstanding barriers: the grid curse of explicit projections and the non-convex optimization limitations of implicit layers. The empirical results validate robust, stable, and efficient enforcement of both linear and quadratic integral invariants at scale, highlighting SDIFP as a critical advance for physically consistent machine learning for PDEs and offering a foundation for future work on integrating exact invariance in neural operator methods and other constrained learning frameworks.

Reference:

Stochastic Dimension Implicit Functional Projections for Exact Integral Conservation in High-Dimensional PINNs (2603.29237)