- The paper presents a unified framework that distinguishes unique attack surfaces and vulnerabilities in virtual versus robotic assistive systems.

- It details tailored methodologies for threat modeling, secure input validation, and privacy-preserving operations across both architectures.

- Practical recommendations include context-aware security measures, sensor integrity assurance, and human-in-the-loop controls to mitigate emerging risks.

Comparative Security and Privacy Frameworks for Virtual and Robotic Assistive Systems

Introduction

Advances in artificial intelligence, machine learning, and sensor technologies have driven widespread deployment of assistive systems in domestic and healthcare environments. These systems take one of two forms: virtual (voice-enabled digital assistants that rely on cloud-based processing) or robotic (cyber-physical platforms that integrate perception, decision-making, and actuation). The paper "Security and Privacy in Virtual and Robotic Assistive Systems: A Comparative Framework" (2603.29907) systematically analyzes the security and privacy concerns inherent to these architectures and proposes a unified framework for comparing them. The framework treats privacy, cybersecurity, and safety as related but distinct dimensions of risk, recognizing both where they overlap and where each must be assessed on its own terms.

Architectural Divergence and Attack Surfaces

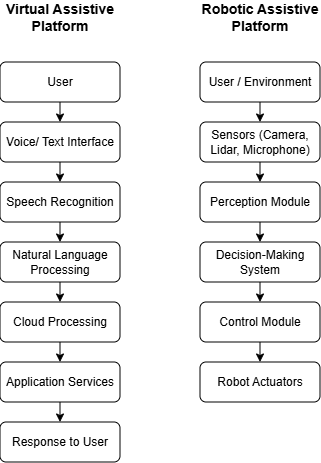

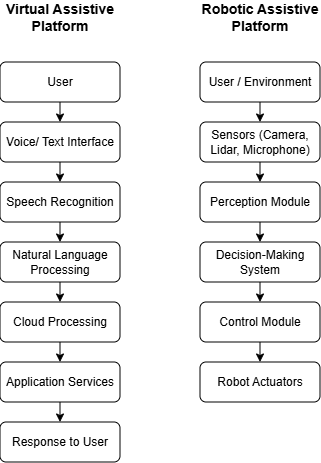

Virtual assistive systems are characterized by cloud-centric, voice-driven interaction, with primary reliance on speech recognition, NLP, and externalized computation. The critical attack vectors in these systems are associated with the user interface layer, cloud communication, and third-party service integration, elevating the risk of privacy breaches, adversarial input manipulation, and unauthorized access.

Robotic assistive systems exhibit distributed cyber-physical architectures, integrating multimodal sensing, on-device perception, local or hierarchical planning, and actuation. The attack surface for these platforms is substantially broader, encompassing sensor spoofing, perception manipulation, command injection, and both network and physical-system vulnerabilities.

Figure 1: Comparative architecture of virtual and robotic assistive systems, highlighting distinct attack surfaces in digital and cyber-physical domains.

The figure underscores that while virtual systems concentrate risk in software-mediated interfaces and remote data pathways, robotic systems expand exposure to physical world manipulation and actuator compromise.

Threat Modeling for Assistive Systems

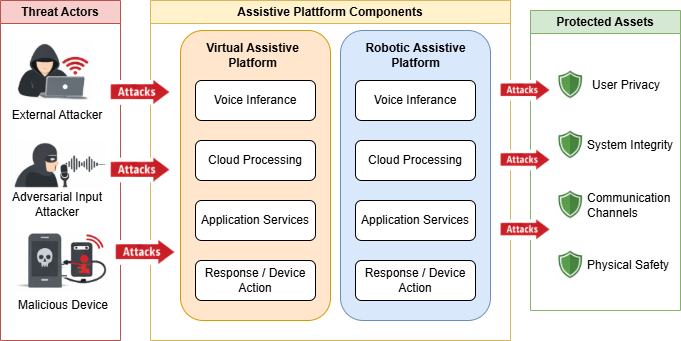

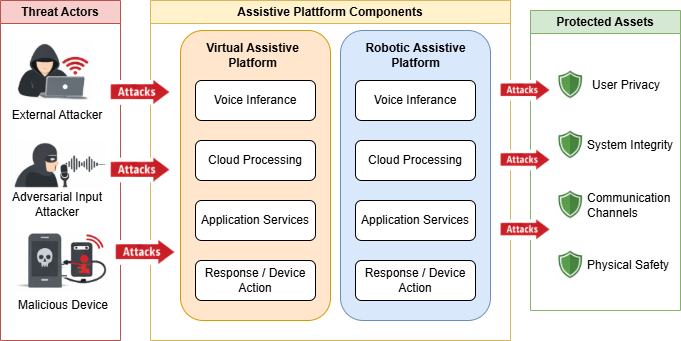

The paper offers a rigorous threat model applicable to both system classes, identifying four core assets: user data, system functionality, communication channels, and—uniquely for robotics—physical safety. Threat actors include external network adversaries, malicious insiders, compromised devices, and adversarial input injectors.

Trust boundaries are drawn at the user-device, device-cloud, perception-control, and third-party integration interfaces. These boundaries structure defense in depth: they indicate where authentication, encryption, and anomaly detection are most needed to prevent privilege escalation and lateral movement.
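One way to operationalize these boundaries is a simple gap audit: record which controls are deployed at each boundary and report what is missing. The sketch below is illustrative only. It assumes, as a simplification, that every boundary needs all three control families named above; the control labels and the deployment state are hypothetical, not taken from the paper.

```python
from dataclasses import dataclass, field

# Assumption: every boundary needs all three control families named in the
# text. A real assessment would set requirements per boundary.
REQUIRED_CONTROLS = frozenset({"authentication", "encryption", "anomaly_detection"})


@dataclass
class TrustBoundary:
    name: str
    controls: set = field(default_factory=set)  # controls currently deployed

    def missing_controls(self) -> set:
        """Required controls that are not deployed at this boundary."""
        return set(REQUIRED_CONTROLS) - self.controls


def audit(boundaries):
    """Map each boundary that has gaps to a sorted list of missing controls."""
    report = {}
    for boundary in boundaries:
        gaps = boundary.missing_controls()
        if gaps:
            report[boundary.name] = sorted(gaps)
    return report


# Hypothetical deployment state for the four boundaries named in the text.
boundaries = [
    TrustBoundary("user-device", {"authentication"}),
    TrustBoundary("device-cloud", {"authentication", "encryption"}),
    TrustBoundary("perception-control", set()),
    TrustBoundary("third-party integration",
                  {"authentication", "encryption", "anomaly_detection"}),
]

for name, gaps in audit(boundaries).items():
    print(f"{name}: missing {', '.join(gaps)}")
```

In this hypothetical state the perception-control boundary has no controls at all, which is exactly the kind of gap that matters most for robots, since that boundary sits between sensed data and actuation.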

The attack taxonomy is systematically categorized:

- Virtual systems: Adversarial voice commands, replay attacks, data interception, and API exploitation.

- Robotic systems: Sensor spoofing, perception attacks (including model evasion), command injection, and cyber-physical safety violations.

- Cross-domain: Network channel threats affecting both paradigms.

Figure 2: Threat model illustration, showing the relationships among attackers, interfaces, assets, and system boundaries for both virtual and robotic assistive systems.

This comparative model enables formal reasoning about risk profiles given attacker capabilities, system deployment context, and trust assumptions.
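As a rough illustration of that kind of reasoning, the taxonomy above can be encoded as data and queried: given a system class and an attacker's capabilities, which attacks are feasible and which assets are exposed? This is a sketch under stated assumptions. The attack names follow the taxonomy (a subset of it), but the capability labels (`network_access`, `audio_injection`, `physical_proximity`) and the attack-to-asset mappings are hypothetical choices made for this example.

```python
from dataclasses import dataclass

VIRTUAL, ROBOTIC = "virtual", "robotic"


@dataclass(frozen=True)
class Attack:
    name: str
    systems: frozenset   # system classes the attack applies to
    requires: str        # attacker capability needed (hypothetical label)
    assets: frozenset    # assets put at risk if the attack succeeds


def attack(name, systems, requires, *assets):
    return Attack(name, frozenset(systems), requires, frozenset(assets))


# Names follow the taxonomy in the text; capability and asset mappings
# are illustrative assumptions, not the paper's.
TAXONOMY = [
    attack("adversarial voice command", {VIRTUAL}, "audio_injection", "system_functionality"),
    attack("replay attack", {VIRTUAL}, "audio_injection", "user_data", "system_functionality"),
    attack("data interception", {VIRTUAL}, "network_access", "user_data", "communication_channels"),
    attack("API exploitation", {VIRTUAL}, "network_access", "user_data"),
    attack("sensor spoofing", {ROBOTIC}, "physical_proximity", "system_functionality", "physical_safety"),
    attack("perception attack", {ROBOTIC}, "physical_proximity", "system_functionality", "physical_safety"),
    attack("command injection", {ROBOTIC}, "network_access", "system_functionality", "physical_safety"),
    attack("network channel threat", {VIRTUAL, ROBOTIC}, "network_access", "communication_channels"),
]


def feasible(system, capabilities):
    """Attacks applicable to `system` that an attacker with `capabilities` can mount."""
    return [a for a in TAXONOMY if system in a.systems and a.requires in capabilities]


def threatened_assets(system, capabilities):
    """Union of assets exposed by every feasible attack."""
    exposed = set()
    for a in feasible(system, capabilities):
        exposed |= a.assets
    return exposed


# A remote attacker with network access only:
for system in (VIRTUAL, ROBOTIC):
    print(system, sorted(threatened_assets(system, {"network_access"})))
```

With the same network-only attacker, `physical_safety` appears only in the robotic profile, mirroring the paper's point that physical safety is an asset unique to robotic systems.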

Security and Privacy Challenges

Virtual Assistive Systems

The most salient vulnerabilities in virtual assistants arise from weak authentication (e.g., absence of robust speaker verification), enabling adversarial audio attacks and replay-based impersonation. The exposure of sensitive conversational data to cloud services, unencrypted communication, and weak API access controls further increase privacy risks. Theoretical and empirical evidence in the literature demonstrates the feasibility of hidden command injection (via inaudible signals) and exfiltration following cloud compromise [Bilika_2023, Zhang2017].
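Replay-based impersonation works because a previously recorded utterance is accepted as if it were spoken live. A common countermeasure, in the spirit of the challenge-response mitigation the paper recommends, is a fresh, single-use, time-limited spoken challenge that the user must repeat before a sensitive command is executed. The sketch below assumes a separate speech recognizer has already produced a text transcript; the class name, word list, and timeout are hypothetical.

```python
import secrets
import time

# Hypothetical vocabulary; a larger list or more words gives more entropy.
WORDS = ["amber", "falcon", "river", "cobalt", "meadow", "lantern", "orbit", "willow"]


class SpokenChallenge:
    """Single-use, time-limited spoken challenge (a sketch, not a product API)."""

    def __init__(self, ttl_seconds=10.0, n_words=3, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.n_words = n_words
        self.clock = clock
        self._pending = None  # (phrase, issued_at) or None

    def issue(self):
        """Pick a fresh random phrase for the user to repeat aloud."""
        phrase = " ".join(secrets.choice(WORDS) for _ in range(self.n_words))
        self._pending = (phrase, self.clock())
        return phrase

    def verify(self, transcript):
        """Check a speech-recognizer transcript against the pending challenge."""
        if self._pending is None:
            return False
        phrase, issued_at = self._pending
        self._pending = None  # consume: each challenge is accepted at most once
        if self.clock() - issued_at > self.ttl:
            return False
        normalized = " ".join(transcript.lower().split())
        return secrets.compare_digest(normalized.encode(), phrase.encode())
```

A recording captured in an earlier session contains an earlier phrase, so with 8 words and 3 slots it would match the current challenge only by chance (1 in 512). This does not stop a live attacker who can hear the challenge and synthesize a response, which is why it complements rather than replaces speaker verification.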

Assistive Robotic Systems

Robotic platforms, by virtue of their embodiment, are highly susceptible to sensor- and perception-layer attacks. Sensor spoofing and adversarial perceptual inputs can cause misclassification, unsafe behaviors, and even physical harm. Command injection—whether via compromised middleware or network interception—undermines control integrity, posing direct risks to end users. Notably, attacks that are purely informational in virtual systems escalate to safety-critical threats in the robotic context, given the physical agency of these devices.
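Command injection over middleware or a network link can be made much harder by authenticating every command and rejecting stale ones. The sketch below uses HMAC-SHA256 from the Python standard library plus a monotonically increasing sequence number, so forged, tampered, or replayed commands are dropped. It assumes a pre-shared key between controller and robot (key provisioning is out of scope), and the JSON message format is hypothetical rather than tied to any particular robotics middleware.

```python
import hashlib
import hmac
import json


def sign_command(key: bytes, seq: int, command: dict) -> dict:
    """Serialize a command deterministically and attach an HMAC tag."""
    payload = json.dumps({"seq": seq, "cmd": command}, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


class CommandVerifier:
    """Robot-side check: accept only authentic commands with a fresh sequence number."""

    def __init__(self, key: bytes):
        self._key = key
        self._last_seq = -1

    def accept(self, message: dict):
        """Return the command if authentic and fresh, else None."""
        expected = hmac.new(self._key, message["payload"].encode(), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, message["tag"]):
            return None  # forged or tampered in transit
        body = json.loads(message["payload"])
        if body["seq"] <= self._last_seq:
            return None  # replayed or reordered
        self._last_seq = body["seq"]
        return body["cmd"]
```

HMAC provides integrity and authenticity, not confidentiality, so it would normally run inside an encrypted channel. It also cannot help if the sender itself is compromised, which is one reason the paper pairs communication security with runtime safety interlocks.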

The paper's risk assessment matrix shows that attack likelihood is often higher for virtual assistants (owing to their ubiquitous connectivity), whereas impact and safety criticality are markedly higher for robotic platforms when cyber-physical vulnerabilities are exploited.
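The tradeoff can be illustrated with a conventional 5x5 likelihood-times-impact matrix. The ratings below are invented for illustration and do not reproduce the paper's matrix; the band thresholds and the rule that safety-critical threats are always rated "high" are likewise assumptions of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    threat: str
    likelihood: int            # 1 (rare) .. 5 (almost certain)
    impact: int                # 1 (negligible) .. 5 (severe)
    safety_critical: bool = False


def score(risk):
    """Classic likelihood x impact product on a 5x5 matrix."""
    if not (1 <= risk.likelihood <= 5 and 1 <= risk.impact <= 5):
        raise ValueError("likelihood and impact must be in 1..5")
    return risk.likelihood * risk.impact


def band(risk):
    s = score(risk)
    level = "high" if s >= 15 else "medium" if s >= 8 else "low"
    # Assumption: any threat that can cause physical harm is treated as high.
    return "high" if risk.safety_critical else level


# Illustrative ratings only; the paper's matrix values are not reproduced here.
risks = [
    Risk("replay of a recorded voice command", likelihood=4, impact=2),
    Risk("command injection into a robot arm", likelihood=2, impact=5, safety_critical=True),
]
for r in sorted(risks, key=score, reverse=True):
    print(f"{r.threat}: score={score(r)} band={band(r)}")
```

Even though the hypothetical robotic threat is rated half as likely, its impact pushes it above the more frequent virtual threat, and the safety-critical flag keeps it in the top band regardless of the numeric score.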

Risk Comparison and Systemic Implications

The unified comparative framework establishes that privacy, access control, and input validation are foundational for both system types; however, robotic systems additionally necessitate mechanisms for sensor integrity assurance, robust model validation, and runtime safety interlocks.
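Sensor integrity assurance can take many forms. One simple, widely used pattern, shown here as a sketch rather than the paper's method, combines redundancy with voting and a physical plausibility check: several sensors measure the same quantity, the median is used, sensors that disagree are flagged, and values that change faster than physics allows are rejected. The function names, tolerance, and rate limit are hypothetical parameters.

```python
from statistics import median


def fuse_redundant(readings, tolerance):
    """Median-vote over redundant sensors measuring the same quantity.

    Returns (value, suspects): `suspects` lists indices of sensors that
    disagree with the median by more than `tolerance`. If more than half
    disagree there is no trustworthy consensus and `value` is None.
    """
    if len(readings) < 3:
        raise ValueError("voting needs at least three redundant sensors")
    m = median(readings)
    suspects = [i for i, x in enumerate(readings) if abs(x - m) > tolerance]
    if len(suspects) > len(readings) // 2:
        return None, suspects
    return m, suspects


def plausible_step(previous, current, max_rate, dt):
    """Physical plausibility: reject jumps faster than the quantity can change."""
    return abs(current - previous) <= max_rate * dt


# One spoofed range sensor out of three is outvoted and flagged.
value, suspects = fuse_redundant([1.00, 1.02, 3.50], tolerance=0.1)
print(value, suspects)  # 1.02 [2]
```

Voting only helps while most sensors are honest; an attacker who spoofs a majority in a consistent way defeats it, which is why redundancy across different sensing modalities is preferable to identical copies of one sensor.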

Traditional cybersecurity defenses (e.g., encryption, access control) must be complemented by adversarial ML resilience techniques and fail-safe controls at the physical interface. Without such multi-layered protections, robotic assistive systems are exposed to systemic risk transfer, in which a single adversarial compromise causes both digital asset exposure and direct physical harm.

Furthermore, the need for careful balancing of usability and security is accentuated by the intended user base (individuals with disabilities or cognitive limitations), for whom complex authentication or access procedures may become prohibitive.
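One way to reconcile usability with security is context-aware step-up authentication: low-risk requests proceed without friction, and extra verification is demanded only where the stakes justify it. The sketch below is a hypothetical illustration; the tier names, the command catalogue, and the `speaker_verified` flag (assumed to come from a separate passive speaker-verification component) are all assumptions. The confirmation step itself could be adapted to the user's abilities, for example a button press or a caregiver app instead of a spoken passphrase.

```python
from enum import Enum


class Tier(Enum):
    PUBLIC = 1     # no personal data, no physical effect
    PERSONAL = 2   # exposes the user's data
    PHYSICAL = 3   # moves actuators or changes the physical environment


# Hypothetical command catalogue; a real one would come from a risk assessment.
CATALOGUE = {
    "weather": Tier.PUBLIC,
    "play_music": Tier.PUBLIC,
    "read_messages": Tier.PERSONAL,
    "call_contact": Tier.PERSONAL,
    "unlock_door": Tier.PHYSICAL,
    "move_arm": Tier.PHYSICAL,
}


def needs_confirmation(command, speaker_verified):
    """Ask for an explicit confirmation only where the risk justifies the effort."""
    tier = CATALOGUE.get(command, Tier.PHYSICAL)  # unknown commands fail closed
    if tier is Tier.PUBLIC:
        return False
    if tier is Tier.PERSONAL:
        return not speaker_verified  # a passive voice match suffices when available
    return True  # physically consequential actions are always confirmed
```

Defaulting unknown commands to the strictest tier keeps the policy fail-closed, while the common low-risk interactions never add a burden for users who may find repeated authentication difficult.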

Recommendations for Resilient Design

The paper articulates several architectural and operational recommendations:

- Secure and context-aware input validation: Integration of speaker verification and multi-modal challenge-response for virtual assistants; anomaly detection for sensor inputs in robotics.

- Privacy-preserving operation: Adoption of on-device or edge-based data processing to minimize cloud exposure.

- Robust perception systems: Adversarial training and redundancy in sensory pipelines for robots.

- End-to-end encrypted communications: Across all interfaces and protocols, particularly for devices in networked smart environments.

- Human-in-the-loop and fail-safe mechanisms: Real-time user override capabilities and emergency stop logic to prevent harm during anomalous robotic behavior.
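The human-in-the-loop and fail-safe recommendation can be sketched as a small interlock that sits between the planner and the actuators. This is an illustrative design under assumptions, not the paper's implementation: the class name, the speed limit, and the heartbeat timeout are hypothetical, and the heartbeat is assumed to come from a supervising process such as the user's control interface. It clamps commanded speed, latches an emergency stop that only an explicit human reset can clear, and fails safe if supervision goes silent.

```python
import time


class SafetyInterlock:
    """Last line of defence before the actuators (a hypothetical sketch)."""

    def __init__(self, max_speed, heartbeat_timeout, clock=time.monotonic):
        self.max_speed = max_speed
        self.timeout = heartbeat_timeout
        self.clock = clock
        self.estopped = False
        self._last_heartbeat = clock()

    def heartbeat(self):
        """Called periodically by the supervising process."""
        self._last_heartbeat = self.clock()

    def emergency_stop(self):
        """Triggered by the user, a caregiver, or an anomaly detector."""
        self.estopped = True  # latched: software cannot silently clear it

    def reset(self, human_confirmed):
        """Only an explicit human confirmation releases the latch."""
        if human_confirmed:
            self.estopped = False
            self._last_heartbeat = self.clock()

    def filter(self, requested_speed):
        """Return the speed actually sent to the actuators."""
        if self.estopped:
            return 0.0
        if self.clock() - self._last_heartbeat > self.timeout:
            self.estopped = True  # supervision lost: fail safe
            return 0.0
        return max(-self.max_speed, min(self.max_speed, requested_speed))
```

Because the interlock only ever reduces or zeroes a command, a compromised planner or an adversarially fooled perception stack can at worst request motion that is then bounded, which keeps physical harm contained even when upstream defenses fail.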

These recommendations stress the necessity of aligning system design with the unique cyber-physical risk envelope of the assistive technology deployed.

Future Directions and Open Challenges

The comparative risk modeling opens several avenues for future research, notably the quantitative formalization of safety and security tradeoffs, dataset-driven attack simulation, and empirical validation of the proposed mitigation strategies. Extending the framework to new modalities (e.g., AR/VR assistive systems, federated adaptive learning on edge devices) is a natural next step for the field.

Standardized, independently evaluated security certification processes for assistive technologies will also become essential as these systems are deployed more widely in safety-critical environments.

Conclusion

The study delivers a robust comparative framework for assessing security and privacy risks in virtual and robotic assistive systems, contextualized within the broader paradigm of cyber-physical system security (2603.29907). By characterizing attack surfaces, threat actors, and architectural divergence, it enables systematic risk assessments and guides the design of more resilient, trustworthy assistive technologies. The delineated recommendations for secure input validation, privacy preservation, model robustness, and user-centric safety controls provide a technical blueprint for future innovation in both virtual and embodied assistive platforms. Such comparative analysis is imperative to ensure that the increasing autonomy and integration of assistive technologies does not outpace the development of requisite security and safety assurances.