Short proofs in combinatorics and number theory

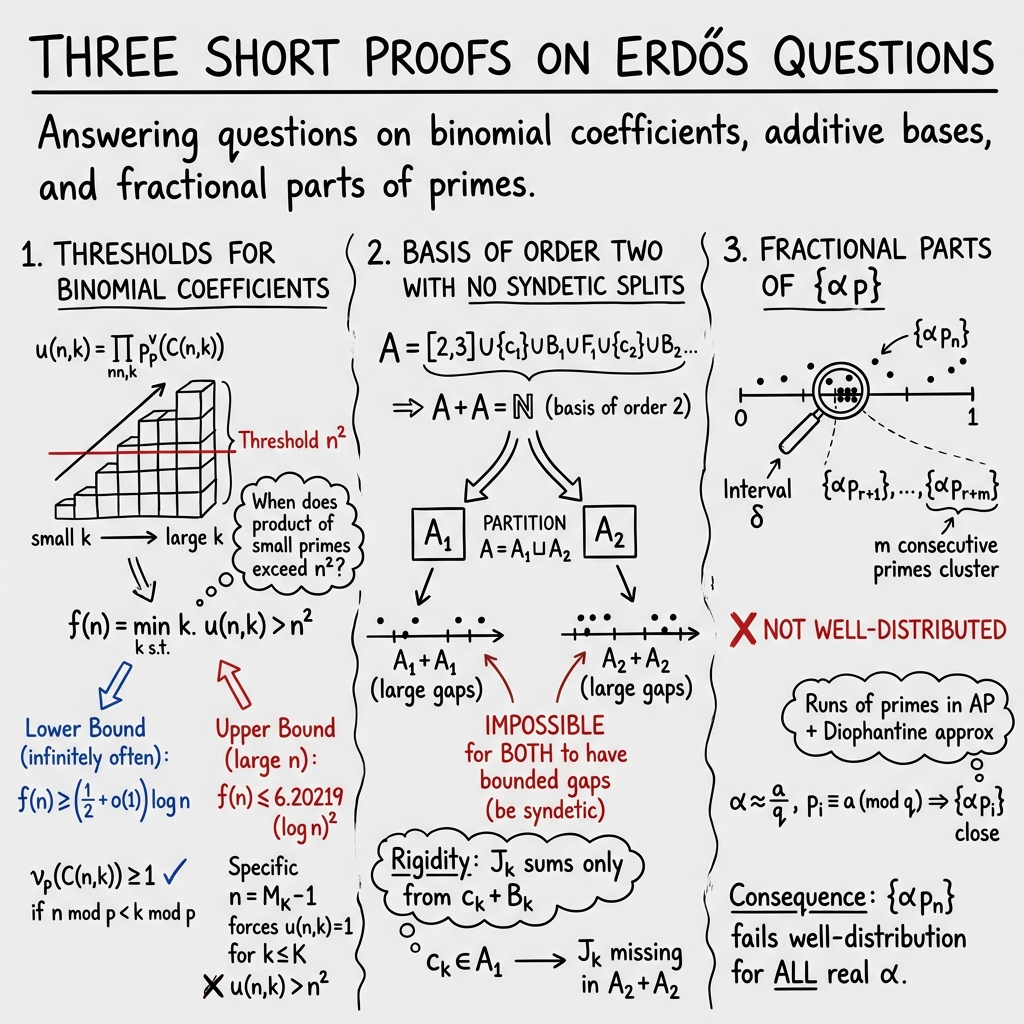

Abstract: We give a triplet of short proofs, each of which answers a question raised by Erdős. The first concerns the small prime factors of $\binom{n}{k}$, the second concerns whether an additive basis $A$ can always be split into pieces $A_1$ and $A_2$ such that each of $A_i + A_i$ has bounded gaps, and the final concerns whether $\{\alpha p\}$ is "well-distributed" in the sense introduced by Hlawka and Petersen. In each case, the proof is due entirely to an internal model at OpenAI.


Explain it Like I'm 14

Explaining “Short proofs in combinatorics and number theory” (for a 14-year-old)

What is this paper about?

This paper gives three short, clever solutions to old questions asked by the famous mathematician Paul Erdős. All three questions are about patterns in numbers:

- how small prime numbers show up in certain combinations,

- how to split a special kind of number set into two parts, and

- how evenly numbers get spread around a circle when you multiply an angle by prime numbers.

The fun twist: the proofs were found by an AI model (the abstract credits them entirely to an internal model at OpenAI), and the human authors polished the write-ups.

The three questions and what the paper shows

1) Small primes in binomial coefficients

- Goal (in simple terms): Consider the binomial coefficient C(n, k) (read as “n choose k”), which counts how many ways you can pick k items from n. Every number breaks down into prime factors. The question is: if you only pay attention to the primes up to k, how big is the product of the powers of these small primes inside C(n, k)? And how big does k have to be before this “small-prime part” is larger than n²?

- Main finding: You don’t have to go very far. They prove that, for large n, a k of size about (log n)² is enough. In the other direction, for infinitely many n you need k to be at least about (1/2)·log n. In short, the threshold lies between about log n and (log n)², not at anything like a power of n.
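In symbols, the statements above can be written as follows. This is our reading, assembled from the Knowledge Gaps list further down. In particular, we take u(n,k) to multiply over primes p ≤ k, which is an interpretation rather than a quotation from the paper. The leading constant 24/(π² − 6) is about 6.20.

```latex
\begin{aligned}
u(n,k) &= \prod_{p \le k,\ p \text{ prime}} p^{\,v_p\left(\binom{n}{k}\right)},
  \qquad f(n) = \min\{\, k : u(n,k) > n^2 \,\},\\
f(n) &\le \Bigl(\tfrac{24}{\pi^2-6} + o(1)\Bigr)(\log n)^2 \quad (n \to \infty),\\
f(n_j) &\ge \Bigl(\tfrac{1}{2} + o(1)\Bigr)\log n_j \quad \text{for infinitely many } n_j .
\end{aligned}
```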

2) Splitting a basis of order 2 (sumset question)

- Goal (in simple terms): A set A of whole numbers is a “basis of order 2” if every large enough number can be written as the sum of two numbers from A. Erdős asked: can you always split A into two parts A_1 and A_2 so that both A_1 + A_1 and A_2 + A_2 (the sets of sums you get from each half) have no long gaps (i.e., are “syndetic”)?

- Main finding: No. The authors build a specific set A that is a basis of order 2. For any way you split it into A_1 and A_2, at least one of the two sumsets A_1 + A_1 and A_2 + A_2 has arbitrarily long holes. That means you can’t make both halves “densely cover” the numbers with sums at the same time.
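To make “basis of order 2” and “long holes” concrete, here is a small Python sketch. The example set is our choice, not the set constructed in the paper. It is a standard thin basis: the numbers whose base-4 digits are all 0 or 1, together with those whose base-4 digits are all 0 or 2. Equivalently, these are the numbers whose binary digits sit only at even positions or only at odd positions. All helper names are hypothetical.

The obvious split of this A into its two halves already leaves long holes in one sumset. That says nothing about other splits, though. The paper's point is that its A defeats every split.

```python
# Illustration only: a classical thin basis of order 2 built from binary digits.
# This is NOT the paper's construction; it just makes the definitions concrete.

EVEN_BITS = int("01" * 16, 2)  # mask with bits 0, 2, 4, ..., 30 set
ODD_BITS = EVEN_BITS << 1      # mask with bits 1, 3, 5, ..., 31 set


def thin_basis(limit):
    """Return (E, O): numbers <= limit using only even-position / odd-position binary digits."""
    E = [n for n in range(limit + 1) if n & ODD_BITS == 0]
    O = [n for n in range(limit + 1) if n & EVEN_BITS == 0]
    return E, O


def sumset_upto(S, hi):
    """All sums a + b <= hi with a, b in S (a = b allowed)."""
    s = sorted(set(S))
    out = set()
    for i, a in enumerate(s):
        for b in s[i:]:
            if a + b > hi:
                break
            out.add(a + b)
    return out


def covers_interval(A, lo, hi):
    """True if every integer in [lo, hi] lies in A + A (only a finite check of the basis property)."""
    sums = sumset_upto(A, hi)
    return all(n in sums for n in range(lo, hi + 1))


def longest_gap(S, lo, hi):
    """Length of the longest run of consecutive integers in [lo, hi] that are missing from S."""
    best = run = 0
    for x in range(lo, hi + 1):
        run = 0 if x in S else run + 1
        best = max(best, run)
    return best


if __name__ == "__main__":
    N = 1000
    E, O = thin_basis(N)
    A = sorted(set(E) | set(O))
    # n = (n & EVEN_BITS) + (n & ODD_BITS), so A + A contains every n in [0, N].
    print("A + A covers [0, N]:", covers_interval(A, 0, N))
    # 0 lies in both halves; give it to E so that (E, A2) is a genuine partition of A.
    A2 = [n for n in O if n > 0]
    print("longest gap in A_1 + A_1:", longest_gap(sumset_upto(E, N), 0, N))
    print("longest gap in A_2 + A_2:", longest_gap(sumset_upto(A2, N), 0, N))
```

The check `covers_interval` can only confirm coverage up to a finite N. “Every large enough number” is a statement that no finite computation settles.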

3) Are the fractional parts well-distributed?

- Goal (in simple terms): Pick a real number α (like an angle). Multiply it by the prime numbers p_1 = 2, p_2 = 3, p_3 = 5, …, and look at the fractional parts {α p_n}. Think of placing dots around a circle of circumference 1 at the positions {α p_n}. Are these dots always “evenly spread” in a strong, sliding-window sense called “well-distributed”?

- Main finding: No. For every real α, the sequence {α p_n} is not well-distributed in that strong sense. Infinitely often, a bunch of consecutive dots cluster in a tiny arc, which breaks the “even spread” requirement.
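In symbols, the Hlawka–Petersen definition reads as follows (standard formulation; the notation x_m, n, k, I is ours):

```latex
(x_m)_{m \ge 1} \text{ is well-distributed mod } 1
\iff
\lim_{k \to \infty} \frac{\#\{\, n < m \le n + k : \{x_m\} \in I \,\}}{k} = |I|
\ \text{ uniformly in } n \ge 0, \text{ for every interval } I \subseteq [0,1).
```

Taking only the starting point n = 0 recovers ordinary equidistribution mod 1. For irrational α, {α p_n} is equidistributed (Vinogradov). So for irrational α, the theorem is exactly about the uniformity in n failing.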

What were the researchers trying to figure out?

Here are the core questions in approachable terms:

- How quickly do small primes “pile up” inside the number C(n, k) as k grows?

- Can you always split a “sum-friendly” set into two pieces so each piece is still sum-friendly with no big gaps?

- Do the points {α p_n} (fractional parts of a fixed number times the primes) spread out evenly in every stretch of consecutive terms?

How did they approach the problems?

To make the ideas concrete, imagine these analogies and simple tools:

1) Counting small primes in C(n, k)

- Key idea: Each prime p ≤ k contributes some number of copies (a power of p) to C(n, k). A classical tool, Legendre’s formula, counts how many times p divides a factorial. Since C(n, k) = n!/(k!(n − k)!), this tells you how many times p divides C(n, k).

- Analogy: Think of k as a sliding window and each prime p as a color. As you move k, you count how often color p shows up in the factorization. Averaging over many values of k (up to about (log n)²) guarantees that for at least one such k, the small primes together outweigh n².

- For the lower bound, they build special values of n for which the small-prime part of C(n, k) stays at most n² for every k below about (1/2)·log n. This shows that sometimes you truly need k of size at least around (1/2)·log n.
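The Legendre-formula ingredient can be checked numerically. The Python sketch below (function names are ours, not the paper's) does two things:

- It computes v_p of a binomial coefficient from Legendre's formula.
- It verifies, on a small range, that the first (i = 1) term of that formula equals the indicator 1_{n mod p < k mod p}.

Every higher term floor(n/p^i) − floor(k/p^i) − floor((n−k)/p^i) is 0 or 1, so v_p is at least that indicator. This illustrates only the ingredient, not the paper's averaging argument.

```python
import math


def primes_upto(m):
    """Primes <= m via a simple sieve of Eratosthenes."""
    if m < 2:
        return []
    sieve = bytearray([1]) * (m + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(m) + 1):
        if sieve[i]:
            sieve[i * i :: i] = bytearray(len(range(i * i, m + 1, i)))
    return [i for i in range(m + 1) if sieve[i]]


def legendre(m, p):
    """Exponent of the prime p in m!, via Legendre's formula sum_{i>=1} floor(m / p^i)."""
    total, pk = 0, p
    while pk <= m:
        total += m // pk
        pk *= p
    return total


def vp_binom(n, k, p):
    """v_p(C(n, k)) = v_p(n!) - v_p(k!) - v_p((n-k)!), for 0 <= k <= n."""
    return legendre(n, p) - legendre(k, p) - legendre(n - k, p)


def first_level_term(n, k, p):
    """The i = 1 term of Legendre's formula for C(n, k)."""
    return n // p - k // p - (n - k) // p


def indicator(n, k, p):
    """1 if n mod p < k mod p (a carry in the lowest base-p digit of k + (n - k)), else 0."""
    return 1 if n % p < k % p else 0


if __name__ == "__main__":
    small_primes = primes_upto(30)
    for n in range(1, 120):
        for k in range(n + 1):
            for p in small_primes:
                assert first_level_term(n, k, p) == indicator(n, k, p)
                assert vp_binom(n, k, p) >= indicator(n, k, p)
    print("first-level identity and lower bound hold on the tested range")
```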

2) Building a set that can’t be nicely split

- Key idea: They construct A in “stages,” placing blocks of numbers at carefully spaced locations (scaled by powers of 5), plus a single “key” number c_k at each stage k.

- Analogy: Think of building a Lego city where certain buildings (numbers) can only be combined in one specific way to make target streets (intervals of sums). In particular, at each stage there is an interval of sums whose members can only be formed as c_k + b, with b from one specific block. If you color c_k red, then only red + red sums can land in that interval; if you color it blue, only blue + blue sums can. Infinitely many of the c_k share one color. The other color’s sumset therefore misses infinitely many such intervals, which forces big gaps.

3) Showing {α p_n} clusters too much to be well-distributed

- Key idea: Two powerful tools:

- Dirichlet’s approximation theorem: Any real number α is very close to some fraction a/q with a not-too-big denominator q.

- Modern prime results (Maynard, Tao, and a corollary by Banks–Freiberg–Turnage-Butterbaugh): For every m, there are infinitely many runs of m consecutive primes that all sit in the same remainder class mod q. These runs are bunched within a short interval, of length at most about C_m·q, where C_m depends only on m.

- Analogy: Think of the fractional parts {α p_n} as points on a clock. Suppose several consecutive primes are all the same “type” mod q. Then their differences are multiples of q, and since α ≈ a/q, α times each difference is very nearly a whole number. So multiplying by α makes those points land close together on the clock. This clustering keeps happening, so the dots aren’t evenly spread in every moving window, which breaks the “well-distributed” rule.
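The clustering mechanism can be replayed on a small scale with the Python sketch below; all helper names are ours. It works in three steps:

1. Dirichlet's theorem supplies q ≤ Q and an integer b with |qα − b| ≤ 1/Q. We write b for the numerator so that a stays free for the residue class.
2. Suppose consecutive primes p_{r+1}, …, p_{r+m} are all congruent mod q. Then each difference p_{r+i} − p_{r+1} is a multiple of q, so α(p_{r+i} − p_{r+1}) ≡ (α − b/q)(p_{r+i} − p_{r+1}) (mod 1).
3. Hence the points {α p} lie in an arc of length at most |α − b/q|·(p_{r+m} − p_{r+1}).

The code finds such a run by brute force. It does not reproduce the Maynard–Tao input that guarantees infinitely many short runs.

```python
import math


def primes_upto(m):
    """Primes <= m via a simple sieve of Eratosthenes."""
    if m < 2:
        return []
    sieve = bytearray([1]) * (m + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(m) + 1):
        if sieve[i]:
            sieve[i * i :: i] = bytearray(len(range(i * i, m + 1, i)))
    return [i for i in range(m + 1) if sieve[i]]


def dirichlet(alpha, Q):
    """Smallest q in [1, Q] with |q*alpha - b| <= 1/Q, b the nearest integer (exists by Dirichlet)."""
    for q in range(1, Q + 1):
        b = round(q * alpha)
        if abs(q * alpha - b) <= 1 / Q:
            return q, b
    raise RuntimeError("no q found (only possible through floating-point error)")


def first_run(primes, q, m):
    """First block of m consecutive primes (in the given list) that are all congruent mod q."""
    for i in range(len(primes) - m + 1):
        block = primes[i : i + m]
        if all((p - block[0]) % q == 0 for p in block):
            return block
    return None


def min_arc(points):
    """Length of the shortest arc of the circle R/Z containing all the points (taken mod 1)."""
    xs = sorted(x % 1.0 for x in points)
    gaps = [b - a for a, b in zip(xs, xs[1:])] + [xs[0] + 1.0 - xs[-1]]
    return 1.0 - max(gaps)


if __name__ == "__main__":
    alpha, Q, m = math.sqrt(2), 10, 2
    q, b = dirichlet(alpha, Q)
    run = first_run(primes_upto(10_000), q, m)
    spread = min_arc([alpha * p for p in run])
    bound = abs(alpha - b / q) * (run[-1] - run[0])
    print(f"q={q}, b={b}, run={run}, spread={spread:.6f}, bound={bound:.6f}")
```

With α = √2 and Q = 10, this picks q = 5, b = 7 and the run [139, 149]. The two points {139√2} and {149√2} are 10·(√2 − 7/5) ≈ 0.142 apart. That matches the bound exactly, because a run of two primes has only one difference.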

Why do these results matter?

- For binomial coefficients: They show that small primes dominate surprisingly quickly, once k reaches about (log n)². This sharpens previous estimates and deepens our understanding of how primes factor into combinatorial quantities like “n choose k.”

- For additive bases: The construction answers an Erdős question with a clear “no.” Even if a set can make every large number as a sum of two elements, you can’t always split it so both halves still make sums without large gaps.

- For fractional parts with primes: It settles a long-standing question of Erdős decisively: no matter which real number α you choose, {α p_n} fails this strong version of “even spread.” It also shows how modern breakthroughs about prime patterns can solve problems in a completely different area (uniform distribution).

Big picture: impact and implications

- These are clean, elegant answers to classic questions—each tied to Erdős—using short arguments.

- The third result demonstrates the power of recent advances on prime gaps and patterns to resolve broader questions about distribution on the unit interval.

- The paper also hints at a new way of doing math research: using AI to generate proof ideas that humans refine. That’s exciting because it can accelerate discoveries and offer fresh angles on old problems.

Overall, the paper blends clever counting, careful constructions, and modern prime number theory to give crisp, easy-to-state answers with significant mathematical consequences.

Knowledge Gaps

Below is a concise list of the paper’s unresolved knowledge gaps, limitations, and open questions, organized by topic to guide future investigation.

Thresholds for small prime factors of binomial coefficients

- Tight order of f(n): The paper proves f(n) ≤ (C + o(1))(log n)², with C = 24/(π² − 6), and f(n_j) ≥ (1/2 + o(1)) log n_j for infinitely many n_j. It remains open to determine the true order of growth of f(n): is f(n) = Θ(log n), Θ((log n)²), or something in between, in the worst case, on average, or for typical n?

- Matching lower bounds: The lower bound is exhibited only on the special sequence n = M_K − 1. Can one prove f(n) ≥ c log n for a density-1 set of n, or even infinitely often with a larger constant c > 1/2? Conversely, can one construct n with f(n) ≥ c (log n)² for some c > 0?

- Limsup/liminf behavior: Determine the limsup and liminf of f(n)/log n and of f(n)/(log n)², and characterize the distribution of f(n) across n.

- Constant optimization: The constant 24/(π² − 6) in the upper bound arises from a coarse averaging argument and a first-level truncation of Legendre’s formula. Can the constant be reduced, possibly by exploiting higher-power contributions in v_p or sharper weighting of primes?

- Methodological refinement: The proof lower-bounds v_p by a single first-level indicator 1_{n mod p < k mod p}, discarding higher-order terms. Can using Kummer’s theorem or a full multi-level analysis of v_p yield sharper (possibly O(log n)) bounds?

- Effectivity and explicit ranges: The upper bound holds for “n sufficiently large” with o(1) terms. Can one give fully explicit, effective constants and ranges for which the bound is guaranteed?

- Robustness under hypotheses: Earlier bounds under RH/Density Hypothesis are polynomial. Can stronger hypotheses (e.g., Elliott–Halberstam, or distribution of primes in short intervals/APs) imply improved polylogarithmic or even O(log n) behavior?

- Variants and generalizations:

- Change the threshold: Study f_α(n) = min{k : u(n,k) > n^α} for general α > 0.

- Distinct-prime product: Replace u(n,k) by the squarefree kernel over p ≤ k dividing C(n,k).

- Other combinatorial coefficients: Extend to multinomial coefficients or related arithmetic combinatorial quantities.

- Algorithmics: Given n, can one efficiently locate a k achieving u(n,k) > n² within the proved bounds?

Basis of order two with no syndetic splits

- Density and sparsity: The constructed basis A is very structured and sparse. Can one produce such a counterexample A with prescribed density properties (e.g., positive upper density or controlled growth |A ∩ [1,N]| ~ N^θ), or optimize density subject to the “no syndetic split” property?

- Quantitative gap growth: The proof guarantees arbitrarily long gaps in one monochromatic sumset for every 2-coloring, but does not quantify a uniform lower bound on gap lengths as a function of partition size or scale. Can one obtain explicit, quantitative lower bounds on the gap growth rate uniform over all partitions?

- Strengthening the obstruction: Beyond non-syndeticity, can one force stronger failures (e.g., not piecewise syndetic, upper Banach density 0 of the monochromatic sumset, or specific structural obstructions)?

- Characterization problem: Classify (or find broad families of) additive bases of order 2 that admit a syndetic split versus those that do not. What necessary/sufficient structural conditions govern splittability into two syndetic sumsets?

- More colors and higher order: For r-colorings (r ≥ 3), must at least one monochromatic A_i + A_i fail to be syndetic for the same A? For bases of higher order h ≥ 3, does there exist A such that for every 2-coloring, at least one monochromatic h-fold sumset is non-syndetic?

- Positive results and thresholds: While the paper gives a negative example, for which classes of bases A is a syndetic split always possible? Determine thresholds on additive structure/density ensuring splittability.

Fractional parts of {α p}

- Quantitative non-well-distribution: The proof shows failure of well-distribution (WD) but does not quantify the discrepancy. Can one prove a universal quantitative lower bound, e.g., limsup_{k→∞} sup_{n,I} |#{n < m ≤ n+k : {α p_m} ∈ I} − |I|·k| / k = 1 for every α?

- Frequency and density of clustering: Maynard–Tao results guarantee infinitely many long clusters; the proof does not bound how often such clusters occur. Can one estimate the density or frequency of these clustering blocks as a function of m, q, or α?

- Effectivity of constants: The argument relies on C_m from deep prime gap results but does not provide explicit values. Can one make the non-WD conclusion fully effective with explicit quantitative parameters (δ, m, q, and the number of occurrences up to X)?

- Alternative proofs: Is there a more elementary or softer argument (perhaps leveraging equidistribution plus known irregularities) to show non-WD for irrational α without invoking heavy prime gap machinery?

- Generalizations:

- Replace p_n by primes in specified sets (e.g., in APs, in short intervals) or by polynomials evaluated at primes; analyze WD for sequences {α f(p)} (e.g., f(p) = p^k, log p, smooth functions).

- Study analogous WD failures for sequences on higher-dimensional tori.

- Interface with equidistribution: For irrational α, {α p} is equidistributed mod 1 (Vinogradov), yet not WD. Characterize precisely which stronger distribution properties fail and in what quantitative sense, beyond WD.

Cross-cutting and methodological

- Empirical landscape: No computational/empirical data are provided. Numerical evidence on f(n), on densities/structures of admissible bases A, and on discrepancy of {α p} could guide sharper conjectures.

- Structural robustness: Each proof is purpose-built. Understanding the robustness of these methods (e.g., which features are essential vs. artifacts) could enable generalizations or stronger bounds.

- Presentation gaps: Several displayed formulas in Section 2 contain typographical inconsistencies (e.g., missing brackets in Legendre’s formula lines), making exact replication nontrivial. A fully rigorous, error-checked write-up with explicit constants would aid verification and extension.

Practical Applications

Overview

This paper delivers three concise advances in combinatorics and number theory and documents AI-generated proofs. While the results are primarily theoretical, they have actionable implications for software tooling, algorithm design, numerical simulation practices, education, and research workflows. Below, we translate each result, plus the AI methodology, into concrete applications, grouped by immediacy and annotated with sectors and feasibility notes.

Immediate Applications

Small prime factors of binomial coefficients (polylogarithmic threshold for u(n,k))

- Build fast routines for bounding prime power content of binomial coefficients

- Sector: Software (computer algebra systems), HPC

- Application: Implement a function that, for large n, only searches k (and hence primes) up to Y ≈ (24/(π² − 6))·(log n)² ≈ 6.20·(log n)² to certify that the “small-prime” part of a binomial coefficient exceeds n². This short-circuits exhaustive prime valuation scans in exact arithmetic workflows.

- Tools/Products/Workflows: Additions to FLINT/NTL/Pari/GP/SageMath to:

- compute u(n,k) quickly for k ≤ Y,

- provide certificates that u(n,k) > n² using Legendre valuations,

- expose heuristics that focus on small primes first to accelerate binomial factorization tasks.

- Assumptions/Dependencies: Asymptotic bounds rely on the prime number theorem; constants guarantee performance for “sufficiently large” n. For small n, include a fallback exact path.

- Optimize combinatorial divisibility checks and p-adic valuation queries

- Sector: Software (CAS), Education

- Application: When proving/computing integrality or divisibility properties of combinatorial sums, use the (log n)² cap to limit how many primes must be considered to certify large valuation lower bounds.

- Tools/Products/Workflows: CAS “valuation-aware” simplification modules that first accumulate small-prime exponents; structured logs/certificates for formal proof archives.

- Assumptions/Dependencies: Correctness is unconditional; performance benefits grow with n.

- Testbed datasets for algorithmic research on binomial arithmetic

- Sector: Academia (algorithm design), Software

- Application: Package instances (n, k) around the proved threshold for benchmarking integer-factorization routines for binomial coefficients.

- Tools/Products/Workflows: Public datasets and reproducible scripts (Python/Sage) for validating heuristic vs. guaranteed thresholds.

- Assumptions/Dependencies: None beyond standard big-integer libraries.
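The certifying routine from the first item in this list could start from a brute-force Python sketch like the one below. It rests on several assumptions:

- u(n,k) is the product of p^{v_p(C(n,k))} over primes p ≤ k (our reading of the definitions above).
- Valuations come from Legendre's formula.
- The routine returns the first k with u(n,k) > n², together with the nonzero exponents as an independently checkable certificate.

The function names and the search cap `k_max` are hypothetical, not an existing FLINT/NTL/Pari/Sage API. For large n one would set `k_max` near the (24/(π² − 6))(log n)² bound, which is only guaranteed for sufficiently large n.

```python
import math


def primes_upto(m):
    """Primes <= m via a simple sieve of Eratosthenes."""
    if m < 2:
        return []
    sieve = bytearray([1]) * (m + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(m) + 1):
        if sieve[i]:
            sieve[i * i :: i] = bytearray(len(range(i * i, m + 1, i)))
    return [i for i in range(m + 1) if sieve[i]]


def legendre(m, p):
    """Exponent of the prime p in m! (Legendre's formula)."""
    total, pk = 0, p
    while pk <= m:
        total += m // pk
        pk *= p
    return total


def vp_binom(n, k, p):
    """v_p(C(n, k)) for 0 <= k <= n."""
    return legendre(n, p) - legendre(k, p) - legendre(n - k, p)


def small_prime_exponents(n, k):
    """Exponent vector {p: v_p(C(n, k))} over primes p <= k (our reading of u(n, k))."""
    return {p: vp_binom(n, k, p) for p in primes_upto(k)}


def u(n, k):
    """u(n, k) = prod over primes p <= k of p^{v_p(C(n, k))}; the empty product is 1."""
    return math.prod(p ** e for p, e in small_prime_exponents(n, k).items())


def f_with_certificate(n, k_max=None):
    """Smallest k <= k_max (default n) with u(n, k) > n^2, plus its nonzero exponents; else None."""
    k_max = n if k_max is None else k_max
    for k in range(1, k_max + 1):
        exps = small_prime_exponents(n, k)
        if math.prod(p ** e for p, e in exps.items()) > n * n:
            return k, {p: e for p, e in exps.items() if e > 0}
    return None


def check_certificate(n, k, cert):
    """Re-verify a certificate: each exponent matches Legendre and the product exceeds n^2."""
    return (all(p <= k and vp_binom(n, k, p) == e for p, e in cert.items())
            and math.prod(p ** e for p, e in cert.items()) > n * n)
```

For example, `f_with_certificate(10)` returns `(7, {2: 3, 3: 1, 5: 1})`, since C(10, 7) = 120 = 2³·3·5 > 10². Keeping the certificate separate from the search means a verifier only needs Legendre's formula, not the search itself.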

Additive basis of order 2 with no syndetic splits (explicit counterexample)

- Stress-testing for partition-based algorithms that target dense pairwise-sum coverage

- Sector: Software (algorithm testing), Theoretical CS

- Application: Use the explicit A construction to build worst-case inputs for:

- sharded/partitioned 2-sum coverage algorithms,

- streaming/approximation algorithms attempting to preserve “coverage density” under splits.

- Tools/Products/Workflows: A generator for A (using the 5-adic scaling with intervals B_k, F_k and points c_k) for arbitrary depth, and a test harness to verify coverage gaps in A_i + A_i after arbitrary partitions.

- Assumptions/Dependencies: Construction is explicit and unconditional. Adjust constants if porting to bounded integer ranges.

- Design guidance for partitioning policies that aim for contiguous coverage

- Sector: Operations research, Systems/DB sharding

- Application: As a cautionary example, demonstrate that “splitting to keep both shards dense in pairwise sums” is impossible for some sets; motivates cross-shard cooperation when contiguous coverage is required.

- Tools/Products/Workflows: Internal policy notes and simulation dashboards showing inevitable gap growth under arbitrary 2-way partitions on A-like inputs.

- Assumptions/Dependencies: The theorem is an existence statement, but the proof supplies an explicit A; use it to instantiate realistic-sized demonstrations.

- Teaching modules and assignments in additive combinatorics

- Sector: Education

- Application: Turn the construction and rigidity lemma into problem sets exploring additive bases, sumsets, and syndeticity.

- Tools/Products/Workflows: Jupyter notebooks visualizing A, A+A, and gaps; auto-graders that verify student-implemented versions of the construction.

- Assumptions/Dependencies: None.
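The test harness mentioned in the first item of this subsection could start from something like the following Python sketch. It does not generate the paper's A, since the summary gives only the outline of the 5-adic construction. Instead, it takes any finite set and a 2-coloring, and reports the longest hole in each monochromatic sumset within a window.

The function names and the random-search strategy are our own. A finite search over random splits can only illustrate the “every split fails” statement, never prove it.

```python
import random


def sumset_upto(S, hi):
    """All sums a + b <= hi with a, b in S (a = b allowed)."""
    s = sorted(set(S))
    out = set()
    for i, a in enumerate(s):
        for b in s[i:]:
            if a + b > hi:
                break
            out.add(a + b)
    return out


def longest_gap(S, lo, hi):
    """Longest run of consecutive integers in [lo, hi] missing from S."""
    best = run = 0
    for x in range(lo, hi + 1):
        run = 0 if x in S else run + 1
        best = max(best, run)
    return best


def monochromatic_gaps(A, color, lo, hi):
    """(gap in A_0 + A_0, gap in A_1 + A_1) for the split A_c = {a in A : color(a) == c}."""
    parts = ([a for a in A if color(a) == 0], [a for a in A if color(a) == 1])
    return tuple(longest_gap(sumset_upto(part, hi), lo, hi) for part in parts)


def random_split_search(A, lo, hi, trials=200, seed=0):
    """Try random 2-colorings; return (score, coloring) minimizing the worse of the two gaps."""
    rng = random.Random(seed)
    best = None
    for _ in range(trials):
        coloring = {a: rng.randrange(2) for a in A}
        score = max(monochromatic_gaps(A, coloring.__getitem__, lo, hi))
        if best is None or score < best[0]:
            best = (score, coloring)
    return best
```

For example, coloring range(50) by parity gives gaps (1, 1) in the window [10, 90]: both monochromatic sumsets contain exactly the even numbers there. On an A built like the paper's, one would watch whether the best score found keeps growing as the window moves out.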

{α·p_n} is not well-distributed (for every α)

- Update guidance for low-discrepancy and quasi-random sequence design

- Sector: Software (simulation libraries), Finance (Monte Carlo), Engineering/Physics (numerical integration)

- Application: Avoid using sequences of the form {α·p_n} when short-window uniformity is needed (e.g., rolling estimation, sliding-window sampling in quasi-Monte Carlo). The Hlawka–Petersen well-distribution property fails due to clustering.

- Tools/Products/Workflows:

- Documented “do-not-use” patterns in simulation libraries,

- alternative recommendations (e.g., scrambled Sobol’, lattice rules with random digital shifts).

- Assumptions/Dependencies: The result is unconditional and holds for every real α. It rules out a strong windowed-uniformity property, even though for irrational α the sequence is still equidistributed mod 1 (Vinogradov).

- New statistical tests for RNG and sequence quality focusing on short-window clustering

- Sector: Software (RNG testing suites)

- Application: Add a “Hlawka–Petersen windowed uniformity” test to TestU01/Dieharder-like suites that detects clustering behaviors in moving windows; include {α·p_n} as a canonical failing sequence.

- Tools/Products/Workflows: Implement sup over windows and intervals approximations for practical k and window sizes; report deviations as a function of window-length.

- Assumptions/Dependencies: Finite-sample proxies for the lim-sup definition; validated using the Maynard–Tao induced clustering.

- Security and correctness warnings for hash/PRNG designs based on prime-indexed Kronecker sequences

- Sector: Cybersecurity, Software

- Application: If any internal sampling uses {α·p_n}, replace with constructions having proven small-window uniformity or add randomized dithering to break the structure that causes clustering.

- Tools/Products/Workflows: Code audits; drop-in replacement with proven generators; regression tests emphasizing sliding-window uniformity.

- Assumptions/Dependencies: Unconditional proof; replace legacy designs that adopted prime-indexed fractional parts for “pseudo-randomness.”
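The windowed-uniformity test proposed in this subsection can be prototyped in a few lines of Python. The sketch below is our own finite-sample proxy, not an existing TestU01 or Dieharder test. For window length k, it takes the worst deviation over all window starts and all intervals whose endpoints lie on a grid of mesh 1/grid. The grid is an assumption that replaces the supremum over all intervals.

Well-distribution would force the unrestricted quantity to 0 as k grows, uniformly in the starting point. The theorem says that for {α p_n} it does not.

```python
import math


def primes_upto(m):
    """Primes <= m via a simple sieve of Eratosthenes."""
    if m < 2:
        return []
    sieve = bytearray([1]) * (m + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(m) + 1):
        if sieve[i]:
            sieve[i * i :: i] = bytearray(len(range(i * i, m + 1, i)))
    return [i for i in range(m + 1) if sieve[i]]


def window_deviation(xs, k, grid=64):
    """Finite-sample proxy for the Hlawka-Petersen quantity.

    Returns the max over window starts s and intervals I = [a/grid, b/grid) of
    |#{s <= m < s + k : xs[m] in I} - |I| * k| / k, with each xs[m] assumed in [0, 1).
    """
    if not 0 < k <= len(xs):
        raise ValueError("need 0 < k <= len(xs)")
    bins = [min(int(x * grid), grid - 1) for x in xs]
    hist = [0] * grid
    for b in bins[:k]:
        hist[b] += 1
    worst = 0.0
    for start in range(len(xs) - k + 1):
        if start > 0:  # slide the window one step to the right
            hist[bins[start - 1]] -= 1
            hist[bins[start + k - 1]] += 1
        # With T_j = (#points in the first j bins) - j*k/grid and T_0 = 0,
        # the worst grid interval has deviation max_j T_j - min_j T_j.
        count, t_max, t_min = 0, 0.0, 0.0
        for j in range(grid):
            count += hist[j]
            t = count - (j + 1) * k / grid
            t_max, t_min = max(t_max, t), min(t_min, t)
        worst = max(worst, (t_max - t_min) / k)
    return worst


if __name__ == "__main__":
    alpha = math.sqrt(2)
    xs = [(alpha * p) % 1.0 for p in primes_upto(100_000)]
    for k in (10, 50, 250):
        print(k, round(window_deviation(xs, k), 3))
```

The prefix-sum trick makes each window cost O(grid) instead of O(grid²). Floating-point fractional parts are adequate at this scale but lose precision for very large primes.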

AI-derived proofs (methodological)

- Integrate LLMs into math research workflows with reproducibility and credit practices

- Sector: Academia, Software tools for research

- Application:

- Use LLMs to propose proof sketches, then human-verify and formalize (Lean/Isabelle).

- Maintain “prompt notebooks,” seed control, and verification artifacts.

- Tools/Products/Workflows: Git-based proof pipelines linking prompts, LLM outputs, and formal proof scripts; metadata for contribution credit; CI that runs proof-checkers.

- Assumptions/Dependencies: Human validation remains essential; formalization may lag behind informal proofs.

Long-Term Applications

Small prime factors of binomial coefficients

- Specialized algorithms for p-adic and factorization-aware combinatorial computation

- Sector: Software (CAS), Cryptography (number-theoretic primitives), HPC

- Application: Develop algorithms that exploit the concentration of valuation mass in small primes (up to polylog n) to:

- accelerate exact combinatorial summations and certifications,

- improve p-adic lifting routines,

- speed up integer relation detection involving binomial terms.

- Tools/Products/Workflows: p-adic “front-end” passes for binomial-heavy computations, with lazy evaluation for larger primes.

- Assumptions/Dependencies: Further engineering and empirical validation on mixed workloads.

- Structured compression and certificate schemes for large binomial coefficients

- Sector: Data compression for scientific computing, Reproducible research

- Application: Store binomial coefficients via small-prime exponent vectors plus residual metadata; support verifiable recomputation.

- Tools/Products/Workflows: Compressed encodings tied to provable bounds; verifiers that reconstitute values on demand.

- Assumptions/Dependencies: Trade-offs depend on n-scale and access patterns.

Additive basis with no syndetic splits

- Hardness instances for partitioning and coverage problems

- Sector: Theoretical CS, Optimization

- Application: Use A-like constructions to establish lower bounds or impossibility results for:

- partition-based coverage algorithms,

- sharded two-sum/coverage strategies that demand bounded-gap guarantees on each shard.

- Tools/Products/Workflows: Formal reductions and benchmark families that scale (via the 5-adic pattern) for asymptotic studies.

- Assumptions/Dependencies: Extending from specific A to broader problem classes may need new proofs.

- Design of cross-shard coordination protocols

- Sector: Systems/Distributed databases

- Application: Protocols that dynamically route cross-shard pairs to avoid unavoidable coverage gaps predicted by the theorem when maintaining dense local pairwise coverage is required.

- Tools/Products/Workflows: Shard-aware routers informed by gap-detection heuristics; adaptive rebalancing policies.

- Assumptions/Dependencies: Theoretical insight, but system design needs empirical validation.

{α·p_n} is not well-distributed

- Theory-driven construction of sequences with tunable short-window distributions

- Sector: Simulation, Signal processing

- Application: Invert the obstruction to produce controllably clustered sequences (via residue-class synchronization) for variance-reduction tests, stress-testing of estimators, or modeling bursty arrivals.

- Tools/Products/Workflows: Sequence generators that fix a, q, and m to induce clusters with known bounds (via Maynard–Tao style results).

- Assumptions/Dependencies: Practical parameterization depends on available constants C_m; requires tuning.

- Formal criteria for window-based discrepancy in quasi-Monte Carlo design

- Sector: Numerical analysis, Software (QMC libraries)

- Application: Develop and adopt standards that measure short-window discrepancy (not just global discrepancy) when evaluating and certifying sequence quality.

- Tools/Products/Workflows: New test batteries and certification metrics; empirical libraries documenting performance across windows and integrand classes.

- Assumptions/Dependencies: Requires community consensus and benchmarking.

AI-derived proofs (methodological)

- Formal-proof integration and provenance standards for AI-generated mathematics

- Sector: Academia, Policy

- Application: Establish guidelines for:

- mandatory disclosure of AI involvement,

- minimal reproducibility artifacts (prompts, seeds, models, versions),

- formal verification targets (e.g., convert to Lean as a condition for acceptance in certain venues).

- Tools/Products/Workflows: Journal/conference templates and artifact review processes; repositories hosting formalizations and AI logs.

- Assumptions/Dependencies: Community adoption; tooling maturity for formal proof assistants.

- Hybrid human–AI discovery platforms for conjecture generation and rapid vetting

- Sector: Software for scientific discovery

- Application: Platforms that:

- generate multiple proof attempts,

- auto-check key lemmas computationally or formally,

- prioritize human attention to promising lines.

- Tools/Products/Workflows: Orchestrators integrating LLMs, SAT/SMT, CAS, and proof assistants; dashboards for triaging proof states.

- Assumptions/Dependencies: Continued advances in LLM reasoning and proof-assistant interoperability.

Notes on Feasibility and Dependencies

- All three mathematical results are unconditional; practical impact hinges on n-scale (for the binomial coefficient bound) and on windowed-uniformity needs (for {α·p_n}).

- Short-window tests and RNG guidance require careful finite-sample approximations to asymptotic definitions; nonetheless, they are practically implementable now.

- The additive basis construction is explicit and easy to generate; its impact as a hardness/stress-testing tool is immediate, while systemic design implications (e.g., cross-shard protocols) require contextualization and empirical work.

- AI-methodology recommendations assume a human-in-the-loop and, for stronger guarantees, a path to formal verification; policy adoption is a governance question rather than a technical limitation.

Glossary

- Additive basis (of order 2): A set of integers A such that every sufficiently large integer can be written as a sum of two elements of A. "The basis-cover lemma implies that $A$ is an additive basis of order $2$."

- Bounded gaps: A property of a set of integers meaning there exists a constant M so that every interval of length M contains an element of the set (equivalently, gaps between consecutive elements are uniformly bounded). "such that $A_1 + A_1$ and $A_2 + A_2$ both have bounded gaps."

- Congruence modulo q: The relation a ≡ b (mod q), indicating q divides a−b; used to describe numbers lying in the same residue class. "p_{r+1} \equiv p_{r+2} \equiv \cdots \equiv p_{r+m} \equiv a \pmod q"

- Density Hypothesis: A conjectural bound on the density of zeros of Dirichlet L-functions that, if true, yields stronger results about the distribution of primes. "under the Riemann Hypothesis (or Density Hypothesis)"

- Dirichlet's approximation theorem: A result in Diophantine approximation stating that any real number can be approximated by rationals a/q with error at most 1/(qQ) for some q ≤ Q. "By Dirichlet's approximation theorem, for all $Q \ge 1$ there exist integers $a$ and $q$ such that $1 \le q \le Q$ and $|\alpha - a/q| \le 1/(qQ)$."

- Indicator function (1_{E}): A function that equals 1 when the condition E holds and 0 otherwise; used to encode conditions compactly in sums. "= 1_{n\pmod p< k\pmod p}."

- Lacunary sequence: A rapidly growing sequence (e.g., n_{k+1} ≥ λ n_k for some λ > 1) that is sparse enough to exhibit special distribution phenomena. "if $(n_k)$ is lacunary"

- Legendre's formula: A formula expressing the exponent of a prime p in n! (and hence in binomial coefficients) as a sum of floor terms. "By Legendre's formula,"

- Monochromatic (in a coloring): All elements having the same color in a colored partition; often used in combinatorial arguments. "the opposite monochromatic sumset has arbitrarily long gaps"

- Prime Number Theorem: The asymptotic estimate π(x) ~ x/log x for the number of primes up to x; commonly used to approximate sums over primes. "by the prime number theorem"

- Residue classes modulo p: The set of equivalence classes of integers under congruence mod p; arithmetic cycles through these classes. "the residue classes modulo $p$ run through all of $\mathbb{Z}/p\mathbb{Z}$ once."

- Riemann Hypothesis: The conjecture that all nontrivial zeros of the Riemann zeta function lie on the line Re(s)=1/2; used here as an assumption yielding stronger bounds. "under the Riemann Hypothesis (or Density Hypothesis)"

- Sumset (A+B): The set {a+b : a ∈ A, b ∈ B} formed by adding elements of two sets. "the two sumsets $A_1 + A_1$ and $A_2 + A_2$"

- Syndetic: A set of integers with bounded gaps; equivalently, a set that intersects every sufficiently long interval. "cannot both be syndetic"

- v_p (p-adic valuation): The exponent of a prime p in the prime factorization of an integer (or of a binomial coefficient). "p^{v_p\big(\binom{n}{k}\big)}"

- Well-distributed: A strong uniform distribution property requiring that, in every sliding window of a sequence, the empirical distribution across subintervals of [0,1] matches Lebesgue measure asymptotically, uniformly in the window's starting point. "$\{\alpha p_n\}$ is not well-distributed."
