Resolution of Erdős Problem #728: a writeup of Aristotle's Lean proof

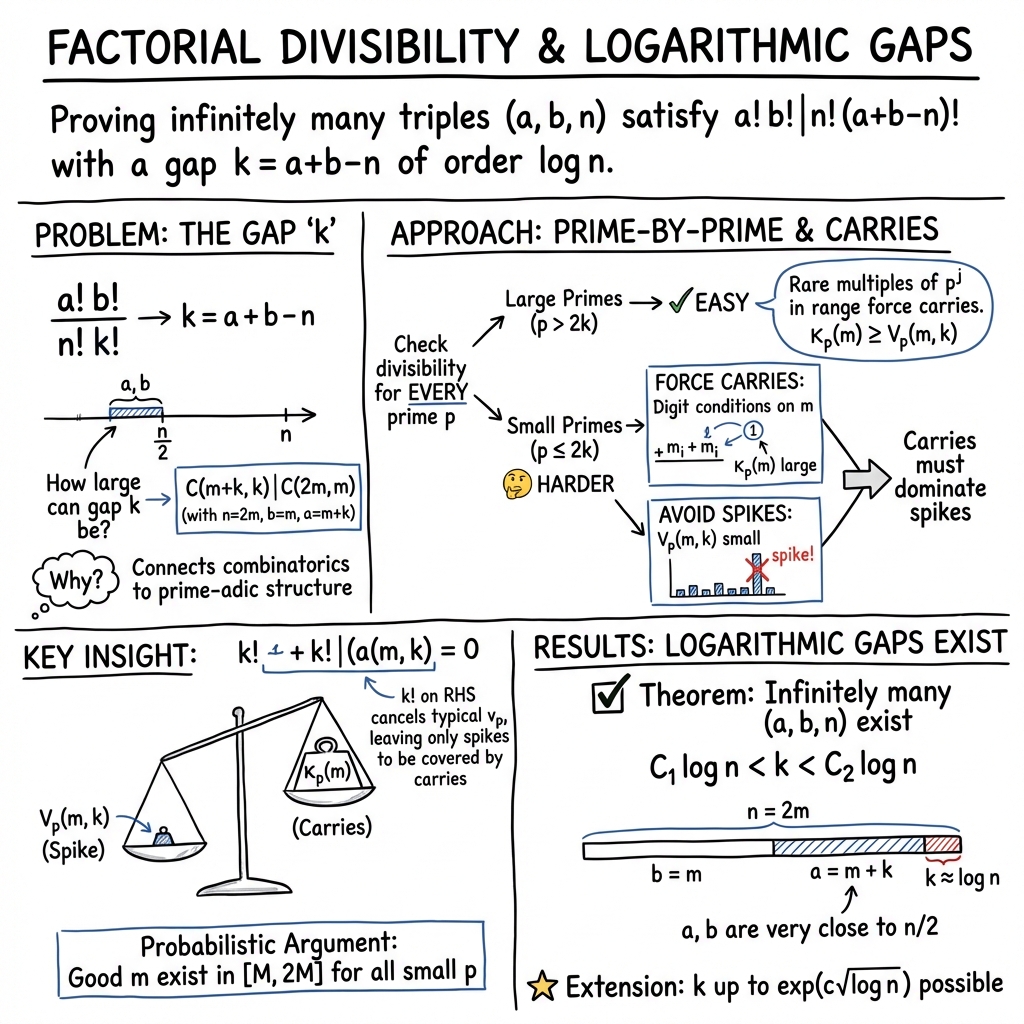

Abstract: We provide a writeup of a resolution of Erdős Problem #728; this is the first Erdős problem (a problem proposed by Paul Erdős which has been collected in the Erdős Problems website) regarded as fully resolved autonomously by an AI system. The system in question is a combination of GPT-5.2 Pro by OpenAI and Aristotle by Harmonic, operated by Kevin Barreto. The final result of the system is a formal proof written in Lean, which we translate to informal mathematics in the present writeup for wider accessibility. The proved result is as follows. We show a logarithmic-gap phenomenon regarding factorial divisibility: For any constants $0<C_1<C_2$ there exist infinitely many triples $(a,b,n)\in\mathbb N^3$ such that $$a!\,b!\mid n!\,(a+b-n)!\qquad\text{and}\qquad C_1\log n < a+b-n < C_2\log n.$$ The argument reduces this to a binomial divisibility $\binom{m+k}{k}\mid\binom{2m}{m}$ and studies it prime-by-prime. By Kummer's theorem, $\nu_p\binom{2m}{m}$ translates into a carry count for doubling $m$ in base $p$. We then employ a counting argument to find, in each scale $[M,2M]$, an integer $m$ whose base-$p$ expansions simultaneously force many carries when doubling $m$, for every prime $p\le 2k$, while avoiding the rare event that one of $m+1,\dots,m+k$ is divisible by an unusually high power of $p$. These "carry-rich but spike-free" choices of $m$ force the needed $p$-adic inequalities and the divisibility. The overall strategy is similar to results regarding divisors of $\binom{2n}{n}$ studied earlier by Erdős and by Pomerance.

Explain it Like I'm 14

What is this paper about?

This paper solves a number puzzle first asked by the famous mathematician Paul Erdős (Problem #728). It studies when one big product of numbers (a factorial) divides another and shows there are infinitely many times this works with a gap that’s about as big as a constant times the logarithm of a number. It’s also notable because the solution was first found and fully checked by AI systems, then rewritten here in human-friendly math.

Before we begin, here are two quick ideas:

- The factorial of a number $n$, written $n!$, means $1 \times 2 \times \cdots \times n$.

- “$x$ divides $y$” (written $x \mid y$) means $y$ can be split evenly into groups of size $x$ with no leftovers.

The main question (in simple terms)

We look at three whole numbers $a$, $b$, and $n$. Consider the divisibility:

- Does $a!\,b!$ divide $n!\,(a+b-n)!$?

The “gap” is $k = a+b-n$. The question asks: How large can $k$ be while the divisibility still holds?

The paper proves that there are infinitely many choices of $(a,b,n)$ where this works and the gap is between two constant multiples of $\log n$:

- For any fixed constants $0 < C_1 < C_2$, there are infinitely many triples $(a,b,n)$ with
  $$a!\,b!\mid n!\,(a+b-n)!\quad\text{and}\quad C_1\log n < a+b-n < C_2\log n.$$

Here, $\log n$ is a slowly growing function (roughly: like the number of digits of $n$ in a fixed base).
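To make the statement concrete, here is a minimal Python sketch that tests the divisibility for one triple by direct computation. The function name `gap_divisibility_holds` is made up for this illustration, and brute-force factorials are only practical for small inputs.

```python
from math import factorial


def gap_divisibility_holds(a: int, b: int, n: int) -> bool:
    """Return True when a! * b! divides n! * (a + b - n)!."""
    k = a + b - n  # the "gap"
    if k < 0:
        raise ValueError("need a + b >= n so that (a + b - n)! is defined")
    return (factorial(n) * factorial(k)) % (factorial(a) * factorial(b)) == 0


# 3! * 3! = 36 does not divide 2! * 4! = 48.
print(gap_divisibility_holds(3, 3, 2))   # False
# 5! * 6! = 86400 divides 10! * 1! = 3628800 (the quotient is 42).
print(gap_divisibility_holds(5, 6, 10))  # True
```

The second example has gap 1. The point of the paper is that the gap can be pushed up to a constant times $\log n$ infinitely often.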

The key objectives (in everyday language)

The paper aims to:

- Show that the gap $k$ can be as large as a constant times $\log n$, infinitely often.

- Do this by converting the factorial divisibility into a cleaner statement about binomial coefficients (the “$n$ choose $k$” numbers from combinatorics).

- Prove the statement by checking, prime number by prime number, that each prime divides the left side no more than it divides the right side.

How the proof works (the approach and ideas)

The authors use number bases, carries in addition, and some clever counting to find the needed examples. Here are the main steps, with analogies and simple explanations.

Step 1: Turn factorials into binomial coefficients

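A binomial coefficient is written $\binom{n}{k}$ and means “the number of ways to choose $k$ things from $n$ things.” It’s related to factorials by

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}.$$

The authors show the original divisibility is equivalent to:

$$\binom{m+k}{k} \mid \binom{2m}{m}$$

for certain choices of $m$ and $k$ (they set $n = 2m$, $a = m$, $b = m+k$, so that $a+b-n = k$). They plan to take $k$ about $c \log m$ for some constant $c$.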

Why this helps: Binomial coefficients are easier to analyze prime-by-prime.
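The reduction can be sanity-checked numerically. The sketch below assumes the substitution $n = 2m$, $a = m$, $b = m+k$ (which matches the expression $(m+k)!\,m! \mid (2m)!\,k!$ used later on this page) and compares the factorial form with the binomial form on a small grid. The function names are illustrative only.

```python
from math import comb, factorial


def factorial_form(m: int, k: int) -> bool:
    """a! * b! divides n! * (a+b-n)! with a = m, b = m + k, n = 2m."""
    return (factorial(2 * m) * factorial(k)) % (factorial(m) * factorial(m + k)) == 0


def binomial_form(m: int, k: int) -> bool:
    """C(m+k, k) divides C(2m, m)."""
    return comb(2 * m, m) % comb(m + k, k) == 0


# The two forms agree because
# (2m)! k! / (m! (m+k)!) = C(2m, m) / C(m+k, k).
mismatches = [
    (m, k)
    for m in range(1, 60)
    for k in range(1, 10)
    if factorial_form(m, k) != binomial_form(m, k)
]
print(mismatches)  # []
```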

Step 2: Check prime factors one at a time

To prove a division like $A \mid B$, it’s enough to check that for every prime $p$, $p$ doesn’t appear “more times” in $A$ than in $B$. In math-speak, for each prime $p$, compare how many copies of $p$ divide $\binom{m+k}{k}$ versus $\binom{2m}{m}$.

So the paper reduces the goal to: For every prime $p$,

- the exponent of $p$ in $\binom{m+k}{k}$ is at most the exponent of $p$ in $\binom{2m}{m}$.
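The prime-by-prime comparison can be sketched in Python using Legendre's formula (listed in the glossary on this page) for the exponent of $p$ in a factorial. This is an illustrative sketch, not the paper's Lean code. The helper names are hypothetical, and the primes are found by simple trial division.

```python
from math import comb


def primes_up_to(n: int) -> list[int]:
    """All primes <= n, by trial division (fine for small n)."""
    return [p for p in range(2, n + 1)
            if all(p % d for d in range(2, int(p ** 0.5) + 1))]


def nu_p_factorial(n: int, p: int) -> int:
    """Exponent of p in n!, via Legendre: sum of floor(n / p^i)."""
    total, q = 0, p
    while q <= n:
        total += n // q
        q *= p
    return total


def nu_p_binomial(n: int, r: int, p: int) -> int:
    """Exponent of p in C(n, r) = n! / (r! (n - r)!)."""
    return nu_p_factorial(n, p) - nu_p_factorial(r, p) - nu_p_factorial(n - r, p)


def divides_prime_by_prime(m: int, k: int) -> bool:
    """Check C(m+k, k) | C(2m, m) by comparing exponents of each prime.

    Only primes up to m + k can divide C(m+k, k).
    """
    return all(
        nu_p_binomial(m + k, k, p) <= nu_p_binomial(2 * m, m, p)
        for p in primes_up_to(m + k)
    )


print(divides_prime_by_prime(5, 1))  # True: C(6, 1) = 6 divides C(10, 5) = 252
```

The advantage over the direct check is that no huge integer is ever built. Only small exponents are compared.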

Step 3: Carries in base-$p$ addition (Kummer’s Theorem)

Here’s a very cool fact: If you write the number $m$ in base $p$ (like base 10, but with digit size $p$), then the number of carries you get when adding $m+m$ (i.e., doubling $m$) in base $p$ is exactly the exponent of $p$ in $\binom{2m}{m}$.

Analogy:

- Think about normal base-10 addition. If you add 57 + 57, at the ones place you get 14, so you write 4 and “carry” 1 to the tens place. Carries happen where a digit sum overflows the base.

- In base $p$, the same idea holds, but the threshold is $p$ instead of 10.

- Kummer’s theorem says: “How many carries happen when doubling $m$ in base $p$?” equals “How many times $p$ divides $\binom{2m}{m}$.”

So to make $\binom{2m}{m}$ divisible by a high power of $p$, the authors try to force many carries when doubling $m$ in base $p$.
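Kummer's theorem is easy to test by computer. The following Python sketch counts the carries produced when doubling $m$ in base $p$ and compares that count with the exponent of $p$ in $\binom{2m}{m}$ computed directly. The function names are chosen here for illustration.

```python
from math import comb


def carries_when_doubling(m: int, base: int) -> int:
    """Number of carries when adding m + m digit by digit in the given base."""
    carries = 0
    carry = 0
    while m > 0:
        digit = m % base
        carry = 1 if 2 * digit + carry >= base else 0
        carries += carry
        m //= base
    return carries


def exponent_of_p(x: int, p: int) -> int:
    """Largest e such that p^e divides x (x must be positive)."""
    e = 0
    while x % p == 0:
        x //= p
        e += 1
    return e


# The base-10 example from the text: 57 + 57 carries at both digits.
print(carries_when_doubling(57, 10))  # 2

# Kummer's theorem for doubling, checked on a small range of m and primes p.
kummer_ok = all(
    carries_when_doubling(m, p) == exponent_of_p(comb(2 * m, m), p)
    for p in (2, 3, 5, 7, 11)
    for m in range(1, 200)
)
print(kummer_ok)  # True
```

The theorem is stated for prime bases. The base-10 line only illustrates how carries are counted.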

Step 4: Forcing carries by choosing the digits of $m$

If a base-$p$ digit of $m$ is at least half of $p$ (like 5,6,7,8,9 in base 10), then when you double that digit, it forces a carry at that digit position (because the sum will be at least $p$).
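A short Python sketch of this observation: every digit $d$ with $2d \ge p$ produces a carry no matter what arrives from the position below, so the number of "big" digits is a lower bound for the total number of carries. The helper names and the choice to inspect the lowest `num_digits` digits are assumptions made for this illustration.

```python
def base_p_digits(m: int, p: int) -> list[int]:
    """Digits of m in base p, lowest digit first."""
    digits = []
    while m > 0:
        digits.append(m % p)
        m //= p
    return digits


def big_digit_count(m: int, p: int, num_digits: int) -> int:
    """How many of the lowest num_digits digits d satisfy 2*d >= p."""
    return sum(1 for d in base_p_digits(m, p)[:num_digits] if 2 * d >= p)


def carries_when_doubling(m: int, p: int) -> int:
    """Number of carries when adding m + m in base p."""
    carries = 0
    carry = 0
    for digit in base_p_digits(m, p):
        carry = 1 if 2 * digit + carry >= p else 0
        carries += carry
    return carries


m, p = 57, 10
print(base_p_digits(m, p))                                      # [7, 5]
print(big_digit_count(m, p, 2), carries_when_doubling(m, p))    # 2 2
```

The bound goes one way only. A digit just below $p/2$ can still carry when a carry comes in from the position below, so the carry count may exceed the number of big digits.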

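So the plan is to choose $m$ so that, for each small prime $p$ (those with $p \le 2k$), many of the first few base-$p$ digits of $m$ are “big” (at least half of $p$). That forces many carries when doubling $m$, and so a high power of $p$ in $\binom{2m}{m}$. The search for $m$ takes place inside a range $[M, 2M]$.

The other side also has to be controlled. Since $\binom{m+k}{k} = \frac{(m+1)\cdots(m+k)}{k!}$, the power of $p$ in it comes from how divisible the numbers $m+1,\ldots,m+k$ are by $p$. The rare bad case (a “spike”) is when one of them is divisible by an unusually high power $p^J$, so $m \in [M, 2M]$ is chosen to avoid this.

Large primes $p > 2k$ take care of themselves: if one of $m+1,\dots,m+k$ is divisible by $p^j$, the digits of $m$ already force $j$ carries. So only the primes $p \le 2k$ need the careful choice of $m$, and a counting argument shows that a suitable $m$ exists in each $[M,2M]$ with $k \approx c \log M$ once $M$ is large.

Putting it together, the gap $k$ lands between $C_1 \log n$ and $C_2 \log n$: for any constants $0 < C_1 < C_2$ there are infinitely many triples $(a,b,n)$ with

$$a!\,b!\mid n!\,(a+b-n)!\quad\text{and}\quad C_1\log n < a+b-n < C_2\log n.$$

Here $k = a+b-n$ is the gap, of size about $\log n$.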

What’s the impact?

- Mathematical impact:

- It advances our understanding of how factorials and binomial coefficients divide each other and how prime factors distribute in these numbers.

- It builds on classic insights (like Kummer’s theorem) and recent ideas about “digits behaving randomly,” turning them into a concrete tool to produce infinitely many examples with controlled gap size.

- The strategy is flexible and relates to other results about divisibility of central binomial coefficients, connecting to work by Erdős and more recent research.

- Broader significance:

- The proof was first found and formalized by AI systems (OpenAI’s GPT-5.2 Pro and Harmonic’s Aristotle, producing a Lean formal proof), then translated into a human-style writeup. This marks a significant milestone: a recognized Erdős problem resolved autonomously by AI and certified in a proof assistant.

- It hints at a future where AI can not only discover proofs but also help guide human-understandable explanations and connect with existing mathematical literature.

Overall, the paper combines clever number-base thinking (carries), prime-by-prime reasoning, and probabilistic counting to settle Erdős Problem #728 with a clean “logarithmic gap” answer, and it showcases how AI can contribute to modern mathematics.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of concrete gaps and questions the paper leaves unresolved, focusing on what remains missing, uncertain, or unexplored, and framed so that future work can act on them.

- True order of magnitude of the maximal gap: The paper proves infinitely many triples with k = a + b − n in a logarithmic window, and remarks that the method’s bottleneck might allow k up to exp(c sqrt(log n)). It remains open to determine the correct growth rate of the maximal achievable k (as a function of n), and to establish matching upper bounds.

- Formal upgrade beyond logarithmic k: The remark states the union-bound step k · e^{−μ̃(M)/8} = o(1) can be made to work up to k = exp(c sqrt(log n)), but the paper does not provide a complete proof or a formal theorem at that scale. Making this precise (with rigorous parameter choices, explicit constants, and a Lean formalization) is an actionable target.

- Lack of upper bounds: The paper gives lower bounds (existence) for k but no nontrivial upper bounds on k under natural side conditions (e.g., a, b ≤ (1 − ε)n). Establishing upper bounds that match or nearly match the achievable lower bounds is open.

- Density of good m (quantitative counting): The proof only claims existence of at least one m ∈ [M, 2M] for each large M. The bounds in Lemma 4.13 imply the bad set is o(M) (hence a 1 − o(1) density of good m is plausible), but this stronger density statement is not stated nor quantified. Providing explicit asymptotics or a positive-density result would strengthen the conclusion.

- Per-n guarantees: The construction fixes n = 2m and shows infinitely many even n. It remains open whether for each sufficiently large n (or for a positive density of n), one can find a, b with k ≥ c log n (or larger), and to quantify how often such triples exist as n varies.

- Explicit proximity of a and b to n/2: The introduction claims “a and b are extremely close to n/2,” but the paper does not quantify this proximity. Providing explicit bounds on |a − n/2| and |b − n/2| (in terms of k or n) would clarify the strength of the construction.

- Constructive/algorithmic aspects: The argument is non-constructive (via counting). Is there an efficient randomized or deterministic algorithm that, given M (or n), finds suitable m (hence a, b) with k in the desired range, with provable runtime and success probability guarantees?

- Parameter optimization: The proof uses fixed choices (e.g., η = 1/10 and t(M) = ⌈10 log log M⌉), coarse bounds θ(p) ≥ 1/3, and a union bound across primes. Optimizing these parameters (and using sharper tail or sieve bounds, or dependency tooling such as the Lovász Local Lemma) could increase the admissible k; the best obtainable k via this method is not analyzed.

- Threshold for the “large prime” regime: Lemma 3.1 imposes p > 2k to force carries. It is unclear whether the threshold 2k is optimal for this argument; tightening this (e.g., to p > (1 + o(1))k) could further relax constraints and enlarge k.

- Correlations across primes: The analysis treats primes independently and unions the bad events. Understanding and exploiting correlations between carry events in different bases (or demonstrating a form of pseudo-independence) could enable larger k beyond what union bounding permits.

- Classification problem for divisibility: The paper proves existence of many (m, k) with C(m + k, k) | C(2m, m). A structural characterization (or threshold behavior) of all such pairs (m, k), or an asymptotic for their count up to X, remains open.

- Generalizations beyond n = 2m: Can analogous results be obtained for more general linear families (e.g., C(m + k, k) | C((1 + δ)m, m) for fixed δ ≠ 1) or for broader triples (a, b, n) subject to a, b ≤ (1 − ε)n? Extending the carry analysis beyond doubling is not explored.

- Beyond binomial coefficients: Are there analogous “carry-rich implies divisibility” phenomena for multinomial coefficients or for q-analogues (Gaussian binomial coefficients)? The extent to which Kummer-type interpretations can be adapted remains unexplored.

- Sharpening carry lower bounds: The proof enforces many large base-p digits among the first L_p digits. Refined carry-count lower bounds (e.g., tracking incoming carries or using precise digit-sum/carry-process martingale inequalities) might yield stronger results for k.

- Spike control improvements: The “no-spike” condition V_p(m, k) < J_p + t(M) uses a crude union bound over i ≤ k and primes p ≤ 2k. Can sharper divisor-distribution results (or dense-set avoidance techniques) reduce t(M) and thereby enlarge k?

- Explicit constants and ranges: While the theorem allows any 0 < C1 < C2, the proof does not provide explicit upper limits on admissible C2 as a function of the chosen parameters (η, t(M), etc.). Making the allowable range of constants explicit would aid applications.

- Multi-k robustness for a fixed m: For a given good m, for how many k in [1, K] does the divisibility hold simultaneously? Establishing such “interval-robustness” would parallel the interval-product divisibility results in the literature.

- Stronger “almost all n” analogues: Erdős’s Aufgabe 557 has an “almost all n” refinement. An analogous statement here (e.g., for almost all m in [M, 2M] the divisibility holds with k ≍ log m) is not stated and would be valuable to formalize and prove.

- Methodological barriers: The paper does not delineate inherent limits of the “carry-rich but spike-free” strategy. Pinpointing where this approach must fail (e.g., via entropy or second-moment barriers) would guide the search for new ideas to push k further.

Practical Applications

Immediate Applications

Below are concrete use cases that can be deployed now, drawing on the paper’s mathematical results and the demonstrated AI+Lean workflow.

- Carry-count API and binomial divisibility checker for large integers

- Sector: software (computational mathematics, CAS), education

- What: Implement functions that compute ν_p(2m choose m) via Kummer’s carry interpretation and check divisibility relations like (m+k)! m! | (2m)! k! using prime-by-prime valuation comparisons rather than constructing huge integers.

- Tools/products/workflows:

- A Python/Rust library module “CarryCount” that exposes κ_p(m) via carry counts.

- A “BinomialDivisibility” module that relies on Legendre’s formula and the paper’s valuation reductions (W_p ≤ κ_p + v_p(k!)).

- CAS plugins (e.g., for SageMath, Mathematica) to simplify factorial ratios and binomial divisibility questions exactly.

- Assumptions/dependencies: Efficient base-p digit extraction; prime enumeration up to O(log n); correctness relies on Kummer’s theorem and Legendre’s formula (well-established).

- Stress-test generator for integer addition (base-2 carry chains)

- Sector: semiconductors, systems software (BigInt libraries), HPC

- What: Generate integers that force dense carry propagation on doubling/addition in base 2, to benchmark and validate adder implementations, CPU micro-ops, and arbitrary-precision integer libraries.

- Tools/products/workflows:

- “CarryStress” generator using the paper’s digit-threshold idea specialized to p=2 (require many low-order bits equal to 1) to produce worst-case carry chains.

- Assumptions/dependencies: For hardware, base-2 is the only relevant base; while simple patterns (e.g., 2^L − 1) already exist, the paper’s probabilistic construction provides families inside arbitrary ranges [M, 2M], which is useful for randomized stress suites.

- Residue-class filtering utilities for modular constraints

- Sector: software testing, cryptography tooling (auxiliary), optimization

- What: Use the paper’s residue-class counting and union bounds to generate integers within [M, 2M] that simultaneously avoid “rare spikes” (high power divisibility by many small primes).

- Tools/products/workflows:

- A utility that, given primes p ≤ P and thresholds J_p, filters m in a range to avoid m+i ≡ 0 (mod p^{J_p}) for small i, enabling robust test-case generation for arithmetic routines and modular algorithms.

- Assumptions/dependencies: Prime list and moduli sizes must satisfy Q ≤ M^{1−η} for uniformity bounds; efficiency depends on fast residue checks.

- Lean-to-readable proof translation pipeline

- Sector: academia, publishing, education

- What: A semi-automated workflow to convert formal Lean proofs into human-readable papers, preserving proof provenance and correctness.

- Tools/products/workflows:

- “Lean-to-Text Translator” combining LLM summarization with Lean proof artifacts (lemmas, dependencies) to produce accessible expositions.

- Assumptions/dependencies: Quality assurance and human editorial passes; availability of robust mathlib references.

- Provenance and reproducibility practices for AI-generated proofs

- Sector: academic policy, journals, research integrity

- What: Adopt a workflow to archive AI prompts, Lean scripts, and proof artifacts to establish transparent provenance for AI-driven results.

- Tools/products/workflows:

- A “ProofProvenance Ledger” storing artifacts (LLM inputs/outputs, Lean files, version hashes) and links to public repos.

- Assumptions/dependencies: Journal and community acceptance; repository sustainability; minimal privacy issues over model interactions.

- Curriculum modules and interactive demos on carries and valuations

- Sector: education (secondary, undergraduate)

- What: Hands-on visualizations of Kummer’s theorem (carry counts) and Legendre’s formula; exercises on binomial divisibility via digit patterns.

- Tools/products/workflows:

- Interactive web apps to visualize base-p addition and carries; classroom-ready notebooks showing why ν_p(2m choose m) equals the carry count of m+m in base p.

- Assumptions/dependencies: None beyond standard web tooling; content alignment with curricula.

- Fast valuation-aware checks for combinatorial simplification in CAS

- Sector: software (symbolic algebra)

- What: Use valuation inequalities (W_p ≤ κ_p + v_p(k!)) to decide exact integrality/divisibility in simplification routines, avoiding expensive expansion of factorials/binomials.

- Tools/products/workflows:

- CAS heuristics that short-circuit simplifications by valuation checks; e.g., deciding whether interval products divide central binomial coefficients.

- Assumptions/dependencies: Correct mapping of combinatorial expressions to valuation bounds; prime enumeration up to needed thresholds.
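As a rough illustration of the valuation-based checkers listed above, the Python sketch below decides whether $\binom{m+k}{k} \mid \binom{2m}{m}$ without building either binomial coefficient. It makes two assumptions that should be read as such: it takes W_p to mean the exponent of $p$ in $(m+1)\cdots(m+k)$ and κ_p to mean the carry count for doubling $m$ in base $p$, and it checks only primes $p \le 2k$, relying on the large-prime regime $p > 2k$ (attributed on this page to Lemma 3.1) never obstructing the divisibility. All function names are hypothetical.

```python
from math import comb


def primes_up_to(n: int) -> list[int]:
    """All primes <= n, by trial division (n is small here: n = 2k)."""
    return [p for p in range(2, n + 1)
            if all(p % d for d in range(2, int(p ** 0.5) + 1))]


def nu_p_factorial(n: int, p: int) -> int:
    """Exponent of p in n! (Legendre's formula)."""
    total, q = 0, p
    while q <= n:
        total += n // q
        q *= p
    return total


def carries_when_doubling(m: int, p: int) -> int:
    """kappa_p(m): carries when adding m + m in base p (Kummer)."""
    carries = 0
    carry = 0
    while m > 0:
        carry = 1 if 2 * (m % p) + carry >= p else 0
        carries += carry
        m //= p
    return carries


def binomial_divides_central(m: int, k: int) -> bool:
    """Decide C(m+k, k) | C(2m, m) using only primes p <= 2k."""
    for p in primes_up_to(2 * k):
        w_p = nu_p_factorial(m + k, p) - nu_p_factorial(m, p)  # exponent in (m+1)...(m+k)
        if w_p > carries_when_doubling(m, p) + nu_p_factorial(k, p):
            return False
    return True


print(binomial_divides_central(5, 1))  # True: 6 divides 252
```

Since $k$ is of order $\log n$ in the paper's setting, the loop runs over very few primes, which is why the applications above describe the prime enumeration as modest.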

Long-Term Applications

These use cases require further research, scaling, engineering, or consensus-building before broad deployment.

- End-to-end autonomous mathematical research assistants

- Sector: academia, research software

- What: Generalize the demonstrated GPT+Aristotle pipeline into systems that propose conjectures, refine strategies, formalize, and publish with formal verification, for broad classes of problems.

- Tools/products/workflows:

- Integrated environments combining exploratory LLMs, formal proof systems (Lean/Isabelle/Coq), literature search, and provenance tracking.

- Assumptions/dependencies: Stronger automated tactic libraries, better interaction models, and community buy-in for AI-authored work.

- Standards for AI-generated proof acceptance and credit attribution

- Sector: policy, scholarly publishing

- What: Policies that define acceptable evidence for autonomy, set provenance requirements, and establish credit frameworks for human–AI collaboration.

- Tools/products/workflows:

- Journal policies, audit protocols for AI proofs, and citation standards referencing formal artifacts.

- Assumptions/dependencies: Community consensus, legal/IP clarity, infrastructure for audits.

- CAS and compiler-level optimization using valuation- and carry-aware reasoning

- Sector: software (CAS, compilers for numerical kernels)

- What: Embed valuation/carry logic into simplification and code generation to avoid expensive numeric paths when divisibility can be decided symbolically.

- Tools/products/workflows:

- Compiler passes or CAS kernels that translate combinatorial identities into valuation constraints, reducing computational overhead.

- Assumptions/dependencies: Robust symbolic-to-valuation mapping and guaranteed correctness; benchmarking on real workloads.

- Hardware design analytics informed by carry distributions

- Sector: semiconductors

- What: Use probabilistic digit models (and thresholds ensuring “carry-rich” events) to bound worst-case carry propagation, guide adder and pipeline depth design, and predict performance tails.

- Tools/products/workflows:

- Statistical models for carry behavior leveraged in microarchitectural simulations; stress-suite generation guided by multi-digit constraints.

- Assumptions/dependencies: Translating multi-base theory to base-2 specifics; validation against silicon or cycle-accurate simulators.

- Side-channel and timing analysis using controlled carry behavior

- Sector: cryptography/security

- What: Evaluate whether carry propagation patterns (e.g., in multi-precision addition/doubling) introduce measurable timing/leakage and design tests with carry-rich inputs to probe defenses.

- Tools/products/workflows:

- Security test harnesses generating controlled inputs; analytic models linking carry density to leakage/timing signatures.

- Assumptions/dependencies: Demonstrable linkage between carry patterns and side-channel signals; careful experimental design.

- Multi-modulus constraint solvers for residue number systems (RNS) and number-theoretic algorithms

- Sector: cryptography, HPC

- What: Adapt the “rare spike elimination” plus union-bound methodology to choose integers meeting many simultaneous modular constraints (across several coprime bases) for testing, scheduling, or algorithmic tuning.

- Tools/products/workflows:

- RNS-aware integer selection tools controlling ν_p patterns across a prime set; performance tuners that exploit predictable modular behavior.

- Assumptions/dependencies: Mapping carry-based criteria to RNS (which generally avoids carries); demonstration of practical gains.

- Integrated education platforms blending number theory and probability

- Sector: education

- What: Coherent curricula that unify base expansions, carries, p-adic valuations, and Chernoff bounds to teach cross-disciplinary reasoning.

- Tools/products/workflows:

- Modular courseware, auto-graded problem sets, and visual labs aligned with standards.

- Assumptions/dependencies: Curriculum development and teacher training; assessment integration.

- Data-driven discovery of combinatorial divisibility phenomena

- Sector: academia (number theory, combinatorics)

- What: Generalize the carry-rich/spike-free construction to uncover new divisibility windows (e.g., extend beyond logarithmic to exp(c√log n) scales) and related phenomena.

- Tools/products/workflows:

- Search pipelines combining randomized digit constraints, modular filters, and formal verification to propose and test new results.

- Assumptions/dependencies: Further theoretical breakthroughs; computational experiments; integration with formal systems.

Notes on feasibility and dependencies

- The divisibility result itself is existential and asymptotic; while constructive search within [M, 2M] is feasible using residue-class filtering, producing “closed-form” m is not guaranteed by the proof.

- Prime handling is modest since p ≤ 2k and k ≈ c log n; practical implementations rely on enumerating small primes and base-p digit extraction up to L_p = Θ(log n / log p).

- The AI+Lean pipeline is already shown viable for this problem; scaling to broader classes requires stronger automation, tactic libraries, and editorial workflows to ensure readability and trust.
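The constructive search mentioned in the notes above can be prototyped by brute force. The Python sketch below scans a scale $[M, 2M]$ for values of $m$ with $\binom{m+k}{k} \mid \binom{2m}{m}$. It uses direct big-integer arithmetic instead of the residue-class filtering described on this page, and the constant `c = 1.0` is an arbitrary illustrative choice. Because the theorem is asymptotic, a small $M$ may produce few hits or none.

```python
from math import comb, log


def good_m_in_scale(M: int, k: int) -> list[int]:
    """All m in [M, 2M] for which C(m+k, k) divides C(2m, m)."""
    return [m for m in range(M, 2 * M + 1)
            if comb(2 * m, m) % comb(m + k, k) == 0]


M = 200
c = 1.0                       # illustrative constant, not taken from the paper
k = max(1, round(c * log(M)))
hits = good_m_in_scale(M, k)
print(f"M={M}, k={k}: {len(hits)} good m in [M, 2M]")
```

For $k = 1$ every $m$ qualifies, because $\binom{2m}{m}/(m+1)$ is a Catalan number. That makes $k = 1$ a convenient sanity check for any implementation.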

Glossary

- Asymptotic density: The limiting proportion of integers up to x that satisfy a property, as x grows. "has a positive asymptotic density "

- Asymptotic formula: An expression describing the leading behavior of a quantity as a parameter tends to infinity. "and they obtain an asymptotic formula for as ."

- Base-$p$ digits: The digits of an integer when written in base p. "Let be the number of the first base-$p$ digits of which are ."

- Big-O notation: A notation that bounds the growth rate of a function up to constant factors. "Erdős proved an upper bound "

- Binomial divisibility: The property that one binomial coefficient divides another. "The argument reduces this to a binomial divisibility $\binom{m+k}{k}\mid\binom{2m}{m}$"

- Carry count: The number of carry operations produced when adding numbers in a given base. "By Kummer's theorem, $\nu_p\binom{2m}{m}$ translates into a carry count for doubling $m$ in base $p$."

- Carry-poor: Having few carries in base-$p$ addition (e.g., when doubling a number). "constructs integers that are carry-poor for doubling in each of the bases simultaneously."

- Carry-rich: Having many carries in base-$p$ addition (e.g., when doubling a number). "In contrast, the present proof is ``carry-rich'', forcing many carries simultaneously."

- Central binomial coefficient: The binomial coefficient $\binom{2n}{n}$, the middle entry of row $2n$ of Pascal’s triangle. "divisors of the central binomial coefficient $\binom{2n}{n}$."

- Chernoff inequality: A tail bound for sums of independent Bernoulli random variables. "This is the standard Chernoff inequality with ."

- Erdős Problem: A problem posed by Paul Erdős, often part of a curated collection of number-theoretic questions. "We provide a writeup of a resolution of Erdős Problem #728;"

- Interval product divisibility: Divisibility of a product of consecutive integers by another structured integer. "one has for almost all $n$ the interval product divisibility $(n+1)\cdots(n+a) \mid \binom{2n}{n}$."

- Kummer's theorem: A characterization of the $p$-adic valuation of binomial coefficients via carries in base-$p$ addition. "Kummer's theorem identifies $\nu_p\binom{2m}{m}$ with the number of carries when adding $m+m$ in base $p$."

- Lean (theorem prover): An interactive proof assistant used to construct formal, machine-checked proofs. "The final result of the system is a formal proof written in Lean, which we translate to informal mathematics in the present writeup for wider accessibility."

- Legendre's formula: A formula for the $p$-adic valuation of factorials: $\nu_p(n!) = \sum_{i \ge 1} \lfloor n/p^i \rfloor$. "By Legendre's formula, ."

- Little-oh notation: A notation indicating a function grows strictly slower than another (e.g., $f(n) = o(g(n))$ means $f(n)/g(n) \to 0$). "the main constraint is $k\,e^{-\tilde\mu(M)/8} = o(1)$"

- p-adic valuation: The exponent of a prime p in the prime factorization of an integer. "usually governed by Kummer's carry interpretation of $p$-adic valuations."

- Prime counting function: The function $\pi(x)$ that counts the number of primes less than or equal to $x$. "where $\pi$ is the prime counting function."

- Residue class: An equivalence class of integers modulo a given modulus. "Each residue class modulo occurs at most times in the interval."
