- The paper introduces sharp LMI conditions as a rigorous framework for certifying contractivity in neural networks across continuous and discrete time.

- It provides an explicit algebraic synthesis of weight matrices that enhances model expressivity and ensures robust stability in FRNN and HNN architectures.

- The methodology is applied to integral control and implicit neural network design, achieving competitive performance with reduced parameter counts.

Sharp LMI-Based Contractivity Analysis of Neural Networks: Theory and Applications

This work develops a systematic contractivity analysis for recurrent neural network (RNN) architectures, including firing-rate neural networks (FRNNs) and Hopfield neural networks (HNNs), with the objective of certifying robust stability and enabling advanced control or learning applications. The analysis is rooted in contraction theory, leveraging sharp Linear Matrix Inequality (LMI) conditions. The technical focus is on characterizing weight matrices W that guarantee contraction for networks with non-expansive or monotone non-expansive activation nonlinearities (e.g., ReLU, tanh, sigmoid) in both continuous and discrete time. The work addresses a central need: stability and expressivity certification for RNNs in edge computing and deep implicit learning contexts.

Contractive Dynamics and LMI Synthesis

The core theoretical contribution is the derivation of necessary and sufficient LMI conditions for contractivity of Lur'e-type systems with diagonal and Euclidean metrics, covering both continuous- and discrete-time settings. The approach utilizes incremental multiplier matrices (IMMs) and the S-lemma to generate tractable convex constraints over W, P≻0 (the metric), and Q≻0 (the multiplier). Two nonlinearity classes are formalized:

- CONE (Component-wise Non-Expansive): Slope-restricted in [−1,1].

- MONE (Monotone Non-Decreasing, Non-Expansive): Slope-restricted in [0,1].
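The two slope classes can be checked numerically for a given activation. The following is a minimal sketch, not taken from the paper: the helper names `slope_range` and `classify`, the grid bounds, and the tolerance are illustrative assumptions, and a grid-based estimate is only a sanity check, not a proof of slope restriction.

```python
import numpy as np

def slope_range(phi, lo=-6.0, hi=6.0, n=20001):
    """Estimate the min and max secant slope of a scalar activation on a grid."""
    z = np.linspace(lo, hi, n)
    slopes = np.diff(phi(z)) / np.diff(z)
    return slopes.min(), slopes.max()

def classify(phi, tol=1e-9):
    """Return 'MONE', 'CONE', or 'neither' from the estimated slope range."""
    smin, smax = slope_range(phi)
    if smin >= -tol and smax <= 1 + tol:
        return "MONE"      # slopes in [0, 1]
    if smin >= -1 - tol and smax <= 1 + tol:
        return "CONE"      # slopes in [-1, 1], but not monotone
    return "neither"

relu = lambda z: np.maximum(z, 0.0)
sigmoid = lambda z: 1.0 / (1.0 + np.exp(-z))
scaled_tanh = lambda z: 2.0 * np.tanh(z)   # slope up to 2, outside both classes

for name, phi in [("relu", relu), ("tanh", np.tanh), ("sigmoid", sigmoid),
                  ("sin", np.sin), ("2*tanh", scaled_tanh)]:
    print(name, classify(phi))
```

Under these assumptions, ReLU, tanh, and sigmoid fall in MONE (and hence also in CONE, since [0,1] is contained in [−1,1]), while a non-monotone 1-Lipschitz map such as sin is CONE only.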

The resulting LMI criteria are shown to be sharp for the classes considered. The paper also establishes inclusion and duality relationships between the sets of contracting weights across these nonlinearity classes and across the FRNN and HNN topologies. Notably, the discrete-time CONE LMI is equivalent to Schur diagonal stability, while the continuous-time MONE LMI admits a strictly larger set of weight matrices W than any of the other conditions, which strictly increases model expressivity.
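For readers unfamiliar with Schur diagonal stability, a matrix W has this property when some diagonal P≻0 satisfies P − WᵀPW ≻ 0. The sketch below only verifies a candidate diagonal P; it does not search for one (that would need an LMI solver) and it is not the paper's LMI. The function name and the example matrix are illustrative assumptions.

```python
import numpy as np

def certifies_schur_diagonal_stability(W, p, tol=1e-9):
    """True if P = diag(p) is positive and P - W^T P W is positive definite."""
    p = np.asarray(p, dtype=float)
    if np.any(p <= 0):
        return False
    P = np.diag(p)
    M = P - W.T @ P @ W            # symmetric by construction
    return bool(np.linalg.eigvalsh(M).min() > tol)

# Hypothetical example: a nilpotent matrix with a large off-diagonal entry.
W = np.array([[0.0, 2.0],
              [0.0, 0.0]])

identity_ok = certifies_schur_diagonal_stability(W, [1.0, 1.0])
rescaled_ok = certifies_schur_diagonal_stability(W, [1.0, 5.0])
print(identity_ok, rescaled_ok)
```

Here the identity metric fails because P − WᵀPW = diag(1, −3), whereas P = diag(1, 5) gives diag(1, 1) and succeeds. The example illustrates why the diagonal metric is a decision variable rather than a fixed choice.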

The paper further establishes that, via appropriate variable substitutions, all matrix inequalities reduce to convex feasibility problems, namely LMIs in the free parameters (P, Q, S), with S a reparametrization of the weighted synaptic matrix.

Structural Properties and Parameterization

Detailed structural analysis demonstrates:

- Inclusion hierarchy: The discrete-time CONE set is the smallest (most restrictive) and the continuous-time MONE set is the largest (least restrictive) set of contracting weight matrices W for a fixed contraction rate.

- Duality: The FRNN and HNN LMI conditions are duals under transposition.

- Necessity/Sufficiency: Lyapunov diagonal stability (LDS) is necessary but insufficient for contractivity in general asymmetric cases.

- Optimality for symmetric weights: The MONE continuous-time LMI recovers known log-optimal contractivity rates for symmetric W.
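Lyapunov diagonal stability, mentioned in the list above, is the continuous-time counterpart of the diagonal-metric idea: a matrix A is LDS when some diagonal P≻0 satisfies AᵀP + PA ≺ 0. The sketch below checks a candidate P for a generic matrix A. Which matrix the paper tests for LDS is not stated in this summary, so the example matrix and the function name are purely illustrative assumptions.

```python
import numpy as np

def certifies_lyapunov_diagonal_stability(A, p, tol=1e-9):
    """True if P = diag(p) is positive and A^T P + P A is negative definite."""
    p = np.asarray(p, dtype=float)
    if np.any(p <= 0):
        return False
    P = np.diag(p)
    M = A.T @ P + P @ A            # symmetric by construction
    return bool(np.linalg.eigvalsh(M).max() < -tol)

# Hypothetical Hurwitz matrix with strong one-directional coupling.
A = np.array([[-1.0, 2.0],
              [0.0, -1.0]])

identity_ok = certifies_lyapunov_diagonal_stability(A, [1.0, 1.0])
rescaled_ok = certifies_lyapunov_diagonal_stability(A, [1.0, 2.0])
print(identity_ok, rescaled_ok)
```

With P = I the matrix AᵀP + PA has a zero eigenvalue, so the test fails; with P = diag(1, 2) it is negative definite. Passing such a test is, per the result above, only a necessary condition for contractivity when W is asymmetric.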

A crucial application-oriented result is the exact algebraic parameterization of all contracting weight matrices W:

ReLU4

with ReLU5, ReLU6 full-rank, ReLU7 free. This enables expressive, LMI-certified synthesis of weight matrices for neural network design.

Application to Integral Control and Reference Tracking

Using the developed LMI conditions, the paper proposes an LMI-based design of low-gain integral controllers for contracting FRNNs, targeting robust reference tracking. The key technical insight is the separation of timescales: the FRNN operates as a fast subsystem, and the integral control law acts on the slow variable. The singular perturbation analysis guarantees that, provided a certain LMI is feasible, a sufficiently small controller gain ensures global asymptotic tracking.
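The timescale-separation idea can be illustrated with a small simulation. This is a sketch under stated assumptions, not the paper's design: the FRNN form x' = −x + tanh(Wx + Bu), the output y = Cx, the integrator u' = ε(r − y), and all numerical values (W, B, C, ε, r) are hypothetical, and the gain ε is picked by hand rather than from an LMI.

```python
import numpy as np

def simulate_integral_tracking(W, B, C, r, eps=0.2, dt=0.01, T=200.0):
    """Forward-Euler simulation of an FRNN in closed loop with a slow integrator.

    Assumed model (illustrative only):
        x' = -x + tanh(W x + B u)   fast FRNN dynamics
        y  = C x                    scalar output
        u' = eps * (r - y)          low-gain integral action
    """
    x = np.zeros(W.shape[0])
    u = 0.0
    for _ in range(int(T / dt)):
        y = C @ x
        dx = -x + np.tanh(W @ x + B * u)
        du = eps * (r - y)
        x = x + dt * dx
        u = u + dt * du
    return x, u, float(C @ x)

# Hypothetical weights with spectral norm below 1, so the FRNN is contracting
# in the Euclidean norm for the 1-Lipschitz tanh activation.
W = np.array([[0.2, -0.3],
              [0.1,  0.1]])
B = np.array([1.0, 0.5])
C = np.array([1.0, 0.0])
r = 0.3

x_final, u_final, y_final = simulate_integral_tracking(W, B, C, r)
print(f"output y = {y_final:.4f}, reference r = {r}")
```

Because the integrator state only stops moving when y = r, any equilibrium of the closed loop has zero tracking error; the role of the small gain is to keep the slow loop from destabilizing the fast contracting network.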

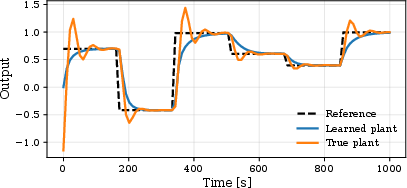

This approach is numerically validated on a learned two-tank system, with the FRNN identified via the expressive LMI parameterization. The system tracks setpoints exactly via the synthesized controller.

Figure 2: System identification and integral control tracking of a two-tank system using a contracting FRNN parameterization.

Implicit Neural Networks: Expressivity and Safety

The sharp contractivity parameterization is leveraged to construct input-dependent implicit neural networks with provable contraction properties. Allowing weights and biases (ReLU8) to depend on the input, while ensuring contractivity via algebraic constraints, yields equilibrium mappings that are locally (but not globally) Lipschitz. This strictly enhances representational capacity compared to traditional globally Lipschitz constraints.
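To make the notion of an equilibrium mapping concrete, the sketch below solves the implicit equation x = ReLU(Wx + Uu + b) by fixed-point iteration. It deliberately swaps in the simplest sufficient condition, a spectral-norm bound ‖W‖₂ < 1, in place of the paper's sharper LMI-based parameterization, and it keeps W and b fixed rather than input-dependent. The names `implicit_layer`, `U`, and all dimensions are illustrative assumptions.

```python
import numpy as np

def implicit_layer(W, U, b, u, tol=1e-10, max_iter=1000):
    """Solve x = relu(W x + U u + b) by fixed-point (Picard) iteration.

    Convergence is guaranteed here because ||W||_2 < 1 and ReLU is
    1-Lipschitz, so the update map is a contraction in the Euclidean norm.
    """
    x = np.zeros(W.shape[0])
    for k in range(max_iter):
        x_next = np.maximum(W @ x + U @ u + b, 0.0)
        if np.linalg.norm(x_next - x) < tol:
            return x_next, k + 1
        x = x_next
    raise RuntimeError("fixed-point iteration did not converge")

rng = np.random.default_rng(0)
n, m = 8, 3                                  # hidden and input dimensions
W = rng.standard_normal((n, n))
W *= 0.9 / np.linalg.norm(W, 2)              # rescale so that ||W||_2 = 0.9
U = rng.standard_normal((n, m))
b = rng.standard_normal(n)
u = rng.standard_normal(m)

x_star, iters = implicit_layer(W, U, b, u)
residual = np.linalg.norm(x_star - np.maximum(W @ x_star + U @ u + b, 0.0))
print(f"converged in {iters} iterations, residual = {residual:.2e}")
```

A norm bound of this kind is more conservative than the conditions described above, which is precisely the gap the sharp parameterization is meant to close.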

Empirical validation on the MNIST and CIFAR-10 benchmarks shows that models designed with this methodology achieve accuracy competitive with existing implicit architectures while using significantly fewer parameters, which indicates higher expressivity for a fixed model size. For example, on MNIST the approach yields 99.33% accuracy with 89K parameters; on CIFAR-10 it reaches 78.3% with 134K parameters, and 82.3% with augmentation, outperforming monDEQ models of equivalent size.

Implications and Future Directions

These results have broad implications for both robust control and machine learning:

- For control: The LMI framework provides tractable, certifiable synthesis of high-dimensional stable subsystems, enabling modular integral or feedback designs for nonlinear plants learned from data.

- For deep learning: Allowing maximal expressivity under guaranteed contraction enables the systematic exploration of highly nonlinear, stable equilibrium architectures without risk of divergence.

- Theory: The duality and inclusion properties set the stage for future investigations into the interplay between topology, activation, and stability in large-scale RNNs, and generalization to broader classes such as RENs and GNNs.

Future work includes extension to more general neural models (e.g., recurrent equilibrium networks), output feedback stabilization, and distributed/graph architectures.

Conclusion

This paper introduces and analyzes a sharp, LMI-based framework for certifying and synthesizing contracting neural networks, with demonstrated applications in robust integral control and deep implicit learning. The results sharply characterize the maximal admissible weights for robust contractivity, yield strict inclusion relationships across nonlinearity classes and time domains, and provide an explicit algebraic synthesis parameterization. Empirical results confirm strong practical applicability in both control and learning contexts.