- The paper introduces a multi-tier privacy benchmark that systematically categorizes privacy transformations for video-based action recognition.

- It employs spatial obfuscation, edge extraction, and cryptographic scrambling to measure the degradation in recognition accuracy with increased privacy.

- Empirical results reveal a monotonic accuracy decline, especially with context removal, highlighting the trade-off between privacy and utility.

PrivHAR-Bench: A Graduated Privacy Benchmark Dataset for Video-Based Action Recognition

Motivation and Benchmark Gaps

Current research in privacy-preserving human activity recognition (HAR) is hindered by a lack of standardized benchmarks that enable systematic assessment of the privacy-utility trade-off in video-based action recognition. Prior works predominantly report results on binary settings—comparing original versus single transformation modalities—which fails to characterize the continuum between visual privacy protection and recognition accuracy. Furthermore, background context bias and non-comparable evaluation protocols pervade the literature, confounding cross-method comparison.

PrivHAR-Bench (2604.00761) directly targets these deficiencies by introducing a multi-tier, context-controlled benchmark for video-based HAR. It provides a range of privacy transformations, background-removed variants, ground-truth and pose annotations, standardized train/test splits, and a released evaluation toolkit.

Graduated Privacy Spectrum Design

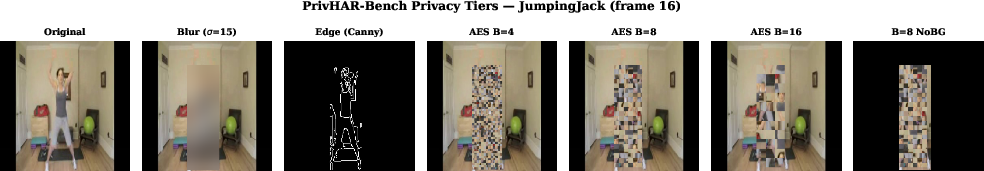

PrivHAR-Bench systematically organizes privacy transformations into three semantically meaningful categories (tiers): spatial obfuscation, structural abstraction, and cryptographic block permutation. Each transformation targets a specific class of visual features, enabling quantitative tracing of the degradation in recognition utility as privacy strength increases.

- Tier 1 applies local Gaussian blurring to the region-of-interest (ROI), suppressing high-frequency spatial features (e.g., facial landmarks) while preserving gross posture information.

- Tier 2 employs Canny edge detection, discarding all pixel-level texture and color, retaining only the contour representation of the human subject.

- Tier 3 leverages cryptographically strong AES block scrambling at variable spatial granularities (B∈{4,8,16}), obliterating local spatial structure in the ROI while keeping global low-level statistics.
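The Tier 3 idea of permuting fixed-size blocks inside the ROI can be sketched as follows. This is a minimal illustration, not the benchmark's implementation: the function names `keyed_permutation` and `scramble_roi` are hypothetical, the ROI is assumed to be an axis-aligned box cropped to a multiple of the block size, and a SHA-256-seeded NumPy generator stands in for the AES-based permutation described above.

```python
import hashlib
import numpy as np

def keyed_permutation(n, key, frame_idx):
    """Deterministic permutation of n blocks for a given key and frame.

    ASSUMPTION: SHA-256 seeding a NumPy PRNG is a stand-in for illustration;
    the benchmark derives its permutations from AES, which is not shown here.
    """
    digest = hashlib.sha256(key + frame_idx.to_bytes(4, "big")).digest()
    rng = np.random.default_rng(int.from_bytes(digest[:8], "big"))
    return rng.permutation(n)

def scramble_roi(frame, roi, block, key, frame_idx):
    """Permute block x block tiles inside roi = (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = roi
    # Crop the ROI to a whole number of blocks (assumption of this sketch).
    h = (y1 - y0) // block * block
    w = (x1 - x0) // block * block
    rows, cols = h // block, w // block
    patch = frame[y0:y0 + h, x0:x0 + w]
    # Split the patch into a flat list of tiles: (rows*cols, block, block, C).
    tiles = (patch.reshape(rows, block, cols, block, -1)
                  .swapaxes(1, 2)
                  .reshape(rows * cols, block, block, -1))
    tiles = tiles[keyed_permutation(rows * cols, key, frame_idx)]
    # Reassemble the permuted tiles into an image patch.
    scrambled = (tiles.reshape(rows, cols, block, block, -1)
                      .swapaxes(1, 2)
                      .reshape(patch.shape))
    out = frame.copy()
    out[y0:y0 + h, x0:x0 + w] = scrambled
    return out
```

Because the tiles are only moved, never altered, the set of pixel values in the ROI is unchanged, which is consistent with the statement that global low-level statistics survive while local spatial structure does not.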

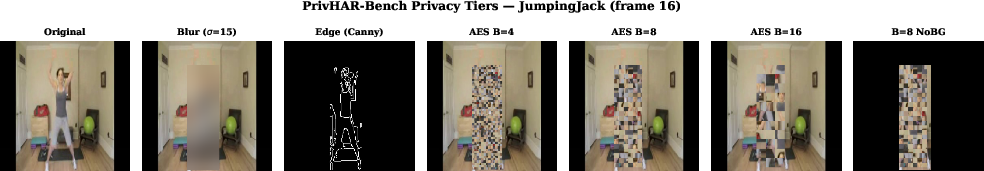

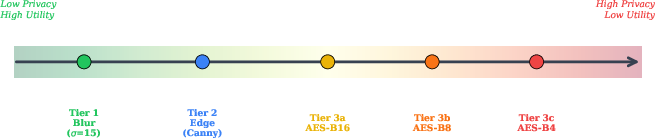

Transformed examples along the privacy spectrum are illustrated in Figure 1.

Figure 1: A single frame from the PrivHAR-Bench dataset across privacy tiers, showcasing progressive destruction of spatial identity features.

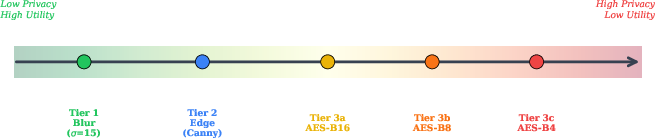

The tier structure is further summarized in Figure 2, clarifying which categories of visual information are destroyed at each privacy level.

Figure 2: The PrivHAR-Bench privacy spectrum, decomposing how each tier controls the presence of appearance, contour, and pixel arrangement information.

This fine-grained discretization of the privacy continuum enables clear empirical analysis that has been lacking in prior benchmarks.

Context Bias and Background Removal

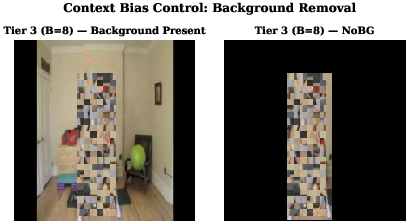

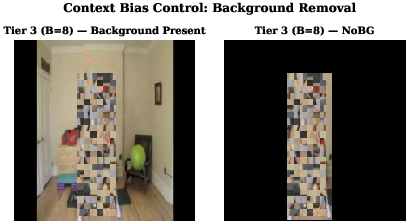

Empirical studies have established that HAR models frequently rely on static environmental context rather than subject motion. Because the privacy transformations act on the subject ROI, a model can sidestep them by classifying from the untouched background, which masks their true utility cost. To isolate the effect of the transformations and rule out such context-driven shortcuts, PrivHAR-Bench provides background-removed versions of all Tier 3 variants (the NoBG condition), in which all non-ROI pixels are set to zero.

The background bias distinction is depicted in Figure 3.

Figure 3: Context bias control via background removal; only the transformed ROI is preserved in the NoBG variant.

This affords precise measurement of the contribution from human-centered features as opposed to peripheral visual cues.
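The NoBG condition amounts to zeroing everything outside the ROI. A minimal sketch, assuming the ROI is an axis-aligned bounding box given as `(x0, y0, x1, y1)`; the function name `remove_background` is hypothetical and the benchmark's actual mask shape may differ:

```python
import numpy as np

def remove_background(frame, roi):
    """Return a copy of frame with every pixel outside the ROI box set to zero."""
    x0, y0, x1, y1 = roi
    out = np.zeros_like(frame)                 # start from an all-black frame
    out[y0:y1, x0:x1] = frame[y0:y1, x0:x1]    # copy back only the ROI
    return out
```

Applied to an already scrambled frame, this leaves only the transformed ROI visible, so any accuracy that remains cannot come from background cues.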

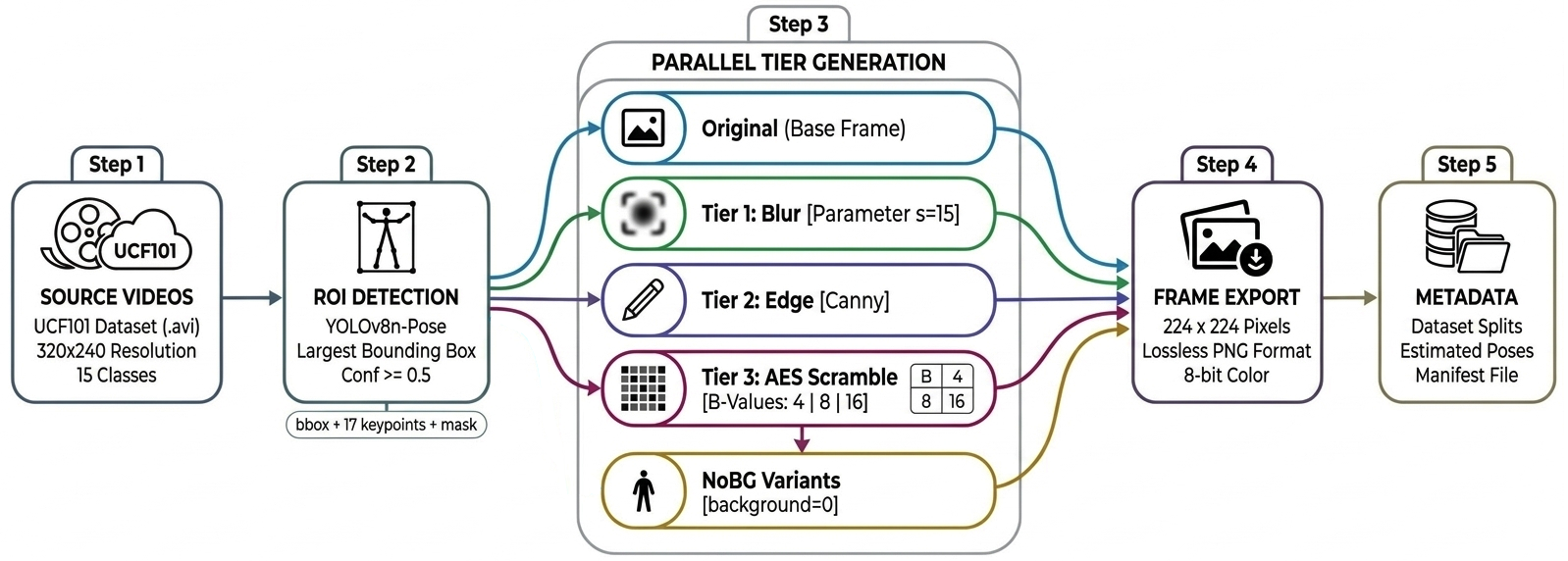

Dataset Structure, Generation, and Protocol

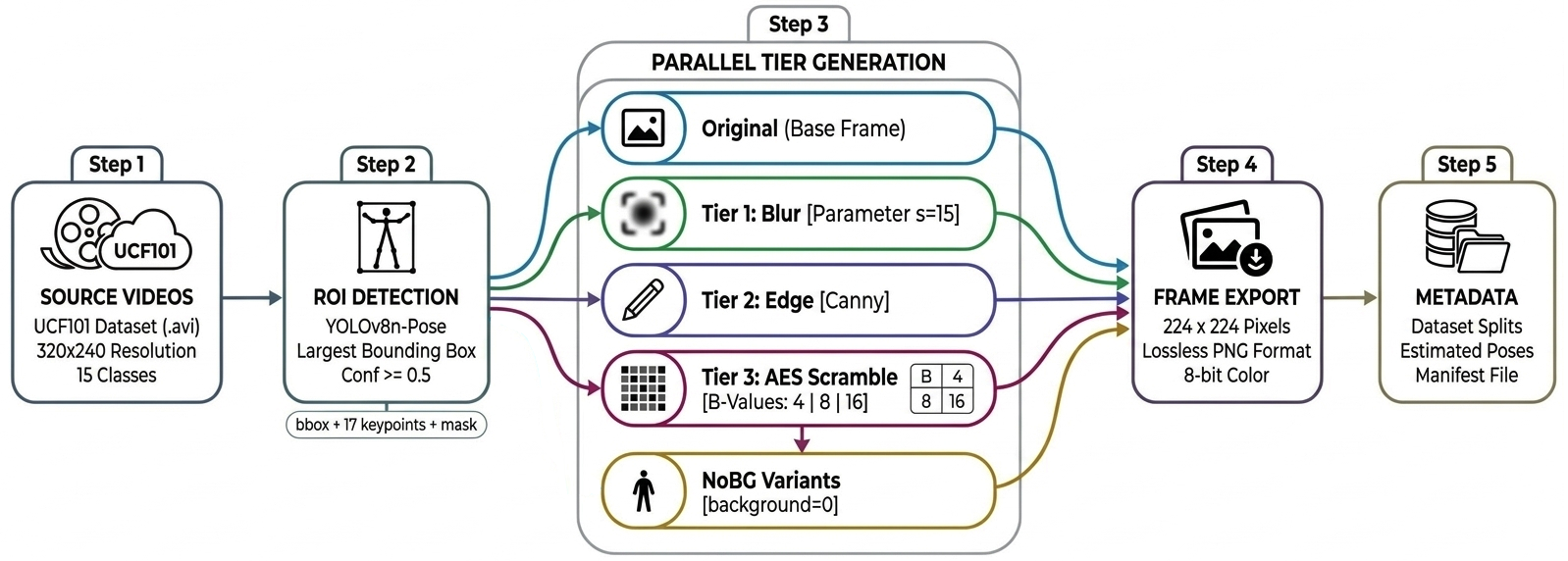

PrivHAR-Bench draws on a 15-class subset of UCF101, chosen to emphasize diversity of body articulation while minimizing object and context dependencies. The generation pipeline relies on YOLOv8n-Pose for ROI and pose estimation. For each of the 1,932 clips, a centered 32-frame window is extracted at 224×224 resolution, and every frame is stored as a lossless PNG under all privacy tiers, which preserves transformation integrity and avoids codec artifacts.
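Selecting a centered 32-frame window reduces to simple index arithmetic. The sketch below is illustrative only: the function name `centered_window` is hypothetical, and padding short clips by repeating the last frame is an assumption of this example, since the handling of clips shorter than 32 frames is not described here.

```python
def centered_window(num_frames, window=32):
    """Return `window` frame indices centered in a clip of `num_frames` frames."""
    if num_frames <= 0:
        raise ValueError("clip must contain at least one frame")
    start = max((num_frames - window) // 2, 0)
    indices = list(range(start, min(start + window, num_frames)))
    # ASSUMPTION: short clips are padded by repeating the final frame.
    indices += [indices[-1]] * (window - len(indices))
    return indices
```

For a 100-frame clip this selects frames 34 through 65, leaving 34 frames unused on each side.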

The complete pipeline—encompassing ROI detection, privacy transformation, mask application, and deterministic randomization (AES-based, frame-specific permutations)—is released for full reproducibility.

Figure 4 details the pipeline structure.

Figure 4: End-to-end PrivHAR-Bench pipeline: from input video through parallel privacy tier generation using unified ROI detection and masking.

All evaluation follows prescribed group-based (identity-controlled) splits, under both per-tier within-domain and cross-domain (trained on clear, tested on transformed) protocols. A released eval.py toolkit standardizes all recognition and privacy metrics, removing evaluation heterogeneity across methods.
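A group-based split keeps all clips from the same recording group on one side of the train/test boundary. The sketch below relies on the UCF101 file-naming convention `v_<Class>_g<group>_c<clip>`; the function names and the choice of test groups are hypothetical, and the benchmark's prescribed split files should be used in practice.

```python
import re

# UCF101 clip names look like "v_Archery_g01_c02": group 1, clip 2.
_GROUP_RE = re.compile(r"_g(\d+)_c\d+")

def group_id(clip_name):
    """Extract the recording-group number from a UCF101-style clip name."""
    match = _GROUP_RE.search(clip_name)
    if match is None:
        raise ValueError(f"no group id in {clip_name!r}")
    return int(match.group(1))

def group_split(clip_names, test_groups):
    """Assign whole groups to train or test so no group spans both sets."""
    train, test = [], []
    for name in clip_names:
        (test if group_id(name) in test_groups else train).append(name)
    return train, test
```

Splitting by group rather than by clip prevents the same subject and scene from appearing in both training and test data, which would otherwise inflate accuracy.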

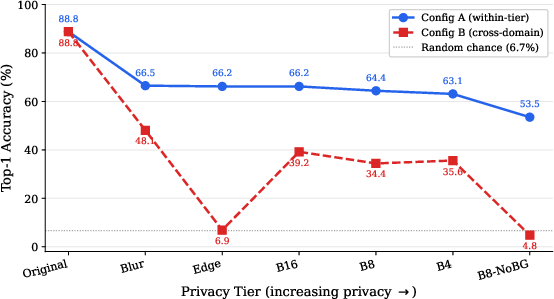

Empirical Findings: Privacy-Utility Trade-Off and Context Isolation

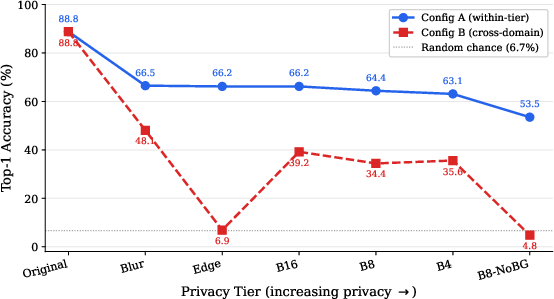

Baseline experiments with R3D-18 (3D ResNet-18, Kinetics-400 pre-trained) quantify the utility cost of escalating privacy and of controlling for context bias. Under within-tier training, accuracy declines monotonically with increasing privacy, from 88.8% (clear) to 63–66% (privacy tiers), and further to 53.5% for the B8 NoBG variant. Notably, context removal (NoBG) yields a 10.9 pp accuracy drop, confirming substantial reliance on background cues. Under cross-domain evaluation (trained on clear, tested on transformed), performance falls to roughly chance level for edge representations (6.9%) and to 4.8% for the B8 NoBG variant, indicating that essentially no transferable signal remains.
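The reported numbers can be sanity-checked with a few lines of arithmetic. The sketch assumes uniform guessing over the 15 classes as the chance baseline and assumes the 10.9 pp drop is measured against the B8 variant with background; the implied with-background figure is derived here, not quoted from the paper.

```python
NUM_CLASSES = 15

# Chance-level top-1 accuracy for uniform guessing, in percent.
chance = 100.0 / NUM_CLASSES            # about 6.67

# Reported within-tier figures, in percent and percentage points.
b8_nobg_acc = 53.5
nobg_drop_pp = 10.9

# Implied B8 accuracy with background (derived, under the assumption above).
implied_b8_acc = round(b8_nobg_acc + nobg_drop_pp, 1)   # 64.4

# Reported cross-domain results relative to chance.
edge_cross = 6.9
b8_nobg_cross = 4.8
edge_margin = edge_cross - chance          # slightly above chance
scramble_margin = b8_nobg_cross - chance   # below chance
```

The implied 64.4% falls inside the reported 63–66% band for the privacy tiers, and the cross-domain edge result sits within about a quarter of a percentage point of chance.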

These trends are shown in Figure 5.

Figure 5: Top-1 recognition accuracy of R3D-18 across privacy tiers and context conditions, demarcating within-tier and cross-domain generalization gaps.

Privacy Audit: Face Obfuscation, SSIM/PSNR, and Privacy-Utility Metrics

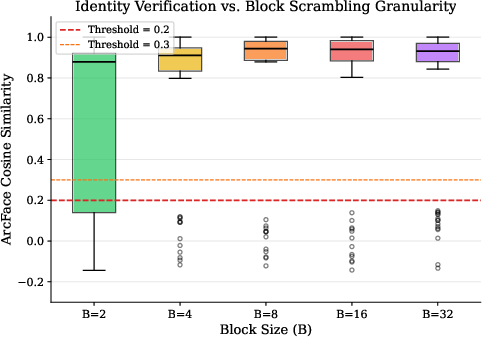

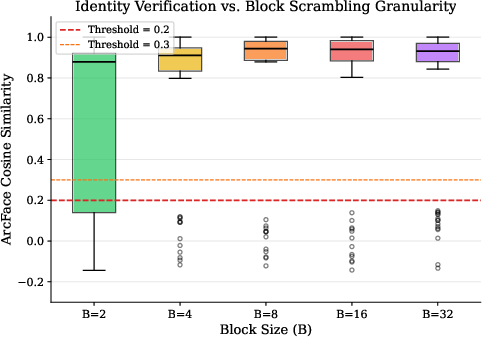

Block scrambling rendered 89% of faces undetectable to a state-of-the-art ArcFace model at every tested block size; the failure rate does not vary with B, given UCF101's low facial resolution. For the few faces that survived scrambling, similarity conditional on detection remained above verification thresholds, but these cases are a small minority, primarily large, off-center faces. Figure 6 illustrates the detection and similarity rates as a function of B.

Figure 6: ArcFace verification similarity post-scrambling, demonstrating the abrupt failure of face localization even at coarser block sizes.

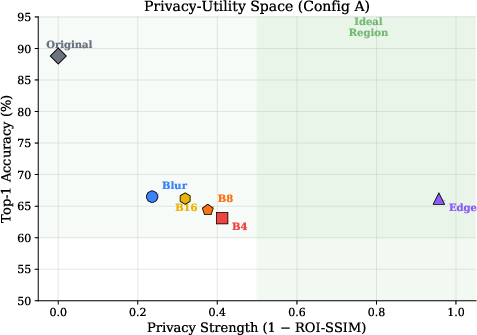

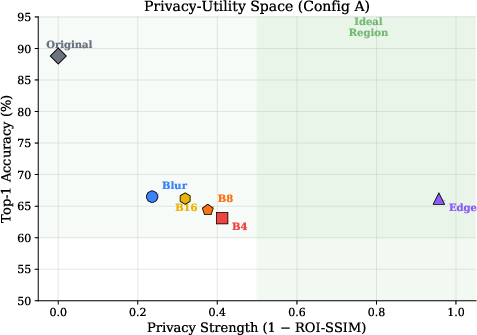

SSIM and PSNR, both computed only within ROIs, further indicate that scrambling induces severe perceptual distortion. ROI-PSNR is the less informative of the two because pixels inside each block are left unmodified. Edge-extracted ROIs show the lowest SSIM, yielding the highest composite privacy-utility (PU) score. Figure 7 shows the privacy-utility spectrum for all tiers.
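Restricting a distortion metric to the ROI means cropping both frames before computing it. A minimal sketch for PSNR, using the standard definition 10·log10(MAX² / MSE) and assuming 8-bit frames and a box-shaped ROI; the function name `roi_psnr` is hypothetical and is not taken from the released toolkit:

```python
import numpy as np

def roi_psnr(original, transformed, roi, max_val=255.0):
    """PSNR in dB between two frames, computed only inside roi = (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = roi
    a = original[y0:y1, x0:x1].astype(np.float64)
    b = transformed[y0:y1, x0:x1].astype(np.float64)
    mse = np.mean((a - b) ** 2)
    if mse == 0:
        return float("inf")   # identical ROIs
    return float(10.0 * np.log10(max_val ** 2 / mse))
```

Lower values indicate stronger distortion of the ROI. Changes outside the box, such as background removal, do not affect the score.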

Figure 7: Privacy-Utility space for PrivHAR-Bench R3D-18: highest utility with maximum privacy (top-right) is uniquely achieved by edge-based Tier 2.

Notably, Tier 2 (edge) achieves both high privacy (ROI-SSIM = 0.043) and competitive utility (66.2% accuracy), yielding the highest PU score of all tiers.

Limitations, Theoretical Implications, and Future Development

PrivHAR-Bench's empirical findings are qualified by several limitations: the low native resolution of UCF101 (which limits how well the privacy metrics generalize to higher-resolution deployments), the absence of multi-person and interaction scenarios, incomplete temporal privacy (gait-based re-identification is not addressed), and the lack of surveillance-relevant activity classes. The training regime is also asymmetric (15 epochs for the clear tier versus 5 for the transformed tiers), which slightly disadvantages the privacy-preserving tiers but does not invalidate the trend analysis. The pipeline and protocol are designed to be extended with additional transformations, higher-resolution sources, and larger class sets.

From a theoretical perspective, PrivHAR-Bench lets future research quantify the effect of destroying visual information at different abstraction levels and separate genuine methodological advances in privacy-preserving HAR from gains that merely exploit background signal. Practically, the benchmark offers a common standard for comparing future methods and for evaluating them with deployment in mind.

Conclusion

PrivHAR-Bench (2604.00761) establishes a comprehensive, reproducible framework for quantitative analysis of the privacy-utility frontier in video-based action recognition. Its multi-tier privacy spectrum, context-bias control, and fixed protocol resolve critical gaps in current HAR evaluation practices. Benchmarked results demonstrate both the cost and value of increasing privacy and highlight the inadequacy of existing architectures for utility retention under strong privacy guarantees. Future benchmark extensions—especially inclusion of higher-resolution video, additional classes, more diverse cryptographic transformations, and multi-person activities—will be essential for bridging the gap to real-world privacy-compliant HAR. The released dataset and code form a robust substrate for future research in privacy-preserving machine perception.