- The paper presents a data-driven optimization of 3D Kronecker point sets that outperforms classical sequences in star discrepancy reduction.

- It employs evolutionary heuristics and Irace for tuning parameters, achieving state-of-the-art performance for various set sizes.

- The study highlights the limitations of the Kronecker approach in higher dimensions, motivating future exploration in quasi-Monte Carlo methods.

Algorithmic Construction of Low Star Discrepancy 3D Kronecker Point Sets via Algorithm Configuration

Introduction

The paper "Finding Low Star Discrepancy 3D Kronecker Point Sets Using Algorithm Configuration Techniques" (2604.00786) addresses the problem of generating 3D point sets with minimal $L_\infty$ star discrepancy, which is central to quasi-Monte Carlo methods, experiment design, one-shot optimization, and related domains. Star discrepancy quantifies the non-uniformity of a point distribution in $[0,1)^d$ and thereby ties the quality of numerical integration and sampling-based algorithms to the properties of the underlying point set. While classical constructions (e.g., Sobol', Halton, Hammersley) provide theoretical guarantees in the asymptotic regime, evidence has accumulated that for finite set sizes, the setting that matters in most practical applications, bespoke optimization can produce markedly better point sets.

This work focuses on Kronecker sequences, which generalize the 2D Fibonacci lattice construction to higher dimensions. Their uniformity depends on a small set of continuous parameters, whose values are non-trivial to tune because the discrepancy landscape is highly multimodal and non-convex. The paper investigates two automated approaches, black-box metaheuristics (e.g., CMA-ES) and algorithm configuration (Irace), for the direct minimization of star discrepancy in the 3D case, and reports new state-of-the-art results over broad ranges of point set cardinalities.

The star discrepancy $d_\infty^*(P)$ of a finite set $P \subset [0,1)^d$ of cardinality $n$ is defined as the supremum, over all boxes $[0,q)$ anchored at the origin, of the absolute difference between the volume of the box and the empirical measure (the fraction of points inside the box): $d_\infty^*(P) = \sup_{q \in [0,1]^d} \left| \frac{|P \cap [0,q)|}{n} - \lambda([0,q)) \right|$. Theoretical results such as the Koksma-Hlawka inequality underscore its role in bounding the error of quasi-Monte Carlo estimators.
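
The definition above can be evaluated exactly for small point sets. The following is a minimal sketch, not the evaluation routine used in the paper: it relies on the standard fact that the supremum is attained on the grid spanned by the point coordinates and 1, and it checks every grid corner by brute force. The function name `star_discrepancy` is chosen here for illustration.

```python
import itertools


def star_discrepancy(points):
    """Exact star discrepancy by brute force over the critical grid.

    The cost grows roughly like n**(d + 1), so this is only meant for
    small n and d.
    """
    n, d = len(points), len(points[0])
    # Only corners whose j-th coordinate is a point coordinate or 1 can
    # attain the supremum, so only those candidates are checked.
    grids = [sorted({p[j] for p in points} | {1.0}) for j in range(d)]
    worst = 0.0
    for corner in itertools.product(*grids):
        volume = 1.0
        for side in corner:
            volume *= side
        # Half-open box [0, corner): detects boxes holding too few points.
        n_open = sum(all(x < q for x, q in zip(p, corner)) for p in points)
        # Closed box [0, corner]: detects boxes holding too many points.
        n_closed = sum(all(x <= q for x, q in zip(p, corner)) for p in points)
        worst = max(worst, volume - n_open / n, n_closed / n - volume)
    return worst


print(star_discrepancy([(0.5,)]))            # 0.5
print(star_discrepancy([(0.25,), (0.75,)]))  # 0.25
print(star_discrepancy([(0.5, 0.5)]))        # 0.75
```

Because the number of grid corners grows as $n^d$, this brute-force check is only practical for small sets; larger experiments need a faster exact or heuristic discrepancy routine.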

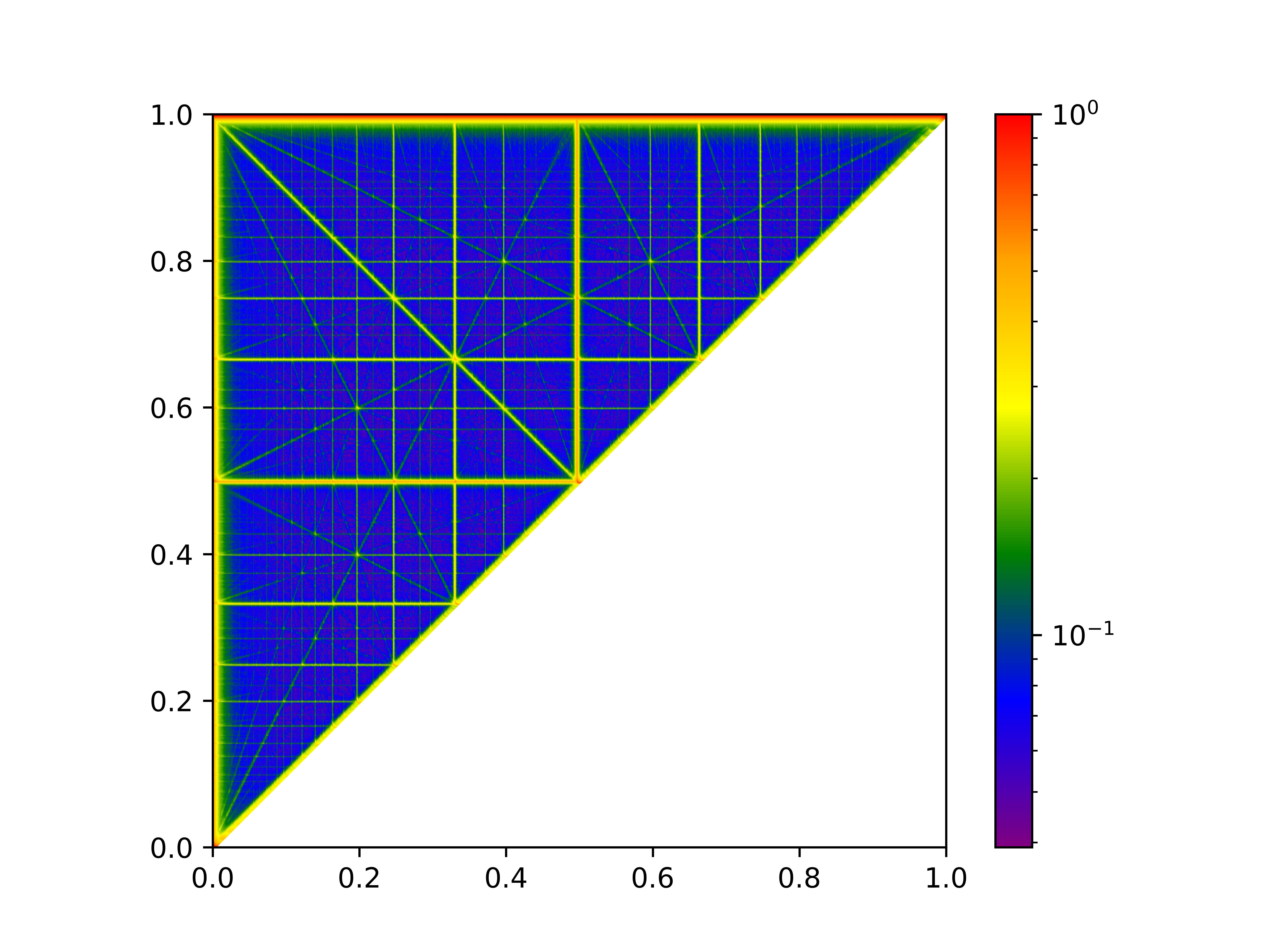

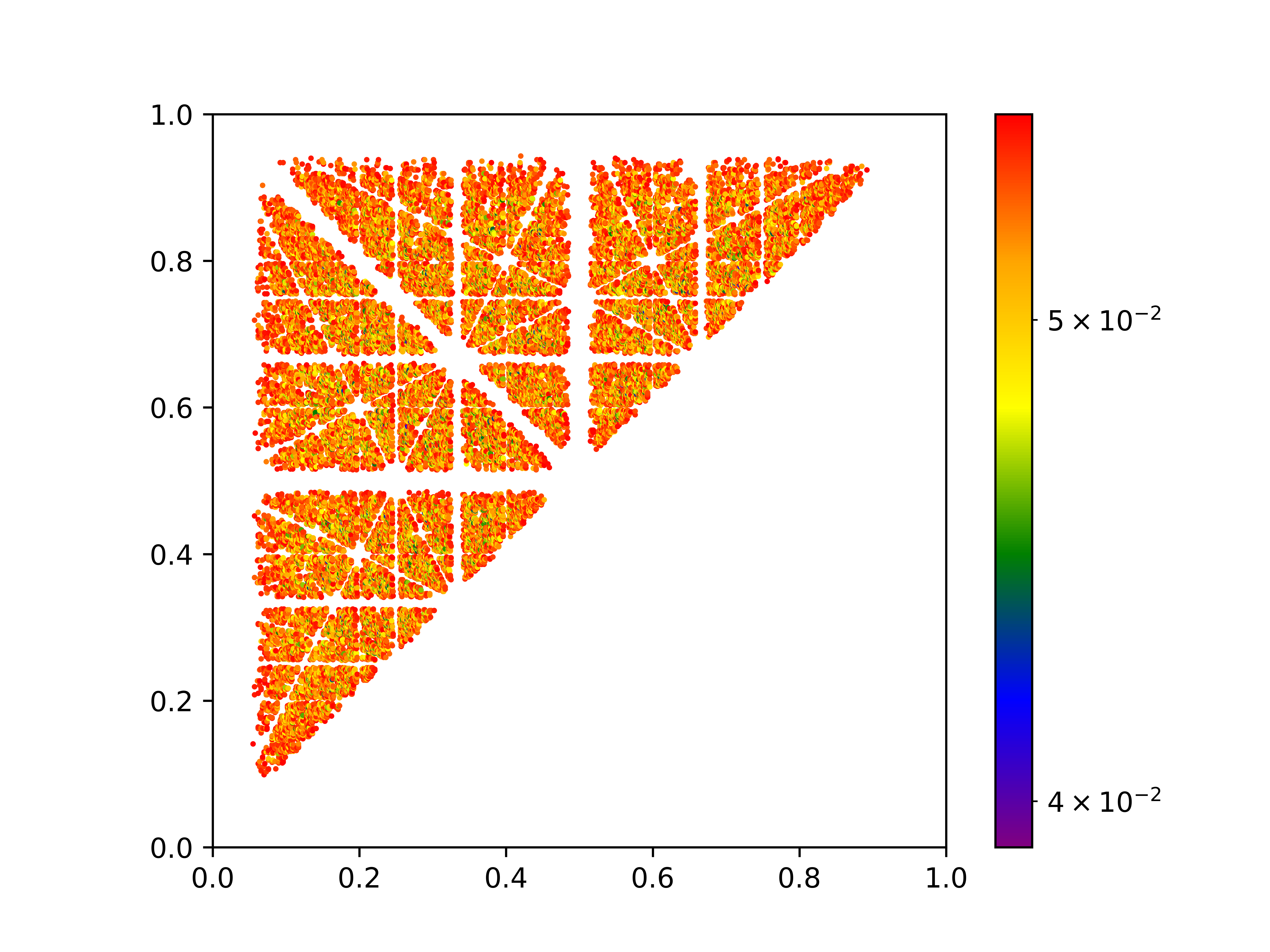

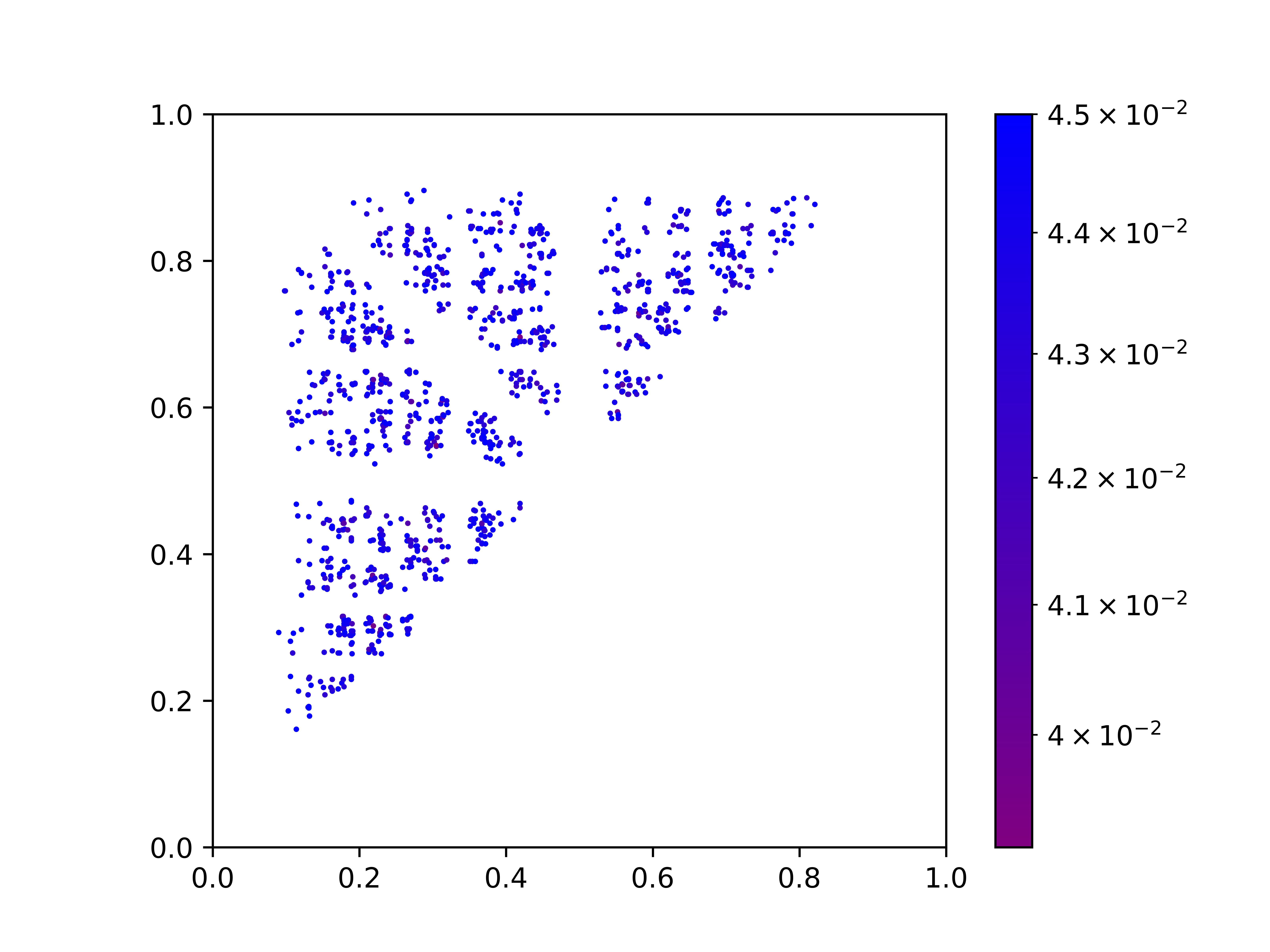

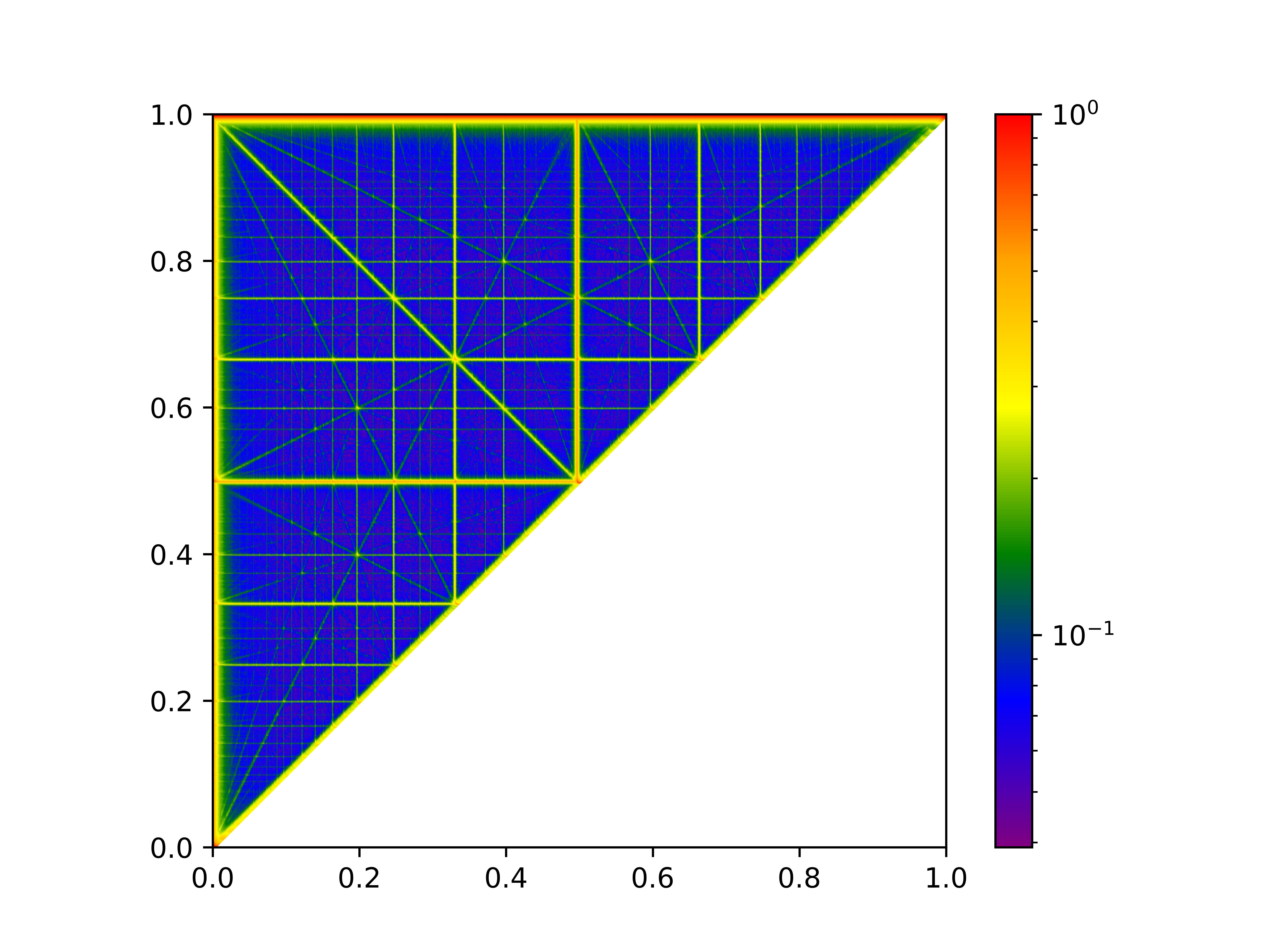

Kronecker sequences in $d$ dimensions have $d$ real-valued parameters $(p_1, \dots, p_d)$ and instantiate the sequence as the point set $\{(i p_1 \bmod 1, \dots, i p_d \bmod 1) \mid i = 1, \dots, n\}$. For tractability and based on empirical evidence, $p_1$ is held fixed, reducing the search space to the two remaining parameters $(p_2, p_3)$ in the 3D case. The optimization landscape for discrepancy as a function of $(p_2, p_3)$ is shown to be highly multimodal, with many local optima and a pronounced dependence on $n$.
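
As a concrete illustration of this construction, the sketch below generates the first $n$ points of a Kronecker set from a parameter tuple. The function name `kronecker_points` and the parameter values are illustrative assumptions; the dyadic values are chosen only so that the output is easy to verify by hand, and they are not good low-discrepancy parameters.

```python
def kronecker_points(n, params):
    """Return the n points (i*p_1 mod 1, ..., i*p_d mod 1) for i = 1..n."""
    return [tuple((i * p) % 1.0 for p in params) for i in range(1, n + 1)]


# Illustrative parameters only (hypothetical, not tuned values).
for point in kronecker_points(4, (0.5, 0.25, 0.125)):
    print(point)
# (0.5, 0.25, 0.125)
# (0.0, 0.5, 0.25)
# (0.5, 0.75, 0.375)
# (0.0, 0.0, 0.5)
```

For irrational parameters and very large $i$, the product `i * p` loses floating-point precision before the reduction modulo 1, which is worth keeping in mind when generating very long sequences.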

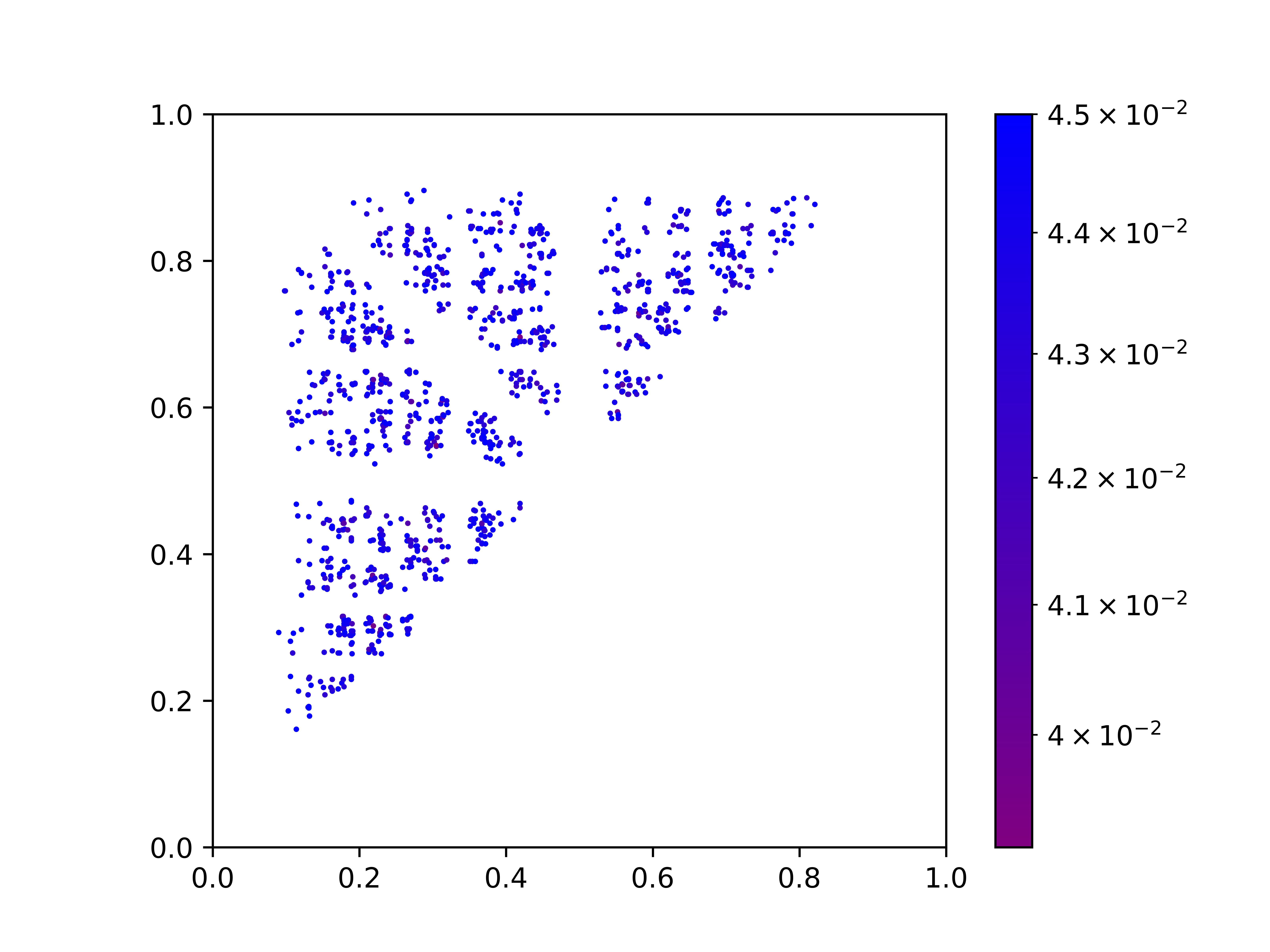

Figure 1: Heatmaps of the star discrepancy of 3D Kronecker sets for a fixed $n$. The $x$ and $y$ axes show $p_2$ and $p_3$, and color encodes the discrepancy value. The rightmost plot, restricted to regions of very low discrepancy, shows that good parameters are scattered and that the landscape is multimodal.

Black-Box Optimization and Algorithm Configuration for Kronecker Sequences

To cope with this intricate discrepancy landscape, the paper compares evolutionary black-box algorithms, primarily CMA-ES, with systematic data-driven algorithm configuration (AC), notably Irace. The former optimizes the parameters for each $n$ separately, while the latter attempts to learn robust parameterizations that remain valid across intervals of $n$ (e.g., a single parameter pair for all $n$ in a given interval).

CMA-ES is used with 10,000 function evaluations per run, leveraging its adaptation capabilities for non-separable continuous optimization. The Irace framework iteratively races parameter configurations against subsets of the problem domain (here, intervals of $n$), focusing resources on promising configurations.
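
The difference between per-$n$ optimization and interval-based configuration can be sketched with two objective functions. The code below is a simplified illustration under several assumptions: plain random search stands in for both CMA-ES and Irace, the interval objective aggregates by the mean (Irace's racing procedure works differently), `P1` is a hypothetical placeholder rather than the paper's fixed value, and the budget of 20 candidates and the tiny values of $n$ are chosen only to keep the brute-force discrepancy evaluation fast.

```python
import itertools
import random

# Hypothetical placeholder for the fixed first parameter (not the paper's value).
P1 = (5 ** 0.5 - 1) / 2


def kronecker_points(n, params):
    return [tuple((i * p) % 1.0 for p in params) for i in range(1, n + 1)]


def star_discrepancy(points):
    """Exact brute-force star discrepancy; only viable for small n."""
    n, d = len(points), len(points[0])
    grids = [sorted({p[j] for p in points} | {1.0}) for j in range(d)]
    worst = 0.0
    for corner in itertools.product(*grids):
        volume = 1.0
        for side in corner:
            volume *= side
        n_open = sum(all(x < q for x, q in zip(p, corner)) for p in points)
        n_closed = sum(all(x <= q for x, q in zip(p, corner)) for p in points)
        worst = max(worst, volume - n_open / n, n_closed / n - volume)
    return worst


def per_n_objective(n, p2, p3):
    """Discrepancy of the 3D Kronecker set of size n with parameters (P1, p2, p3)."""
    return star_discrepancy(kronecker_points(n, (P1, p2, p3)))


def interval_objective(n_values, p2, p3):
    """Mean discrepancy over several n (the mean is an assumed aggregation)."""
    return sum(per_n_objective(n, p2, p3) for n in n_values) / len(n_values)


def random_search(objective, n_candidates, rng):
    """Plain random search over [0, 1)^2; a stand-in, not CMA-ES or Irace."""
    candidates = [(rng.random(), rng.random()) for _ in range(n_candidates)]
    return min(candidates, key=lambda c: objective(*c))


# Same seed, hence the same candidates, scored by two different objectives.
best_single = random_search(
    lambda p2, p3: per_n_objective(8, p2, p3), 20, random.Random(0))
best_interval = random_search(
    lambda p2, p3: interval_objective(range(6, 9), p2, p3), 20, random.Random(0))

print("tuned for n = 8:     ", best_single, per_n_objective(8, *best_single))
print("tuned for n in 6..8: ", best_interval, per_n_objective(8, *best_interval))
```

On a shared candidate pool, the pair selected for a single $n$ is never worse at that $n$ than the interval-tuned pair; what the interval objective buys is a single configuration that is reasonable for every $n$ in the range.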

Empirical Results

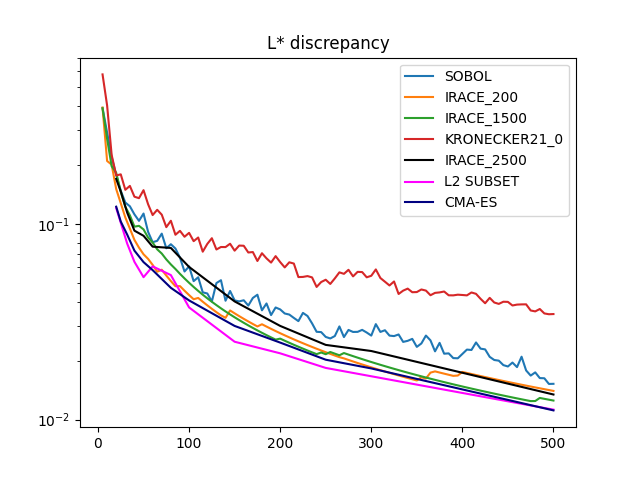

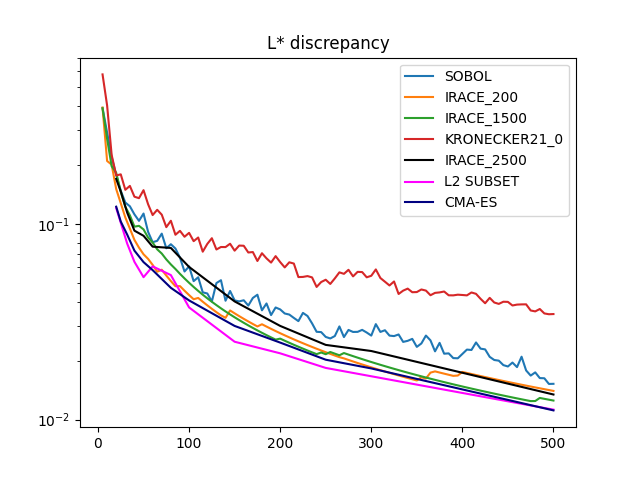

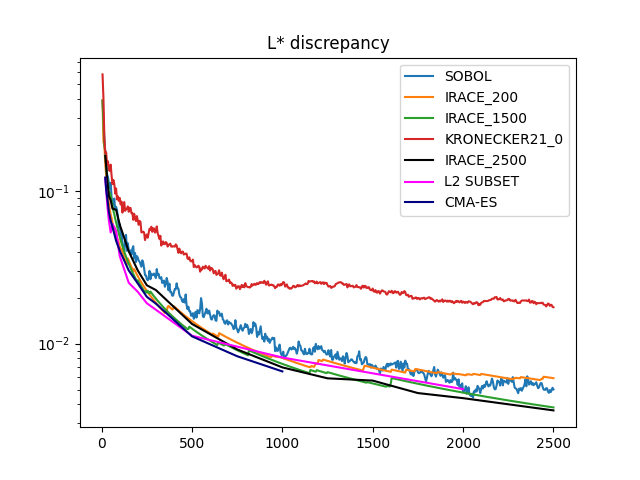

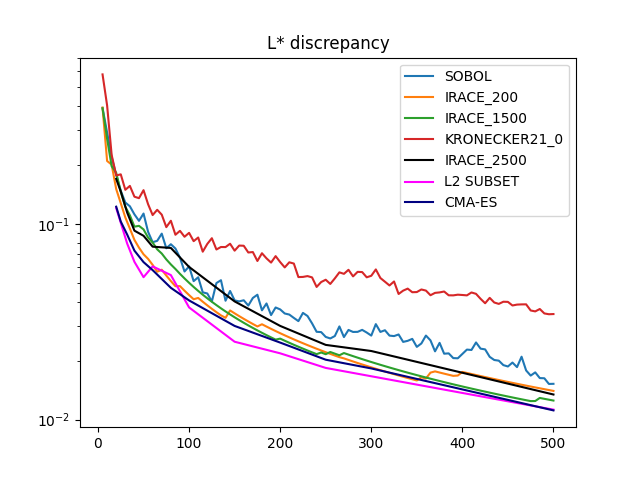

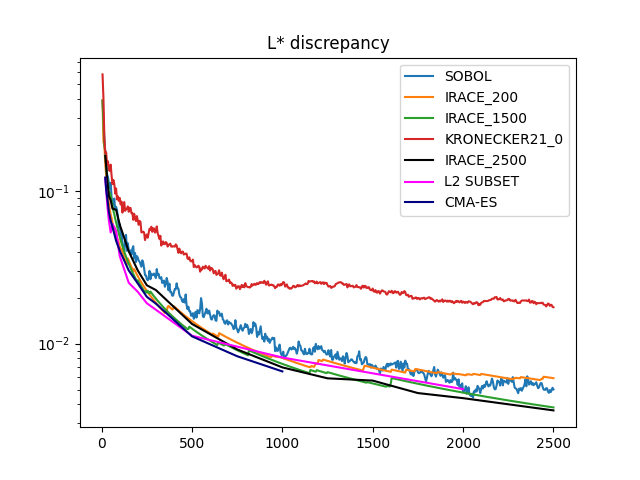

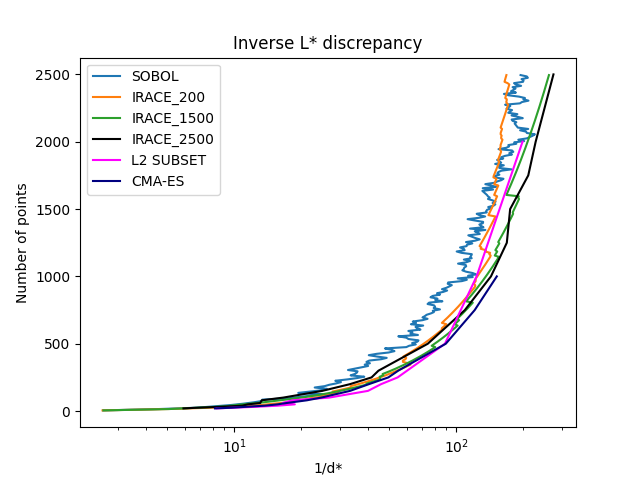

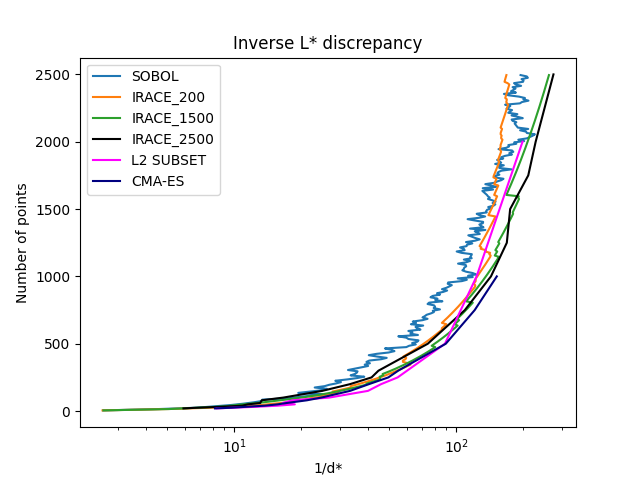

Experimental results show that both CMA-ES (for small-to-moderate $n$) and Irace-tuned Kronecker sequences (especially when tuned on intervals of larger $n$) generate point sets with lower star discrepancy than the classical Sobol' sequence or the L2_Subset approach, with a clear lead as $n$ increases.

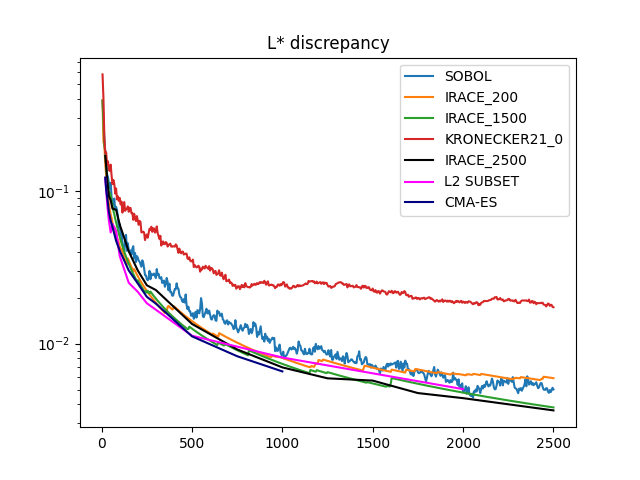

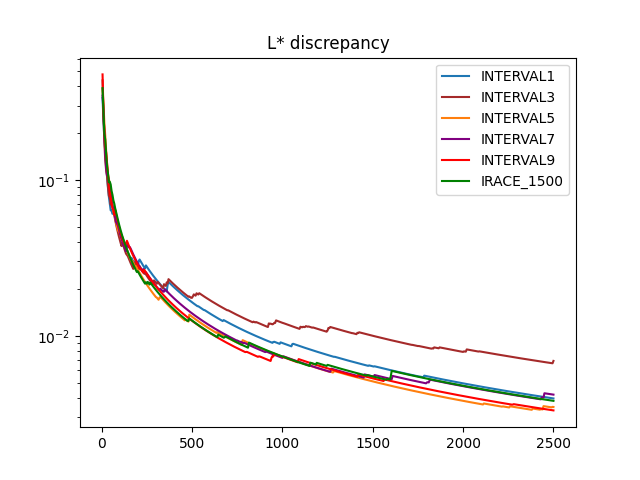

Figure 2: Plot of the $L_\infty$ star discrepancy for 3D Kronecker sets across $n$, comparing tuned and classical constructions.

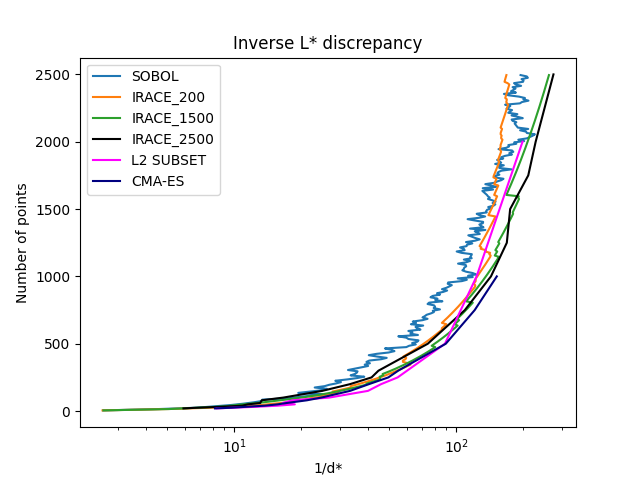

Figure 3: The inverse star discrepancy: the minimum number of points $n$ required to reach a target discrepancy, highlighting substantial efficiency gains for Irace/CMA-ES-tuned Kronecker sets over L2_Subset and Sobol'.
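
The inverse star discrepancy can be computed by scanning $n$ upward until the target is met. The sketch below is an illustration under stated assumptions rather than the paper's procedure: to stay cheap it uses a one-dimensional Kronecker sequence with the golden-ratio parameter, for which the star discrepancy has the well-known closed form $\frac{1}{2n} + \max_i \left| x_{(i)} - \frac{2i-1}{2n} \right|$ over the sorted points. The names `star_discrepancy_1d`, `inverse_star_discrepancy`, and `golden_1d` are hypothetical.

```python
def star_discrepancy_1d(xs):
    """Closed form in 1D: 1/(2n) + max_i |x_(i) - (2i - 1)/(2n)| over sorted xs."""
    n = len(xs)
    ordered = sorted(xs)
    return 1 / (2 * n) + max(
        abs(x - (2 * i - 1) / (2 * n)) for i, x in enumerate(ordered, start=1)
    )


def inverse_star_discrepancy(discrepancy_of_n, target, n_max):
    """Smallest n <= n_max with discrepancy_of_n(n) <= target, or None."""
    for n in range(1, n_max + 1):
        if discrepancy_of_n(n) <= target:
            return n
    return None


PHI = (5 ** 0.5 - 1) / 2  # golden-ratio parameter, used only for this 1D demo


def golden_1d(n):
    """Star discrepancy of the first n points of the 1D sequence i*PHI mod 1."""
    return star_discrepancy_1d([(i * PHI) % 1.0 for i in range(1, n + 1)])


print(inverse_star_discrepancy(golden_1d, 0.5, 100))  # 2
print(inverse_star_discrepancy(golden_1d, 0.0, 100))  # None
```

A linear scan is used instead of a binary search because the discrepancy of the first $n$ points of a sequence is not guaranteed to decrease monotonically in $n$.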

Quantitative highlights include:

- For $d = 3$ and moderate-to-large $n$, Kronecker sequences optimized via CMA-ES or Irace deliver lower discrepancy than any classical or metaheuristic method previously reported.

- At large $n$, Irace-tuned parameter pairs generalize well, consistently outperforming Sobol' and remaining competitive even outside the interval of $n$ on which they were trained.

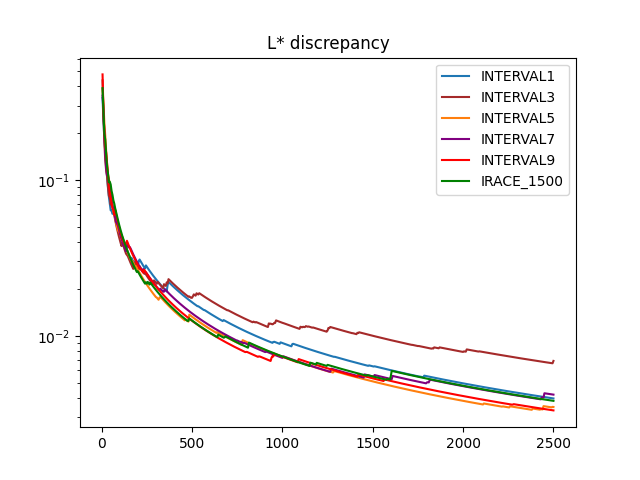

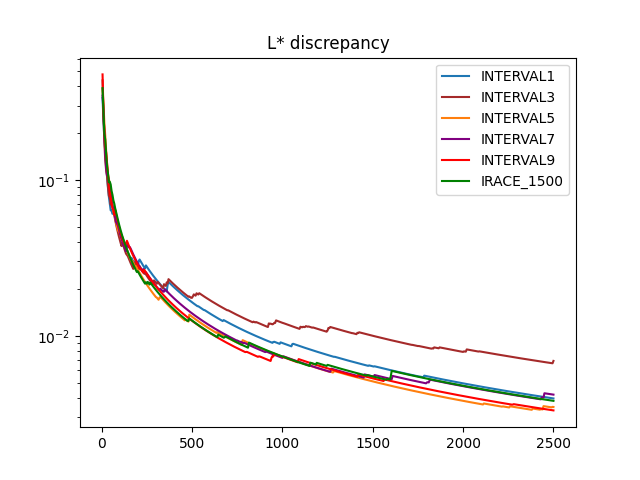

The paper also systematically studies whether parameter tuning over small intervals of $n$ yields generalizable parameter sets. It finds that while configurations tuned for small $n$ may deteriorate at large $n$, those obtained from larger intervals (or from the largest one considered) provide robust, near-optimal performance across scales.

Figure 4: Interval tuning robustness. Evolution of the star discrepancy with $n$ for Kronecker parameter pairs optimized on small intervals of $n$. For small $n$, discrepancies are similar, but only large-interval-tuned parameters remain near-optimal as $n$ increases.

Effects of Postprocessing and Higher Dimensions

The authors also apply postprocessing strategies (which exploit point-set symmetries and coordinate ordering) to Kronecker sequences, confirming the expected benefit but also showing that high-quality Kronecker sets before postprocessing remain among the best after. However, computational expense limits these experiments to small $n$.

Beyond three dimensions, all tested optimization strategies (CMA-ES, RTS, Irace) failed to surpass the truncated Sobol' sequence, indicating either intrinsic limitations of Kronecker-type constructions in higher dimensions or a dramatic increase in the search difficulty of the parameter landscape.

Theoretical and Practical Implications

The paper's central claims are:

- Optimized 3D Kronecker sequences outperform all classical and contemporary heuristic constructions for star discrepancy at moderate-to-large set sizes $n$.

- The effectiveness and generalization of parameter tuning grow with the size of the interval of $n$ considered, suggesting that robust, interval-based configuration is preferable to pointwise optimization for practical deployments in experiment design and QMC applications.

- The limitations observed beyond three dimensions signal that the Kronecker construction may not remain tractable or competitive there, motivating either alternative constructions or enhanced search methods.

By demonstrating strong state-of-the-art improvements in practical discrepancy minimization, the paper provides immediately applicable advances for computational fields requiring uniformly distributed sample sets, while also contributing to the understanding of algorithm configuration methods in large, multimodal, non-convex continuous search spaces.

Future Directions

Key anticipated developments involve:

- Deeper analysis of the parameter-to-discrepancy mapping to understand the observed highly multimodal landscape and to improve global optimization reliability, particularly in higher dimensions.

- Hybrid methods combining heuristic search, metaheuristics, and learning-based models (e.g., neural architectures) to attack the $d > 3$ regime.

- Adaptive or online Kronecker parameter selection schemes that dynamically tune sequence parameters as additional samples are incorporated in sequential designs or augmentations.

Such directions align with the broadening focus in AI and optimization research on scalable, adaptive sampling for high-dimensional integration, uncertainty quantification, and experimental design.

Conclusion

The study establishes that algorithm configuration for Kronecker sequence parameters in 3D yields point sets of record-low star discrepancy for moderate and large $n$, demonstrating the superiority of black-box optimization over both classical and previous state-of-the-art heuristic constructions in this setting. While 3D optimization is largely solved via these methods, extension beyond three dimensions remains an open and challenging problem, and the insights drawn here motivate ongoing research into both structure-exploiting search and fundamentally different low-discrepancy construction paradigms.
