- The paper introduces MBGR, a framework that combines business-aware semantic IDs, multi-business prediction, and label dynamic routing to improve generative recommendation across multiple business lines.

- It employs a Transformer-based autoregressive model with a parameter-sharing Mixture-of-Experts tower to generate domain-specific item embeddings.

- Offline experiments show significant Hit Rate@10 gains, especially for minority domains, and an online A/B test in Meituan's production system shows a weighted +3.98% CTCVR lift.

MBGR: Multi-Business Prediction for Generative Recommendation at Meituan

Motivation and Context

Generative Recommendation (GR) systems, which represent items as Semantic IDs (SIDs) and train with Next Token Prediction (NTP), have shown better efficiency and performance than traditional two-tower architectures. In industrial multi-business settings, however, GR approaches frequently run into two problems: (1) a "seesaw phenomenon," in which gains on one business come at the expense of others, because NTP does not model behavior across businesses; and (2) representation confusion, because a single unified SID space blurs the semantic distinctions between business domains. MBGR extends generative recommendation to multi-business applications, targeting both business heterogeneity and representation integrity.

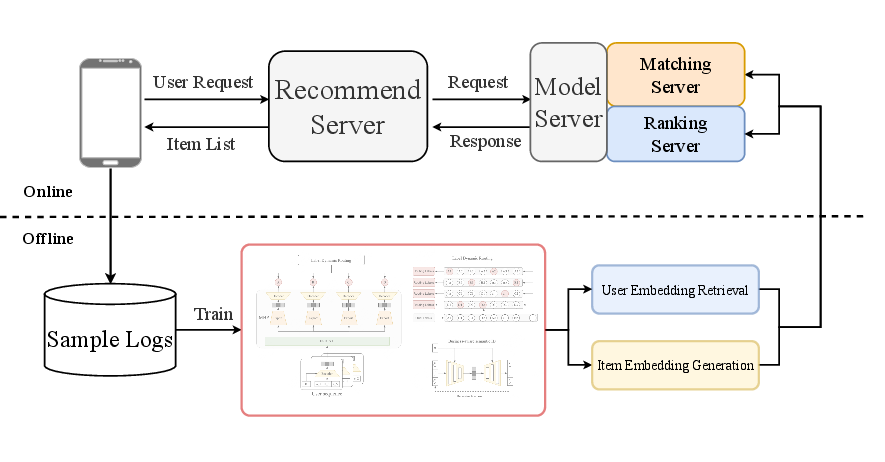

Architectural Overview

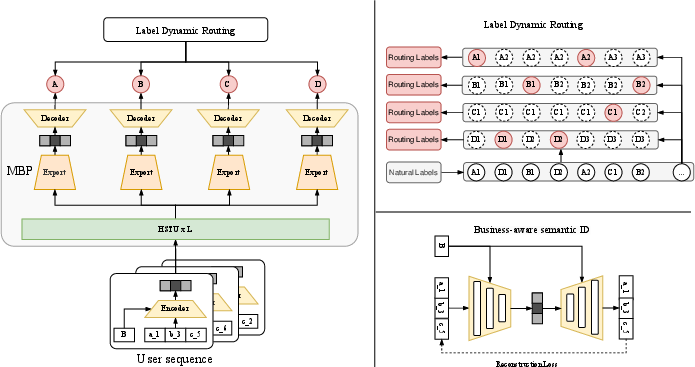

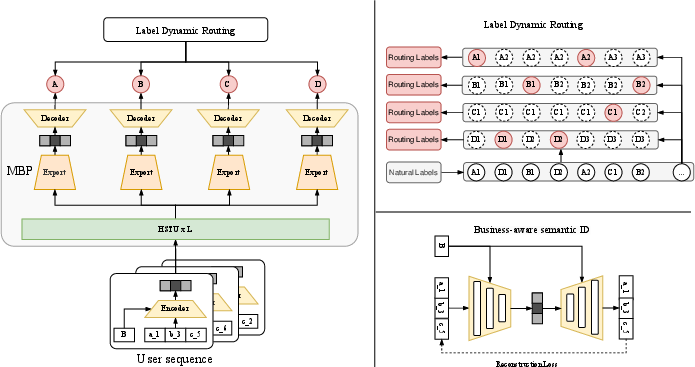

MBGR comprises three modules: Business-aware Semantic ID (BID), Multi-Business Prediction (MBP), and Label Dynamic Routing (LDR). Together they inject business context into tokenization, provide domain-specialized generative heads, and densify labels for robust multi-business item prediction.

Figure 1: The overall architecture of MBGR, integrating BID, MBP, and LDR modules for domain-specific generative recommendation.

Business-aware Semantic ID (BID)

BID uses a dual-path autoencoder with a business-aware encoding path and a semantic reconstruction path. Business embeddings are fused with token representations during both encoding and decoding, which minimizes semantic information loss while adapting the representation to each domain.
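The source does not specify BID's layers or quantizer. As a minimal sketch, assuming residual quantization (a common way to derive SIDs, not confirmed for MBGR) and untrained linear maps, the code below shows where a business embedding could enter both the encoder and the decoder. The class name, dimensions, and concatenation-based fusion are all hypothetical.

```python
import numpy as np


class BusinessAwareRQ:
    """Hypothetical business-aware autoencoder with residual quantization (untrained, linear)."""

    def __init__(self, d_item, d_biz, d_latent, n_biz, n_levels=3, codebook_size=16, seed=0):
        rng = np.random.default_rng(seed)
        self.biz_emb = rng.normal(size=(n_biz, d_biz))
        # Encoder input: [item features ; business embedding] (assumed fusion by concatenation)
        self.w_enc = rng.normal(scale=0.1, size=(d_item + d_biz, d_latent))
        # Decoder input: [quantized latent ; business embedding]
        self.w_dec = rng.normal(scale=0.1, size=(d_latent + d_biz, d_item))
        self.codebooks = rng.normal(size=(n_levels, codebook_size, d_latent))

    def encode(self, x, biz):
        """Map items (n, d_item) with business ids (n,) to SID codes (n, n_levels)."""
        h = np.concatenate([x, self.biz_emb[biz]], axis=-1) @ self.w_enc
        residual, quantized, codes = h, np.zeros_like(h), []
        for cb in self.codebooks:
            # Pick the nearest codeword to the current residual at this level.
            dist = ((residual[:, None, :] - cb[None, :, :]) ** 2).sum(axis=-1)
            idx = dist.argmin(axis=1)
            codes.append(idx)
            quantized = quantized + cb[idx]
            residual = residual - cb[idx]
        return np.stack(codes, axis=1), quantized

    def decode(self, quantized, biz):
        """Reconstruct item features, again conditioning on the business embedding."""
        return np.concatenate([quantized, self.biz_emb[biz]], axis=-1) @ self.w_dec

    def reconstruction_loss(self, x, biz):
        """L2 reconstruction error between the input and the decoded item."""
        _, q = self.encode(x, biz)
        return float(np.mean((x - self.decode(q, biz)) ** 2))


model = BusinessAwareRQ(d_item=8, d_biz=4, d_latent=6, n_biz=3)
x = np.random.default_rng(1).normal(size=(5, 8))
biz = np.array([0, 1, 2, 0, 1])
codes, quantized = model.encode(x, biz)
print(codes.shape)  # (5, 3): one code per quantization level
```

In a trained system the projections and codebooks would be learned by minimizing a reconstruction loss; here they are random, so the sketch only illustrates the data flow.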

Multi-Business Prediction (MBP)

MBP utilizes a parameter-sharing Mixture-of-Experts (MoE) tower within a Transformer-based autoregressive model. Item representations produced by BID are transformed via business-conditioned fusion, adaptive gating, and expert aggregation, producing domain-specific item embeddings for simultaneous prediction across business lines.
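A minimal sketch of such a tower, assuming experts shared by all businesses, one softmax gate per business, and additive fusion of a business embedding into the sequence state. These choices, and every name below, are illustrative assumptions rather than MBGR's published design.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


class SharedMoETower:
    """Hypothetical MoE tower: experts shared across businesses, one gate per business."""

    def __init__(self, d_model, d_out, n_experts, n_biz, seed=0):
        rng = np.random.default_rng(seed)
        self.biz_emb = rng.normal(scale=0.1, size=(n_biz, d_model))
        self.experts = rng.normal(scale=0.1, size=(n_experts, d_model, d_out))
        self.gates = rng.normal(scale=0.1, size=(n_biz, d_model, n_experts))

    def gate_weights(self, h, biz):
        fused = h + self.biz_emb[biz]  # business-conditioned fusion (additive, assumed)
        return fused, softmax(fused @ self.gates[biz])  # adaptive gating over experts

    def __call__(self, h, biz):
        """h: (batch, d_model) sequence state; biz: int business id -> (batch, d_out)."""
        fused, w = self.gate_weights(h, biz)
        expert_out = np.einsum("bd,edo->beo", fused, self.experts)
        return np.einsum("be,beo->bo", w, expert_out)  # expert aggregation

    def all_businesses(self, h):
        """One embedding per business for every sequence: (batch, n_biz, d_out)."""
        return np.stack([self(h, b) for b in range(len(self.gates))], axis=1)


tower = SharedMoETower(d_model=16, d_out=8, n_experts=4, n_biz=3)
h = np.random.default_rng(0).normal(size=(2, 16))
print(tower.all_businesses(h).shape)  # (2, 3, 8)
```

Sharing the experts keeps the parameter count independent of the number of businesses, while the per-business gates let each domain mix the experts differently.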

Label Dynamic Routing (LDR)

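Under plain NTP, each position is supervised only by the single next item, so a business receives a label only when one of its items happens to come next. LDR densifies this supervision: for each business, it routes the prediction target at every position to the nearest subsequent item from that business. The resulting dense, business-aligned labels further improve multi-business sequence generation.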
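The routing rule can be sketched with a single right-to-left scan. The function name and the use of `None` for "no later item in this business" are hypothetical choices for illustration.

```python
from typing import Dict, List, Optional, Sequence


def route_labels(items: Sequence[str], businesses: Sequence[str],
                 business_ids: Sequence[str]) -> List[Dict[str, Optional[str]]]:
    """For each position t, map every business b to the nearest item after t that belongs to b."""
    next_item: Dict[str, Optional[str]] = {b: None for b in business_ids}
    labels: List[Dict[str, Optional[str]]] = [{} for _ in items]
    # Scan right to left so next_item always holds the closest later item per business.
    for t in range(len(items) - 1, -1, -1):
        labels[t] = dict(next_item)  # snapshot before adding position t itself
        next_item[businesses[t]] = items[t]
    return labels


items = ["i1", "i2", "i3", "i4"]
businesses = ["A", "B", "A", "C"]
routed = route_labels(items, businesses, ["A", "B", "C"])
for t, lab in enumerate(routed):
    print(t, lab)
```

Position 0 is supervised by i3 for business A, i2 for B, and i4 for C, whereas plain next-token prediction would give it only i2.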

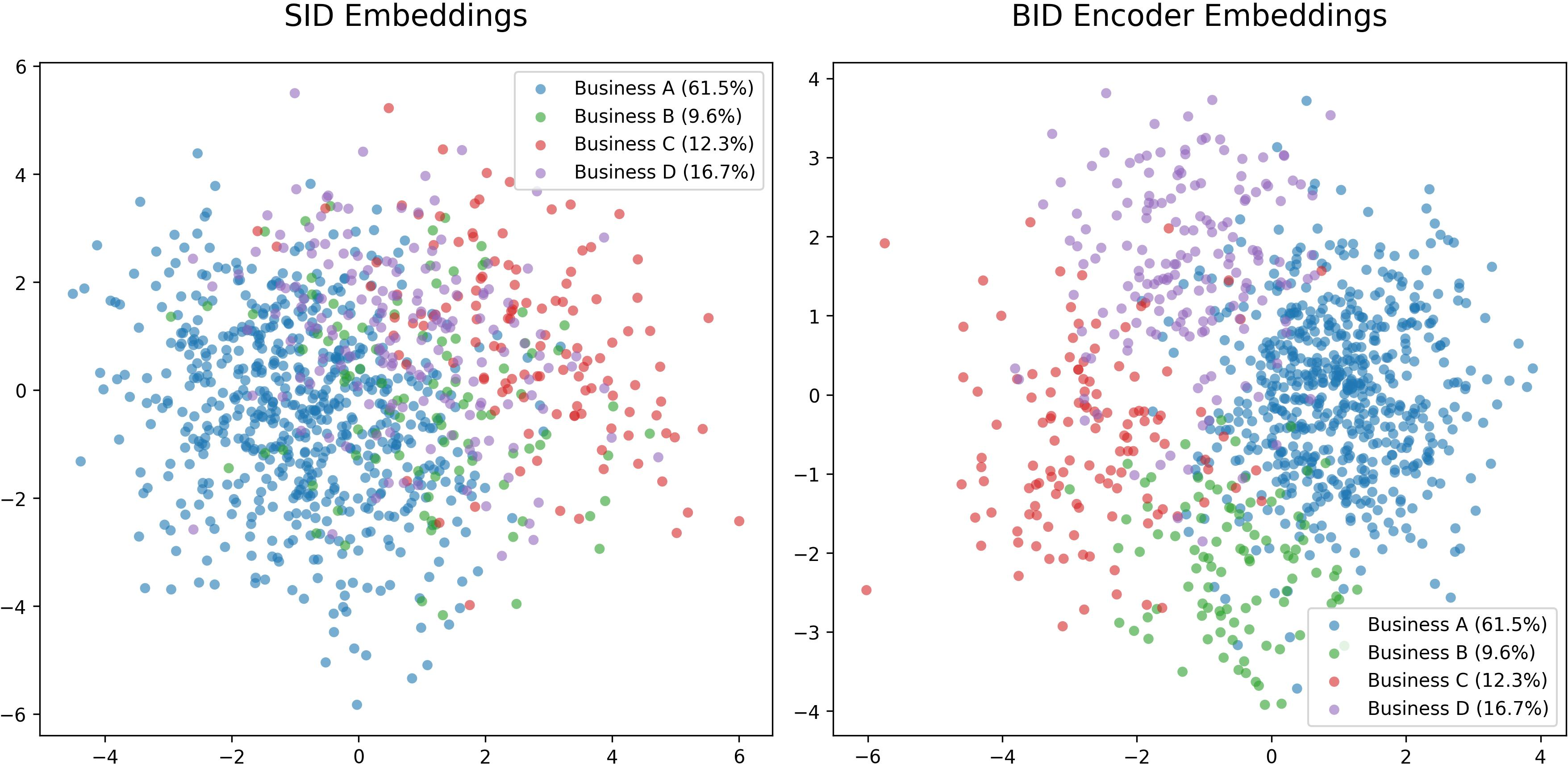

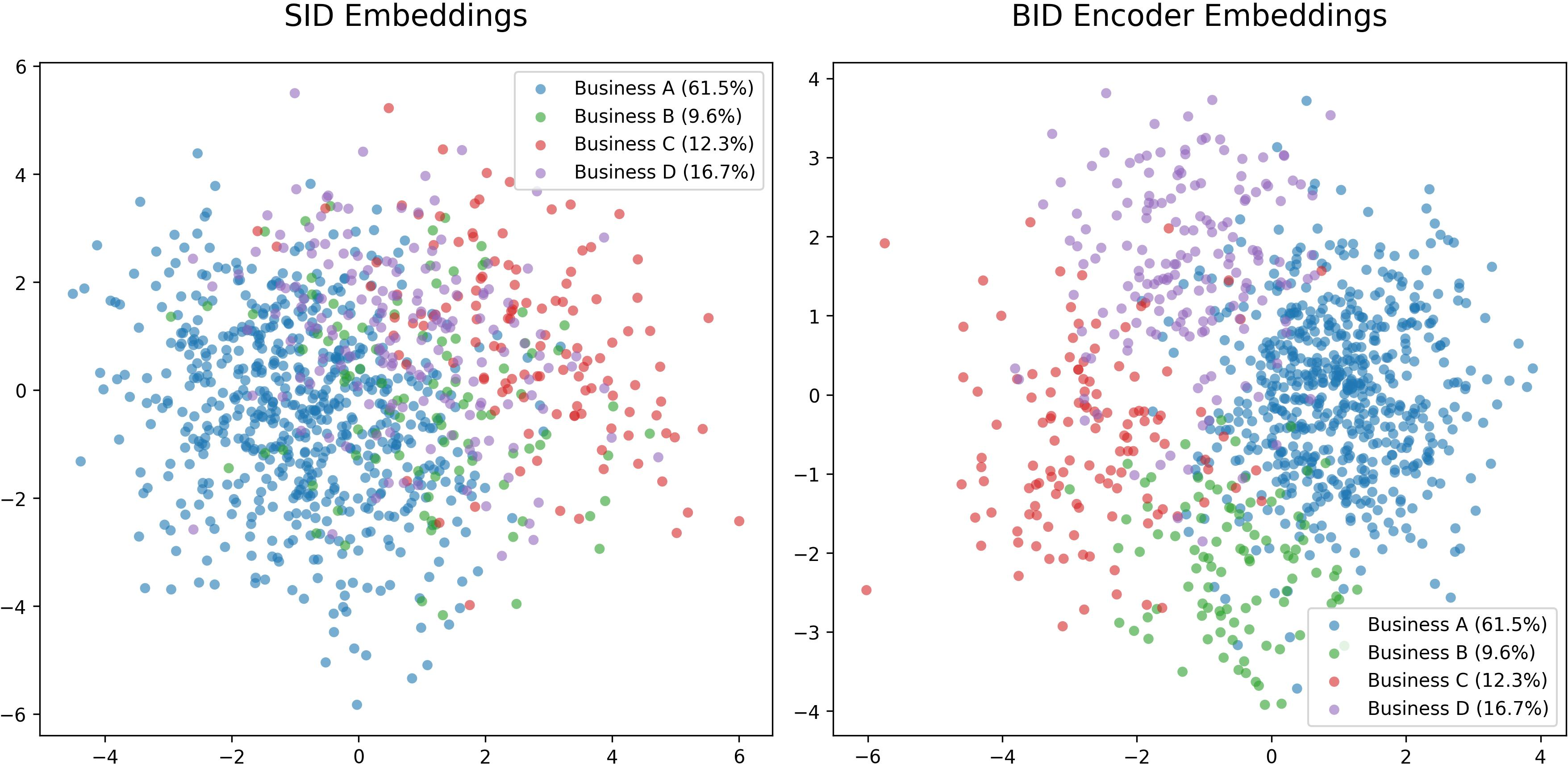

Representation and Embedding Analysis

To assess domain separation, the authors project item embeddings obtained by sum pooling and by the BID encoder with PCA. The BID embeddings cluster distinctly by business type, preserving semantic consistency while improving business discrimination; the improvement is most pronounced for smaller businesses.

Figure 2: Embedding distribution comparison. The BID encoder separates businesses while preserving semantic integrity, reflecting domain boundaries better than sum pooling.
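A sketch of this kind of analysis, assuming that the sum-pooling baseline adds up the code embeddings at each SID level. The source reports the comparison visually, so the `separation_ratio` score below is a hypothetical numeric proxy, not a metric from the paper.

```python
import numpy as np


def sum_pool(codes, codebooks):
    """Baseline item embedding: sum of the code embeddings at each SID level (assumed)."""
    return sum(codebooks[level][codes[:, level]] for level in range(codes.shape[1]))


def pca_2d(X):
    """Project rows of X onto their top two principal components via SVD."""
    Xc = X - X.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ vt[:2].T


def separation_ratio(P, biz):
    """Hypothetical score: mean distance between business centroids / mean within-business spread."""
    labels = np.unique(biz)
    cents = np.stack([P[biz == b].mean(axis=0) for b in labels])
    within = np.mean([np.linalg.norm(P[biz == b] - c, axis=1).mean() for b, c in zip(labels, cents)])
    pair = np.linalg.norm(cents[:, None, :] - cents[None, :, :], axis=-1)
    between = pair[np.triu_indices(len(labels), k=1)].mean()
    return between / within
```

Running `pca_2d` and `separation_ratio` on both the sum-pooled and the BID embeddings of the same items gives a number to go with the scatter plots; a higher ratio means clearer business clusters.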

Model Training and Optimization

MBGR is trained end to end with a composite objective: an InfoNCE loss for multi-business sequential prediction plus an L2 reconstruction loss for semantic preservation. Hyperparameters such as the time-decay coefficient for interaction weighting and the number of experts in MBP are tuned to balance contributions across businesses and to track evolving user preferences. Business weights are set empirically to counteract gradient dominance by larger domains and to align with platform strategy.
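A minimal sketch of such an objective, assuming that business weights and an exponential time decay `exp(-alpha * age)` both scale the per-sample InfoNCE term and that the reconstruction term is added with a coefficient `lam`. The decay form, `lam`, `tau`, and the function names are assumptions; the source names only the two loss types, the time decay, and the business weights.

```python
import numpy as np


def l2norm(v):
    return v / np.linalg.norm(v, axis=-1, keepdims=True)


def info_nce(q, pos, negs, tau=0.1):
    """Per-sample InfoNCE with cosine similarity. q, pos: (B, d); negs: (B, N, d). Returns (B,)."""
    q, pos, negs = l2norm(q), l2norm(pos), l2norm(negs)
    pos_logit = (q * pos).sum(axis=-1, keepdims=True)   # (B, 1)
    neg_logit = np.einsum("bd,bnd->bn", q, negs)        # (B, N)
    logits = np.concatenate([pos_logit, neg_logit], axis=1) / tau
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    return -(logits[:, 0] - np.log(np.exp(logits).sum(axis=1)))


def composite_loss(q, pos, negs, biz, age, biz_weight, x, x_recon,
                   alpha=0.05, lam=1.0, tau=0.1):
    """Business- and time-weighted InfoNCE plus lam * L2 reconstruction (assumed combination)."""
    w = biz_weight[biz] * np.exp(-alpha * age)  # exponential decay form is an assumption
    pred = (w * info_nce(q, pos, negs, tau)).sum() / w.sum()
    recon = np.mean((x - x_recon) ** 2)
    return float(pred + lam * recon)
```

Normalizing by `w.sum()` keeps the prediction term on the same scale however the weights are set, so raising a minority business's weight shifts gradient share toward it without inflating the overall loss.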

Experimental Evaluation

Offline Experiments

MBGR significantly outperforms the baselines (SASRec, HSTU, TIGER) on Hit Rate@10, with the largest gains on the less-represented business domains B and C. Ablation studies show that LDR, MBP, and BID act synergistically, with LDR contributing the largest improvement.
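For reference, Hit Rate@10 counts the fraction of test cases whose held-out item appears among the model's top 10 candidates. The sketch below computes it overall and per business; the function names and the single-target-per-user setup are assumptions for illustration.

```python
import numpy as np


def hit_rate_at_k(scores, targets, k=10):
    """scores: (n_users, n_items); targets: (n_users,) index of the held-out item."""
    topk = np.argpartition(-scores, kth=k - 1, axis=1)[:, :k]  # unordered top-k per row
    return float((topk == targets[:, None]).any(axis=1).mean())


def hit_rate_by_business(scores, targets, biz, k=10):
    """Hit Rate@k computed separately for each business label in biz."""
    return {b: hit_rate_at_k(scores[biz == b], targets[biz == b], k) for b in np.unique(biz)}
```

Reporting the metric per business, rather than only pooled, is what exposes the gains on minority domains, since a pooled average is dominated by the largest business.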

Hyperparameter Impact

A time-decay coefficient of α = 0.05 performed best, giving the best trade-off between recent and older interactions; smaller businesses benefit more from moderate time-decay settings. Empirically tuned business weights improve Hit@10 for minority domains by up to 16.4% compared with uniform weighting.

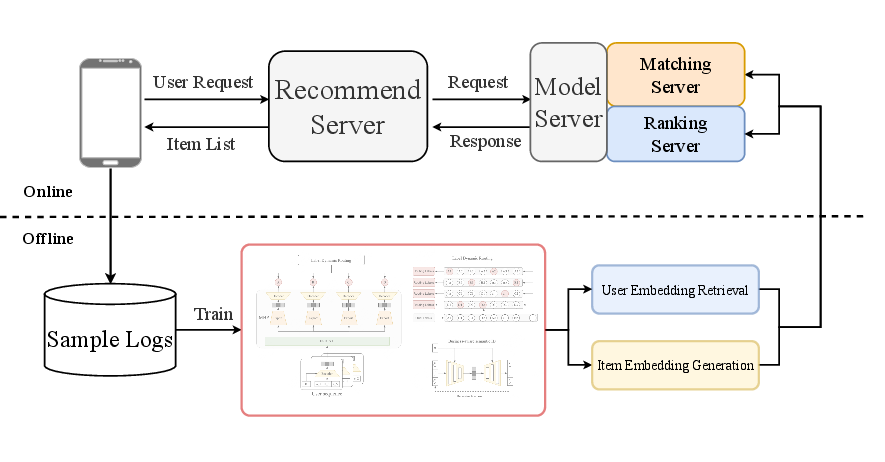

Online Deployment

MBGR is incrementally integrated into Meituan's RTB production system, utilizing generated user and item embeddings both as auxiliary retrieval channels and ranking features.

Figure 3: MBGR's online deployment architecture enhances feature enrichment and retrieval, supporting industrial robustness and high-QPS requirements.
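The source does not describe the serving stack. As a minimal sketch of an auxiliary retrieval channel, assuming brute-force inner-product search (a production system would more likely use an approximate nearest-neighbor index) and a simple de-duplicating merge with a fixed quota, the functions below are hypothetical.

```python
import numpy as np


def embedding_retrieval(user_emb, item_emb, k, exclude=()):
    """Return the k item indices with the highest inner product with user_emb."""
    scores = item_emb @ user_emb
    if exclude:
        scores[list(exclude)] = -np.inf  # e.g. items already returned by other channels
    return np.argsort(-scores, kind="stable")[:k].tolist()


def merge_channels(primary, auxiliary, quota):
    """Append up to `quota` auxiliary candidates that the primary channels did not return."""
    seen = set(primary)
    extra = [i for i in auxiliary if i not in seen][:quota]
    return list(primary) + extra
```

Capping the auxiliary channel with a quota is one way to add MBGR candidates incrementally without displacing existing retrieval channels.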

Online A/B testing with a 30% traffic split yields a weighted +3.98% increase in CTCVR (click-through conversion rate) over the baseline, with the smaller business categories showing the largest absolute gains.
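The source does not say how the per-business lifts are weighted. The sketch below shows one plausible reading: CTCVR as click-and-conversion events per impression, and the overall figure as a weighted mean of per-business relative lifts. The weighting scheme and the example numbers are illustrative assumptions, not data from the paper.

```python
def ctcvr(conversions, impressions):
    """Click-through conversion rate: click-and-conversion events per impression."""
    return conversions / impressions


def weighted_ctcvr_lift(control, treatment, weights):
    """Weighted average of per-business relative CTCVR lifts.

    control, treatment: {business: (conversions, impressions)}
    weights: {business: weight}; the weighting scheme here is an assumption.
    """
    total = sum(weights.values())
    lift = 0.0
    for b, w in weights.items():
        base = ctcvr(*control[b])
        lift += (w / total) * (ctcvr(*treatment[b]) - base) / base
    return lift


# Illustrative numbers only: A lifts 10%, B lifts 4%, A weighted twice as heavily.
control = {"A": (100, 10_000), "B": (50, 5_000)}
treatment = {"A": (110, 10_000), "B": (52, 5_000)}
lift = weighted_ctcvr_lift(control, treatment, {"A": 2.0, "B": 1.0})
```

Under these hypothetical inputs the weighted lift is (2 × 10% + 1 × 4%) / 3 = 8%.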

Implications and Future Directions

MBGR addresses key industrial challenges in scaling GR to multi-business platforms: the BID encoder separates embeddings by domain, MBP enables domain-adaptive generative prediction, and LDR provides dense, business-relevant label supervision. Its gains, particularly for minority domains, motivate broader investigation into domain-aware generative models, adaptive gradient adjustment, and cross-business representation learning. Dynamic business weighting, further MoE specialization, and robust streaming adaptation are natural next steps for large-scale recommendation engines.

Conclusion

MBGR extends the generative recommendation paradigm to multi-business settings by countering gradient coupling, representation confusion, and label sparsity. Its business-aware modular architecture separates business signals and models each domain specifically, as shown by superior offline and online performance and successful production deployment. Its business-specific adaptation and scalable integration make it relevant to future industrial recommendation systems.