- The paper introduces a novel variational Bayesian approach that leverages internal stochastic signals for decision routing during training and inference.

- It demonstrates superior performance over deterministic baselines with measurable gains in cross-entropy, perplexity, and accuracy.

- The work integrates homeostatic latent regulation and structurally aware checkpointing to maintain robust uncertainty management and internal agency.

Agentic Control in Variational LLMs: A Technical Essay

Introduction

The paper "Agentic Control in Variational LLMs" (2604.12513) formally explores minimal agentic control within LLMs using a variational Bayesian framework. Rather than relying on external orchestration or post hoc uncertainty measurements, the method uses internal stochastic evidence directly as both a regulatory and an operational signal across the training and inference pipeline. This approach tightly couples uncertainty management, structural checkpointing, and decision routing, yielding a compact yet robust form of internal agency. This essay presents the technical composition, empirical results, and broader implications of the work.

Framework and Methodology

Model Architecture and Variational Backbone

The pipeline uses GPT-2 embeddings as a fixed front end, so that all comparisons are isolated to post-embedding computation. Two model families are constructed: EVE (an explicit variational model with local latent variables and stochastic computation in each hidden layer block) and DET (a structurally identical deterministic baseline with all stochasticity ablated).

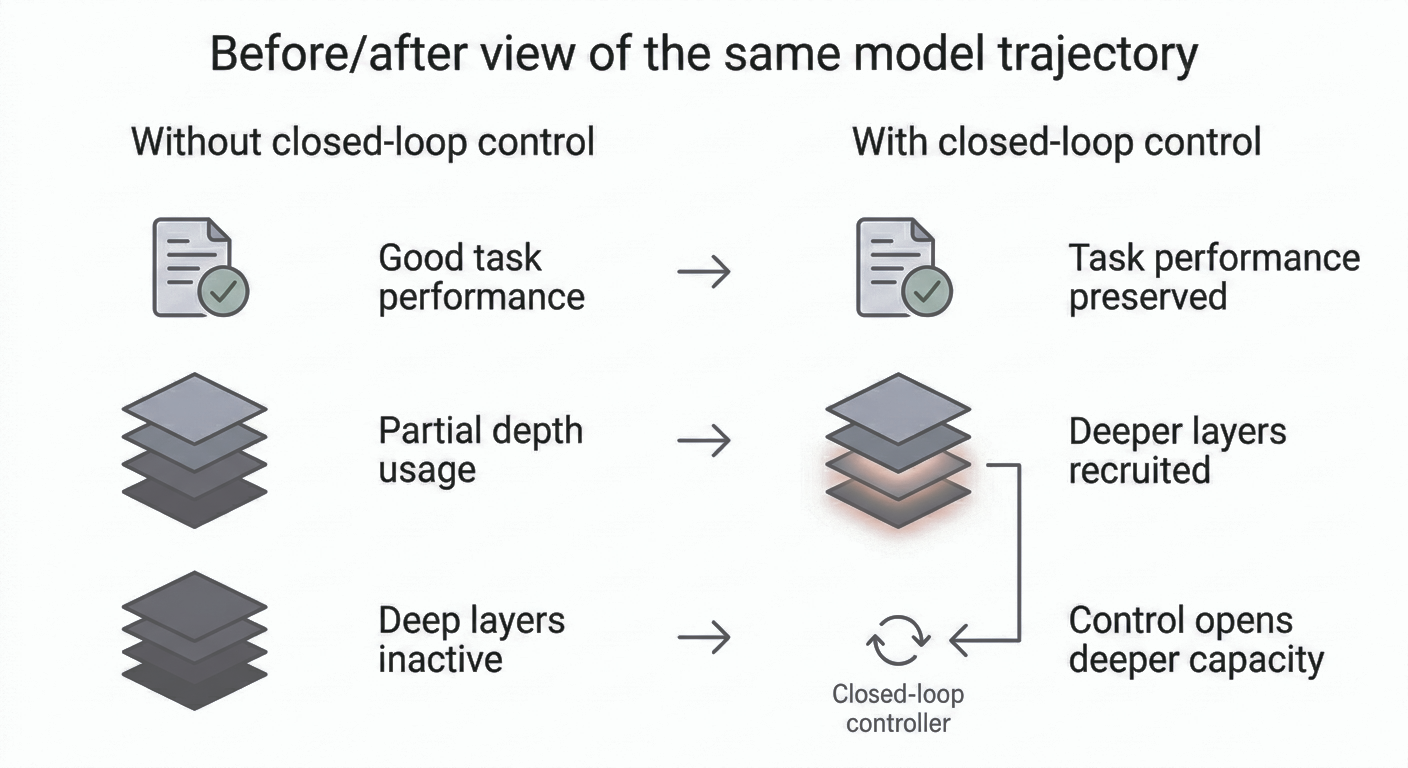

The EVE model’s hidden stacks employ per-unit variational computation, allowing the internal state to support nontrivial uncertainty metrics such as KL divergence, mutual information, and stochastic-pass disagreement. The framework is parameterized for both the number of transformer heads and hidden MLP dimensions, maintaining architectural parity with the deterministic backbone.
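As a rough illustration of what per-unit variational computation exposes, the sketch below computes a per-unit KL term and draws a reparameterized sample. It assumes a diagonal Gaussian posterior and a standard normal prior, which the essay does not specify; the function names `gaussian_kl_per_unit` and `sample_latent` are hypothetical.

```python
import math
import random


def gaussian_kl_per_unit(mu, log_var):
    """KL( N(mu, exp(log_var)) || N(0, 1) ) for each latent unit.

    Assumption: diagonal Gaussian posterior and standard normal prior.
    """
    return [0.5 * (m * m + math.exp(lv) - lv - 1.0) for m, lv in zip(mu, log_var)]


def sample_latent(mu, log_var, rng):
    """Reparameterized draw z = mu + sigma * eps with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


rng = random.Random(0)
mu, log_var = [0.0, 1.0, -0.5], [0.0, 0.0, -1.0]
kl = gaussian_kl_per_unit(mu, log_var)  # one KL value per unit
z = sample_latent(mu, log_var, rng)     # one stochastic pass
```

A unit whose posterior matches the prior contributes zero KL, which is one simple way to recognize a unit that carries no information.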

Homeostatic Regulation

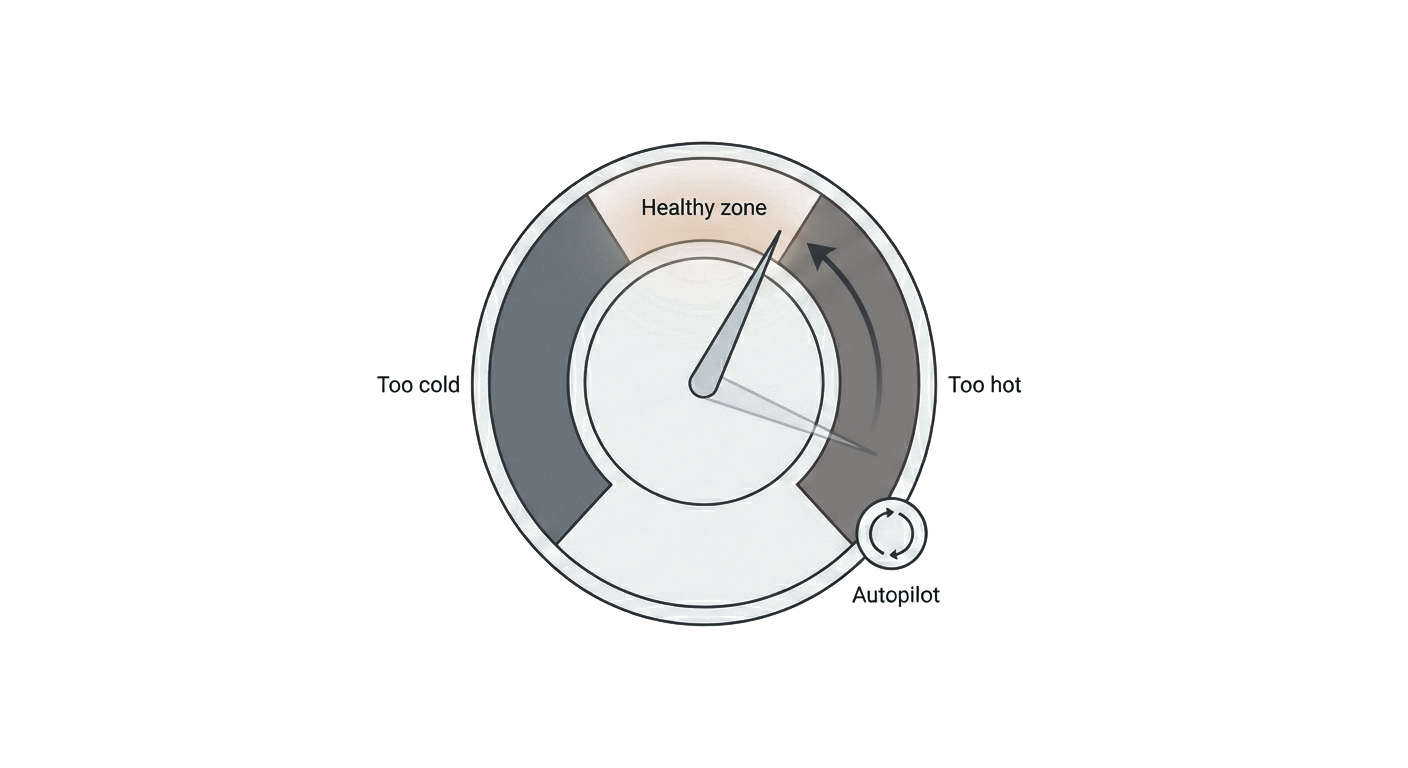

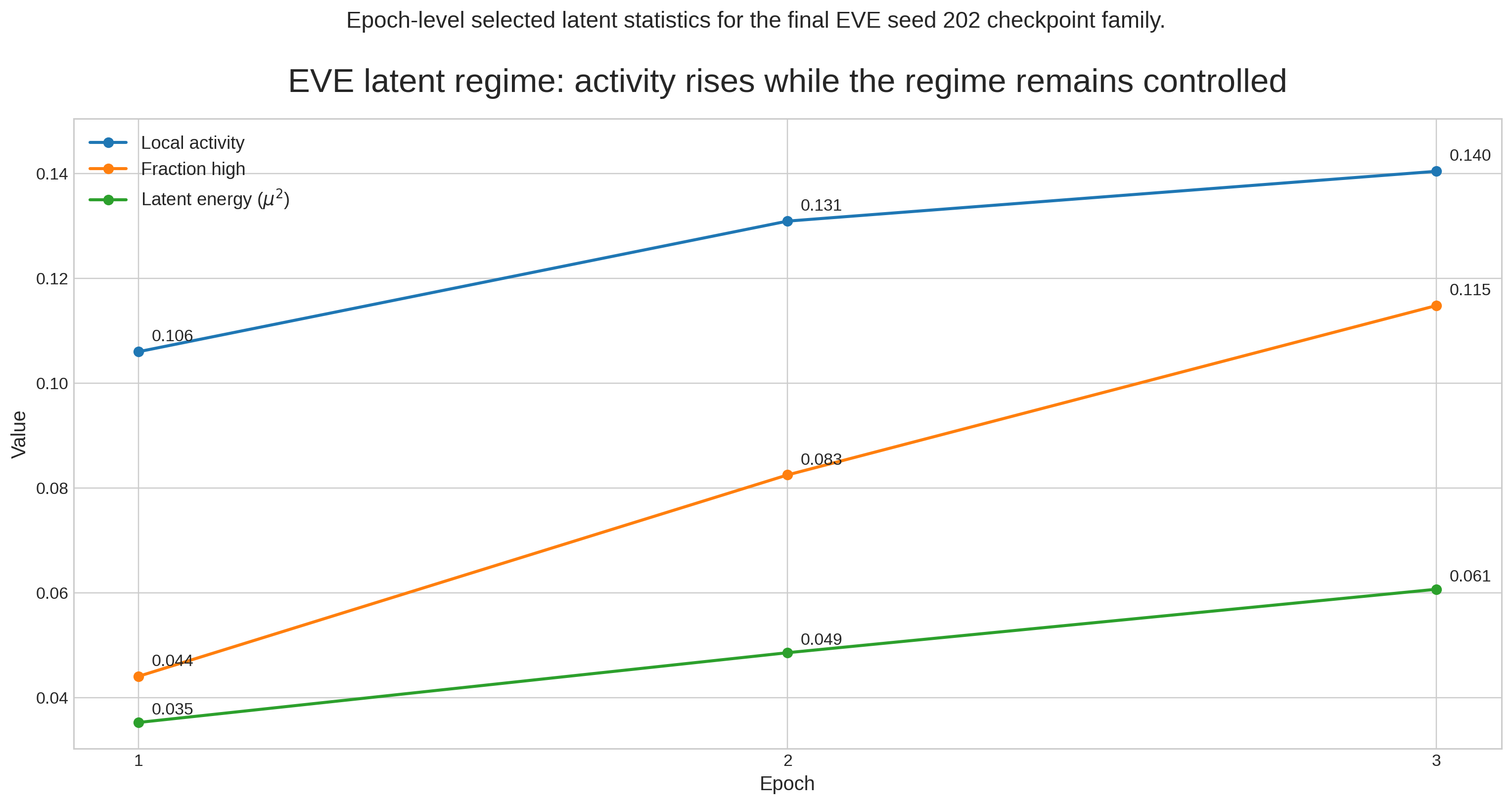

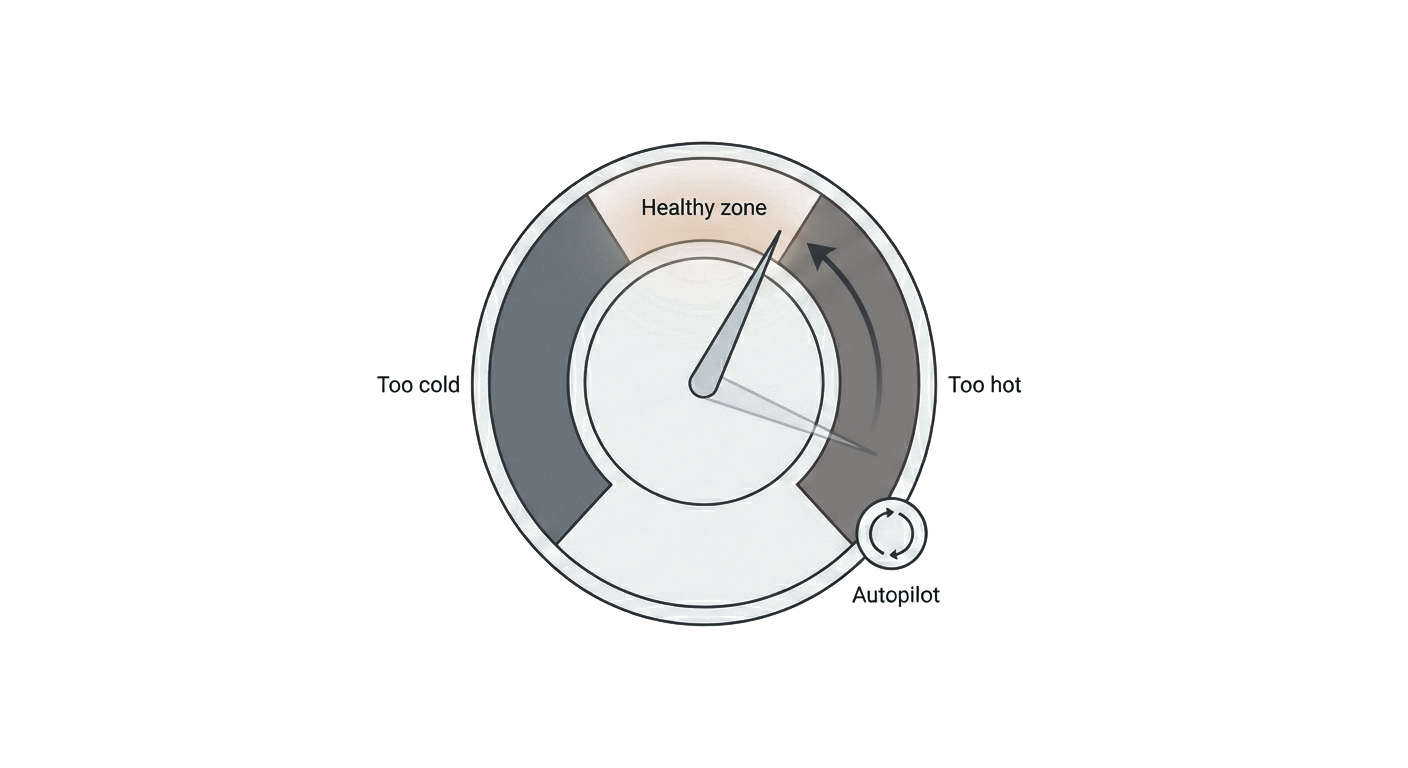

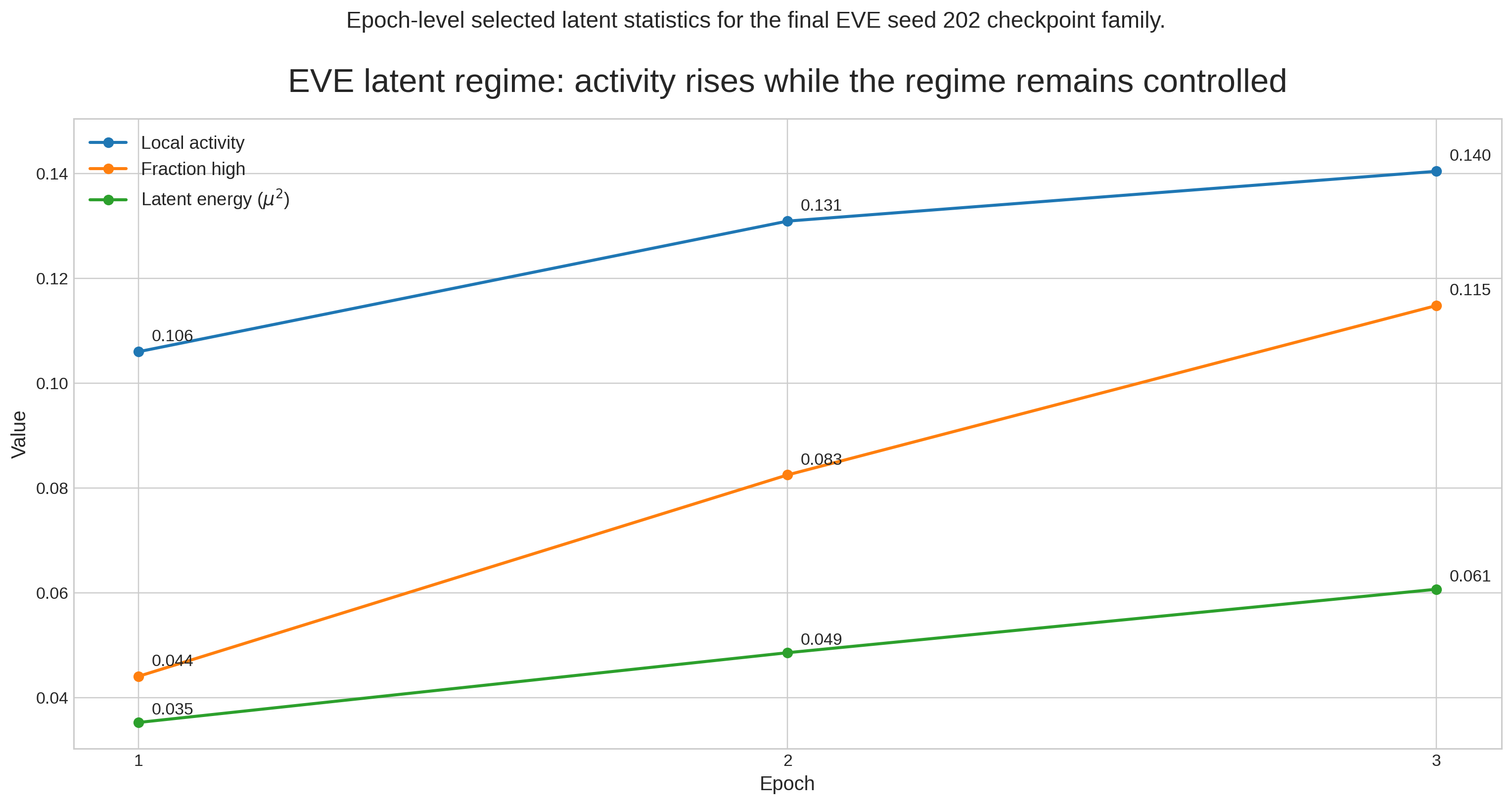

A central contribution is homeostatic latent regulation. A mechanism akin to an automated latent-band controller keeps the stochastic regime informative and viable during training: the latent regime is penalized when it deviates from predefined bounds, which keeps both "dead" (inactive) and overactive units in check. The controller operates on internal signals, such as latent energy ($\mu^2$) and unit-band occupancy statistics, so that stochasticity remains usable for downstream control.
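One way to picture a latent-band controller is as a penalty that is zero inside a target band and grows outside it. The sketch below is a minimal version under stated assumptions: the squared-hinge form, the band limits `low` and `high`, and the function names are illustrative choices, not the paper's regularizer.

```python
def band_penalty(unit_energy, low, high):
    """Mean squared-hinge penalty on per-unit latent energy outside [low, high].

    Assumption: unit_energy holds one mean mu^2 value per latent unit.
    """
    total = 0.0
    for e in unit_energy:
        if e < low:        # "dead" unit: too little latent energy
            total += (low - e) ** 2
        elif e > high:     # overactive unit: too much latent energy
            total += (e - high) ** 2
    return total / len(unit_energy)


def band_occupancy(unit_energy, low, high):
    """Fraction of units below, inside, and above the target band."""
    n = len(unit_energy)
    dead = sum(e < low for e in unit_energy) / n
    over = sum(e > high for e in unit_energy) / n
    return {"dead": dead, "in_band": 1.0 - dead - over, "overactive": over}


energies = [0.0, 0.5, 2.0, 0.6]  # toy per-unit energies
penalty = band_penalty(energies, low=0.1, high=1.0)
occupancy = band_occupancy(energies, low=0.1, high=1.0)
```

In a training loop, a term like `penalty` would be added to the loss with some weight, while `occupancy` serves as the kind of unit-band statistic the controller can monitor.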

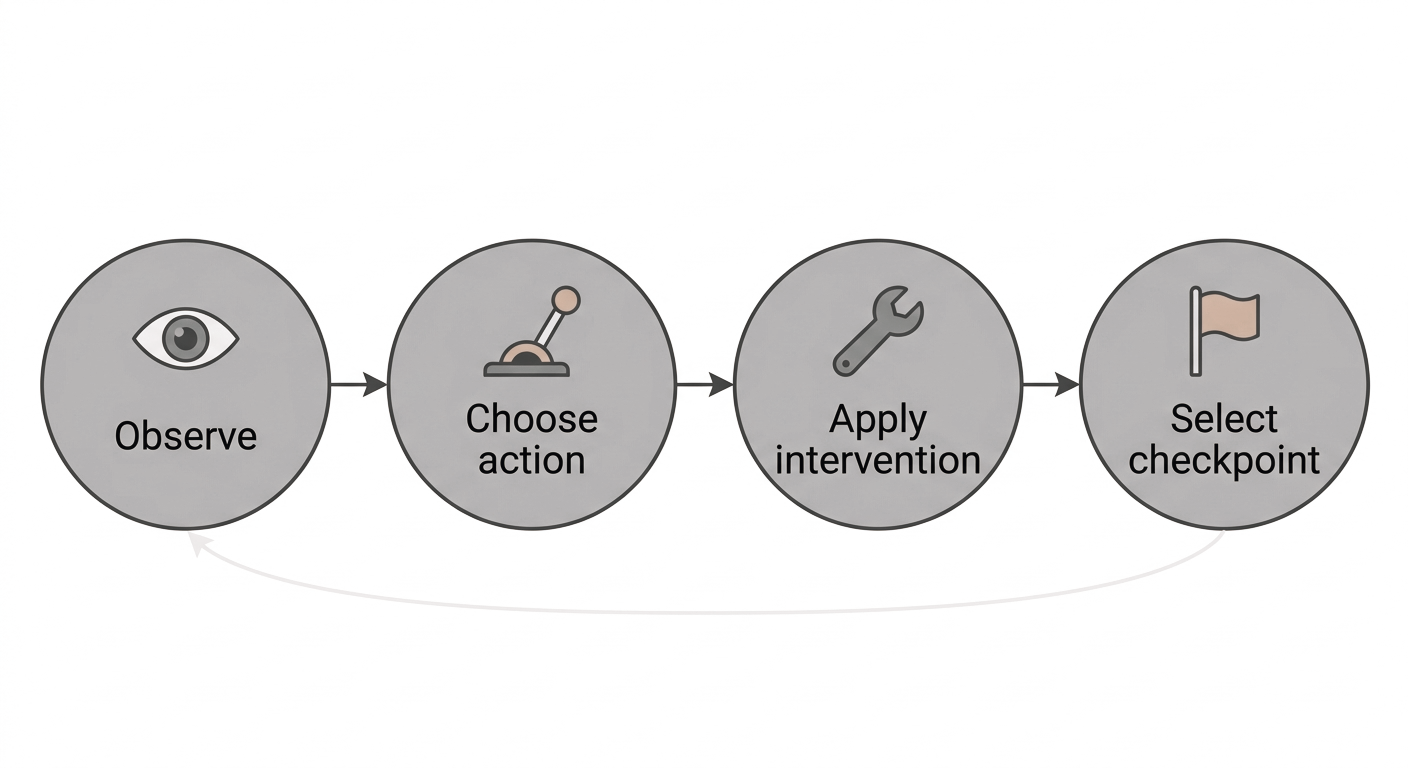

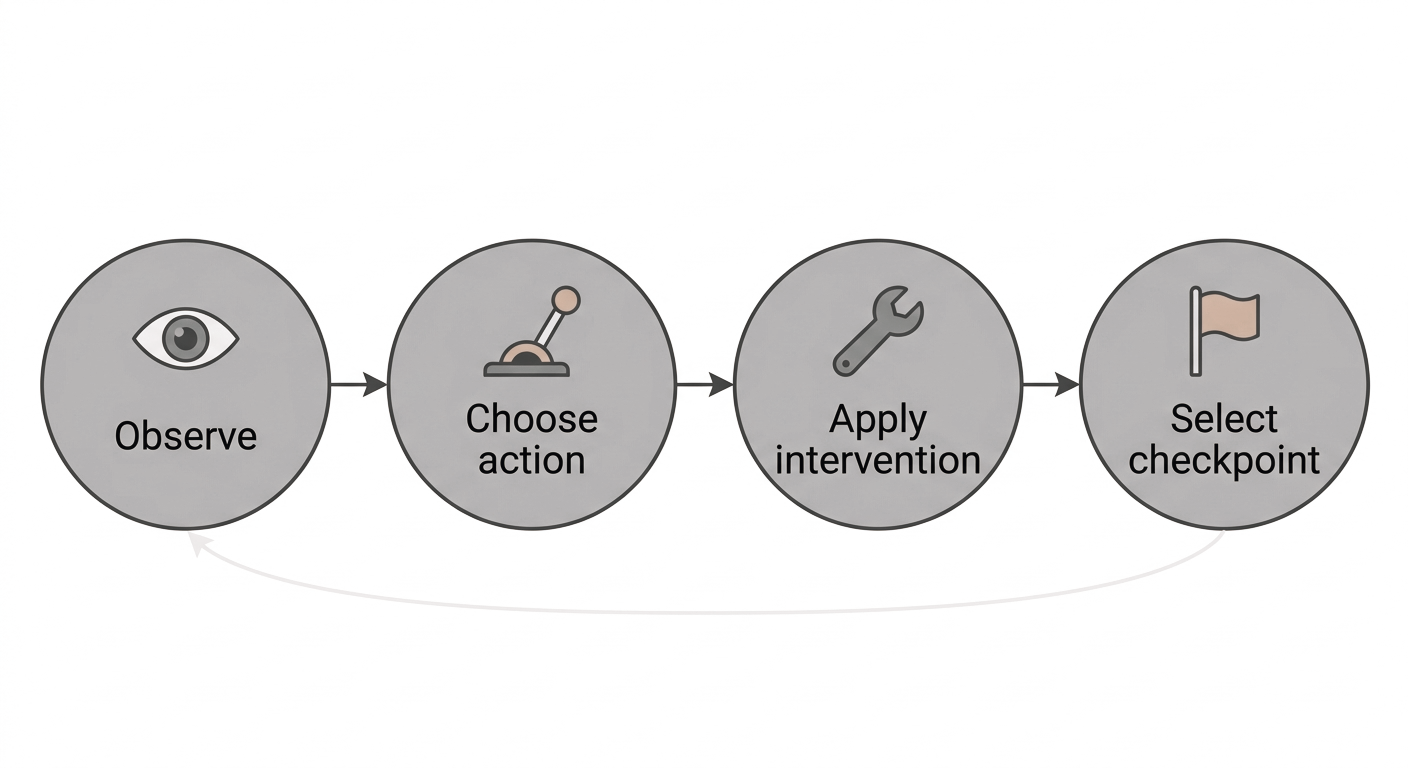

Figure 1: A minimal agentic loop, showing how internal evidence is observed, mapped to action, and subject to structural checkpoint retention.

Structurally Aware Checkpoint Retention

The checkpoint retention mechanism enforces a joint criterion over both validation-set cross-entropy and latent-state diagnostics (e.g., fraction of high-activity units, proximity to the target $\mu^2$). The explicit structural selection rule filters out checkpoints that are not task-safe and prioritizes those with healthy latent activity, supporting reproducibility and internal regime consistency.
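A joint retention rule of this kind can be sketched as a two-stage filter: first keep checkpoints whose validation cross-entropy is close to the best one, then prefer those with healthy latent diagnostics. The tolerance, the activity floor, the target value, and the `Checkpoint` fields below are all assumptions made for illustration; the essay does not give the exact rule.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    epoch: int
    val_ce: float               # validation cross-entropy
    high_activity_frac: float   # fraction of high-activity latent units
    mu2: float                  # mean latent energy


def select_checkpoint(ckpts, ce_tolerance=0.02, min_active=0.2, target_mu2=0.5):
    """Pick a task-safe checkpoint with the healthiest latent state."""
    best_ce = min(c.val_ce for c in ckpts)
    # Stage 1: task safety, stay within a tolerance of the best cross-entropy.
    safe = [c for c in ckpts if c.val_ce <= best_ce + ce_tolerance]
    # Stage 2: structural health, require enough active units when possible.
    healthy = [c for c in safe if c.high_activity_frac >= min_active]
    pool = healthy or safe
    # Tie-break: closest to the target latent energy, then lowest cross-entropy.
    return min(pool, key=lambda c: (abs(c.mu2 - target_mu2), c.val_ce))


# Toy values, not results from the paper.
candidates = [
    Checkpoint(epoch=1, val_ce=3.50, high_activity_frac=0.40, mu2=0.50),
    Checkpoint(epoch=2, val_ce=3.20, high_activity_frac=0.05, mu2=0.10),
    Checkpoint(epoch=3, val_ce=3.21, high_activity_frac=0.35, mu2=0.45),
]
chosen = select_checkpoint(candidates)
```

With these toy values the rule passes over the checkpoint with the lowest cross-entropy because its latent units have largely collapsed, which is the behavior a structurally aware criterion is meant to produce.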

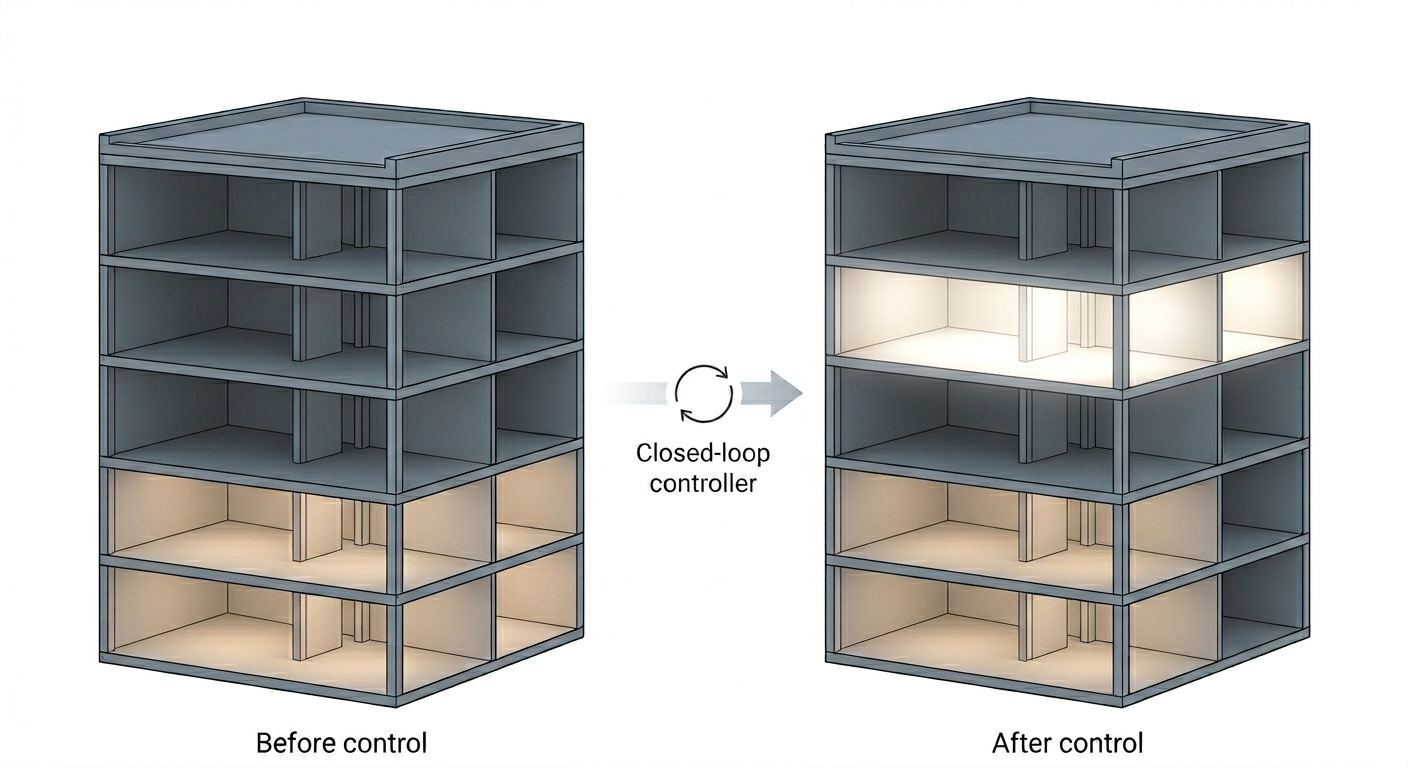

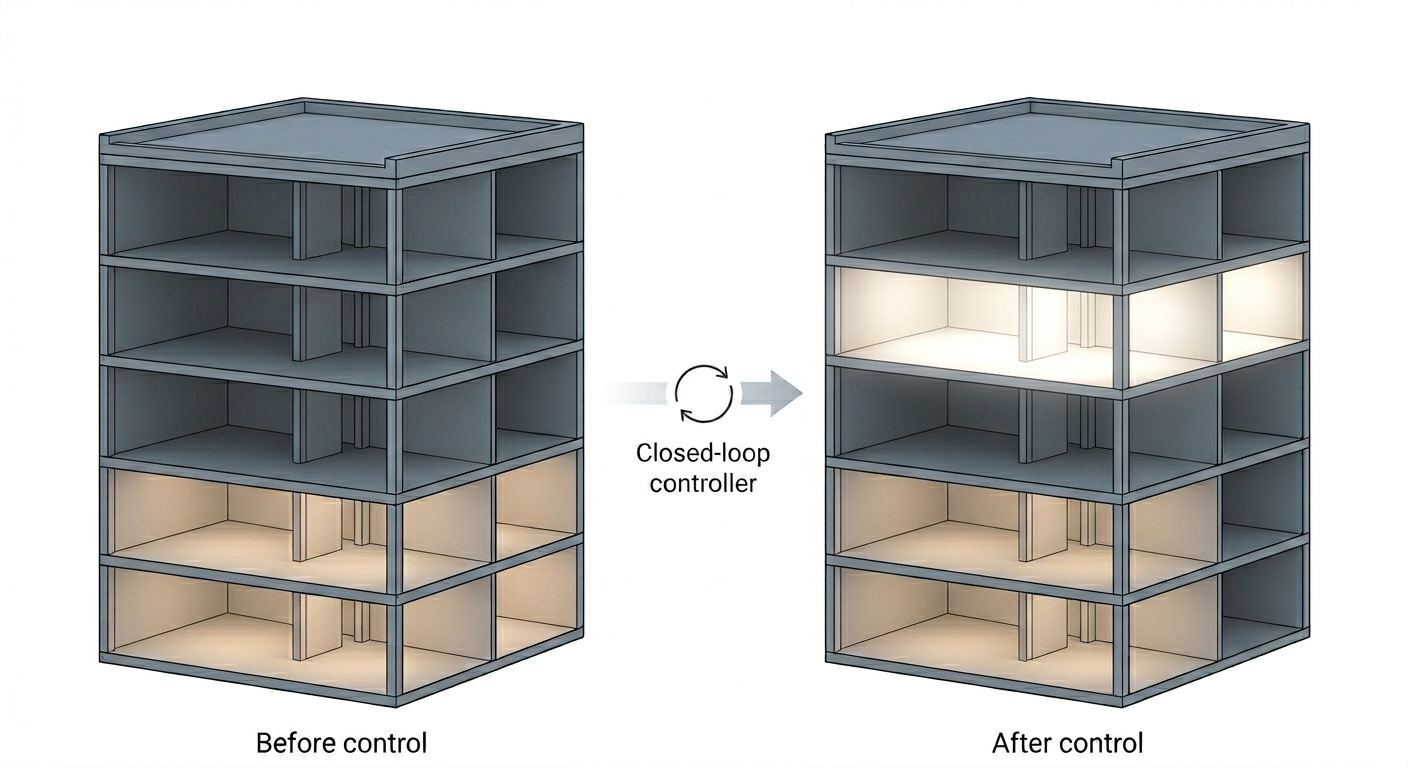

Figure 2: A building metaphor for underused and recruited depth, illustrating that intervention can activate unused model capacity.

Uncertainty-Aware Control and Multi-Action Routing

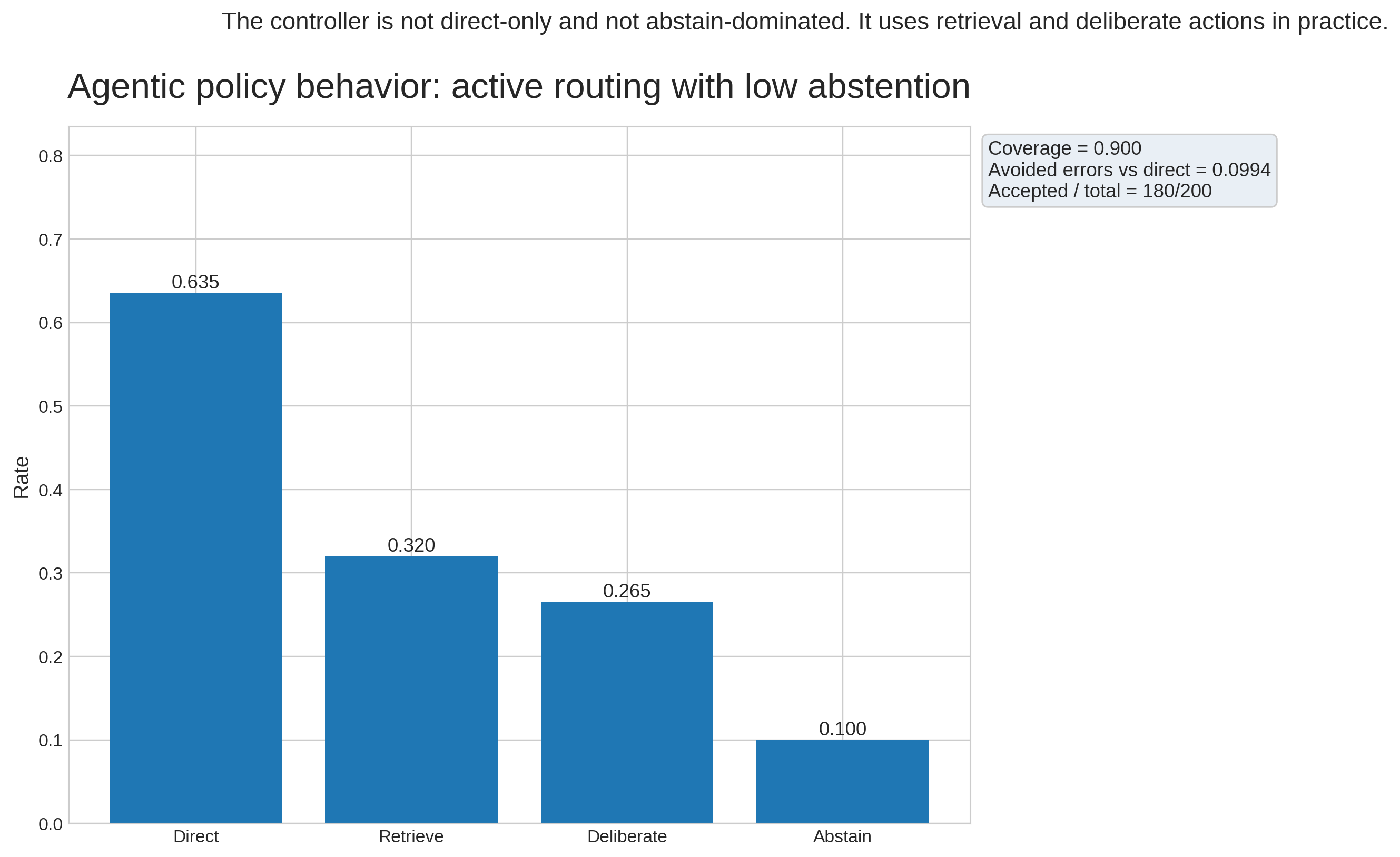

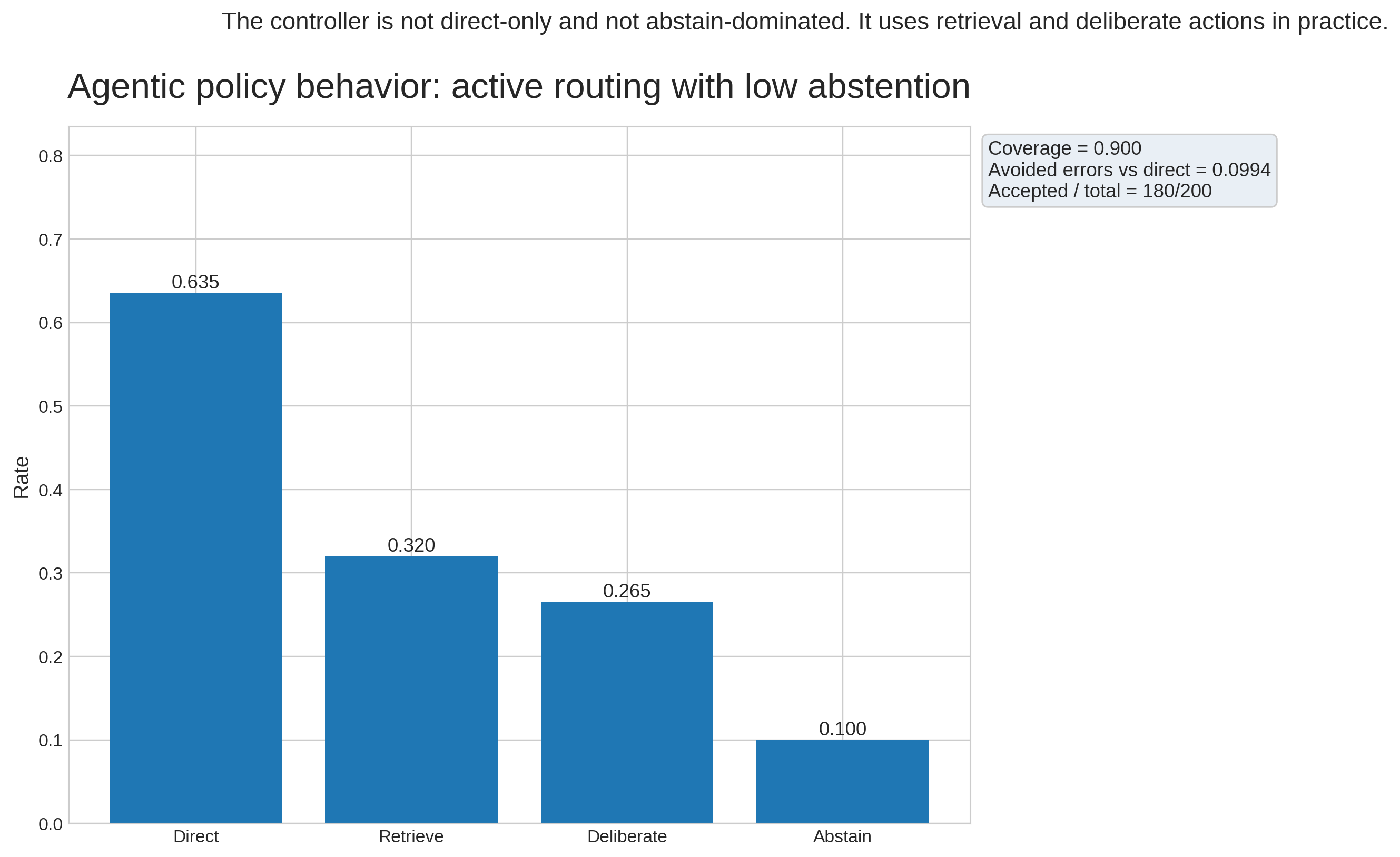

Inference-time control is operationalized by synthesizing predictive entropy, mutual information, and top-1 flip rate into a unified uncertainty score. This score is thresholded—via quantile calibration on validation rows—into discrete agentic actions: direct answer, further deliberation, retrieval/resampling, or abstention/escalation. Action selection is therefore a direct function of observed internal evidence rather than a heuristic overlay.
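The routing step described above can be sketched as a weighted score followed by quantile thresholds. The equal weights, the specific quantiles, and the ordering of actions by increasing uncertainty are assumptions for illustration; only the three input signals and the four action types come from the essay.

```python
import bisect

# Actions ordered from lowest to highest uncertainty (assumed ordering).
ACTIONS = ("answer", "deliberate", "retrieve", "abstain")


def uncertainty_score(entropy, mutual_info, flip_rate, weights=(1.0, 1.0, 1.0)):
    """Combine the three internal signals into one scalar (assumed linear form)."""
    w_h, w_mi, w_f = weights
    return w_h * entropy + w_mi * mutual_info + w_f * flip_rate


def calibrate_thresholds(val_scores, quantiles=(0.6, 0.8, 0.9)):
    """Read thresholds off the empirical quantiles of validation scores."""
    ordered = sorted(val_scores)
    last = len(ordered) - 1
    return [ordered[min(last, int(q * len(ordered)))] for q in quantiles]


def route(score, thresholds):
    """Map a score to an action by counting how many thresholds it reaches."""
    return ACTIONS[bisect.bisect_right(thresholds, score)]


val_scores = [float(i) for i in range(100)]  # toy validation scores
thresholds = calibrate_thresholds(val_scores)
action = route(uncertainty_score(0.2, 0.05, 0.0), thresholds)
```

Because the thresholds are quantiles of validation scores, the share of inputs sent to each action is set by the chosen quantiles rather than by hand-tuned absolute cutoffs.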

The action space is evaluated with explicit cost penalties, allowing for quantification of utility along a quality–cost Pareto curve.
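To make the quality and cost trade-off concrete, the sketch below scores each policy as reward minus action cost and then keeps only the policies that no other policy dominates. The per-action costs and the policy numbers are made-up toy values, and the function names are hypothetical.

```python
# Assumed per-action costs; the essay does not list the actual penalties.
ACTION_COST = {"answer": 0.0, "deliberate": 0.1, "retrieve": 0.3, "abstain": 0.5}


def utility(correct, action, costs=ACTION_COST):
    """Reward of 1 for a correct outcome, minus the cost of the action taken."""
    return (1.0 if correct else 0.0) - costs[action]


def pareto_front(points):
    """points: list of (name, quality, cost). Return names of non-dominated points."""
    front = []
    for name, q, c in points:
        dominated = any(
            q2 >= q and c2 <= c and (q2 > q or c2 < c)
            for _, q2, c2 in points
        )
        if not dominated:
            front.append(name)
    return front


# Toy policies: (name, mean quality, mean cost).
policies = [
    ("direct_only", 0.70, 0.0),
    ("routed", 0.78, 0.1),
    ("always_retrieve", 0.75, 0.5),
]
front = pareto_front(policies)
```

In this toy setup `always_retrieve` drops off the front because `routed` is both better and cheaper, which is the kind of comparison a quality and cost Pareto curve supports.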

Figure 3: A thermostat metaphor for homeostatic latent regulation, representing the maintenance of controlled stochastic activity.

Empirical Results

Predictive Metrics

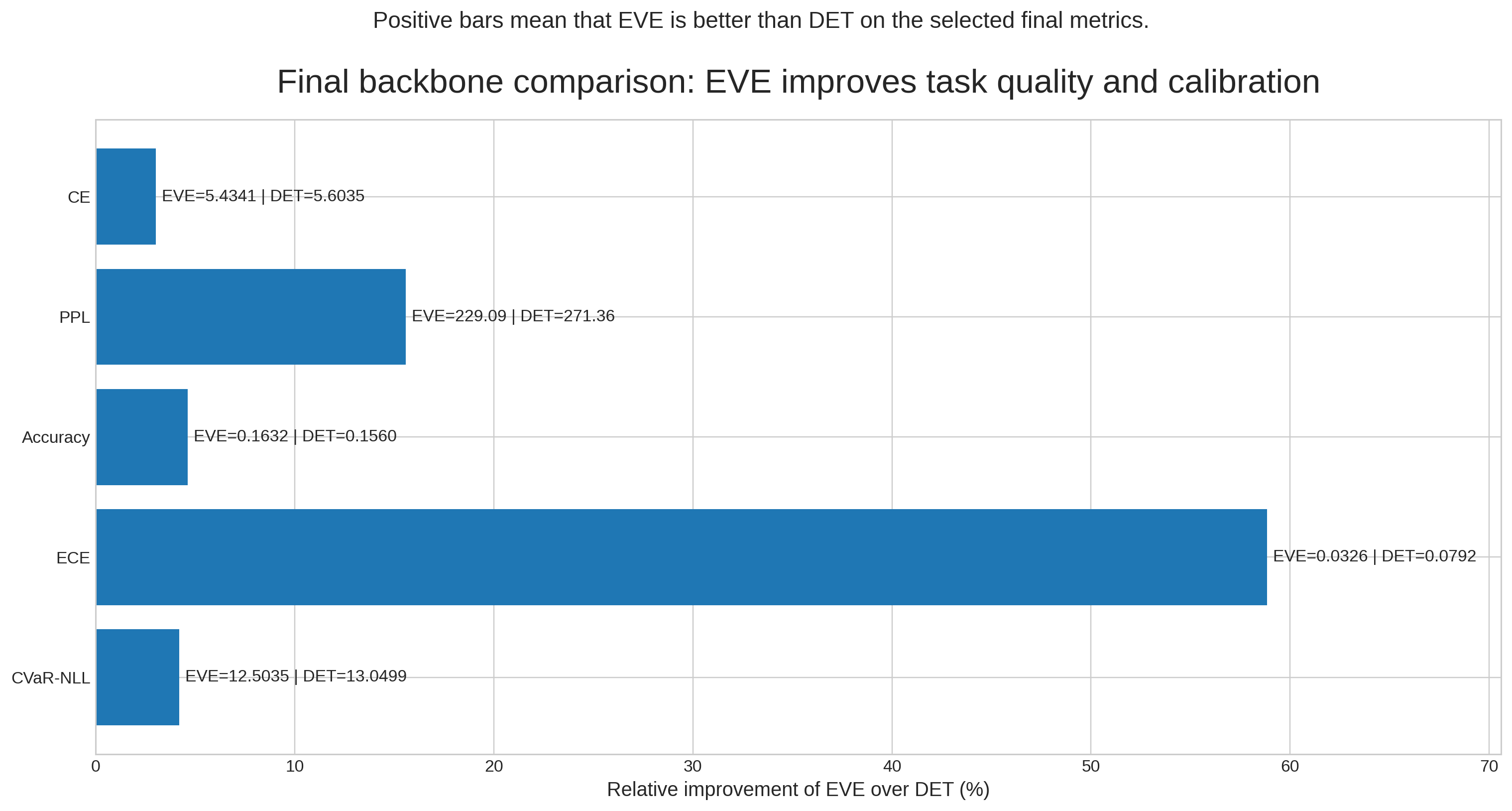

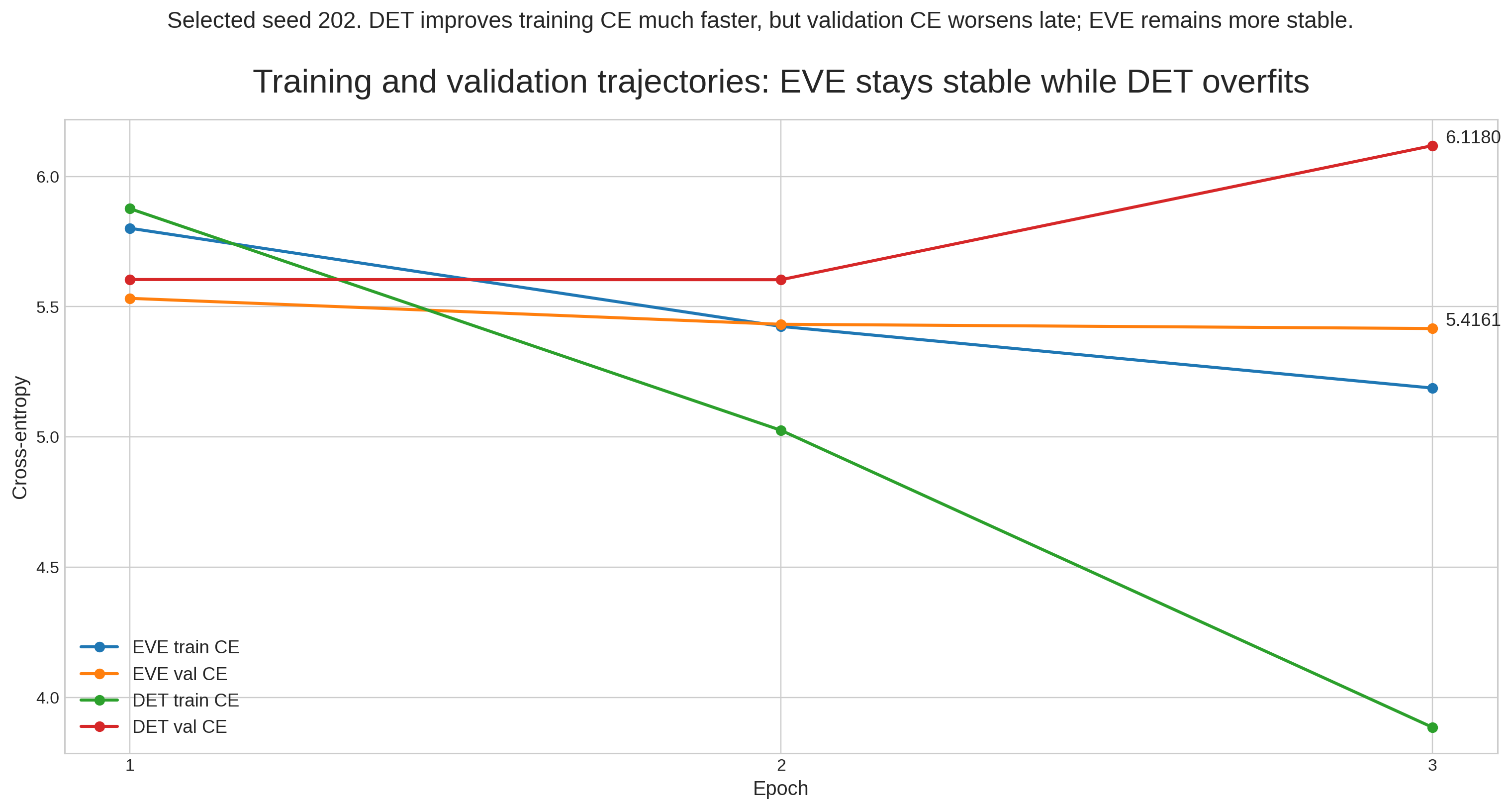

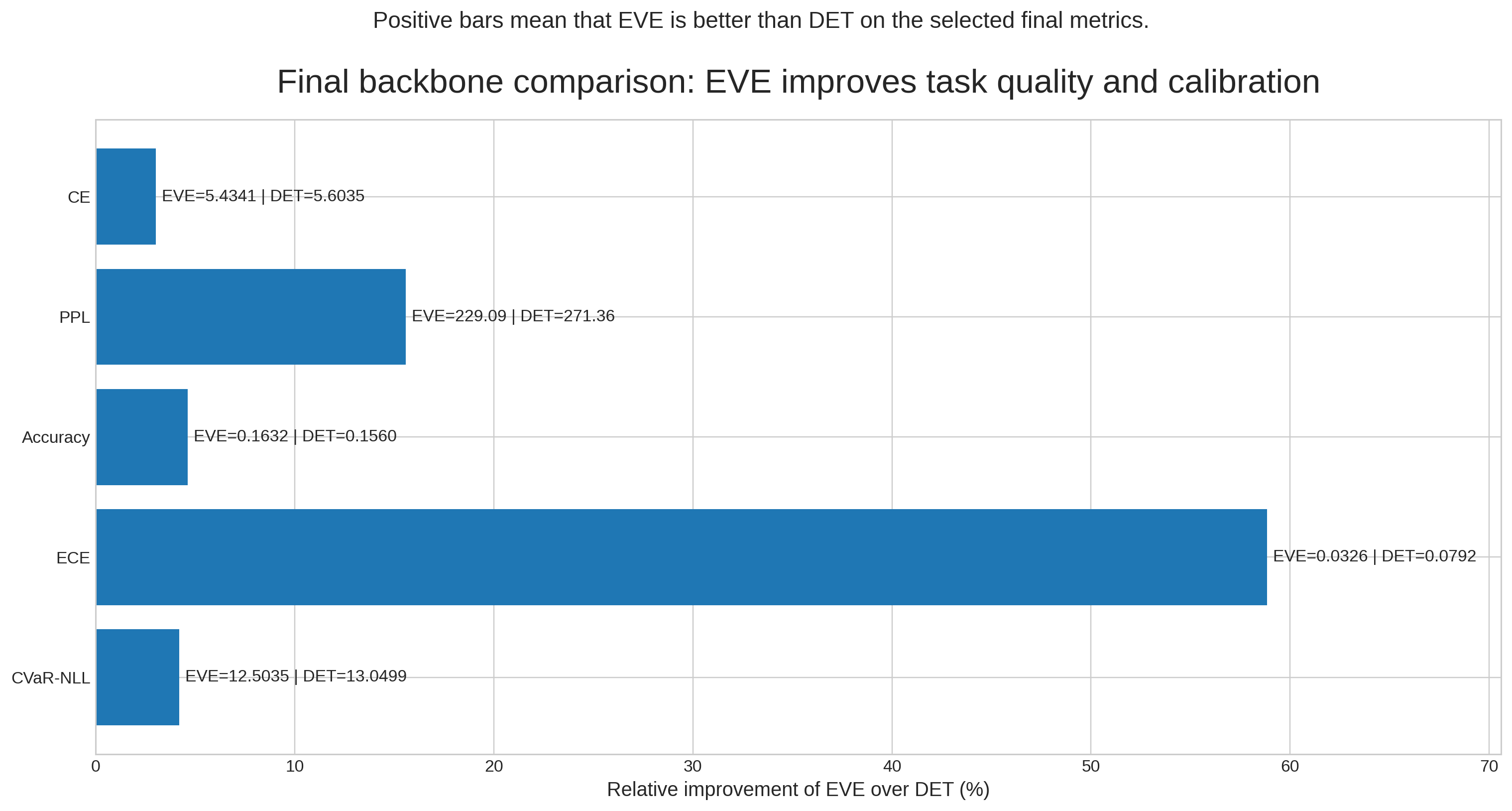

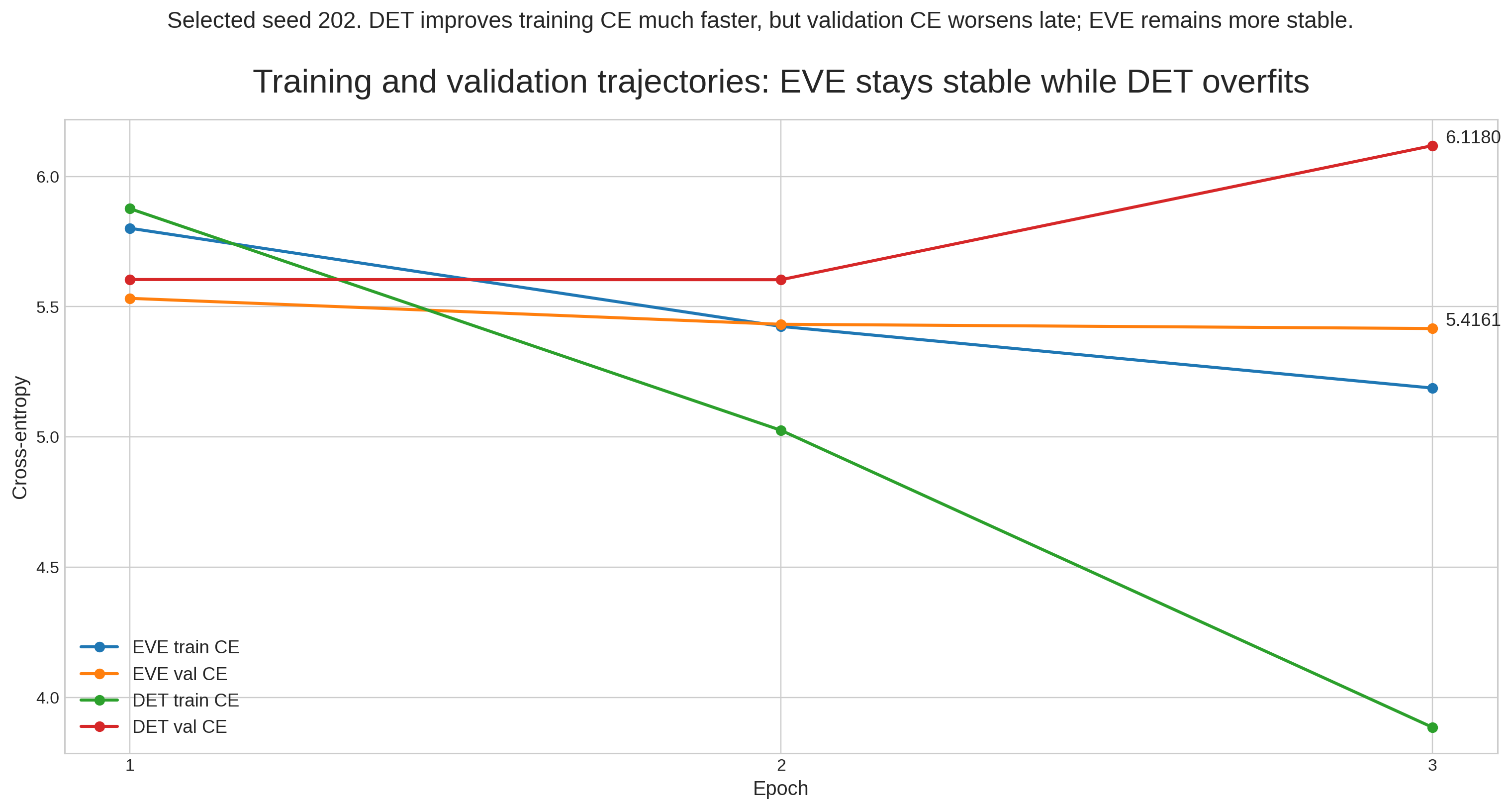

On language modeling, EVE consistently outperforms DET in cross-entropy, perplexity, and accuracy. For example, on the primary validation set, EVE achieves a cross-entropy improvement of $0.17$ and a perplexity reduction of over $42$ compared to DET, accompanied by an absolute accuracy gain.

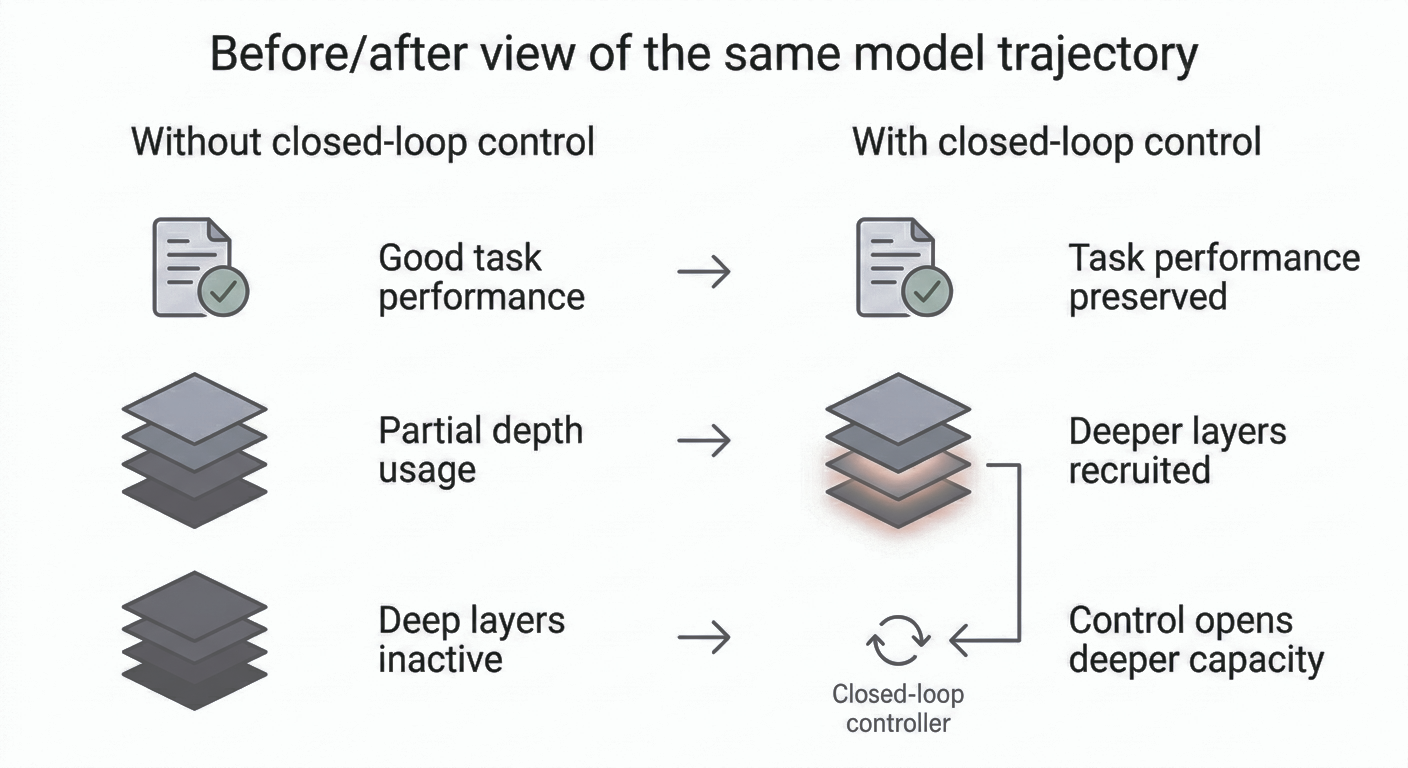

Figure 4: Before and after system behavior, demonstrating transition from prediction-only to prediction-plus-calibrated-control under the EVE framework.

Figure 5: Observed backbone differences between EVE and DET on validation metrics; positive values indicate EVE’s superiority.

Uncertainty and Calibration

EVE produces nonzero mutual information, higher predictive entropy, and substantive epistemic signal (e.g., increased MC-sample flip rates) unavailable in the deterministic variant. Expected Calibration Error (ECE) is markedly reduced, and the system exposes richer uncertainty structure supporting actionable control.
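These signals can be computed from several stochastic forward passes over the same input. The sketch below uses the standard decomposition in which mutual information is the entropy of the mean prediction minus the mean per-pass entropy; the flip-rate definition (share of passes whose top-1 class differs from the top-1 class of the mean prediction) is an assumption, since the essay does not define it.

```python
import math


def entropy(p):
    """Shannon entropy in nats, skipping zero-probability classes."""
    return -sum(x * math.log(x) for x in p if x > 0.0)


def mc_uncertainty(passes):
    """passes: list of S probability vectors for one prediction.

    Returns (predictive_entropy, mutual_info, flip_rate).
    """
    s, k = len(passes), len(passes[0])
    mean = [sum(p[i] for p in passes) / s for i in range(k)]
    predictive_entropy = entropy(mean)
    expected_entropy = sum(entropy(p) for p in passes) / s
    mutual_info = predictive_entropy - expected_entropy
    mean_top1 = max(range(k), key=mean.__getitem__)
    top1 = [max(range(k), key=p.__getitem__) for p in passes]
    flip_rate = sum(t != mean_top1 for t in top1) / s
    return predictive_entropy, mutual_info, flip_rate


# A deterministic model repeats the same output on every pass.
det_like = mc_uncertainty([[0.9, 0.1]] * 4)
# Stochastic passes that disagree yield positive mutual information.
eve_like = mc_uncertainty([[1.0, 0.0], [0.0, 1.0]])
```

Identical passes give zero mutual information and zero flip rate, which is why these epistemic signals are unavailable in a deterministic variant such as DET.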

Latent State Health

Epoch-level diagnostics demonstrate stable evolution of latent energy, reconstruction activity, and the fraction of high-activity units, evidencing ongoing, meaningful stochastic engagement.

Figure 6: Training and validation cross-entropy trajectories for EVE, indicating reliable performance improvements across epochs.

Figure 7: Epoch-level latent-state diagnostics for EVE, charting the activation of latent units and aggregate structural health throughout training.

Agentic Evaluation

In the full multi-action evaluation, the uncertainty-aware controller attains 90% coverage while abstaining selectively, activates the retrieval and deliberation actions on nontrivial subsets, and achieves positive utility under cost penalization. Notably, the controller eliminates nearly 10% of the errors that would have occurred under a direct-only policy, confirming the operational benefit of uncertainty-driven routing.
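The quantities in this evaluation can be read off a per-row log of controller decisions. The sketch below assumes a hypothetical row format with `action`, `direct_correct`, and `final_correct` fields, and counts a direct-only error as avoided when the controller either abstains or ends with a correct answer; the paper's exact accounting may differ.

```python
def agentic_summary(rows):
    """rows: list of dicts with keys action, direct_correct, final_correct."""
    n = len(rows)
    answered = [r for r in rows if r["action"] != "abstain"]
    # Errors a direct-only policy would have made on the same rows.
    direct_errors = [r for r in rows if not r["direct_correct"]]
    avoided = [
        r for r in direct_errors
        if r["action"] == "abstain" or r["final_correct"]
    ]
    return {
        "coverage": len(answered) / n,
        "abstention_rate": (n - len(answered)) / n,
        "error_avoidance": len(avoided) / len(direct_errors) if direct_errors else 0.0,
    }


# Toy log of ten rows, not data from the paper.
rows = (
    [{"action": "answer", "direct_correct": True, "final_correct": True}] * 7
    + [{"action": "answer", "direct_correct": False, "final_correct": False}] * 2
    + [{"action": "abstain", "direct_correct": False, "final_correct": False}]
)
summary = agentic_summary(rows)
```

Reporting error avoidance relative to the direct-only errors, rather than to all rows, keeps the metric focused on the cases where routing could have changed the outcome.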

Figure 8: Observed behavior of the calibrated controller in the full multi-action evaluation, reporting error avoidance and action coverage.

Theoretical and Practical Implications

The paper demonstrates that minimal agentic control need not be scaffolded with external components, tool APIs, or post hoc uncertainty heuristics. Instead, meaningful agency can be instantiated solely from internal variational signals, provided these signals are maintained through disciplined latent regulation and structurally aware checkpointing. This has significant implications for the design of self-regulating or introspection-capable LLMs: their calibration, abstention, and deliberation actions can be justified by empirical internal evidence rather than brittle heuristics.

The strict separation between regulation (latent stabilization during training), retention (filtering structural/probabilistic regimes post-training), and control (calibrated inference-time routing) gives the pipeline interpretability, modularity, and robustness. The approach extends naturally as a building block for externally agentic systems (e.g., retrieval-augmented generation, Toolformer-style architectures), without relying on external triggers for basic self-control.

From a research perspective, the findings invite evaluations at larger scales and in diverse task contexts, as well as the exploration of more elaborate policy designs and uncertainty-driven hybridization with non-parametric or environment-facing modules.

Conclusion

This work establishes that variational LLMs, when equipped with controlled latent stochasticity and explicit checkpoint/uncertainty management, can support a measurable and highly practical form of internal agentic control. Internal uncertainty, often considered a latent diagnostic, is here elevated to a primary operational signal, regulating model state, checkpointing, and inference-time decision policy.

The explicit demonstration—across metrics, uncertainty signals, and action utility—substantiates that agency in LLMs can be grounded internally. Extensions of this approach could unify internal and external agentic mechanisms, broadening the spectrum of self-regulating AI systems.