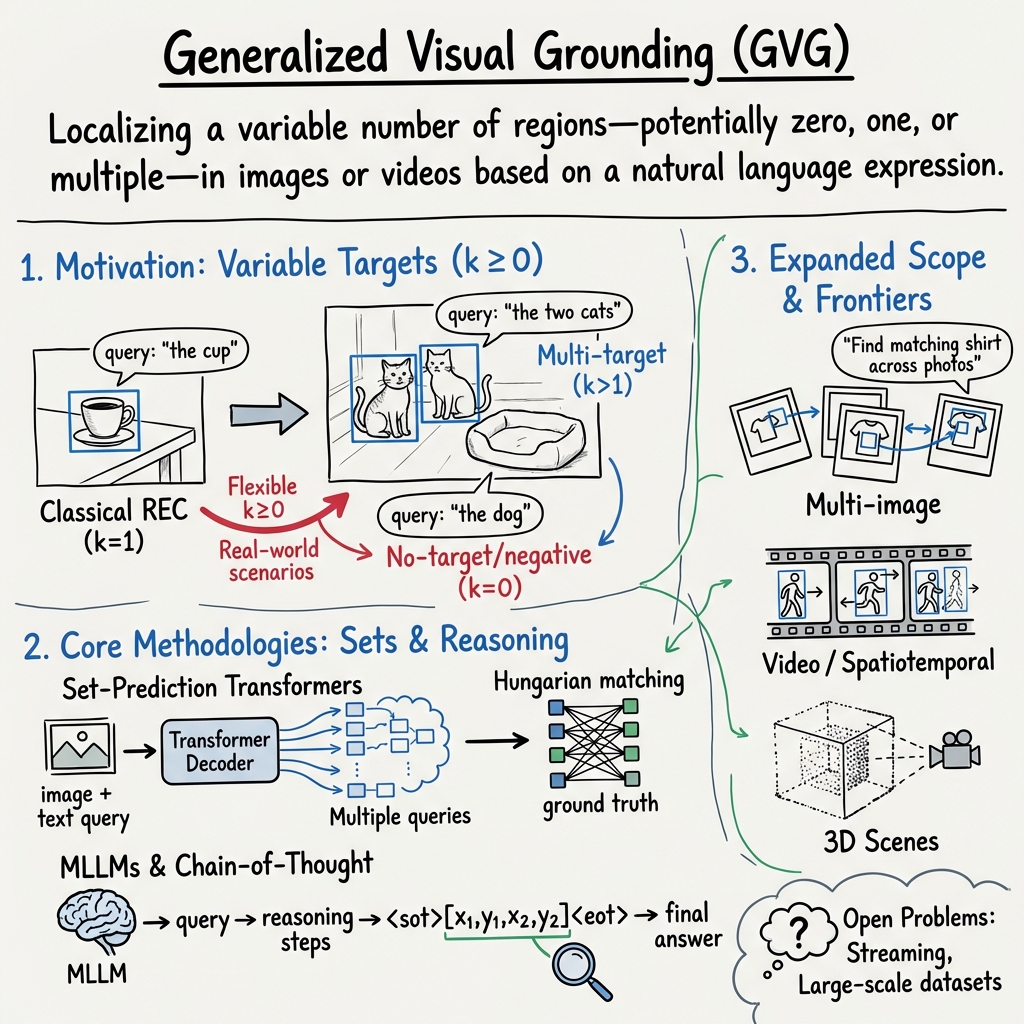

Generalized Visual Grounding (GVG)

- GVG is a task that localizes variable numbers of regions in visual data based on natural language queries, accommodating multi-target and no-target scenarios.

- Recent methodologies leverage transformer decoders, set-prediction losses, and multimodal architectures to integrate detection, segmentation, and reasoning.

- Benchmarking protocols such as Precision@(F1=1, IoU≥0.5) and no-target accuracy, along with datasets such as gRefCOCO and Ref-ZOM, drive evaluation in GVG research.

Generalized Visual Grounding (GVG) is the overarching task of localizing a variable number of regions—potentially zero, one, or multiple—in images or videos based on a natural language expression. Unlike classical visual grounding, which assumes exactly one referent per query, GVG enables broader, more realistic understanding of language-vision referential acts, covering multi-target, no-target, open-vocabulary, multimodal, and reasoning-driven scenarios. Advances in GVG span architectures for single- and multi-image input, 3D scenes, video streams, and more, with a rigorous benchmarking and methodological ecosystem establishing GVG as a central multimodal vision-language task.

1. Formalization and Motivation

Let $I$ denote an input image (or video frame) and $T$ a tokenized query of length $L$. A GVG model predicts a set of bounding boxes

$$\mathcal{B} = \{b_1, \dots, b_k\},$$

with each $b_i \in \mathbb{R}^4$, where $k \geq 0$ is unconstrained. In contrast, classical referring expression comprehension (REC) restricts $k = 1$.

The motivation for GVG is to accommodate the full range of referential phenomena encountered in natural language and real-world tasks, including:

- Single-object grounding: standard REC/phrase grounding;

- Multi-object grounding: e.g., “the two men on the left” yielding $k = 2$;

- No-object/negative grounding: e.g., “the unicorn in the scene” when no unicorn is present, requiring $k = 0$.

This shift from fixed $k = 1$ to flexible $k$ is driven by use cases such as surveillance, human–robot interaction, instructional dialog, and open-domain visual search, where expressions may correspond to multiple or no regions (Xiao et al., 2024).

2. Evaluation Protocols and Benchmarks

GVG employs evaluation protocols explicitly designed for variable $k$. Two primary metrics are established:

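- Precision@(F1=1, IoU≥0.5): A sample counts as correct only if the set of predicted boxes exactly matches the ground truth. Predictions are matched one-to-one to ground-truth boxes by bipartite matching at IoU ≥ 0.5, and the resulting per-sample F1 score must equal 1.0 (i.e., TP, FP, and FN are all accounted for at the instance level, with no false positives or false negatives allowed).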

- No-target accuracy (N-acc): The fraction of true no-target queries for which no box is predicted.
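The two metrics above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation of any benchmark toolkit: the `(x1, y1, x2, y2)` box format, the function names, and the convention that a no-target sample scores F1 = 1 only when nothing is predicted are assumptions made for the example. The one-to-one matching is done with a simple augmenting-path search over pairs whose IoU reaches the threshold.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2); assumed box format
Sample = Tuple[Sequence[Box], Sequence[Box]]  # (predicted boxes, ground-truth boxes)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def max_matching(pred: Sequence[Box], gt: Sequence[Box], thr: float = 0.5) -> int:
    """Size of a maximum one-to-one matching among pairs with IoU >= thr."""
    match_of_gt = [-1] * len(gt)  # prediction index currently assigned to each GT box

    def try_assign(p: int, seen: List[bool]) -> bool:
        for g in range(len(gt)):
            if not seen[g] and iou(pred[p], gt[g]) >= thr:
                seen[g] = True
                # Take a free GT box, or re-route the prediction that holds it.
                if match_of_gt[g] == -1 or try_assign(match_of_gt[g], seen):
                    match_of_gt[g] = p
                    return True
        return False

    return sum(try_assign(p, [False] * len(gt)) for p in range(len(pred)))


def sample_f1(pred: Sequence[Box], gt: Sequence[Box], thr: float = 0.5) -> float:
    """Per-sample F1 from matched (TP), unmatched predicted (FP), and missed (FN) boxes."""
    if not pred and not gt:
        return 1.0  # assumed convention: a correctly empty prediction is a perfect sample
    tp = max_matching(pred, gt, thr)
    fp, fn = len(pred) - tp, len(gt) - tp
    return 2 * tp / (2 * tp + fp + fn)


def precision_at_f1(samples: Sequence[Sample], thr: float = 0.5) -> float:
    """Fraction of samples whose per-sample F1 equals 1."""
    return sum(sample_f1(p, g, thr) == 1.0 for p, g in samples) / len(samples)


def n_acc(samples: Sequence[Sample]) -> float:
    """Fraction of no-target samples for which no box is predicted."""
    no_target = [p for p, g in samples if not g]
    if not no_target:
        raise ValueError("no no-target samples to evaluate")
    return sum(not p for p in no_target) / len(no_target)
```

Because the first metric is all-or-none per sample, a single spurious or missing box turns an otherwise good prediction into a failure, which is why multi-target scores tend to sit well below single-target ones.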

Datasets specifically designed for GVG include:

- gRefCOCO: 278,232 queries over 19,994 COCO images; mixture of single-target, multi-target, and no-target cases.

- Ref-ZOM: 90,199 queries over 55,078 images, with detailed control over target cardinality.

- D³: 422 challenging high-res multi-target and no-target queries (Xiao et al., 2024).

For videos, the GVG dataset (distinct from gRefCOCO) targets spatio-temporal localization of multiple tubes per query, with challenging distractor and multi-segment cases (Feng et al., 2021).

3. Core Methodologies and Model Design

Set-Prediction Transformers and Query-Based Decoders

GVG architectures must produce a flexible number of outputs. Accordingly, recent models adopt Transformer decoders with multiple queries, Hungarian (bipartite) matching, and set-prediction losses:

- InstanceVG: Implements generalized referring expression comprehension (GREC), segmentation (GRES), and instance-aware prediction by tying each decoder query to a point, box, and mask, enforcing 1:1:1 consistency via Hungarian matching and multi-task loss (Dai et al., 17 Sep 2025).

- MDETR/UNINEXT/SimVG: Extended to handle variable $k$ by using multiple prediction queries, a set-based L1+GIoU loss, and a confidence threshold to control the output count (Xiao et al., 2024).
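The query-based recipe described above (one-to-one matching during training, confidence thresholding at inference) can be sketched as follows. This is a toy illustration under stated assumptions, not the code of any of the cited models: the cost weights `w_l1` and `w_giou`, the threshold value, and all function names are hypothetical, boxes are assumed to be non-degenerate `(x1, y1, x2, y2)` tuples, and a brute-force search over assignments stands in for the Hungarian algorithm, so it is only practical for a handful of queries.

```python
from itertools import permutations
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2); assumed box format


def giou(a: Box, b: Box) -> float:
    """Generalized IoU in [-1, 1] for two non-degenerate boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    # Area of the smallest box enclosing both inputs.
    hull = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return inter / union - (hull - union) / hull


def pair_cost(pred: Box, gt: Box, w_l1: float = 5.0, w_giou: float = 2.0) -> float:
    """L1 + GIoU matching cost; the weights are illustrative assumptions."""
    l1 = sum(abs(p - g) for p, g in zip(pred, gt))
    return w_l1 * l1 + w_giou * (1.0 - giou(pred, gt))


def match_queries(preds: Sequence[Box], gts: Sequence[Box]) -> List[int]:
    """Index of the query assigned to each GT box, minimizing the total cost.

    Brute-force stand-in for Hungarian matching; assumes len(preds) >= len(gts).
    With no GT boxes (a no-target query) the result is an empty assignment.
    """
    best = min(
        permutations(range(len(preds)), len(gts)),
        key=lambda perm: sum(pair_cost(preds[q], g) for q, g in zip(perm, gts)),
    )
    return list(best)


def select_boxes(preds: Sequence[Box], scores: Sequence[float], thr: float = 0.7) -> List[Box]:
    """Inference-time output control: keep only queries whose confidence reaches thr."""
    return [b for b, s in zip(preds, scores) if s >= thr]
```

During training, unmatched queries would be supervised toward low confidence, so that at inference the threshold alone decides whether zero, one, or several boxes are emitted.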

Multimodal LLMs (MLLMs) and Chain-of-Thought

VGR (Visual Grounded Reasoning) introduces an MLLM with chain-of-thought reasoning that issues explicit <sot>[x1,y1,x2,y2]<eot> replay signals to extract and attend to fine-grained visual regions as the model generates intermediate reasoning steps. This design integrates region selection into the CoT process and yields state-of-the-art results on tasks requiring comprehensive image detail understanding, while being highly token-efficient (Wang et al., 13 Jun 2025).
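To make the replay-signal format concrete, the sketch below extracts `<sot>[x1,y1,x2,y2]<eot>` spans from a piece of generated text. Only the delimiter format comes from the description above; the function name, the use of floating-point coordinates, and the rule of skipping malformed or degenerate boxes are assumptions for illustration, and the step that feeds the selected region back into the model is not shown.

```python
import re
from typing import List, Tuple

# Matches the replay-signal format quoted in the text: <sot>[x1,y1,x2,y2]<eot>
REPLAY = re.compile(r"<sot>\[\s*([^\]]*?)\s*\]<eot>")


def extract_replay_boxes(text: str) -> List[Tuple[float, float, float, float]]:
    """Return every well-formed box found in replay signals, in order of appearance."""
    boxes = []
    for match in REPLAY.finditer(text):
        parts = match.group(1).split(",")
        if len(parts) != 4:
            continue  # not exactly four coordinates
        try:
            x1, y1, x2, y2 = (float(p) for p in parts)
        except ValueError:
            continue  # non-numeric coordinate
        if x2 > x1 and y2 > y1:  # assumed sanity check: drop empty or inverted boxes
            boxes.append((x1, y1, x2, y2))
    return boxes
```

A caller would typically crop or pool visual features for each returned box before the model continues its reasoning chain.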

Zero-/Few-Shot and Open-Vocabulary Grounding

- GroundVLP: Achieves zero-shot GVG by fusing GradCAM heatmaps from vision-language pre-training (VLP) with open-vocabulary detector proposals, using only generic cross-modal supervision and without any task-specific finetuning (Shen et al., 2023).

- IMAGE: Introduces adaptive masking (Gaussian radiation modeling on feature maps) into the vision backbone, enhancing generalization and zero/few-shot transfer without scaling data size or architecture (Jia et al., 2024).

- Context Disentangling/Prototype Inheriting: TransCP disentangles referent/context features and uses a prototype bank for transfer to novel categories, fusing modalities via Hadamard product (Tang et al., 2023).
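The heatmap-plus-proposals idea in the GroundVLP item above can be illustrated with a small scoring routine. This is a sketch of one plausible fusion rule, not the paper's exact formula: the heatmap mass inside each proposal is normalized by a power of the box area, and proposals below a score cutoff are dropped, so the output may contain zero, one, or several boxes. The names `box_score` and `ground`, the exponent `alpha`, the cutoff `min_score`, and the integer-cell box format are all assumptions.

```python
from typing import List, Sequence, Tuple

IntBox = Tuple[int, int, int, int]  # (x1, y1, x2, y2) in heatmap cells, x2/y2 exclusive


def box_score(heatmap: Sequence[Sequence[float]], box: IntBox, alpha: float = 0.5) -> float:
    """Heatmap mass inside the box, divided by area**alpha to avoid favoring huge boxes."""
    x1, y1, x2, y2 = box
    area = (x2 - x1) * (y2 - y1)
    if area <= 0:
        return 0.0
    mass = sum(sum(row[x1:x2]) for row in heatmap[y1:y2])
    return mass / area ** alpha


def ground(
    heatmap: Sequence[Sequence[float]],
    proposals: Sequence[IntBox],
    min_score: float = 1.0,
    alpha: float = 0.5,
) -> List[IntBox]:
    """Rank detector proposals by heatmap score and keep those reaching min_score."""
    scored = [(box_score(heatmap, b, alpha), b) for b in proposals]
    return [b for s, b in sorted(scored, reverse=True) if s >= min_score]
```

In a real pipeline the heatmap would come from GradCAM over a pre-trained vision-language model and the proposals from an open-vocabulary detector; both are passed in as plain data here.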

Multi-image, Spatiotemporal, and 3D GVG

- Generalized Multi-image Grounding: GeM-VG formalizes grounding across image groups, introducing a taxonomy of retrieval, association, and reasoning types (semantic, temporal, spatial), hybrid RL-SFT optimization (for both direct and CoT outputs), and a large dataset, MG-Data-240K. This supports a variable number of targets $k$ per image, per group, and across cross-image relations (Zheng et al., 8 Jan 2026).

- Video GVG: DSTG constructs decoupled spatial and temporal graphs per video, with contrastive routing to address appearance/motion distractors, and is evaluated on general video datasets with multi-object and multi-clip expressions (Feng et al., 2021).

- 3D and Multimodal Input: PanoGrounder bridges 2D VLMs and 3D scenes with panoramic renderings, semantic/geometric feature injection, and multi-view fusion, supporting robust GVG under varied viewpoints and language (Jung et al., 24 Dec 2025). RGBT-Ground expands datasets to RGB-TIR pairs, addressing robustness under night, small-object, or adverse weather settings (Zhao et al., 31 Dec 2025).

4. Joint Learning, Consistency, and Instance Awareness

Advanced GVG models increasingly unify multiple grounding tasks (detection, segmentation), modalities (RGB/TIR, text), and instance-level outputs:

- InstanceVG: Imposes joint supervision and tight 1:1:1 point–box–mask alignment, outperforms prior methods on GREC/GRES and related benchmarks, and supports multi- and non-target expressions (Dai et al., 17 Sep 2025).

- Cross-task regularization and negative-query suppression further improve the model’s ability to balance background/foreground, suppress spurious outputs, and yield consistent multi-granularity predictions.

5. Performance, Challenges, and Benchmarks

Model performance in GVG, as of 2025–2026, lags clearly behind single-object grounding:

- Top multi-target Precision@(F1=1, IoU≥0.5) is 40–60% on gRefCOCO/Ref-ZOM, while classical REC can achieve 90%+.

- No-target accuracy (N-acc) now routinely exceeds 80% for the best models.

- InstanceVG and GeM-VG are current leaders in segmentation, multi-image, and multi-object metrics (Dai et al., 17 Sep 2025, Zheng et al., 8 Jan 2026).

However, challenges persist. GVG datasets remain modest in scale; evaluation protocols may require further refinement (e.g., varying k-recall, error analysis beyond all-or-none F1); and many algorithmic advances are incremental extensions of single-object architectures. Streaming, long-horizon video, and open-world adaptation are still open problems (Xiao et al., 2024).

6. Future Directions and Open Problems

The field is expected to evolve in several directions:

- Larger, high-quality datasets covering diverse target counts, modalities, and negative cases, with rigorous “no-leak” splits and standardized protocols.

- Unified models for robust, token-efficient, and truly universal GVG, tightly integrating detection, segmentation, spatiotemporal tracking, and language reasoning as first-class objectives.

- Algorithmic advances in instance-aware learning, set prediction, proposal-free architectures, and dynamic output control.

- Evaluation metrics reflecting practical needs, with error diagnosis for both multi-target and false positive/negative cases.

- New paradigms in self-supervised region-level or masking-based pre-training adapted for k-variable grounding and sparse supervision.

The synthesis of insights from rich pre-trained multimodal models, scene- and instance-level joint learning, and open-world transfer will define the next generation of GVG research (Xiao et al., 2024, Dai et al., 17 Sep 2025, Zheng et al., 8 Jan 2026).