Vision-Language Pre-Training Models

- Vision-Language Pre-Training models are architectures that learn joint representations of visual and textual data, enabling precise cross-modal alignment.

- They leverage techniques like contrastive learning, transformer-based fusion, and parameter-efficient fine-tuning to improve performance on various tasks.

- These models support applications such as visual grounding, VQA, and multimodal retrieval while addressing challenges like domain adaptation and scalability.

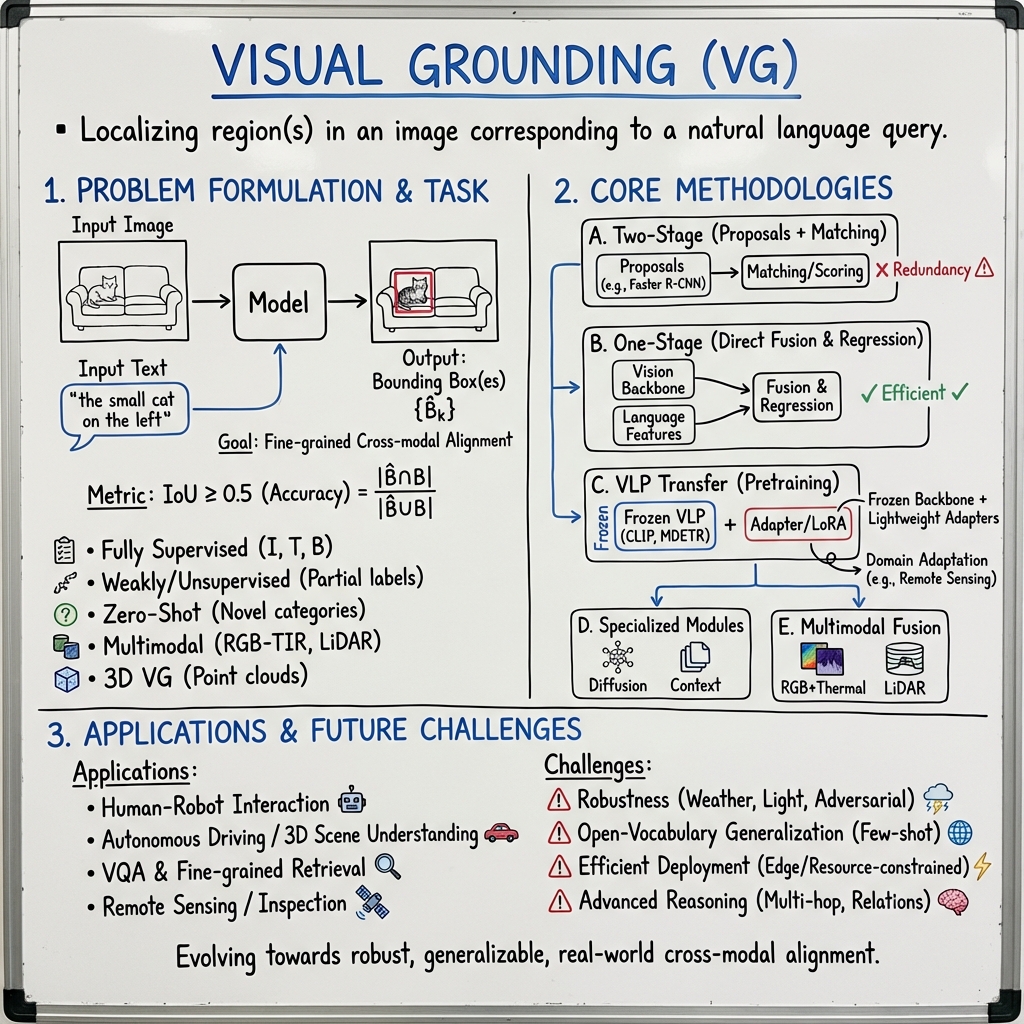

Visual Grounding (VG) refers to the computational problem of localizing, within an image (or, more generally, in a multimodal perceptual input), the region(s) corresponding to an open-ended natural language query. Unlike object detection, which categorizes from a predefined label set, VG receives as input an image and a free-form referring expression—potentially containing categories, attributes, relations, context, and external knowledge—and must output precise bounding boxes for the relevant regions. This capability underlies a broad range of vision–language tasks, from human–robot interaction to fine-grained multimodal retrieval, and has driven recent advances in cross-modal modeling, robust alignment, and efficient adaptation for open-world and challenging domains.

1. Problem Formulation, Terminology, and Task Settings

The canonical VG task, sometimes called Referring Expression Comprehension (REC), can be formalized as follows: given an image $I$ and a referring expression $T$, output a set of bounding boxes $B = \{b_1, \dots, b_N\}$ such that each $b_i$ matches an object or region semantically denoted by $T$. Traditionally, $N = 1$ (one-object REC), but generalized VG (GREC) allows for $N \geq 0$ (zero/multi-object or ambiguous expressions) (Xiao et al., 2024).

The core challenge is one of fine-grained cross-modal alignment. The model must encode both image and text, then fuse or match their representations such that precise spatial localization is achieved for arbitrarily complex queries. Accuracy is evaluated using intersection-over-union (IoU), with a prediction counted as correct if $\mathrm{IoU} \geq 0.5$ with the ground truth (Xiao et al., 2024).
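The IoU criterion can be made concrete with a short sketch. The `(x1, y1, x2, y2)` box layout and the function names below are illustrative assumptions, not part of any benchmark toolkit.

```python
from typing import List, Tuple

# Assumed box layout: (x1, y1, x2, y2) with x1 <= x2 and y1 <= y2.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    # Clamp at zero so disjoint boxes give an empty intersection.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def accuracy_at_iou(preds: List[Box], gts: List[Box], threshold: float = 0.5) -> float:
    """Fraction of single-box predictions whose IoU with the paired ground truth meets the threshold."""
    if not preds:
        return 0.0
    hits = sum(iou(p, g) >= threshold for p, g in zip(preds, gts))
    return hits / len(preds)
```

Here `preds[i]` is assumed to be the single predicted box for the i-th (image, expression) pair and `gts[i]` its annotated box, which matches the one-object REC setting.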

A taxonomy of VG tasks includes:

- Fully Supervised VG: (image, expression, box) triplets with explicit supervision.

- Weakly/Semi/Unsupervised VG: Partial or no direct region–phrase supervision; methods leverage pseudo-labels, contrastive alignments, or curriculum learning (Xiao et al., 2023).

- Zero-Shot/Open-Vocabulary VG: Expressions referring to novel categories or attributes; often evaluated on cross-dataset and open-domain generalization (Tang et al., 2023).

- Multi-Modal/Generalized VG: Complex inputs (e.g., RGB-TIR, LiDAR or radar views) and queries requiring multi-object and context-aware grounding (Zhao et al., 31 Dec 2025, Baek et al., 2024, Guan et al., 2024).

- 3D Visual Grounding (3DVG): Extends VG to 3D point clouds and spatial reasoning (e.g., LiDAR data in autonomous driving; indoor/outdoor 3D scenes) (Baek et al., 2024, Li et al., 28 May 2025).

2. Core Methodologies and Architectures

Progress in VG has unfolded across several methodological axes, with representative technical waves:

A. Two-Stage (Proposals + Matching): Early pipelines generate dense region proposals (e.g., via Faster R-CNN), encode both candidate regions and the referring text, and perform cross-modal scoring or matching (Xiao et al., 2024). These methods, while interpretable, suffer from proposal redundancy and cannot be optimized end to end.
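A minimal sketch of the matching step, assuming region and text features have already been extracted into a shared embedding space. Cosine similarity is used here as a stand-in scoring function; the cited two-stage systems use their own learned matching modules, and all names below are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity, returning 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(u, v))
    nu = math.sqrt(sum(x * x for x in u))
    nv = math.sqrt(sum(y * y for y in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def ground_by_matching(
    proposals: List[Box],
    region_feats: List[Sequence[float]],
    text_feat: Sequence[float],
) -> Tuple[Box, float]:
    """Score every proposal against the expression and return the best box with its score."""
    scores = [cosine(f, text_feat) for f in region_feats]
    best = max(range(len(scores)), key=scores.__getitem__)
    return proposals[best], scores[best]
```

Because the proposal generator is fixed before matching, a target that no proposal covers cannot be recovered at this stage, which illustrates the proposal dependence noted above.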

B. One-Stage (Direct Fusion & Regression): Models such as FAOA, SSG, and YOLO-style approaches modulate the visual backbone with language features and regress box coordinates in a single pass (Xiao et al., 2024). Later, Transformer-based frameworks (TransVG, RefTR, SeqTR) adopt fusion at every layer, enabling dense cross-modal attention.
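The cross-modal attention used by Transformer-based fusion can be sketched as single-head scaled dot-product attention in which visual tokens query text tokens. This simplified version omits the learned query/key/value projections, multiple heads, and residual connections of real models; it only illustrates the mixing step.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> List[Vector]:
    """For each query (e.g., a visual token), return an attention-weighted mix of the values (e.g., text tokens)."""
    d = len(keys[0])
    out_dim = len(values[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        outputs.append([sum(w * v[i] for w, v in zip(weights, values)) for i in range(out_dim)])
    return outputs
```

Each output row has the dimensionality of the value vectors, so language information is injected per visual token before box regression.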

C. Vision–Language Pretraining and Transfer: With the advent of CLIP, MDETR, and related contrastive/grounded pretraining, VG models increasingly employ frozen or lightly adapted vision–language backbones, then add lightweight adapters, bridges, or LoRA modules to tune for grounding (Xiao et al., 2024, Xiao et al., 2023). Efficient fine-tuning (PEFT) techniques, such as LoRA and adapters, are crucial for domain and modality adaptation (e.g., remote sensing or RGB+TIR) (Moughnieh et al., 29 Mar 2025, Zhao et al., 31 Dec 2025).
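To illustrate why LoRA is parameter-efficient, the sketch below wraps a frozen weight matrix $W$ with a low-rank update, computing $Wx + \frac{\alpha}{r} B(Ax)$. It follows the standard LoRA formulation (zero-initialized $B$, small random $A$); the class name, pure-Python matrices, and default values are illustrative assumptions and do not reproduce any cited VG model.

```python
import random
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, x: List[float]) -> List[float]:
    """Matrix-vector product for a row-major nested-list matrix."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


class LoRALinear:
    """Frozen linear map plus a trainable rank-r update (hypothetical helper)."""

    def __init__(self, weight: Matrix, rank: int, alpha: float = 1.0, seed: int = 0):
        rng = random.Random(seed)
        self.weight = weight  # frozen, shape d_out x d_in
        d_out, d_in = len(weight), len(weight[0])
        self.scale = alpha / rank
        # A is small random, B is zero, so the update starts at exactly zero.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x: List[float]) -> List[float]:
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_fraction(self) -> float:
        """Share of all parameters (frozen + LoRA) that are trainable."""
        d_out, d_in = len(self.weight), len(self.weight[0])
        lora = len(self.A) * (d_in + d_out)
        return lora / (d_out * d_in + lora)
```

For a square layer of width $d$, the trainable share is $2r / (d + 2r)$, which shrinks quickly as $d$ grows relative to the rank $r$.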

D. Specialized Modules and Reasoning: To overcome limitations in context and relation sensitivity, new architectures integrate context disentangling, iterative diffusion (LG-DVG), hierarchical cross-attention, and relation-aware reasoning. Notably:

- Context Disentangling & Prototype Inheriting for open-vocabulary and ambiguity-robust VG (Tang et al., 2023)

- Language-Guided Diffusion Models for generative, multi-step, anchor-free grounding (Chen et al., 2023)

- Hierarchical and Multi-Layer Cross-Modal Bridges to adapt generic VLP features to fine-grained localization (Xiao et al., 2024)

- Multi-Stage False-Alarm Sensitive Decoders to handle negative/no-object queries and suppress false alarms (Li et al., 2023)

E. Multimodal and Multidomain Extension: Multimodal fusion (e.g., for RGB+Thermal, LiDAR+RGB, mmWave radar+image) utilizes lightweight cross-attention, adaptive modality weighting, and efficient fusion modules to support challenging perception environments (Zhao et al., 31 Dec 2025, Guan et al., 2024).
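One simple form of adaptive modality weighting is a softmax gate over per-modality reliability scores, followed by a weighted sum of the modality features. The sketch below is a generic illustration under that assumption; how the cited systems compute their gates is not specified here, and the `reliability` scores are hypothetical inputs (in practice they would be predicted by a small network).

```python
import math
from typing import Dict, List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_modalities(
    features: Dict[str, List[float]],
    reliability: Dict[str, float],
) -> Tuple[List[float], Dict[str, float]]:
    """Fuse same-sized modality features using softmax-normalized reliability weights."""
    names = sorted(features)
    weights = softmax([reliability[n] for n in names])
    dim = len(features[names[0]])
    fused = [sum(w * features[n][i] for w, n in zip(weights, names)) for i in range(dim)]
    return fused, dict(zip(names, weights))
```

With a low score for RGB at night, for example, the fused feature would be dominated by the thermal branch.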

| Architecture | Key Features | Representative Works |
|---|---|---|
| Two-Stage | Proposals + matching, explicit region set | MattNet, DGA, VLTVG |
| One-Stage | Fusion backbone, direct box regression | TransVG, SimVG, ReSC |
| VLP Transfer | Frozen or LoRA-adapted multi-modal models | CLIP-VG, HiVG, CLIP-G, MDETR, D-MDETR |
| Diffusion/Iterative | Multi-step, denoising-based refinement | LG-DVG (Chen et al., 2023) |
| Context/Prototype | Disentangling, prototype inheritance | TransCP (Tang et al., 2023) |
| Multimodal Fusion | Sensor fusion (RGB-TIR, radar, LiDAR) | RGBT-VGNet (Zhao et al., 31 Dec 2025), Potamoi (Guan et al., 2024), LidaRefer (Baek et al., 2024) |

3. Benchmarks, Datasets, and Evaluation Protocols

VG research has benefited from a rich suite of curated benchmarks and datasets:

- RefCOCO / RefCOCO+ / RefCOCOg: MS COCO–based benchmarks that differ in language constraints (e.g., RefCOCO+ disallows absolute location words, while RefCOCOg has longer, more compositional queries).

- ReferItGame, Flickr30k Entities: Focus on sentence-level and phrase-level grounding.

- Specialized Datasets: RGBT-Ground (RGB+TIR for adverse conditions) (Zhao et al., 31 Dec 2025), AerialVG (high-res aerial, relational) (Liu et al., 10 Apr 2025), WaterVG (waterway, radar-guided) (Guan et al., 2024), SK-VG (scene knowledge–guided queries) (Chen et al., 2023), GRefCOCO (generalized, many/no-object), GigaGround (ultra-high-res).

- 3DVG/Outdoor Lidar: Talk2Car-3D, ScanRefer, Nr3D (Baek et al., 2024, Li et al., 28 May 2025).

Evaluation metrics standardize on accuracy at $\mathrm{IoU} \geq 0.5$, occasionally using stricter IoU thresholds for box tightness. Generalized VG may use F1-integrated metrics (F1 score at IoU=0.5), and domain-specific settings (remote sensing, multimodal) often track precision, mean IoU, and recall across object size and environmental splits (Zhao et al., 31 Dec 2025, Moughnieh et al., 29 Mar 2025).
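For generalized VG, where an expression may refer to zero or several objects, a per-sample F1 at IoU=0.5 can be sketched as follows. The greedy one-to-one matching and the convention that an empty prediction for an empty target scores 1.0 are assumptions made for illustration; official benchmark protocols may match boxes and aggregate scores differently.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # assumed (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def f1_at_iou(preds: List[Box], gts: List[Box], threshold: float = 0.5) -> float:
    """Per-sample F1 with greedy one-to-one matching of predictions to ground-truth boxes."""
    if not preds and not gts:
        return 1.0  # correctly predicting "no target" (assumed convention)
    unmatched = list(gts)
    tp = 0
    for p in preds:
        best_idx, best_iou = -1, threshold
        for i, g in enumerate(unmatched):
            v = iou(p, g)
            if v >= best_iou:
                best_idx, best_iou = i, v
        if best_idx >= 0:
            unmatched.pop(best_idx)  # each ground-truth box can be matched once
            tp += 1
    fp = len(preds) - tp
    fn = len(gts) - tp
    return 2 * tp / (2 * tp + fp + fn)
```

A false alarm on a no-object query (one predicted box, no ground truth) yields an F1 of 0 under this convention, which is the failure mode that false-alarm-sensitive decoders target.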

For VQA-integrated VG, faithful and plausible visual grounding (FPVG) metrics have emerged to measure not just plausibility (spatial overlap with ground-truth) but faithfulness (answer change under region erasure) (Reich et al., 2023).

4. Advanced Topics: Robustness, Generalization, and Scene Understanding

Robustness to Adversarial/Domain Shift: VG in complex real-world environments faces illumination, weather, and scale challenges. The RGBT-Ground, AerialVG, and WaterVG benchmarks quantify performance under severe conditions (weak or strong light, night-time, small, occluded, or distant objects, and radar or multi-spectral data) and require fine-grained sensor and language fusion (Zhao et al., 31 Dec 2025, Liu et al., 10 Apr 2025, Guan et al., 2024).

Generalization: Open-vocabulary and cross-dataset evaluation expose the brittleness of "standard scene" architectures. TransCP (Tang et al., 2023) leverages context disentangling and prototype banks to generalize to novel categories. Efficient adaptation in domain transfer (e.g., remote sensing) is addressed by LoRA, adapter, and BitFit schemes that reach near–state-of-the-art accuracy while training only a small fraction of the model's parameters, which is critical for deployment in compute-constrained settings (Moughnieh et al., 29 Mar 2025).

Multi-Object and Scene-Level Reasoning: Generalized VG benchmarks and scene knowledge–guided tasks (SK-VG) go beyond single-object REC, requiring reasoning over object relations, unobserved attributes, and multi-hop inference via external knowledge (Chen et al., 2023, Liu et al., 10 Apr 2025). Relation-aware grounding modules, hierarchical cross-attention, and weakly/unsupervised curriculum approaches (AlignCAT, CLIP-VG) are designed to handle ambiguity, multi-target queries, and minimal supervision (Wang et al., 5 Aug 2025, Xiao et al., 2023).

5. Practical Applications and Impact

Visual Grounding systems form the infrastructure for an array of vision–language AI applications:

- Referring Expression Generation and Understanding: Underlies referential dialogue, human-robot instruction following, and AR interaction (Xiao et al., 2024).

- Robust Multimodal Perception: Enables visual navigation, surveillance, and anomaly detection in adverse environments (night, fog, glare) by fusing cues across RGB, TIR, radar, and LiDAR (Zhao et al., 31 Dec 2025, Baek et al., 2024, Guan et al., 2024).

- 3D Scene Understanding: 3DVG connects language to spatially-grounded AR/VR, mobile robotics, and autonomous driving contexts (Baek et al., 2024, Li et al., 28 May 2025).

- Remote Sensing and Industrial Inspection: High-res and multi-spectral VG extends to monitoring, infrastructure analysis, and environmental sensing (Moughnieh et al., 29 Mar 2025, Liu et al., 10 Apr 2025).

- Visual Question Answering (VQA): VG is fundamental for explainable, faithful VQA—methods such as lattice-based retrieval enforce answer grounding in credible image regions over shortcut exploitation (Reich et al., 2022, Reich et al., 2024).

6. Open Challenges, Limitations, and Future Directions

While accuracy on classical datasets like RefCOCO is nearing saturation, several persistent challenges define future VG research:

- Data and Domain Scaling: High-quality, multi-object, multi-domain benchmarks (e.g., GigaGround, generalized VG, scene knowledge) are essential for progress beyond single-object, fixed-scene assumptions (Xiao et al., 2024).

- Open-Ended and Few-Shot Generalization: Efficient adaptation and robust zero-shot transfer to new domains, categories, and modalities remain critical research goals (Moughnieh et al., 29 Mar 2025, Tang et al., 2023, Xiao et al., 2024).

- Interpretability and Evaluation: There is an increasing demand for faithful and plausible grounding metrics, OOD evaluation protocols that enforce visual reasoning, and more interpretable architectures capable of error attribution (Reich et al., 2023, Reich et al., 2024).

- Efficient and Deployable Models: Inference/FLOPs–efficient architectures (e.g., SimVG, HiVG) and parameter-efficient fine-tuning are increasingly important for edge and resource-constrained scenarios (Dai et al., 2024, Xiao et al., 2024).

- Advanced Reasoning: Scene knowledge integration, graph-based reasoning, multi-hop inference, and multimodal fusion remain open frontiers (Chen et al., 2023, Zhao et al., 31 Dec 2025).

In sum, Visual Grounding has evolved into a central multimodal task driving research at the interface of vision, language, and real-world interaction. Advances now hinge on developing robust, generalizable models capable of precise cross-modal alignment, context and relation reasoning, and scalable real-world deployment across diverse application domains (Xiao et al., 2024).