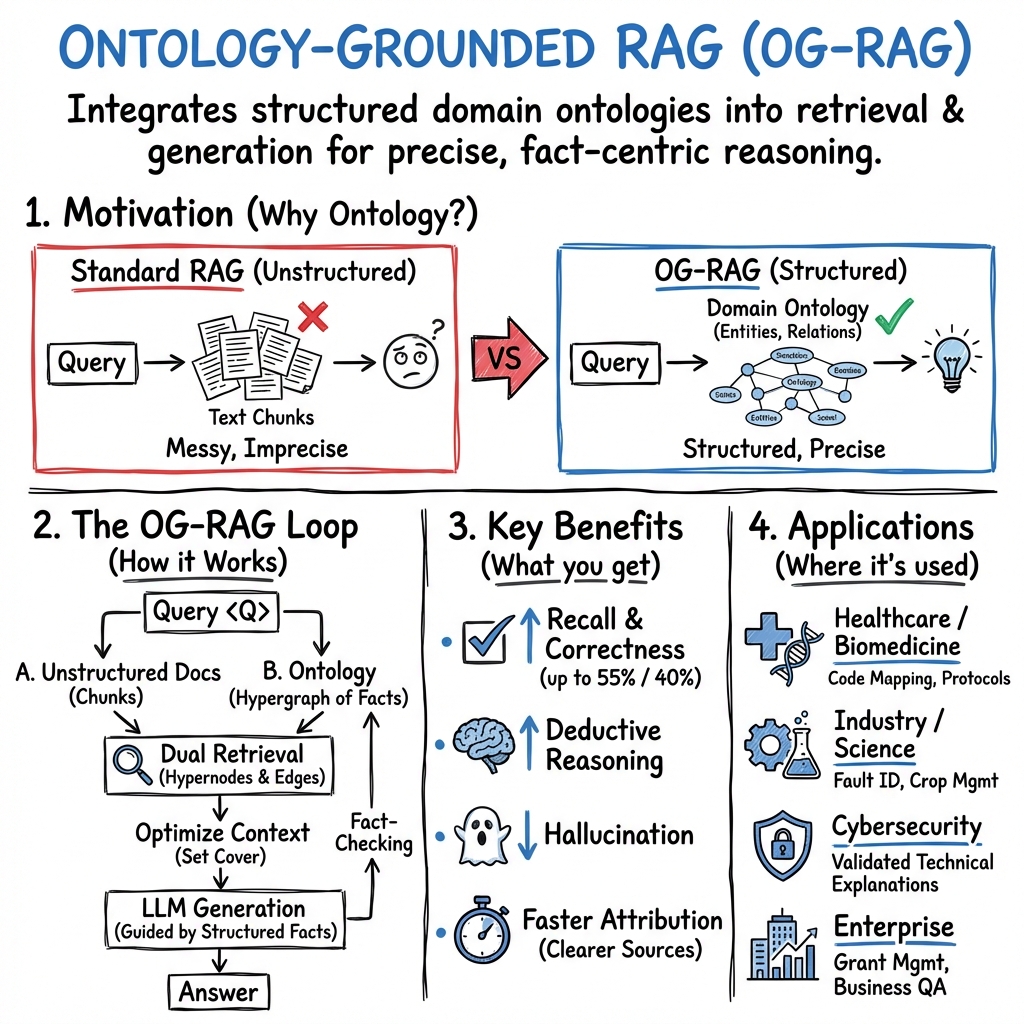

Ontology-Grounded RAG

- OG-RAG is a paradigm that leverages domain ontologies to structure the retrieval process, transforming documents into hypergraph representations for enhanced fact-centric generation.

- It employs a dual retrieval strategy using cosine similarity and set cover optimization to ensure high recall and precise context assembly.

- Empirical results show significant improvements in recall, answer correctness, and deductive reasoning, with successful applications in biomedicine, law, and industrial workflows.

Ontology-Grounded Retrieval-Augmented Generation (OG-RAG) is a paradigm in neural information retrieval and LLM augmentation that explicitly leverages domain ontologies to optimize retrieval and context assembly for generative models. Unlike standard RAG pipelines that rely solely on dense or sparse retrieval from unstructured documents, OG-RAG integrates structured ontological knowledge—entities, relations, and constraints—into every stage of the retrieval-generation loop. This ontology grounding enables precise, fact-centric generation and supports high-fidelity reasoning in specialized knowledge domains such as industry, biomedicine, law, and technical education. OG-RAG systems have demonstrated substantial gains in recall, correctness, attribution speed, and deductive reasoning relative to non-ontology-aware baselines, and underpin several recent frameworks in both academic and applied contexts (Sharma et al., 2024, Feng et al., 26 Feb 2025, Tiwari et al., 31 May 2025, Zhao et al., 1 Apr 2025, Cruz et al., 8 Nov 2025, Wu et al., 11 Jun 2025, Nayyeri et al., 2 Jun 2025).

1. Formal Frameworks and Mathematical Foundations

A canonical OG-RAG system begins by defining an ontology as a set of entities, attributes, and relations:

$$\mathcal{O} \subseteq \mathcal{S} \times \mathcal{A} \times \left(\mathcal{S} \cup \{\phi\}\right)$$

where $\mathcal{S}$ is a set of domain entities, $\mathcal{A}$ is a set of attributes (relations), and $\phi$ denotes slots to be filled from data if not specified in the ontology (Sharma et al., 2024). Documents are mapped to factual blocks by slot-filling against $\mathcal{O}$, producing extracted atomic facts as triples.
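As a minimal sketch of slot-filling against an ontology, the Python below treats the ontology as a set of (entity, attribute, value-type) triples, with `None` standing in for a slot to be filled from data. The toy attributes (`controls`, `appliedAtStage`) and the `slot_fill` helper are hypothetical, and a plain dict of pre-extracted fields replaces the LLM extraction step used in the cited work.

```python
from typing import Optional

# Hypothetical toy ontology: (entity type, attribute, value type).
# None plays the role of the phi slot: a value to be filled from data.
ONTOLOGY: set[tuple[str, str, Optional[str]]] = {
    ("Pest", "name", None),
    ("Pesticide", "name", None),
    ("Pesticide", "controls", "Pest"),
    ("Pesticide", "appliedAtStage", None),
}


def slot_fill(subject: str, extracted: dict[str, str]) -> list[tuple[str, str, str]]:
    """Turn pre-extracted fields into triples, keeping only attributes the ontology defines for `subject`."""
    allowed = {attr for (subj, attr, _) in ONTOLOGY if subj == subject}
    return [(subject, attr, value) for attr, value in extracted.items() if attr in allowed]


facts = slot_fill(
    "Pesticide",
    {"name": "Imidacloprid 48 FS", "controls": "White Grub", "colour": "unknown"},
)
print(facts)
```

Fields the ontology does not define (here `colour`) are discarded, which keeps the extracted facts within the ontology's vocabulary.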

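Facts are compiled into a hypergraph $\mathcal{H} = (\mathcal{N}, \mathcal{E})$, where each hypernode $n \in \mathcal{N}$ is a key–value pair whose key concatenates a subject and an attribute and whose value is the filled slot, and each hyperedge $e \in \mathcal{E}$ (a subset of $\mathcal{N}$) encodes a flattened factual block, potentially involving multi-entity relationships.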
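A minimal sketch of the hypergraph as a data structure, assuming each factual block arrives as a flat mapping from (subject, attribute) to value: hypernodes are (key, value) pairs whose key joins subject and attribute, and each block becomes one hyperedge, stored as a frozenset of hypernodes. `build_hypergraph` and the second example block are hypothetical.

```python
Key = tuple[str, str]          # (subject, attribute)
Hypernode = tuple[str, str]    # (concatenated "subject | attribute" key, value)


def build_hypergraph(blocks: list[dict[Key, str]]) -> tuple[set[Hypernode], list[frozenset[Hypernode]]]:
    """Each factual block becomes one hyperedge; its key-value pairs become hypernodes."""
    nodes: set[Hypernode] = set()
    edges: list[frozenset[Hypernode]] = []
    for block in blocks:
        # Join subject and attribute into a single hypernode key.
        edge = frozenset((f"{subj} | {attr}", value) for (subj, attr), value in block.items())
        nodes |= edge          # identical key-value pairs are shared across hyperedges
        edges.append(edge)
    return nodes, edges


blocks = [
    {("Pest", "name"): "White Grub", ("Pesticide", "name"): "Imidacloprid 48 FS", ("Stage", "name"): "Vegetative"},
    {("Pest", "name"): "White Grub", ("Stage", "name"): "Vegetative"},  # hypothetical second block
]
nodes, edges = build_hypergraph(blocks)
print(len(nodes), len(edges))
```

Because hypernodes are shared, two blocks that mention the same pest are linked through a common node rather than duplicated.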

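Given a query $Q$, OG-RAG employs a dual retrieval process:

- Top-$k$ selection of hypernodes using cosine similarity between the embedded keys/values and the embedded query, yielding a set of relevant nodes $\mathcal{N}_Q$.

- Optimization of the set cover over the hyperedges $\mathcal{E}$ to ensure all relevant nodes are captured while minimizing context length:

$$\mathcal{C}_Q = \arg\min_{\mathcal{C} \subseteq \mathcal{E}} |\mathcal{C}| \quad \text{s.t.} \quad \mathcal{N}_Q \subseteq \bigcup_{e \in \mathcal{C}} e$$

This set cover is approximated by a greedy algorithm, which provides a logarithmic approximation guarantee (within a factor $H_n \le 1 + \ln n$ of optimal, with $n = |\mathcal{N}_Q|$) (Sharma et al., 2024).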
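The two retrieval steps can be sketched in Python as follows. This is an illustration under stated assumptions, not the reference implementation: the 2-dimensional vectors are toy stand-ins for real embeddings, and `top_k_nodes` and `greedy_set_cover` are hypothetical helper names. The greedy loop is the textbook set-cover heuristic, which at each step picks the hyperedge covering the most still-uncovered retrieved nodes.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k_nodes(query_vec: list[float], node_vecs: dict[str, list[float]], k: int) -> list[str]:
    """Step 1: the k hypernodes most cosine-similar to the query embedding."""
    return sorted(node_vecs, key=lambda n: cosine(query_vec, node_vecs[n]), reverse=True)[:k]


def greedy_set_cover(targets: set[str], edges: dict[str, set[str]]) -> list[str]:
    """Step 2: repeatedly pick the hyperedge covering the most still-uncovered target nodes."""
    uncovered = set(targets)
    chosen: list[str] = []
    while uncovered:
        best = max(edges, key=lambda e: len(edges[e] & uncovered), default=None)
        if best is None or not edges[best] & uncovered:
            break  # leftover targets appear in no hyperedge
        chosen.append(best)
        uncovered -= edges[best]
    return chosen


vecs = {"n1": [1.0, 0.0], "n2": [0.0, 1.0], "n3": [1.0, 1.0]}
hits = top_k_nodes([1.0, 0.0], vecs, k=2)
cover = greedy_set_cover(set(hits), {"e1": {"n1", "n2"}, "e2": {"n2", "n3"}, "e3": {"n1", "n3"}})
print(hits, cover)
```

Here the query vector retrieves `n1` and `n3`, and the single hyperedge `e3` covers both, so the context contains one block instead of two.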

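In some variants, notably KG-Infused RAG (Wu et al., 11 Jun 2025), joint retrieval unifies KG- and corpus-based scores, with temperature normalization and mixture weighting; schematically,

$$p(d \mid Q) = \lambda \, \frac{\exp\big(s_{\mathrm{KG}}(Q, d)/\tau\big)}{\sum_{d'} \exp\big(s_{\mathrm{KG}}(Q, d')/\tau\big)} + (1 - \lambda) \, \frac{\exp\big(s_{\mathrm{text}}(Q, d)/\tau\big)}{\sum_{d'} \exp\big(s_{\mathrm{text}}(Q, d')/\tau\big)}$$

where $d$ ranges over both ontology elements and unstructured passages, each term is normalized over its own source's candidates, $\tau$ is the temperature, and $\lambda \in [0, 1]$ is the mixture weight.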
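A sketch of this kind of score fusion, assuming a per-source temperature softmax followed by λ-weighting, where a candidate absent from one source contributes zero from that source. The exact formulation in the cited work may differ, and the candidate IDs are hypothetical.

```python
import math


def temperature_softmax(scores: dict[str, float], tau: float) -> dict[str, float]:
    """Normalize one source's raw scores into a distribution at temperature tau."""
    if not scores:
        return {}
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {d: math.exp((s - top) / tau) for d, s in scores.items()}
    z = sum(exps.values())
    return {d: e / z for d, e in exps.items()}


def joint_scores(kg: dict[str, float], text: dict[str, float], lam: float = 0.5, tau: float = 1.0) -> dict[str, float]:
    """Mix KG and corpus distributions with weight lam; a candidate missing from a source gets 0 there."""
    p_kg = temperature_softmax(kg, tau)
    p_text = temperature_softmax(text, tau)
    return {d: lam * p_kg.get(d, 0.0) + (1 - lam) * p_text.get(d, 0.0) for d in {*kg, *text}}


scores = joint_scores({"fact:1": 2.0, "fact:2": 0.0}, {"passage:1": 1.0})
print(sorted(scores.items(), key=lambda kv: -kv[1]))
```

Lowering `tau` sharpens each source's distribution, while `lam` sets how much of the total probability mass the ontology side may claim.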

2. Core Methodologies: Hypergraph Construction and Retrieval

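Ontology-grounded mapping is typically realized via LLM-guided slot-filling on pre-chunked input (e.g., sectioned documents, table rows, or extracted entity spans), followed by flattening nested composite facts into hyperedges. Because each hyperedge keeps every attribute–value pair of one factual block together, the hypergraph preserves relations that span more than two entities, which a set of independent binary triples would split apart.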
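Flattening can be sketched as a recursive walk that joins nested keys into dotted paths, so a nested fact becomes one set of attribute–value pairs, that is, one hyperedge. The dotted-path convention and the `flatten` helper are assumptions for illustration; the cited systems may serialize keys differently.

```python
def flatten(fact: dict, prefix: str = "") -> dict[str, str]:
    """Flatten a nested fact into path-keyed attribute-value pairs (one hyperedge's worth)."""
    flat: dict[str, str] = {}
    for key, value in fact.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))  # recurse into nested sub-facts
        else:
            flat[path] = str(value)
    return flat


nested = {
    "Pesticide": {"name": "Imidacloprid 48 FS", "controls": {"Pest": {"name": "White Grub"}}},
    "Stage": "Vegetative",
}
hyperedge = frozenset(flatten(nested).items())
print(flatten(nested))
```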

Retrieval layers can be realized by:

- Embedding-based similarity over hypernodes for initial recall.

- Submodular hyperedge selection or, in graph-based alternatives, Prize-Collecting Steiner Tree methods that maximize the total prize of relevant nodes while minimizing connection cost (Cruz et al., 8 Nov 2025).
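Solving the Prize-Collecting Steiner Tree problem is NP-hard, and practical systems rely on approximation algorithms that are not reproduced here. The sketch below, with hypothetical helper names and toy node labels, only scores a candidate subgraph under the prize-collecting objective (total node prize minus total edge cost) after checking that it is a tree.

```python
from collections import defaultdict, deque

Edge = tuple[str, str]


def is_tree(nodes: set[str], edges: set[Edge]) -> bool:
    """A connected graph on |V| nodes with |V| - 1 edges is a tree."""
    if not nodes or len(edges) != len(nodes) - 1:
        return False
    adjacency: dict[str, set[str]] = defaultdict(set)
    for u, v in edges:
        if u not in nodes or v not in nodes:
            return False
        adjacency[u].add(v)
        adjacency[v].add(u)
    start = next(iter(nodes))
    seen, queue = {start}, deque([start])
    while queue:  # breadth-first search to check connectivity
        for nxt in adjacency[queue.popleft()] - seen:
            seen.add(nxt)
            queue.append(nxt)
    return seen == nodes


def pcst_value(nodes: set[str], edges: set[Edge], prize: dict[str, float], cost: dict[Edge, float]) -> float:
    """Total prize of included nodes minus total cost of tree edges (higher is better)."""
    if not is_tree(nodes, edges):
        raise ValueError("candidate subgraph is not a tree")
    return sum(prize.get(n, 0.0) for n in nodes) - sum(cost[e] for e in edges)


value = pcst_value({"q", "a", "b"}, {("q", "a"), ("a", "b")}, {"a": 3.0, "b": 2.0}, {("q", "a"): 1.0, ("a", "b"): 1.5})
print(value)
```

A solver would search over candidate trees for the one with the highest such value; adding a low-prize node is only worthwhile if its prize exceeds the cost of the edge that connects it.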

Some systems utilize symbolic query interfaces like SPARQL (e.g., in biomedical code mapping via OntologyRAG (Feng et al., 26 Feb 2025)), with LLMs translating natural language to formal graph queries before context assembly.
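SPARQL itself needs an RDF store, so as a dependency-free stand-in the sketch below evaluates a conjunction of triple patterns (the core of a SPARQL basic graph pattern) over an in-memory set of triples, with `?`-prefixed terms as variables. This is not OntologyRAG's code; the predicate names are hypothetical, and the natural-language-to-query translation that an LLM would perform is assumed to have already happened.

```python
from typing import Optional

Triple = tuple[str, str, str]
Binding = dict[str, str]


def unify(pattern: Triple, triple: Triple, binding: Binding) -> Optional[Binding]:
    """Extend `binding` so that `pattern` matches `triple`, or return None on a clash."""
    extended = dict(binding)
    for term, value in zip(pattern, triple):
        if term.startswith("?"):  # variable: bind it, or check the existing binding
            if extended.setdefault(term, value) != value:
                return None
        elif term != value:       # constant: must match exactly
            return None
    return extended


def query(patterns: list[Triple], triples: set[Triple]) -> list[Binding]:
    """Evaluate a conjunction of triple patterns by nested-loop join."""
    bindings: list[Binding] = [{}]
    for pattern in patterns:
        next_bindings: list[Binding] = []
        for binding in bindings:
            for triple in triples:
                extended = unify(pattern, triple, binding)
                if extended is not None:
                    next_bindings.append(extended)
        bindings = next_bindings
    return bindings


kb = {
    ("Imidacloprid 48 FS", "controls", "White Grub"),
    ("White Grub", "occursAtStage", "Vegetative"),
}
print(query([("?pesticide", "controls", "White Grub")], kb))
```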

Extended approaches, such as those in KG-Infused RAG (Wu et al., 11 Jun 2025), apply cognitively inspired spreading activation within the ontology graph, recursively expanding the subgraph relevant to the query based on activation flows.
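A generic spreading-activation sketch, assuming activation starts at query-matched seed nodes, is split evenly among a node's neighbours with a decay factor at each hop, and stops propagating once a share falls below a threshold. The graph, parameter values, and `spread_activation` helper are hypothetical, and KG-Infused RAG's actual update rule may differ.

```python
def spread_activation(
    graph: dict[str, list[str]],
    seeds: dict[str, float],
    decay: float = 0.5,
    threshold: float = 0.1,
    max_hops: int = 3,
) -> dict[str, float]:
    """Propagate activation outward from seed nodes for at most max_hops hops."""
    activation = dict(seeds)
    frontier = dict(seeds)
    for _ in range(max_hops):
        incoming: dict[str, float] = {}
        for node, energy in frontier.items():
            neighbours = graph.get(node, [])
            if not neighbours:
                continue
            share = energy * decay / len(neighbours)
            if share < threshold:
                continue  # too weak to propagate further
            for nb in neighbours:
                incoming[nb] = incoming.get(nb, 0.0) + share
        for nb, energy in incoming.items():
            activation[nb] = activation.get(nb, 0.0) + energy
        frontier = incoming
        if not frontier:
            break
    return activation


graph = {"A": ["B", "C"], "B": ["D"], "C": [], "D": []}
print(spread_activation(graph, {"A": 1.0}))
```

The expanded subgraph is then the set of nodes whose accumulated activation clears the threshold.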

3. Context Assembly and Prompt Composition

Following retrieval, the selected hyperedges are materialized into a context representation (e.g., JSON-like objects or linearized triple lists), concatenated up to the token window limit, and placed in the LLM prompt together with the user query.
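A minimal sketch of context assembly, assuming each selected hyperedge is a flat attribute–value mapping linearized in the `Key: Value; ...` style of the agriculture example in Section 5. The whitespace token count is a crude stand-in for a real tokenizer, and the prompt wording and helper names are hypothetical.

```python
def linearize(block: dict[str, str]) -> str:
    """Render one hyperedge as 'Key: Value; Key: Value'."""
    return "; ".join(f"{key}: {value}" for key, value in block.items())


def build_prompt(question: str, blocks: list[dict[str, str]], max_tokens: int) -> str:
    """Add linearized blocks in retrieval order until the (approximate) token budget is spent."""
    lines: list[str] = []
    used = 0
    for block in blocks:
        line = linearize(block)
        cost = len(line.split())  # crude whitespace token count (assumption)
        if used + cost > max_tokens:
            break
        lines.append(line)
        used += cost
    context = "\n".join(lines)
    # Hypothetical prompt template.
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer using only the facts in the context."


prompt = build_prompt(
    "Which pest can be controlled by Imidacloprid 48 FS?",
    [
        {"Pest Name": "White Grub", "Pesticide Name": "Imidacloprid 48 FS", "Stage": "Vegetative"},
        {"Note": "dropped when over budget"},
    ],
    max_tokens=11,
)
print(prompt)
```

Stopping at the first block that exceeds the budget keeps the highest-ranked blocks intact rather than truncating one mid-fact.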

OG-RAG systems often inject explicit ontology metadata or chain-of-thought steps to guide the LLM's reasoning, and in some workflows, include symbolic/rule-based answer validation (e.g., passage–answer consistency checking against the ontology (Zhao et al., 1 Apr 2025)).
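Rule-based answer validation can be sketched as a support check: an answer is accepted only if a retrieved fact states it as the value of the attribute the question asks about. This is an illustrative simplification, not CyberBOT's validator; the triples, attribute names, and `validate_answer` helper are hypothetical.

```python
def validate_answer(answer: str, facts: list[tuple[str, str, str]], attribute: str) -> bool:
    """Accept an answer only if a retrieved fact states it as the value of the queried attribute."""
    normalized = answer.strip().rstrip(".").casefold()
    return any(attr == attribute and value.casefold() == normalized for _, attr, value in facts)


facts = [
    ("Imidacloprid 48 FS", "controls", "White Grub"),
    ("Imidacloprid 48 FS", "appliedAtStage", "Vegetative"),
]
print(validate_answer("White Grub.", facts, "controls"))
```

An answer that fails the check can be rejected or sent back for regeneration instead of being returned to the user.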

In preference-optimized pipelines, retrieval and generation modules are further fine-tuned by pairwise ranking objectives and faithfulness regularization to the ontology (Wu et al., 11 Jun 2025).
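The exact objectives of the cited work are not given here; as an assumption, the sketch uses a standard pairwise logistic ranking loss, −log σ(s⁺ − s⁻), which is small when the preferred item scores above the dispreferred one. The faithfulness regularizer is not modeled, and the helper names are hypothetical.

```python
import math


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def pairwise_ranking_loss(preferred: list[float], dispreferred: list[float]) -> float:
    """Mean of -log(sigmoid(s_pos - s_neg)) over aligned score pairs."""
    if len(preferred) != len(dispreferred) or not preferred:
        raise ValueError("need equally many, and at least one, score pairs")
    # -log(sigmoid(x)) == softplus(-x), with x = s_pos - s_neg
    return sum(softplus(n - p) for p, n in zip(preferred, dispreferred)) / len(preferred)


print(pairwise_ranking_loss([3.0, 1.0], [0.0, 1.0]))
```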

4. Evaluation Metrics and Empirical Results

Evaluation of OG-RAG systems uses both retrieval- and generation-centric metrics, such as:

- Context Recall (C-Rec): proportion of ground-truth claims in the context.

- Answer Correctness (A-Corr): combined semantic similarity and factual overlap.

- Deductive Reasoning Accuracy: correctness on multi-step fact-based tasks.

- Attribution speed/support: human-effort measures of how quickly and reliably a reader can trace an answer to its supporting context.

- Comprehensive QA metrics (e.g. BERTScore, METEOR, ROUGE, F1).
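Context recall can be sketched as the fraction of ground-truth claims found in the retrieved context. Evaluation frameworks typically use an LLM judge to decide whether a claim is supported; here case-insensitive substring matching stands in for that judgment, an assumption that undercounts paraphrased support.

```python
def context_recall(gold_claims: list[str], context: str) -> float:
    """Fraction of ground-truth claims found in the retrieved context (case-insensitive substring match)."""
    if not gold_claims:
        raise ValueError("context recall is undefined without ground-truth claims")
    haystack = context.casefold()
    found = sum(1 for claim in gold_claims if claim.casefold() in haystack)
    return found / len(gold_claims)


print(context_recall(["White Grub", "Imidacloprid 48 FS"], "Pest Name: White Grub; Stage: Vegetative"))
```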

Representative performance comparisons are shown below (aggregated from (Sharma et al., 2024)):

| Method | Context Recall | Answer Correctness | Deductive Reasoning Accuracy | Attribution Time (s) |
|---|---|---|---|---|
| RAG | 0.24 | 0.31 | 0.43 | 61.2 |
| RAPTOR | 0.68 | 0.44 | 0.46 | — |
| GraphRAG | — | — | 0.48 | — |
| OG-RAG | 0.87 | 0.57 | 0.52 | 43.5 |

Results consistently indicate large improvements in recall (up to 55%) and answer correctness (up to 40%), about 30% faster attribution of answers to their supporting context, and a 27% gain in deductive reasoning accuracy relative to conventional and graph-based RAG methods (Sharma et al., 2024, Cruz et al., 8 Nov 2025).
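As a quick arithmetic check on the table, the sketch below computes absolute gains over the RAG row and the relative reduction in attribution time. The headline percentages in the text are reported by the cited works and may be computed against other baselines or model subsets, so only the attribution-time figure is compared here: (61.2 − 43.5) / 61.2 ≈ 0.289, consistent with the roughly 30% speed-up.

```python
# Values copied from the comparison table above (RAG baseline vs. OG-RAG).
RAG = {"context_recall": 0.24, "answer_correctness": 0.31, "deductive_accuracy": 0.43, "attribution_time_s": 61.2}
OG_RAG = {"context_recall": 0.87, "answer_correctness": 0.57, "deductive_accuracy": 0.52, "attribution_time_s": 43.5}


def absolute_gain(metric: str) -> float:
    """Difference in a metric between OG-RAG and the RAG baseline."""
    return OG_RAG[metric] - RAG[metric]


def attribution_time_reduction() -> float:
    """Relative reduction in attribution time versus the RAG baseline."""
    base = RAG["attribution_time_s"]
    return (base - OG_RAG["attribution_time_s"]) / base


for metric in ("context_recall", "answer_correctness", "deductive_accuracy"):
    print(f"{metric}: +{absolute_gain(metric):.2f}")
print(f"attribution time: {attribution_time_reduction():.1%} shorter")
```

It prints absolute gains of +0.63, +0.26, and +0.09 and an attribution time 28.9% shorter than the baseline.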

5. Domain-Specific Instantiations and Applications

OG-RAG's effectiveness has been validated across multiple verticals:

- Healthcare and Biomedicine: OntologyRAG (Feng et al., 26 Feb 2025) supports code equivalence mapping across rapidly evolving ontological standards (e.g., ICD), providing interpretable mapping levels with minimal LM retraining.

- Industrial and Scientific Workflows: Applied to agriculture (crop management), healthcare (decision protocols), and electrical relays (fault identification) by leveraging procedural ontologies for deterministic, rules-compliant answers (Sharma et al., 2024, Tiwari et al., 31 May 2025).

- Cybersecurity Education: CyberBOT (Zhao et al., 1 Apr 2025) uses ontology-based reranking and passage filtering to deliver validated, axiom-compliant technical explanations, deployed at academic scale.

- Enterprise and Grant Management: Evaluation on real-world business and grant datasets shows that ontology-KG integration delivers an order-of-magnitude accuracy improvement over vector RAG (Cruz et al., 8 Nov 2025).

An example question–answer pair from the agriculture workflow (Sharma et al., 2024):

- Q: "Which pest can be controlled by Imidacloprid 48 FS?"

- OG-RAG Context: Pest Name: White Grub; Pesticide Name: Imidacloprid 48 FS; Stage: Vegetative

- A: White Grub.

6. Limitations, Challenges, and Future Directions

OG-RAG is constrained by ontology quality, coverage, and update latency. Static ontologies may quickly become obsolete in dynamic domains, motivating integration of automated ontology learning and alignment. Hypergraph or graph-based approaches scale less efficiently than vector retrieval, introducing computational bottlenecks for large corpora (Tiwari et al., 31 May 2025, Cruz et al., 8 Nov 2025).

Future directions highlighted in the primary literature include:

- Automating or continuously updating ontologies using LLM-driven extraction pipelines (Tiwari et al., 31 May 2025, Nayyeri et al., 2 Jun 2025).

- Integration of edge weights or confidence scores for calibrated retrieval (Sharma et al., 2024).

- Incorporating deep graph embeddings or graph neural networks for end-to-end relevance ranking (Cruz et al., 8 Nov 2025).

- Optimization for multimodal and cross-domain input (e.g., combining diagrams with textual facts) (Sharma et al., 2024).

- Interactive, expert-in-the-loop or GUI-driven KG maintenance (Feng et al., 26 Feb 2025, Cruz et al., 8 Nov 2025).

A plausible implication is that OG-RAG architectures, by formalizing retrieval as a hypergraph cover or subgraph extraction problem grounded in ontological vocabularies, offer a generalizable template for fact-centric, interpretable, and domain-complete LLM augmentation in knowledge-intensive workflows.