Parametric Human Models: SMPL, SKEL & BSM

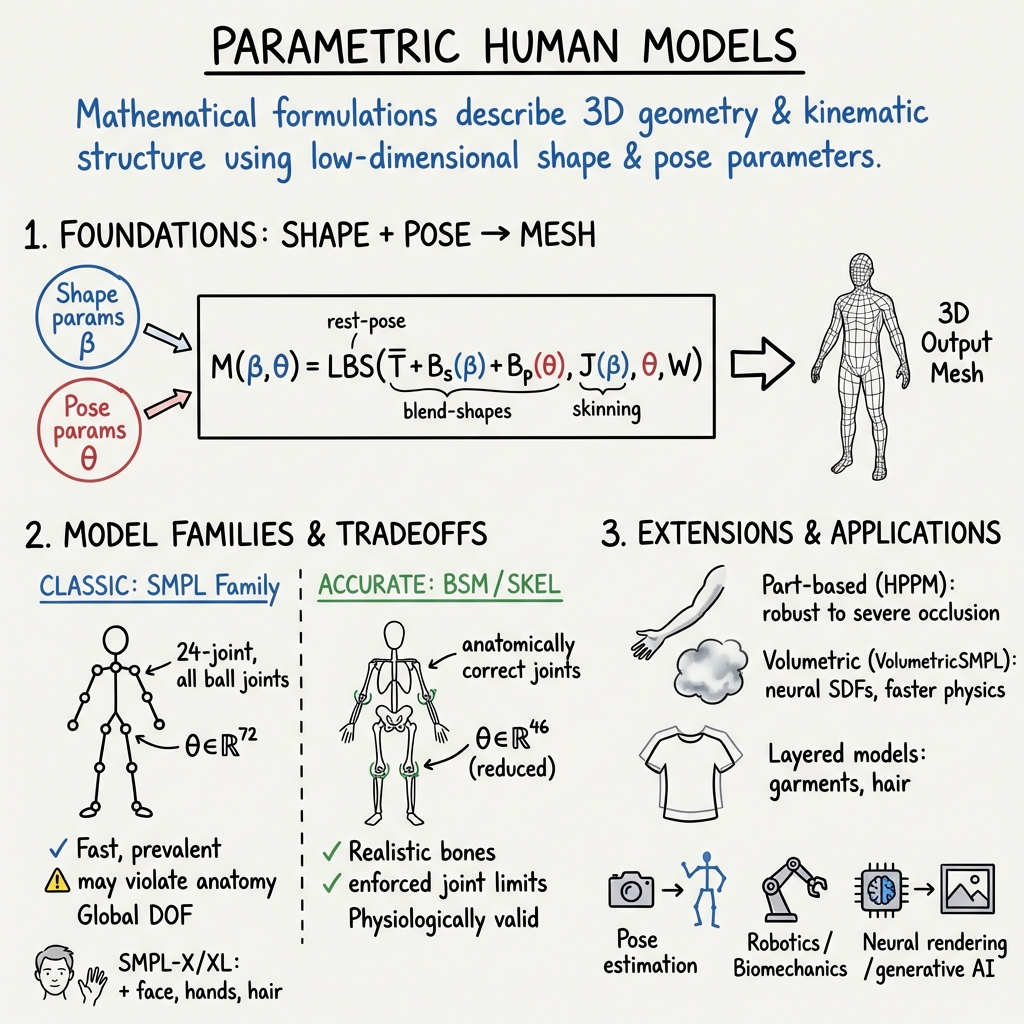

- Parametric human models are mathematical frameworks that use low-dimensional shape and pose parameters to represent 3D human geometry, trading off anatomical realism against computational efficiency.

- They employ techniques like linear blend skinning, joint limit enforcement, and learned optimizers to achieve accurate mesh recovery, realistic pose estimation, and efficient model fitting.

- These models are widely used in computer vision, graphics, biomechanics, and robotics, supporting applications such as neural rendering, animation, and human-environment interactions.

Parametric human models are mathematical formulations that describe the 3D geometry and kinematic structure of the human body using low-dimensional shape and pose parameters. These models are foundational in computer vision, graphics, biomechanics, and robotics for tasks such as mesh recovery, animation, pose estimation, neural rendering, and human-environment interaction. Three major classes of such models are SMPL (Skinned Multi-Person Linear), BSM (Bone Skeleton Model), and the newer SKEL (biomechanically accurate skeletons), each encoding distinct trade-offs between mesh fidelity, anatomical realism, efficiency, and suitability for downstream applications.

1. Mathematical Foundations and Model Families

The SMPL family defines the human body mesh as a function of global shape coefficients $\beta$ (typically a low-dimensional principal-component basis) and pose parameters $\theta$ (joint angles or local rotations for a fixed hierarchy of joints). The body surface is deformed through blend shapes and articulated via linear blend skinning (LBS). SMPL models the body as:

$$M(\beta, \theta) = W\big(T_P(\beta, \theta),\, J(\beta),\, \theta,\, \mathcal{W}\big), \qquad T_P(\beta, \theta) = \bar{T} + B_S(\beta) + B_P(\theta),$$

where $\bar{T}$ is the rest-pose mesh, $B_S$ and $B_P$ are the shape and pose blend-shape bases, $J(\beta)$ computes joint positions, $W(\cdot)$ is the LBS function, and $\mathcal{W}$ are the fixed skinning weights. SMPL-X extends this principle to the face and hands, and SMPL-XL further adds hair and procedural garments using a layered approach (Yang et al., 2023).
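As a minimal sketch of the two SMPL stages described above (additive blend shapes on the template, then linear blend skinning), the plain-Python code below poses a toy two-vertex "mesh". It assumes each bone's 3x4 transform is already expressed relative to the rest pose (in SMPL these come from forward kinematics over the joints $J(\beta)$), omits the pose-corrective term $B_P$, and uses hypothetical helper names and toy data throughout; it is not the official SMPL implementation.

```python
import math

def rot_z(angle):
    """3x3 rotation matrix about the z-axis (angle in radians)."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def make_transform(R, t):
    """Pack a 3x3 rotation R and a translation t into a 3x4 rigid transform [R | t]."""
    return [list(row) + [ti] for row, ti in zip(R, t)]

def rotation_about(R, pivot):
    """Rigid transform rotating by R around a joint centre p: G v = R (v - p) + p."""
    Rp = [sum(R[i][j] * pivot[j] for j in range(3)) for i in range(3)]
    return make_transform(R, [p - rp for p, rp in zip(pivot, Rp)])

def apply_transform(G, v):
    """Apply a 3x4 transform G to a 3D point v."""
    return [sum(G[i][j] * v[j] for j in range(3)) + G[i][3] for i in range(3)]

def add_shape_blendshapes(template, shape_dirs, betas):
    """T(beta) = T_bar + sum_m beta_m * S_m  (pose correctives B_P omitted here)."""
    out = [list(v) for v in template]
    for beta, S in zip(betas, shape_dirs):
        for i, d in enumerate(S):
            out[i] = [a + beta * b for a, b in zip(out[i], d)]
    return out

def linear_blend_skinning(vertices, weights, transforms):
    """v'_i = sum_k w_ik * G_k v_i, with each G_k expressed relative to the rest pose."""
    posed = []
    for v, w in zip(vertices, weights):
        out = [0.0, 0.0, 0.0]
        for w_k, G_k in zip(w, transforms):
            p = apply_transform(G_k, v)
            out = [o + w_k * pk for o, pk in zip(out, p)]
        posed.append(out)
    return posed

# Toy "mesh": two vertices, two bones; bone 1 bends 90 degrees about z at joint (1, 0, 0).
template = [[1.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
shape_dirs = [[[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]]  # one hypothetical "limb length" direction
rest = add_shape_blendshapes(template, shape_dirs, betas=[0.5])
weights = [[1.0, 0.0], [0.5, 0.5]]                 # per-vertex skinning weights, rows sum to 1
transforms = [make_transform(rot_z(0.0), [0.0, 0.0, 0.0]),
              rotation_about(rot_z(math.pi / 2), [1.0, 0.0, 0.0])]
posed = linear_blend_skinning(rest, weights, transforms)
# posed[1] is roughly [1.75, 0.75, 0.0]: halfway between the two rigidly moved copies.
```

Because LBS averages rigidly transformed copies of each vertex, a vertex shared by two bones is pulled inside the bend; this well-known artifact is one reason SMPL adds pose-corrective blend shapes.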

The BSM and SKEL models replace the SMPL kinematic chain with an anatomically accurate skeleton in which each joint's degrees of freedom (DOF) and limits match real human anatomy, and joint centers correspond to physiological articulations. SKEL has only 46 DOF (compared with SMPL's 72 pose parameters), a consequence of mapping OpenSim-based joint types (e.g., 1-DOF hinge knees, ball-and-socket shoulders, anatomically constrained spines and scapulae) onto the mesh (Keller et al., 8 Sep 2025, Xia et al., 27 Mar 2025, Li et al., 25 Nov 2025).
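A bounded, anatomically reduced pose vector can be sketched as a set of named DOFs, each with a [lower, upper] interval. The code below shows two common ways such limits enter a pipeline: a hard projection (clamping) and a soft quadratic penalty usable as a fitting prior. The DOF names and limit values are hypothetical placeholders, not the actual SKEL or BSM tables.

```python
import math

# Hypothetical per-DOF limits in radians; illustrative values, not SKEL's or BSM's tables.
JOINT_LIMITS = {
    "knee_r_flexion": (0.0, math.radians(140.0)),   # 1-DOF hinge: bends one way only
    "elbow_r_flexion": (0.0, math.radians(150.0)),  # 1-DOF hinge
    "shoulder_r_x": (-math.pi / 2, math.pi),        # one of a ball joint's 3 DOFs
}

def clamp_pose(pose):
    """Hard constraint: project every named DOF onto its [lo, hi] interval."""
    out = {}
    for name, value in pose.items():
        lo, hi = JOINT_LIMITS.get(name, (-math.inf, math.inf))
        out[name] = min(max(value, lo), hi)
    return out

def limit_penalty(pose):
    """Soft prior: squared distance outside the limits, zero inside them."""
    total = 0.0
    for name, value in pose.items():
        lo, hi = JOINT_LIMITS.get(name, (-math.inf, math.inf))
        if value < lo:
            total += (lo - value) ** 2
        elif value > hi:
            total += (value - hi) ** 2
    return total

pose = {"knee_r_flexion": -0.3, "elbow_r_flexion": 1.0}  # hyperextended knee
print(clamp_pose(pose))     # knee pushed back to 0.0, elbow unchanged
print(limit_penalty(pose))  # about 0.09, contributed by the knee alone
```

Clamping guarantees a valid pose but has zero gradient outside the range, so a differentiable penalty is often the more convenient choice inside an optimizer; which option a given model uses is not specified here.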

2. Model Parameterization: Shape, Pose, Skeleton, and Beyond

Key parameter spaces and features across models are summarized as follows:

| Model | Shape params | Pose params | Skeleton Type | Surface Rep. | Key Properties |
|---|---|---|---|---|---|
| SMPL | $\beta$ (global PCA) | $\theta \in \mathbb{R}^{72}$ | 24 joints, all ball joints | Mesh (6890 verts) | Fast, prevalent; unconstrained 3-DOF joints may violate anatomy |
| SMPL-X/XL | $\beta$, face, hands | $\theta$ + expression | As SMPL, plus face/hand joints | Layered mesh | Multi-layer (body, face, hands, clothes, hair) |
| BSM/SKEL | $\beta$ | Anatomical joint angles (46 DOF in SKEL), bounded | 19–24 anatomically correct joints | Mesh (SMPL-based) | Realistic bones, enforced joint limits |
| ATLAS/MHR | Skeleton scale + surface shape (decoupled) |  | Decoupled skeleton/surface | Mesh (up to 115K verts) | Explicit skeleton/shape separation, non-linear correctives |
| Anny | Phenotype parameters (gender, age, etc.) |  | 163 bones, full semantics | High-res mesh | Scan-free, semantic control, continuous ages |
| HPPM | Per-part, local; independent PCA subspace per part | Per-part rigid transforms |  | Part meshes | Robust to severe occlusion, 15 part subspaces |

SMPL and its successors use global principal components for shape ($\beta$) and per-joint axis-angle rotations for pose ($\theta$), encoded either as tangent (axis-angle) vectors or as rotation matrices. In SKEL/BSM, the pose vector is reduced to only those joint angles actually permitted by anatomy, each bounded by empirically derived joint limits (Keller et al., 8 Sep 2025, Xia et al., 27 Mar 2025). MHR (Momentum Human Rig) and ATLAS provide fully decoupled control over skeleton length/scale and soft-tissue surface shape, giving greater expressivity and more realistic joint control (Park et al., 21 Aug 2025, Ferguson et al., 19 Nov 2025).
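For an SMPL-style pose vector, each joint's rotation is an axis-angle 3-vector, and converting it to a rotation matrix uses Rodrigues' formula. The sketch below assumes the standard SMPL layout of 72 values (24 joints × 3, root orientation first); the helper names are illustrative. In SKEL-style models the pose is instead a shorter vector of anatomical joint angles, so this per-joint 3-vector split would not apply there.

```python
import math

def axis_angle_to_matrix(r):
    """Rodrigues' formula: R = I + sin(a) K + (1 - cos(a)) K^2, with a = |r| and K = skew(r / a)."""
    angle = math.sqrt(sum(x * x for x in r))
    if angle < 1e-12:  # near-zero rotation: return the identity
        return [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    kx, ky, kz = (x / angle for x in r)
    K = [[0.0, -kz, ky], [kz, 0.0, -kx], [-ky, kx, 0.0]]  # skew-symmetric cross-product matrix
    K2 = [[sum(K[i][m] * K[m][j] for m in range(3)) for j in range(3)] for i in range(3)]
    s, c = math.sin(angle), math.cos(angle)
    return [[(1.0 if i == j else 0.0) + s * K[i][j] + (1.0 - c) * K2[i][j]
             for j in range(3)] for i in range(3)]

def split_smpl_pose(theta):
    """Split a flat 72-vector into 24 axis-angle triplets; index 0 is the global (root) orientation."""
    if len(theta) != 72:
        raise ValueError("expected 72 pose parameters (24 joints x 3)")
    return [theta[3 * j: 3 * j + 3] for j in range(24)]

theta = [0.0] * 72
theta[3 * 4 + 0] = math.pi / 2  # hypothetical: rotate joint 4 by 90 degrees about x
rotations = [axis_angle_to_matrix(r) for r in split_smpl_pose(theta)]
```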

3. Model Fitting, Inference, and Learning Protocols

Fitting parametric human models to data—images, point clouds, or scans—proceeds by optimizing model parameters such that projected mesh joints and silhouette/appearance cues match the observations. Typical fitting involves minimizing reprojection losses, regularization terms (on shape or pose), and, for physically based models, explicit priors enforcing joint limits or skeletal realism (Leroy et al., 2020, Song et al., 2020, Xia et al., 27 Mar 2025).
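The fitting objective described above (a reprojection term plus a regularizer) can be illustrated with a deliberately tiny problem: one hypothetical shape coefficient that moves four 3D joints, a 2D image translation, an orthographic camera, and plain gradient descent with analytic gradients. Real pipelines use perspective cameras, the full body model, robust losses, and pose priors; every name and number here is an assumption for illustration.

```python
def project(p):
    """Orthographic camera (a simplifying assumption): drop depth. Linear, so it also maps directions."""
    return (p[0], p[1])

def model_joints(beta, rest, shape_dir):
    """Toy linear joint model: J(beta) = J_rest + beta * D."""
    return [[r + beta * d for r, d in zip(rj, dj)] for rj, dj in zip(rest, shape_dir)]

def fitting_loss(params, rest, shape_dir, obs2d, lam):
    """Reprojection error over all joints plus an L2 prior lam * beta^2."""
    beta, tx, ty = params
    data = 0.0
    for J, (u, v) in zip(model_joints(beta, rest, shape_dir), obs2d):
        x, y = project(J)
        data += (x + tx - u) ** 2 + (y + ty - v) ** 2
    return data + lam * beta ** 2

def fitting_grad(params, rest, shape_dir, obs2d, lam):
    """Analytic gradient of fitting_loss with respect to (beta, tx, ty)."""
    beta, tx, ty = params
    g_beta, g_tx, g_ty = 2.0 * lam * beta, 0.0, 0.0
    for J, D, (u, v) in zip(model_joints(beta, rest, shape_dir), shape_dir, obs2d):
        x, y = project(J)
        rx, ry = x + tx - u, y + ty - v
        dx, dy = project(D)
        g_beta += 2.0 * (rx * dx + ry * dy)
        g_tx += 2.0 * rx
        g_ty += 2.0 * ry
    return [g_beta, g_tx, g_ty]

def fit(rest, shape_dir, obs2d, lam=0.01, lr=0.05, steps=200):
    """Plain gradient descent from a zero initialisation."""
    params = [0.0, 0.0, 0.0]
    for _ in range(steps):
        g = fitting_grad(params, rest, shape_dir, obs2d, lam)
        params = [p - lr * gi for p, gi in zip(params, g)]
    return params

rest = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 0.0, 0.0], [1.0, 1.0, 0.0]]
shape_dir = [[-1.0, -1.0, 0.0], [-1.0, 1.0, 0.0], [1.0, -1.0, 0.0], [1.0, 1.0, 0.0]]
# Synthetic 2D observations generated with beta = 0.5 and translation (0.2, -0.1).
obs2d = [(r[0] + 0.5 * d[0] + 0.2, r[1] + 0.5 * d[1] - 0.1) for r, d in zip(rest, shape_dir)]
fitted = fit(rest, shape_dir, obs2d)
beta, tx, ty = fitted
```

The regularizer pulls the shape coefficient slightly toward zero (about 0.499 instead of the 0.5 used to generate the data), the usual trade of a little bias for stability.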

Recent architectures use learned optimizers for fast convergence (Song et al., 2020), transformer-based encoder-decoder networks for coarse-to-fine prediction (SKEL-CF (Li et al., 25 Nov 2025)), and probabilistic models for multi-hypothesis recovery and localized uncertainty quantification (Biggs et al., 2020, Sengupta et al., 2021). SKEL fitting also requires pseudo-ground-truth generation by “upgrading” SMPL datasets via joint/bone optimization and anatomical marker correspondences (Xia et al., 27 Mar 2025).

ATLAS and MHR are trained on hundreds of thousands of high-resolution scans, learning both skeletal and surface latent spaces, while Anny uses artist-provided blendshapes and anthropometric priors from global databases (e.g., WHO statistics) for interpretable, demographically grounded shape control (Brégier et al., 5 Nov 2025).

4. Extensions: Part Models, Volumetric SDFs, Neural Meshes, and Clothing

Parametric models have been extended to address limitations in occlusion, clothing detail, and interaction modeling:

- Part-based models (HPPM): Meshes are reconstructed separately for each visible body part, using local PCA and rigid transforms, then seamlessly fused. HPPM achieves sub-millimeter error even when only a few parts are observed, outperforming global models under occlusion (Luan et al., 2024).

- Volumetric models (VolumetricSMPL): The surface mesh is replaced by neural SDF decoders, where each body part’s SDF is blended efficiently via Neural Blend Weights (NBW). This enables order-of-magnitude faster collision/contact computation, efficient self-intersection handling, and real-time volumetric queries (Mihajlovic et al., 29 Jun 2025).

- Clothed and layered models: SMPL+displacements (SMPL+D) and layered parametric models (SMPL-X/SMPL-XL, SynBody) support surface details beyond the naked body, either via per-vertex offsets optimized to fit outer clothing (Bhatnagar et al., 2020, Yang et al., 2023) or explicit multi-layer garments and hair with transferred blendshapes and skinning (Yang et al., 2023, Jiang et al., 2024).

- Skeleton growth and exoskeletons: Modular exoskeleton representations (ToMiE) adaptively extend the SMPL joint tree by learning new external joints, allowing animation and modeling of objects or loosely attached garments beyond the human skin envelope (Zhan et al., 2024).

- Pose/shape control in neural generative frameworks: SMPL and its embedding have been used as control conditions in text-to-image and video diffusion pipelines, yielding improved pose/shape fidelity and animation consistency compared to 2D-pose conditions (Buchheim et al., 2024, Zhu et al., 2024).
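The part-based idea in the first bullet of the list above can be sketched as per-part local PCA followed by a rigid placement of each part, reconstructing only the parts that are observed. This is a hypothetical simplification: the part names, two-vertex "meshes", and data layout are made up, and the fusion step that stitches the parts together is omitted.

```python
def reconstruct_part(mean, basis, coeffs):
    """Local PCA in the part's own frame: V = mean + sum_m c_m * B_m."""
    verts = [list(v) for v in mean]
    for c, B in zip(coeffs, basis):
        for i, b in enumerate(B):
            verts[i] = [x + c * y for x, y in zip(verts[i], b)]
    return verts

def rigid_transform(verts, R, t):
    """Place part vertices in the world frame: x' = R x + t."""
    return [[sum(R[i][j] * v[j] for j in range(3)) + t[i] for i in range(3)] for v in verts]

def reconstruct_visible_parts(part_models, observations):
    """Rebuild only observed parts; occluded parts are simply skipped."""
    parts = {}
    for name, obs in observations.items():
        mean, basis = part_models[name]
        local = reconstruct_part(mean, basis, obs["coeffs"])
        parts[name] = rigid_transform(local, obs["R"], obs["t"])
    return parts

I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
part_models = {  # hypothetical (mean, basis) per part
    "forearm_r": ([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]], [[[0.0, 0.0, 0.0], [0.5, 0.0, 0.0]]]),
    "hand_r": ([[0.0, 0.0, 0.0], [0.2, 0.0, 0.0]], [[[0.0, 0.0, 0.0], [0.1, 0.0, 0.0]]]),
}
# Only the forearm is visible; the hand is occluded and therefore not reconstructed.
observations = {"forearm_r": {"coeffs": [0.2], "R": I3, "t": [0.0, 1.0, 0.0]}}
parts = reconstruct_visible_parts(part_models, observations)
```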

5. Empirical Results and Benchmarks

A variety of benchmarks have established SMPL/SMPL-X as the dominant representation for human mesh recovery and pose estimation. SMPL-based predictors achieve MPJPE (mean per-joint position error) in the range 41.6–59.9 mm (Human3.6M, 3DPW) (Biggs et al., 2020), but may fail under occlusions or extreme poses. SKEL and BSM models, by enforcing biomechanically realistic constraints and joint limits, reduce error and eliminate physiologically impossible joint rotations in challenging datasets—showing 18.6–24.5% improvement over prior SKEL methods and comparable (or better) accuracy to SMPL in standard settings, with greatly reduced occurrence of anatomical violations (Xia et al., 27 Mar 2025, Li et al., 25 Nov 2025).
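MPJPE, the metric quoted above, is the mean Euclidean distance between predicted and ground-truth 3D joints. A minimal sketch follows; the optional root alignment reflects a commonly used protocol, but the exact alignment (root-relative, Procrustes-aligned, etc.) varies by benchmark and paper, so the function name and options here are illustrative.

```python
import math

def mpjpe(pred, gt, root_index=None):
    """Mean per-joint position error over all joints and frames.

    pred, gt: lists of frames, each a list of (x, y, z) joints (e.g. in mm).
    If root_index is given, both skeletons are translated so that joint sits at the origin.
    """
    total, count = 0.0, 0
    for p_frame, g_frame in zip(pred, gt):
        if root_index is not None:
            pr, gr = p_frame[root_index], g_frame[root_index]
            p_frame = [[a - b for a, b in zip(j, pr)] for j in p_frame]
            g_frame = [[a - b for a, b in zip(j, gr)] for j in g_frame]
        for pj, gj in zip(p_frame, g_frame):
            total += math.dist(pj, gj)  # Euclidean distance (Python 3.8+)
            count += 1
    return total / count

pred = [[[0.0, 0.0, 0.0], [30.0, 40.0, 0.0]]]  # one frame, two joints (mm)
gt = [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
print(mpjpe(pred, gt))  # 25.0
```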

VolumetricSMPL achieves 10× faster volumetric inference and 6× lower GPU memory usage than COAP, a prior volumetric occupancy model, at equal or higher accuracy. HPPM reports sub-millimeter per-vertex fitting error and robust recovery in partially visible settings (Luan et al., 2024). Anny, despite using only artist-curated and anthropometric blendshapes, attains 2–3 mm scan-fitting accuracy and outperforms SMPL-X on children and in consistency across ages (Brégier et al., 5 Nov 2025).

6. Anatomical Realism, Control, and Limitations

SMPL’s simplified kinematic tree (24 ball joints) is computationally efficient but not anatomically correct, which leads to physically implausible poses (e.g., knees and elbows violating normal ranges by more than 15% in up to 60% of frames under certain conditions (Xia et al., 27 Mar 2025, Keller et al., 8 Sep 2025)). SKEL/BSM strictly enforce per-joint limits (SKEL uses 46 DOF), enabling real-world simulation, biomechanics, and physically valid animation (Xia et al., 27 Mar 2025, Li et al., 25 Nov 2025). ATLAS and MHR further decouple skeleton and soft tissue, allowing user-specified control over bone lengths and shape. Part-based and exoskeleton models (HPPM, ToMiE) enable animation of garments and hand-held objects, substantially extending the applicability of parametric frameworks (Zhan et al., 2024, Luan et al., 2024).
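A joint-limit violation rate like the one cited above can be computed per frame. The sketch assumes a definition in which a joint counts as violating when it leaves its range by more than 15% of the range width; the cited works may define the threshold differently, and the joint name and limits here are hypothetical.

```python
def exceeds_limit(angle, lo, hi, tolerance=0.15):
    """True if angle lies outside [lo, hi] by more than tolerance * (hi - lo)."""
    margin = tolerance * (hi - lo)
    return angle < lo - margin or angle > hi + margin

def violation_rate(frames, limits, tolerance=0.15):
    """Fraction of frames in which at least one monitored joint exceeds its limit."""
    bad = sum(1 for frame in frames
              if any(exceeds_limit(frame[name], lo, hi, tolerance)
                     for name, (lo, hi) in limits.items()))
    return bad / len(frames)

limits = {"knee_r": (0.0, 140.0)}  # hypothetical flexion range in degrees
frames = [{"knee_r": 10.0}, {"knee_r": -30.0}, {"knee_r": 150.0}, {"knee_r": 170.0}]
print(violation_rate(frames, limits))  # 0.5: frames at -30 and 170 exceed the 21-degree margin
```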

The main limitations across all methods are reconstruction under severe occlusion, ambiguity in viewpoint or depth, and, in generative frameworks, spurious artifacts introduced when text or 2D-pose conditions contradict the 3D shape/pose specification (Buchheim et al., 2024). For fully realistic clothing, a two-stage deformation (first fitting SMPL, then adding per-vertex offsets or layered garments) is currently required.
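The second stage of the two-stage clothing approach can be sketched as solving for per-vertex offsets D on top of the fitted body T. Assuming known one-to-one correspondences to target (clothed) points S and a simple ridge penalty λ‖D‖², the objective decouples per vertex and has the closed form D_i = (S_i − T_i)/(1 + λ). Actual SMPL+D pipelines may instead use scan-to-mesh distances and smoothness terms, so this is a simplified illustration with hypothetical names.

```python
def fit_offsets(body_verts, target_verts, lam=0.1):
    """Per-vertex offsets minimising |T_i + D_i - S_i|^2 + lam * |D_i|^2 (closed form)."""
    return [[(s - t) / (1.0 + lam) for t, s in zip(tv, sv)]
            for tv, sv in zip(body_verts, target_verts)]

def apply_offsets(body_verts, offsets):
    """Clothed surface: T + D."""
    return [[t + d for t, d in zip(tv, dv)] for tv, dv in zip(body_verts, offsets)]

body = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]      # fitted body vertices (stage 1)
target = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.22]]   # corresponding clothed points
offsets = fit_offsets(body, target, lam=0.1)
clothed = apply_offsets(body, offsets)          # offsets shrink toward 0 as lam grows
```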

7. Applications and Ongoing Development

Parametric human models underpin a broad spectrum of applications:

- Pose and shape recovery from images/videos: SMPL, SMPL-X, and BSM/SKEL are the staple representations in monocular and multi-view mesh regression, animation, and motion tracking (Leroy et al., 2020, Xia et al., 27 Mar 2025, Li et al., 25 Nov 2025).

- Neural rendering and generative modeling: Integration of SMPL control as diffusion model conditioning (with domain-adaptation) provides high-fidelity, physically plausible samples for human image and video generation (Buchheim et al., 2024, Zhu et al., 2024).

- Biomechanics, AR/VR, robotics: The adoption of biomechanically-accurate skeletons (SKEL, BSM) and modular rigs (MHR, ATLAS) enables simulation, physically valid animation, and scalable synthesis/configuration of avatars for immersive environments (Park et al., 21 Aug 2025, Ferguson et al., 19 Nov 2025).

- Synthetic data, benchmarking, and domain transfer: SynBody, SMPLX-Lite, and related datasets, featuring layered meshes, high-fidelity textures, and demographic/phenotypic diversity, allow for robust training, benchmarking, and fair evaluation across a broad range of pose, shape, and environmental conditions (Yang et al., 2023, Jiang et al., 2024, Brégier et al., 5 Nov 2025).

Future trends include the unification of mesh, volumetric, and part-based methods; systematic biomechanical validation; and richer, semantically grounded, demographically representative shape spaces for universal and interpretable human modeling.