AI Tackles Research-Level Math Autonomously

This presentation explores Aletheia, a mathematics research agent that autonomously solved six out of ten research-level problems from the FirstProof challenge. Using the Gemini 3 Deep Think model with strict autonomy constraints and no human mathematical input, Aletheia demonstrates a reliability-first approach: abstaining from problems rather than generating incorrect proofs. The talk examines the system's problem-solving protocol, evaluation standards, inference cost patterns, and implications for autonomous mathematical reasoning.

Script

Can an AI system prove mathematical theorems at research level, completely on its own, without any human hints or guidance? That's the audacious challenge Aletheia set out to tackle with the FirstProof benchmark.

Let's start by understanding what makes FirstProof so demanding.

The FirstProof challenge presents 10 research-level problems that demand proofs meeting publishable standards. The key constraint? Absolute autonomy—no human can inject mathematical ideas during the solving process.

Now, how does Aletheia approach these formidable problems?

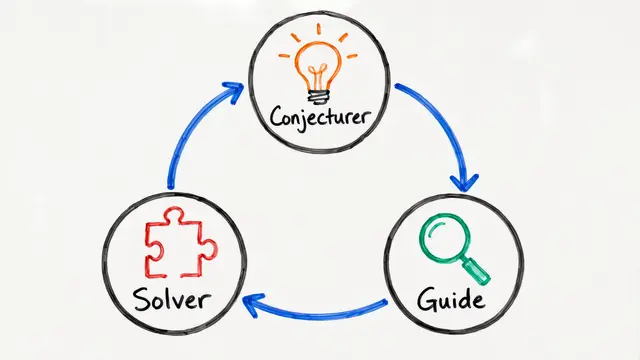

Aletheia centers on Gemini 3 Deep Think with a critical design choice: it would rather return no solution than provide an incorrect proof. This reliability-first philosophy, combined with automated verification and a best-of-2 selection protocol, forms the backbone of its approach.
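
To make the abstain-or-answer idea concrete, here is a minimal Python sketch of a generate, verify, and select loop. It is an illustration only, not Aletheia's actual implementation: the function name `solve_or_abstain`, the `generate` and `verify` callables, the numeric verifier score, and the `threshold` value are all assumptions introduced for this example.

```python
from typing import Callable, Optional


def solve_or_abstain(
    problem: str,
    generate: Callable[[str], str],        # hypothetical: returns one candidate proof
    verify: Callable[[str, str], float],   # hypothetical: returns a confidence score in [0, 1]
    n_candidates: int = 2,                 # best-of-2, as mentioned in the talk
    threshold: float = 0.9,                # assumed acceptance cutoff, not from the source
) -> Optional[str]:
    """Return the highest-scoring candidate proof, or None to abstain."""
    candidates = [generate(problem) for _ in range(n_candidates)]
    scored = [(verify(problem, proof), proof) for proof in candidates]
    best_score, best_proof = max(scored, key=lambda pair: pair[0])
    # Reliability first: abstain unless the best candidate clears the bar.
    return best_proof if best_score >= threshold else None
```

Returning `None` when no candidate clears the threshold is what separates this from plain best-of-n sampling, which always returns its top candidate even when every candidate is weak.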

So what did Aletheia actually achieve?

Aletheia solved 6 problems with expert consensus confirming correctness, while deliberately abstaining on the remaining 4. For Problem 8, a majority of experts found the proof sound despite some under-detailed arguments—consistent with minor revision feedback in standard peer review.

The computational resources Aletheia consumed tell us something about problem difficulty. Problem 7 demanded an order of magnitude more inference than baseline cases, reflecting how much harder that problem was for both the generator and the verifier.

Human evaluators applied the same standard used in academic peer review, and here's the crucial finding: no expert identified fundamental mathematical errors in the top-rated outputs. Critiques focused on details and presentation, not correctness.

These results carry real implications for autonomous mathematical reasoning. Aletheia demonstrates that state-of-the-art language models, when equipped with robust verification scaffolds, are approaching practical utility for research-level mathematics, though reliability and problem specification remain active challenges.

Aletheia proves that AI can tackle research mathematics autonomously, not by solving everything, but by knowing when to abstain and ensuring every solution it offers meets rigorous standards. Visit EmergentMind.com to explore more cutting-edge AI research.