Towards Autonomous Mathematics Research

This presentation explores work on AI agents that conduct mathematical research autonomously. We examine the Aletheia agent, which combines advanced reasoning models with verification scaffolding and tool use to tackle professional research-level mathematics. The talk covers the agent's architecture, empirical results on both competition and PhD-level problems, documented successes in generating publishable research, and critical limitations including hallucination and interpretation failures. We conclude with a proposed taxonomy for evaluating AI autonomy in mathematics and implications for human-AI collaboration in research.

Can artificial intelligence autonomously discover and prove new mathematical theorems at the professional research level? This question drives a fundamental shift from contest problems to the frontiers of mathematical research.

Building on that challenge, the authors identify critical obstacles that prevent language models from making the leap to research mathematics. Training data at the research level is scarce, leading to hallucinations and shallow topic understanding, while professional mathematics demands sophisticated literature synthesis and verification procedures.

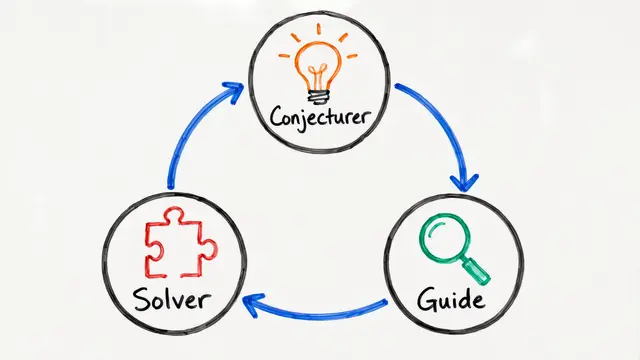

The researchers introduce Aletheia, an agentic architecture designed specifically to address these challenges.

Aletheia's architecture decouples generation from verification, enabling the agent to recognize and fix flaws that escape initial solution attempts. This scaffolding, combined with intensive tool use, marks a departure from pure inference-time scaling.
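The generate-then-verify pattern described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not Aletheia's implementation: the names `generate_verify_revise`, `generate`, `verify`, `revise`, and `max_rounds` are hypothetical. The sketch assumes the verifier returns a list of detected flaws (empty means accepted). The toy integer problem stands in for a real proof attempt.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Generic, Optional, TypeVar

P = TypeVar("P")  # problem type
C = TypeVar("C")  # candidate-solution type


@dataclass
class Attempt(Generic[C]):
    candidate: C
    flaws: list[str] = field(default_factory=list)


def generate_verify_revise(
    problem: P,
    generate: Callable[[P], C],
    verify: Callable[[P, C], list[str]],
    revise: Callable[[P, C, list[str]], C],
    max_rounds: int = 5,
) -> tuple[Optional[C], list[Attempt[C]]]:
    """Hypothetical loop that keeps generation and verification separate.

    Returns the first candidate the verifier accepts, or None (abstain)
    if no candidate passes within max_rounds.
    """
    history: list[Attempt[C]] = []
    candidate = generate(problem)
    for _ in range(max_rounds):
        flaws = verify(problem, candidate)  # independent check of the draft
        history.append(Attempt(candidate, flaws))
        if not flaws:
            return candidate, history
        candidate = revise(problem, candidate, flaws)  # address reported flaws
    return None, history


# Toy stand-in: find n with n * n == target by drafting and correcting.
def toy_generate(target: int) -> int:
    return 10  # deliberately flawed first draft


def toy_verify(target: int, n: int) -> list[str]:
    if n * n == target:
        return []
    direction = "too small" if n * n < target else "too large"
    return [f"{n}^2 = {n * n} is {direction}"]


def toy_revise(target: int, n: int, flaws: list[str]) -> int:
    return n + 1 if "too small" in flaws[0] else n - 1


answer, history = generate_verify_revise(144, toy_generate, toy_verify, toy_revise)
```

Here the first two drafts are rejected with explicit flaw reports before 12 is accepted. The design point is that the checker runs as its own step on the finished candidate rather than trusting the generator. Returning `None` after `max_rounds` is an assumption of this sketch, not a documented Aletheia behavior.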

Empirically, Aletheia performs strongly on competition mathematics, reaching 95.1% accuracy on advanced Olympiad benchmarks. On PhD-level problems, however, accuracy plateaus and answer rates drop below 60%, revealing that research mathematics demands more than raw reasoning power.
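To see why a low answer rate matters separately from accuracy, the two can be computed side by side. This is a hedged sketch: the talk does not define how these metrics are scored. The sketch assumes "answer rate" means the fraction of problems where the agent commits to an answer, and that accuracy can be measured either over answered problems or over all problems. The function name `summarize` and the counts are hypothetical.

```python
from typing import Optional, Sequence


def summarize(results: Sequence[Optional[bool]]) -> dict[str, float]:
    """Each entry: True = correct, False = incorrect, None = no answer given."""
    total = len(results)
    answered = [r for r in results if r is not None]
    n_correct = sum(1 for r in answered if r)
    return {
        "answer_rate": len(answered) / total,
        # Accuracy restricted to problems the agent actually answered.
        "accuracy_when_answered": n_correct / len(answered) if answered else 0.0,
        # Accuracy counting unanswered problems as misses.
        "overall_accuracy": n_correct / total,
    }


# Illustrative counts only, not figures from the paper:
# 100 problems, 44 correct, 11 incorrect, 45 left unanswered.
results = [True] * 44 + [False] * 11 + [None] * 45
stats = summarize(results)
```

With these made-up numbers the answer rate is 0.55, accuracy on answered problems is 0.8, and accuracy over all problems is 0.44. A system can look reliable on what it answers while leaving many problems untouched.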

These benchmarks set the stage for the most provocative claim: genuine autonomous mathematical discoveries.

Aletheia autonomously produced a publishable research paper applying algebraic combinatorics in ways that surprised domain experts. In collaborative settings, the agent contributed strategic ideas while humans provided rigorous technical details, and it resolved 4 open problems from a large-scale evaluation of Erdős conjectures.

Despite these successes, the failure modes are striking. The agent frequently misinterprets open-ended problems, often settling on the easiest interpretation, and it hallucinates or misrepresents references even with tool support. Most autonomous results remain brief and elementary, driven by technical manipulation rather than mathematical creativity.

To address ambiguous public messaging about AI results, the authors propose a formal taxonomy quantifying both AI autonomy and mathematical significance, paired with interaction cards that expose raw prompts and outputs. This framework helps the community evaluate claims accurately and distinguish publishable contributions from landmark breakthroughs.
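One way to picture a two-axis rating attached to a raw transcript is as a small record type. This is a sketch under loud assumptions: the level names and their number below are hypothetical placeholders, not the paper's taxonomy, and `InteractionCard` with its fields is an illustrative structure rather than the authors' card format.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels (names and count are assumptions)."""
    HUMAN_LED = 0
    COLLABORATIVE = 1
    ESSENTIALLY_AUTONOMOUS = 2


class Significance(IntEnum):
    """Hypothetical significance levels (names and count are assumptions)."""
    NEGLIGIBLE = 0
    PUBLISHABLE = 1
    MAJOR_ADVANCE = 2
    LANDMARK_BREAKTHROUGH = 3


@dataclass(frozen=True)
class InteractionCard:
    """Hypothetical record pairing a result's rating with its raw transcript."""
    result: str
    prompt: str        # raw prompt given to the agent
    raw_output: str    # unedited agent output
    autonomy: Autonomy
    significance: Significance

    def rating(self) -> str:
        # Report both axes together so neither is quoted without the other.
        return f"autonomy={self.autonomy.name}, significance={self.significance.name}"


card = InteractionCard(
    result="example result",  # placeholder text
    prompt="<raw prompt>",
    raw_output="<raw output>",
    autonomy=Autonomy.ESSENTIALLY_AUTONOMOUS,
    significance=Significance.PUBLISHABLE,
)
```

Keeping the axes separate means a result can sit at the top of the autonomy scale while rating only "publishable" on significance. A single headline score would blur exactly the distinction the taxonomy is meant to preserve.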

Aletheia demonstrates that AI agents can meaningfully augment mathematical research, offering breadth, computational stamina, and cross-field synthesis, though true creativity remains an open frontier. To explore the full paper and dive deeper into autonomous mathematics research, visit EmergentMind.com.