KLong: Training LLM Agent for Extremely Long-horizon Tasks

This presentation introduces KLong, an approach to training large language model agents for extremely long-horizon tasks that span hundreds to thousands of interactions. The talk explores how the Research-Factory pipeline automates data generation, how trajectory-splitting supervised fine-tuning overcomes context limitations, and how progressive reinforcement learning enables agents to master tasks requiring hours of sustained reasoning. With results surpassing models 10 times larger on research paper replication and multiple coding benchmarks, KLong demonstrates that intelligent decomposition and progressive training can unlock AI capabilities for complex, multi-stage scientific and engineering workflows.

Script

Imagine asking an AI agent to replicate a machine learning research paper from scratch, working for hours through code, experiments, and iterations. Most language models collapse under tasks this long. This paper introduces KLong, a system that trains agents to handle workflows spanning thousands of interactions.

Building on that vision, let's examine why extremely long-horizon tasks have remained out of reach.

The researchers identified three critical barriers. Tasks like replicating a research paper demand sustained reasoning over hundreds of tool calls. The full interaction history simply overflows traditional context windows. And reinforcement learning struggles when the reward signal arrives only after hours of interaction.

To tackle this, the authors built an automated data generation system.

The Research-Factory pipeline systematically generates training data at scale. It retrieves accepted papers from major conferences, filters them for quality and impact, converts PDFs to standardized Markdown, and, critically, constructs detailed evaluation rubrics by analyzing both the papers and their reference implementations. An anti-cheating mechanism blacklists official repositories to prevent simple copying. This infrastructure produces thousands of high-quality trajectories for training.
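The filtering and anti-cheating steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Research-Factory implementation: the `Paper` record, the citation-count stand-in for "quality and impact", the `MIN_CITATIONS` threshold, and the URL-prefix matching in `is_blocked` are all hypothetical, and the PDF-to-Markdown and rubric-construction stages are omitted.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Paper:
    """Hypothetical record for one retrieved conference paper."""
    title: str
    venue: str
    accepted: bool
    citations: int       # stand-in impact signal (assumption)
    official_repo: str   # URL of the authors' reference implementation


# Hypothetical impact threshold; the real quality/impact criteria are not given here.
MIN_CITATIONS = 10


def filter_papers(papers, venues):
    """Keep accepted papers from the target venues that pass the impact threshold."""
    return [
        p for p in papers
        if p.accepted and p.venue in venues and p.citations >= MIN_CITATIONS
    ]


def _normalize(url):
    """Reduce a URL to a lowercase (host, path-without-trailing-slash) pair."""
    parsed = urlparse(url)
    return parsed.netloc.lower(), parsed.path.rstrip("/").lower()


def build_blacklist(papers):
    """Collect each paper's official repository so the agent cannot copy from it."""
    return {_normalize(p.official_repo) for p in papers}


def is_blocked(url, blacklist):
    """True if `url` is a blacklisted repository or any path inside one."""
    host, path = _normalize(url)
    return any(
        host == b_host and (path == b_path or path.startswith(b_path + "/"))
        for b_host, b_path in blacklist
    )
```

In this sketch, a fetch tool in the agent's sandbox would call `is_blocked` before every request; matching on path prefixes rather than exact URLs also catches links to individual files inside a blacklisted repository, while a similarly named but different repository is left reachable.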

With data in hand, the team developed a two-stage training approach that breaks conventional limits.

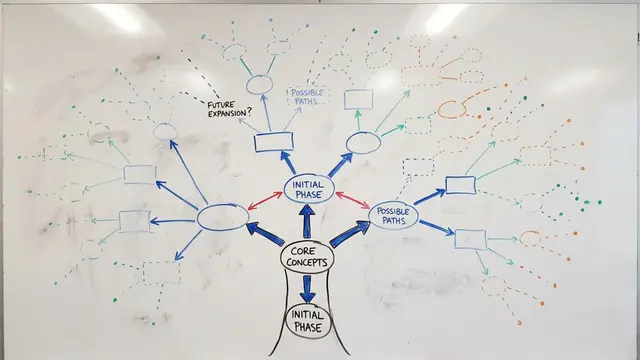

Trajectory-splitting supervised fine-tuning solves the context problem by slicing extremely long interactions into manageable pieces while preserving global task awareness. This enables the model to learn from interactions that would otherwise exceed its limits. Progressive reinforcement learning then extends the agent's capabilities in stages, gradually increasing task complexity from 2-hour to 6-hour timeouts, allowing stable adaptation to sparse, delayed reward structures.
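Both ideas can be sketched in Python under explicit assumptions. Here a trajectory is assumed to be a list of turns, and "global task awareness" is assumed to mean pinning the task prompt at the start of every chunk, with a few preceding turns repeated as context-only history. The `Turn` type, `split_trajectory`, the whitespace `count_tokens` stand-in, the `overlap_turns` parameter, and the linearly spaced stage count in `progressive_timeouts` are hypothetical choices for illustration, not details taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    role: str   # "user", "assistant", or "tool"
    text: str


def count_tokens(text):
    # Stand-in tokenizer (whitespace split); a real pipeline would use the model's tokenizer.
    return len(text.split())


def split_trajectory(task_prompt, turns, max_tokens, overlap_turns=2):
    """Split a long trajectory into chunks that each fit within max_tokens.

    Every chunk begins with the task prompt, then up to `overlap_turns` turns
    repeated from before the chunk as context. Each chunk is a list of
    (Turn, train) pairs; train=False marks the prompt and repeated turns, so
    every original turn is marked trainable in exactly one chunk.
    """
    prompt = Turn("user", task_prompt)
    budget = max_tokens - count_tokens(task_prompt)
    if budget <= 0:
        raise ValueError("task prompt alone exceeds max_tokens")
    chunks, start = [], 0
    while start < len(turns):
        ctx_start = max(0, start - overlap_turns)
        # Drop the oldest context turns if they leave no room for the next new turn.
        while ctx_start < start and (
            sum(count_tokens(t.text) for t in turns[ctx_start:start])
            + count_tokens(turns[start].text) > budget
        ):
            ctx_start += 1
        used = sum(count_tokens(t.text) for t in turns[ctx_start:start])
        end = start
        # Greedily add new (trainable) turns while they fit in the budget.
        while end < len(turns) and used + count_tokens(turns[end].text) <= budget:
            used += count_tokens(turns[end].text)
            end += 1
        if end == start:
            raise ValueError(f"turn {start} does not fit in the token budget")
        chunk = [(prompt, False)]
        chunk += [(t, False) for t in turns[ctx_start:start]]
        chunk += [(t, True) for t in turns[start:end]]
        chunks.append(chunk)
        start = end
    return chunks


def progressive_timeouts(start_hours=2.0, end_hours=6.0, num_stages=3):
    """Per-stage rollout timeouts in hours, linearly spaced (stage count is an assumption)."""
    if num_stages == 1:
        return [end_hours]
    step = (end_hours - start_hours) / (num_stages - 1)
    return [start_hours + i * step for i in range(num_stages)]
```

With this layout, splitting does not double-count any turn in the training loss: repeated turns only supply local context. For the curriculum, `progressive_timeouts()` returns `[2.0, 4.0, 6.0]`, i.e. three hypothetical RL stages spanning the 2-hour to 6-hour range, each of which would run rollouts under its timeout before moving on.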

The ablation study isolates each component's impact. Baseline performance with standard fine-tuning is limited. Adding trajectory-splitting gains 17 points. Progressive reinforcement learning adds another 7 points at the longest horizon. Notice how both assistant turns and runtime increase systematically, confirming the agent learns to sustain longer, more complex workflows.

KLong's results are striking. At just 106 billion parameters, it outperforms models 10 times larger on research replication benchmarks. It also generalizes strongly to other long-horizon coding challenges, including software engineering, security analysis, and machine learning competitions. The performance gap with proprietary models narrows substantially, and on specific tasks, KLong establishes new open-source state-of-the-art results.

This work opens pathways to AI-driven research replication, large-scale engineering automation, and complex multi-stage scientific tasks. It demonstrates that intelligent decomposition and progressive training can overcome apparent architectural limits. However, challenges remain, including potential information loss from splitting, resource requirements, and reward signal accuracy. Future work may explore hierarchical memory and hybrid human feedback approaches.

KLong proves that extremely long-horizon tasks are within reach when we match training methods to task structure. Visit EmergentMind.com to explore the full paper and discover how this approach is reshaping what language model agents can accomplish.