Self-Adapting Language Models

This presentation explores SEAL, a groundbreaking framework that enables language models to teach themselves. Instead of relying on manually curated training data, SEAL allows models to generate their own finetuning instructions and training examples through reinforcement learning. We'll examine how this approach transforms model adaptation across knowledge incorporation and few-shot learning tasks, demonstrating that models can learn to optimize their own learning process with impressive results that sometimes surpass traditional methods using far larger auxiliary systems.Script

What if a language model could write its own study guide, decide what to practice, and update itself accordingly? That's the radical premise behind Self-Adapting Language Models, a framework where models take control of their own learning process.

Building on that idea, the authors identify a fundamental gap in how models learn. While humans naturally rewrite and restructure information to understand it better, language models passively consume whatever training data we provide, regardless of whether that format is actually optimal for learning.

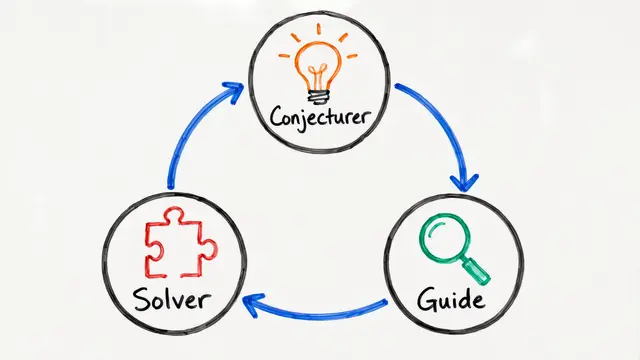

SEAL transforms this dynamic by giving models agency over their own adaptation process.

Here's the mechanism at work. The framework operates through nested loops: an outer reinforcement learning process optimizes how the model generates self-edits, while an inner loop applies those edits through gradient descent, essentially teaching the model to teach itself.

The researchers validated SEAL across two distinct domains. For knowledge incorporation, the model learns to restructure factual passages into implications that optimize retention. In few-shot learning, it masters the more complex challenge of generating both synthetic training examples and the optimization settings needed to solve abstract reasoning puzzles.

The results are striking. On abstract reasoning tasks, SEAL achieved 72.5% success where traditional in-context learning completely failed, and on knowledge incorporation, this 7 billion parameter model actually outperformed using synthetic data generated by the far larger GPT-4.1.

However, the framework faces important challenges that constrain its current applicability.

The primary limitation is catastrophic forgetting—as the model continues learning, earlier knowledge degrades. Additionally, the reinforcement learning loop for test-time training is computationally expensive, creating practical barriers to deployment at scale.

This research arrives at a critical moment. With projections suggesting we'll run out of human-generated text for training by 2028, SEAL points toward a future where models generate their own learning materials, potentially bootstrapping increasingly capable systems through iterative self-improvement.

Self-adapting language models represent a fundamental shift from passive learning to active self-optimization, where the boundary between learner and teacher dissolves. Visit EmergentMind.com to explore more cutting-edge research pushing the frontiers of artificial intelligence.