- The paper introduces a reduced U-Net architecture with a recurrent extension that achieves near-human performance in dense honeybee tracking.

- It leverages high-resolution imaging and temporal context to lower orientation error from 34° to 15°, enhancing detection accuracy in occluded environments.

- The approach facilitates baseline trajectory tracking and offers a scalable framework for automated behavioral analysis in complex, densely populated systems.

Dense Object Tracking in a 2D Honeybee Hive: A Convolutional and Recurrent Segmentation Approach

Introduction and Context

Dense object tracking poses persistent challenges in computational ethology, surveillance, and cellular imaging, particularly where objects are highly similar, occluded, and densely distributed in a two-dimensional scene. This work addresses these challenges by tracking honeybees within a 2D hive, a setting characterized by occlusions, self-similarity, and a visually complex background. Traditional object detection frameworks, whether based on sliding windows, region proposals, or marker-based systems, fail to scale in this regime, primarily because unique markers are impractical, the labeling effort is substantial, and the number of possible object configurations grows combinatorially.

The paper introduces a method built on a fully convolutional segmentation architecture (U-Net), extended with instance orientation estimation and temporal regularization, and trained on a substantial, newly labeled dataset of honeybee hives. The approach avoids proposal-driven architectures and instead learns to assign instance labels and orientations directly at the pixel level. The work further demonstrates near-human performance in per-frame detection and orientation estimation, and offers a foundation for longitudinal tracking via simple trajectory matching.

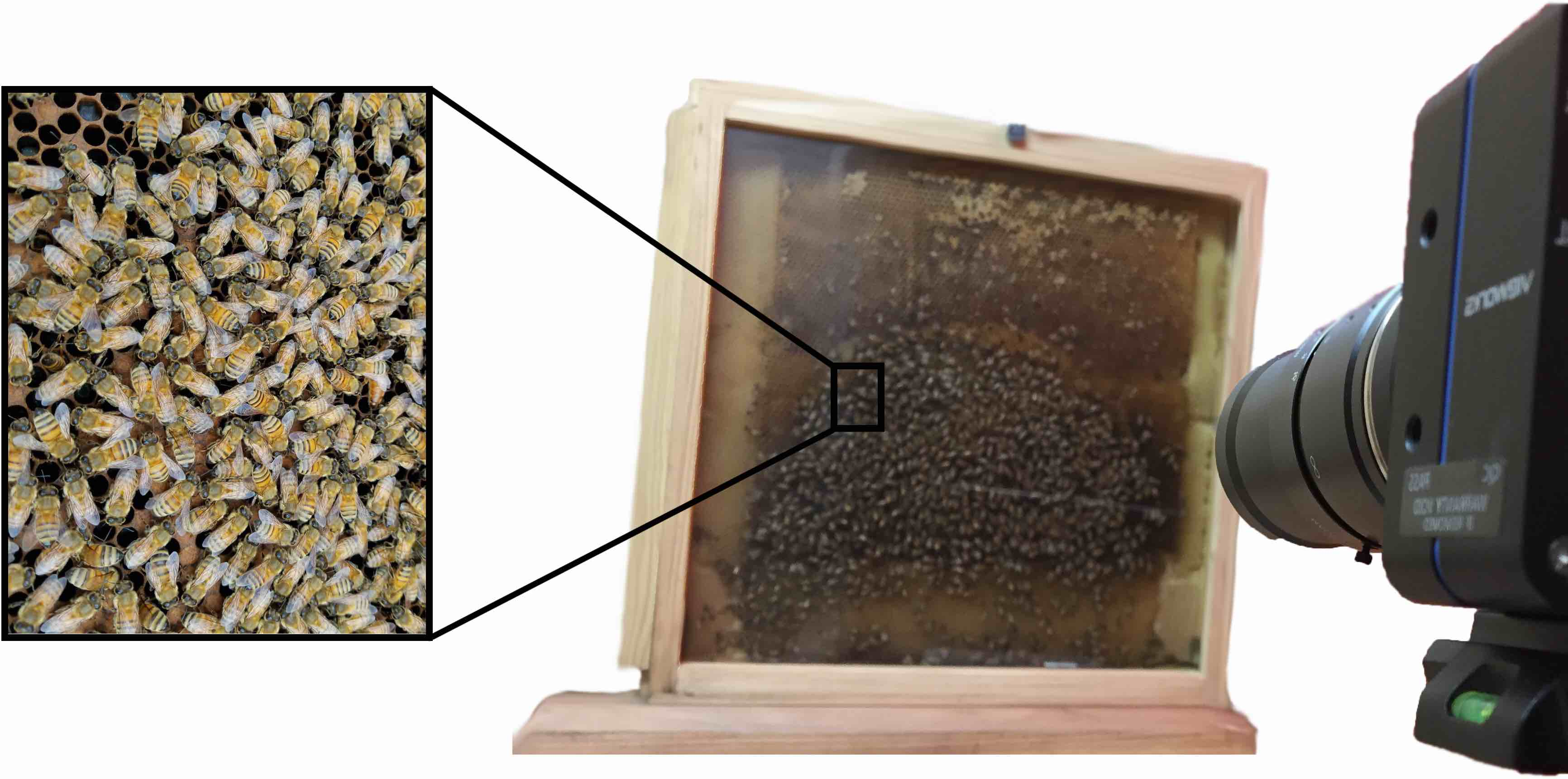

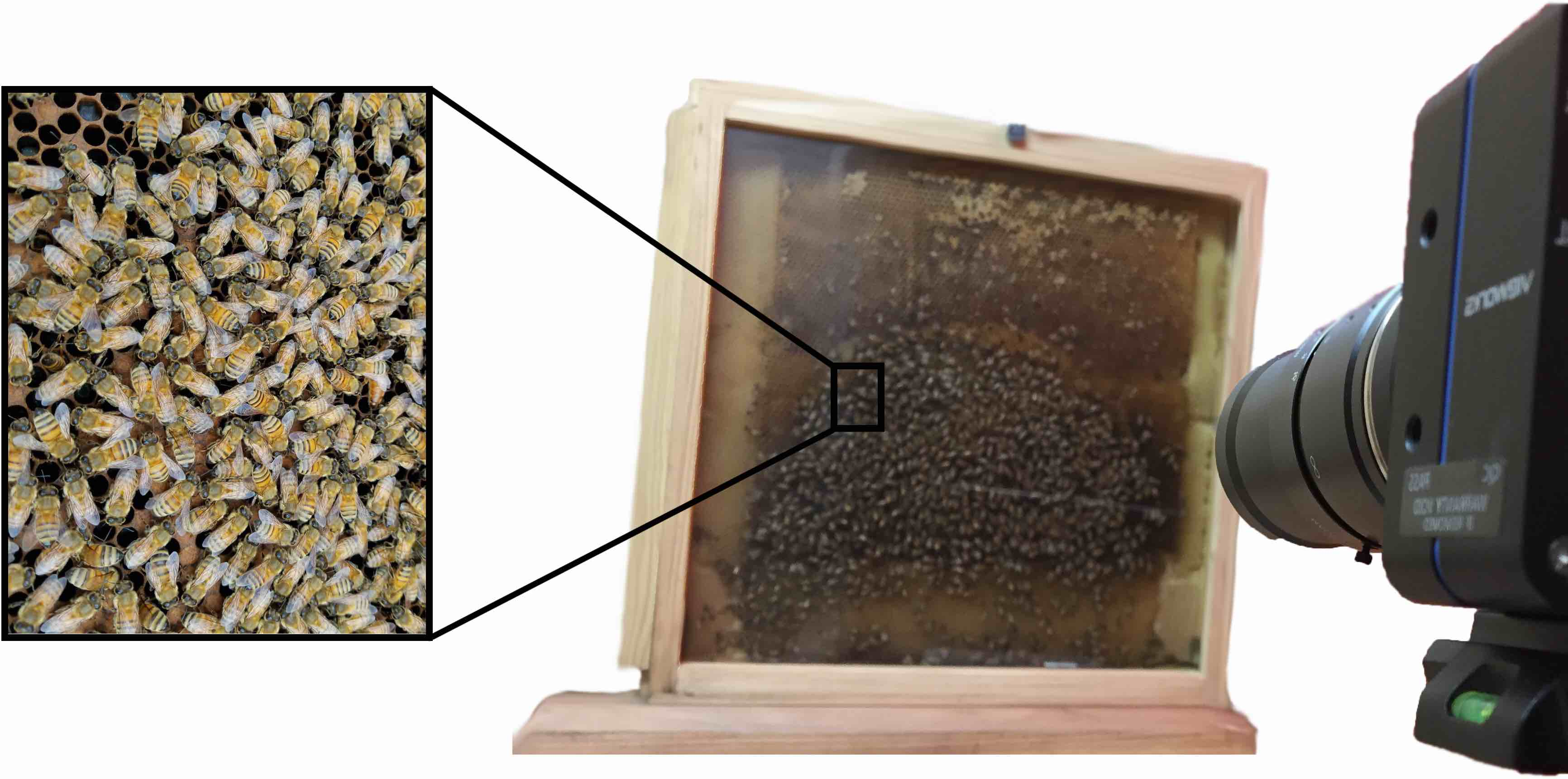

Figure 1: Observation beehive and imaging arrangement showing the two-dimensional imaging of a densely packed colony.

Data Acquisition and Labeling Pipeline

The system employs high-resolution (25MP, 5120x5120 pixels) grayscale imaging of a laboratory-maintained 2D hive with hundreds to thousands of honeybees on an artificial comb under infrared illumination, providing both favorable imaging conditions and natural animal behavior.

Labeling is performed through a custom JavaScript interface deployed on Amazon Mechanical Turk, in which annotators provide a tuple (x, y, t, α) per bee: position (x, y), type t (fully visible, or only the abdomen visible in a cell), and body-axis orientation α. This process yields over 375,000 labeled bee instances across 720 frames, a dataset large and diverse enough to train and evaluate dense instance segmentation and orientation estimation models.
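As a rough illustration of the per-bee annotation described above, the record could be represented as follows. This is a minimal sketch: the class name `BeeLabel`, the type codes, the use of radians, and the helper `labels_per_frame` are all assumptions made for illustration and are not taken from the paper.

```python
from dataclasses import dataclass

# Hypothetical type codes; the text only says a bee is either fully
# visible or has just its abdomen visible inside a cell.
FULL_BODY = 0
ABDOMEN_ONLY = 1


@dataclass(frozen=True)
class BeeLabel:
    x: float      # horizontal position in pixels
    y: float      # vertical position in pixels
    t: int        # bee type: FULL_BODY or ABDOMEN_ONLY
    alpha: float  # body-axis orientation (radians assumed here)

    def __post_init__(self):
        # Reject type codes outside the two categories named in the text.
        if self.t not in (FULL_BODY, ABDOMEN_ONLY):
            raise ValueError(f"unknown bee type: {self.t}")


def labels_per_frame(labels_by_frame):
    """Average number of labeled bees per frame.

    labels_by_frame maps a frame index to a list of BeeLabel records.
    """
    total = sum(len(labels) for labels in labels_by_frame.values())
    return total / len(labels_by_frame)
```

With the figures quoted above, 375,000 labels over 720 frames works out to about 520 labels per frame on average, which is consistent with a hive holding hundreds to thousands of bees.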

Network Architecture and Training Modifications

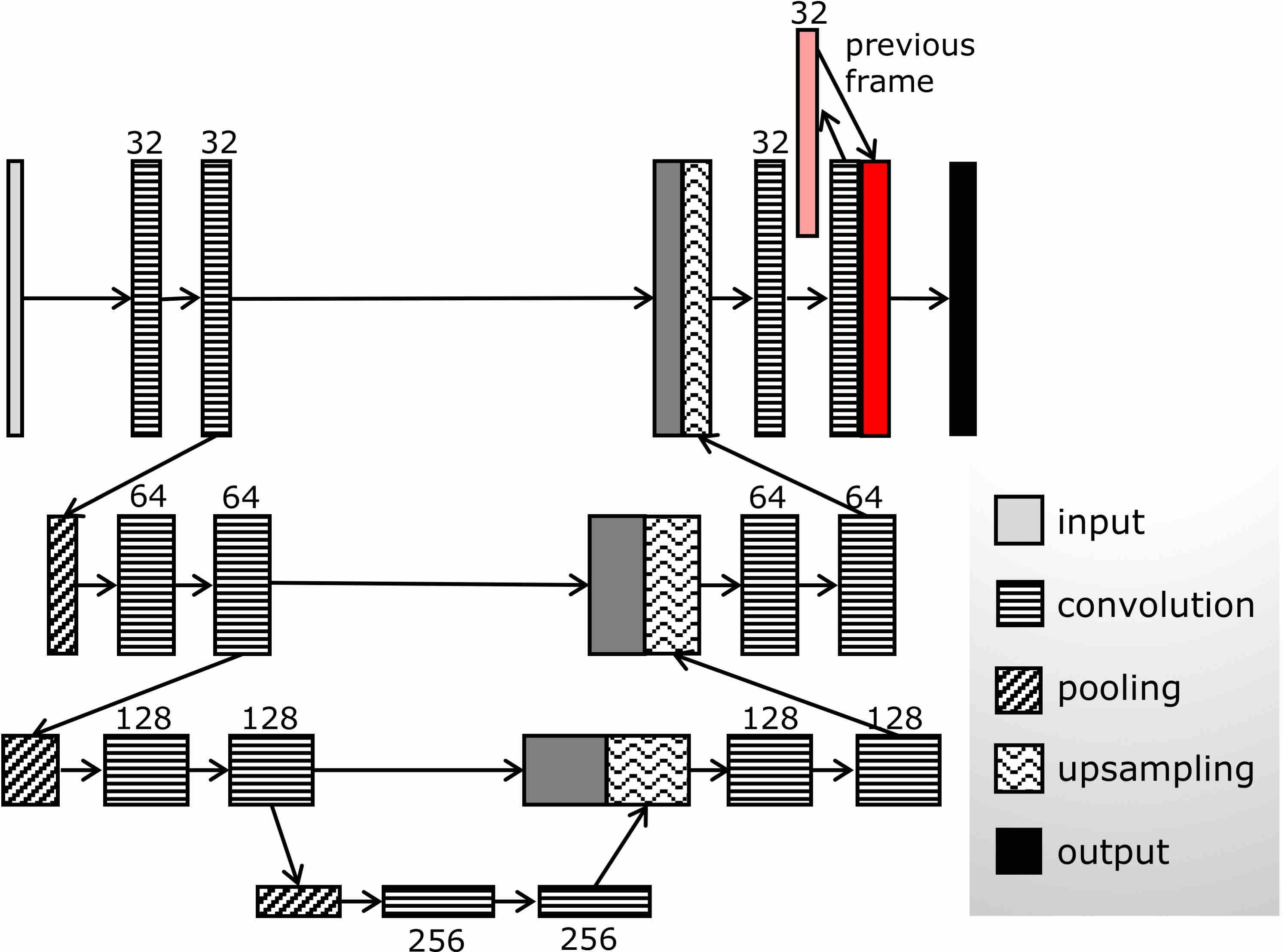

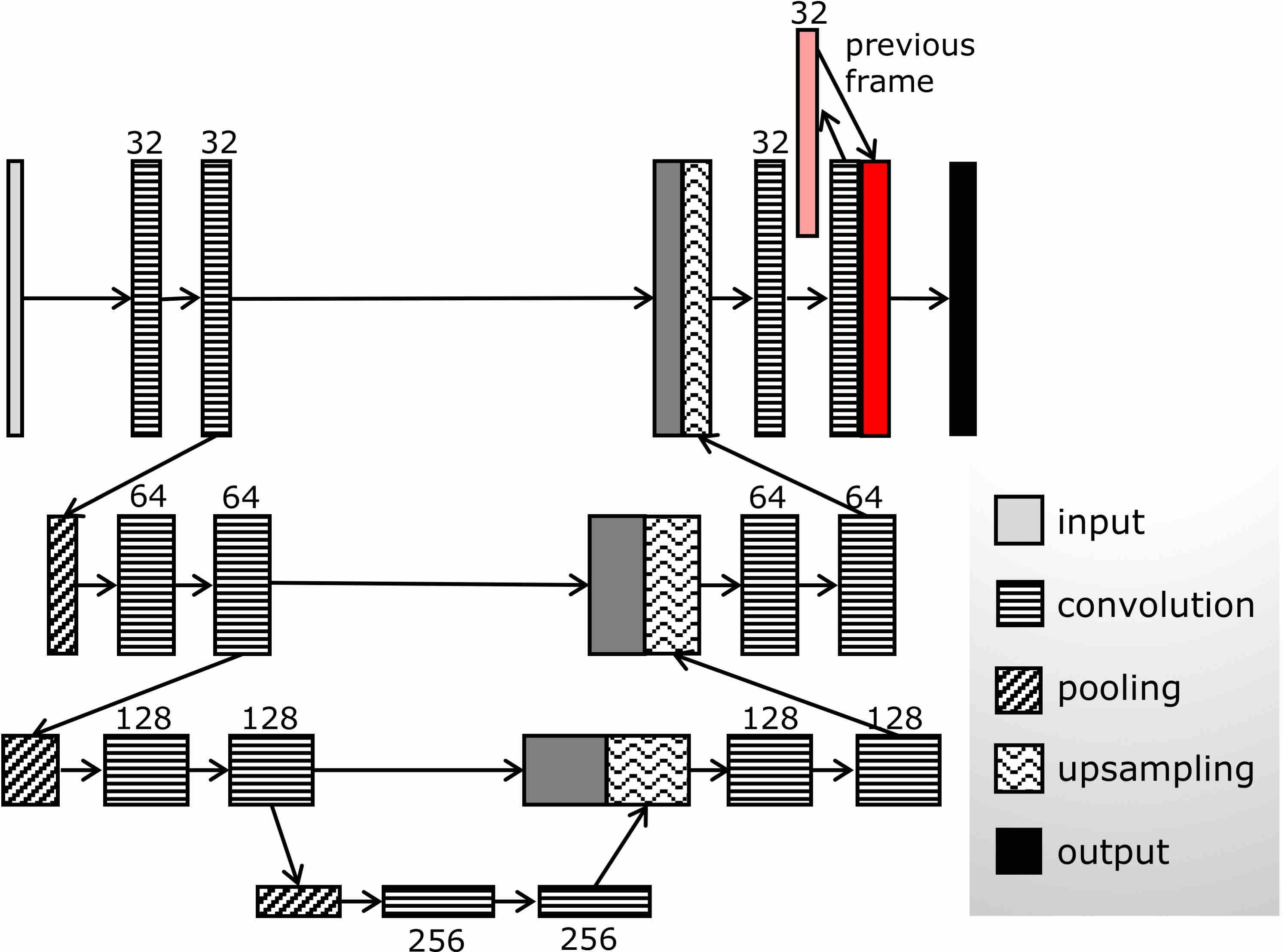

The core segmentation model is an adapted U-Net architecture, substantially pruned to ~1.9M parameters (compared to 31M in standard U-Net) by reducing channel widths and removing one encoder-decoder stage. This reduction is empirically necessitated by pronounced overfitting when using the default architecture in this regime. The architecture is further extended with a recurrent mechanism: before the final prediction layer, the representation from the penultimate layer at time t is concatenated with that from time t+1, integrating temporal context through implicit recurrence.

Figure 2: Network architecture—reduced-filter U-Net with added recurrent connections at the penultimate layer to leverage temporal cues.
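The temporal concatenation can be pictured for a single pixel as follows. This is a simplified sketch, not the authors' implementation: it treats the final prediction layer as a per-pixel linear map followed by a softmax, and it assumes that the features preceding the first frame are zeros. The function names and the weight layout are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of class scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_pixel(features_now, features_prev, weights, bias):
    """Apply the final layer to [previous ; current] penultimate features.

    weights has one row per class, each of length 2 * C, where C is the
    number of penultimate channels.
    """
    stacked = list(features_prev) + list(features_now)
    logits = [
        sum(w * f for w, f in zip(row, stacked)) + b
        for row, b in zip(weights, bias)
    ]
    return softmax(logits)


def run_sequence(feature_seq, weights, bias):
    """Class probabilities for one pixel across consecutive frames."""
    channels = len(feature_seq[0])
    prev = [0.0] * channels  # assumption: no temporal context before frame 0
    outputs = []
    for feats in feature_seq:
        outputs.append(predict_pixel(feats, prev, weights, bias))
        prev = feats  # carried forward to the next time step
    return outputs
```

The point of the sketch is that identical current-frame features can yield different predictions depending on what the previous frame contained, which is how the temporal context enters the output.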

Training uses a class-balanced loss (3-class softmax for segmentation) to handle the substantial imbalance between background and bee classes, with per-pixel weights set according to the relative frequency of background and bee-region pixels. For orientation estimation, the loss penalizes sin((α−α̂)/2), where α is the labeled angle and α̂ the predicted angle, explicitly training the network for angular precision. The foreground segmentation distinguishes full-body bees from "abdomen-only" bees, and an approximate body axis is inferred via PCA over the elliptical label regions. Early stopping is adopted rather than dropout or weight decay, because these standard regularizers prove ineffective against the residual overfitting in a network without dense fully connected layers.
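The two loss ingredients can be sketched in a few lines. Several details here are assumptions rather than statements about the paper: the absolute value around the sine (so the penalty is non-negative and periodic in 2π), the inverse-frequency form of the class weights, and all function names.

```python
import math


def orientation_loss(alpha_true, alpha_pred):
    """|sin((alpha - alpha_hat) / 2)| for angles in radians.

    The value is 0 when the angles agree and 1 when they point in
    opposite directions.
    """
    return abs(math.sin((alpha_true - alpha_pred) / 2.0))


def inverse_frequency_weights(pixel_counts):
    """Class weights inversely proportional to pixel frequency.

    The weights are rescaled so that their mean is 1. Inverse-frequency
    weighting is an assumption made for this sketch.
    """
    total = sum(pixel_counts)
    raw = [total / count for count in pixel_counts]
    mean = sum(raw) / len(raw)
    return [r / mean for r in raw]


def weighted_cross_entropy(probs, target, weights):
    """Class-weighted negative log-likelihood for a single pixel."""
    return -weights[target] * math.log(probs[target])
```

Because the argument of the sine is halved, the penalty grows monotonically with the angular difference all the way up to 180°, so a head-tail flip is penalized more than any smaller error.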

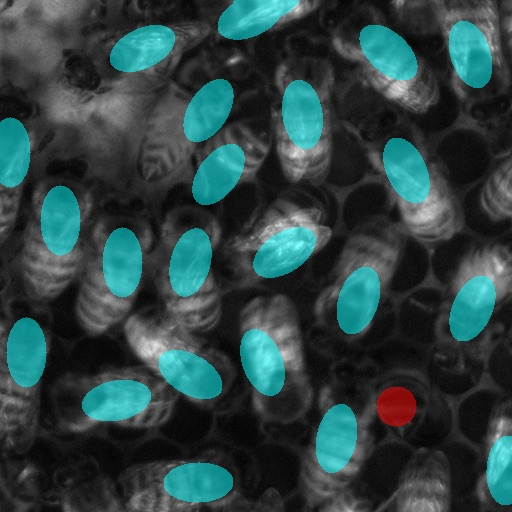

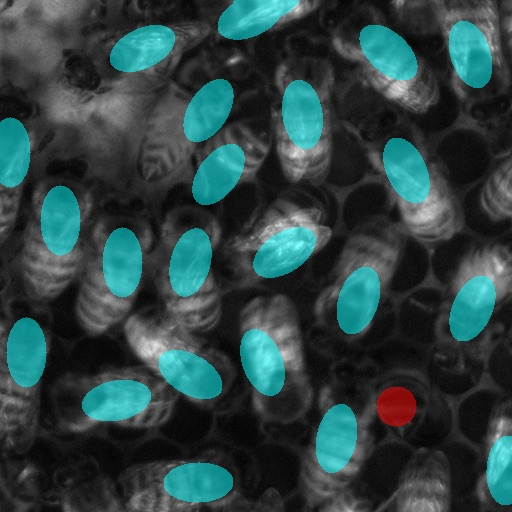

The model’s precision is rigorously benchmarked against human annotators with repeated labeling on held-out subsets. Detection accuracy (TPR) reaches 96%, with a location error of ~6 pixels (for bee bodies ~70 pixels wide), and median orientation error for the main body axis of 13°. Human labelers exhibit average disagreement of ~7 pixels in position and 7.7° in axis angle, indicating the model is approaching inter-rater variability.
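Metrics of this kind depend on how angular differences are wrapped and how predictions are paired with labels. The sketch below shows one plausible way to compute them; the greedy pairing rule, the distance threshold, and the function names are assumptions, since the matching criterion is not specified here.

```python
import math
from statistics import median


def directed_error_deg(a, b):
    """Smallest absolute difference between two directed angles, in [0, 180]."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def axis_error_deg(a, b):
    """Difference between two undirected body axes, in [0, 90].

    Head and tail are not distinguished, so 10 and 190 degrees coincide.
    """
    d = abs(a - b) % 180.0
    return min(d, 180.0 - d)


def median_axis_error(pairs):
    """Median axis error over (labeled, predicted) angle pairs in degrees."""
    return median(axis_error_deg(a, b) for a, b in pairs)


def true_positive_rate(pred, truth, max_dist):
    """Fraction of labeled points with an unused prediction within max_dist.

    Each prediction can be claimed by at most one label (greedy pairing).
    """
    unused = list(pred)
    hits = 0
    for tx, ty in truth:
        best = None
        for i, (px, py) in enumerate(unused):
            dist = math.hypot(px - tx, py - ty)
            if dist <= max_dist and (best is None or dist < best[0]):
                best = (dist, i)
        if best is not None:
            hits += 1
            unused.pop(best[1])
    return hits / len(truth)
```

The distinction between the directed and the axis error matters when comparing numbers: an axis error ignores head-tail flips, whereas a directed error counts a flip as a 180° mistake.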

Boundary artifacts remain an issue: false positives concentrate at patch edges, where bees are only partially visible. A practical remedy for future implementations is to run inference on overlapping sliding windows, or to post-process detections near patch borders, so that edge artifacts are eliminated.
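One way to realize the overlapping-window idea is to tile each image axis with overlapping patches and let every patch "own" only the part of the image away from its borders, discarding detections outside that part. The following sketch handles one axis and would be applied to x and y independently; the tile size and overlap are hypothetical parameters, not values from the paper.

```python
def tile_origins(image_size, tile_size, overlap):
    """Start offsets of overlapping tiles covering [0, image_size) on one axis."""
    if not 0 <= overlap < tile_size:
        raise ValueError("overlap must be in [0, tile_size)")
    if tile_size >= image_size:
        return [0]
    stride = tile_size - overlap
    origins = list(range(0, image_size - tile_size, stride))
    origins.append(image_size - tile_size)  # last tile sits flush with the edge
    return origins


def ownership_intervals(origins, tile_size, image_size):
    """Half-open interval owned by each tile along one axis.

    Each boundary is placed midway through the overlap of two neighbouring
    tiles, so every pixel belongs to exactly one tile and lies away from
    that tile's borders wherever a neighbour exists.
    """
    cuts = [0]
    for prev, cur in zip(origins, origins[1:]):
        cuts.append((prev + tile_size + cur) // 2)
    cuts.append(image_size)
    return list(zip(cuts[:-1], cuts[1:]))


def keep_detection(coord, interval):
    """True if a detection coordinate falls inside the tile's owned interval."""
    lo, hi = interval
    return lo <= coord < hi
```

For example, `tile_origins(960, 512, 64)` gives tiles starting at 0 and 448, and the owned intervals are `(0, 480)` and `(480, 960)`, so a detection at x = 500 found by the first tile, only 12 pixels from its right border, is dropped in favour of the second tile's prediction.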

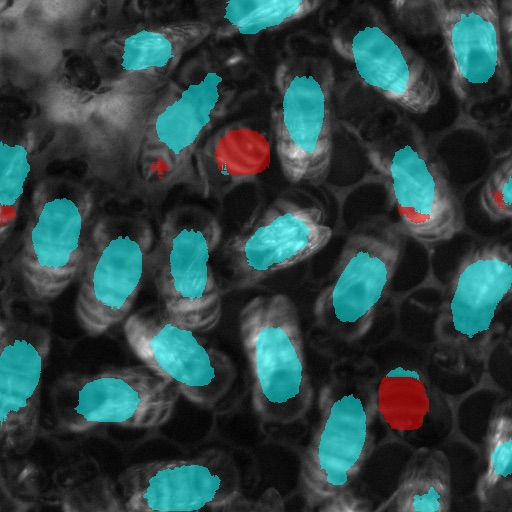

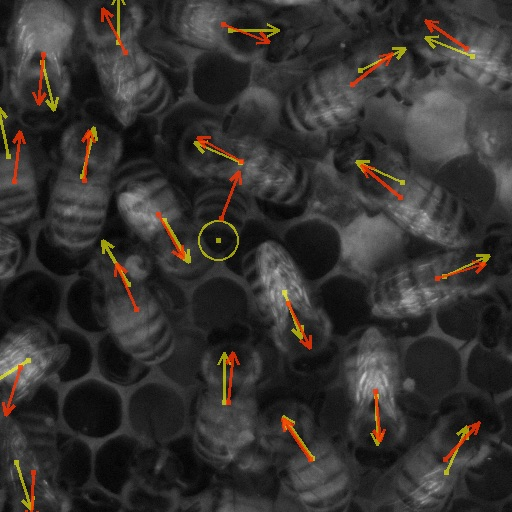

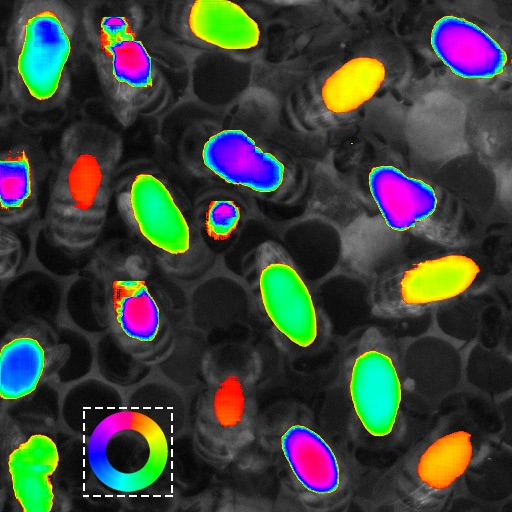

Segmentation labeling provides robust cues for centroid-based localization and axis estimation, while the extended orientation estimation network predicts a directed angle per instance. The best recurrent version achieves a 12° orientation error, close to the variability among human raters for this task.
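Centroid-based localization and PCA-based axis estimation can be illustrated on the pixel coordinates of a single segmented region. This is a minimal sketch using the closed-form principal direction of a 2×2 covariance matrix; the function name and the input format (a list of (x, y) pixel coordinates) are assumptions.

```python
import math


def centroid_and_axis(pixels):
    """Centroid and principal-axis angle of a pixel region.

    pixels is a non-empty list of (x, y) coordinates. The returned angle
    is in radians, in the interval (-pi/2, pi/2], measured from the x axis.
    It describes an undirected axis: head and tail are not distinguished.
    """
    n = len(pixels)
    cx = sum(x for x, _ in pixels) / n
    cy = sum(y for _, y in pixels) / n
    sxx = sum((x - cx) ** 2 for x, _ in pixels) / n
    syy = sum((y - cy) ** 2 for _, y in pixels) / n
    sxy = sum((x - cx) * (y - cy) for x, y in pixels) / n
    # Direction of the leading eigenvector of [[sxx, sxy], [sxy, syy]].
    theta = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    return (cx, cy), theta
```

In image coordinates the y axis usually points downward, so the sign of the angle is mirrored relative to the usual mathematical convention. Resolving which end of the axis is the head requires the separate directed-angle prediction.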

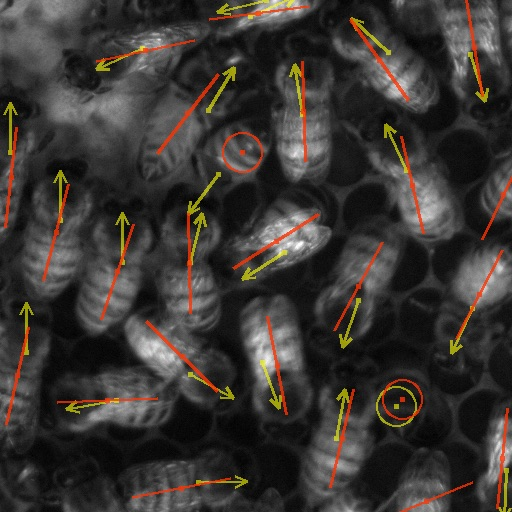

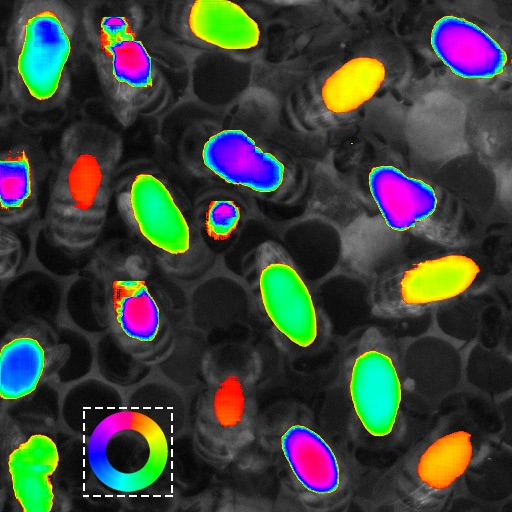

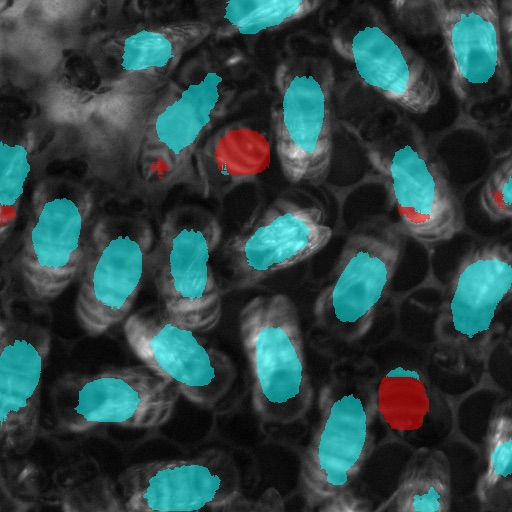

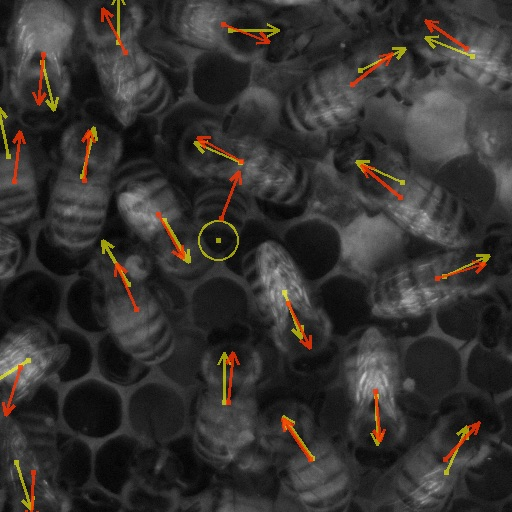

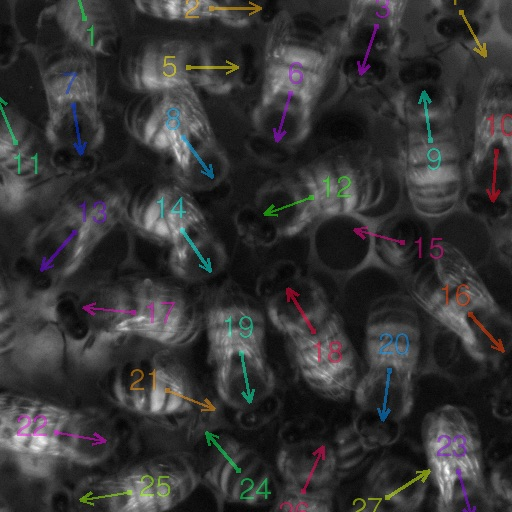

Figure 3: Example segmentation outputs; blue—predicted bees, red—predicted abdomens, and yellow—comparison with human labels.

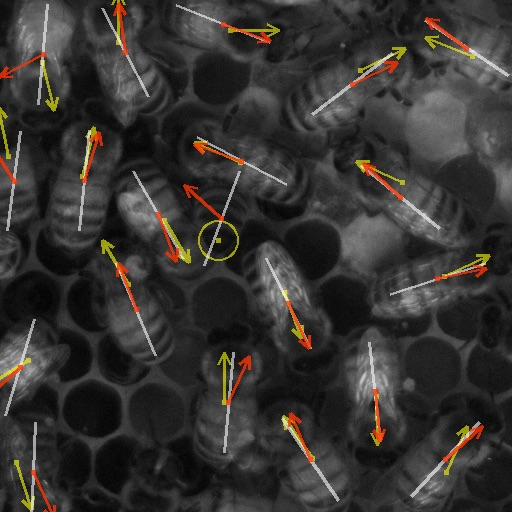

Orientation estimation benefits significantly from the inclusion of temporal context; error is reduced from 34° (non-recurrent) to 15° (recurrent) for orientation angle and similarly for body axis direction, strongly supporting the utility of cross-frame temporal regularization even in simple, shallow recurrence schemes. The orientation heatmaps and directed arrows demonstrate visual congruence between model predictions and manual ground truth.

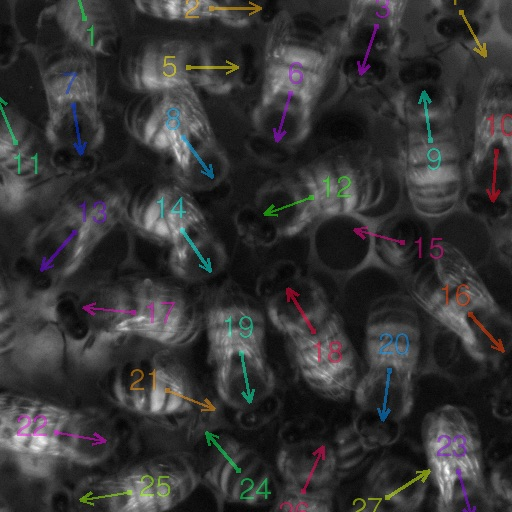

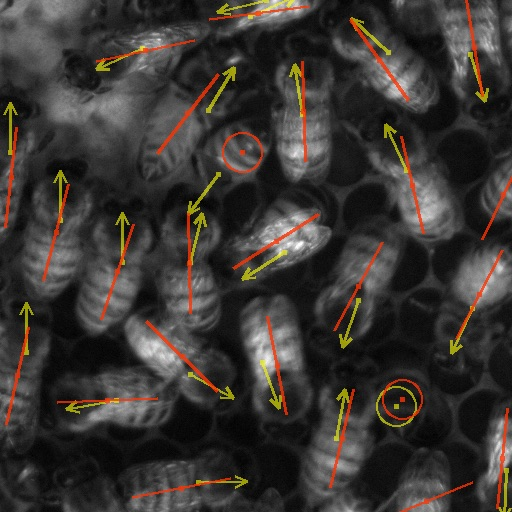

Figure 4: Per-instance body axis and orientation estimation, visualized against human annotation across several representative frames.

Towards Individual Trajectory Tracking

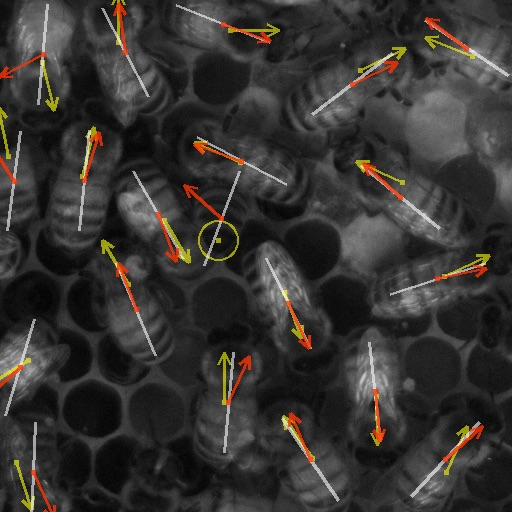

The model's outputs feed a simple tracking-by-detection pipeline that matches objects in adjacent frames by proximity and orientation. This remains a baseline approach: it lacks stateful tracking components such as Kalman filters, Hungarian matching, or appearance descriptors. It nonetheless demonstrates viability, since trajectories reconstructed over 36-frame sequences frequently align with intuitive expectations.

Figure 5: Trajectory reconstruction based on recurrent detection output and nearest-neighbor matching.
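A baseline of this kind can be sketched as greedy nearest-neighbor matching between consecutive frames, with a cost that combines distance and orientation difference. The cost function, the distance gate `max_dist`, the weight `angle_weight`, and the function names are all assumptions made for illustration; the exact matching rule used by the authors is not given here.

```python
import math


def angle_diff(a, b):
    """Smallest absolute difference between two angles in radians."""
    d = abs(a - b) % (2 * math.pi)
    return min(d, 2 * math.pi - d)


def match_frames(prev, cur, max_dist=50.0, angle_weight=10.0):
    """Greedy one-to-one matching of detections in adjacent frames.

    prev and cur are lists of (x, y, alpha). Returns {prev_index: cur_index}.
    """
    candidates = []
    for i, (px, py, pa) in enumerate(prev):
        for j, (cx, cy, ca) in enumerate(cur):
            dist = math.hypot(cx - px, cy - py)
            if dist <= max_dist:  # ignore implausibly long jumps
                cost = dist + angle_weight * angle_diff(pa, ca)
                candidates.append((cost, i, j))
    candidates.sort()
    matches, used_prev, used_cur = {}, set(), set()
    for _, i, j in candidates:
        if i not in used_prev and j not in used_cur:
            matches[i] = j
            used_prev.add(i)
            used_cur.add(j)
    return matches


def build_tracks(frames, **kwargs):
    """Chain frame-to-frame matches into tracks of (frame_index, det_index).

    frames must contain at least one frame. Unmatched detections start a
    new track; unmatched tracks simply end.
    """
    tracks = [[(0, j)] for j in range(len(frames[0]))]
    active = {j: j for j in range(len(frames[0]))}  # detection -> track index
    for t in range(1, len(frames)):
        matches = match_frames(frames[t - 1], frames[t], **kwargs)
        new_active = {}
        for i, j in matches.items():
            tracks[active[i]].append((t, j))
            new_active[j] = active[i]
        for j in range(len(frames[t])):
            if j not in new_active:
                tracks.append([(t, j)])
                new_active[j] = len(tracks) - 1
        active = new_active
    return tracks
```

Greedy matching keeps the sketch short. In dense regions it can make locally optimal but globally poor assignments, which is one reason the text points to Hungarian matching and motion models as natural extensions.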

No ground-truth identity correspondence exists for these trajectories, so quantitative trajectory accuracy cannot be directly established, but qualitative results are promising. The approach can be readily extended: incorporating appearance features, predictive motion models, and explicit identity assignment frameworks would further improve multi-object tracking in this dense, occlusion-heavy environment.

Implications and Future Directions

This work contributes a dense, real-world, biologically relevant dataset and modeling techniques for high-fidelity, per-frame detection and orientation estimation in environments where classical detection methods are infeasible. The demonstrated performance, which approaches the consistency of human annotators, suggests the method is mature enough to underpin future large-scale behavioral studies, replacing labor-intensive manual observation with automated, repeatable pipelines.

The recurrent architectural extension suggests that lightweight temporal integration suffices to substantially reduce error in local orientation tasks when dense spatial cues are ambiguous. Similar architectures could be generalized to dense cellular systems, crowd analysis, or any collective behavior domain where background complexity and object similarity limit traditional detector performance.

The publicly available labeled dataset has clear utility for benchmarking future multi-object tracking, instance segmentation, and orientation estimation methods, likely accelerating algorithmic development in both computational ecology and general computer vision.

Methodologically, the problem-specific label design—encoding both centroid and orientation as region attributes—presents a useful direction for other domains where both instance and pose information are critical but instance segmentation alone is under-constrained.

Conclusion

The presented system provides an efficient, accurate, and scalable approach to dense object detection and orientation estimation, with demonstrated efficacy in the complex task of honeybee tracking. Integrating object-centric labeling, tailored loss functions, and temporal recurrent architectures, the method achieves error rates comparable to inter-human annotation variability. These advances facilitate robust behavioral tracking in dense collectives and open new avenues for automated analysis in systems biology and beyond. Future development should focus on integrating these detection advances with explicit identity tracking, developing more sophisticated temporal models, and generalizing across biological and physical domains with similar density and occlusion challenges.