- The paper's main contribution is a "pixel personality" model for dense honeybee hives, with which 74% and 71% of trajectories in two recordings were classified as "mostly tracked" over short, 2-minute segments.

- The method combines a modified UNet for object detection with an Inception V3 network for identity matching, and is designed to remain robust under occlusions and rapid movements.

- The results indicate that deep learning can discern individual identities in crowded environments, suggesting a potentially scalable approach for other biological systems.

Pixel Personality for Dense Object Tracking in a 2D Honeybee Hive

Introduction

This paper introduces a novel approach for multi-target tracking in dense environments, using a 2D honeybee hive as a model system. The core innovation is "pixel personality", a concept that captures individual identity through visual appearance across frames. In the experimental setup, approximately 1,000 honeybees move in a constrained 2D plane, which makes reliable video recording and detailed analysis possible. The objective is to link short track fragments using appearance-based deep learning models, predominantly convolutional neural networks (CNNs).

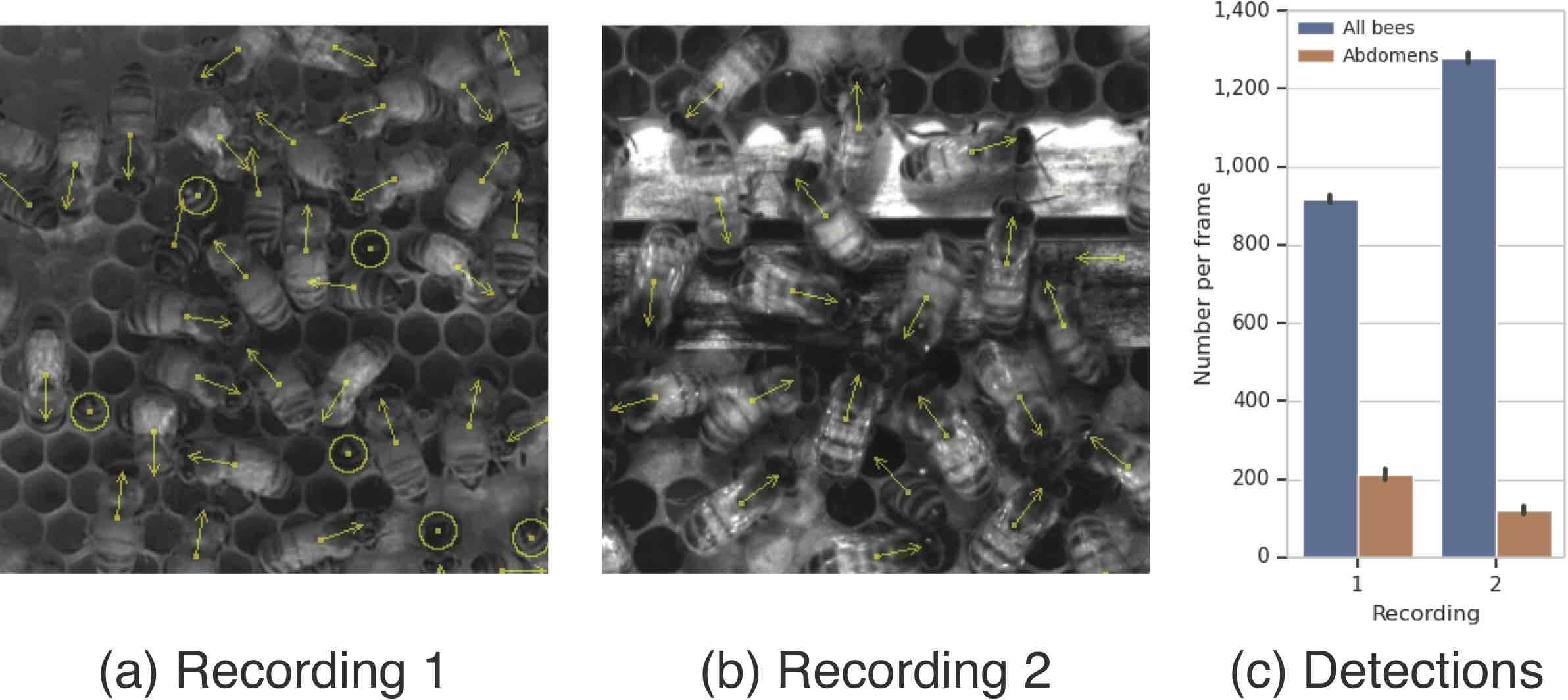

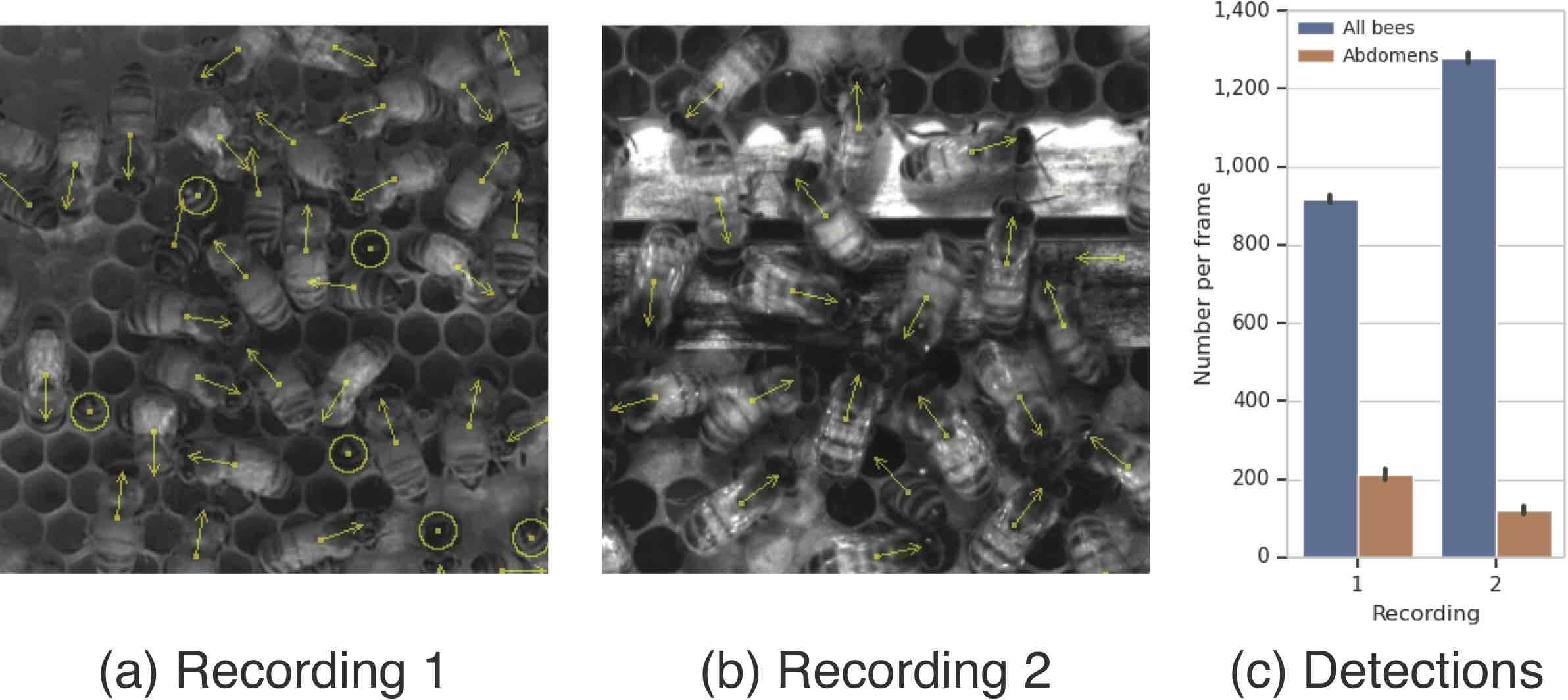

Figure 1: Reliable object detection is the foundation of the dense tracking approach.

Methodology

Data Collection and Preprocessing

Two video recordings were collected from observation beehives, each at a different resolution. Both were downsampled to a common spatial resolution so that object detection and tracking operate on consistent input.

Object Detection

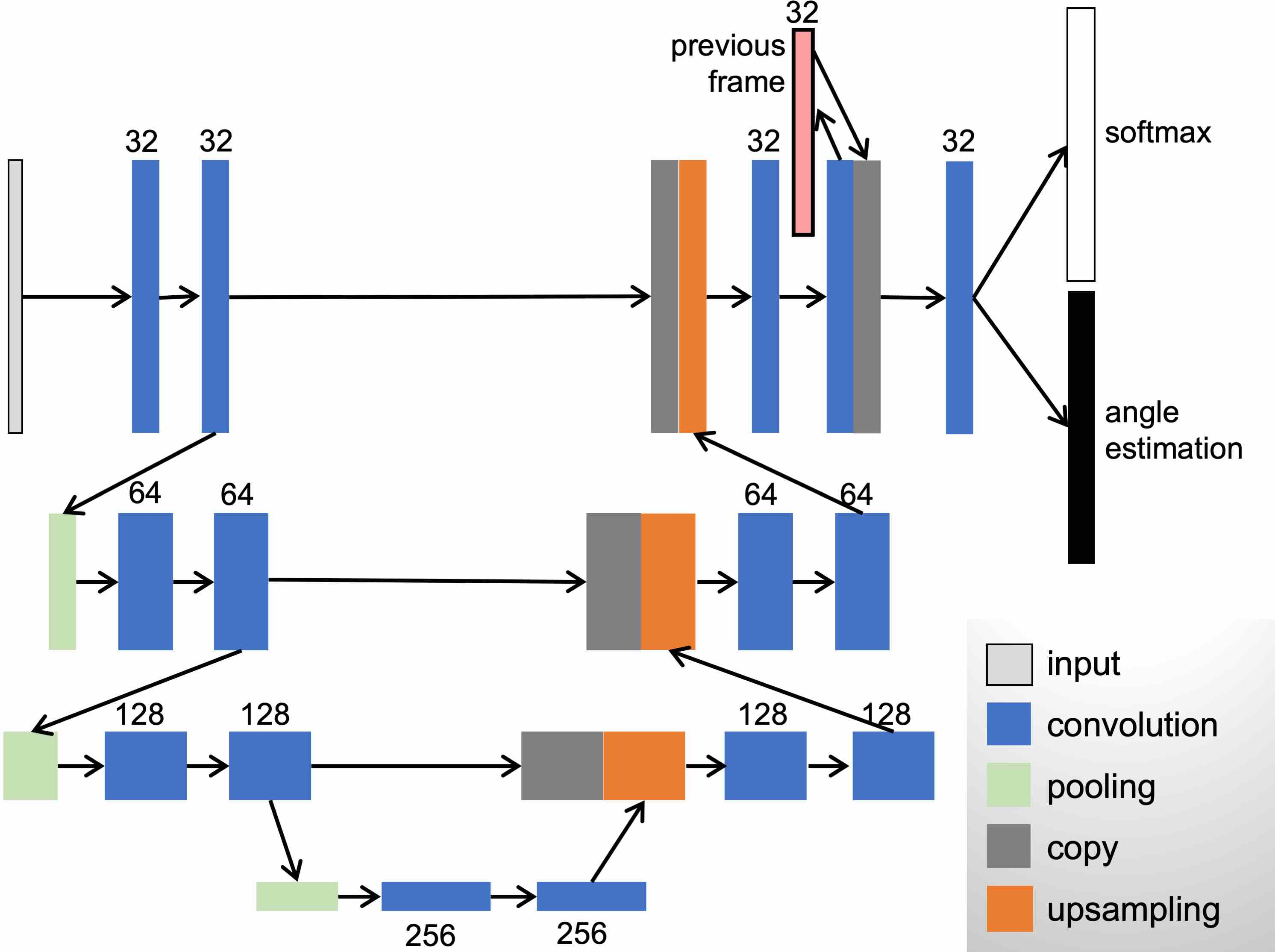

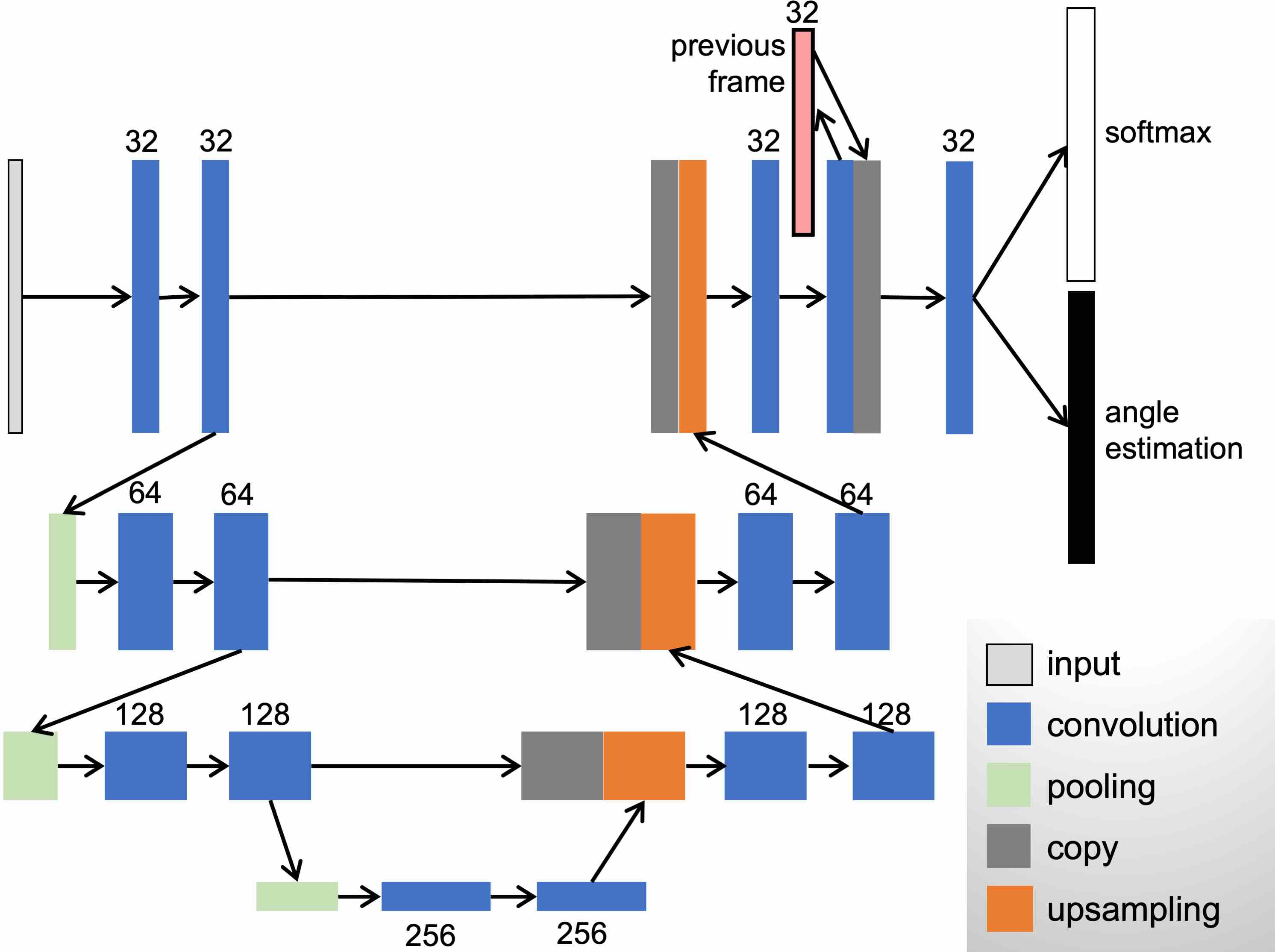

A modified UNet architecture (Figure 2) was implemented to detect honeybee positions and, simultaneously, to estimate each bee's orientation and class in a dense environment. The model was trained on ellipse- and circle-shaped segmentation labels, which distinguish fully visible bees from bees that are partially obscured within the hive.
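To make the labeling scheme concrete, here is a minimal sketch of how ellipse and circle labels could be rasterized into a segmentation target. The class encoding (1 = fully visible, 2 = partially obscured), the semi-axis lengths `a` and `b`, and the radius `r` are hypothetical choices, not values from the paper. Note that an ellipse mask only encodes the body axis, so the bee's heading would need a separate output; how the modified UNet produces orientation is not detailed here.

```python
import numpy as np

def ellipse_label(shape, x, y, alpha, a=10.0, b=4.0):
    """Boolean mask of an ellipse centred at (x, y), rotated by alpha (radians).

    a and b are hypothetical semi-axis lengths in pixels (along / across the body).
    """
    rows, cols = np.mgrid[0:shape[0], 0:shape[1]]
    dx, dy = cols - x, rows - y
    # Rotate pixel offsets into the bee's body frame.
    u = dx * np.cos(alpha) + dy * np.sin(alpha)
    v = -dx * np.sin(alpha) + dy * np.cos(alpha)
    return (u / a) ** 2 + (v / b) ** 2 <= 1.0

def circle_label(shape, x, y, r=4.0):
    """Boolean mask of a disc of hypothetical radius r centred at (x, y)."""
    rows, cols = np.mgrid[0:shape[0], 0:shape[1]]
    return (cols - x) ** 2 + (rows - y) ** 2 <= r ** 2

def build_label_map(shape, detections):
    """Rasterize detections (x, y, alpha, cls) into a uint8 class map.

    Hypothetical encoding: cls 1 = fully visible bee (ellipse),
    cls 2 = partially obscured bee (circle), 0 = background.
    Later detections overwrite earlier ones where masks overlap.
    """
    label = np.zeros(shape, dtype=np.uint8)
    for x, y, alpha, cls in detections:
        if cls == 1:
            mask = ellipse_label(shape, x, y, alpha)
        else:
            mask = circle_label(shape, x, y)
        label[mask] = cls
    return label
```

For example, `build_label_map((40, 40), [(10, 20, 0.0, 1), (30, 20, 0.0, 2)])` draws a horizontal ellipse around column 10 and a small disc around column 30.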

Figure 2: UNet architecture used for object detection.

A crucial step was iterative retraining of the detector with downsampled data from previous datasets. A custom labeling interface (Figure 3) made it possible to correct labels, improving the precision of each retraining round.

Figure 3: Labeling interface.

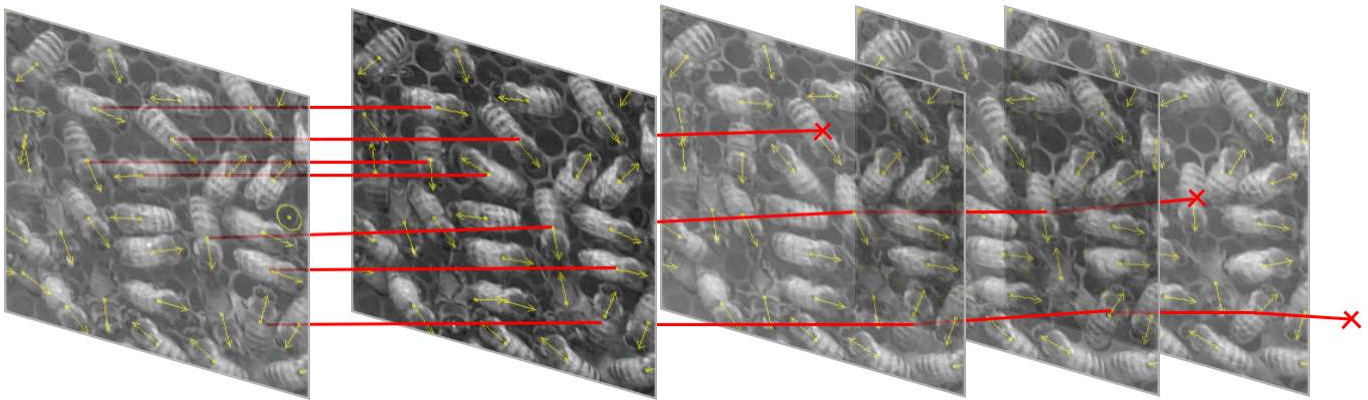

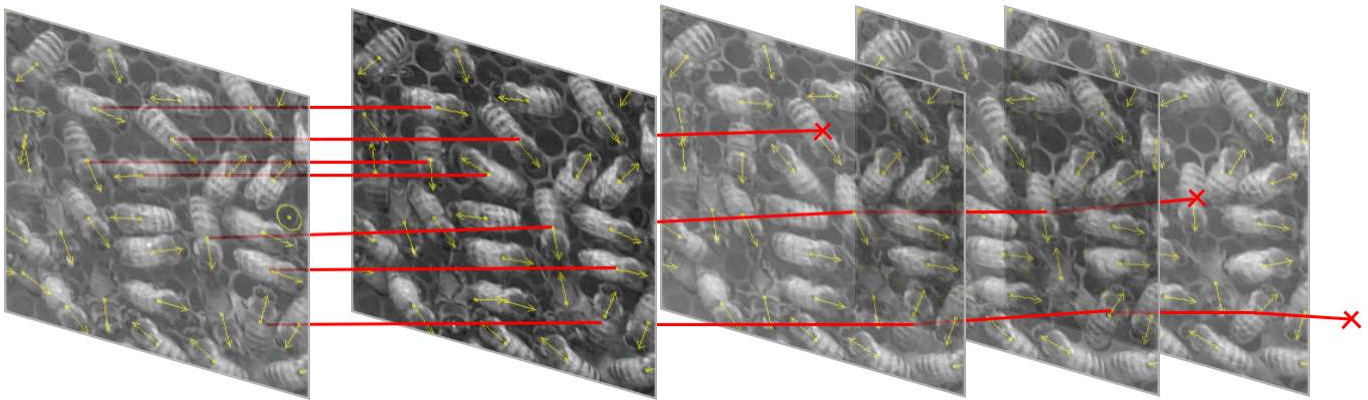

Track Fragment Construction

Initial short track fragments were created by comparing detections across consecutive frames, using each detection's position (x, y), orientation (α), and class (c). The distance metric combined the Euclidean distance between positions with the angular difference between orientations, and detections were matched iteratively to extend the fragments.
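A minimal sketch of this fragment-building step follows. It assumes a weighted sum of Euclidean distance and wrapped angular difference, a fixed penalty when the classes differ, and greedy closest-first matching between consecutive frames. The weights `w_angle` and `w_class` and the cutoff `max_dist` are hypothetical; the paper's exact metric and matching rule may differ (an optimal Hungarian assignment would be a common alternative to the greedy pass).

```python
import math

def detection_distance(d1, d2, w_angle=5.0, w_class=10.0):
    """Distance between detections d = (x, y, alpha, cls).

    Euclidean distance in pixels plus w_angle * |angle difference| (radians),
    plus w_class if the classes differ. Both weights are hypothetical.
    """
    x1, y1, a1, c1 = d1
    x2, y2, a2, c2 = d2
    # Wrap the orientation difference into [0, pi].
    dalpha = abs((a2 - a1 + math.pi) % (2 * math.pi) - math.pi)
    return math.hypot(x2 - x1, y2 - y1) + w_angle * dalpha + w_class * (c1 != c2)

def link_frames(prev, curr, max_dist=15.0):
    """Greedily link detections of two consecutive frames, closest pairs first.

    Returns a list of (index_in_prev, index_in_curr) pairs.
    """
    pairs = sorted(
        (detection_distance(p, c), i, j)
        for i, p in enumerate(prev)
        for j, c in enumerate(curr)
    )
    used_prev, used_curr, links = set(), set(), []
    for dist, i, j in pairs:
        if dist > max_dist:
            break  # all remaining pairs are even farther apart
        if i in used_prev or j in used_curr:
            continue
        used_prev.add(i)
        used_curr.add(j)
        links.append((i, j))
    return links

def build_fragments(frames, max_dist=15.0):
    """Chain per-frame links into fragments of (frame_index, detection_index)."""
    fragments = []
    active = {}  # detection index in previous frame -> fragment index
    for t, dets in enumerate(frames):
        linked = {j: i for i, j in link_frames(frames[t - 1], dets, max_dist)} if t > 0 else {}
        new_active = {}
        for j in range(len(dets)):
            if j in linked and linked[j] in active:
                k = active[linked[j]]  # continue an existing fragment
            else:
                k = len(fragments)  # unmatched detection starts a new fragment
                fragments.append([])
            fragments[k].append((t, j))
            new_active[j] = k
        active = new_active
    return fragments
```

A detection that jumps farther than `max_dist` between frames starts a new fragment; joining such fragments is left to the appearance-based step.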

Figure 4: Object detections are joined into short track fragments using a simple distance metric.

Pixel Personality: Learning Identity

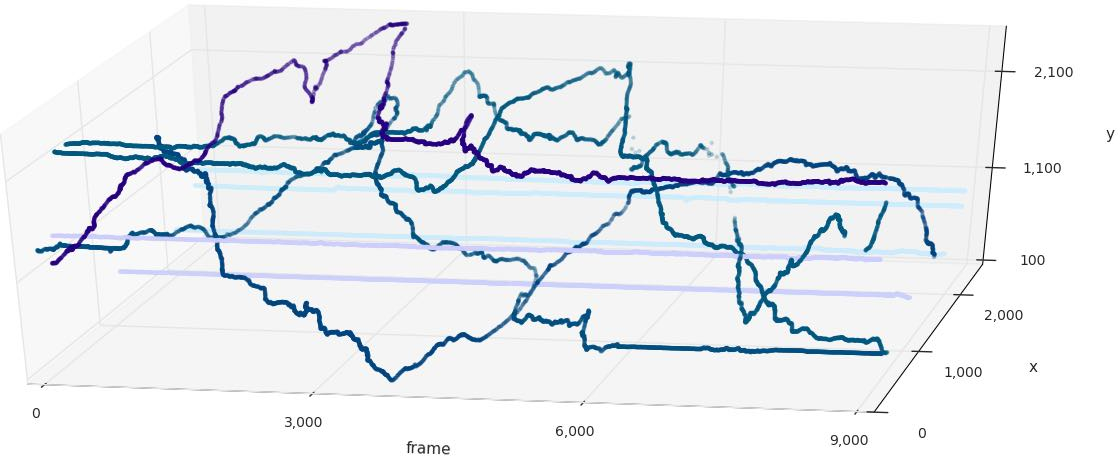

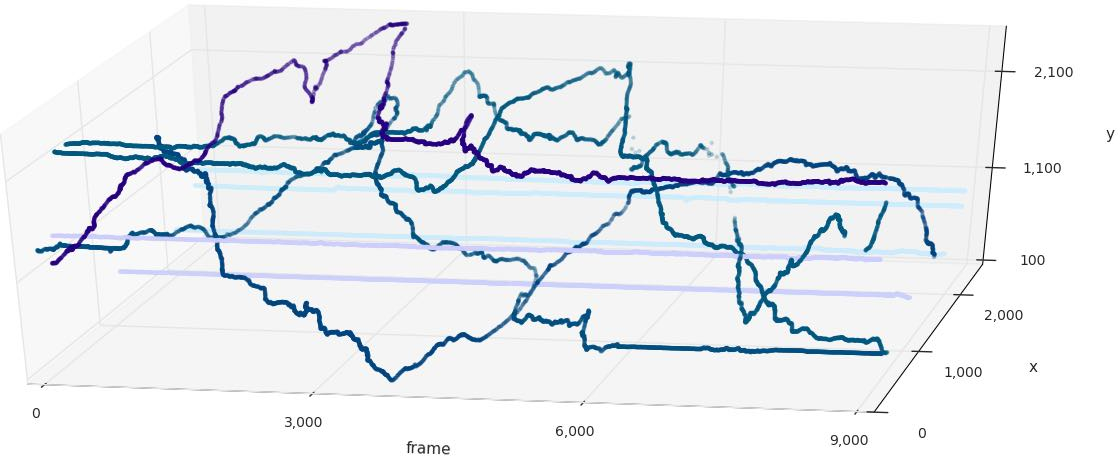

To reconstruct coherent trajectories, an Inception V3 network was trained to capture the subtle identity cues termed "pixel personality" (Figure 5). Image crops of individual detections were compiled and used to train recognition networks iteratively. The recognition model matched track fragments based on their softmax scores, and the procedure was repeated to extend fragments across longer recordings.
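The text does not spell out the exact joining rule, so the following is only a sketch under stated assumptions: the recognition network outputs one logit per known identity (for example, one per reference track fragment), every crop of a candidate fragment is scored, the softmax probabilities are averaged over the fragment, and the fragment is joined to the best identity only if that mean exceeds a hypothetical threshold `min_score`. Precomputed logits stand in for Inception V3 outputs, and a practical version would also have to reject joins between fragments that overlap in time.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def assign_fragment(crop_logits, min_score=0.5):
    """Score one fragment from the logits of all its crops.

    crop_logits: array-like of shape (n_crops, n_identities).
    Returns (identity index or None, mean softmax score of the best identity).
    """
    probs = softmax(np.asarray(crop_logits, dtype=float))
    mean_probs = probs.mean(axis=0)  # average evidence over the whole fragment
    best = int(mean_probs.argmax())
    score = float(mean_probs[best])
    return (best if score >= min_score else None), score

def join_fragments(fragment_logits, min_score=0.5):
    """Group fragments that are confidently assigned to the same identity.

    fragment_logits: dict mapping a hypothetical fragment id to its crop logits.
    Fragments below min_score are left unjoined.
    """
    tracks = {}
    for frag_id, logits in fragment_logits.items():
        identity, _ = assign_fragment(logits, min_score)
        if identity is not None:
            tracks.setdefault(identity, []).append(frag_id)
    return tracks
```

Averaging over all crops of a fragment, rather than trusting a single frame, makes a join less sensitive to one blurred or occluded crop.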

Figure 5: Overview of the track joining algorithm.

Results

With this approach, 74% and 71% of trajectories in recordings 1 and 2, respectively, were categorized as "mostly tracked" over short (2 min) segments, which compares favorably with results on multi-object tracking benchmarks such as MOT16. The method handled dense configurations well, retaining identities and completing trajectories even through occlusions and rapid motion.
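For reference, the MOT16 convention counts a ground-truth trajectory as "mostly tracked" when it is covered by the tracker output for at least 80% of its length. The sketch below computes that fraction, assuming the frame-level matching between ground truth and tracker output has already been done; the input format (`gt_frames`, `matched_frames`) is hypothetical and not the paper's evaluation code.

```python
def coverage_ratio(gt_frames, matched_frames):
    """Fraction of a ground-truth trajectory's frames in which it was matched."""
    gt = set(gt_frames)
    return len(gt & set(matched_frames)) / len(gt) if gt else 0.0

def mostly_tracked_fraction(trajectories, threshold=0.8):
    """Share of ground-truth trajectories covered for at least `threshold` of their length.

    trajectories: list of (gt_frames, matched_frames) pairs, where matched_frames
    are the frames in which the ground-truth bee was matched to a track.
    """
    if not trajectories:
        return 0.0
    mt = sum(1 for gt, matched in trajectories if coverage_ratio(gt, matched) >= threshold)
    return mt / len(trajectories)
```

With coverage ratios of 0.9, 0.5, and 0.8 for three trajectories, two of three count as mostly tracked.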

Figure 6: Tracking reveals a variety of movement dynamics within the hive.

Conclusion

This study demonstrates that deep learning-based object recognition can overcome conventional challenges in dense object tracking. The pixel personality model addresses environments in which individuals are barely distinguishable from one another, and it offers a potentially scalable approach for other biological systems. Future work could integrate hybrid models that represent temporal dynamics explicitly, potentially extending the tracking horizon beyond five minutes and carrying the approach to domains beyond honeybee hives.