- The paper introduces the BSN architecture that integrates individual and global boundary-sensitive kernels to enhance segmentation accuracy around object boundaries.

- It improves the segmentation of fine details by jointly training a boundary-sensitive attribute classifier, helping the network delineate features such as hair and accessories.

- Experimental results show that BSN outperforms existing methods, achieving a mean IoU of 96.7% on the PFCN dataset and superior performance on portrait images from COCO, COCO-Stuff, and PASCAL VOC.

Boundary-Sensitive Network for Portrait Segmentation

The paper "Boundary-sensitive Network for Portrait Segmentation" (1712.08675) introduces a novel deep learning architecture, the Boundary-Sensitive Network (BSN), tailored for high-precision portrait segmentation. The BSN distinguishes itself through the incorporation of individual and global boundary-sensitive kernels, as well as a boundary-sensitive attribute classifier, all designed to improve segmentation accuracy, particularly around object boundaries. The network is evaluated on the PFCN dataset and portrait images from COCO, COCO-Stuff, and PASCAL VOC datasets, demonstrating superior performance compared to existing methods.

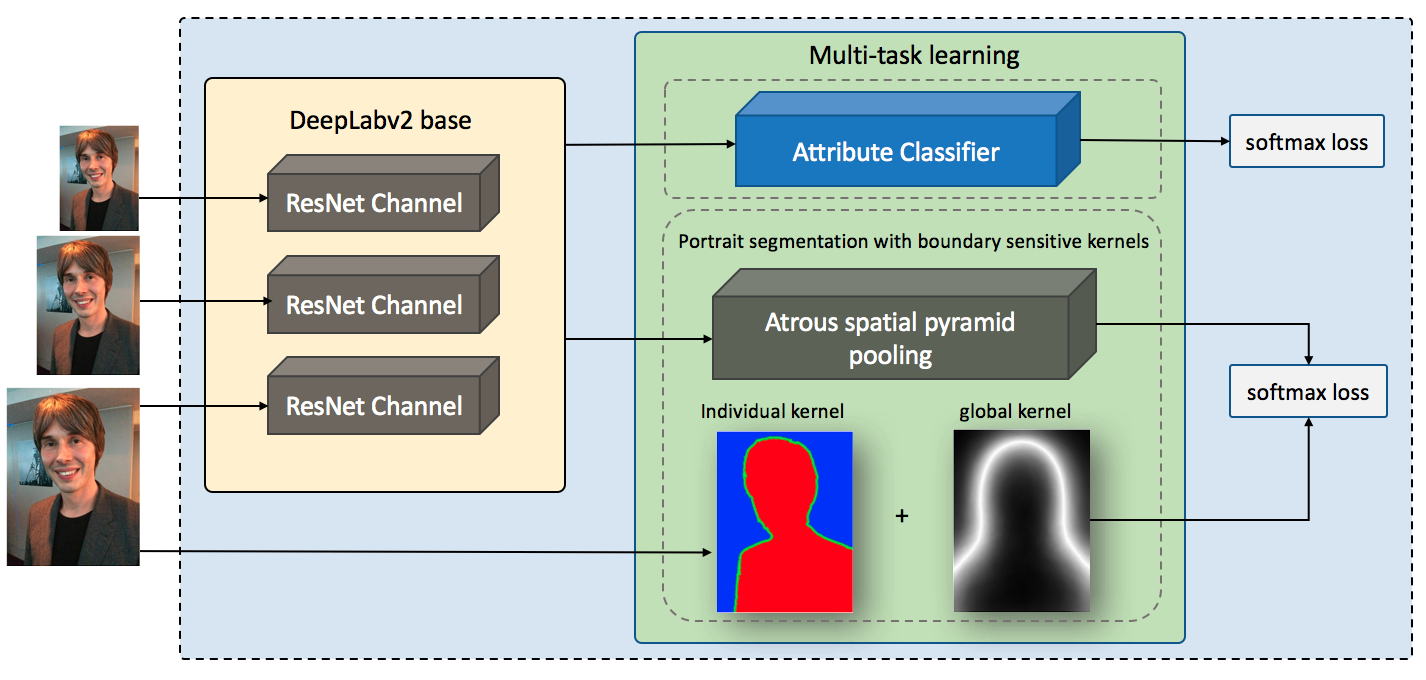

Proposed Method: Boundary-Sensitive Network (BSN)

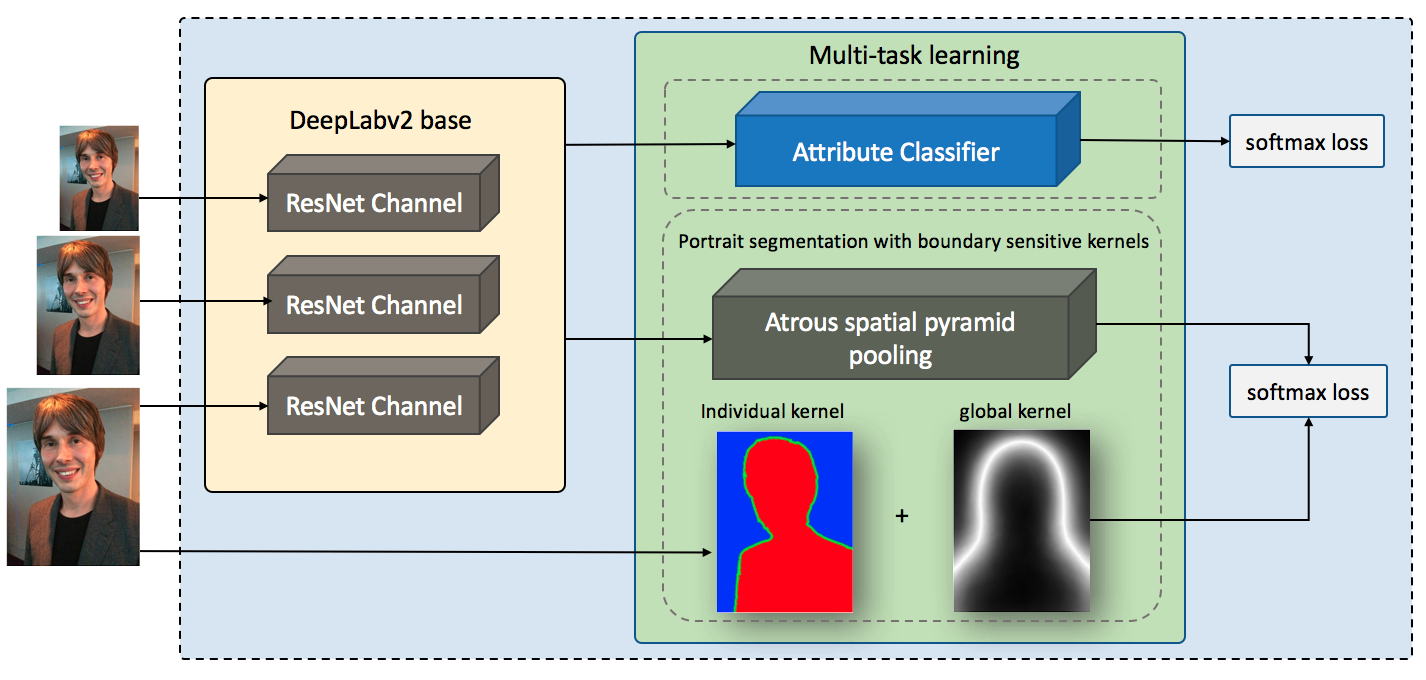

The BSN architecture, illustrated in Figure 1, builds upon the DeepLabv2_ResNet101 model and extends it with three key innovations that address the challenges of portrait segmentation.

Figure 1: The whole architecture of our framework.

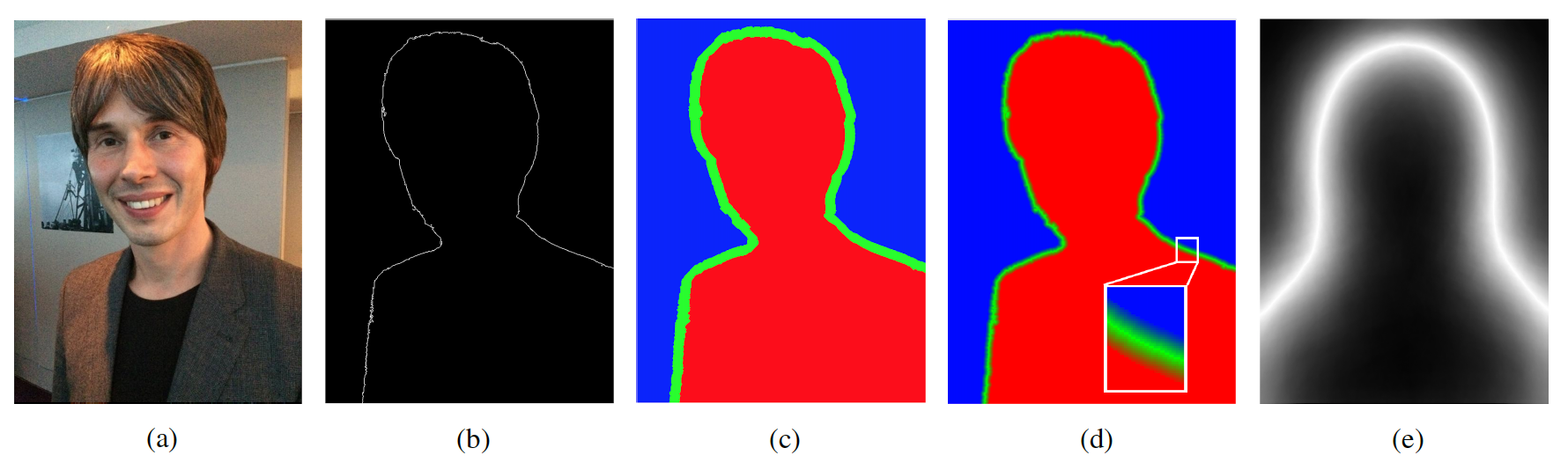

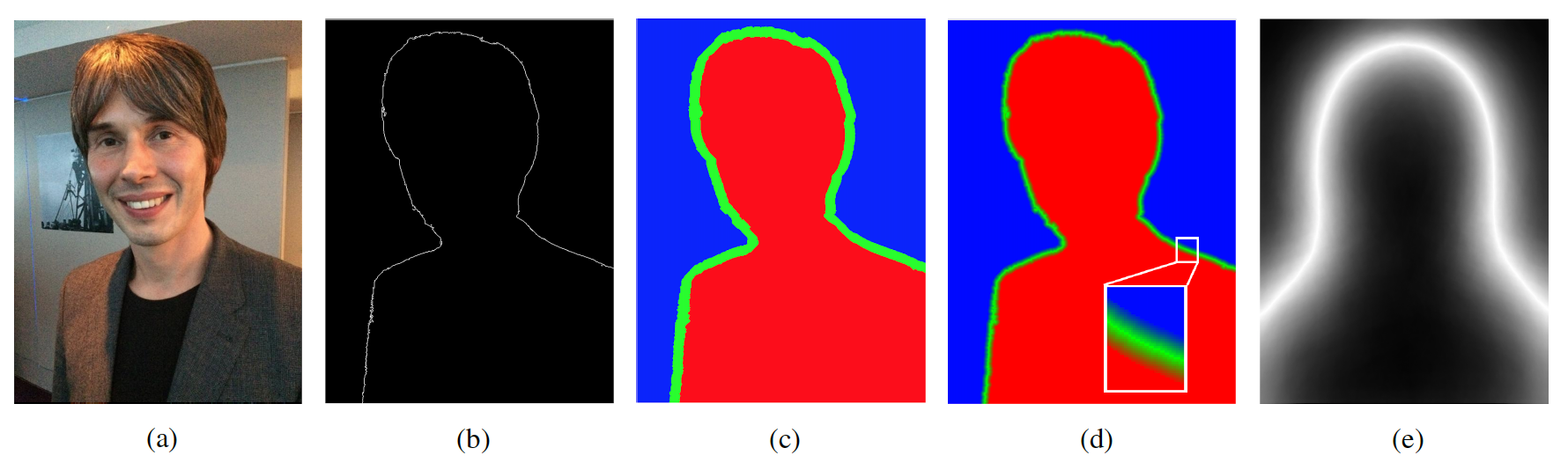

First, the method employs an individual boundary-sensitive kernel. The contour line of the portrait foreground is dilated to form a boundary class, and the boundary pixels receive multi-class soft labels. Each pixel in the boundary class is assigned a 1×3 floating-point vector $K_{indv} = [l_{fg}, l_{bdry}, l_{bg}]$ that represents the likelihood of the pixel belonging to the foreground, boundary, or background, which helps the network learn a better representation of the boundary class. The loss function is then updated to incorporate this soft-label information, guiding the network toward accurate boundary prediction. The kernel generation process is illustrated in Figure 2.

Figure 2: The kernel generating process in our method: (a) represents the original image; (b) represents the detected contour line; (c) shows the three class labels: foreground, background, and dilated boundary; (d) shows the individual boundary-sensitive kernel; (e) shows the global boundary-sensitive kernel.
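The individual kernel and its soft-label loss can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' code: the contour is taken as the foreground pixels that touch the background, the band is grown by square dilation up to a hypothetical `radius`, and $l_{bdry}$ is assumed to decay linearly with the (Chebyshev) distance to the contour, with the remaining mass assigned to the foreground or background depending on which side of the contour the pixel lies. The paper's exact label values and loss may differ; `soft_cross_entropy` only shows how a per-pixel soft target enters a cross-entropy loss.

```python
import numpy as np


def _dilate(mask: np.ndarray) -> np.ndarray:
    """One step of 3x3 (8-neighbour) binary dilation built from array shifts."""
    h, w = mask.shape
    padded = np.pad(mask, 1, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            out |= padded[1 + dy:1 + dy + h, 1 + dx:1 + dx + w]
    return out


def individual_kernel(fg: np.ndarray, radius: int = 2) -> np.ndarray:
    """Return an (H, W, 3) array of soft labels [l_fg, l_bdry, l_bg] per pixel.

    Assumptions (not from the paper): the contour is the set of foreground
    pixels touching the background; l_bdry falls off linearly with the
    Chebyshev distance to the contour inside a band of width `radius`.
    """
    fg = fg.astype(bool)
    contour = fg & _dilate(~fg)

    # Distance to the contour, measured by how many dilation steps reach a pixel.
    dist = np.full(fg.shape, np.inf)
    dist[contour] = 0.0
    reached = contour.copy()
    for d in range(1, radius + 1):
        grown = _dilate(reached)
        dist[grown & ~reached] = d
        reached = grown

    band = np.isfinite(dist)                 # pixels in the dilated boundary class
    l_bdry = np.zeros(fg.shape)
    l_bdry[band] = 1.0 - dist[band] / (radius + 1)

    kernel = np.zeros(fg.shape + (3,))
    kernel[..., 0] = np.where(fg, 1.0 - l_bdry, 0.0)   # l_fg
    kernel[..., 1] = l_bdry                             # l_bdry
    kernel[..., 2] = np.where(fg, 0.0, 1.0 - l_bdry)   # l_bg
    return kernel


def soft_cross_entropy(logits: np.ndarray, targets: np.ndarray) -> float:
    """Mean over pixels of -sum_k target_k * log softmax(logits)_k."""
    z = logits - logits.max(axis=-1, keepdims=True)
    log_p = z - np.log(np.exp(z).sum(axis=-1, keepdims=True))
    return float(-(targets * log_p).sum(axis=-1).mean())
```

Pixels outside the band reduce to ordinary one-hot labels ($[1, 0, 0]$ or $[0, 0, 1]$), so in this sketch only the band around the contour changes the loss.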

Second, a global boundary-sensitive kernel is introduced as a position-sensitive prior to further constrain the overall shape of the segmentation map. This kernel is generated by computing a mean mask from the training data and normalizing the values to the range $[a, b]$, where higher values indicate a higher probability of the location being a boundary. This encourages the network to focus more on difficult-to-classify boundary locations.
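A possible construction of the global kernel is sketched below. The text above only states that a mean mask is computed and normalized to $[a, b]$; the mapping from the mean mask $m$ to a boundary score, $1 - |2m - 1|$ (largest where $m = 0.5$, i.e. where foreground and background are equally frequent across training portraits), and the defaults `a=1.0`, `b=2.0` are assumptions made for illustration. The kernel is then applied as a per-pixel loss weight, which is one plausible way to make hard boundary locations count more.

```python
import numpy as np


def global_kernel(masks: np.ndarray, a: float = 1.0, b: float = 2.0) -> np.ndarray:
    """Position-sensitive prior computed from aligned training masks.

    masks: (N, H, W) binary foreground masks.
    Assumption: the boundary score is 1 - |2m - 1|, which peaks where the mean
    mask m is 0.5; `a` and `b` are hypothetical bounds for the rescaling.
    """
    mean_mask = masks.astype(float).mean(axis=0)
    score = 1.0 - np.abs(2.0 * mean_mask - 1.0)
    span = score.max() - score.min()
    if span == 0:                      # degenerate case: no spatial variation
        return np.full(mean_mask.shape, a)
    return a + (b - a) * (score - score.min()) / span   # linear map to [a, b]


def weighted_loss(per_pixel_loss: np.ndarray, kernel: np.ndarray) -> float:
    """Scale each pixel's loss by the prior so likely-boundary locations count more."""
    return float((per_pixel_loss * kernel).mean())
```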

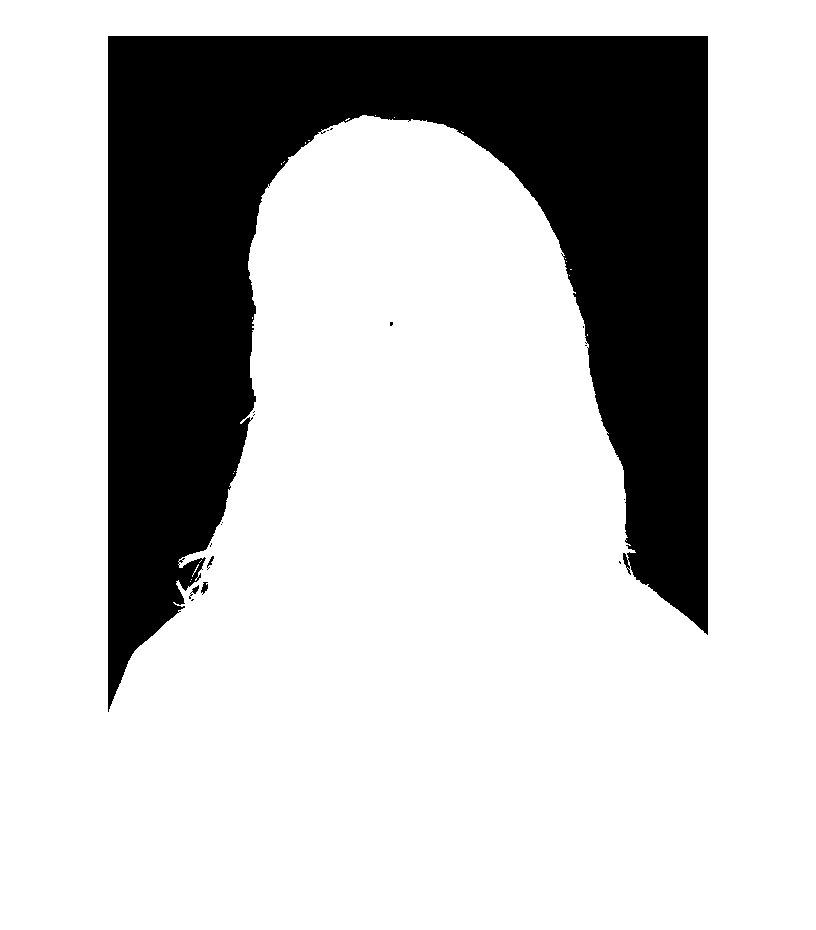

Third, a boundary-sensitive attribute classifier is trained jointly with the segmentation network to provide semantic boundary shape information. This leverages the observation that portrait attributes, such as hairstyle, significantly influence the portrait's shape. The attribute classifier shares base layers with the segmentation network and consists of three fully connected layers, enabling multi-task learning and reinforcing the training process. An example of how the hairstyle attribute affects the portrait's shape is illustrated in Figure 3.

Figure 3: An example of how boundary-sensitive attributes affect the portrait's shape: long hair vs. short hair.
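The multi-task setup can be illustrated with a small NumPy forward pass. This sketch assumes that the shared backbone features are global-average-pooled before the three fully connected layers, that ReLU is used between them, that a single categorical attribute (for example short vs. long hair) is the target, and that the losses are combined as $L = L_{seg} + \lambda L_{attr}$ with a hypothetical weight `lam`. None of these details are specified above, and the weights are random rather than trained.

```python
import numpy as np

rng = np.random.default_rng(0)


def _fc(in_dim: int, out_dim: int):
    """Randomly initialised fully connected layer (weights, bias)."""
    return rng.standard_normal((in_dim, out_dim)) * 0.01, np.zeros(out_dim)


class AttributeHead:
    """Three fully connected layers on top of features shared with the segmenter."""

    def __init__(self, feat_dim: int, hidden: int, n_classes: int):
        self.layers = [_fc(feat_dim, hidden), _fc(hidden, hidden), _fc(hidden, n_classes)]

    def __call__(self, shared_features: np.ndarray) -> np.ndarray:
        # Assumption: (N, H, W, C) backbone features are global-average-pooled to (N, C).
        x = shared_features.mean(axis=(1, 2))
        for i, (w, b) in enumerate(self.layers):
            x = x @ w + b
            if i < len(self.layers) - 1:
                x = np.maximum(x, 0.0)          # ReLU between layers (assumed)
        return x                                # attribute logits, shape (N, n_classes)


def cross_entropy(logits: np.ndarray, labels: np.ndarray) -> float:
    """Mean categorical cross-entropy over a batch of integer labels."""
    z = logits - logits.max(axis=1, keepdims=True)
    log_p = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    return float(-log_p[np.arange(len(labels)), labels].mean())


def joint_loss(seg_loss: float, attr_logits: np.ndarray, attr_labels: np.ndarray,
               lam: float = 1.0) -> float:
    """Multi-task objective L = L_seg + lam * L_attr; `lam` is a hypothetical weight."""
    return seg_loss + lam * cross_entropy(attr_logits, attr_labels)
```

In a trainable framework, gradients from both terms would reach the shared layers, which is how the attribute label can inform the segmentation features.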

Experimental Results and Analysis

The BSN was evaluated on the PFCN dataset (Figure 4), a large public portrait segmentation dataset, as well as portrait images collected from COCO, COCO-Stuff, and PASCAL VOC datasets (Figure 5).

Figure 4: Sample images from the PFCN dataset.

Figure 5: Sample images from COCO, COCO-Stuff, and Pascal VOC portrait datasets.

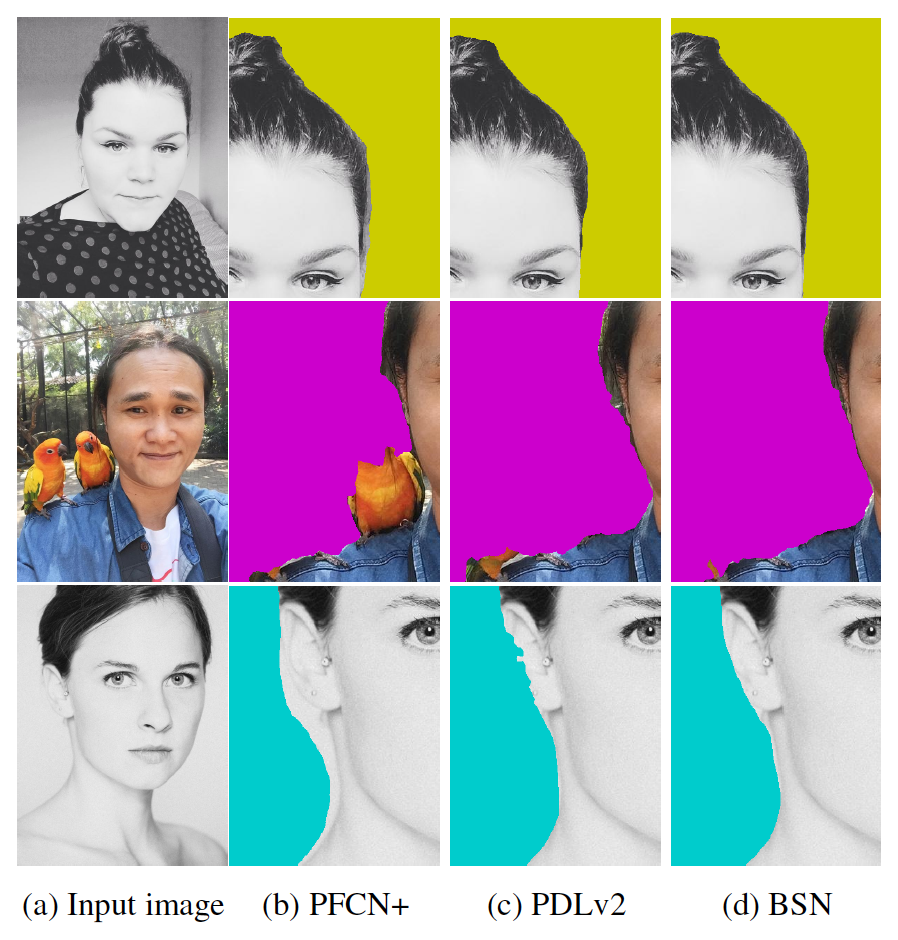

The results demonstrate that the BSN achieves superior quantitative and qualitative performance compared to state-of-the-art methods on all datasets, particularly in the boundary areas. On the PFCN dataset, the BSN achieves a mean IoU of 96.7%, outperforming PortraitFCN+ and PortraitDeepLabv2. The effectiveness of the boundary-sensitive techniques is further validated by testing on portrait images from COCO, COCO-Stuff, and PASCAL VOC datasets, where the BSN significantly outperforms PortraitFCN+.
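For reference, one common way to compute mean IoU for binary portrait masks is to take the foreground IoU of each test image and average it over the test set. The sketch below follows that convention as an assumption; averaging IoU over classes (foreground and background) is another convention, and the paper's evaluation protocol may differ in its details.

```python
import numpy as np


def foreground_iou(pred: np.ndarray, gt: np.ndarray) -> float:
    """Intersection over union of the portrait (foreground) pixels."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:                     # both masks empty: treat as a perfect match
        return 1.0
    return float(np.logical_and(pred, gt).sum() / union)


def mean_iou(preds, gts) -> float:
    """Average the per-image foreground IoU over a test set."""
    return float(np.mean([foreground_iou(p, g) for p, g in zip(preds, gts)]))
```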

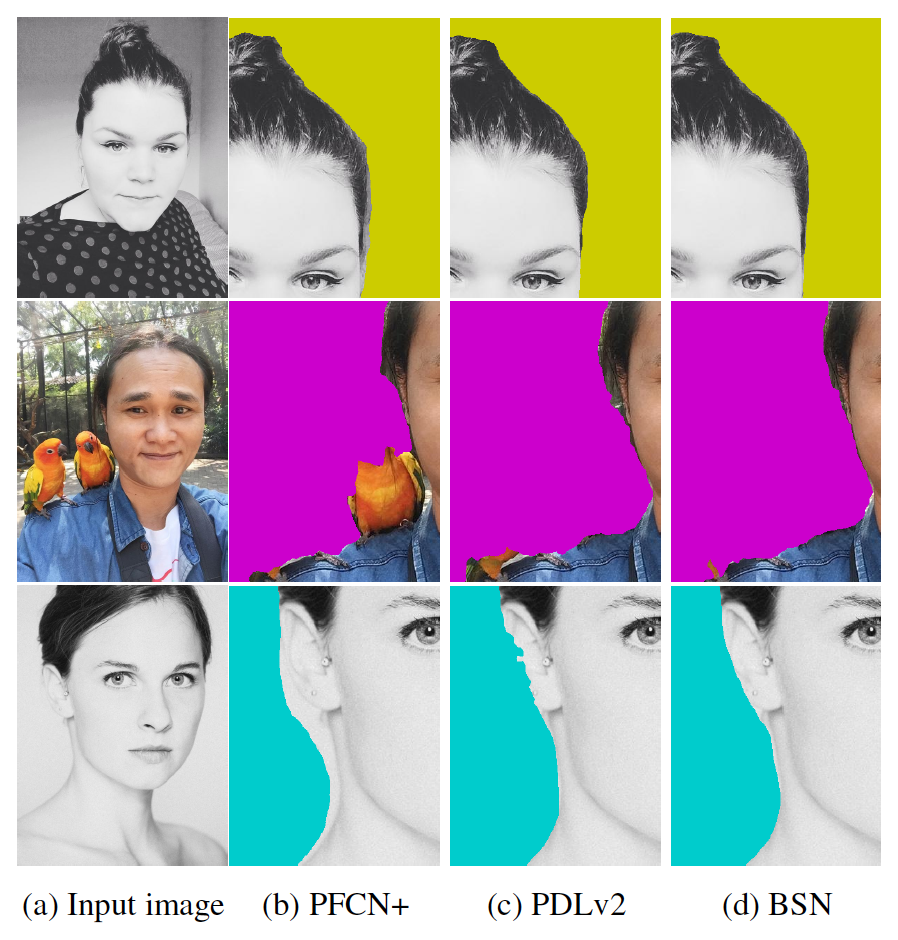

Qualitative results show that the BSN is more accurate and robust in challenging scenarios, such as images with confusing backgrounds, multiple people, or background colors similar to the foreground (Figure 6).

Figure 6: Result visualizations of three challenging examples. The first row contains confusing objects in the background; the second row includes multiple people in the background; in the third row, the background color is close to the foreground.

Furthermore, the BSN delivers more precise boundary predictions, enabling accurate segmentation of hair, accessories, and other fine details (Figure 7).

Figure 7: Boundary segmentation comparisons. The first column shows the original images. The three subsequent columns show the results from the PortraitFCN+ method, the fine-tuned DeepLabv2 model with the attribute classifier, and our final model (magnified for best viewing).

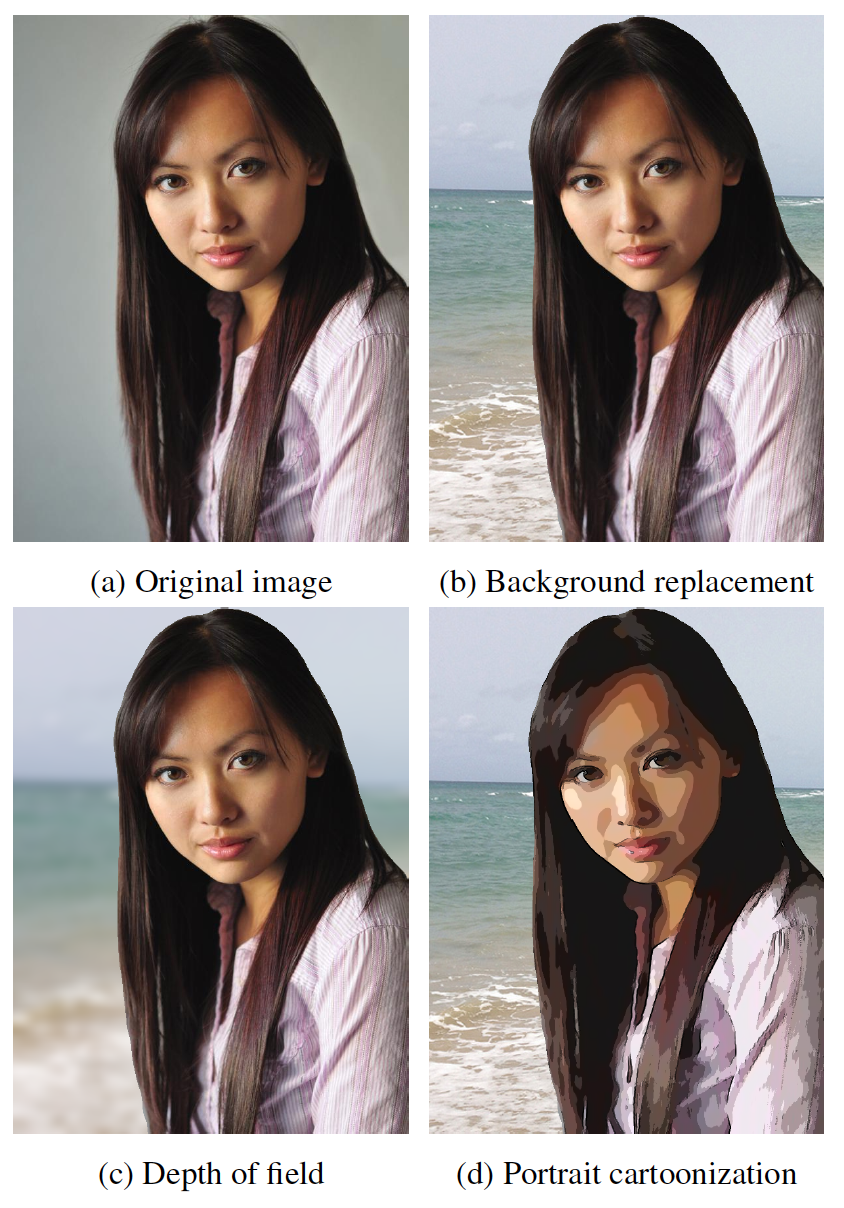

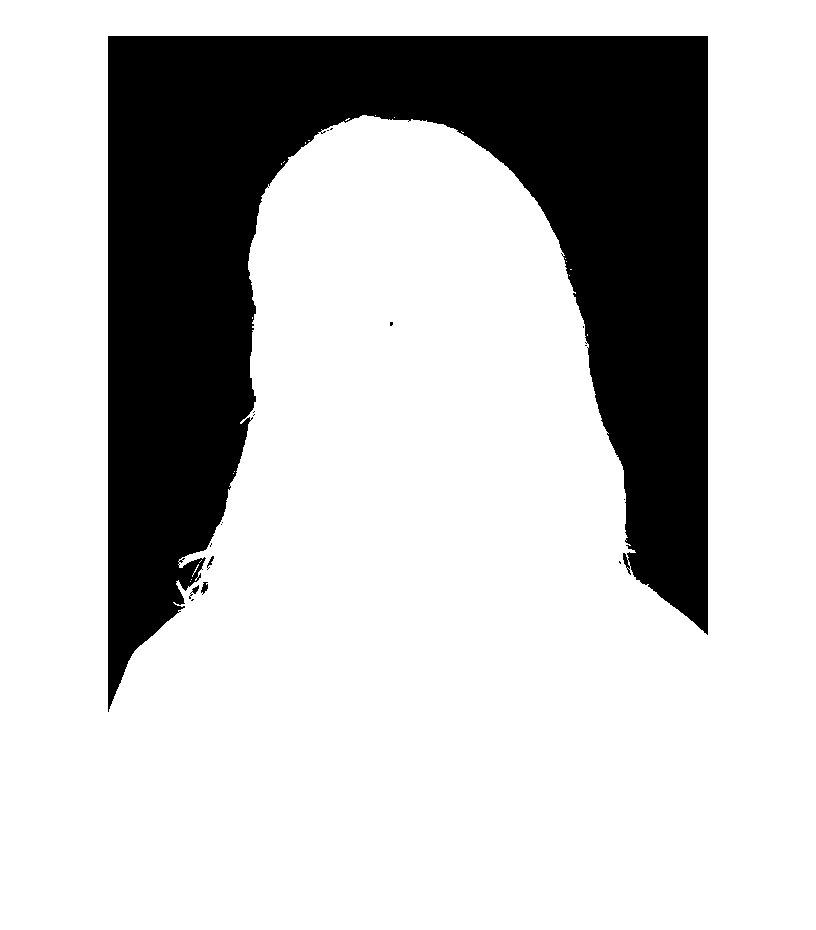

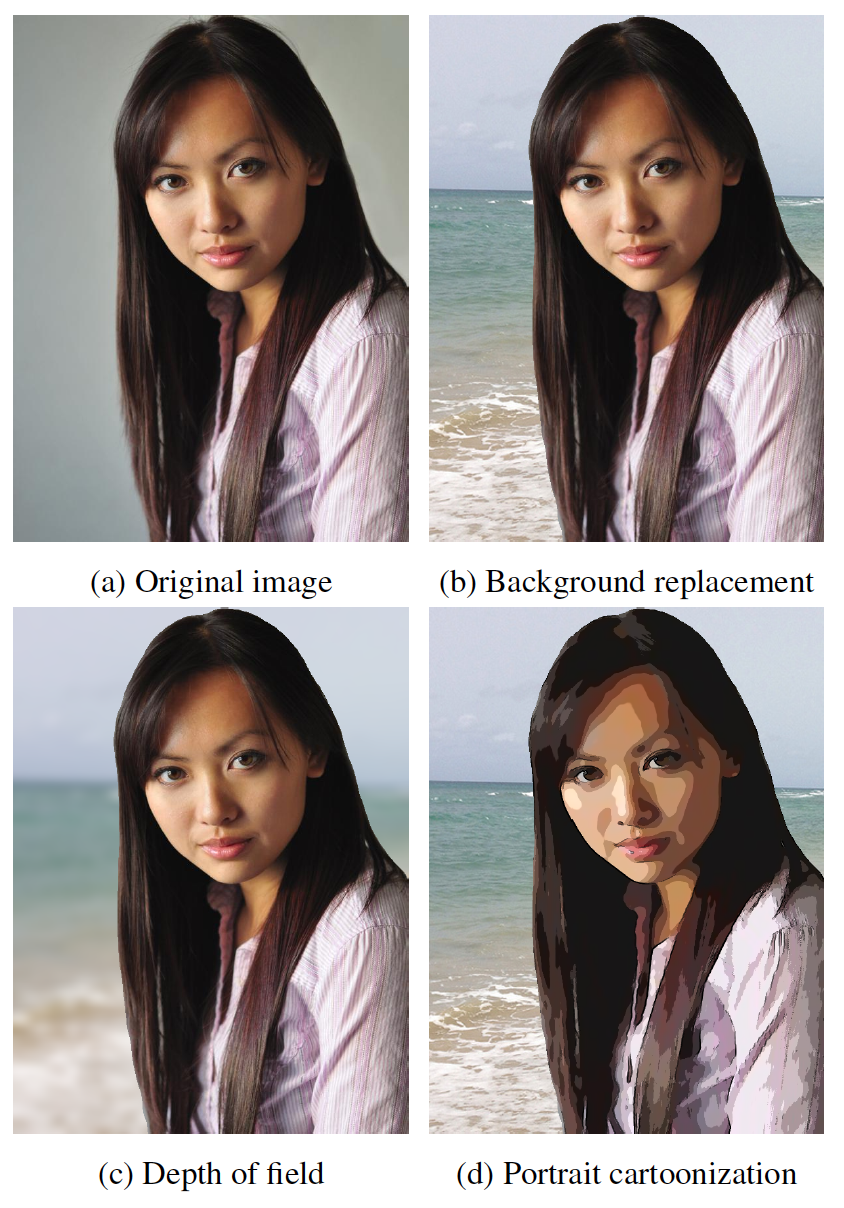

The high-quality segmentation maps generated by the BSN can be used to generate trimaps for image matting models (Figure 8) and facilitate various image processing applications such as background replacement, depth-of-field effects, and augmented reality (Figure 9).

Figure 8: Trimaps generated from our segmentation maps.

Figure 9: Some applications of portrait segmentation.
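A common recipe for turning a binary segmentation map into a trimap is to erode the foreground to obtain definite foreground, dilate it to bound the definite background, and mark the band in between as unknown. The sketch below uses that recipe with a square structuring element of hypothetical `radius` and the usual 0/128/255 encoding; the exact procedure behind the trimaps in Figure 8 is not described here, so the width of the unknown band is an arbitrary choice.

```python
import numpy as np


def _dilate(mask: np.ndarray, radius: int) -> np.ndarray:
    """Binary dilation with a (2r+1)x(2r+1) square, built from shifted ORs."""
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            out |= padded[radius + dy:radius + dy + h, radius + dx:radius + dx + w]
    return out


def make_trimap(seg: np.ndarray, radius: int = 2) -> np.ndarray:
    """Map a binary segmentation to a trimap: 0 = bg, 128 = unknown, 255 = fg."""
    fg = seg.astype(bool)
    sure_fg = ~_dilate(~fg, radius)    # erosion of the foreground
    maybe_fg = _dilate(fg, radius)     # anything outside this is definite background
    trimap = np.zeros(fg.shape, dtype=np.uint8)
    trimap[maybe_fg] = 128             # unknown band around the contour
    trimap[sure_fg] = 255              # definite foreground
    return trimap
```

A wider `radius` gives the matting model more room to recover hair strands, at the cost of more pixels it has to resolve.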

Conclusion

The BSN architecture represents a significant advancement in portrait segmentation, achieving state-of-the-art performance through the incorporation of boundary-sensitive kernels and a boundary-sensitive attribute classifier. The results demonstrate the effectiveness of these techniques in improving segmentation accuracy, particularly around object boundaries, and highlight the potential for extending these methods to general semantic segmentation problems and exploring additional semantic attributes to reinforce the training process.