- The paper presents a novel Residual U-Net approach for pixel-level semantic segmentation to automate Gleason grading.

- It extends the standard U-Net with residual blocks to mitigate vanishing gradients, achieving higher Dice indices and Cohen’s kappa scores than the other architectures evaluated.

- Experimental results demonstrate enhanced differentiation of Gleason patterns, offering promising advances in prostate cancer diagnostics.

Gleason Grading of Histology Prostate Images through Semantic Segmentation via Residual U-Net

Introduction

Prostate cancer is a significant global health concern and the second most prevalent cancer in men. The Gleason grading system remains instrumental in assessing tumor severity, based on the architectural patterns of glands in prostate tissue. Traditionally, pathologists manually inspect stained tissue samples to determine Gleason patterns, a subjective and labor-intensive process. This study proposes a computer-aided diagnosis system that automates this process by applying deep learning models for semantic segmentation to histology images.

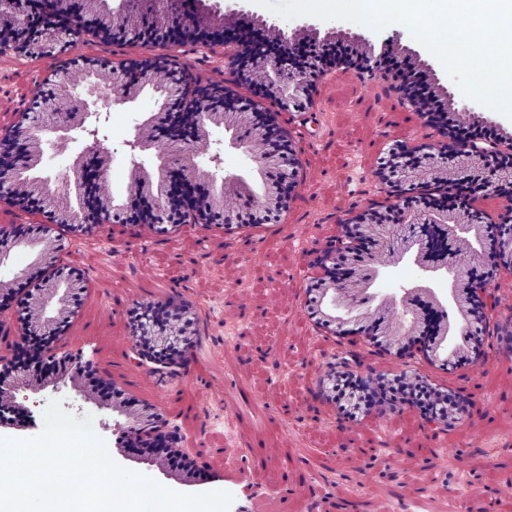

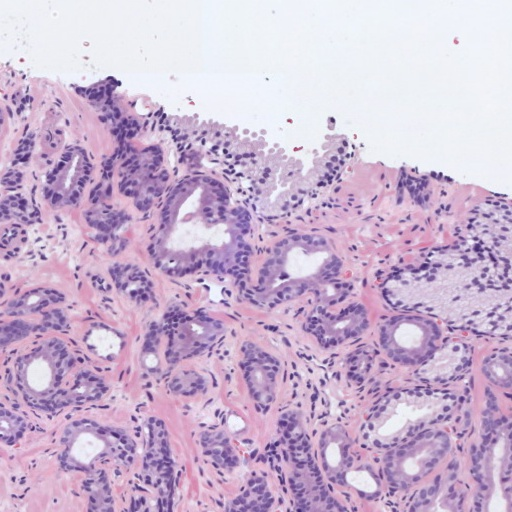

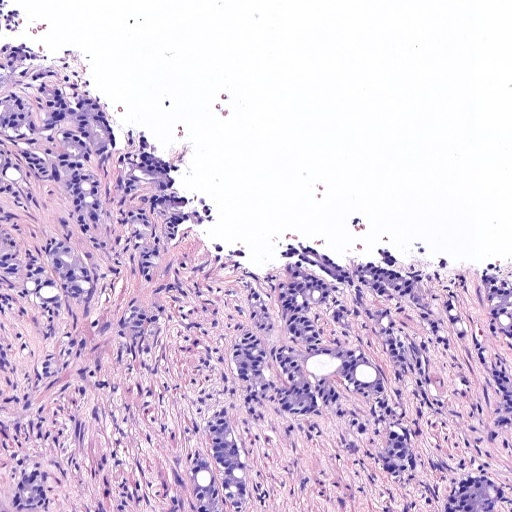

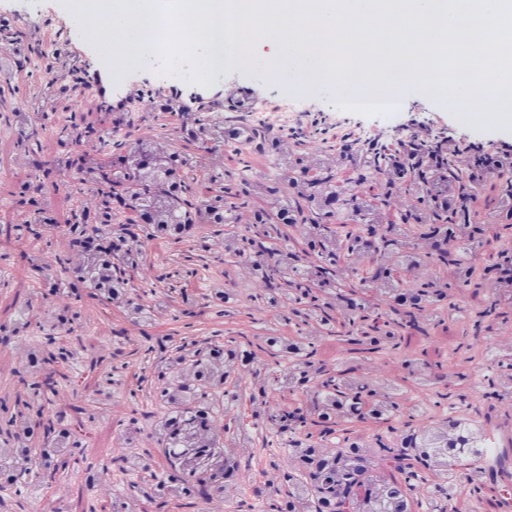

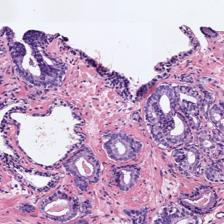

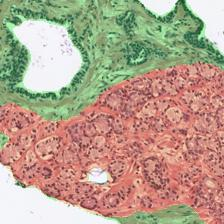

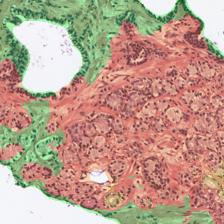

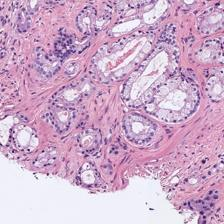

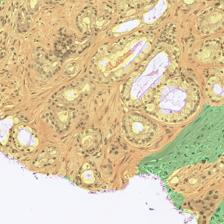

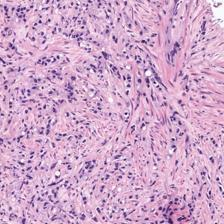

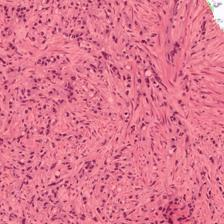

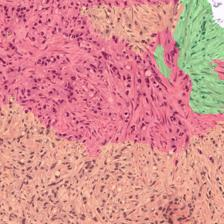

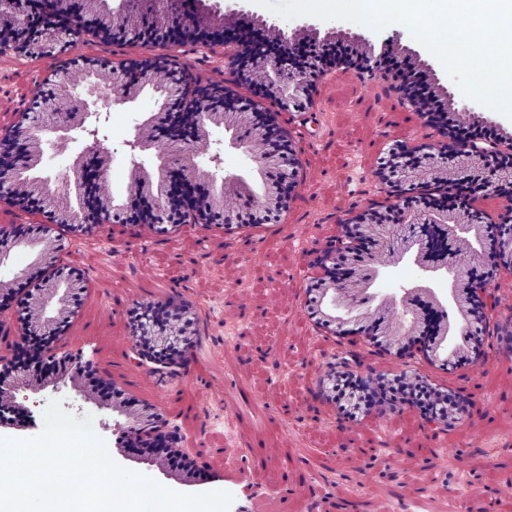

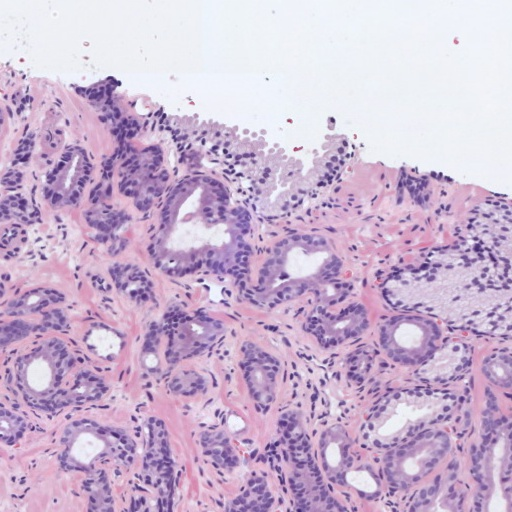

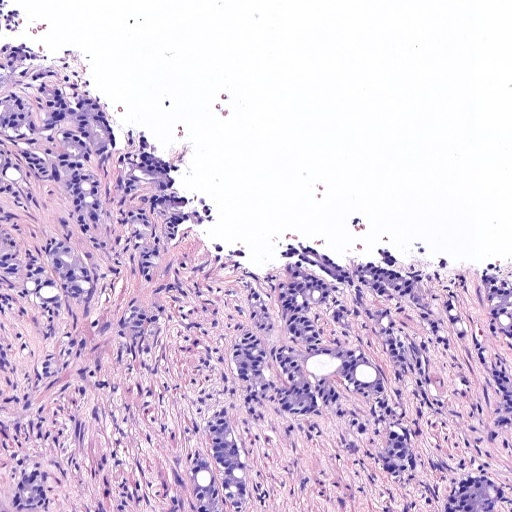

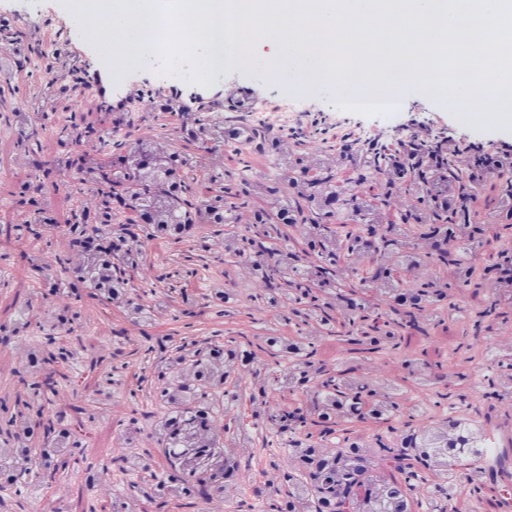

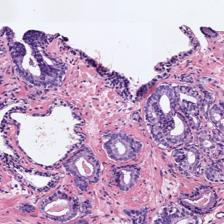

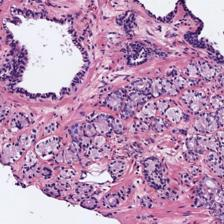

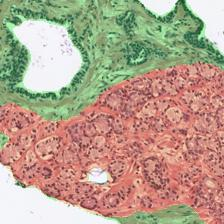

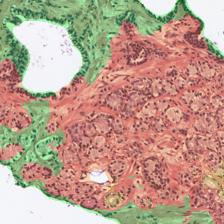

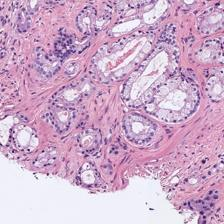

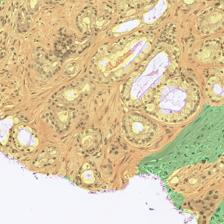

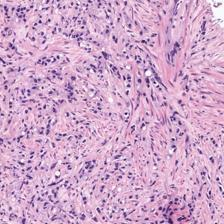

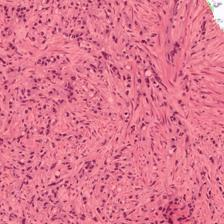

Figure 1: Examples of histology prostate regions. (a): Non-cancerous glands, (b): Gleason pattern 3, (c): Gleason pattern 4, and (d): Gleason pattern 5.

Methodology

The paper introduces a Residual U-Net architecture for pixel-level semantic segmentation, aiming to classify and localize cancerous patterns in prostate tissue across the full Gleason grading system. Several convolutional neural networks (CNNs), including Fully-Convolutional Networks (FCN), SegNet, and U-Net models, were evaluated on this task.

The standard U-Net, known for its symmetric encoder-decoder structure with skip connections, was enhanced with residual blocks to improve performance. Each residual block adds its input back to its output through an identity mapping, which lets gradients flow directly through the shortcut and makes deeper networks trainable while mitigating vanishing gradients. This modification was pivotal in achieving superior segmentation accuracy for the challenging high-grade Gleason patterns.
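To make the identity mapping concrete, the following is a minimal one-dimensional sketch of a generic residual block, written in plain Python. It is not the paper's implementation: the conv, ReLU, conv layout, the function names `conv1d_same` and `residual_block`, and the toy kernels are all assumptions chosen for illustration, and real networks would use 2-D convolutions with learned weights and normalization layers.

```python
def relu(values):
    """Element-wise rectified linear unit."""
    return [max(0.0, x) for x in values]


def conv1d_same(values, kernel):
    """1-D cross-correlation with zero padding (odd-length kernel assumed),
    so the output has the same length as the input."""
    k = len(kernel)
    pad = k // 2
    padded = [0.0] * pad + list(values) + [0.0] * pad
    return [
        sum(kernel[j] * padded[i + j] for j in range(k))
        for i in range(len(values))
    ]


def residual_block(values, kernel_a, kernel_b):
    """Hypothetical residual block: y = relu(x + F(x)), F = conv -> ReLU -> conv.

    The `x +` term is the identity shortcut; if F contributes nothing,
    the block simply passes relu(x) through.
    """
    f = conv1d_same(relu(conv1d_same(values, kernel_a)), kernel_b)
    return relu([x + fx for x, fx in zip(values, f)])


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0]
    # With all-zero kernels the residual branch vanishes and x passes through.
    print(residual_block(x, [0, 0, 0], [0, 0, 0]))
```

Because the shortcut carries the input forward unchanged, the block only has to learn a correction on top of the identity, which is the property usually credited with easing the training of deeper networks.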

Figure 2: U-Net architecture for prostate cancer gradation. BN: background, NC: non-cancerous, GP3: Gleason pattern 3, GP4: Gleason pattern 4, GP5: Gleason pattern 5.
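As a rough illustration of what pixel-level prediction over these five classes involves, the sketch below turns per-pixel class scores into a label map by picking the highest-scoring class for each pixel. The class order, the nested-list data layout, and the function name `label_map` are assumptions for this example and are not taken from the paper.

```python
# Class abbreviations as used in the Figure 2 caption; the order is assumed.
CLASSES = ["BN", "NC", "GP3", "GP4", "GP5"]


def label_map(scores):
    """Convert per-pixel class scores into class labels.

    `scores[r][c]` is a list with one score per entry of CLASSES for the
    pixel at row r, column c. The label is the class with the highest score.
    """
    return [
        [CLASSES[max(range(len(CLASSES)), key=lambda k: pixel[k])] for pixel in row]
        for row in scores
    ]


if __name__ == "__main__":
    toy_scores = [
        [[0.1, 0.7, 0.1, 0.05, 0.05], [0.0, 0.1, 0.2, 0.6, 0.1]],
    ]
    print(label_map(toy_scores))
```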

Experimental Results

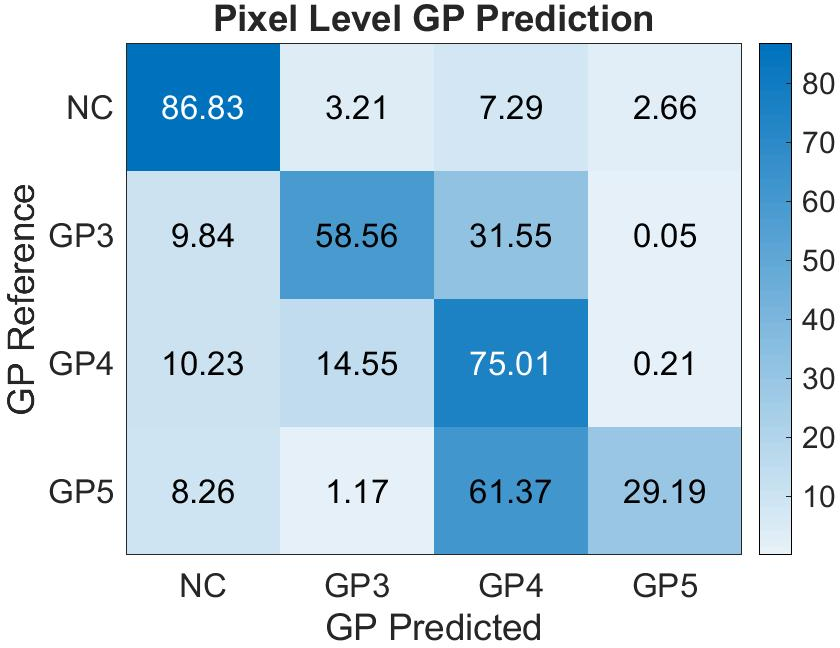

The models were validated experimentally on a dataset of 182 prostate biopsies, annotated at the pixel level by pathologists. The database was divided into patches for training, validation, and testing. Among the architectures tested, the ResU-Net achieved the best results, with a higher average Dice index than the other models, particularly when distinguishing between Gleason patterns.
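For reference, the Dice index of a class is twice the number of pixels where prediction and reference agree on that class, divided by the total number of pixels assigned to that class in either map. The sketch below computes it per class on small label maps; the function name `dice_per_class`, the nested-list representation, and the choice to return `None` for a class absent from both maps are assumptions, not the paper's evaluation code.

```python
def dice_per_class(reference, predicted, classes):
    """Per-class Dice index between two label maps of equal shape.

    Dice(c) = 2 * |R_c ∩ P_c| / (|R_c| + |P_c|), where R_c and P_c are the
    sets of pixels labelled c in the reference and in the prediction.
    Returns None for a class that appears in neither map.
    """
    scores = {}
    for c in classes:
        ref_count = sum(1 for row in reference for label in row if label == c)
        pred_count = sum(1 for row in predicted for label in row if label == c)
        overlap = sum(
            1
            for ref_row, pred_row in zip(reference, predicted)
            for r, p in zip(ref_row, pred_row)
            if r == c and p == c
        )
        total = ref_count + pred_count
        scores[c] = None if total == 0 else 2 * overlap / total
    return scores


if __name__ == "__main__":
    reference = [["NC", "GP3"], ["GP3", "GP4"]]
    predicted = [["NC", "GP3"], ["GP4", "GP4"]]
    print(dice_per_class(reference, predicted, ["NC", "GP3", "GP4", "GP5"]))
```

An average Dice index over the classes, as reported in the comparison, would then be the mean of the per-class values that are defined.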

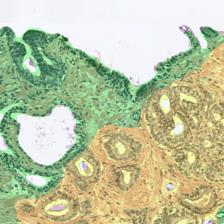

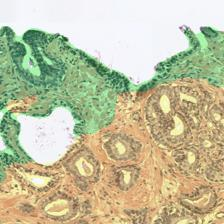

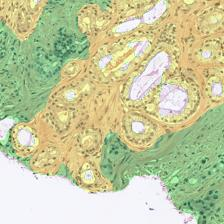

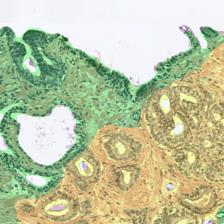

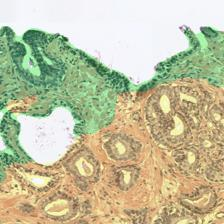

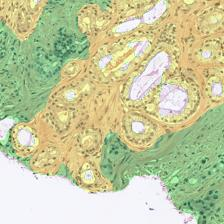

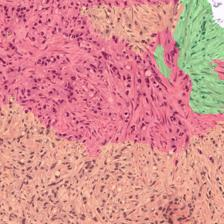

Figure 3: Examples of ResU-Net performance in the test set. Green: non-cancerous, yellow: Gleason pattern 3, orange: Gleason pattern 4, and red: Gleason pattern 5. (a): Original Image, (b): Reference, (c): Predicted.

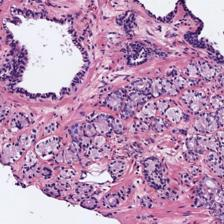

Despite its success, the model faced challenges due to data heterogeneity. Although the Dice index decreased in the test cohort, the ResU-Net still achieved a quadratic Cohen's kappa of 0.52, comparable to established image-level grading methods.
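The quadratic Cohen's kappa penalizes disagreements by the squared distance between the ordered grades, so confusing non-cancerous tissue with Gleason pattern 5 costs more than confusing adjacent patterns. The sketch below shows the standard computation from a confusion matrix such as the one in Figure 4; the function name and the toy matrices are illustrative assumptions and do not reproduce the paper's data.

```python
def quadratic_weighted_kappa(confusion):
    """Quadratically weighted Cohen's kappa from a square confusion matrix.

    confusion[i][j] counts items with reference grade i and predicted grade j,
    with grades in increasing order. Uses weights w_ij = (i - j)^2 / (k - 1)^2
    and kappa = 1 - sum(w * observed) / sum(w * expected).
    """
    k = len(confusion)
    n = sum(sum(row) for row in confusion)
    row_totals = [sum(row) for row in confusion]
    col_totals = [sum(confusion[i][j] for i in range(k)) for j in range(k)]

    observed = 0.0
    expected = 0.0
    for i in range(k):
        for j in range(k):
            weight = (i - j) ** 2 / (k - 1) ** 2
            observed += weight * confusion[i][j]
            # Count expected under chance agreement given the marginals.
            expected += weight * row_totals[i] * col_totals[j] / n

    if expected == 0:
        raise ValueError("kappa is undefined when all items fall in one grade")
    return 1.0 - observed / expected


if __name__ == "__main__":
    # Toy 2x2 matrices: perfect agreement and chance-level agreement.
    print(quadratic_weighted_kappa([[5, 0], [0, 5]]))
    print(quadratic_weighted_kappa([[2, 2], [2, 2]]))
```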

Figure 4: Confusion matrix of the pixel-level Gleason grade prediction in the test cohort with the proposed ResU-Net model. NC: non-cancerous, GP3: Gleason pattern 3, GP4: Gleason pattern 4, GP5: Gleason pattern 5.

Implications and Future Directions

This research has clear implications for clinical practice: it could improve the accuracy and efficiency of prostate cancer grading and thereby support diagnostic decision-making. By labelling every pixel rather than assigning one grade per image, the Residual U-Net offers a more granular analysis than existing image-level approaches, particularly for the segmentation of Gleason grades.

Future research could explore integrating gland-level analyses with pixel-level segmentation to bolster the detection of diverse cancerous structures. Additionally, exploring the synergy between deep learning models and clinical data to refine machine learning predictions could further bridge the gap between computational results and clinical pathology.

Conclusion

This study provides a significant advance in the automated grading of prostate cancer tissue through the application of deep learning in medical image analysis. The ResU-Net architecture has been validated as a robust model for detailed and precise segmentation according to the Gleason grading system, marking a step forward in computer-aided medical diagnostics. Continued research will be vital in expanding upon these methodologies, ensuring that computational advancements align with clinical needs for optimal patient outcomes.