- The paper proposes an early exit strategy using class means to bypass unnecessary computations in DNNs.

- It achieves up to 50% faster inference while maintaining or improving accuracy on datasets like ImageNet across various DNN architectures.

- The plug-and-play design proves practical for deploying models on low-power devices in both supervised and unsupervised learning scenarios.

Efficient Learning with Early Exit Mechanism: An Analysis of E2CM

Introduction

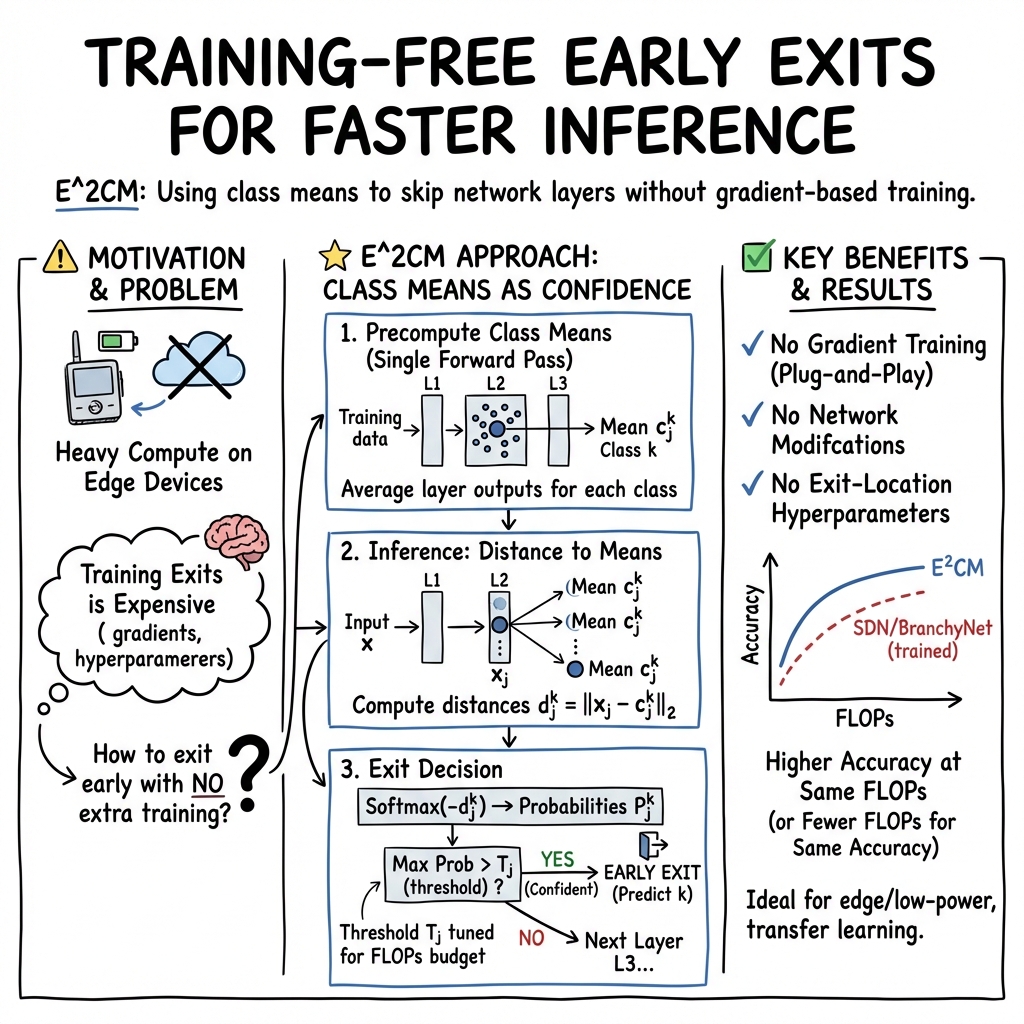

The expansion of deep learning models has often been limited by hardware constraints, particularly when deploying models on low-power devices. Traditional deep neural networks (DNNs) process every input through a fixed sequence of operations, which is often unnecessary for inputs with simpler features. The paper introduces Early Exit Class Means (E2CM), a novel mechanism intended to address this limitation by enabling efficient early exiting during both supervised and unsupervised learning tasks (2103.01148). This approach leverages class means, computed from the outputs of neural network layers, to determine optimal early exit points without requiring gradient-based training or network modification, thus making it particularly suitable for resource-constrained environments.

Methodology

Core Mechanism: Class Means: E2CM calculates class means by averaging, for each class, the outputs of a model's layers over the samples of that class. These class means serve as reference points for judging how closely an incoming input resembles each class during inference. Similarity is measured with Euclidean distance: if a layer's output lies sufficiently close to a class mean, the input exits early at that layer. Because the means are computed rather than learned, this plug-and-play approach avoids the overhead of training internal classifiers that early exit schemes typically require.
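
The class-mean computation and distance check described above can be sketched as follows. This is a minimal illustration rather than the paper's implementation: it assumes one layer's outputs are available as plain lists of floats, and the function names (`class_means`, `nearest_class`, `should_exit`) and the fixed absolute `threshold` are hypothetical choices made for the example. The paper's exact exit criterion may differ.

```python
import math
from collections import defaultdict


def class_means(features, labels):
    """Average one layer's feature vectors separately for each class."""
    sums = {}
    counts = defaultdict(int)
    for vec, label in zip(features, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }


def nearest_class(vec, means):
    """Return (label, Euclidean distance) for the closest class mean."""
    return min(
        ((label, math.dist(vec, mean)) for label, mean in means.items()),
        key=lambda pair: pair[1],
    )


def should_exit(vec, means, threshold):
    """Assumed rule: exit when the closest class mean is within `threshold`."""
    label, distance = nearest_class(vec, means)
    return distance <= threshold, label


# Toy data: four 2-D feature vectors from two classes.
features = [[0.0, 0.0], [2.0, 0.0], [10.0, 10.0], [10.0, 12.0]]
labels = [0, 0, 1, 1]
means = class_means(features, labels)
```

No gradient step appears anywhere in the sketch: the means are obtained from a single pass over labeled data, which is what lets the approach skip internal classifier training.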

Plug-and-Play Flexibility: Unlike traditional early exit systems, E2CM leaves the architecture of the base DNN unchanged and only adds the class-mean comparisons used to make early exit decisions. This flexibility is particularly useful for edge computing: pretrained models can be used without retraining, which eases the deployment of DNNs on diverse hardware platforms.
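
One way to picture the plug-and-play property is an inference loop that wraps an untouched model. The sketch below is illustrative only: it assumes the base network can be expressed as a list of callables (`layers`) plus a `final_classifier`, that per-layer class means have already been computed, and that each layer has its own distance threshold. All of these names and the per-layer threshold scheme are assumptions for the example, not details taken from the paper.

```python
import math


def infer_with_early_exit(x, layers, layer_means, thresholds, final_classifier):
    """Run layers in order and stop once an output is close to a class mean.

    Returns (predicted_label, number_of_layers_executed). The base layers and
    the final classifier are called as-is; nothing in them is modified.
    """
    h = x
    for depth, (layer, means, threshold) in enumerate(
        zip(layers, layer_means, thresholds), start=1
    ):
        h = layer(h)
        label, distance = min(
            ((lbl, math.dist(h, mean)) for lbl, mean in means.items()),
            key=lambda pair: pair[1],
        )
        if distance <= threshold:
            return label, depth  # early exit: remaining layers are skipped
    # No exit fired, so fall back to the model's ordinary output.
    return final_classifier(h), len(layers)


# Toy two-layer "network" standing in for a pretrained model.
layers = [
    lambda v: [2.0 * a for a in v],
    lambda v: [a + 1.0 for a in v],
]
layer_means = [
    {0: [0.0, 0.0], 1: [4.0, 4.0]},
    {0: [1.0, 1.0], 1: [5.0, 5.0]},
]
thresholds = [0.5, 0.5]
final_classifier = lambda h: 0 if sum(h) < 6 else 1

easy = infer_with_early_exit([2.0, 2.0], layers, layer_means, thresholds, final_classifier)
hard = infer_with_early_exit([1.0, 0.5], layers, layer_means, thresholds, final_classifier)
```

In this toy setup the first input lands on a class mean after one layer and exits there, while the second matches no mean closely enough and runs the full network.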

Combination and Clustering: E2CM proved useful in both supervised and unsupervised learning. When combined with existing early exit methods, it improved accuracy without adding significant computation. It also extended to unsupervised tasks, where it made clustering more efficient when combined with frameworks such as Deep Embedding Clustering (DEC).

Results

Modeling Efficacy: E2CM reduced computational cost while maintaining or improving model accuracy across DNN architectures such as ResNet, MobileNetV3, and EfficientNet. In supervised settings, it achieved up to 50% faster inference, or comparable gains in accuracy, relative to existing early exit mechanisms.

Efficiency in Resource Utilization: The reported results indicate that E2CM adds little overhead in either computation or memory. Specifically, the integrated setup noticeably reduced FLOPs without a significant memory cost, even as the number of classes and layers increased, which makes it well suited to large-scale problems.
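
To make the memory side of this concrete, a rough back-of-the-envelope estimate is possible. The sketch below assumes one mean vector is stored per class at each exit layer, at the layer's full output dimensionality, in 32-bit floats. The function name and the example sizes are hypothetical and are not figures from the paper.

```python
def class_mean_memory_bytes(num_classes, feature_dims, bytes_per_value=4):
    """Bytes needed to store one mean vector per class at each exit layer.

    feature_dims lists the flattened output size of every exit layer.
    """
    return sum(num_classes * dim * bytes_per_value for dim in feature_dims)


# Hypothetical setup: 10 classes, three exit layers, float32 storage.
overhead = class_mean_memory_bytes(10, [1024, 512, 256])
```

Under these assumptions the stored means grow linearly with both the number of classes and the total feature size of the exit layers, so the estimate is easy to redo for any particular model.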

Performance in Real-World Deployments: The fine-tuning example on the ImageNet dataset reinforced E2CM's suitability for practical low-power scenarios, where it not only preserved battery life but also improved performance metrics compared with running every input through the full network.

Discussion and Future Work

E2CM offers a practical way to optimize DNN deployment and operation in environments where power and computational resources are limited. Its applicability to a range of architectures highlights its adaptability, although further reductions in overhead through quantization and pruning remain to be explored and could increase its utility.

Moreover, the rising prominence of edge computing and IoT applications offers fertile ground for E2CM’s wider adoption. Future research could explore integrating E2CM with large language models (LLMs) and extending its early exit criterion beyond the Euclidean norm, either by using more sophisticated distance metrics or by incorporating learnable parameters to make inference more adaptive.

Conclusion

E2CM is a robust and effective early exit strategy that meets the growing demand for efficient machine learning deployment on low-resource platforms. Its independence from extensive retraining and its straightforward integration into existing networks make it a significant development for the field, promising better computational efficiency and more practical models (2103.01148).