- The paper presents a Bilayer Tensor Network that integrates perception, episodic memory, and semantic decoding into a unified cognitive framework.

- It details an architecture with an index layer and a representation layer that blend recent and remote memories with real-time perceptual data.

- Experiments using augmented datasets (VRD-E and VRD-EX) validate the model’s superior performance in visual relationship detection tasks.

"The Tensor Brain: A Unified Theory of Perception, Memory and Semantic Decoding"

Introduction

The paper "The Tensor Brain: A Unified Theory of Perception, Memory and Semantic Decoding" introduces the Bilayer Tensor Network (BTN), a computational model designed to integrate perception and memory into a cohesive framework. The model relies on oscillating interactions between a symbolic index layer and a subsymbolic representation layer. Together, these two layers provide a unified approach to understanding the cognitive processes of perception and memory, and offer insights into human intelligence.

Bilayer Tensor Network Architecture

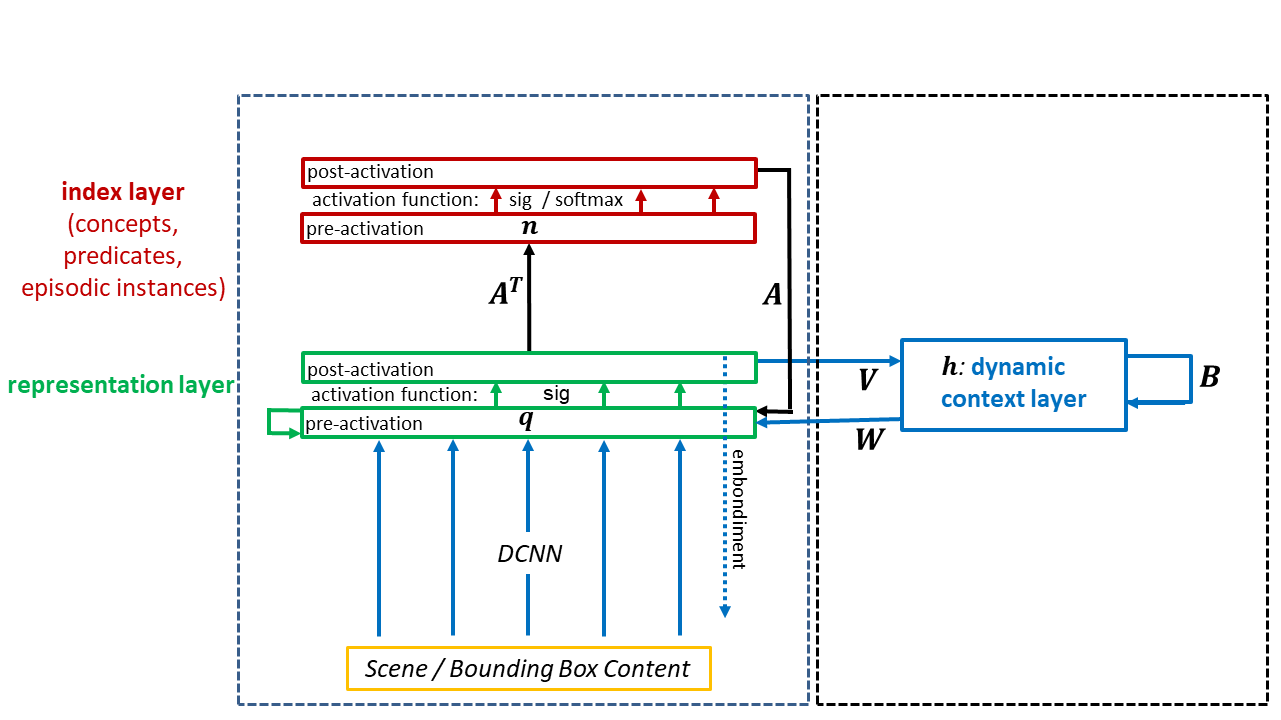

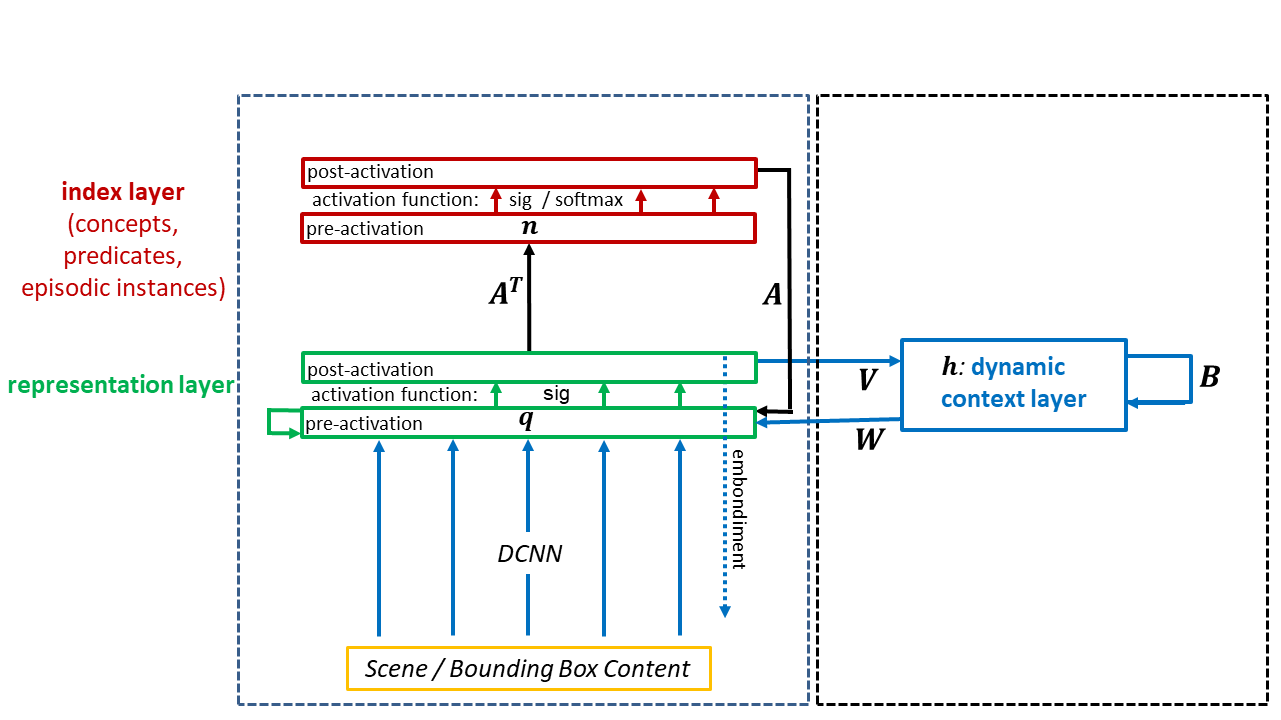

The BTN comprises two primary layers: the index layer and the representation layer. The index layer encodes indices for concepts, predicates, and episodic instances, functioning as focal points of activity. The representation layer, akin to a "mental canvas," broadcasts cognitive states throughout the brain. The BTN thus serves as a communication platform linking perceptual inputs to high-level cognitive processes.

Figure 1: Perception and memory: Our model architecture consists of two main layers, the representation layer q and the index layer n.
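
The interaction between the two layers can be illustrated with a minimal sketch. It assumes that each index owns an embedding vector, that indices are scored by a dot product with the representation vector q, that one index is sampled from a softmax over those scores, and that the sampled embedding is added back to q. The function names, the additive feedback rule, and the toy data are illustrative assumptions, not the paper's exact formulation.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def index_probabilities(q, embeddings):
    """Score every index by the dot product of its embedding with q."""
    scores = [sum(a_i * q_i for a_i, q_i in zip(a, q)) for a in embeddings]
    return softmax(scores)

def oscillation_step(q, embeddings, rng):
    """One bottom-up / top-down cycle (assumed form).

    Bottom-up: the representation layer q activates the index layer.
    Top-down: the sampled index feeds its embedding back into q.
    """
    probs = index_probabilities(q, embeddings)
    k = rng.choices(range(len(embeddings)), weights=probs, k=1)[0]
    new_q = [q_i + a_i for q_i, a_i in zip(q, embeddings[k])]
    return k, new_q

# Hypothetical toy data: three concept indices with 3-dimensional embeddings.
labels = ["dog", "ball", "grass"]
embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
q = [2.0, 0.5, 0.1]  # stand-in for a perceptual activation pattern

rng = random.Random(0)
k, q_next = oscillation_step(q, embeddings, rng)
print(labels[k], q_next)
```

Sampling rather than taking the highest-scoring index keeps the step stochastic, which matches the summary's description of the index layer sampling symbolic indices.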

Perception and Episodic Memory

The paper posits that perception and memory are deeply intertwined processes. Perception activates the representation layer, triggering the index layer to sample symbolic indices representing currently perceived entities. This process enables the blending of recent and remote episodic memories with real-time perceptual data, thereby enriching the agent's present contextual understanding. The "recent episodic memory" provides immediate context, while "remote episodic memory" recalls analogous past experiences, guiding decision-making.

Figure 2: Recent episodic memory experience: An illustration of the effect of recent episodic memory using VRD-E data.
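
One way to picture the blending of perception with recent and remote episodic memory is sketched below. It assumes that the recent memory is a stored representation vector from the immediate past, that the remote memory is retrieved as the stored episode most similar to the current percept (cosine similarity), and that the three vectors are combined by a weighted sum. The weights, the retrieval rule, and the episode names are hypothetical choices made for illustration only.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    norm = math.sqrt(dot(u, u)) * math.sqrt(dot(v, v))
    return dot(u, v) / norm if norm else 0.0

def recall_remote(q, episodes):
    """Return (name, vector) of the stored episode most similar to q."""
    return max(episodes.items(), key=lambda item: cosine(q, item[1]))

def blend(perception, recent, remote, w_recent=0.5, w_remote=0.25):
    """Weighted sum of percept, recent memory, and recalled remote memory.

    The weights are assumed values, not taken from the paper.
    """
    return [p + w_recent * r + w_remote * m
            for p, r, m in zip(perception, recent, remote)]

# Hypothetical toy data.
perception = [1.0, 0.0, 0.2]
recent = [0.8, 0.1, 0.0]
episodes = {
    "park_last_year": [0.9, 0.0, 0.3],
    "kitchen_yesterday": [0.0, 1.0, 0.0],
}

name, remote = recall_remote(perception, episodes)
q = blend(perception, recent, remote)
print(name, q)
```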

Semantic Memory and Generalization

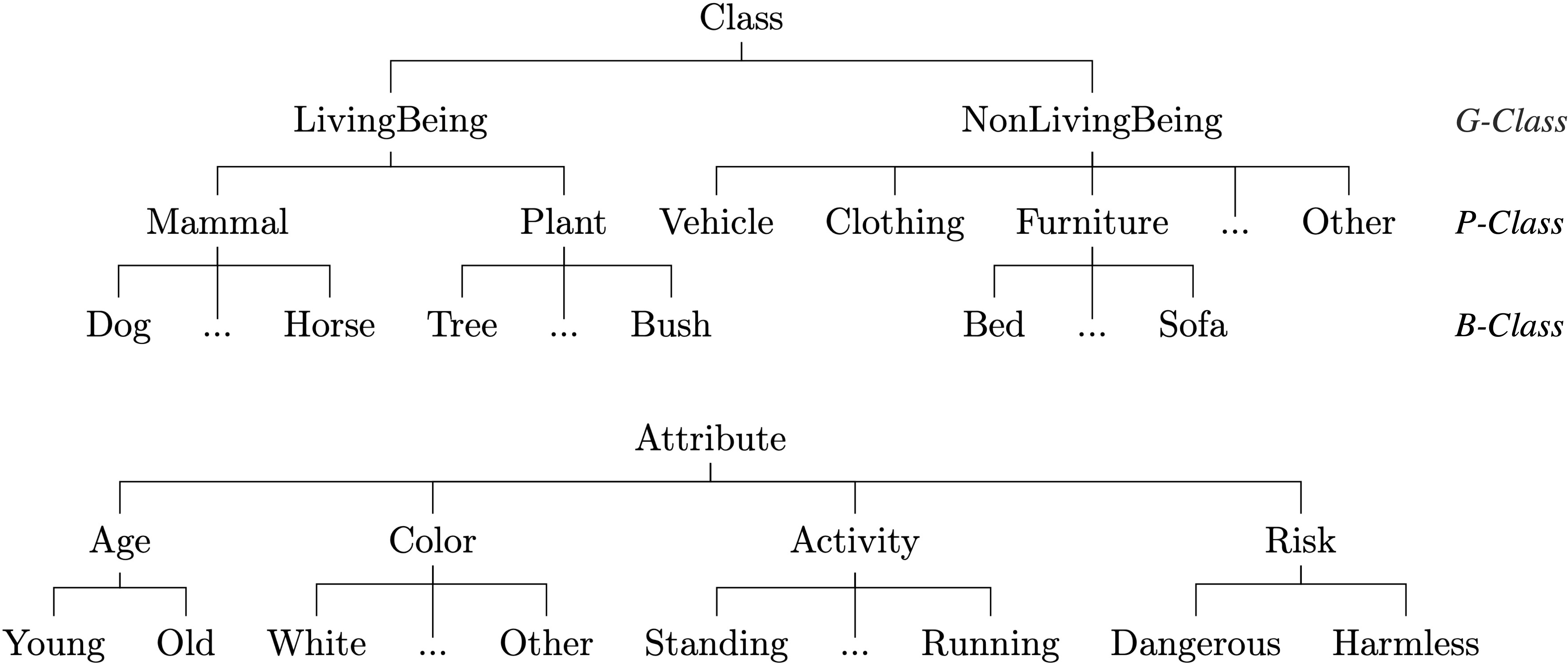

Semantic memory models the time-invariant statistical distributions of concepts and relations. It acts as a repository for knowledge spanning different episodes, forming a bridge to future instances. Generalizations, such as ontological relationships, are encoded as general statements that can be embedded symbolically and are robust to new perceptual inputs. Semantic decoding within the BTN involves reconstructing triples (e.g., [Subject, Predicate, Object]) from the embedded representations, grounding abstract concepts in perceptual realities.
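
Triple decoding can be sketched as three sequential decisions over the representation vector. The example below assumes greedy decoding (the best-scoring label is chosen instead of sampled, so the result is deterministic), additive feedback of the chosen embedding, and exclusion of the subject from the object candidates. All names, embeddings, and these three rules are illustrative assumptions rather than the paper's procedure.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def decode_slot(q, candidates):
    """Pick the label whose embedding best matches q, then feed it back."""
    label = max(candidates, key=lambda name: dot(candidates[name], q))
    new_q = [q_i + a_i for q_i, a_i in zip(q, candidates[label])]
    return label, new_q

def decode_triple(q, entities, predicates):
    """Decode (subject, predicate, object) one slot at a time."""
    s, q = decode_slot(q, entities)
    p, q = decode_slot(q, predicates)
    # Assumption: the object must differ from the subject.
    remaining = {n: v for n, v in entities.items() if n != s}
    o, q = decode_slot(q, remaining)
    return (s, p, o)

# Hypothetical embeddings in a 5-dimensional representation space.
entities = {
    "person": [1.0, 0.0, 0.0, 0.0, 0.0],
    "horse":  [0.0, 1.0, 0.0, 0.0, 0.0],
    "hat":    [0.0, 0.0, 1.0, 0.0, 0.0],
}
predicates = {
    "rides": [0.0, 0.0, 0.0, 1.0, 0.0],
    "wears": [0.0, 0.0, 0.0, 0.0, 1.0],
}
q = [1.0, 0.8, 0.1, 0.6, 0.2]  # stand-in for a scene representation

triple = decode_triple(q, entities, predicates)
print(triple)
```

With these orthogonal toy embeddings the feedback step does not change later choices; with learned, overlapping embeddings the earlier slots would influence which predicate and object are decoded next.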

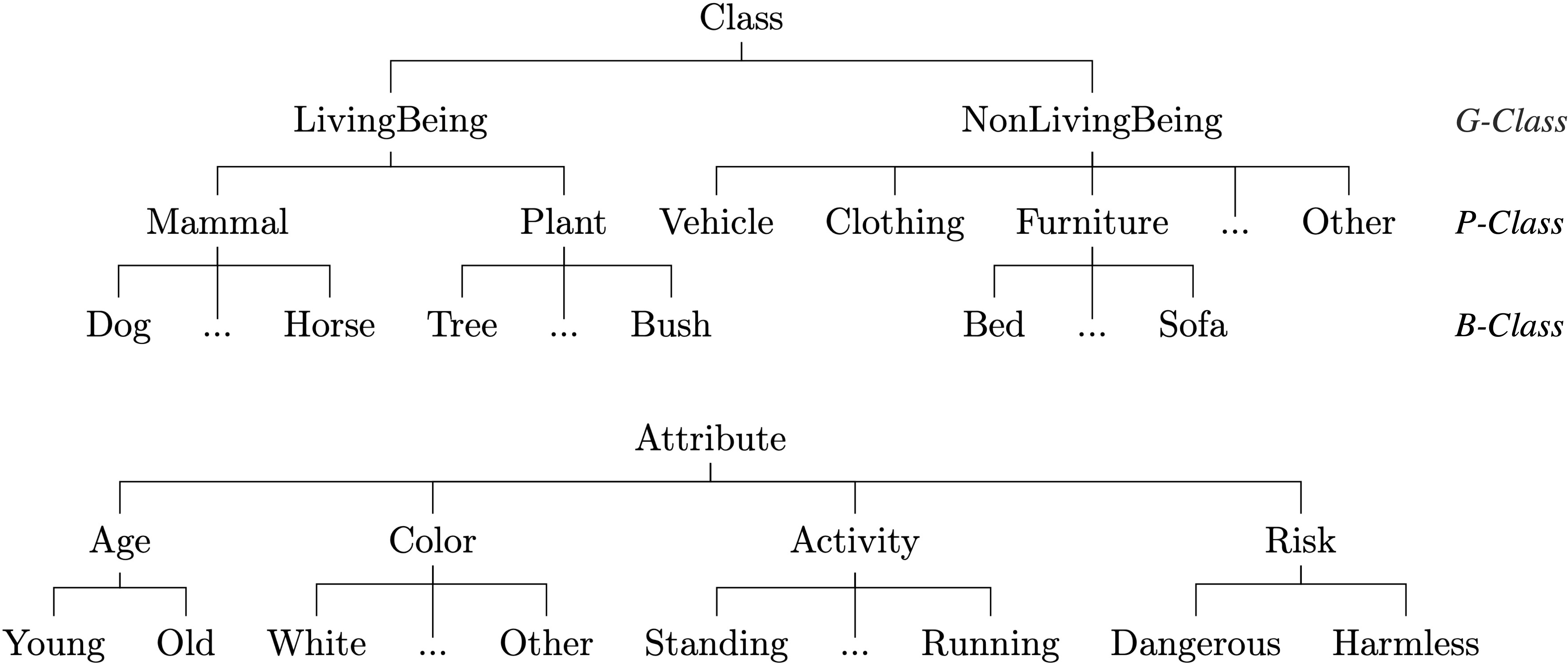

Figure 3: Ontologies. (Top) Class ontology. (Bottom) Attribute ontology.

Functional Implications and Consciousness

The BTN relates to theories in cognitive neuroscience such as the "global workspace" theory of consciousness. By leveraging semantic attention, the BTN continuously updates the brain's cognitive state, contributing to a seamless flow of conscious perception, episodic recall, and semantic understanding. The model suggests that consciousness could emerge from cyclical modulations between subsymbolic representations and symbolic decoding.

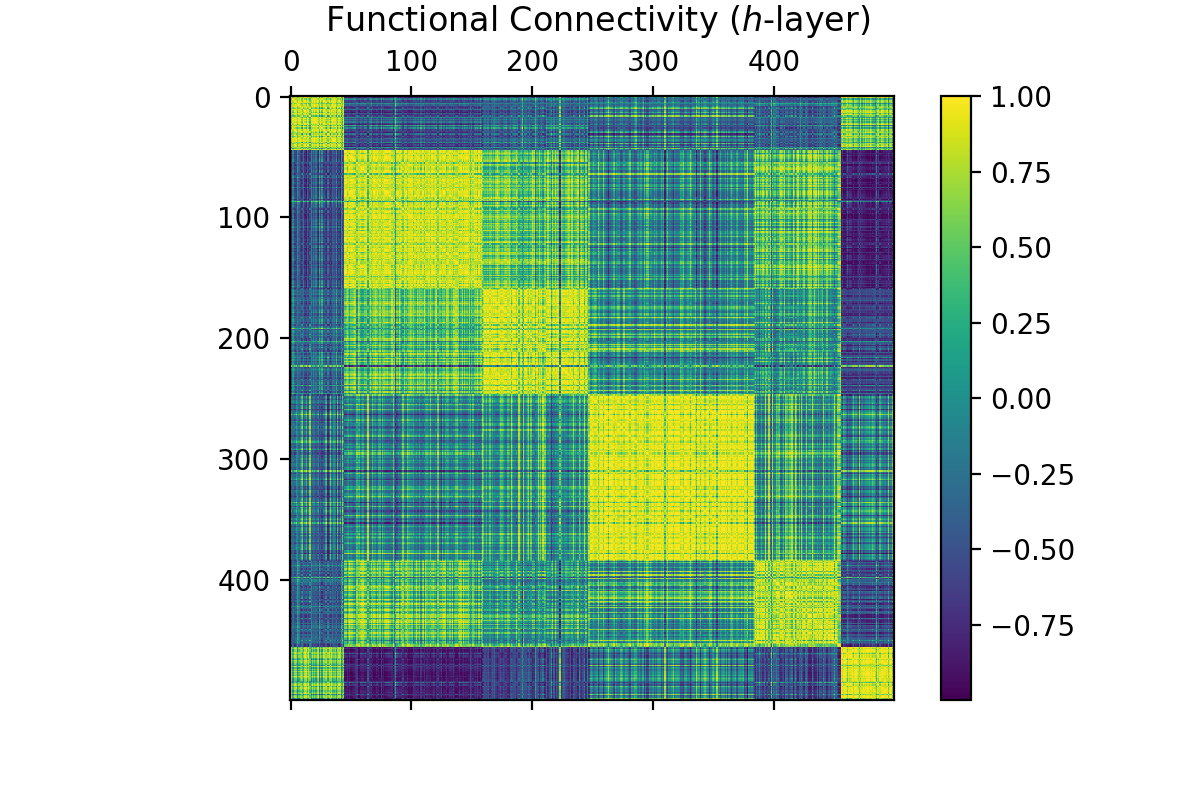

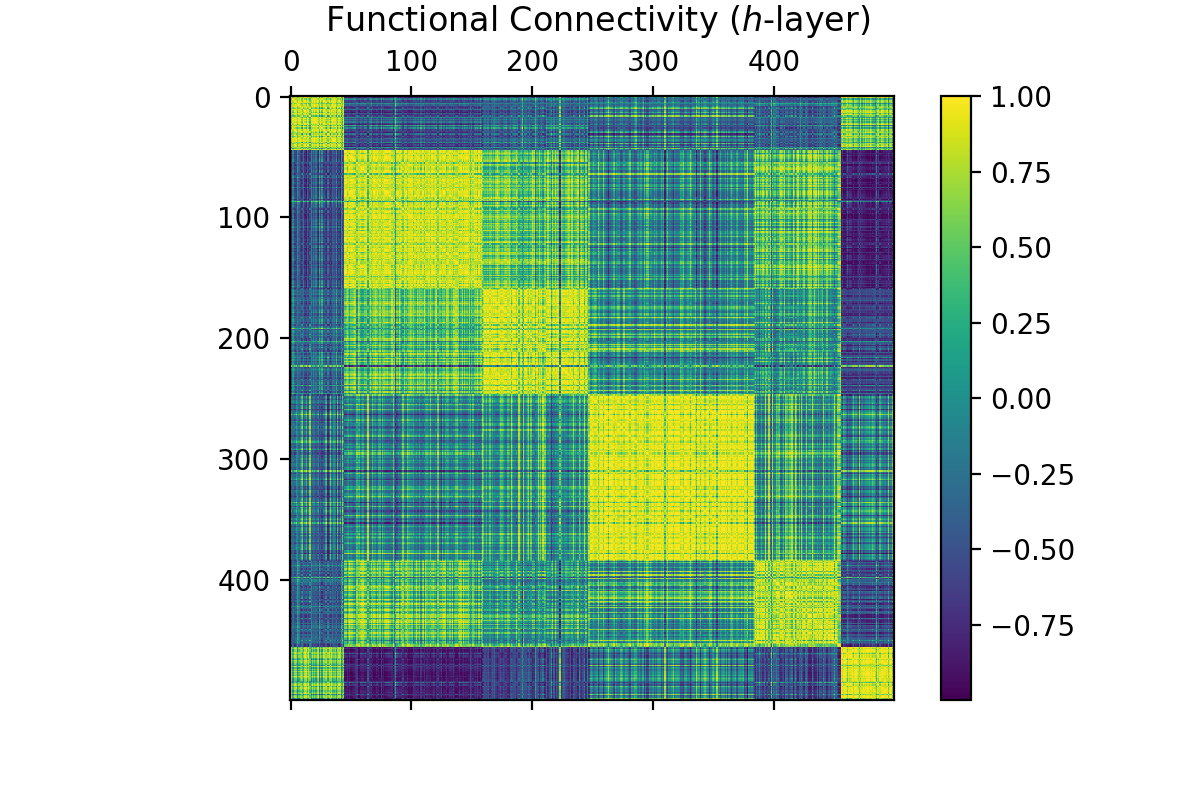

Figure 4: Pearson correlation between units in the dynamic context layer, followed by spectral clustering to order columns and rows.

Experiments and Validation

Experiments on the augmented datasets VRD-E and VRD-EX validate the model's effectiveness in integrating perception with memory. By simulating several operational modes (direct perception, sampling, and attention), the BTN demonstrates superior performance in visual relationship detection tasks. These results underscore the BTN's capability in both intuitive and analytical cognitive tasks, making it a viable model for understanding complex brain functions.

Conclusion

The paper presents the BTN as a framework for integrating perception and memory into a unified cognitive model. In doing so, it sheds light on how brains may achieve such complex processes and provides a tool for implementing AI systems that mimic these abilities. Future work includes refining the architecture to capture more nuanced aspects of human cognition and consciousness.

By bridging gaps between perception, memory, and semantic understanding, this model has significant implications for developing more sophisticated artificial intelligence systems capable of human-like contextual reasoning and learning.