- The paper introduces TimeVAE, a Variational Auto-Encoder (VAE) architecture that generates synthetic multivariate time series while capturing their temporal dependencies.

- The model can incorporate interpretable temporal structures, such as trend and seasonal components, to support forecasting and prediction tasks.

- Experiments on four datasets show that TimeVAE outperforms GAN-based methods in sample quality and training efficiency, particularly when training data is limited.

TimeVAE: A Variational Auto-Encoder for Multivariate Time Series Generation

Introduction

The paper introduces TimeVAE, a Variational Auto-Encoder (VAE) architecture designed to generate synthetic multivariate time series data. TimeVAE addresses limitations of earlier generative models, notably approaches based on Generative Adversarial Networks (GANs) such as Recurrent Conditional GANs (RCGANs), which often require large amounts of training data and computation yet still struggle to capture the temporal dependencies inherent in time series. TimeVAE builds on the VAE framework for two reasons: its inherent de-noising capability, and the ease with which domain-specific temporal structures, such as polynomial trends and seasonal patterns, can be built into the model.

Methodology

Variational Auto-Encoder Framework

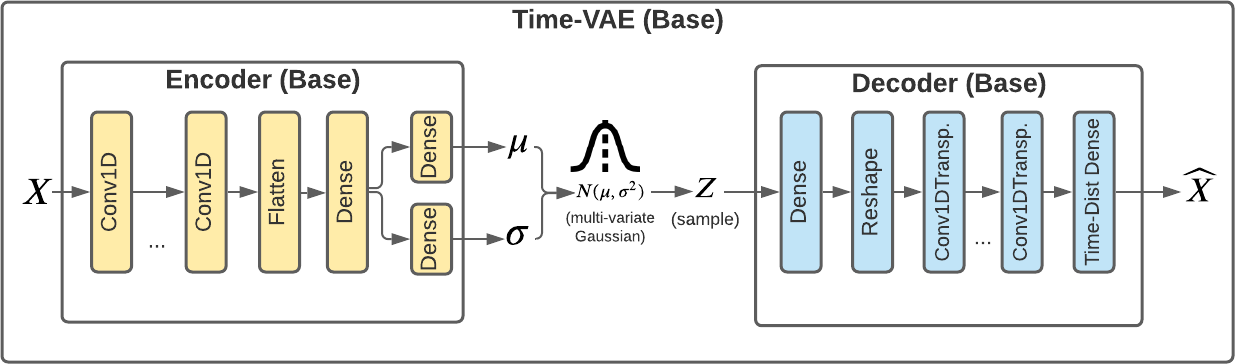

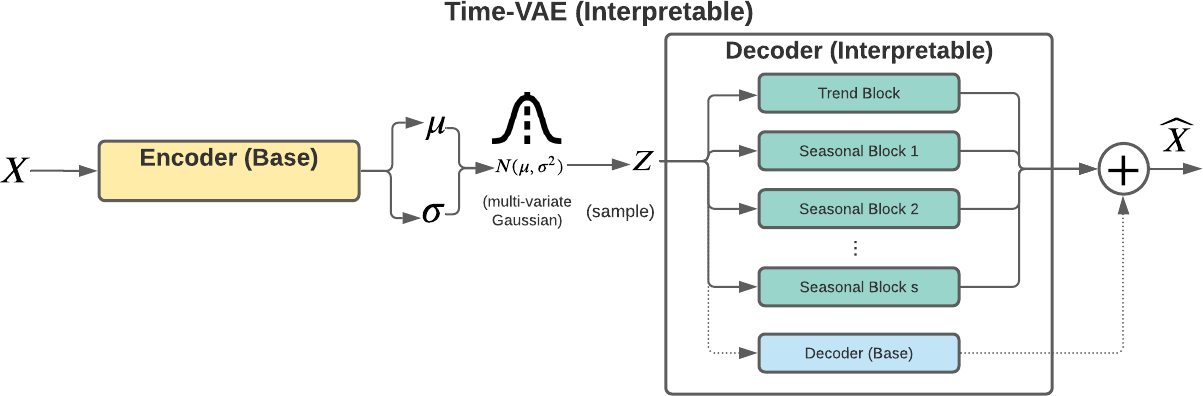

TimeVAE uses a VAE whose encoder and decoder are designed to generate realistic time series samples. The encoder outputs the parameters of an approximate posterior over the latent variables, and the reparameterization trick makes sampling from that posterior differentiable, which enables end-to-end training while capturing the temporal and feature distributions of the data. The architecture also supports injecting specific temporal constructs to make generation interpretable, which is advantageous when real-world training data is sparse.
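The reparameterization step can be illustrated with a minimal sketch. It assumes a diagonal Gaussian posterior parameterized by a mean and a log-variance, which is the usual VAE choice rather than something this summary specifies. The function names and example values are hypothetical, and plain Python lists stand in for the tensors a real encoder would produce.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps element-wise, with eps ~ N(0, 1).

    The randomness lives entirely in eps, so z stays a deterministic,
    differentiable function of mu and log_var.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions.

    This is the standard closed-form regularizer in a VAE loss; how it is
    weighted against the reconstruction term is not assumed here.
    """
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mu, log_var))


# Hypothetical encoder outputs for a 2-dimensional latent space.
rng = random.Random(0)
mu = [0.5, -1.0]
log_var = [0.0, -2.0]

z = reparameterize(mu, log_var, rng)
kl = kl_to_standard_normal(mu, log_var)
print("latent sample:", z)
print("KL term:", kl)
```

In a trained model, `mu` and `log_var` would come from the encoder network, and `z` would be passed to the decoder to reconstruct or generate a sequence.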

Figure 1: Block diagram of components in Base TimeVAE.

Interpretable Architecture

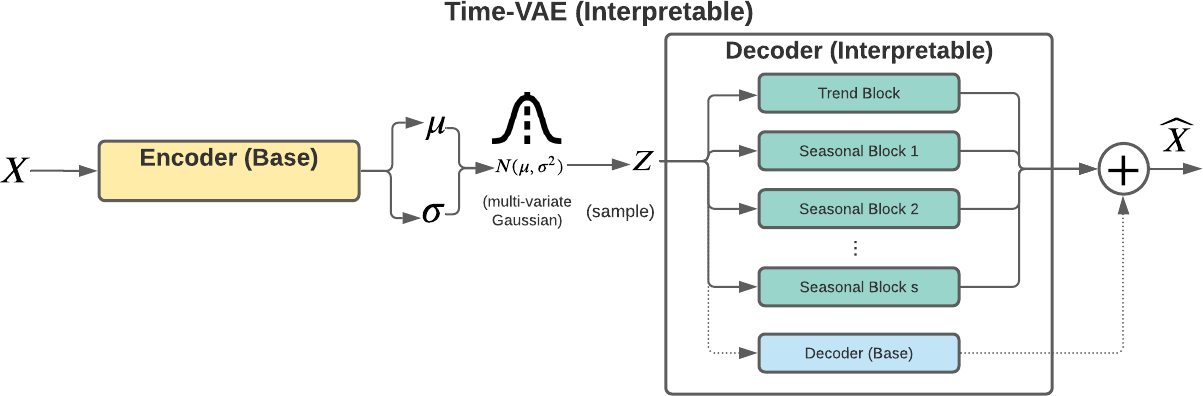

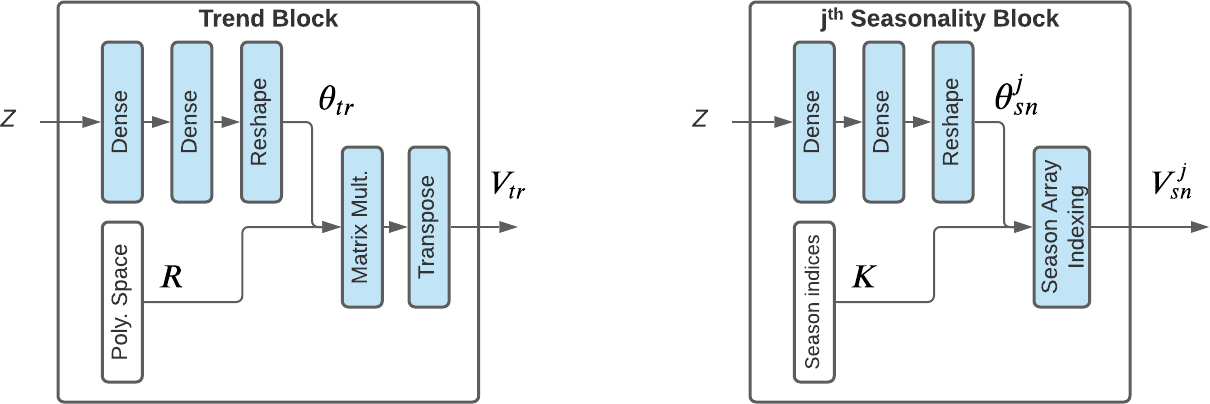

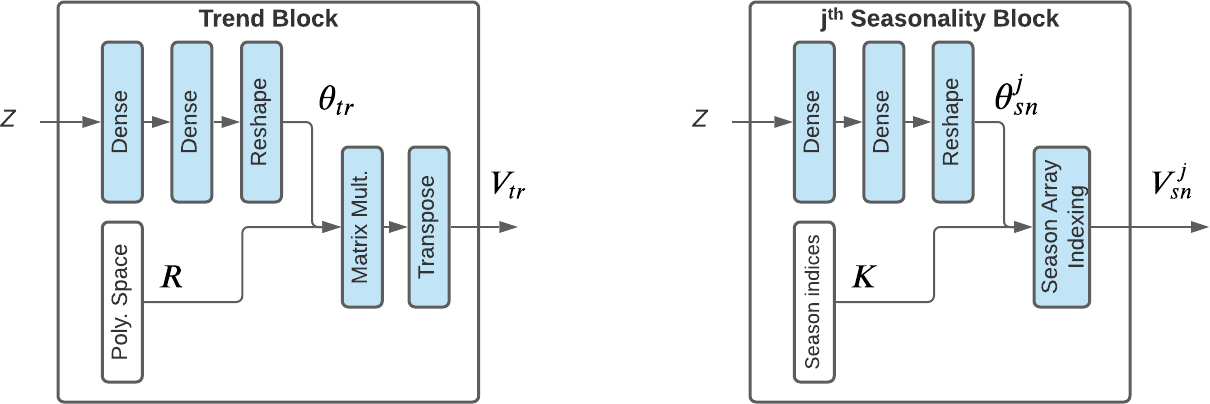

Interpretable TimeVAE extends the base model by adding custom temporal structures to the decoder. These structures are implemented as parallel blocks that decompose a time series into level, trend, and seasonal components, a decomposition that draws on established forecasting techniques such as Holt-Winters exponential smoothing. The trend and seasonality blocks, displayed in Figure 2, let practitioners inject subject matter expertise, which improves the interpretability and utility of the generated data. Individual blocks can be toggled on or off, giving flexibility to generate time series with different temporal dynamics.

Figure 3: Block diagram of components in Interpretable TimeVAE.

Figure 2: Trend and Seasonality Blocks in Interpretable TimeVAE.
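The decomposition into level, trend, and seasonal components can be sketched as a sum of parallel blocks. This is an illustrative simplification, not the paper's implementation: in the model the coefficients would be produced from the latent vector by learned layers, whereas here they are passed in directly. All function names, parameters, and the univariate setting are assumptions made for the example.

```python
def trend_component(coeffs, num_steps):
    """Polynomial trend: sum over p of coeffs[p] * t^(p+1), t scaled to [0, 1)."""
    return [sum(c * (t / num_steps) ** (p + 1) for p, c in enumerate(coeffs))
            for t in range(num_steps)]


def seasonal_component(season_values, duration, num_steps):
    """One value per season; each season lasts `duration` steps, then the cycle repeats."""
    n = len(season_values)
    return [season_values[(t // duration) % n] for t in range(num_steps)]


def decode(level, coeffs, season_values, duration, num_steps,
           use_trend=True, use_seasonality=True):
    """Sum the outputs of the enabled blocks into one univariate series."""
    series = [level] * num_steps
    if use_trend:
        trend = trend_component(coeffs, num_steps)
        series = [s + x for s, x in zip(series, trend)]
    if use_seasonality:
        seasonal = seasonal_component(season_values, duration, num_steps)
        series = [s + x for s, x in zip(series, seasonal)]
    return series


# Hypothetical values: linear trend with slope 2, two seasons of 2 steps each.
full = decode(1.0, [2.0], [0.0, 1.0], duration=2, num_steps=4)
level_only = decode(1.0, [2.0], [0.0, 1.0], duration=2, num_steps=4,
                    use_trend=False, use_seasonality=False)
print(full)
print(level_only)
```

Because each block contributes an additive term, the trend coefficients and seasonal values can be inspected separately, which is the sense in which such a decoder is interpretable. The `use_trend` and `use_seasonality` flags mirror the idea of toggling components.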

Experimentation and Results

Data Sets and Comparative Evaluation

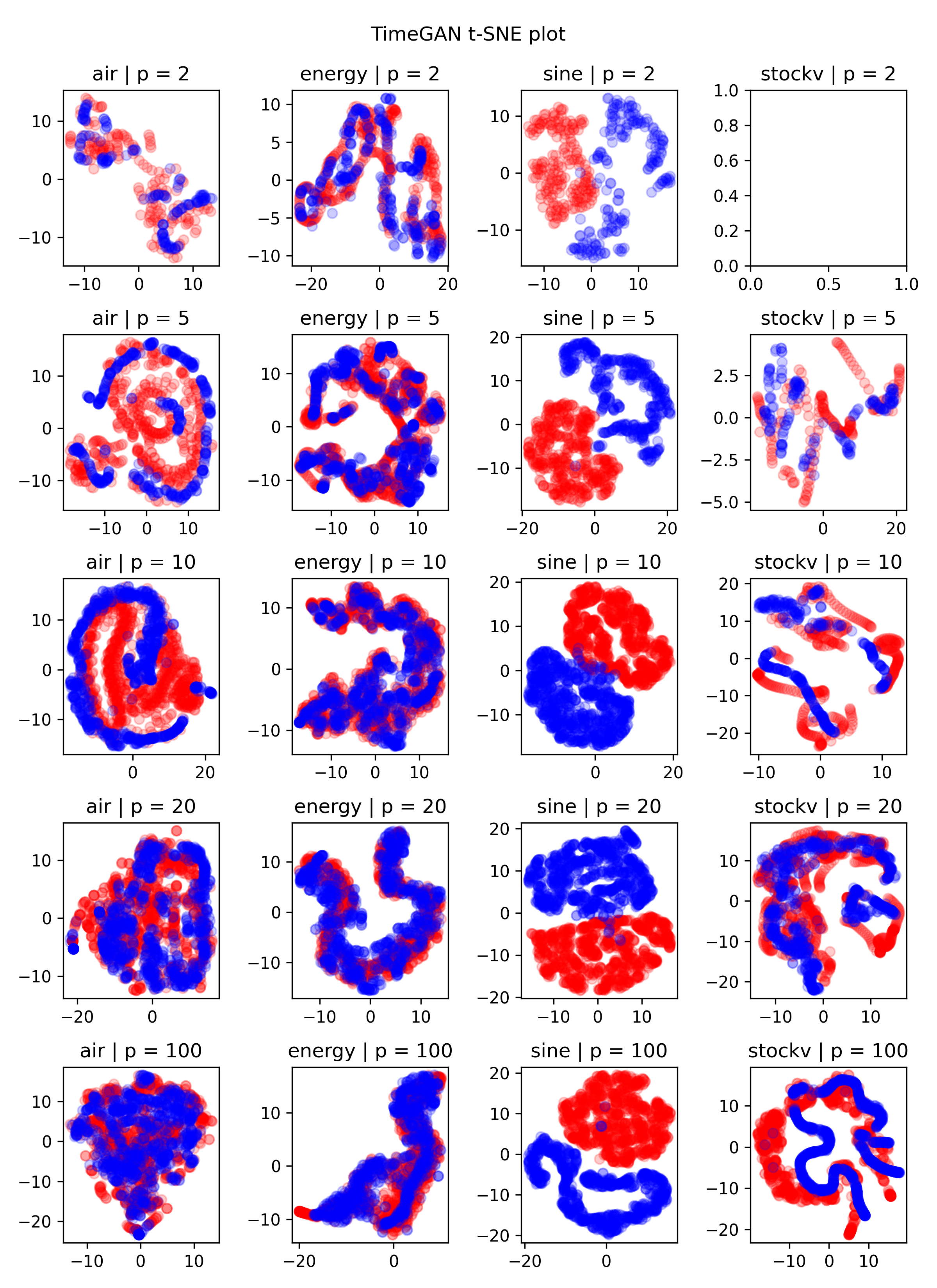

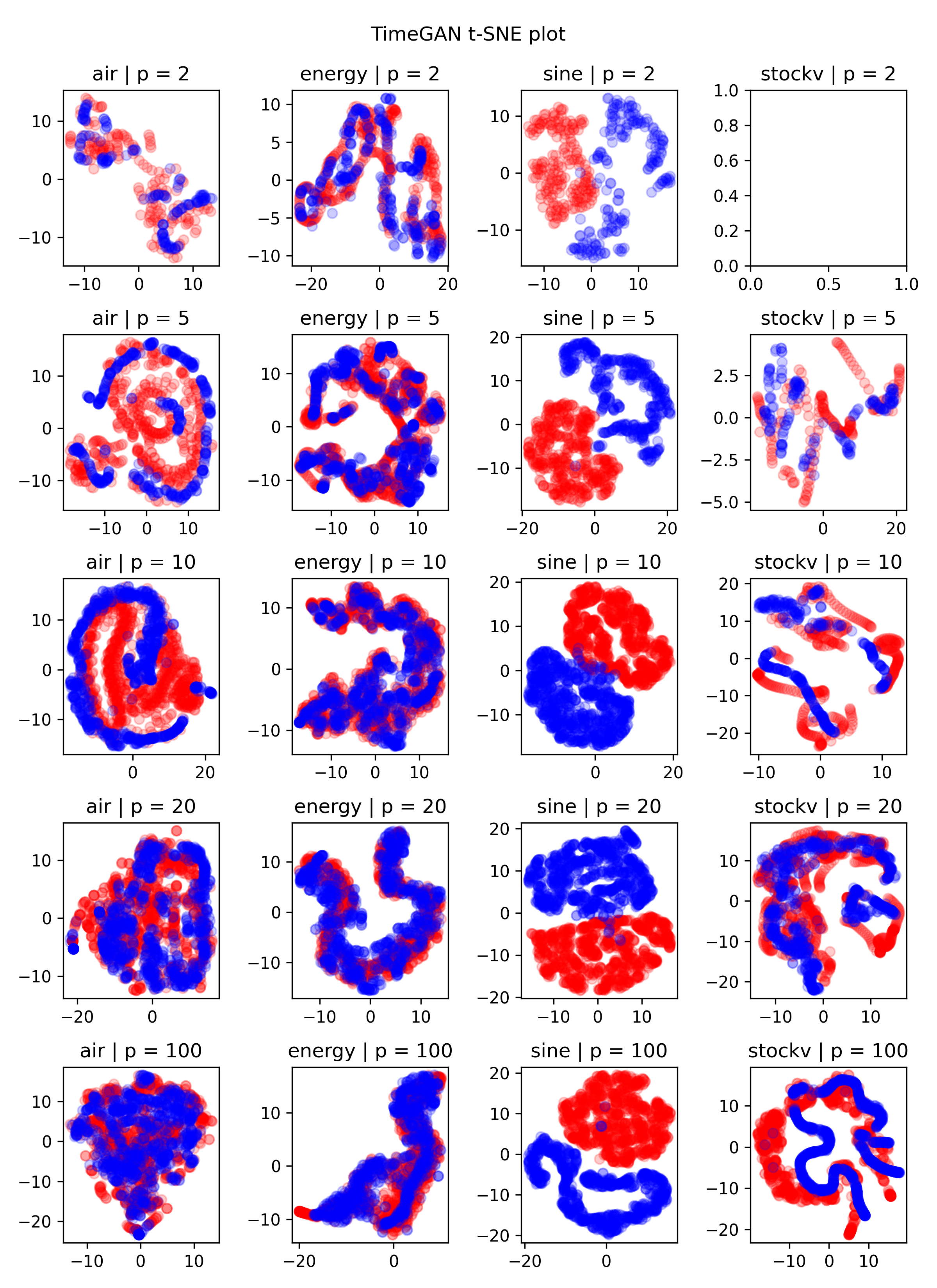

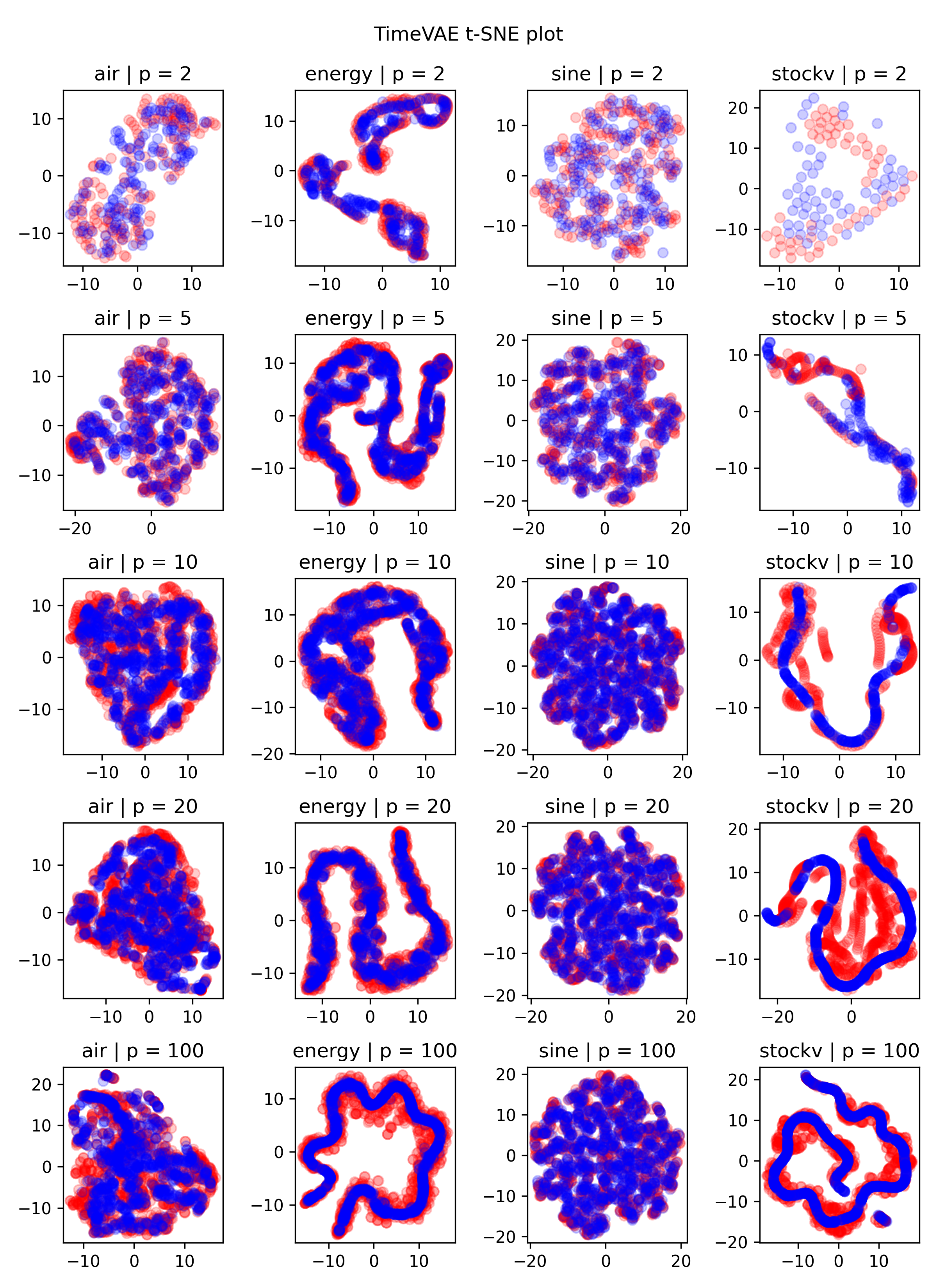

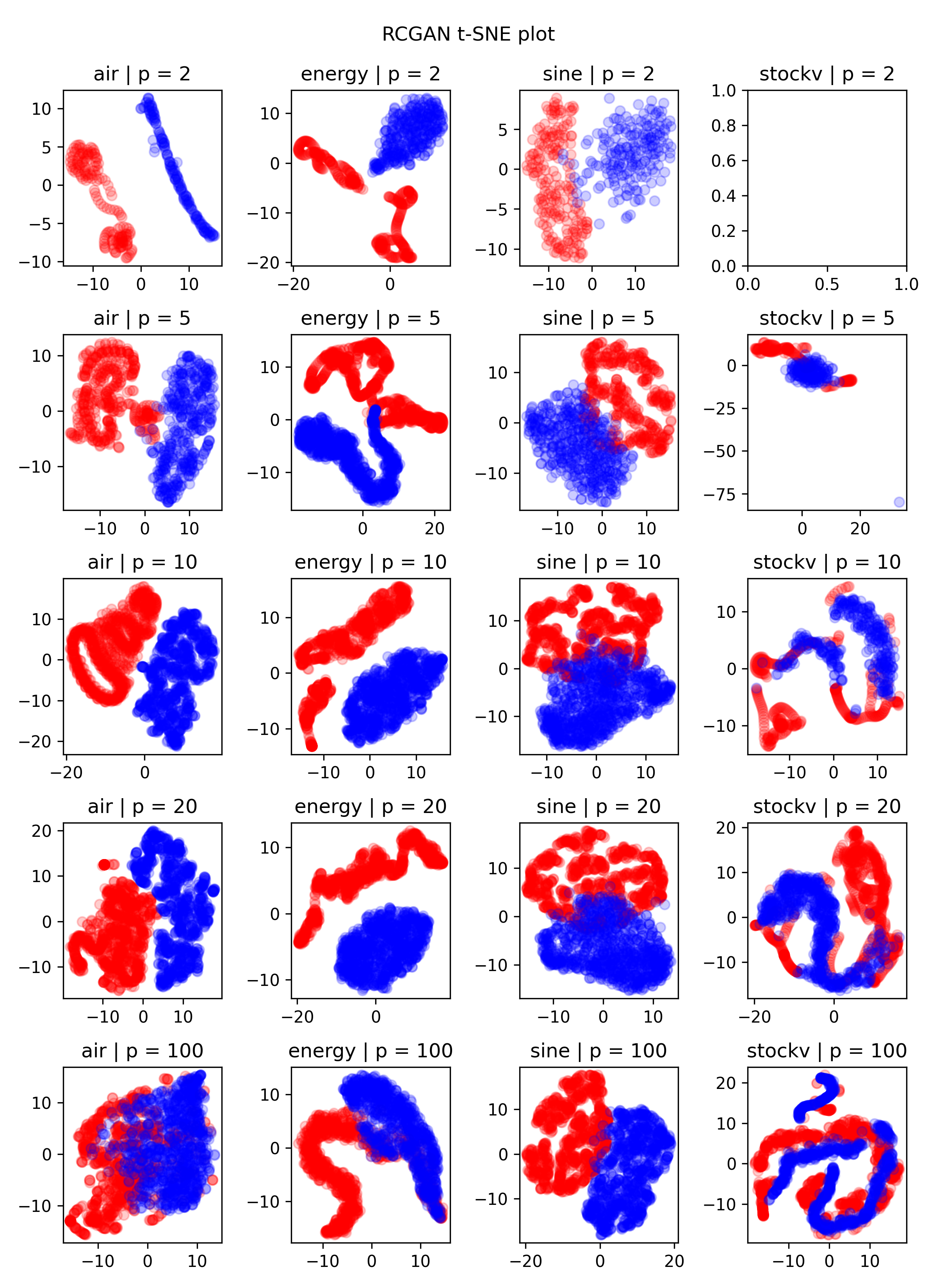

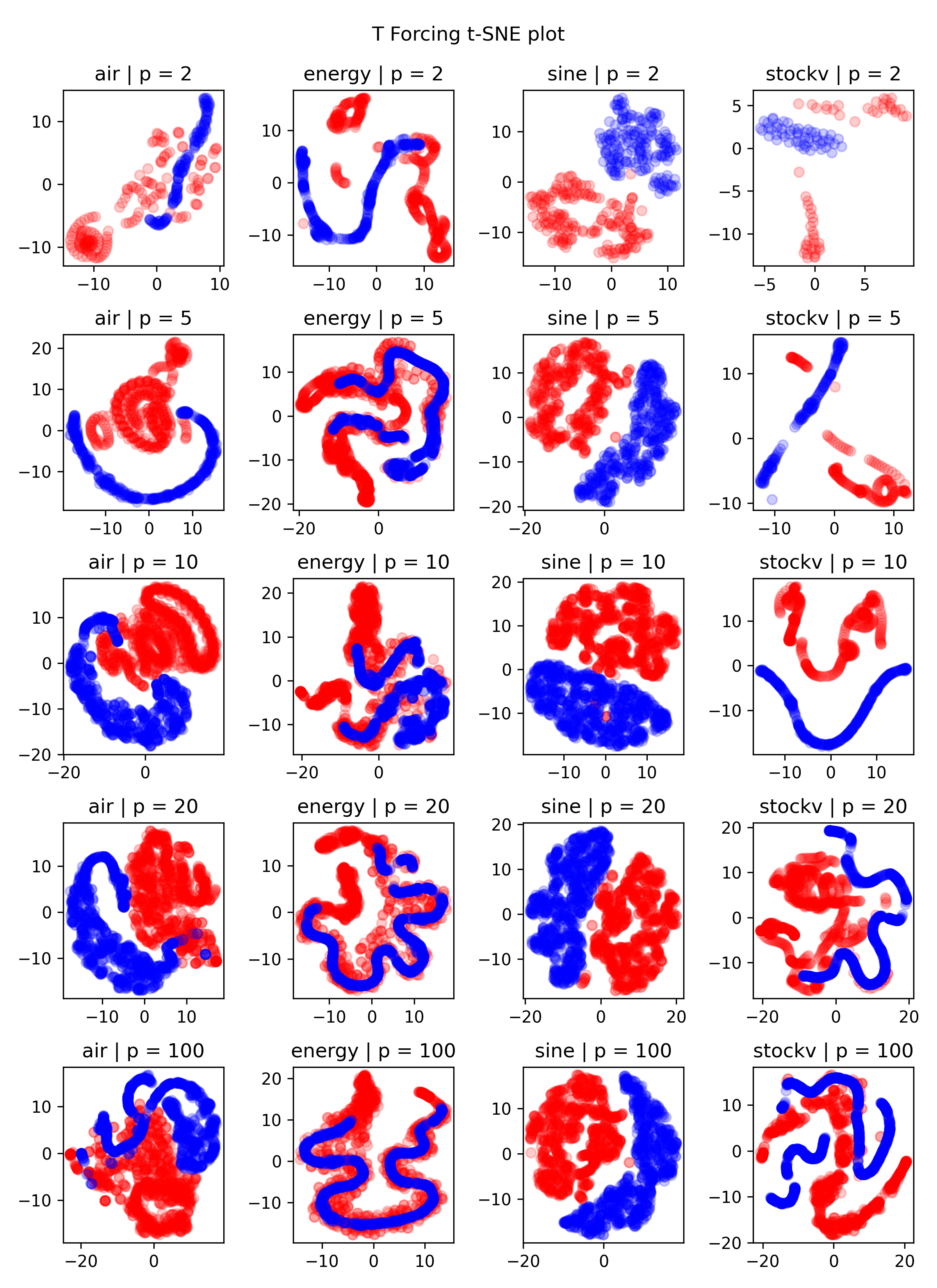

The experiments used four multivariate datasets: synthetic sinusoidal sequences, stock market data, appliance energy predictions, and air quality sensor readings. TimeVAE was compared against RCGAN, TimeGAN, and an autoregressive RNN trained with teacher forcing (T-Forcing). Evaluation combined visual inspection of t-SNE plots, a discriminative score based on the accuracy of a classifier trained to distinguish original from synthetic samples, and a predictive score given by the Mean Absolute Error (MAE) of next-step prediction on the original datasets.
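The two numeric metrics can be sketched as follows. The discriminative score is written here as the absolute gap between classifier accuracy and chance (0.5), a convention used in related work on time series generation; this summary does not state the exact formula, so treat it as an assumption. The function names and toy inputs are hypothetical.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def discriminative_score(labels, predicted_labels):
    """|accuracy - 0.5| for a real-vs-synthetic classifier.

    Assumed convention: 0 means the classifier does no better than chance,
    i.e. synthetic samples are hard to tell apart from original ones.
    """
    correct = sum(1 for y, p in zip(labels, predicted_labels) if y == p)
    return abs(correct / len(labels) - 0.5)


# Toy next-step prediction targets and predictions.
mae = mean_absolute_error([1.0, 2.0, 3.0], [1.5, 2.0, 2.0])

# Toy classifier outputs: 1 = original sample, 0 = synthetic sample.
score_chance = discriminative_score([1, 1, 0, 0], [1, 0, 0, 1])
score_perfect = discriminative_score([1, 1, 0, 0], [1, 1, 0, 0])
print(mae, score_chance, score_perfect)
```

Lower is better for both quantities: a low MAE indicates useful next-step predictions, and a discriminative score near zero indicates that the classifier cannot separate the two sets.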

Figure 4: t-SNE plots comparing original data against synthetic data generated by TimeGAN (top left), TimeVAE (top right), RCGAN (bottom left), and T-Forcing (bottom right).

The results showed that TimeVAE consistently generated high-quality synthetic data and surpassed the competing models, particularly when training data was limited. The discriminative and predictive scores indicated that TimeVAE is robust across diverse datasets and training conditions. TimeVAE was also computationally efficient, significantly reducing training time and cost relative to GAN-based methods.

Conclusion

TimeVAE represents a valuable advancement in synthetic time series data generation, addressing critical limitations faced by GAN-based models. Its architecture integrates domain-specific knowledge and achieves interpretable outputs, making it suitable for applications requiring transparent model predictions. Given its computational efficiency and accuracy, TimeVAE holds promise for enabling predictive and forecasting tasks in scenarios constrained by limited real-world data. Future work will focus on expanding temporal constructs within TimeVAE to enhance its capability for complex multi-step forecasting tasks.