- The paper introduces TimePFN, a transformer-based model that improves multivariate time series forecasting by training on synthetic data designed to capture cross-channel interactions.

- It uses diverse Gaussian process kernels and the linear model of coregionalization (LMC) to create realistic synthetic datasets that capture both temporal and inter-channel dependencies.

- The model achieves competitive error rates even with minimal data, demonstrating strong performance in zero-shot, few-shot, and univariate forecasting scenarios.

TimePFN: Effective Multivariate Time Series Forecasting with Synthetic Data

Introduction

The paper "TimePFN: Effective Multivariate Time Series Forecasting with Synthetic Data" (arXiv:2502.16294) introduces TimePFN, a transformer-based architecture for multivariate time series forecasting. The work is motivated by two challenges: time series data are highly heterogeneous across domains, and domain-specific training datasets are often scarce. TimePFN builds on Prior-data Fitted Networks (PFNs), which are trained on data sampled from a prior so that they approximate Bayesian inference, and the authors report that it outperforms competing models in zero-shot and few-shot settings.
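For context, the generic PFN training objective from the PFN literature is shown below; TimePFN's exact loss may differ (for example, a point-forecast MSE, which is what the negative log-likelihood reduces to under a fixed-variance Gaussian $q_\theta$). A network $q_\theta$ is fit to datasets drawn from a prior $p(\mathcal{D})$:

$$
\ell(\theta) = \mathbb{E}_{D \cup \{(x, y)\} \sim p(\mathcal{D})}\left[-\log q_\theta(y \mid x, D)\right]
= \mathbb{E}_{x, D}\left[\mathrm{KL}\left(p(\cdot \mid x, D) \,\|\, q_\theta(\cdot \mid x, D)\right)\right] + \text{const}
$$

Because the constant (the expected entropy of the true posterior predictive) does not depend on $\theta$, minimizing $\ell(\theta)$ drives $q_\theta$ toward the posterior predictive distribution $p(y \mid x, D)$. One natural reading for forecasting, stated here as an interpretive assumption, treats the observed history as the conditioning data and the future window as the target $y$.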

Synthetic Data Generation

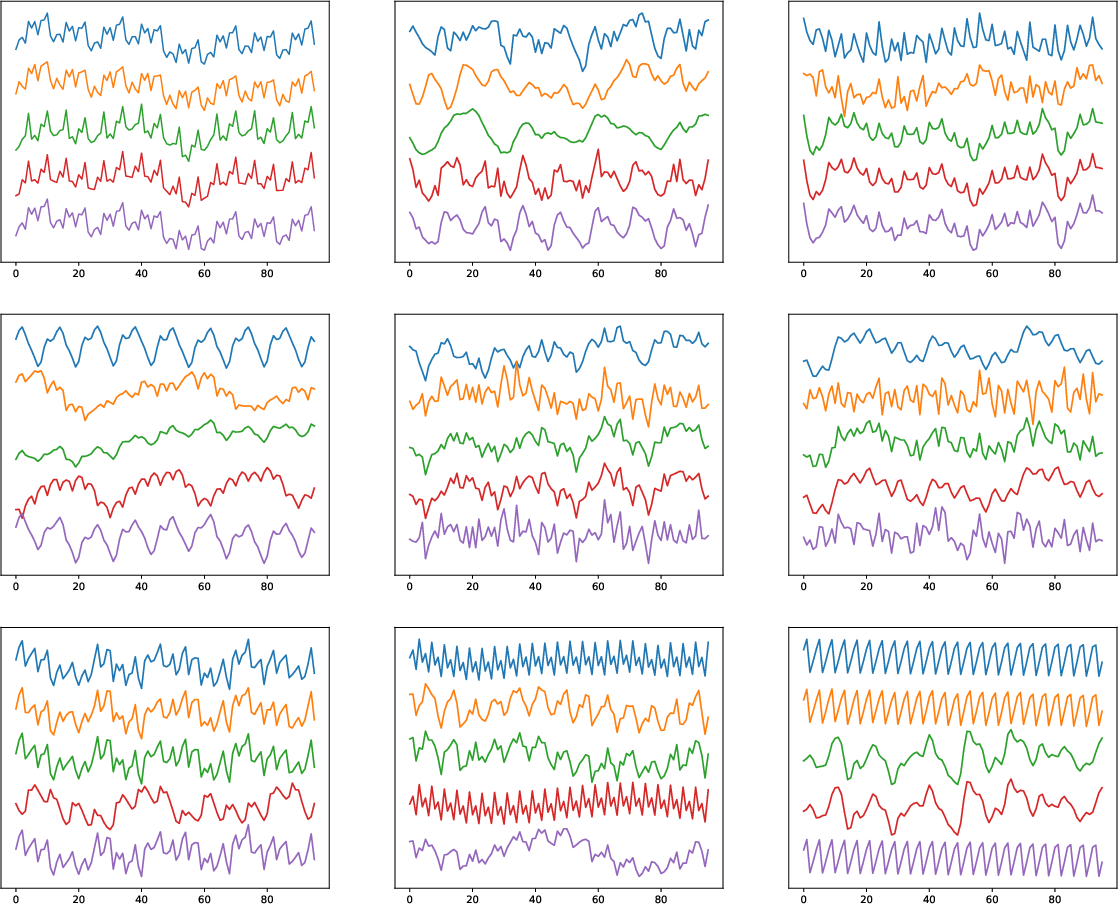

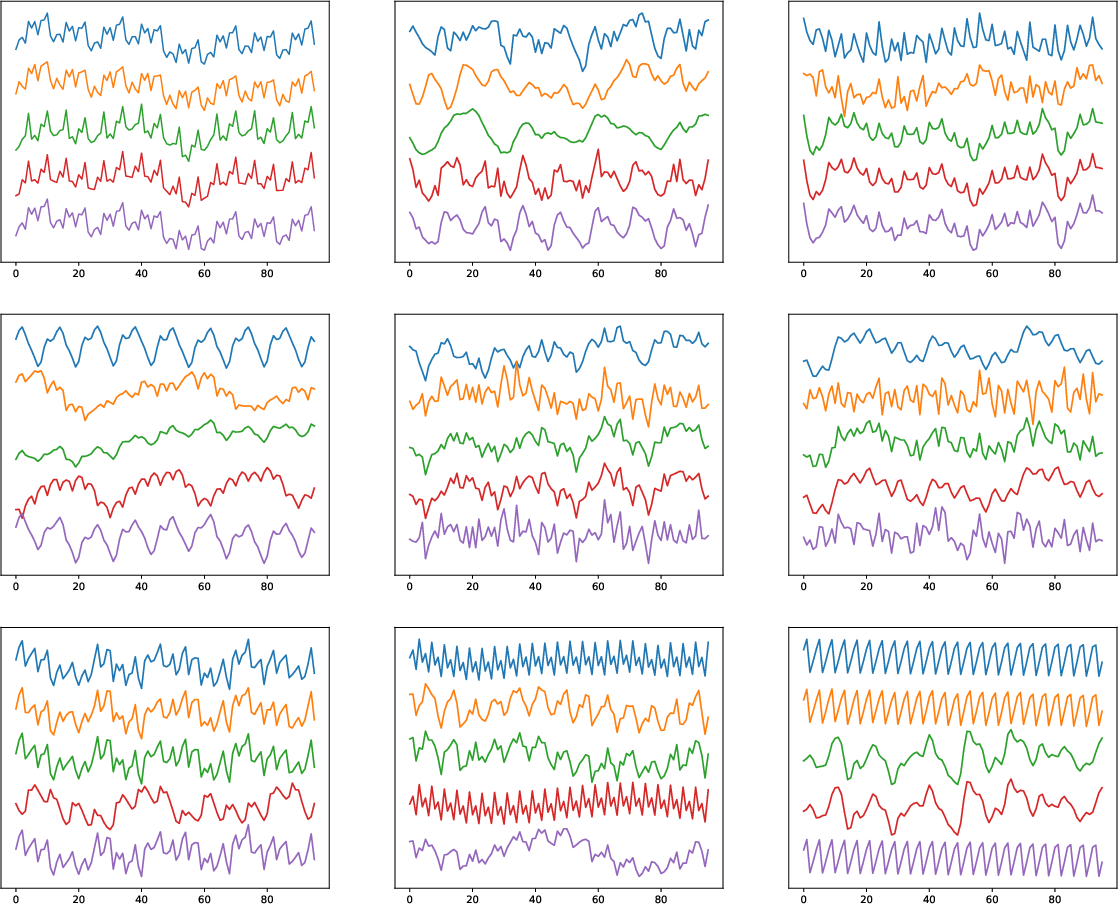

A critical component of TimePFN is its method for generating synthetic multivariate time series (MTS) data. By combining diverse Gaussian process kernels with the linear model of coregionalization (LMC), the authors create synthetic datasets that capture both temporal and cross-channel dependencies. Training on this synthetic data is what allows the model to forecast effectively on real-world series.

Figure 1: Examples of synthetic multivariate time series generated by LMC-Synth. For ease of illustration, C = 5 channels and a sequence length of 96 are used. The Dirichlet concentration parameter controls how diverse the variates are from one another.
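The generator described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' LMC-Synth code: the kernel bank (RBF, periodic, linear), the hyperparameter ranges, the random sum/product composition (similar in spirit to KernelSynth from the Chronos paper), and the names `kernel_bank`, `random_kernel`, `sample_gp`, and `lmc_synth` are all hypothetical. The LMC step follows the standard definition, where each channel is $x_c(t) = \sum_l w_{c,l} z_l(t)$, a weighted combination of shared latent GP draws $z_l$. Here each weight vector $w_c$ is drawn from a symmetric Dirichlet with concentration $\alpha$, which is one way to realize the caption's remark: small $\alpha$ gives sparse weights and dissimilar channels, while large $\alpha$ gives near-uniform weights and near-identical channels.

```python
import numpy as np

def kernel_bank(t, rng):
    """Return one randomly parameterized base kernel matrix (hypothetical bank)."""
    d = t[:, None] - t[None, :]
    choice = rng.integers(3)
    if choice == 0:  # RBF: smooth local variation
        ls = rng.uniform(0.05, 0.5)
        return np.exp(-0.5 * (d / ls) ** 2)
    if choice == 1:  # periodic: seasonality
        period = rng.uniform(0.05, 0.5)
        return np.exp(-2.0 * np.sin(np.pi * np.abs(d) / period) ** 2)
    offset = rng.uniform(0.0, 1.0)  # linear: trends
    return (t[:, None] - offset) * (t[None, :] - offset)

def random_kernel(t, rng, n_terms=3):
    """Randomly add or multiply base kernels; sums and elementwise products stay PSD."""
    K = kernel_bank(t, rng)
    for _ in range(n_terms - 1):
        other = kernel_bank(t, rng)
        K = K + other if rng.random() < 0.5 else K * other
    return K

def sample_gp(t, rng, jitter=1e-6):
    """Draw one latent series from a zero-mean GP with a random composite kernel."""
    K = random_kernel(t, rng)
    L = np.linalg.cholesky(K + jitter * np.eye(len(t)))  # jitter for numerical stability
    return L @ rng.standard_normal(len(t))

def lmc_synth(n_channels, seq_len, n_latents, alpha, rng):
    """Mix shared latent GP draws into n_channels correlated series (LMC)."""
    t = np.linspace(0.0, 1.0, seq_len)
    Z = np.stack([sample_gp(t, rng) for _ in range(n_latents)])    # (L, T) latents
    W = rng.dirichlet(np.full(n_latents, alpha), size=n_channels)  # (C, L), rows sum to 1
    return W @ Z  # (C, T): each channel is a convex combination of the latents
```

For example, `lmc_synth(5, 96, 3, 0.5, np.random.default_rng(0))` returns a (5, 96) array, matching the C = 5, length-96 setting in Figure 1; raising `alpha` makes the five channels progressively more alike.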

TimePFN Architecture

The TimePFN architecture builds on PatchTST and adds components for effective multivariate forecasting. It incorporates channel-mixing and convolutional embedding modules that strengthen cross-channel interaction and extract richer temporal features from the data. The authors show that this benefits forecasting tasks in which the variates are correlated.
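To make the data flow concrete, here is a hypothetical NumPy sketch of an embedding stage of this kind: per-channel 1D convolutions, PatchTST-style patching, a linear patch projection, and a linear mixing step across channels. The filter count, the patch length of 16 with stride 8 (a common PatchTST setting), the projection size, the fixed mixing matrix `M`, and all function names are assumptions for illustration. The paper's actual layers, their order, and how channel mixing is parameterized may differ, and the transformer encoder that follows is omitted.

```python
import numpy as np

def conv_embed(x, filters):
    """Convolve every channel with every 1D filter. x: (C, T), filters: (F, w) -> (C, F, T)."""
    return np.stack([
        np.stack([np.convolve(row, f, mode="same") for f in filters])
        for row in x
    ])

def patchify(h, patch_len=16, stride=8):
    """PatchTST-style sliding patches. h: (C, F, T) -> (C, N, F * patch_len)."""
    C, F, T = h.shape
    starts = range(0, T - patch_len + 1, stride)
    return np.stack(
        [h[:, :, s:s + patch_len].reshape(C, F * patch_len) for s in starts], axis=1
    )

def channel_mix(tokens, M):
    """Mix tokens across channels at each patch position. tokens: (C, N, D), M: (C, C)."""
    return np.einsum("ij,jnd->ind", M, tokens)

def embed(x, filters, W_proj, M, patch_len=16, stride=8):
    """Hypothetical embedding stage: conv -> patch -> project -> mix channels."""
    h = conv_embed(x, filters)                          # (C, F, T)
    tokens = patchify(h, patch_len, stride) @ W_proj    # (C, N, d_model)
    return channel_mix(tokens, M)                       # (C, N, d_model)
```

With `M = np.eye(C)` the channels stay independent, as in PatchTST's channel-independent design; any off-diagonal weight lets one variate's tokens draw on correlated variates, which is the kind of cross-channel interaction the paragraph describes.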

Evaluation and Results

The paper evaluates TimePFN on several benchmark datasets and reports that it outperforms existing models in zero-shot and few-shot settings. Notably, fine-tuning TimePFN on only 500 data points yields errors close to those of training on the full dataset, and even 50 data points gives competitive results. TimePFN also shows strong univariate forecasting performance despite being trained on multivariate data, highlighting its ability to generalize.

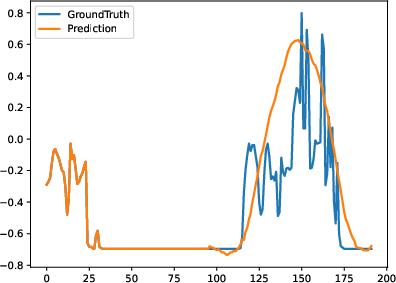

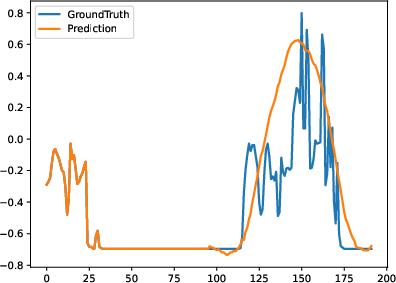

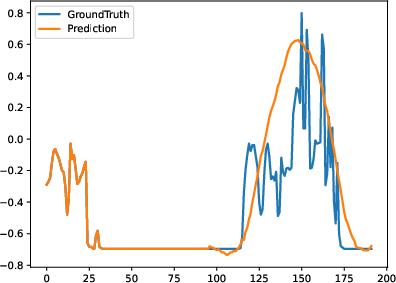

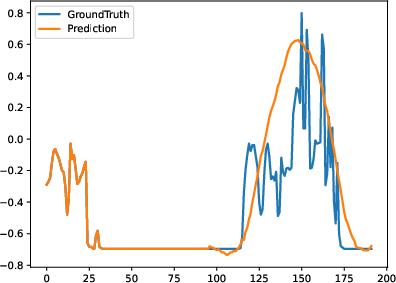

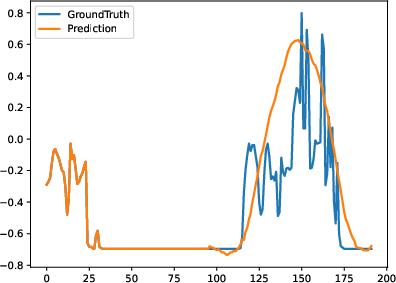

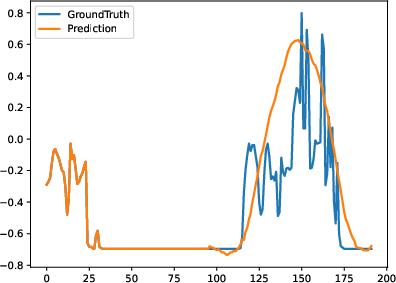

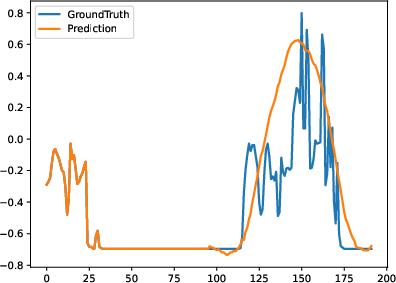

Figure 2: Zero-shot

Implications and Future Work

These results illustrate the value of synthetic data priors for multivariate time series forecasting. Practically, the approach makes it possible to deploy effective forecasting models where little real data is available. Conceptually, TimePFN challenges prevailing approaches to time series model design by emphasizing data-centric training and cross-channel interactions.

Promising directions for future work include developing foundation models for time series tasks and further applying PFNs to synthetic multivariate time series generation, both of which could improve forecasting in complex real-world settings.

Conclusion

TimePFN is an important step forward in using synthetic data to improve time series forecasting models. By addressing the complexities of multivariate data through both its synthetic prior and its architecture, TimePFN sets a benchmark for future research in the field.