- The paper presents MSDT, a transformer-based static analysis approach designed to efficiently detect anomalies and malicious code injections.

- The methodology leverages Code2Seq embeddings with clustering and ranking techniques, achieving high precision (up to 0.909) on a large Python function dataset.

- Results demonstrate enhanced function-level detection over file-level tools, with promising opportunities for multi-language support and further robustness improvements.

Introduction

The paper "Malicious Source Code Detection Using Transformer" (arXiv:2209.07957) addresses the growing threat of software supply chain attacks, in which malicious actors inject harmful code into legitimate software to compromise its security. Such attacks exploit the widespread dependency on open-source software components and automatic code integration processes. The study presents the Malicious Source Code Detection Transformer (MSDT) algorithm, a static analysis method designed to detect code injected into function source code.

Methodology

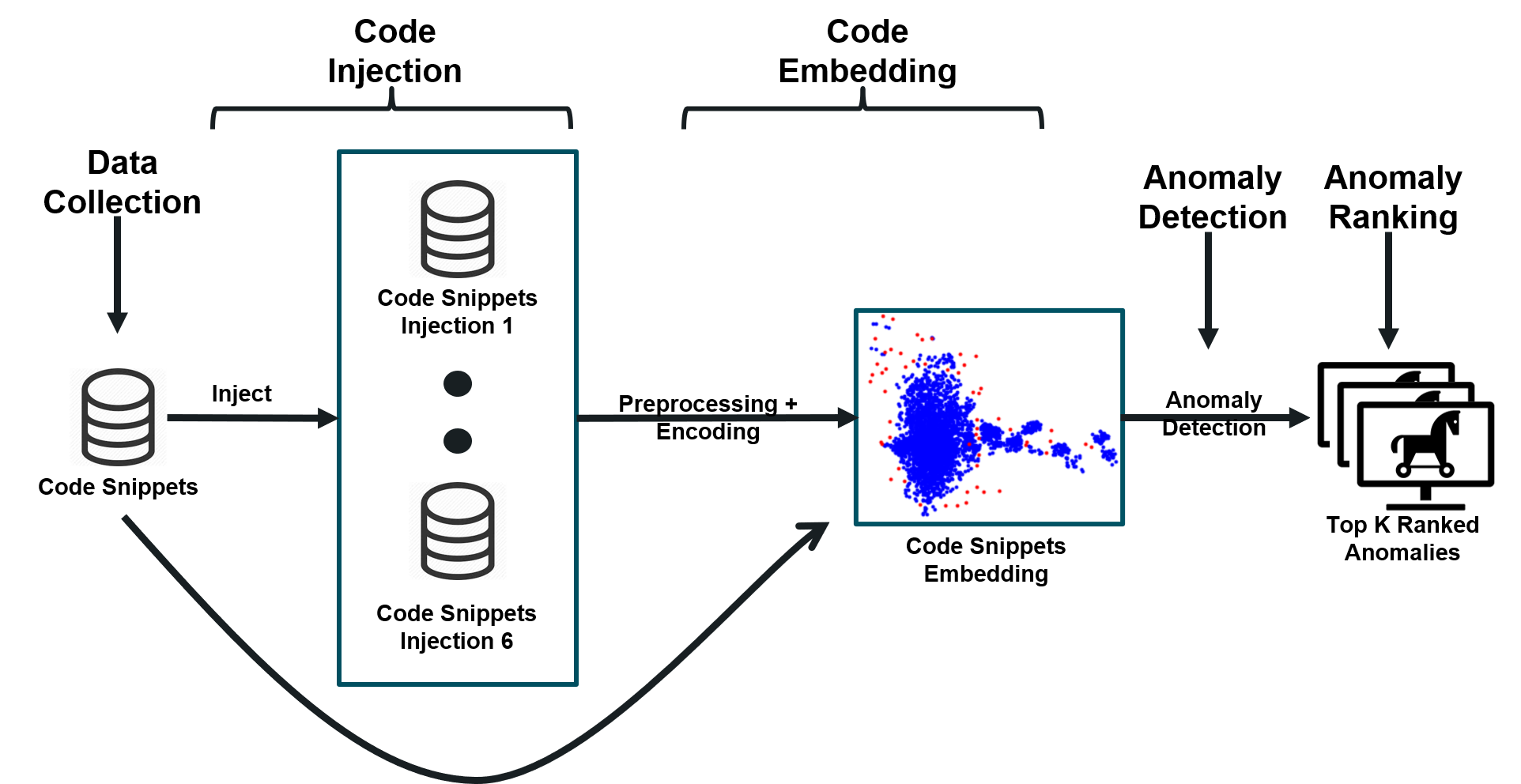

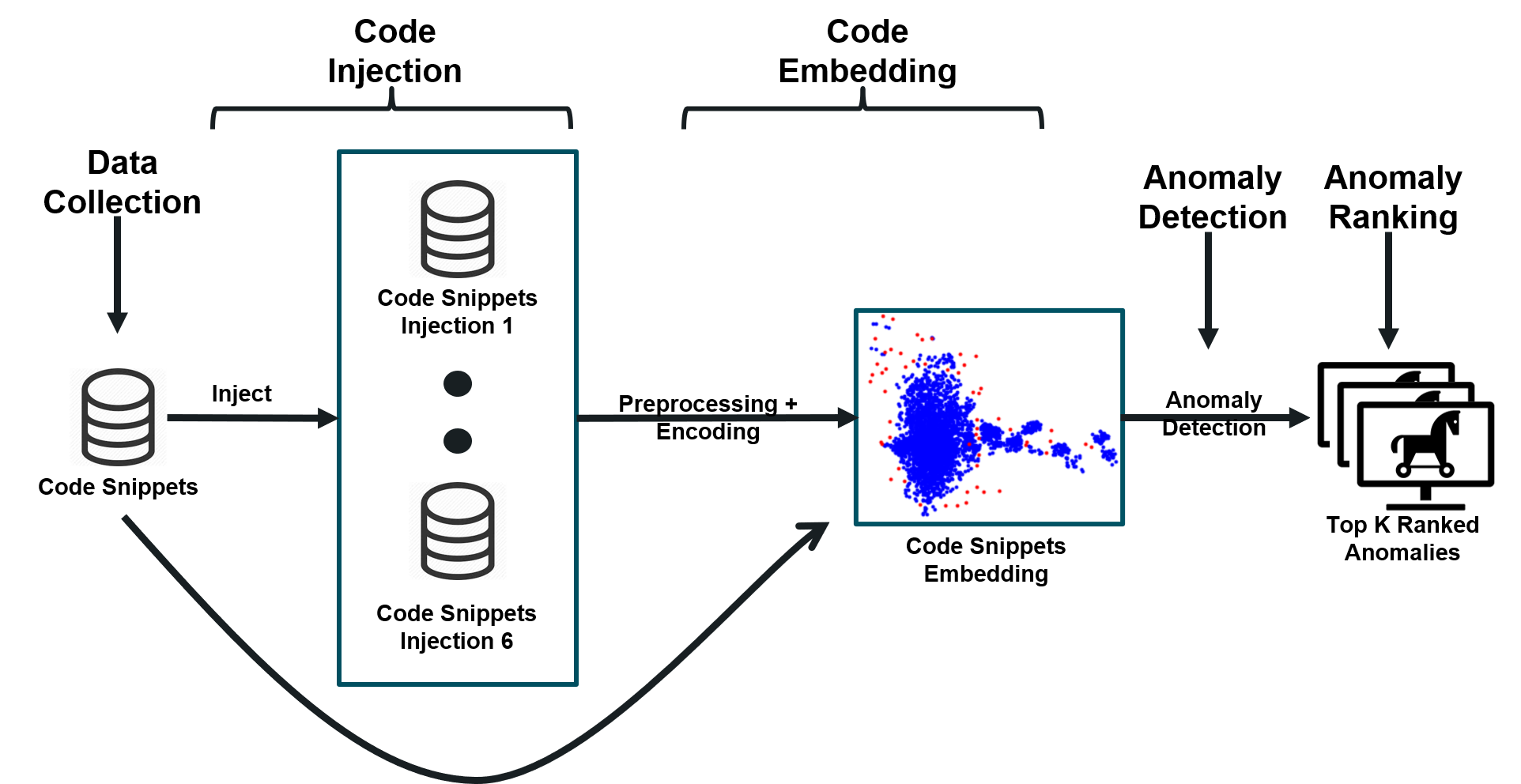

The study focuses on MSDT, which employs a transformer-based model for static code analysis to identify anomalies that may indicate malicious intent. This approach includes four primary steps:

- Data Collection: Functions written in the chosen programming languages (PLs) are gathered to estimate the distribution of function implementations. This collection draws on existing datasets such as PY150 and CodeSearchNet (CSN).

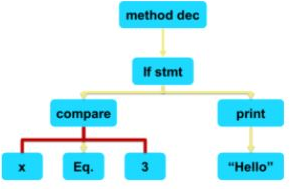

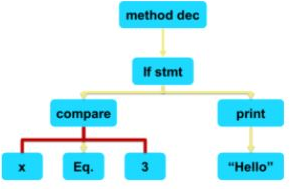

- Code Embedding: The transformer, specifically a Code2Seq model, encodes the function source code into vector representations. This allows for the identification of similar patterns and anomalies across code snippets.

- Anomaly Detection: Anomalies are identified with a clustering algorithm such as DBSCAN. Functions whose embeddings lie farthest from the clusters are considered potential anomalies.

- Anomaly Ranking: Anomalies are ranked based on their distance from the cluster border points, aiding in prioritizing which functions require further examination.
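The detection and ranking steps above can be illustrated with a minimal sketch. It is not the authors' implementation: the pure-Python DBSCAN, the toy 2-D "embeddings", the `eps` and `min_samples` values, and the helper names `dbscan` and `rank_anomalies` are all assumptions made for illustration. Ranking by distance to the nearest clustered point, farthest first, is used here as a simple stand-in for the distance to the cluster border points.

```python
import math
from collections import deque

NOISE = -1  # label used for points that belong to no cluster


def region_query(points, i, eps):
    """Indices of all points within eps of points[i] (including i itself)."""
    return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]


def dbscan(points, eps, min_samples):
    """Tiny DBSCAN: returns one label per point, NOISE for outliers."""
    labels = [None] * len(points)
    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        neighbors = region_query(points, i, eps)
        if len(neighbors) < min_samples:
            labels[i] = NOISE  # may be relabeled later as a border point
            continue
        labels[i] = cluster
        queue = deque(neighbors)
        while queue:
            j = queue.popleft()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbors = region_query(points, j, eps)
            if len(j_neighbors) >= min_samples:
                queue.extend(j_neighbors)  # j is a core point: keep expanding
        cluster += 1
    return labels


def rank_anomalies(points, labels):
    """Order noise points by distance to the nearest clustered point, farthest first."""
    clustered = [p for p, label in zip(points, labels) if label != NOISE]
    scored = []
    for i, (p, label) in enumerate(zip(points, labels)):
        if label == NOISE:
            d = min((math.dist(p, c) for c in clustered), default=math.inf)
            scored.append((d, i))
    return [i for _, i in sorted(scored, reverse=True)]


# Toy embeddings (hypothetical): four similar implementations and two outliers.
embeddings = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (1.0, 1.0), (5.0, 5.0)]
labels = dbscan(embeddings, eps=0.3, min_samples=3)
ranking = rank_anomalies(embeddings, labels)
print(labels, ranking)
```

In this toy run the four nearby points form one cluster, and the two remaining points are flagged as noise, with the more distant one ranked first. Real embeddings would be high-dimensional vectors produced by the embedding model, and the neighborhood parameters would need tuning per function type.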

Figure 1: Overview of the MSDT data embedding and anomaly detection process.

Experimentation and Results

MSDT was evaluated on a large dataset of 607,461 Python functions, into which known malicious code samples were injected. MSDT achieved a precision@k of up to 0.909, with the best results for injections into frequently implemented functions such as `get`. The results also indicated a positive correlation between the number of implementations of a function and MSDT's detection accuracy.
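For readers unfamiliar with the metric, precision@k is conventionally the fraction of the top k ranked items that are true positives. The sketch below uses that standard definition; the function name `precision_at_k` and the identifiers in the example are hypothetical, and the paper's exact evaluation protocol may differ in detail.

```python
def precision_at_k(ranked_ids, injected_ids, k):
    """Fraction of the top-k ranked functions that really contain an injection."""
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = ranked_ids[:k]
    hits = sum(1 for item in top_k if item in injected_ids)
    return hits / k


# Hypothetical ranking produced by an anomaly ranker, most suspicious first.
ranked = ["f7", "f2", "f9", "f4", "f1"]
# Hypothetical ground truth: functions that actually contain injected code.
injected = {"f7", "f9", "f3"}

for k in (1, 3, 5):
    print(k, precision_at_k(ranked, injected, k))
```

A high precision@k at small k means that an analyst who inspects only the first few ranked functions spends most of that time on genuinely injected code.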

Figure 2: Example AST transformation illustrating the structure used in the Code2Seq model.
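To make the AST structure concrete, the following sketch extracts leaf-to-leaf paths from a small function, which is the kind of input Code2Seq encodes. It is an approximation under stated assumptions: it uses Python's built-in `ast` module rather than the extractor used in the paper, treats only `Name`, `arg`, and `Constant` nodes as terminals, and the helper names (`collect_leaves`, `path_between`, `extract_path_contexts`) are invented for illustration.

```python
import ast
from itertools import combinations


def token_of(node):
    """Return the terminal token for a leaf-like node, or None for inner nodes."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.arg):
        return node.arg
    if isinstance(node, ast.Constant):
        return repr(node.value)
    return None


def collect_leaves(tree):
    """Collect (token, root-to-leaf node list) for every terminal in the tree."""
    leaves = []

    def walk(node, trail):
        trail = trail + [node]
        token = token_of(node)
        if token is not None:
            leaves.append((token, trail))
            return  # do not descend below a terminal
        for child in ast.iter_child_nodes(node):
            walk(child, trail)

    walk(tree, [])
    return leaves


def path_between(trail_a, trail_b):
    """Node-type path from leaf a up to the lowest common ancestor, then down to leaf b."""
    common = 0
    for x, y in zip(trail_a, trail_b):
        if x is not y:
            break
        common += 1
    up = [type(n).__name__ for n in reversed(trail_a[common:])]
    top = type(trail_a[common - 1]).__name__
    down = [type(n).__name__ for n in trail_b[common:]]
    return up + [top] + down


def extract_path_contexts(source):
    """Return (start token, AST path, end token) for every pair of terminals."""
    leaves = collect_leaves(ast.parse(source))
    return [(ta, path_between(pa, pb), tb)
            for (ta, pa), (tb, pb) in combinations(leaves, 2)]


for context in extract_path_contexts("def add(a, b):\n    return a + b\n"):
    print(context)
```

Each printed triple pairs two tokens with the sequence of node types that connects them through the tree. An encoder in the style of Code2Seq consumes a set of such path contexts rather than the raw token stream.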

Various clustering and detection methodologies were compared, with MSDT outperforming others like ECOD, especially for real-world injection scenarios. The evaluation also highlighted the advantage of MSDT's function-level analysis compared to file-level methods used by tools like Bandit and Snyk, which lack granularity in detecting function-specific anomalies.
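Function-level analysis presupposes that each file is first split into its individual function definitions. The sketch below shows one way to do this with Python's `ast` module and to group implementations that share a name, so that, for example, all `get` functions can be compared with one another. Grouping by function name, the helper name `functions_by_name`, and the two sample files are assumptions for illustration, not details taken from the paper.

```python
import ast
from collections import defaultdict


def functions_by_name(sources):
    """Map each function name to the source text of all its implementations."""
    groups = defaultdict(list)
    for source in sources:
        tree = ast.parse(source)
        for node in ast.walk(tree):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                # get_source_segment returns the text spanned by the node
                groups[node.name].append(ast.get_source_segment(source, node))
    return dict(groups)


# Two hypothetical files that both define a function called `get`.
file_a = (
    "def get(d, k):\n"
    "    return d[k]\n"
    "\n"
    "def put(d, k, v):\n"
    "    d[k] = v\n"
)
file_b = (
    "class C:\n"
    "    def get(self, k):\n"
    "        return self.data.get(k)\n"
)

groups = functions_by_name([file_a, file_b])
for name, implementations in sorted(groups.items()):
    print(name, len(implementations))
```

A file-level scanner sees `file_a` as a single unit, whereas this grouping lets each `get` implementation be compared against other implementations of the same function.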

Discussion

MSDT's efficacy lies in its ability to identify recurring functional patterns and to separate out the anomalies that code injections introduce. Because it operates at the function level, its transformer-based static analysis offers finer granularity than traditional static analysis tools. However, the approach struggles with functions that have diverse implementations, which limits model performance in such cases. Detection precision also tends to drop for long scripts, which points to the difficulty of capturing subtle semantic shifts in larger bodies of code.

The study also notes that MSDT could be extended to support multiple PLs given an appropriate grammar and transformer model for each, an area open to future research. Exploring alternative source code embeddings, such as CodeBERT or Codex, could further improve MSDT's robustness against a broader range of injection methods.

Conclusion

The development of MSDT is a step towards better software security in open-source ecosystems, particularly given the prevalence of supply chain attacks. By leveraging transformers for static analysis, MSDT offers a precision-driven method for anomaly detection in source code. Future work aims to broaden its application scope, improve detection rates for diverse code bases, and integrate semantic and execution-flow-aware models.