- The paper introduces a novel metric, BER, which combines segment-level error rate (SER) with duration and speaker-weighted errors to improve evaluation.

- It applies graph-based segment matching with adaptive IoU thresholds to accurately assess diarization performance despite arbitrary segmentation.

- Experimental results demonstrate that BER highlights errors in short utterances and less-spoken speakers, setting a new standard in diarization evaluation.

BER: Balanced Error Rate For Speaker Diarization

The paper presents a novel metric, Balanced Error Rate (BER), for evaluating speaker diarization systems. BER aims to address the limitations of conventional measures such as Diarization Error Rate (DER) and Jaccard Error Rate (JER), which tend to overlook errors in short utterances and from less-spoken speakers. BER first introduces a segment-level error rate (SER). It then combines duration errors, segment errors, and speaker weighting in a unified framework.

Motivation and Problematic Areas in Conventional Metrics

Speaker diarization is the process of identifying "who spoke when", and its evaluation metrics have several weaknesses. DER pools errors over total speech time. As a result, long segments and dominant speakers dominate the score, and errors in short utterances or from less-talkative speakers are overwhelmed. JER balances errors across speakers, but within each speaker it is still duration-based, so it has the same problem with short segments. The result is a skewed evaluation that obscures how well a system handles short segments, which may still carry important semantic information (e.g., "yes" or "no").
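
A small hypothetical example shows how duration weighting hides a short miss. The sketch below compares three error measures when a system misses a single short utterance:

- a simplified, miss-only duration error (a stand-in for DER that ignores false alarms and speaker confusion);
- an unweighted per-speaker average;
- a segment count.

All numbers are invented for illustration.

```python
# Hypothetical reference: speaker A speaks in 9 turns of 10 s each,
# speaker B says a single 0.5 s utterance (e.g. "yes").
ref_segments = [("A", 10.0)] * 9 + [("B", 0.5)]

# Hypothetical system output: everything is correct except B's utterance, which is missed.
missed_segments = [("B", 0.5)]

# Simplified duration-weighted error (miss-only stand-in for DER).
total_time = sum(d for _, d in ref_segments)
dur_err = sum(d for _, d in missed_segments) / total_time

# Per-speaker miss rate, then an unweighted mean over speakers.
speakers = sorted({spk for spk, _ in ref_segments})
per_speaker = {}
for spk in speakers:
    spk_time = sum(d for s, d in ref_segments if s == spk)
    spk_miss = sum(d for s, d in missed_segments if s == spk)
    per_speaker[spk] = spk_miss / spk_time
speaker_avg = sum(per_speaker.values()) / len(per_speaker)

# Segment-level error: fraction of reference segments that were missed.
seg_err = len(missed_segments) / len(ref_segments)

print(f"duration-weighted: {dur_err:.2%}")
print(f"speaker-averaged:  {speaker_avg:.2%}")
print(f"segment-level:     {seg_err:.2%}")
```

The per-speaker average is the idea behind JER's speaker balancing. It flags speaker B here only because B has no other speech. If B had also spoken a long turn, B's own duration-based error would again hide the missed utterance.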

The CDER metric attempted to address some of these limitations by scoring errors at the segment level. However, it uses a fixed IoU threshold, which tolerates boundary errors unevenly across segment lengths. The same threshold accepts seconds of misalignment on a long segment but only a fraction of a second on a short one.
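
To see why a fixed IoU threshold treats segment lengths unevenly, consider a hypothesis of the same length L as its reference segment, shifted by d seconds. Its IoU is (L - d) / (L + d), so a threshold t is met whenever d ≤ L(1 - t) / (1 + t). The sketch below evaluates this bound for a few segment lengths. The value t = 0.5 is illustrative and not necessarily CDER's setting.

```python
def iou(a, b):
    """Temporal IoU of two (start, end) intervals in seconds."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union

def max_tolerated_shift(length, threshold):
    """Largest shift d of an equal-length hypothesis that still satisfies IoU >= threshold.

    IoU = (L - d) / (L + d) >= t  <=>  d <= L * (1 - t) / (1 + t)
    """
    return length * (1 - threshold) / (1 + threshold)

THRESHOLD = 0.5  # illustrative fixed threshold

for length in (0.6, 3.0, 9.0):
    d = max_tolerated_shift(length, THRESHOLD)
    ref, hyp = (0.0, length), (d, length + d)
    print(f"{length:4.1f} s segment: tolerates a {d:.2f} s shift "
          f"(IoU at the limit = {iou(ref, hyp):.2f})")
```

With t = 0.5 the bound is L/3. A 9 s turn can be off by 3 s and still match, while a 0.6 s utterance tolerates only 0.2 s.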

Proposed Metrics: SER and BER

The paper introduces SER as a segment-level metric utilizing graph-based segment matching.

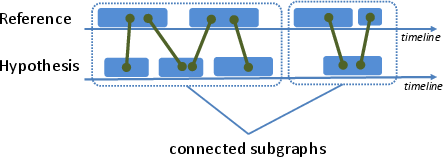

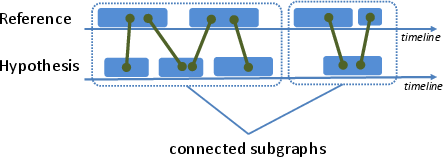

Figure 1: Graph-based segment matching handles arbitrary segmentation with an adaptive IoU threshold.

- Segment Error Rate (SER): Matches reference and hypothesis segments through connected sub-graphs and adaptive IoU thresholds. This lets SER handle arbitrary hypothesis segmentation without first merging adjacent segments.

- Balanced Error Rate (BER): Combines SER with duration errors and speaker weighting. For each speaker, BER takes the harmonic mean of the duration error and the segment error. These per-speaker scores are then combined by a speaker-weighted average into one overall score.
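
The following is a minimal sketch of graph-based segment matching for one speaker. It rests on several assumptions that are ours, not the paper's:

- Each speaker's reference and hypothesis segments are non-overlapping (start, end) intervals, already mapped to the same speaker.
- An edge joins a reference segment and a hypothesis segment whenever they overlap.
- A connected sub-graph is accepted if the IoU between its reference and hypothesis coverage reaches a threshold.
- The threshold comes from a hypothetical rule, `adaptive_threshold`, that tolerates roughly `tau` seconds of boundary shift at any segment length.

The error normalization in `segment_error_rate` is likewise illustrative.

```python
from collections import defaultdict

def overlap(a, b):
    """Length of the intersection of two (start, end) intervals."""
    return max(0.0, min(a[1], b[1]) - max(a[0], b[0]))

def adaptive_threshold(ref_len, tau=0.25, floor=0.1):
    """HYPOTHETICAL adaptive rule (not the paper's formula): require the IoU that an
    equal-length segment shifted by tau seconds would reach, with a lower floor."""
    return max(floor, (ref_len - tau) / (ref_len + tau))

def connected_components(ref, hyp):
    """Union-find over nodes ('r', i) and ('h', j); edges join overlapping ref/hyp pairs."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    nodes = [("r", i) for i in range(len(ref))] + [("h", j) for j in range(len(hyp))]
    for node in nodes:
        find(node)
    for i, r in enumerate(ref):
        for j, h in enumerate(hyp):
            if overlap(r, h) > 0:
                parent[find(("r", i))] = find(("h", j))
    groups = defaultdict(list)
    for node in nodes:
        groups[find(node)].append(node)
    return list(groups.values())

def segment_error_rate(ref, hyp, tau=0.25):
    """Illustrative SER for one speaker: erroneous segments / reference segments.
    Assumes non-overlapping segments within ref and within hyp, and len(ref) > 0."""
    errors = 0
    for comp in connected_components(ref, hyp):
        rs = [ref[i] for kind, i in comp if kind == "r"]
        hs = [hyp[j] for kind, j in comp if kind == "h"]
        if not rs:  # hypothesis-only sub-graph: a false-alarm segment
            errors += len(hs)
            continue
        inter = sum(overlap(r, h) for r in rs for h in hs)
        ref_len = sum(e - s for s, e in rs)
        union = ref_len + sum(e - s for s, e in hs) - inter
        if inter / union < adaptive_threshold(ref_len, tau):
            errors += len(rs)  # every reference segment in a failed sub-graph counts
    return errors / len(ref)

# Hypothetical single-speaker example: the system splits the long turn in two
# (no merging needed), and the 0.5 s utterance at 12 s is missed entirely.
ref = [(0.0, 10.0), (12.0, 12.5), (14.0, 16.0), (17.0, 17.4)]
hyp = [(0.0, 4.0), (4.0, 10.1), (14.1, 16.0), (17.2, 17.6)]
print(segment_error_rate(ref, hyp))
```

Because the split hypothesis pieces of the first turn land in the same sub-graph, they are scored together against the reference turn. No explicit merging step is required.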

The paper's Algorithm 1 details the speaker-specific and overall error computations.
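
The per-speaker combination described for BER can be sketched as below. The harmonic mean follows the description of BER above. The per-speaker duration and segment errors are treated as given inputs. The default equal speaker weights in the hypothetical `balanced_error_rate` are an assumption, since the exact weighting is defined in the paper's Algorithm 1.

```python
def harmonic_mean(a, b):
    """Harmonic mean of two non-negative errors; defined as 0 when both are 0."""
    return 0.0 if a + b == 0 else 2 * a * b / (a + b)

def balanced_error_rate(per_speaker, weights=None):
    """Combine per-speaker (duration_error, segment_error) pairs into one score.

    per_speaker: {speaker_id: (duration_error, segment_error)}
    weights:     {speaker_id: weight}; ASSUMPTION: equal weights when omitted.
    """
    if weights is None:
        weights = {spk: 1.0 for spk in per_speaker}
    total = sum(weights[spk] for spk in per_speaker)
    weighted = sum(weights[spk] * harmonic_mean(*errs) for spk, errs in per_speaker.items())
    return weighted / total

# Hypothetical per-speaker errors: a dominant speaker A and a rarely heard speaker B.
errors = {"A": (0.05, 0.10), "B": (0.40, 0.60)}
print(round(balanced_error_rate(errors), 4))
```

A harmonic mean is pulled toward the smaller of its two inputs and is zero when either input is zero. The sketch special-cases the both-zero input to avoid dividing by zero. With equal weights, speaker B influences the result as much as speaker A, however little B speaks.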

Experimental Evaluation

Evaluation Setup

The proposed metrics are evaluated on several public datasets (AMI, CALLHOME, DIHARD2, VoxConverse, and MSDWild) using three diarization systems: a modularized system, VBx, and EEND-VC. Evaluations include overlapped speech and apply no boundary collar, so every segment, however short, is fully scored. This gives a strict assessment of how well the systems discriminate speakers.
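
A forgiveness collar excludes a window of ±collar seconds around each reference boundary from scoring, which can remove short utterances entirely. The sketch below uses a simplified per-segment rule. It assumes the gap between neighbouring segments exceeds the collar, and it uses 0.25 s purely as an example value.

```python
def scored_length(start, end, collar):
    """Seconds of a reference segment still scored when +/- collar seconds around both
    of its boundaries are excluded. Simplified: assumes the gap between neighbouring
    segments exceeds collar, so no excluded window reaches into another segment."""
    return max(0.0, (end - start) - 2 * collar)

# Hypothetical reference: one long turn and one short "yes".
segments = [(0.0, 10.0), (12.0, 12.5)]
for collar in (0.25, 0.0):
    kept = [scored_length(s, e, collar) for s, e in segments]
    print(f"collar={collar:.2f} s -> scored seconds per segment: {kept}")
```

With the example collar, the 0.5 s utterance contributes nothing to the score. With no collar, it is scored in full.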

Results and Discussion

Metric Comparisons Across Datasets

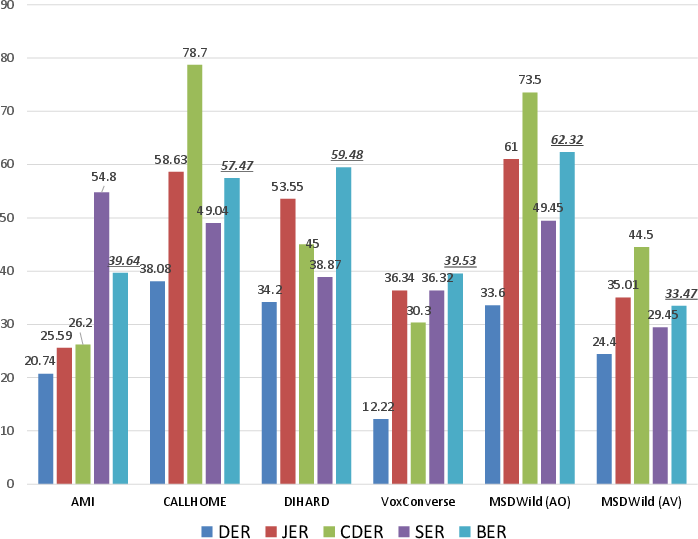

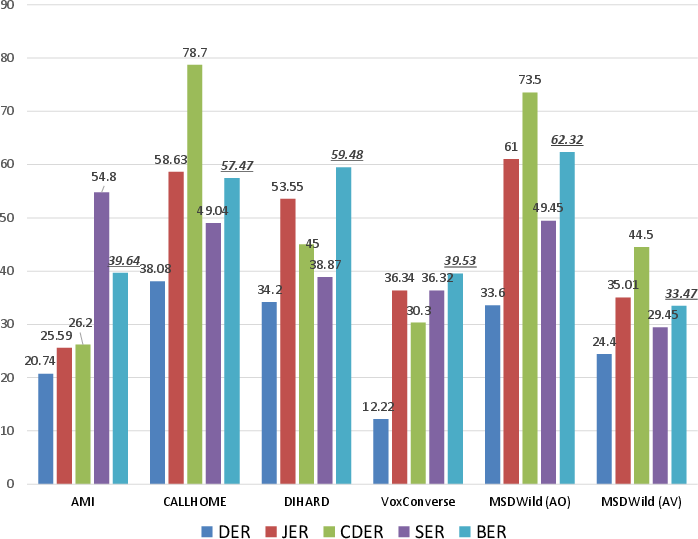

The results show that BER gives a more complete picture than existing metrics. On AMI, for instance, a low JER coexists with a high BER. The high BER exposes numerous false alarms in short segments, errors that conventional duration-based measures barely penalize.

Figure 2: Overview of SER and BER across various datasets and systems.

System Comparison

Applying the metrics to different diarization systems reveals informative contrasts. VBx improves on the modularized system in both duration and segment evaluation. EEND-VC shows a seemingly paradoxical combination of higher DER and better SER. This suggests that it discriminates segments well but struggles with an arbitrary number of speakers. Such nuanced disparities are exactly what BER is designed to highlight.

Conclusion

The introduction of BER alongside SER marks a significant advancement in the evaluation of speaker diarization systems. These metrics address critical shortcomings of existing measures by incorporating comprehensive segment-level analysis and accommodating variations in speaker participation and utterance lengths. With validated effectiveness across diverse datasets and systems, BER sets a new standard for speaker diarization evaluation, offering a balanced view of system performance that can foster further developments and improvements in diarization methodology.