DiarizationLM: Speaker Diarization Post-Processing with Large Language Models

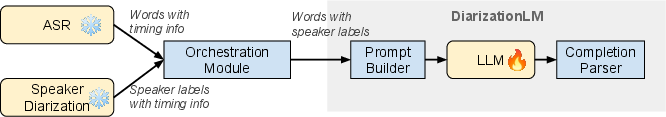

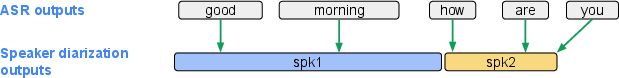

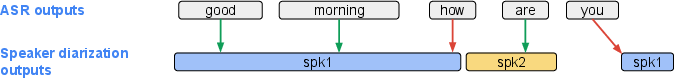

Abstract: In this paper, we introduce DiarizationLM, a framework that leverages large language models (LLMs) to post-process the outputs of a speaker diarization system. The framework can serve various goals, such as improving the readability of the diarized transcript or reducing the word diarization error rate (WDER). In this framework, the outputs of the automatic speech recognition (ASR) and speaker diarization systems are represented in a compact textual format, which is included in the prompt to an optionally finetuned LLM. The outputs of the LLM can then be used as the refined diarization results with the desired enhancement. As a post-processing step, this framework can be easily applied to any off-the-shelf ASR and speaker diarization systems without retraining existing components. Our experiments show that a finetuned PaLM 2-S model can reduce the WDER by a relative 55.5% on the Fisher telephone conversation dataset and by a relative 44.9% on the Callhome English dataset.
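To make the idea of a compact textual format concrete, the following is a minimal Python sketch of one possible representation, not the exact format used in the paper. The `<speaker:N>` tag syntax and the helper names `to_compact_text`, `from_compact_text`, and `speaker_mismatch_rate` are assumptions introduced here for illustration. The mismatch rate is a simplified stand-in for WDER: it assumes the hypothesis and reference words are already aligned one-to-one and that speaker labels are already mapped between the two, which a full WDER computation would have to establish first.

```python
from typing import List, Optional, Tuple

TAG_PREFIX = "<speaker:"  # hypothetical tag syntax, chosen for illustration


def to_compact_text(words: List[str], speakers: List[str]) -> str:
    """Serialize per-word speaker labels, emitting a tag only at speaker changes."""
    parts: List[str] = []
    prev: Optional[str] = None
    for word, spk in zip(words, speakers):
        if spk != prev:
            parts.append(f"{TAG_PREFIX}{spk}>")
            prev = spk
        parts.append(word)
    return " ".join(parts)


def from_compact_text(text: str) -> Tuple[List[str], List[Optional[str]]]:
    """Parse the compact text back into words and per-word speaker labels."""
    words: List[str] = []
    speakers: List[Optional[str]] = []
    current: Optional[str] = None  # stays None until the first tag is seen
    for token in text.split():
        if token.startswith(TAG_PREFIX) and token.endswith(">"):
            current = token[len(TAG_PREFIX):-1]
        else:
            words.append(token)
            speakers.append(current)
    return words, speakers


def speaker_mismatch_rate(hyp: List[str], ref: List[str]) -> float:
    """Fraction of words with a wrong speaker label.

    Simplification: assumes hyp and ref are aligned word-for-word and
    share the same speaker naming.
    """
    if len(hyp) != len(ref):
        raise ValueError("hypothesis and reference must have the same length")
    if not ref:
        return 0.0
    wrong = sum(h != r for h, r in zip(hyp, ref))
    return wrong / len(ref)


words = ["hello", "how", "are", "you", "good", "thanks"]
hyp_speakers = ["1", "1", "1", "2", "2", "2"]  # "you" is attributed to the wrong speaker
ref_speakers = ["1", "1", "1", "1", "2", "2"]

prompt_text = to_compact_text(words, hyp_speakers)
print(prompt_text)
print(speaker_mismatch_rate(hyp_speakers, ref_speakers))
```

In this sketch, the string produced by `to_compact_text` is what would be placed in the LLM prompt, and `from_compact_text` would be applied to the LLM completion to recover per-word speaker labels.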
