- The paper shows that diffusion models can operate as zero-shot classifiers by using the evidence lower bound (ELBO) to approximate class-conditional likelihoods.

- It employs Monte Carlo sampling and stage-wise pruning to enhance computational efficiency and improve classification accuracy.

- The study demonstrates competitive performance against established zero-shot and supervised classifiers, highlighting robustness to distributional shifts.

Your Diffusion Model is Secretly a Zero-Shot Classifier

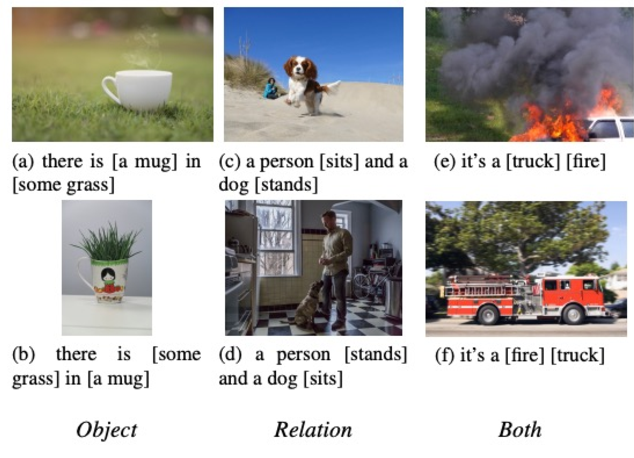

The paper "Your Diffusion Model is Secretly a Zero-Shot Classifier" explores the potential of large-scale text-to-image diffusion models, like Stable Diffusion, to function as zero-shot classifiers by leveraging their conditional density estimation capabilities. This research indicates that, while traditionally used for image generation, these models can also perform classification tasks without additional training and demonstrate superior multimodal compositional reasoning compared to discriminative approaches.

Introduction to Diffusion Models

Diffusion models traditionally operate through iterative processes: a fixed forward process that adds noise to data and a trained reverse process that removes it. This paper shows that these models' ability to model data distributions enables them to excel in zero-shot classification tasks by estimating class-conditional likelihoods.
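The fixed forward process can be illustrated with a minimal sketch. It assumes the standard DDPM closed form x_t = sqrt(ᾱ_t)·x + sqrt(1 − ᾱ_t)·ε and a linear beta schedule; the schedule values and the helper names `make_alpha_bar` and `add_noise` are illustrative assumptions, not details taken from the paper.

```python
import math
import random

def make_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for an assumed linear beta schedule."""
    alpha_bar, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar

def add_noise(x, t, alpha_bar, rng):
    """Sample x_t = sqrt(abar_t) * x + sqrt(1 - abar_t) * eps with eps ~ N(0, I)."""
    eps = [rng.gauss(0.0, 1.0) for _ in x]
    a = math.sqrt(alpha_bar[t])
    s = math.sqrt(1.0 - alpha_bar[t])
    x_t = [a * xi + s * ei for xi, ei in zip(x, eps)]
    return x_t, eps

alpha_bar = make_alpha_bar()
rng = random.Random(0)
x = [0.5, -1.0, 2.0]  # a tiny stand-in for an image
x_t, eps = add_noise(x, t=500, alpha_bar=alpha_bar, rng=rng)
```

The trained reverse process is the network that tries to recover `eps` from `x_t`; its prediction error is the quantity a diffusion-based classifier compares across classes.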

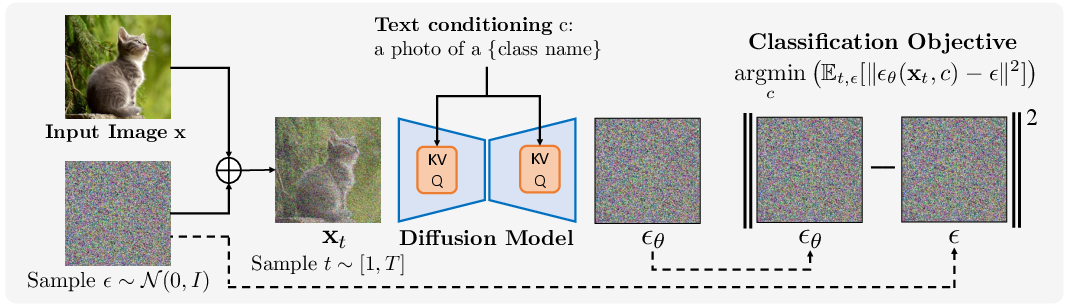

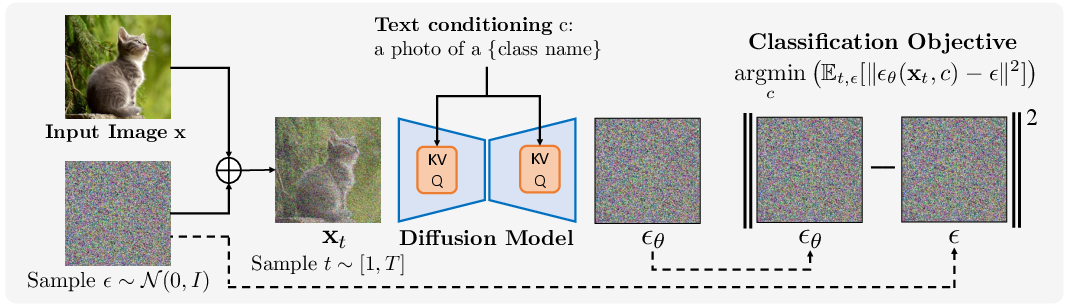

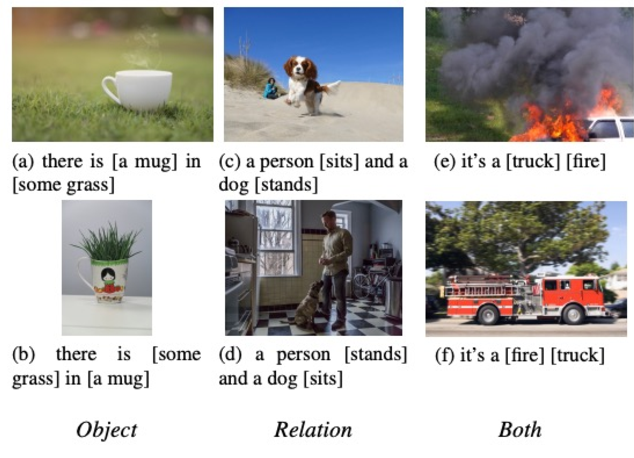

Figure 1: Overview of our Diffusion Classifier approach: Given an input image x, the model chooses the best-fitting conditioning input using an ELBO-based approximation.

The Diffusion Classifier uses the evidence lower bound (ELBO) of a diffusion model as an approximation of the class-conditional log-likelihood, a quantity that had seen little use in classification. This generative strategy offers an alternative to discriminative methods and performs competitively in zero-shot scenarios without additional training.
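For reference, the bound in question can be written in the standard noise-prediction form used for diffusion models. The notation below (noise predictor ε_θ, timestep weights w_t, cumulative schedule ᾱ_t, and a constant C that does not depend on the conditioning c) is the common DDPM convention and is an assumption here, not a transcription of the paper's equations.

```latex
\log p_\theta(x \mid c) \;\ge\;
  -\,\mathbb{E}_{t,\,\epsilon}\!\left[\, w_t \,\bigl\lVert \epsilon - \epsilon_\theta(x_t, c) \bigr\rVert^2 \right] + C,
\qquad
x_t = \sqrt{\bar\alpha_t}\, x + \sqrt{1 - \bar\alpha_t}\,\epsilon,
\quad \epsilon \sim \mathcal{N}(0, I)
```

Because C is the same for every c, comparing classes only requires comparing the expected noise-prediction errors.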

Practical Classification with Diffusion Models

Methodology

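Diffusion Classifier applies Bayes' theorem to class-conditional diffusion models to compute class probabilities. Each class-conditional likelihood is approximated by a Monte Carlo estimate of the ELBO, which reduces to the error in predicting the noise added to the image; the conditioning input with the lowest noise-prediction error is selected as the predicted class.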
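A minimal sketch of this procedure follows. It assumes a uniform prior over classes, so the posterior becomes a softmax over negative average noise-prediction errors. The function `toy_eps_model` is a hypothetical stand-in for a trained noise predictor (it is exact only for data concentrated at a single class prototype), and all names and values are illustrative.

```python
import math
import random

def toy_eps_model(x_t, abar, prototype):
    """Hypothetical stand-in for eps_theta(x_t, c); NOT a trained network."""
    a, s = math.sqrt(abar), math.sqrt(1.0 - abar)
    return [(xt - a * p) / s for xt, p in zip(x_t, prototype)]

def classify(x, prototypes, abars, n_samples=32, seed=0):
    rng = random.Random(seed)
    # Draw the (noise level, eps) pairs once and reuse them for every class.
    samples = [(rng.choice(abars), [rng.gauss(0.0, 1.0) for _ in x])
               for _ in range(n_samples)]
    errors = {}
    for label, proto in prototypes.items():
        total = 0.0
        for abar, eps in samples:
            a, s = math.sqrt(abar), math.sqrt(1.0 - abar)
            x_t = [a * xi + s * ei for xi, ei in zip(x, eps)]
            pred = toy_eps_model(x_t, abar, proto)
            total += sum((e - p) ** 2 for e, p in zip(eps, pred))
        errors[label] = total / n_samples  # Monte Carlo estimate of the error
    # Softmax over negative errors (uniform prior assumed), shifted for stability.
    best = min(errors.values())
    weights = {k: math.exp(-(v - best)) for k, v in errors.items()}
    z = sum(weights.values())
    return {k: w / z for k, w in weights.items()}

posterior = classify(
    x=[1.0, 0.0],
    prototypes={"cat": [1.0, 0.1], "dog": [-1.0, 0.0]},
    abars=[0.9, 0.5, 0.1],
)
prediction = max(posterior, key=posterior.get)
```

Reusing the same noise samples for every class means the comparison between classes is not affected by which noise happened to be drawn.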

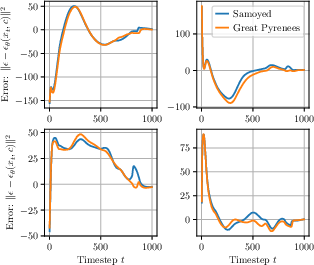

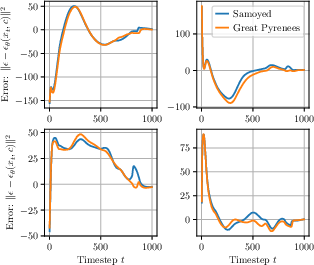

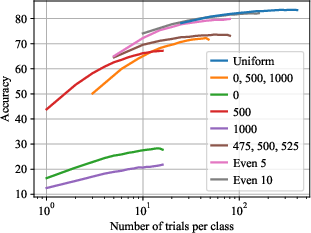

Figure 2: Epsilon-prediction errors for different prompts highlight variance reduction strategies critical to classification accuracy.

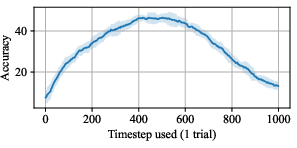

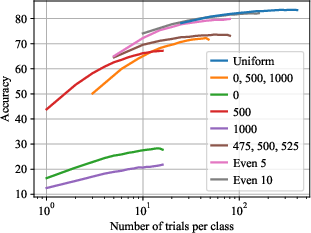

The paper proposes a sampling strategy that allocates epsilon evaluations evenly across timesteps, balancing computational overhead against classification accuracy.
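One way to spread a fixed budget of evaluations evenly over the timestep range is sketched below. The midpoint-of-equal-bins rule and the helper name `evenly_spaced_timesteps` are assumptions for illustration; the summary above does not specify the exact spacing.

```python
def evenly_spaced_timesteps(num_train_steps, n_evals):
    """Return n_evals timesteps at the midpoints of equal-width bins (assumed rule)."""
    return [(2 * i + 1) * num_train_steps // (2 * n_evals) for i in range(n_evals)]

timesteps = evenly_spaced_timesteps(1000, 5)
# timesteps == [100, 300, 500, 700, 900]
```

Each timestep in the list would then receive one noise-prediction evaluation per candidate class.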

Computational Efficiency

The paper outlines an optimized algorithm for Diffusion Classifier, which reduces computational costs without compromising accuracy. An efficient strategy involves stage-wise pruning of unlikely classes based on noise prediction errors, significantly decreasing the inference time required.
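The pruning idea can be sketched as follows. The stage sizes, the helper names `staged_classify` and `toy_error`, and the per-class error values are all hypothetical; only the overall scheme (evaluate a few trials, keep the classes with the lowest average error, repeat) comes from the description above.

```python
import random

def staged_classify(classes, error_fn, stages):
    """stages: list of (new_trials_per_class, classes_to_keep) pairs."""
    remaining = list(classes)
    history = {c: [] for c in classes}
    trial = 0
    for n_trials, n_keep in stages:
        for i in range(trial, trial + n_trials):
            for c in remaining:
                history[c].append(error_fn(c, i))
        trial += n_trials
        # Keep only the classes with the lowest mean error so far.
        remaining.sort(key=lambda c: sum(history[c]) / len(history[c]))
        remaining = remaining[:n_keep]
    return remaining[0], history

# Hypothetical mean noise-prediction errors; lower means a better fit.
BASE_ERROR = {"cat": 0.8, "dog": 0.9, "car": 1.5, "tree": 1.6}

def toy_error(label, trial):
    # Same noise for every class within a trial, mimicking shared samples.
    return BASE_ERROR[label] + 0.05 * random.Random(trial).gauss(0.0, 1.0)

prediction, history = staged_classify(
    classes=list(BASE_ERROR), error_fn=toy_error, stages=[(2, 2), (4, 1)]
)
total_evals = sum(len(v) for v in history.values())
```

In this toy run the two unlikely classes are dropped after two trials each, so 16 evaluations are used instead of the 24 that evaluating all four classes six times would need.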

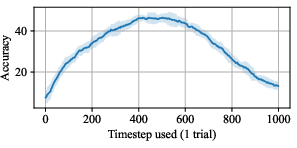

Figure 3: Accuracy on the Pets dataset when only a single timestep is evaluated per class, showing how accuracy depends on the noise level chosen.

Experimental Results

Zero-Shot Classification

Diffusion Classifier is competitive with state-of-the-art zero-shot discriminative classifiers such as CLIP. Its advantage is clearest on tasks requiring compositional reasoning: it significantly improves performance on the Winoground benchmark.

Figure 4: Zero-shot scaling curves comparing timestep sampling strategies for diffusion models.

Supervised Classification

Comparisons on ImageNet benchmarks show that while Diffusion Classifier does not entirely bridge the gap with discriminative models, its robustness to distributional shift and minimal training data requirements position it as a promising alternative.

Figure 5: Robustness to distribution shift without extra labeled data, comparing Diffusion Classifier with discriminative models.

Conclusion

These findings mark progress towards adopting generative models for discriminative tasks, showcasing their unique advantages and suggesting avenues for future work, particularly in enhancing classification efficiency and robustness. Future developments could extend these insights to other classification challenges, refining generative model applications in practical AI deployments.

Overall, the paper identifies significant opportunities for using diffusion models in classification tasks and challenges the prevailing dominance of discriminative models in this area.