- The paper introduces SR-MARL, which leverages structural information principles to automate hierarchical role discovery in multi-agent reinforcement learning.

- It employs a novel sparsification and optimization process, achieving up to a 6.08% improvement in average test win rate and a 66.30% reduction in deviation.

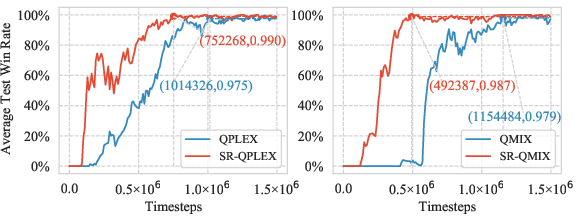

- Integration with QMIX and QPLEX demonstrates that SR-MARL improves coordination and adaptability in challenging StarCraft II micromanagement scenarios.

Effective and Stable Role-Based Multi-Agent Collaboration

The paper "Effective and Stable Role-Based Multi-Agent Collaboration by Structural Information Principles" (2304.00755) proposes a novel method for enhancing multi-agent reinforcement learning (MARL) by incorporating structural information principles into role discovery. The proposed framework, SR-MARL, aims to improve performance in cooperative scenarios by addressing common challenges such as scalability and partial observability.

Framework Overview

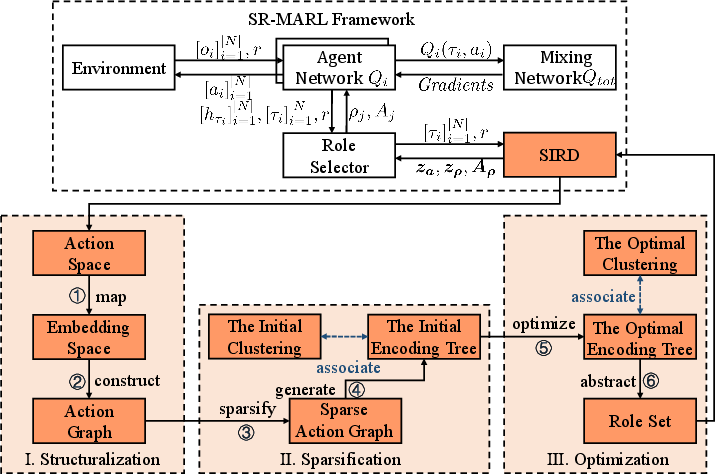

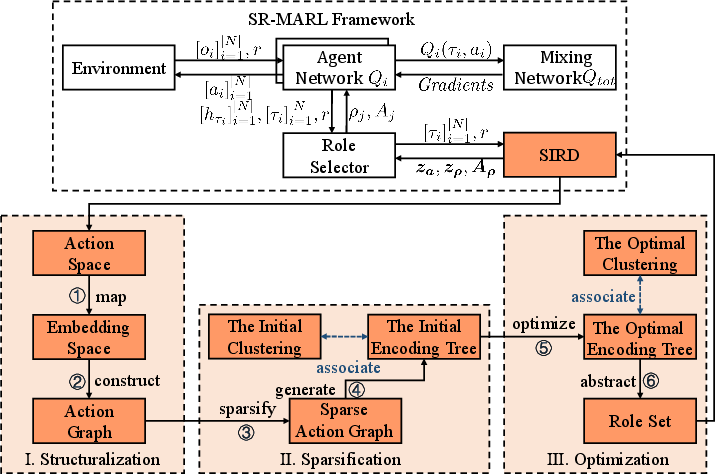

The SR-MARL framework is composed of several modules, including a role discovery module (SIRD), which transforms the task of role discovery into hierarchical action space clustering. The framework integrates seamlessly with value function factorization approaches, enabling flexible improvements in multi-agent coordination without manual tuning.

Figure 1: The overall framework of the SR-MARL.
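To make the link between action clusters and roles concrete, here is a minimal sketch. It assumes, as in earlier role-based methods such as RODE, that a role restricts an agent to a subset of the action space. The cluster contents in `ROLE_ACTIONS` and the function name `role_greedy_action` are hypothetical and are not taken from the paper.

```python
import numpy as np

# Hypothetical action clusters. In SR-MARL these would be produced by SIRD;
# here they are hard-coded purely for illustration.
ROLE_ACTIONS = {0: [0, 1, 2], 1: [3, 4], 2: [5]}

def role_greedy_action(q_values, role, available):
    """Pick the highest-valued action that is both in the role's cluster
    and currently available to the agent."""
    mask = np.zeros(len(q_values), dtype=bool)
    mask[ROLE_ACTIONS[role]] = True          # restrict to the role's action subset
    mask &= np.asarray(available, dtype=bool)  # respect environment availability
    if not mask.any():
        raise ValueError("role has no available action")
    masked_q = np.where(mask, q_values, -np.inf)
    return int(np.argmax(masked_q))

q = np.array([0.1, 0.9, 0.2, 5.0, 0.3, 0.0])
print(role_greedy_action(q, role=0, available=np.ones(6)))
```

Under this assumption, an agent in role 0 ignores the globally best action (index 3) because it lies outside that role's cluster, which is how role assignment narrows each agent's search space.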

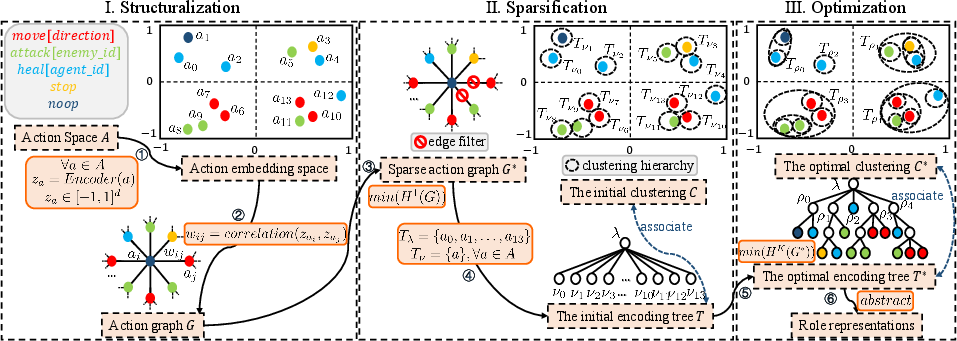

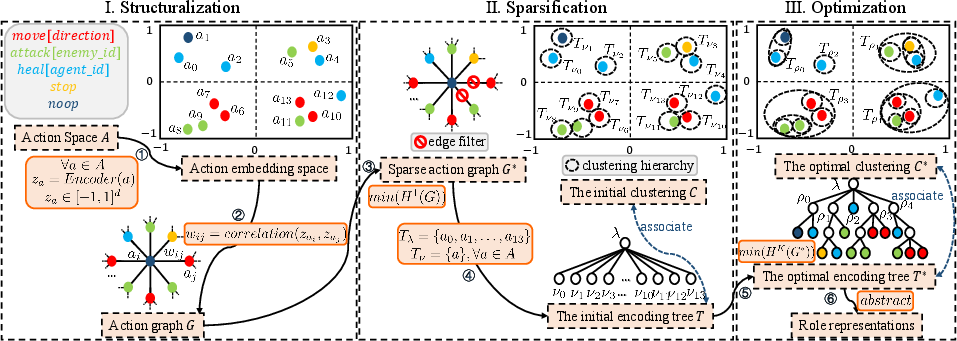

The SIRD method leverages structural information principles to derive an optimal hierarchical clustering of the action space using a multi-stage process:

- Structuralization: Constructs an action graph where actions are vertices connected by edges representing functional correlations. Action representations are learned using an encoder-decoder structure.

- Sparsification: Minimizes one-dimensional structural entropy to sparsify the action graph into a k-nearest neighbor graph, reducing its complexity.

- Optimization: Utilizes operators derived from K-dimensional structural entropy to iteratively refine the encoding tree, achieving optimal hierarchical clustering for role discovery.

Figure 2: The structural information principles-based role discovery.
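The sparsification and optimization stages can be sketched as follows. This is an illustrative simplification and not the paper's implementation: it assumes cosine similarity between action embeddings, unweighted edges, a two-level encoding tree (K = 2), and a single greedy merge operator. All function names are hypothetical. The entropy formulas are the standard structural entropy definitions: one-dimensional, H^1(G) = -Σ_i (d_i/vol) log2(d_i/vol); two-dimensional for a partition, H^2(G) = -Σ_j [(g_j/vol) log2(V_j/vol) + Σ_{i in X_j} (d_i/vol) log2(d_i/V_j)], where d_i is a vertex degree, V_j a cluster volume, g_j the weight of edges leaving cluster X_j, and vol the total degree.

```python
import numpy as np

def knn_graph(embeddings, k):
    """Symmetric 0/1 adjacency linking each action to its k most similar actions."""
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sim = unit @ unit.T                 # cosine similarity (an assumption)
    np.fill_diagonal(sim, -np.inf)      # never pick an action as its own neighbor
    adj = np.zeros(sim.shape)
    for i, row in enumerate(sim):
        adj[i, np.argsort(row)[-k:]] = 1.0
    return np.maximum(adj, adj.T)       # make the graph undirected

def one_dim_entropy(adj):
    """One-dimensional structural entropy of a graph."""
    p = adj.sum(axis=1) / adj.sum()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def sparsify(embeddings, candidate_ks):
    """Keep the candidate k whose k-NN graph has the lowest H^1."""
    graphs = {k: knn_graph(embeddings, k) for k in candidate_ks}
    best_k = min(graphs, key=lambda k: one_dim_entropy(graphs[k]))
    return best_k, graphs[best_k]

def two_dim_entropy(adj, clusters):
    """Two-dimensional structural entropy of a partition given as a list of sets."""
    deg = adj.sum(axis=1)
    vol = deg.sum()
    h = 0.0
    for members in clusters:
        idx = np.array(sorted(members))
        v = deg[idx].sum()                      # cluster volume V_j
        if v == 0:
            continue
        cut = v - adj[np.ix_(idx, idx)].sum()   # weight of edges leaving the cluster, g_j
        h -= (cut / vol) * np.log2(v / vol)
        d = deg[idx][deg[idx] > 0]
        h -= ((d / vol) * np.log2(d / v)).sum()
    return float(h)

def greedy_merge(adj):
    """Merge the pair of clusters that lowers H^2 the most until no merge helps."""
    clusters = [{i} for i in range(len(adj))]
    best = two_dim_entropy(adj, clusters)
    while len(clusters) > 1:
        trials = []
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                rest = [c for i, c in enumerate(clusters) if i not in (a, b)]
                merged = rest + [clusters[a] | clusters[b]]
                trials.append((two_dim_entropy(adj, merged), merged))
        h, merged = min(trials, key=lambda t: t[0])
        if h >= best - 1e-12:                   # no merge reduces the entropy
            break
        best, clusters = h, merged
    return clusters, best
```

A possible usage, with random embeddings standing in for learned action representations: `k, adj = sparsify(np.random.default_rng(0).normal(size=(8, 4)), [2, 3, 4])` followed by `roles, h = greedy_merge(adj)`. The greedy merge can stop at a local optimum; the iterative operators described above refine a deeper encoding tree than this two-level sketch does.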

Empirical Evaluation

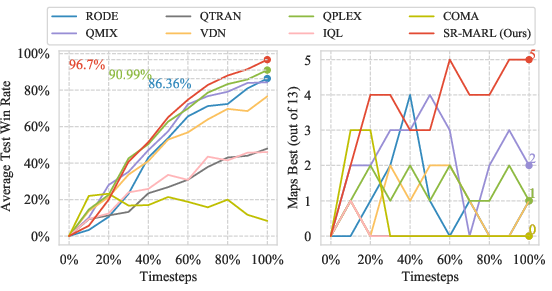

The SR-MARL framework was evaluated on the StarCraft II micromanagement benchmark, demonstrating performance improvements in various task scenarios (easy, hard, and super hard maps).

SR-MARL outperformed existing state-of-the-art MARL algorithms, achieving significant improvements in both average test win rates and stability across different task complexities, especially in high-exploration environments.
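This summary does not state whether the reported percentages are absolute or relative changes. The sketch below shows how the two readings differ when win rates are aggregated across random seeds. The numbers are made up for illustration and are not results from the paper, and the helper names are hypothetical.

```python
from statistics import mean, pstdev

def summarize(win_rates):
    """Mean and population standard deviation of per-seed test win rates."""
    return mean(win_rates), pstdev(win_rates)

def absolute_change(baseline, improved):
    return improved - baseline

def relative_change(baseline, improved):
    return (improved - baseline) / baseline

# Synthetic per-seed win rates (NOT from the paper).
baseline_runs = [0.80, 0.90, 0.70]
improved_runs = [0.88, 0.90, 0.86]

base_mean, base_std = summarize(baseline_runs)
new_mean, new_std = summarize(improved_runs)

print("absolute win-rate gain:", absolute_change(base_mean, new_mean))
print("relative win-rate gain:", relative_change(base_mean, new_mean))
print("relative change in deviation:", relative_change(base_std, new_std))
```

A lower deviation across seeds is what "stability" refers to here: the improved runs cluster more tightly around their mean.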

Integration and Ablation Studies

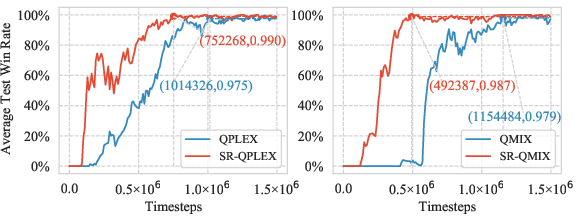

The framework's integrative capabilities were tested by combining SIRD with existing MARL methods such as QMIX and QPLEX, which resulted in enhanced coordination and learning efficiency.
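For context, QMIX combines per-agent Q-values through a mixing network whose weights are generated from the global state and forced to be non-negative, so the joint value is monotonic in every agent's value. The NumPy sketch below illustrates only that monotonic mixing step. The random hypernetwork weights, the parameter names, and the single-layer state bias are simplifying assumptions, and how SR-MARL feeds role-conditioned values into the mixer is not specified in this summary.

```python
import numpy as np

def elu(x):
    # ELU activation, written to avoid overflow for large positive inputs.
    return np.where(x > 0, x, np.exp(np.minimum(x, 0.0)) - 1.0)

def init_params(state_dim, n_agents, embed_dim, seed=0):
    """Random stand-ins for trained hypernetwork weights (hypothetical names)."""
    rng = np.random.default_rng(seed)
    return {
        "w1": rng.normal(size=(state_dim, n_agents * embed_dim)),
        "b1": rng.normal(size=(state_dim, embed_dim)),
        "w2": rng.normal(size=(state_dim, embed_dim)),
        "b2": rng.normal(size=state_dim),  # QMIX uses a two-layer net here; simplified
    }

def mix(agent_qs, state, params):
    """Monotonic mixing: Q_tot is non-decreasing in each agent's Q-value."""
    n_agents = agent_qs.shape[0]
    w1 = np.abs(state @ params["w1"]).reshape(n_agents, -1)  # non-negative weights
    hidden = elu(agent_qs @ w1 + state @ params["b1"])
    w2 = np.abs(state @ params["w2"])                        # non-negative weights
    return float(hidden @ w2 + state @ params["b2"])

params = init_params(state_dim=5, n_agents=3, embed_dim=4)
state = np.random.default_rng(1).normal(size=5)
agent_qs = np.array([0.2, -0.5, 1.3])  # e.g. values of each agent's chosen action
print(mix(agent_qs, state, params))
```

Because the absolute value keeps both weight matrices non-negative and ELU is increasing, raising any single agent's Q-value can never lower the mixed value, which is the property that lets each agent act greedily on its own values.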

Ablation studies confirmed the importance of structuralization and sparsification: variants lacking these components performed worse, indicating that both modules are needed for effective role discovery.

Figure 4: Average test win rates of the SR-MARL integrated with value decomposition methods QMIX and QPLEX.

Conclusion

The paper introduces a role discovery method based on structural information principles that substantially improves multi-agent collaboration in reinforcement learning. By automating role discovery through optimized hierarchical clustering of the action space, SR-MARL delivers more effective and stable performance. Future research could explore further refinement of the encoding tree and adaptation to diverse MARL environments.