Training Strategies for Vision Transformers for Object Detection

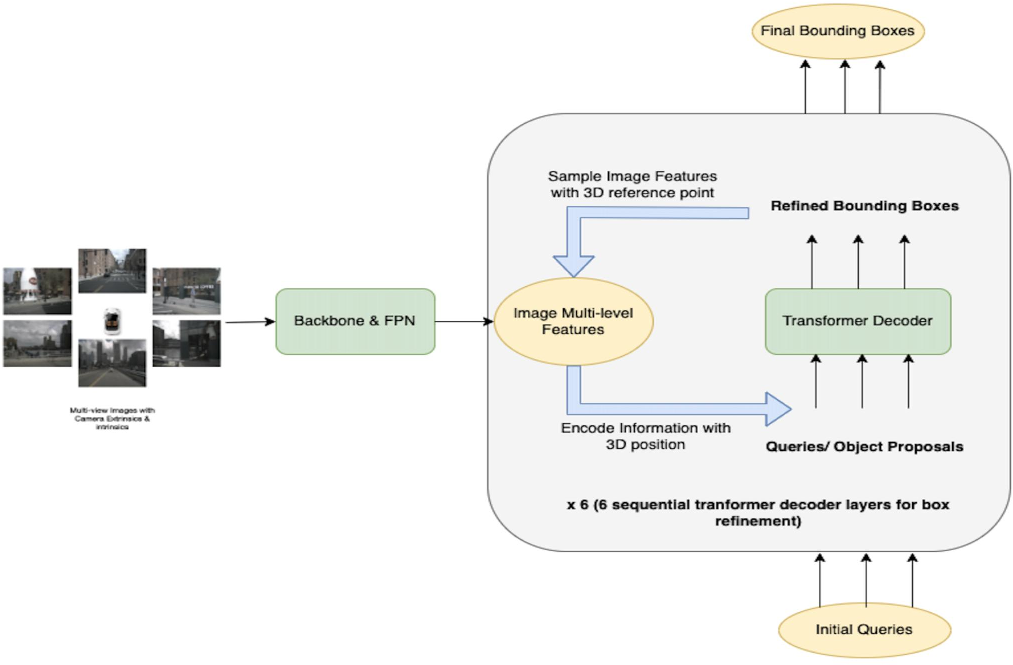

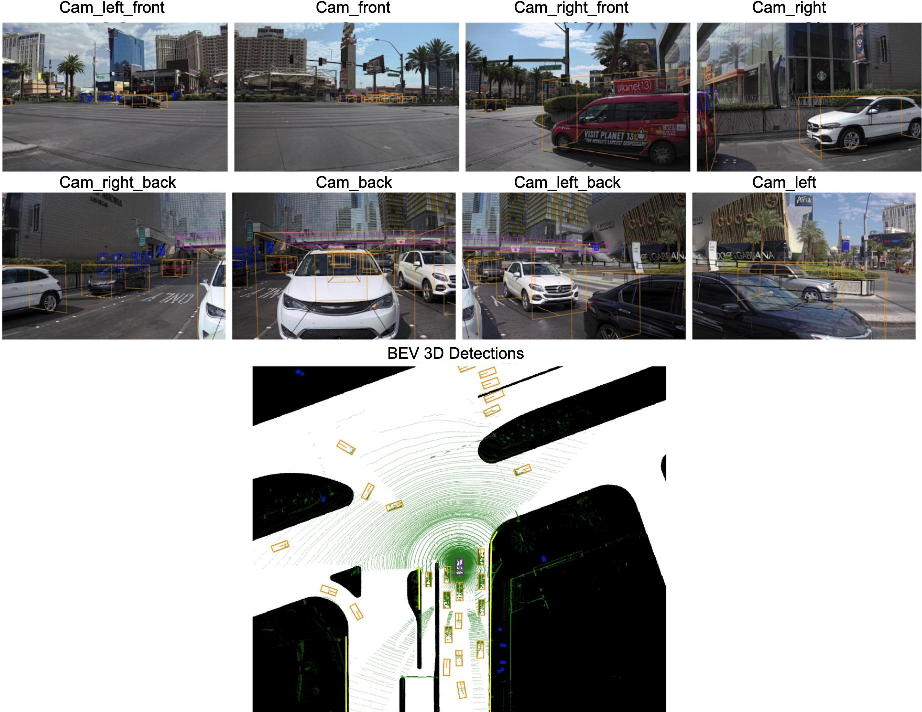

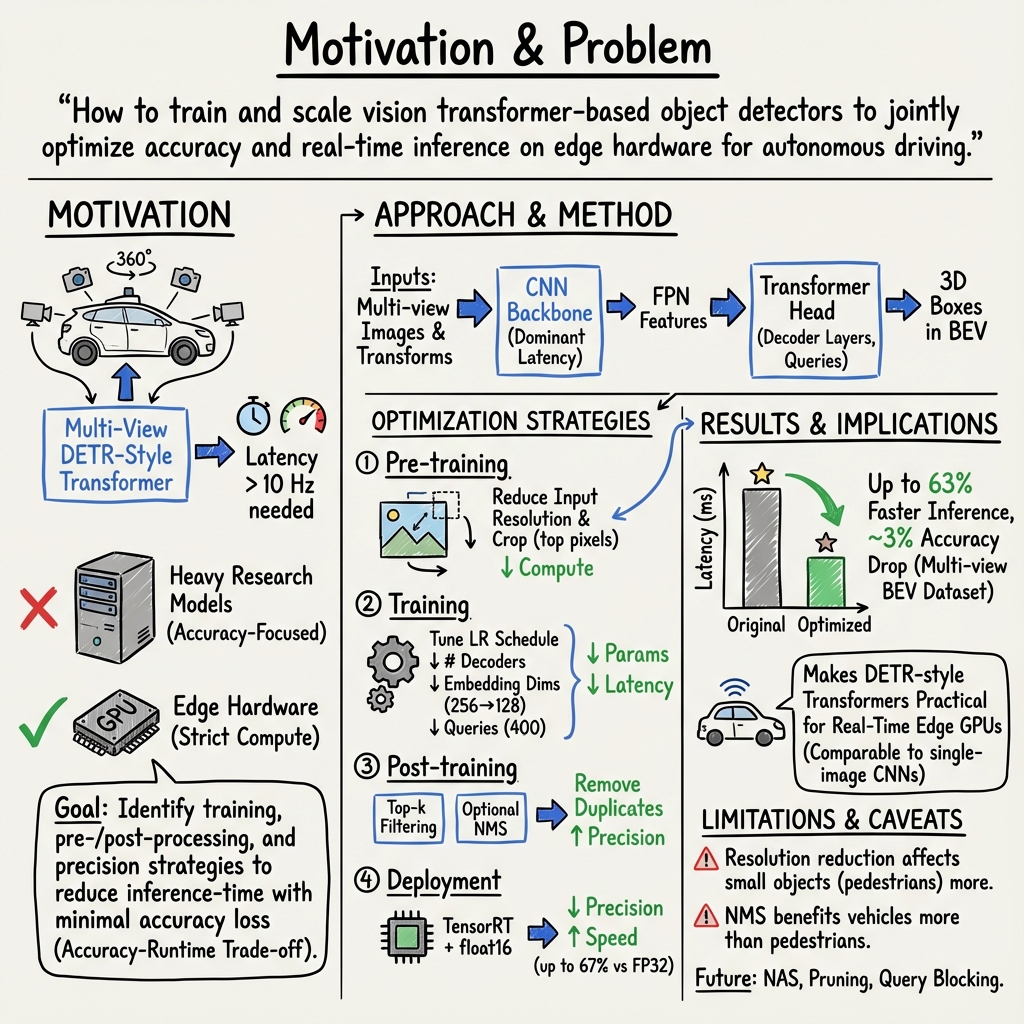

Abstract: Vision-based Transformers have found wide application in the perception module of autonomous driving for predicting accurate 3D bounding boxes, owing to their strong capability in modeling long-range dependencies between visual features. However, Transformers, initially designed for LLMs, have mostly focused on accuracy rather than on the inference-time budget. For a safety-critical system like autonomous driving, real-time inference on the on-board compute is an absolute necessity, which keeps our object detection algorithm under a very tight run-time budget. In this paper, we evaluate a variety of strategies for optimizing the inference time of vision-transformer-based object detection methods while keeping a close watch on any performance variations. Our chosen metric for these strategies is accuracy-runtime joint optimization. Moreover, for actual inference-time analysis, we profile our strategies with float32 and float16 precision using the TensorRT module, the most common format used by industry for deploying machine learning networks on edge devices. We show that our strategies improve inference time by 63% at the cost of a performance drop of merely 3% for the problem statement defined in the evaluation section. These strategies bring the inference time of Vision Transformer detectors even below that of traditional single-image CNN detectors like FCOS. We recommend that practitioners use these techniques to deploy hefty Transformer-based multi-view networks on a budget-constrained robotic platform.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list summarizes what remains missing, uncertain, or unexplored in the paper, framed as concrete and actionable directions for future research.

- Generalization uncertainty: results are reported only on a proprietary in-house dataset; replicate the study on public benchmarks (nuScenes, Waymo) with identical protocols to validate robustness across domains, weather, lighting, and city distributions.

- Limited class coverage: accuracy is reported for only vehicles and pedestrians; extend analyses to small/rare classes (e.g., cyclists, cones, signs) that are critical for autonomy and more sensitive to resolution/cropping.

- Ambiguity in 3D metrics: AP is used without clarifying whether IoU is BEV or full 3D; report 3D detection metrics (mATE, mASE, mAOE, mAVE, NDS) and class-specific localization/orientation errors that matter for planning.

- Accuracy impact of precision/format changes not measured: FP16/TensorRT results present runtime only; quantify accuracy/calibration changes after conversion (PyTorch→TensorRT; FP32→FP16), including numerical stability in attention layers and any class-specific degradation.

- Latency variance and worst-case behavior unknown: provide 95th/99th percentile latency, jitter, and tail behavior under realistic multi-camera input streaming and system load; verify end-to-end 10 Hz guarantees with safety margins.

- Hardware scope narrow: experiments are on NVIDIA T4 only; replicate on automotive edge platforms (NVIDIA Orin/Xavier), desktop GPUs, and different CUDA/driver versions to confirm portability of conclusions (e.g., “power-of-2” embedding dimensions).

- Quantization left unexplored: evaluate INT8 (post-training vs quantization-aware training), per-channel/per-tensor calibration, accuracy-drop trade-offs, and TensorRT deployment details for transformer components.

- Memory, bandwidth, and power budgets omitted: report VRAM footprint, GPU memory bandwidth utilization, energy consumption, and thermal behavior, especially under FP16 and TensorRT optimizations on edge devices.

- Pre-cropping safety/intrinsics gaps: top-row cropping assumes uninformative pixels; formally recompute camera intrinsics/extrinsics and assess impact across rigs (tilt, pitch), elevated objects, bridges/overpasses, and rare corner cases.

- Resolution scaling side-effects under-characterized: quantify small-object and long-range detection impacts beyond pedestrians; explore content-adaptive resolution (dynamic downscaling based on scene) and per-camera resolution policies.

- Fixed query budget robustness: tuning queries to ~400 based on dataset average leaves crowded scenes unaddressed; test failure modes when ground-truth count exceeds queries and develop adaptive/dynamic query allocation.

- NMS usage vs set-based loss: systematically characterize when NMS helps transformer detectors (class-/size-dependent thresholds, 3D vs BEV IoU), its effect on recall/duplicates, and whether learned duplicate suppression can replace post-hoc NMS.

- Reproducibility details missing: publish training schedules (epochs, batch size, optimizer, weight decay, augmentations, seed control), code/configs, and dataset splits to enable replication and variance estimation across runs.

- Compound scaling ablations incomplete: disentangle the cumulative effect of simultaneous changes (resolution, embedding size, queries, decoders) via controlled ablations and develop “compound scaling” rules akin to EfficientNet for transformer detectors.

- Baseline parity unclear: provide speed-accuracy Pareto comparisons against strong CNN (FCOS/YOLO) and transformer baselines (DETR3D, PETR, BEVFormer dense queries) under identical hardware and deployment settings.

- Confidence calibration not addressed: analyze calibration (ECE), threshold sensitivity, and the effect of top-k filtering on precision/recall and downstream planner reliability; consider calibration-aware deployment policies.

- Range restriction rationale: results are restricted to 60 m; justify this limit and study accuracy/runtime trade-offs across distance bands (e.g., 0–30 m, 30–60 m, >60 m) relevant for high-speed driving.

- MACs/params-to-latency mapping: develop predictive latency models that account for memory-bound behavior and kernel fusion, linking architectural changes (MACs/params) to observed inference-time across hardware.

- Transformer design space underexplored: examine attention head count, MLP width, positional encodings, and efficient attention variants (Linformer, Performer, FlashAttention), as well as pruning/distillation and transformer-focused NAS.

- Pipeline-level gaps: measure full system latency including pre-processing (undistortion, resizing, camera transforms), post-processing, inter-process communication, and concurrency with other autonomy modules.

- Distribution shift and failure modes: evaluate robustness under adverse weather, night, occlusion, motion blur, lens contamination, and sensor dropouts; characterize both accuracy and runtime stability under shifts.

- TensorRT conversion details: document plugin usage, layer fusion patterns, dynamic-shape support, precision fallback cases, and common failure/accuracy pitfalls during conversion for transformer heads.

- 3D box quality beyond AP: report centroid error, orientation/yaw error, and dimension error distributions; AP can mask localization errors that are critical for collision avoidance.

- Throughput vs latency trade-offs: study batching, multi-stream inference, and concurrency to understand how to balance throughput (frames/sec) and per-frame latency in multi-camera setups.

- Dataset access and validation: the in-house dataset is not available; provide summary statistics (size, class distribution, scene taxonomy), and perform cross-dataset generalization studies to substantiate claims of diversity/difficulty.

- Environmental impact quantification: move beyond qualitative statements to measure training/inference energy use and carbon footprint, and quantify savings from proposed scaling strategies.

- Security/robustness concerns: assess susceptibility to adversarial perturbations, compression artifacts, photometric distortions, and FP16-specific vulnerabilities; propose defenses or validation protocols.
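
The latency-variance item in the list above asks for 95th/99th percentile latency, jitter, and a check against a 10 Hz guarantee. As an illustration only, the following minimal Python sketch shows one way such a report could be computed from per-frame timings. The function names (`percentile`, `summarize_latencies`, `profile`), the nearest-rank percentile definition, and the use of the population standard deviation as a jitter measure are assumptions made here, not details from the paper. The 100 ms default budget is simply the per-frame period implied by 10 Hz.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def percentile(sorted_values: List[float], q: float) -> float:
    """Nearest-rank percentile of an ascending list, with q in (0, 100]."""
    if not sorted_values:
        raise ValueError("no latency samples")
    rank = max(1, math.ceil(q / 100.0 * len(sorted_values)))
    return sorted_values[rank - 1]


def summarize_latencies(latencies_ms: List[float],
                        budget_ms: float = 100.0) -> Dict[str, float]:
    """Summarize per-frame latencies against a budget (100 ms ~ 10 Hz)."""
    ordered = sorted(latencies_ms)
    misses = sum(1 for v in ordered if v > budget_ms)
    return {
        "mean_ms": statistics.fmean(ordered),
        "p50_ms": percentile(ordered, 50),
        "p95_ms": percentile(ordered, 95),
        "p99_ms": percentile(ordered, 99),
        "max_ms": ordered[-1],
        # Jitter is taken here as the population standard deviation (an assumption).
        "jitter_ms": statistics.pstdev(ordered),
        "budget_miss_rate": misses / len(ordered),
    }


def profile(fn: Callable[[], object], warmup: int = 10,
            iters: int = 200) -> List[float]:
    """Time a zero-argument callable and return per-call latencies in ms.

    For GPU inference, fn must block until the device has finished
    (e.g. by synchronizing inside fn); otherwise only the kernel launch
    is timed, not the actual computation.
    """
    for _ in range(warmup):  # warm-up runs are discarded
        fn()
    samples = []
    for _ in range(iters):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1e3)
    return samples


if __name__ == "__main__":
    # Stand-in workload; replace with a real end-to-end inference call.
    report = summarize_latencies(profile(lambda: sum(range(10_000))))
    for key, value in report.items():
        print(f"{key}: {value:.3f}")
```

Reporting `budget_miss_rate` alongside the percentiles makes tail behavior visible even when the mean latency looks comfortable, which is the concern that item raises.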
