Automatic Root Cause Analysis via Large Language Models for Cloud Incidents

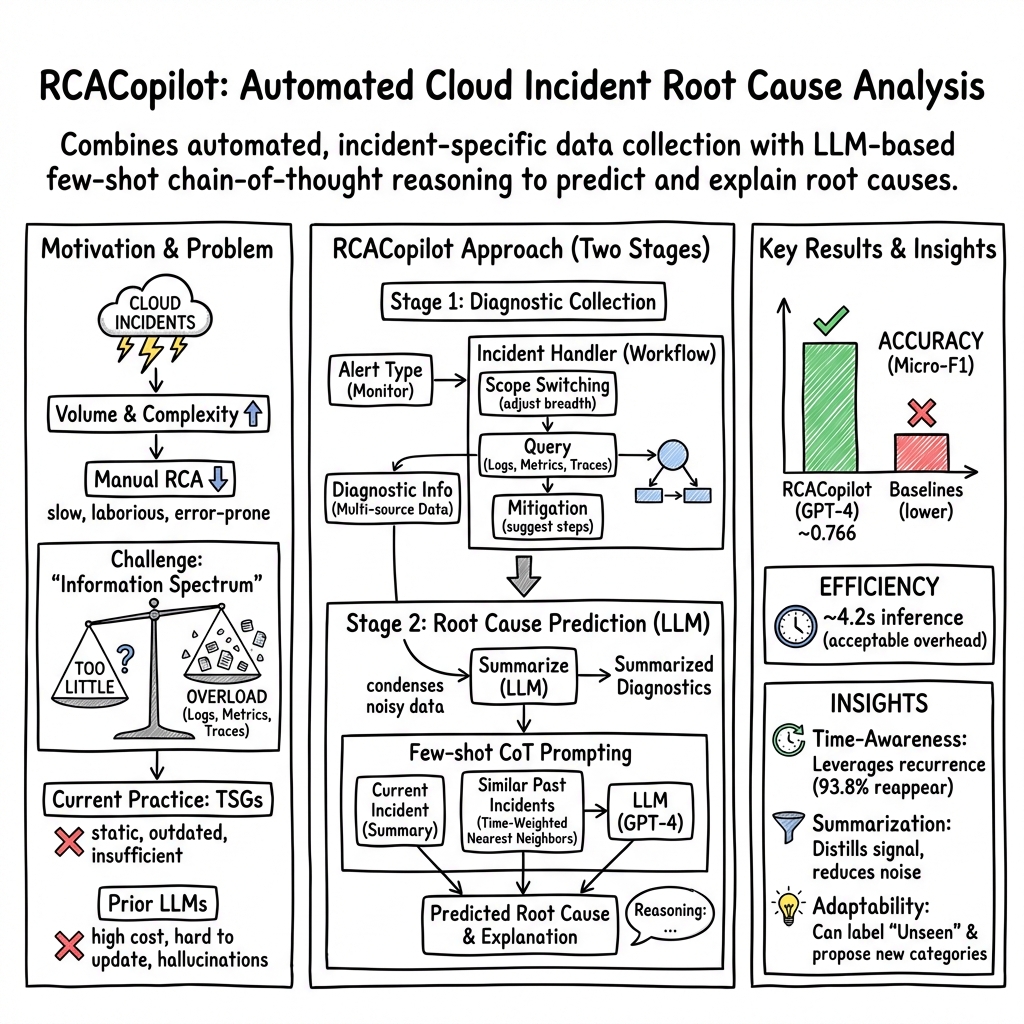

Abstract: Ensuring the reliability and availability of cloud services necessitates efficient root cause analysis (RCA) for cloud incidents. Traditional RCA methods, which rely on manual investigations of data sources such as logs and traces, are often laborious, error-prone, and challenging for on-call engineers. In this paper, we introduce RCACopilot, an innovative on-call system empowered by a large language model (LLM) for automating RCA of cloud incidents. RCACopilot matches incoming incidents to corresponding incident handlers based on their alert types, aggregates the critical runtime diagnostic information, predicts the incident's root cause category, and provides an explanatory narrative. We evaluate RCACopilot using a real-world dataset consisting of a year's worth of incidents from Microsoft. Our evaluation demonstrates that RCACopilot achieves RCA accuracy up to 0.766. Furthermore, the diagnostic information collection component of RCACopilot has been successfully in use at Microsoft for over four years.

Explain it Like I'm 14

What this paper is about

This paper introduces a system called RCACopilot that helps fix problems in big cloud services (like email or online storage) faster. When something goes wrong, engineers need to figure out the “root cause” (the real reason the problem happened) so they can fix it and stop it from happening again. RCACopilot uses smart software (large language models, or LLMs) plus expert-designed steps to automatically collect the right information and suggest the most likely cause, with a clear explanation.

The main questions the paper tries to answer

The researchers wanted to know:

- Can we automatically gather the most useful clues from many places (like logs, metrics, and traces) without overwhelming engineers with too much or too little information?

- Can an AI model look at those clues and accurately guess the type of problem and explain why?

- Will this actually help on-call engineers work faster and reduce errors in real cloud systems?

How RCACopilot works (in everyday terms)

Think of RCACopilot as a two-part system that acts like a smart detective:

Stage 1: Collecting the right clues (Diagnostic Information Collection)

Engineers create “incident handlers” — like recipes, checklists, or flowcharts — for different kinds of alerts. Each handler includes reusable actions:

- Scope switching action: Zooms in or out. For example, if a problem looks big (across many machines), it may zoom into a single machine to get more detailed clues.

- Query action: Pulls data from different sources. This is like asking specific questions of logs, metrics, or tools to gather key facts (e.g., “How many errors happened?” “Which process used most network ports?”).

- Mitigation action: Suggests immediate steps to reduce harm (e.g., “restart a service” or “engage a specific team”) if the cause looks familiar.

This stage combats the “information spectrum” problem:

- Sometimes there’s too little info (hard to tell what happened).

- Sometimes there’s too much (hard to find the useful parts). Handlers collect just the relevant data for each alert type, keeping things focused.
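To make the handler idea concrete, here is a minimal sketch of a handler as a small workflow of scope-switching, query, and mitigation actions. It is not the paper's implementation: the `Action` and `IncidentHandler` classes, the `PortUsageHigh` alert type, the port threshold, and the stubbed query results are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Context = Dict[str, str]  # key-value diagnostics gathered so far


@dataclass
class Action:
    name: str
    kind: str  # "scope_switch", "query", or "mitigation"
    run: Callable[[Context], Context]  # returns key-value pairs to merge into the context
    branch_key: Optional[str] = None   # context key whose value picks the next action
    next_by_value: Dict[str, str] = field(default_factory=dict)
    default_next: Optional[str] = None


@dataclass
class IncidentHandler:
    alert_type: str
    start: str
    actions: Dict[str, Action]

    def execute(self, ctx: Context, max_steps: int = 20) -> Tuple[Context, List[str]]:
        """Walk the action graph, merging each action's output into the context."""
        ctx, trace = dict(ctx), []
        current: Optional[str] = self.start
        while current is not None and len(trace) < max_steps:
            action = self.actions[current]
            ctx.update(action.run(ctx))
            trace.append(action.name)
            value = ctx.get(action.branch_key) if action.branch_key else None
            current = action.next_by_value.get(value, action.default_next)
        return ctx, trace


def count_ports(ctx: Context) -> Context:
    # Stub query: a real action would query logs or metrics. The threshold is made up.
    used = int(ctx.get("udp_ports_in_use", "0"))
    return {"over_threshold": "yes" if used > 10000 else "no"}


handler = IncidentHandler(
    alert_type="PortUsageHigh",  # hypothetical alert type
    start="count_ports",
    actions={
        "count_ports": Action("count_ports", "query", count_ports,
                              branch_key="over_threshold",
                              next_by_value={"yes": "zoom_to_machine"}),
        "zoom_to_machine": Action("zoom_to_machine", "scope_switch",
                                  lambda ctx: {"scope": "machine"},
                                  default_next="top_process"),
        "top_process": Action("top_process", "query",
                              lambda ctx: {"top_process": "Transport.exe"},  # stubbed result
                              default_next="suggest_restart"),
        "suggest_restart": Action("suggest_restart", "mitigation",
                                  lambda ctx: {"suggestion": "restart " + ctx["top_process"]}),
    },
)

result, trace = handler.execute({"scope": "forest", "udp_ports_in_use": "15000"})
print(trace)
print(result["suggestion"])
```

When the stubbed port count is below the threshold, the workflow stops after the first query and collects nothing further, which is one way a handler can keep the gathered data focused.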

Stage 2: Explaining the likely cause (LLM-based Root Cause Prediction)

After collecting clues, RCACopilot uses an LLM to predict the category of the root cause and explain its reasoning. It does this in a few smart steps:

- Summarization: The AI first summarizes long, messy logs into short, readable notes (about 120–140 words). This keeps only the important details.

- Finding similar past cases: RCACopilot turns each incident’s summary into a “text fingerprint” (an embedding vector). It then finds past incidents that look similar, giving more weight to recent ones because similar problems often reappear within weeks.

- Reasoned selection: Using “few-shot” examples (a small set of similar past incidents and their root causes), the AI picks the most likely category for the current incident and explains why — like a detective showing how the clues match a known case. It also includes an “Unseen incident” option if none of the past cases fit.
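The "finding similar past cases" step can be sketched as a nearest-neighbor search whose score decays with the time gap between incidents. The sketch below rests on several assumptions: it combines Euclidean distance and the decay term as `1 / (1 + distance) * exp(-alpha * |dt|)`, measures time in days, and uses toy 2-D vectors in place of real FastText embeddings. The defaults `alpha=0.3` and `k=5` follow the values this page attributes to the paper.

```python
import math
from typing import List, Sequence, Tuple

Incident = Tuple[Sequence[float], float, str]  # (embedding, day index, root-cause category)


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def similarity(vec_a: Sequence[float], vec_b: Sequence[float],
               day_a: float, day_b: float, alpha: float = 0.3) -> float:
    # Assumed combination: closeness in embedding space times exponential time decay.
    closeness = 1.0 / (1.0 + euclidean(vec_a, vec_b))
    recency = math.exp(-alpha * abs(day_a - day_b))
    return closeness * recency


def top_k(query_vec: Sequence[float], query_day: float,
          history: List[Incident], k: int = 5) -> List[Tuple[float, str]]:
    """Return the k most similar past incidents as (score, category) pairs."""
    scored = [(similarity(query_vec, vec, query_day, day), label)
              for vec, day, label in history]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy history: vectors and day indices are made up for illustration.
history: List[Incident] = [
    ((0.1, 0.0), 60.0, "HubPortExhaustion"),  # very close in text, but 40 days old
    ((0.5, 0.0), 99.0, "DeliveryHang"),       # less close, but from yesterday
    ((3.0, 4.0), 100.0, "CodeRegression"),    # same day, but far away in text
]

neighbors = top_k((0.0, 0.0), 100.0, history, k=2)
for score, label in neighbors:
    print(f"{label}: {score:.4f}")
```

With these toy numbers the 40-day-old incident drops out of the top two even though its text is the closest match, which illustrates how strongly a decay of this form favors recent incidents.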

What they found and why it matters

Here are the most important results from testing RCACopilot on a year of real Microsoft cloud incidents (including a large email service):

- Accuracy: RCACopilot reached a Micro-F1 score of 0.766 and a Macro-F1 score of 0.533 when predicting root cause categories, beating other methods they tested.

- Speed: It runs quickly (about 4.2 seconds per prediction), making it practical during live incidents.

- Real-world use: The data-collection part of RCACopilot has been used at Microsoft for over four years. The root cause prediction part was tested and then actively used for several months by an incident response team.

- Handles tricky patterns: The system recognizes that many incidents repeat within about 20 days and uses that to pick better examples. It also helps when the cause is new (about 25% of incidents), offering an “Unseen incident” label with an explanation rather than guessing wrongly.

- Reduces overload: By guiding engineers to the right clues and offering clear AI explanations, it cuts down on time spent digging through huge amounts of data or chasing outdated troubleshooting guides.
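The gap between the two accuracy numbers is easier to read with a small worked example. The sketch below computes Micro-F1 and Macro-F1 from scratch on made-up labels (the category names are borrowed from the paper's examples, but the data is invented). For single-label classification, Micro-F1 equals plain accuracy, while Macro-F1 averages per-category F1 and so is pulled down by rare categories the model gets wrong.

```python
from collections import Counter
from typing import Dict, List, Tuple


def f1(tp: int, fp: int, fn: int) -> float:
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def micro_macro_f1(y_true: List[str], y_pred: List[str]) -> Tuple[float, float, Dict[str, float]]:
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted category gains a false positive
            fn[truth] += 1  # true category gains a false negative
    per_class = {c: f1(tp[c], fp[c], fn[c]) for c in labels}
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    macro = sum(per_class.values()) / len(labels)
    return micro, macro, per_class


# Made-up data: one common category, one rare category that is never predicted.
y_true = ["HubPortExhaustion"] * 8 + ["DeliveryHang"] * 2
y_pred = ["HubPortExhaustion"] * 10

micro, macro, per_class = micro_macro_f1(y_true, y_pred)
print(f"micro={micro:.3f} macro={macro:.3f}")
```

Here the model is right on 8 of 10 incidents, so Micro-F1 is 0.8, but it never identifies the rare category, so Macro-F1 falls to about 0.444.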

What this means for the future

RCACopilot shows that combining expert-designed workflows with AI can make cloud systems more reliable:

- Faster fixes: On-call engineers get the right information and a clear, AI-backed explanation of likely causes, so they can act sooner.

- Less stress: Automating repetitive, time-consuming steps (like searching dozens of logs or outdated guides) reduces burnout.

- Better resilience: As systems change over time, RCACopilot can adapt—engineers can update handlers easily, and the AI learns from new incidents.

- Safer operations: Quickly finding root causes helps prevent repeat outages and keeps services people rely on running smoothly.

In short, RCACopilot is like giving cloud engineers a smart co-pilot: it gathers the right clues, finds similar past problems, explains what’s likely happening now, and helps them fix issues faster and more confidently.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper presents RCACopilot for automated RCA using LLMs and incident handlers. The following unresolved issues and gaps could guide future research:

- External validity and portability

- Generalization beyond a single Microsoft email service: applicability to other cloud services, architectures (microservices, serverless), and organizations remains untested.

- Dependence on organization-specific tooling and access (internal scripts, data stores) limits reproducibility and portability; strategies for vendor-agnostic deployment are not addressed.

- Data, taxonomy, and labeling

- Ground-truth reliability: incident category labels are assigned by OCEs; inter-annotator agreement, label noise, and consistency of the taxonomy over time are not evaluated.

- Multi-causality: many incidents have multiple interacting root causes; the system treats RCA as single-label classification without support for multi-label or hierarchical causes.

- Taxonomy governance: procedures to propose, validate, name, and merge/split categories (especially for “unseen incidents”) are unspecified.

- Class imbalance: macro-F1 (0.533) lags well behind micro-F1 (0.766), which signals poor performance on rare categories; methods to address long-tail categories are not explored.

- Handler construction and matching

- Handler matching accuracy: how incidents are mapped to handlers and the error rate of mis-mapping are not evaluated; no fallback or recovery strategy is described.

- Maintenance burden: handlers are manually crafted/updated by OCEs; no tools for mining common actions, recommending edits, or measuring handler coverage and drift.

- Safety and load of actions: executing queries/scripts during incidents may exacerbate system load or require elevated permissions; rate limiting, sandboxing, and auditing are not discussed.

- Versioning impact: while versions are stored, there is no method to measure how handler changes affect prediction quality, MTTR, or incident outcomes.

- Modeling and prompting

- Embedding choice: reliance on FastText is motivated by efficiency but not compared with modern sentence embeddings (e.g., MPNet, SimCSE, E5); the trade-off between accuracy, cost, and latency is unquantified.

- Temporal similarity weighting: the heuristic exp(−α|Δt|) with α=0.3 is set empirically; sensitivity analysis, principled selection, or adaptive α per category is missing.

- Demonstration selection: fixed K=5 nearest neighbors lacks ablation; effects of K, diversity constraints, category balancing, and de-duplication are unreported.

- Summarization fidelity: the 120–140 word summary may omit critical signals; there is no evaluation of information loss, robustness across prompt templates, or fallback for very noisy/short inputs.

- Prompt sensitivity and robustness: no study of prompt variations, CoT vs. no-CoT, or adversarial/log-induced prompt injection (logs may contain untrusted content).

- Uncertainty and abstention: no calibrated confidence measure, thresholding, or selective prediction is presented beyond a generic “Unseen incident” option; criteria for “unseen” are unclear.

- Explanations and causality

- Explanation faithfulness: LLM-generated rationales are not validated for faithfulness vs. plausibility; no human evaluation or causal grounding is provided.

- Correlation vs. causation: the approach relies on textual/semantic similarity and demonstrations rather than causal inference; methods to disambiguate coincident symptoms from true causes are absent.

- Multi-source data integration

- Structured/temporal signals: metrics, traces, and time-series patterns are not modeled natively; numeric/graph features are lost when reduced to text summaries.

- Conflict resolution: mechanisms to reconcile inconsistent or conflicting signals across sources are not specified.

- Missing data and noise: robustness to incomplete, delayed, or corrupted telemetry/logs is not evaluated.

- Evaluation and impact

- Baselines and ablations: limited detail on baselines (e.g., fine-tuned classifiers, retrieval-augmented non-LLMs), ablations (summary/no summary, CoT, embedding choice), and statistical significance.

- Dataset splits and leakage: train/test protocol, time-aware splits, and leakage risks when using temporal similarity are not documented.

- Operational impact: no measured improvements in MTTR/MTTI, page time reduction, or on-call cognitive load via user studies or A/B tests.

- Error analysis: no breakdown of failure modes by category, severity, data availability, or handler coverage.

- Deployment, security, and privacy

- LLM provenance: the specific LLM(s) used (on-prem vs. external API) and data governance for sensitive internal logs/PII are not detailed; privacy-preserving or on-device alternatives are not discussed.

- Cost and latency: end-to-end token/cost accounting, latency breakdown (summarization, retrieval, prediction), and scalability under high incident volume are missing.

- Security risks: prompt injection via logs, data exfiltration, and supply-chain risks from integrating external tools/LLMs lack mitigations and monitoring plans.

- Lifecycle learning and drift

- Continuous learning: pipelines to incorporate OCE feedback, correct mispredictions, retrain embeddings/LLMs, and handle concept drift are not described.

- Recurrence handling: while temporal proximity is used for retrieval, strategies to prevent entrenched errors when the “known issue” heuristic is wrong are not articulated.

- Mitigation guidance

- From diagnosis to action: how predicted categories translate into actionable, safe mitigation steps is unclear; evaluation of mitigation suggestions (correctness, risk, recovery time) is absent.

- Human-in-the-loop thresholds: criteria for when to defer to OCEs vs. auto-suggest or auto-execute mitigations are unspecified.

- Scalability and systems aspects

- Vector search infrastructure: index type, memory footprint, and query time for large incident corpora are not reported; behavior at scale (e.g., many services/regions) is unknown.

- Concurrency and prioritization: handling of simultaneous incidents, resource contention among handlers, and prioritization policies are not covered.

- Compliance and ethics

- Accountability: processes for auditing decisions, traceability of LLM outputs, and post-incident review integration are not described.

- Fairness and bias: potential biases across teams/services or over-prioritization of recent incidents (due to temporal weighting) are not analyzed.

These gaps suggest concrete avenues for future work: rigorous user-centric evaluations (MTTR, cognitive load), robust modeling (uncertainty, multi-label RCA, structured data fusion), security/privacy-hardening for LLM-in-the-loop ops, automated handler management, stronger baselines and ablations, and systematic studies of portability and scaling.

Practical Applications

Immediate Applications

Below are deployable-now applications that leverage RCACopilot’s incident handlers, multi-source data aggregation, and LLM-based summarization and root-cause prediction (validated in Microsoft’s production with 4+ years of use for data collection and months of active deployment for prediction; micro-F1 up to 0.766).

- Automated diagnostic data collection for incidents

- Description: Use incident handlers (scope-switching, query, mitigation actions) to automatically gather logs, metrics, traces, and script outputs for each alert type, avoiding “information spectrum” issues (too little/too much data).

- Sectors: Software/cloud (SRE/DevOps), telecom NOCs, fintech ops, e-commerce, gaming ops.

- Tools/workflows: “Handler Studio” (no-/low-code GUI to define reusable actions), plugins for Datadog/Splunk/Elastic/Azure Monitor/CloudWatch/New Relic.

- Assumptions/dependencies: Access to observability data sources and internal investigation scripts; alert types defined and mapped to handlers; role-based access and logging/PII controls.

- LLM-driven incident summarization from multi-source diagnostics

- Description: Summarize verbose, low-readability logs/metrics/traces into concise, 120–140-word briefs to reduce cognitive load and accelerate triage.

- Sectors: All sectors with 24/7 ops; MSPs and SMB IT helpdesks.

- Tools/workflows: “Diagnostic Summarizer” microservice; ticket enrichment extensions for ServiceNow/Jira/Opsgenie/PagerDuty.

- Assumptions/dependencies: LLM access (API or on-prem); guardrails to prevent leakage of sensitive content; token and latency budgets.

- Root-cause category prediction with explanations

- Description: Predict incident root-cause category and provide a human-readable rationale using few-shot prompting with retrieved, recent similar cases.

- Sectors: Software/cloud, telecom, finance (trading/clearing systems), media streaming, SaaS.

- Tools/workflows: “RCA Copilot” panel in on-call consoles; postmortem pre-fill of “Cause” and “What happened” sections.

- Assumptions/dependencies: Curated taxonomy of root-cause categories; sufficient historical incidents to power embeddings; expert review-in-the-loop to mitigate misclassifications; SLAs for inference latency.

- Known-issue detection and rapid mitigation routing

- Description: Nearest-neighbor retrieval (with temporal decay) flags recurring incidents and proposes previously effective mitigations or team engagement.

- Sectors: Cloud/SaaS, fintech, telecom.

- Tools/workflows: “Known-Issue Service”; automated team paging rules; runbook linkers.

- Assumptions/dependencies: Historical incident store with timestamps and outcomes; change-management sync to keep mitigations current; routing integration with chat/ITSM.

- TSG modernization: convert static runbooks into executable handlers

- Description: Refactor static troubleshooting guides into versioned, executable workflows with reusable actions and scope-switching logic.

- Sectors: Any ops-heavy organization; MSPs.

- Tools/workflows: “Runbook-to-Handler” migration pipeline; versioned handler repository; review and rollback tools.

- Assumptions/dependencies: SMEs to define actions and validate execution; governance to manage updates; secure script execution environment.

- Incident triage and alert-to-handler matching

- Description: Automatically map incoming alerts to the correct handlers and teams, reducing manual triage effort and time-to-mitigation.

- Sectors: Cloud/SRE, SOC/NOC operations, enterprise IT.

- Tools/workflows: Alert router with handler matching; triage dashboards.

- Assumptions/dependencies: Stable alert schemas; mapping rules; fallbacks for unknown alerts.

- Postmortem drafting and knowledge capture

- Description: Generate structured incident narratives (symptoms, cause, scope, actions) from summaries and predictions for faster, more consistent postmortems.

- Sectors: All ops teams; compliance-heavy sectors (finance, healthcare) where documentation quality matters.

- Tools/workflows: “Postmortem Assistant”; templates with taxonomy alignment.

- Assumptions/dependencies: Policy for AI-assisted documentation; reviewer sign-off; data retention/audit requirements.

- Incident de-duplication and trend detection

- Description: Use embeddings to cluster similar incidents, reduce ticket noise, and highlight rising categories before they spike.

- Sectors: SaaS/Cloud, telecom, retail IT.

- Tools/workflows: “Incident Similarity Service”; trend dashboards; backlog prioritization.

- Assumptions/dependencies: Quality embeddings; sufficient volume; threshold tuning to avoid over- or under-grouping.

- Onboarding and training aids for on-call engineers

- Description: Use handler logic and explanations to create interactive, scenario-based training modules that reflect real production workflows.

- Sectors: Any organization with rotating on-call duties.

- Tools/workflows: “RCA Training Simulator”; sandboxed replay of anonymized incidents.

- Assumptions/dependencies: Curated incident cases; anonymization; training time allocation.

- ITSM integration to auto-populate and enrich tickets

- Description: Pre-fill tickets with summaries, predicted category, known-issue references, and suggested next actions.

- Sectors: Enterprise IT, MSPs.

- Tools/workflows: Connectors to ServiceNow/Jira/Remedy; enrichment webhooks.

- Assumptions/dependencies: API access and field mappings; change governance; rate limits and cost control.
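Several of the applications above depend on few-shot prompting with retrieved similar cases plus an "Unseen incident" option. As a rough sketch of how such a prompt might be assembled, the function below lists each retrieved case as a lettered option and appends the unseen option last. The prompt wording, the `build_prompt` name, and the example summaries are assumptions for illustration; this is not the paper's actual prompt.

```python
from typing import List, Tuple

UNSEEN = "Unseen incident"


def build_prompt(current_summary: str, demos: List[Tuple[str, str]]) -> str:
    """Build a multiple-choice prompt from (summary, category) demonstrations."""
    lines = [
        "Select the past incident whose root cause most likely matches the current incident.",
        "Explain your reasoning, then give the chosen option letter.",
        "",
        f"Current incident summary: {current_summary}",
        "",
        "Options:",
    ]
    for i, (summary, category) in enumerate(demos):
        lines.append(f"{chr(ord('A') + i)}. [{category}] {summary}")
    # The final option lets the model abstain when no retrieved case fits.
    lines.append(f"{chr(ord('A') + len(demos))}. [{UNSEEN}] None of the above cases fit.")
    return "\n".join(lines)


# Illustrative demonstrations; the summaries are invented paraphrases.
demos = [
    ("UDP hub ports were exhausted on a front door machine, mostly by Transport.exe.",
     "HubPortExhaustion"),
    ("Message delivery stalled and queues kept growing on several machines.",
     "DeliveryHang"),
]

prompt = build_prompt("Outbound proxy probe failed twice with DNS resolution errors.", demos)
print(prompt)
```

Keeping the unseen option as an explicit choice, rather than a post-hoc threshold, means the model's explanation can say why none of the retrieved cases match.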

Long-Term Applications

These opportunities require additional research, scaling, verification, or organizational/process changes.

- Closed-loop self-healing with safe automated mitigations

- Description: When confidence and safeguards are high, automatically execute mitigation actions (e.g., restart service, scale-out, roll back deployment) triggered by RCA predictions and handler logic.

- Sectors: Cloud/SaaS, telecom, large-scale e-commerce.

- Tools/workflows: Policy engine with canary/guardrail checks; progressive rollout and auto-rollback; human override.

- Assumptions/dependencies: Robust confidence estimation; safe-action catalogs; strong observability for validation; change-management approvals.

- Cross-service, cross-cloud RCA and propagation analysis

- Description: Diagnose incidents spanning microservices, regions, and vendors, inferring cascades and shared root causes across distributed environments.

- Sectors: Multicloud enterprises, supply-chain-integrated services, fintech exchanges.

- Tools/workflows: Federated data connectors; standardized incident ontology; distributed tracing enrichment.

- Assumptions/dependencies: Data-sharing agreements; standardized schemas; latency-tolerant inference; privacy/security constraints.

- Proactive incident prevention and early warning

- Description: Use temporal-aware similarity and weak-signal patterns to forecast likely recurrences within days/weeks and prompt preemptive checks.

- Sectors: Cloud/SaaS, telecom, OT/Manufacturing.

- Tools/workflows: “Early Warning Service”; scheduled health checks generated from predicted weak points.

- Assumptions/dependencies: Reliable recurrence patterns; minimal false positives; clear escalation paths.

- Sector-specific, safety-critical RCA with verified LLMs

- Description: Extend to regulated domains (healthcare, energy, aviation) with formally verified or constrained LLM components and rigorous traceability.

- Sectors: Healthcare IT, energy grid ops, automotive/AV ops.

- Tools/workflows: Constrained generation, retrieval-augmented generation with validated corpora, formal safety checks.

- Assumptions/dependencies: Certification-ready pipelines; domain datasets; model auditability and deterministic fallbacks.

- Standardized incident taxonomy and benchmarking datasets

- Description: Community-agreed ontologies and shared (synthetic/anonymized) datasets for evaluating LLM-based RCA and handler strategies.

- Sectors: Academia, standards bodies, tooling vendors.

- Tools/workflows: Open benchmarks; evaluation harnesses; shared metrics (e.g., micro-/macro-F1, time-to-mitigation).

- Assumptions/dependencies: Anonymization and legal frameworks; contributor incentives; governance boards.

- Continual learning and feedback-driven adaptation without full fine-tuning

- Description: RAG-style pipelines that update embeddings, exemplars, and handlers using human feedback and incident outcomes while avoiding costly finetuning.

- Sectors: All.

- Tools/workflows: Feedback capture in on-call tools; human-in-the-loop labeling; drift detection.

- Assumptions/dependencies: Reliable feedback signals; guardrails against data contamination and concept drift.

- Privacy-preserving, on-prem LLM inference for sensitive logs

- Description: Deploy compact, domain-tuned LLMs on-premises or in VPCs to keep operational data private while enabling RCA automation.

- Sectors: Finance, healthcare, government, defense.

- Tools/workflows: Model distillation/quantization; KMS-integrated secret handling; DLP and redaction layers.

- Assumptions/dependencies: Hardware capacity; MLOps for private models; compliance audits.

- Policy and governance for AI-assisted incident response

- Description: Organizational and regulatory frameworks specifying explainability, audit trails, human oversight, and data handling for AI in incident management.

- Sectors: Cross-sector policy, risk, and compliance.

- Tools/workflows: AI governance dashboards; incident AI audit logs; model risk assessments.

- Assumptions/dependencies: Cross-functional alignment (SRE, security, legal); evolving regulations; clear accountability.

- Multi-agent AIOps orchestration

- Description: LLM agent coordinating specialized agents (log parser, trace analyzer, metric anomaly detector) to manage complex triage/diagnosis workflows.

- Sectors: Large enterprises with heterogeneous tooling.

- Tools/workflows: Tool-augmented LLM frameworks; secure function calling; agent execution graphs.

- Assumptions/dependencies: Tool interoperability; execution safety; latency/cost optimization.

- Knowledge capture and expertise transfer at scale

- Description: Codify SME heuristics into reusable handler actions and exemplars, reducing single-point-of-failure risks and accelerating global on-call efficacy.

- Sectors: Any with distributed ops teams.

- Tools/workflows: “Expert-to-Handler” capture sessions; playbook validation pipelines.

- Assumptions/dependencies: SME time and incentives; continuous validation; cultural adoption.
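The closed-loop self-healing idea above hinges on deciding when a prediction is trustworthy enough to act on without a human. A minimal sketch of such a gate follows. Everything in it is hypothetical: the thresholds, the `SAFE_ACTIONS` catalog, the severity labels, and the assumption that a calibrated confidence score is available (the paper does not report one).

```python
from dataclasses import dataclass

SAFE_ACTIONS = {"restart_service", "scale_out"}  # hypothetical safe-action catalog


@dataclass(frozen=True)
class Prediction:
    category: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


def decide(pred: Prediction, action: str, severity: str,
           auto_threshold: float = 0.9, suggest_threshold: float = 0.5) -> str:
    """Return 'auto_execute', 'suggest', or 'defer_to_oce' for a proposed mitigation."""
    # Unknown or low-confidence causes always go to the on-call engineer.
    if pred.category == "Unseen incident" or pred.confidence < suggest_threshold:
        return "defer_to_oce"
    # Only pre-approved actions on non-critical incidents may run unattended.
    if pred.confidence >= auto_threshold and action in SAFE_ACTIONS and severity != "high":
        return "auto_execute"
    return "suggest"


print(decide(Prediction("HubPortExhaustion", 0.95), "restart_service", "medium"))
print(decide(Prediction("HubPortExhaustion", 0.95), "roll_back_deployment", "medium"))
print(decide(Prediction("Unseen incident", 0.99), "restart_service", "low"))
```

The ordering of the checks is the design point: abstention is evaluated first, so a high score on an unseen incident can never trigger an automatic action.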

Notes on Feasibility and Transferability

- Domain fit: The approach is most immediately effective where high-quality observability and well-structured alerts exist. Benefits diminish with sparse telemetry or ad-hoc incident processes.

- Human oversight: Predictions should be treated as decision support; human review remains critical, especially for new/root-cause-unknown incidents and high-severity events.

- Cost and latency management: Token usage and model latency must be budgeted; summarization and embedding retrieval help keep overhead low (the paper reports ~4.2s runtime).

- Security/compliance: Logs and diagnostics often contain sensitive data; deployments need redaction, RBAC, and compliant data pipelines.

- Taxonomy and datasets: A maintained root-cause taxonomy and labeled historical incidents are key dependencies; without them, similarity/retrieval quality degrades.

Glossary

- AuthCertIssue: An incident category indicating authentication certificate problems affecting service operations. "category: AuthCertIssue."

- Chain-of-Thoughts (CoT) prompting: A prompting technique that guides an LLM to produce intermediate reasoning steps before the final answer. "Chain-of-Thoughts (CoT) prompting is a gradient-free technique that elicits LLMs to generate intermediate reasoning steps that lead to the final answer."

- CodeRegression: An incident category denoting failures caused by regressions or bugs introduced in code changes. "CodeRegression"

- DatacenterHubOutboundProxyProbe: A diagnostic probe that checks outbound proxy connectivity for data center hubs. "The DatacenterHubOutboundProxyProbe has failed twice"

- DeliveryHang: An incident category where message delivery processes hang or stall. "the DeliveryHang incidents (No. 3)"

- DispatcherTaskCancelled: An incident category involving cancellations of dispatcher tasks that affect message processing. "the DispatcherTaskCancelled incidents (No. 10)"

- DNS resolution: The process of translating hostnames to IP addresses; failure can prevent service communication. "failed to do DNS resolution"

- Embedding model: A model that maps text into vectors in an embedding space to capture semantic similarity. "We employ FastText as our embedding model"

- Euclidean distance: A distance metric used to measure similarity between embedding vectors. "compute the Euclidean distance for every pair of incident vectors."

- FastText: An efficient word embedding model used to create vector representations of text. "We employ FastText as our embedding model"

- Few-shot learning: An LLM setting where the model learns a task from a small number of examples. "with zero-shot and few-shot learning"

- Forest (scope): A deployment or administrative scope spanning a collection of services or domains. "Forest-wide processes crashed over threshold."

- Front door server: A proxy server at the service entry point handling incoming/outgoing traffic. "connecting to the front door server."

- Hallucinated results: Fabricated or incorrect outputs generated by an LLM without grounding in data. "such models are prone to generate more hallucinated results over time."

- HubPortExhaustion: An incident category where available hub/network ports are depleted, causing failures. "category: HubPortExhaustion."

- Incident handler: An automated, alert-type–specific workflow comprising actions for data collection and response. "Each incident handler is tailored to a specific alert type."

- Large language models (LLMs): Neural models trained on massive text corpora to perform language tasks. "The recent advent and success of LLMs"

- Macro-F1: A classification metric averaging F1 scores across classes, treating each class equally. "RCACopilot achieves 0.766 and 0.533 for Micro-F1 and Macro-F1 separately"

- Micro-F1: A classification metric computing F1 globally by aggregating contributions of all classes. "RCACopilot achieves 0.766 and 0.533 for Micro-F1 and Macro-F1 separately"

- Mitigation action: A recommended step in a handler to alleviate or resolve an incident. "Mitigation action: This action refers to the strategic steps suggested to alleviate an incident"

- Nearest neighbor search: The process of retrieving past incidents most similar to a current one based on embeddings. "Nearest neighbor search."

- On-call engineers (OCEs): Engineers responsible for responding to and diagnosing incidents in real time. "Assigned on-call engineers (OCEs) inspect different aspects of the incident"

- Poison Message: A system feature for identifying and handling problematic messages that repeatedly fail processing. "This page gives a real-time, high-level view of the Poison Message feature."

- Query action: A handler action that retrieves data from sources or runs scripts to produce diagnostic outputs. "Query action: Query action can query data from different sources and output the query result as a key-value pair table."

- Root Cause Analysis (RCA): The process of identifying the underlying cause of an incident to prevent recurrence. "Root cause analysis (RCA) is pivotal in promptly and effectively addressing these incidents."

- Scope switching action: A handler action that changes the level of data collection (e.g., forest to machine) to refine diagnosis. "Scope switching action: This action facilitates precision in RCA by allowing adjustments to the data collection scope"

- SMTP: Simple Mail Transfer Protocol, used for sending email; issues can impede message delivery. "The issue seems to be related to the SMTP connection"

- Temporal distance: The time gap between incidents used to weight similarity when selecting historical examples. "it also takes into account the temporal distance between incidents"

- tiktoken tokenizer: A tokenization library used to count tokens in text inputs for LLMs. "Specifically, we employ the tiktoken tokenizer to count text tokens."

- Transport.exe: A transport service process observed in diagnostics, often involved in mail flow. "with the majority being used by the Transport.exe process."

- UDP hub ports: Network ports used for UDP traffic on hub servers; exhaustion can cause connectivity failures. "the exhaustion of UDP hub ports on a front door machine."

- WinSock error 11001: A Windows Sockets error indicating an unknown host (DNS-related failure). "A WinSock error: 11001"

- Zero-shot learning: An LLM setting where the model performs a task without seeing any labeled examples. "with zero-shot and few-shot learning"
