Exploring LLM-based Agents for Root Cause Analysis

Abstract: The growing complexity of cloud based software systems has resulted in incident management becoming an integral part of the software development lifecycle. Root cause analysis (RCA), a critical part of the incident management process, is a demanding task for on-call engineers, requiring deep domain knowledge and extensive experience with a team's specific services. Automation of RCA can result in significant savings of time, and ease the burden of incident management on on-call engineers. Recently, researchers have utilized LLMs to perform RCA, and have demonstrated promising results. However, these approaches are not able to dynamically collect additional diagnostic information such as incident related logs, metrics or databases, severely restricting their ability to diagnose root causes. In this work, we explore the use of LLM based agents for RCA to address this limitation. We present a thorough empirical evaluation of a ReAct agent equipped with retrieval tools, on an out-of-distribution dataset of production incidents collected at Microsoft. Results show that ReAct performs competitively with strong retrieval and reasoning baselines, but with highly increased factual accuracy. We then extend this evaluation by incorporating discussions associated with incident reports as additional inputs for the models, which surprisingly does not yield significant performance improvements. Lastly, we conduct a case study with a team at Microsoft to equip the ReAct agent with tools that give it access to external diagnostic services that are used by the team for manual RCA. Our results show how agents can overcome the limitations of prior work, and practical considerations for implementing such a system in practice.

- Recommending Root-Cause and mitigation steps for cloud incidents using large language models.

- METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization (Ann Arbor, Michigan, June 2005), Association for Computational Linguistics, pp. 65–72.

- Decaf: Diagnosing and triaging performance issues in large-scale cloud services. CoRR abs/1910.05339 (2019).

- The use of MMR, diversity-based reranking for reordering documents and producing summaries. In Proceedings of the 21st annual international ACM SIGIR conference on Research and development in information retrieval (New York, NY, USA, Aug. 1998), SIGIR ’98, Association for Computing Machinery, pp. 335–336.

- Chase, H. LangChain. Chen, M., Tworek, J., Jun, H., Yuan, Q., Pinto, HP d. O (2022).

- Teaching large language models to self-debug. arXiv preprint arXiv:2304. 05128 (2023).

- {{\{{Push-Button}}\}} reliability testing for {{\{{Cloud-Backed}}\}} applications with rainmaker. In 20th USENIX Symposium on Networked Systems Design and Implementation (NSDI 23) (2023), pp. 1701–1716.

- Empowering practical root cause analysis by large language models for cloud incidents.

- PAL: Program-aided language models. 10764–10799.

- A systematic review on anomaly detection for cloud computing environments. In Proceedings of the 2020 3rd Artificial Intelligence and Cloud Computing Conference (New York, NY, USA, 2021), AICCC ’20, Association for Computing Machinery, p. 83–96.

- How to mitigate the incident? an effective troubleshooting guide recommendation technique for online service systems. In Proceedings of the 28th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering (New York, NY, USA, 2020), ESEC/FSE 2020, Association for Computing Machinery, p. 1410–1420.

- BART: Denoising Sequence-to-Sequence pre-training for natural language generation, translation, and comprehension.

- Retrieval-augmented generation for knowledge-intensive nlp tasks. Adv. Neural Inf. Process. Syst. 33 (2020), 9459–9474.

- Lin, C.-Y. ROUGE: A package for automatic evaluation of summaries. In Text Summarization Branches Out (Barcelona, Spain, July 2004), Association for Computational Linguistics, pp. 74–81.

- {RESIN}: A holistic service for dealing with memory leaks in production cloud infrastructure. In 16th USENIX Symposium on Operating Systems Design and Implementation (OSDI 22) (2022), pp. 109–125.

- Correlating events with time series for incident diagnosis. In Proceedings of the 20th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (New York, NY, USA, 2014), KDD ’14, Association for Computing Machinery, p. 1583–1592.

- Diagnosing root causes of intermittent slow queries in cloud databases. Proceedings VLDB Endowment 13, 8 (Apr. 2020), 1176–1189.

- Self-Refine: Iterative refinement with Self-Feedback.

- Tangled up in BLEU: Reevaluating the evaluation of automatic machine translation evaluation metrics.

- Augmented language models: A survey.

- Learning a hierarchical monitoring system for detecting and diagnosing service issues. In Proceedings of the 21th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (New York, NY, USA, 2015), KDD ’15, Association for Computing Machinery, p. 2029–2038.

- OpenAI. GPT-4 technical report.

- BLEU: A method for automatic evaluation of machine translation. https://aclanthology.org/P02-1040.pdf, 2002. Accessed: 2023-9-27.

- Sentence-BERT: Sentence embeddings using siamese BERT-Networks.

- The probabilistic relevance framework: BM25 and beyond. Foundations and Trends® in Information Retrieval 3, 4 (2009), 333–389.

- Reassessing automatic evaluation metrics for code summarization tasks. In Proceedings of the 29th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering (New York, NY, USA, Aug. 2021), ESEC/FSE 2021, Association for Computing Machinery, pp. 1105–1116.

- Toolformer: Language models can teach themselves to use tools.

- Autotsg: Learning and synthesis for incident troubleshooting. In Proceedings of the 30th ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (New York, NY, USA, 2022), ESEC/FSE 2022, Association for Computing Machinery, p. 1477–1488.

- Shinn, N. reflexion: Reflexion: Language agents with verbal reinforcement learning.

- Reflexion: an autonomous agent with dynamic memory and self-reflection.

- ALFWorld: Aligning text and embodied environments for interactive learning.

- Anomaly detection and failure root cause analysis in (micro) service-based cloud applications: A survey. ACM Comput. Surv. 55, 3 (feb 2022).

- LLM-Planner: Few-Shot grounded planning for embodied agents with large language models.

- Automated traces-based anomaly detection and root cause analysis in cloud platforms. In 2022 IEEE International Conference on Cloud Engineering (IC2E) (2022), pp. 253–260.

- Interleaving retrieval with Chain-of-Thought reasoning for Knowledge-Intensive Multi-Step questions.

- Chain of thought prompting elicits reasoning in large language models.

- Chain-of-thought prompting elicits reasoning in large language models. 24824–24837.

- WebShop: Towards scalable real-world web interaction with grounded language agents. 20744–20757.

- ReAct: Synergizing reasoning and acting in language models.

- TraceArk: Towards actionable performance anomaly alerting for online service systems. In 2023 IEEE/ACM 45th International Conference on Software Engineering: Software Engineering in Practice (ICSE-SEIP) (May 2023), pp. 258–269.

- Bertscore: Evaluating text generation with bert. arXiv preprint arXiv (2019).

- ExpeL: LLM agents are experiential learners.

- WebArena: A realistic web environment for building autonomous agents.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

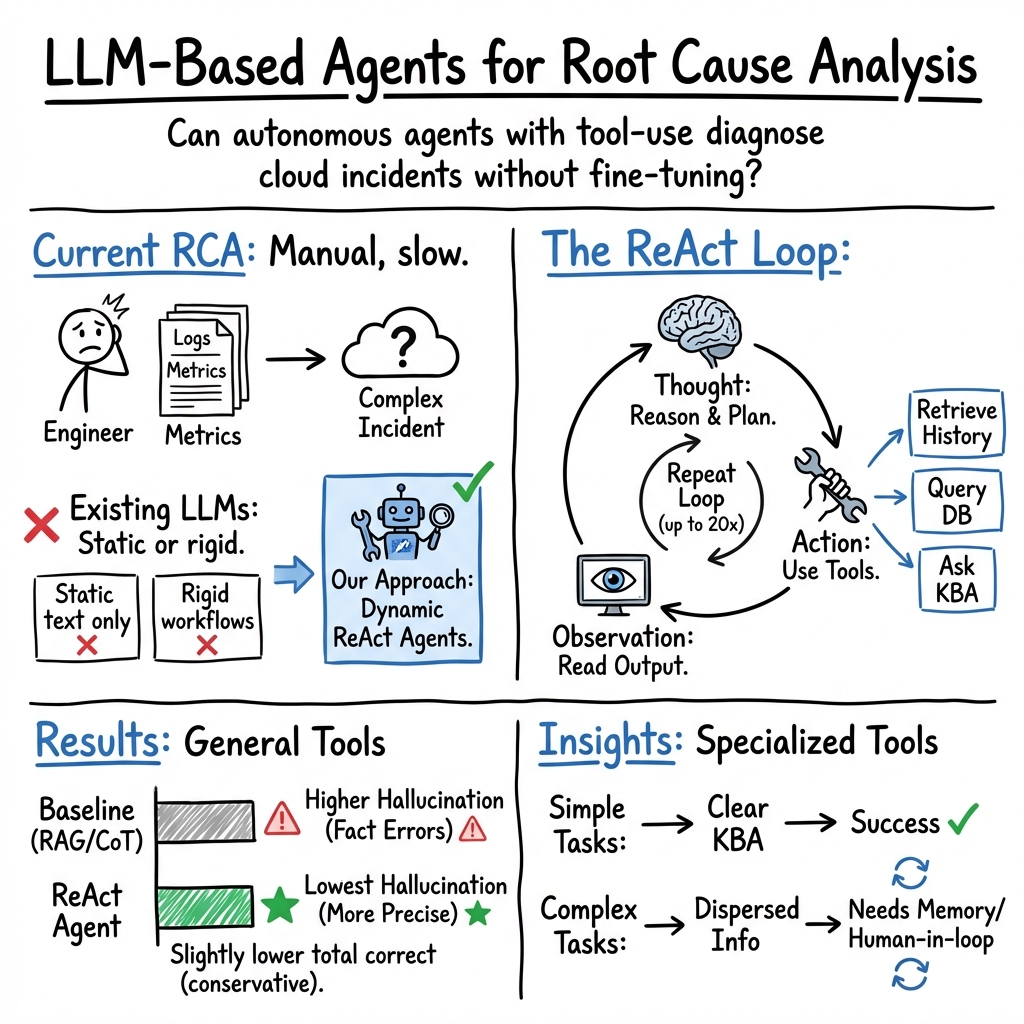

This paper looks at how to use “AI agents” powered by LLMs to help figure out why big cloud software systems break. That job is called root cause analysis (RCA). When a service like an online app has an incident (something goes wrong), engineers need to find the exact reason so they can fix it properly. The paper asks: can an LLM agent, which can think step by step and use tools, help do this faster and more accurately?

Why this is hard

Modern cloud systems are huge and complex, with many parts talking to each other. When something fails, engineers don’t just read the incident report—they also need to collect new information (like logs, metrics, or database records) and know where to look. Earlier AI approaches mostly read the report and guess a cause. They don’t actively go out to gather fresh data. This paper explores LLM agents that can plan, search, and use tools to collect what they need, like a detective gathering clues.

Key Questions

The study focuses on three simple questions:

- RQ1: Can an LLM agent do good root cause analysis using only general tools (like searching past incidents) without special access to team systems?

- RQ2: If we add “discussion comments” from past incident reports (the back-and-forth notes engineers wrote while fixing them), does that help the agent?

- RQ3: If the agent gets special team tools (like the team’s monitoring dashboards and knowledge bases), does it do better in real-world cases?

How They Did It (Methods)

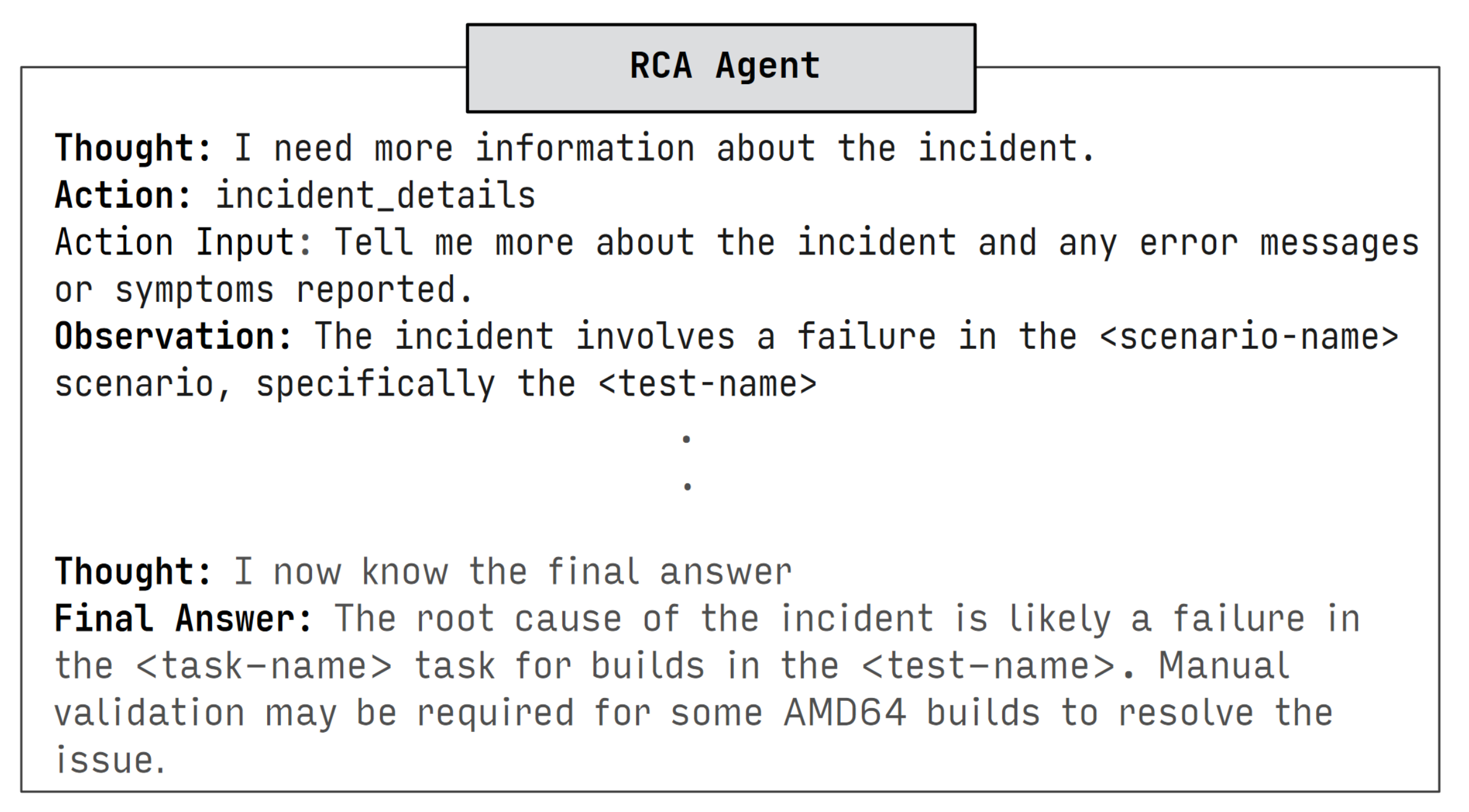

The researchers tested an LLM agent called ReAct, which does two things:

- It “thinks” step by step (planning like a person would).

- It “acts” by using tools (like searching a database of past incidents).

Here’s the approach in everyday terms:

- Data: They used thousands of real incident reports from a large company (Microsoft). Each report has a title, description, and a known root cause for evaluation. For some tests, they also included the engineers’ discussion comments.

- Agent: The ReAct agent uses GPT-4 as its “brain.” It reads the incident, plans what to do next, and uses tools (like “search past incidents” or “answer a question from the current report”). It can do up to 20 think-act steps.

- Tools (general setup):

- “Incident details” tool: lets the agent ask specific questions about the current report.

- “Historical incidents” tool: lets the agent look up similar past incidents.

- Tools (case study setup): For RQ3, they gave the agent access to a team’s monitoring and knowledge systems—like giving it a special toolbox used by that team’s engineers.

- Baselines (for comparison): They tested simpler methods:

- Retrieval baseline (RAG): just fetch the top 10 similar past incidents and let the model learn from them.

- Chain of Thought (CoT): ask the model to “think step by step” without using tools.

- IR-CoT: mix thinking steps with multiple searches during the reasoning.

- Evaluation:

- Automatic scores compare how close the model’s answer is to the true root cause (using text similarity).

- Human review looks for “hallucinations” (confident but wrong statements), reasoning errors, or good/precise answers.

What They Found (Main Results)

Here are the main takeaways, explained simply:

- With only general tools (RQ1):

- The ReAct agent was competitive with the best baselines in meaning (semantic similarity), but a bit lower in word-matching scores (lexical metrics).

- In human review, ReAct made slightly fewer “correct” predictions overall (about 35%) compared to the best baselines (about 39%).

- But ReAct had far fewer hallucinations. In other words, even when wrong, it was less likely to invent fake facts or misleading details. This is important because bad guesses can waste engineers’ time.

- Adding discussion comments (RQ2):

- Surprisingly, including engineers’ discussion notes from past incidents did not consistently improve performance. The extra text sometimes helped with context but didn’t change results much overall.

- Real-world case study with special tools (RQ3):

- When the agent was given access to the team’s actual monitoring and knowledge systems, it could collect the right diagnostic data and help more effectively—showing how agents can overcome the limits of earlier “read-only” approaches.

- The case study also revealed practical issues: connecting the agent to secure, team-specific tools is hard; prompts and tool design need care; and different teams use different systems, so setup takes effort.

Why It Matters

This work suggests that LLM agents can make RCA safer and smarter by:

- Reducing false, made-up statements, which can mislead engineers.

- Helping engineers search relevant past incidents and ask targeted questions.

- Potentially speeding up diagnosis when connected to the right tools.

However, for the biggest impact, agents need:

- Access to the team’s actual diagnostic systems (logs, metrics, dashboards).

- Good prompt design and careful engineering to avoid mistakes.

- Attention to privacy and security, since incident data is sensitive.

Simple Analogy

Think of RCA like solving a mystery:

- Earlier AI methods read the police report and guess.

- The LLM agent acts like a detective: it reads the report, thinks about what to do, searches old cases, and, when possible, checks the crime scene cameras and databases. It’s not perfect, but it’s less likely to claim something that isn’t true, and it gets better when it can use the right tools.

Conclusion and Impact

LLM-based agents show real promise for helping on-call engineers diagnose and fix cloud incidents. On general tests, they’re competitive with strong baselines and make fewer misleading claims. Giving them access to team-specific tools lets them shine in practical settings. In the future, organizations could use such agents to:

- Reduce time to resolve incidents and downtime for users.

- Lessen the burden on on-call engineers.

- Build more reliable services.

To get there, teams will need to integrate agents with their own monitoring and knowledge systems, improve prompts, and ensure data privacy.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps and open questions that future work could address to strengthen and extend the paper’s findings.

- Lack of a realistic, reproducible evaluation environment for RCA agents: no benchmark that simulates access to logs, metrics, traces, knowledge bases, and diagnostic services; develop an open, standardized RCA environment (akin to WebArena/AlfWorld) with tool APIs and ground truth trajectories.

- Restricted tool access in main experiments: agents are evaluated only with general retrieval and incident QA tools; quantify performance gains when agents can use real diagnostic tools (e.g., log query, metrics dashboards, traces, databases) beyond the small case study.

- Small-scale and non-comparative case study: the practical agent evaluation is limited to one team and a small incident set, without quantitative measures (e.g., resolution time, accuracy, escalation rate, user satisfaction); expand to multi-team, longitudinal, controlled studies.

- Inadequate ground-truth evaluation for “specific root cause”: reliance on lexical/semantic similarity (BLEU, ROUGE, METEOR, BERTScore) does not directly capture correctness or factuality of specific causes; design exact-match or structured-label evaluations with rigorous human annotation.

- Limited scope and size of human evaluation: manual coding on 100 predictions across three models is underpowered; scale up human assessment, include inter-annotator agreement, and report statistical significance across models.

- Unassessed impact of summarization pipeline: descriptions, root causes, and discussion comments are summarized with GPT-3.5 without quantitative quality checks; measure summarization fidelity and its effect on RCA performance (ablation: raw vs summarized content).

- Discussion comments integration is inconclusive: comments were added post-retrieval and heavily summarized; test indexing comments directly into the retriever, use procedural steps as few-shot exemplars, and explore retrieval over structured troubleshooting sequences.

- Zero-shot agent prompting only: few-shot, program-of-thought, function-calling, or planning-specific prompts were not explored due to difficulty; evaluate these prompting strategies and their impact on agent stability, reasoning quality, and retrieval behavior.

- Agent prompt stability issues: ReAct occasionally drifted from specified formats in long trajectories; investigate schema-enforced tool calling (function calling), constrained decoding, and parse-time validation to improve robustness.

- Stateless retrieval tool causing duplication: the agent repeatedly retrieves overlapping historical incidents; design novelty-aware, stateful retrieval that tracks prior documents, deduplicates results, and encourages query diversification.

- Unmeasured query quality and optimization: no analysis of agent-generated retrieval queries (precision, recall, diversity, term weighting); add diagnostics and training for query generation (e.g., query rewriting, pseudo-relevance feedback).

- Limited retriever diversity: only BM25 and SentenceTransformer are considered; evaluate hybrid retrieval, BM25+dense re-ranking, domain-adapted embeddings, and service-specific indexes.

- No exploration of external memory or reflection: the paper notes potential benefits but does not test reflection, self-consistency, or long-term memory for multi-step RCA; quantify their effect on accuracy and hallucination rates.

- Absence of time/cost/latency analyses: token usage, number of tool calls, loop iterations, and wall-clock time are not reported; characterize performance–cost trade-offs and define operational budgets for production use.

- Uncertainty estimation and abstention: the agent frequently outputs “Insufficient Evidence” but lacks calibrated confidence or structured escalation strategies; implement uncertainty scoring, abstain policies, and recommendations for next diagnostic steps.

- Integration with structured diagnostic data remains unexplored: real RCA requires querying tabular logs/metrics (e.g., Kusto, SQL) and parsing results; develop DSLs, parsers, and tool adapters for structured data ingestion and reasoning.

- Safety, compliance, and access control: agent access to production systems raises risks (e.g., mis-queries, data exposure); design guardrails, sandboxing, audit logging, and role-based access for safe tool usage.

- Taxonomy and standardization of root causes: ground-truth “specific root causes” are free text and heterogeneous; devise a standardized taxonomy and mapping for more reliable evaluation and cross-team generalization.

- Domain drift and temporal generalization: incidents span 2020–2021 and services evolve; assess performance across time windows, evolving services, and out-of-distribution changes; explore continual learning or periodic re-indexing.

- Limited test set size and statistical rigor: only 500 test incidents (and 100 dev) are used due to cost; perform significance testing, power analysis, and scaling experiments to ensure robust conclusions.

- No head-to-head comparison with prior RCA systems: RCACopilot and fine-tuned LLM approaches are discussed but not experimentally compared; conduct controlled evaluations to quantify trade-offs (accuracy, setup cost, maintainability).

- Generalization across teams and services: heterogeneity in diagnostic stacks and taxonomies is not addressed; evaluate agents across multiple organizations/services and identify portability requirements (tool adapters, knowledge bases).

- Effect of context window and retrieval budget: context constraints drive summarization and limit k; systematically study context size, number of retrieved incidents, and de-duplication strategies on accuracy and hallucinations.

- Multi-agent or specialist-agent architectures: a single planner may struggle with diverse diagnostic tasks; explore specialist agents (logs, metrics, config), coordinator agents, and task decomposition for complex RCA workflows.

- Use of open-source vs proprietary LLMs: only GPT-4 is evaluated; assess performance with open-source models (cost, privacy, deployability) and quantify gains from fine-tuning vs retrieval augmentation.

- Dataset availability and reproducibility: data is confidential and not shareable; propose ways to release de-identified incidents, synthetic benchmarks, or tooling-only evaluations to enable reproducible research.

- Actionability of outputs: beyond correctness, measure whether predictions are actionable (point to verifiable signals, concrete steps) and how they alter OCE workflows; define metrics for actionability and resolution effectiveness.

Glossary

- AIOps (Artificial Intelligence for IT Operations): A field that applies machine learning and AI to automate and enhance IT operations tasks like monitoring, incident response, and RCA. "To address these challenges, the field of AIOps (Artificial Intelligence for IT Operations) has proposed numerous techniques to ease incident management."

- AlfWorld: A simulated embodied environment used to evaluate agent capabilities for sequential tasks. "This is difficult to evaluate without the existence of a simulated environment such as WebArena ~\cite{Zhou2023-ds}, AlfWorld~\cite{Shridhar2020-ky} or WebShop~\cite{Yao2022-hq}."

- Augmented LLMs (ALMs): LLMs enhanced with external tools or reasoning mechanisms (e.g., retrieval, code execution) to extend their capabilities beyond pure text prediction. "A recent development in LM research has been the rise of LMs augmented with the ability to reason and use tools, or Augmented LLMs (ALMs)~\cite{Lewis2020-rj,Mialon2023-rn,Schick2023-vu}."

- BERTScore (BertS): A semantic similarity metric that compares model outputs to references using contextual embeddings from BERT-like models. "BERTScore~(BertS)~\cite{Zhang2019-ju} measures semantic similarity rather using pretrained BERT models."

- BLEU: A precision-based lexical similarity metric that measures n-gram overlap between a system output and reference text. "BLEU~\cite{Papineni2002-yq} is a precision based lexical similarity metric that computes the n-gram overlap between model predictions and ground truth references."

- BM-25: A sparse term-matching retrieval algorithm widely used in information retrieval to rank documents by relevance to a query. "We use BM-25~\cite{Robertson2009-nl} as our sparse retriever."

- Chain of Thought (CoT): A prompting technique that encourages models to generate intermediate reasoning steps to improve performance on complex problems. "Chain of Thought (CoT) Chain of Thought is one of the earlier prompting methodologies developed to enhance the reasoning abilities of LLMs\cite{Wei2022-vl}."

- Dense Retriever: A retrieval approach that encodes queries and documents into dense vector embeddings to compute semantic similarity. "Dense Retriever (ST) We use a pretrained Sentence-Bert~\cite{Reimers2019-pg} based encoder (all-mpnet-base-v2) from the associated SentenceTransformers as our dense retriever and Max Marginal Relevance (MMR)~\cite{Carbonell1998-pu} for search."

- Domain Adaptation: Techniques to adapt models to perform well on data distributions different from their training data. "Since incident data is highly confidential, and unlikely to have been observed by pretrained LLMs, fine-tuning is necessary for domain adaptation of vanilla LLMs."

- Hallucination (in LLMs): When a model outputs plausible-sounding but factually incorrect or unsupported information. "RQ1 Takeaways: ReAct\ agents perform competitively with retrieval and chain of thought baselines on semantic similarity, while under performing on lexical metrics. Manual labelling reveals that they achieve competitive correctness rates (35\% for ReAct S+Q BM25\ vs 39\% for the baselines), while providing a substantially lower rate of hallucinations (4\% for ReAct\ vs 12\% for CoT\ and 40\% for RB (k=10))."

- Interleaving Retrieval - Chain of Thought (IR-CoT): A method that alternates reasoning steps with retrieval to ground and improve multi-step reasoning. "Interleaving Retrieval - Chain of Thought (IR-CoT) Trivedi et al.\cite{Trivedi2022-pf} show that interleaving vanilla CoT prompting with retrieval improves model performance on complex, multistep reasoning tasks."

- Knowledge-intensive question answering: QA tasks that require accessing or incorporating external knowledge beyond what the model memorized during training. "More recently, LLM-based agents combine the external augmentation components with reasoning and planning abilities to allow the LLM to autonomously solve for complex tasks such as sequential decision-making problems~\cite{Shinn_undated-kf}, knowledge-intensive question answering~\cite{Trivedi2022-pf} and self debugging~\cite{Chen2023-hj}."

- Langchain: A framework for building LLM-powered applications, including agents that can use tools. "We use the Langchain\cite{Chase2022-sg} framework to implement the ReAct\ agent."

- LLMs: Scaled neural LLMs trained on vast text corpora that exhibit strong generalization and reasoning abilities. "LLMs have shown remarkable ability to work with a wide variety of data modalities, including unstructured natural language, tabular data and even images."

- Max Marginal Relevance (MMR): A retrieval strategy that balances relevance with diversity to reduce redundancy in retrieved documents. "We use a pretrained Sentence-Bert~\cite{Reimers2019-pg} based encoder (all-mpnet-base-v2) from the associated SentenceTransformers as our dense retriever and Max Marginal Relevance (MMR)~\cite{Carbonell1998-pu} for search."

- METEOR: An evaluation metric for generation that considers precision and recall with stemming and synonym matching. "METEOR \cite{Banerjee2005-cp} considers both precision and recall, and uses more sophisticated text processing and scoring systems."

- On-call engineers (OCEs): Engineers responsible for responding to and resolving production incidents during designated rotations. "On-call engineers (OCEs) require extensive experience with a team's services and deep domain knowledge to be effective at incident management."

- Out-of-distribution (OOD): Data that differs significantly from what a model saw during training, often causing performance degradation. "We present a thorough empirical evaluation of a ReAct\ agent equipped with retrieval tools, on an out-of-distribution dataset of production incidents collected at a large IT corporation."

- RCACopilot: A system that augments LLMs with predefined handlers for diagnostic data collection to assist with RCA. "Chen et al.\cite{Chen2023-js} propose RCACopilot, which expands upon this work and add retrieval augmentation and diagnostic collection tools to the LLM-based root cause analysis pipeline."

- ReAct: An LLM agent framework that interleaves reasoning (“Thought”) with tool use (“Action”) to solve complex tasks. "In this work, we present an empirical evaluation of an LLM-based agent, ReAct\ for root cause analysis for cloud incident management."

- Retrieval Augmented Generation (RAG): A technique where retrieval brings external documents into the model’s context to improve factuality and domain adaptation. "Retrieval Augmented Generation (RAG) is an effective strategy to providing domain adaptation for LLMs without additional training."

- Retrieval corpus: The collection of documents used by retrieval components to ground or inform model outputs. "We construct a retrieval corpus of historical incidents that encompasses the entire training split of our collected dataset."

- rougeL: A recall-oriented metric for text generation that measures the longest common subsequence between output and reference. "rougeL \cite{Lin2004-sf} is commonly used to evaluate summarization and is recall based."

- Sentence-Bert: A transformer model variant designed for producing sentence embeddings useful for semantic retrieval. "Dense Retriever (ST) We use a pretrained Sentence-Bert~\cite{Reimers2019-pg} based encoder (all-mpnet-base-v2) from the associated SentenceTransformers as our dense retriever..."

- Sparse Retriever: A retrieval approach based on term-frequency statistics (e.g., BM-25) rather than dense embeddings. "Sparse Retriever (BM-25) While models that perform a single retrieval step, other models such IR-CoT and the ReAct agent perform multiple retrieval steps with different queries, and can benefit from term based search~\cite{Trivedi2022-tm}."

- Toolformer: A model that learns to decide when and how to call external tools during generation. "This framework interleaves reasoning and tool usage steps, combining principles from reasoning-based approaches such as Chain of Thought~\cite{Wei2022-mi} with tool usage models like Toolformer\cite{Lewis2019-hp}."

- Troubleshooting Guides (TSGs): Curated procedural documents that capture steps and knowledge for diagnosing and resolving issues. "such as troubleshooting guides (TSGs) ~\cite{Jiang22-eg}"

- WebArena: A benchmarked web environment for evaluating agent behavior on web-based tasks. "such as WebArena ~\cite{Zhou2023-ds}, AlfWorld~\cite{Shridhar2020-ky} or WebShop~\cite{Yao2022-hq}."

- WebShop: A simulated e-commerce environment used to evaluate goal-directed agent behavior. "such as WebArena ~\cite{Zhou2023-ds}, AlfWorld~\cite{Shridhar2020-ky} or WebShop~\cite{Yao2022-hq}."

- Zero-shot prompting: Using prompts without task-specific examples to elicit model behavior on new tasks. "we use ReAct\ in a much more challenging setup with a zero-shot prompt."

- Root Cause Analysis (RCA): The process of identifying the fundamental underlying cause of an incident to enable effective remediation. "Root cause analysis (RCA), a critical part of the incident management process, is a demanding task for on-call engineers, requiring deep domain knowledge and extensive experience with a team's specific services."

Practical Applications

Immediate Applications

The following applications can be deployed with current tooling (e.g., GPT‑4 class models, LangChain, BM25/SentenceTransformers retrieval) and standard integrations into incident management systems. They leverage the paper’s findings that ReAct-style agents provide comparable correctness with substantially fewer hallucinations, and that high-quality, structured incident summaries are more valuable than raw discussion threads.

- Industry (Software/Cloud/SRE): Chat-based RCA assistant embedded in incident portals

- What: A ReAct-style agent integrated into platforms like ServiceNow, PagerDuty, Jira, or Azure DevOps that retrieves similar historical incidents and performs question answering over raw incident descriptions to propose specific root-cause hypotheses.

- Workflow/tools: LangChain ReAct planner; dual retrievers (BM25 + SentenceTransformers); “Incident Details” QA tool over raw descriptions; zero-shot CoT prompting; guardrails to allow “insufficient evidence” answers.

- Why now: Requires only access to historical incidents and incident text; no fine-tuning.

- Assumptions/dependencies: Availability of a searchable, de-duplicated incident corpus with root-cause fields; summary pipeline to control context length; secure LLM access (e.g., Azure OpenAI); governance for data privacy.

- Industry (DevOps/SRE): Factuality-first RCA copilot for on-call engineers

- What: Replace or augment naive RAG with reasoning (zero-shot CoT or ReAct) to cut hallucinated root-cause claims and encourage “insufficient evidence” responses.

- Workflow/tools: Prompt templates emphasizing evidence; explicit refusal policies; logging reasoning traces for audits.

- Assumptions/dependencies: Incident knowledge base quality; prompt governance and review; cost/latency budgets.

- Industry (AIOps platform vendors): RCA plugin that prioritizes high-quality root-cause summaries over raw discussion threads

- What: Incorporate the paper’s finding that adding discussion comments provides little benefit; invest in curated root-cause summaries, taxonomies, and deduplication instead of long threads.

- Workflow/tools: Summarization pipelines for titles/descriptions/root causes; curation dashboards; de-duplication/indexing.

- Assumptions/dependencies: Access to data owners; alignment with teams’ postmortem processes.

- Industry (Observability tooling): “Evidence-as-a-first-class-citizen” RCA UX

- What: Guide users and agents to request/attach specific diagnostic data when the agent outputs “insufficient evidence.” Reduce false positives by making evidence collection part of the flow.

- Workflow/tools: UI affordances that surface likely next steps (e.g., “Query logs for service X at time Y”); lightweight checklists driven by agent thoughts.

- Assumptions/dependencies: Minimal integration with existing dashboards; ability to link artifacts to incidents.

- Industry (SRE/Support): Retrieval QA over raw incident descriptions to prevent over-summarization errors

- What: Add a QA tool over the raw incident report so agents and users can pull precise details (e.g., stack traces, error codes) that may be lost in summaries.

- Workflow/tools: Document QA tool with chunking + semantic search; “ask the incident” feature.

- Assumptions/dependencies: Storage of original incident text; chunking strategy; context-size limits.

- Cross-sector (Telecom, SaaS support, IoT): Ticket triage assistant using historical case retrieval + ReAct reasoning

- What: Adapt the RCA assistant for support tickets to hypothesize likely causes and next data to collect.

- Workflow/tools: Historical ticket retrieval; ReAct planning with refusal/uncertainty signaling.

- Assumptions/dependencies: Labeled tickets with resolutions; privacy constraints; domain lexicons.

- Academia: More faithful evaluation protocols for RCA agents

- What: Adopt the paper’s qualitative rubric (correct/precise vs imprecise; hallucination vs insufficient evidence) and semantic metrics over lexical-only scores.

- Workflow/tools: Annotation schema; public reporting of hallucination/“insufficient evidence” rates in addition to BLEU/ROUGE/BERTScore.

- Assumptions/dependencies: Access to annotators; incident anonymization for shared studies.

- Academia/Industry: Retrieval corpora design and summarization pipelines

- What: Build pipelines to summarize long incident artifacts and root causes for RAG/agents while preserving key tokens (e.g., error codes).

- Workflow/tools: Instruction-tuned summarization prompts; chunking; evaluation harnesses.

- Assumptions/dependencies: Noisy heterogeneous inputs; budget for iterative tuning and manual checks.

- Governance/Policy (Enterprise IT): Safer deployment patterns for confidential incident data

- What: Prefer retrieval + zero-shot reasoning with on-prem or enterprise LLM endpoints over fine-tuning; log agent actions for auditability.

- Workflow/tools: Data access controls; prompt redaction; reasoning-trace storage; SOC2/ISO mappings.

- Assumptions/dependencies: Enterprise-grade LLM hosting; legal review of data processing.

- Daily Life (Power users/IT hobbyists): DIY troubleshooting assistant over product manuals and forum posts

- What: A ReAct agent that retrieves manuals/FAQs and reasons step-by-step, explicitly indicating when more information is needed.

- Workflow/tools: Web/document retrieval; step-by-step prompts; cautionary messages about uncertainty.

- Assumptions/dependencies: Variable quality sources; higher risk of hallucination without strong corpora and guardrails.

Long-Term Applications

These items require deeper integration with diagnostic systems, stronger safety/approval layers, new benchmarks/environments, or research advances in planning and tool-use.

- Industry (SRE/Cloud): Fully integrated RCA agent that autonomously queries diagnostics and correlates signals

- What: Equip agents with authenticated tools for logs (e.g., Kusto, Splunk, ELK), metrics (Prometheus, Datadog), traces (OpenTelemetry), and service inventories to collect evidence and propose specific root causes and mitigations.

- Workflow/tools: Tool adapters; credentials/SSO integration; planning and backtracking; observation-to-hypothesis loops.

- Assumptions/dependencies: Robust APIs; least-privilege IAM; rate limits; guardrails; incident-simulation environment for safe testing.

- Industry (AIOps): Auto-remediation orchestration with human-in-the-loop

- What: After proposing a root cause, the agent drafts mitigation steps (e.g., rollback, feature-flag change), collects safety checks, and requests human approval.

- Workflow/tools: Playbook engine; change management APIs; risk scoring; staged rollout controls.

- Assumptions/dependencies: Mature runbooks; strong rollback strategies; change governance.

- Enterprise-wide RCA-as-a-service with federated retrieval

- What: Cross-team agent that searches multiple teams’ historical incidents and knowledge bases while respecting data boundaries.

- Workflow/tools: Multi-tenant vector stores; federation layer; access-policy enforcement; relevance feedback.

- Assumptions/dependencies: Complex security model; taxonomy alignment; data residency.

- Standardized RCA ontologies and service knowledge graphs

- What: Shared schemas for incident symptoms, diagnostics, and root-cause categories to enhance retrieval and reasoning/planning across tools and teams.

- Workflow/tools: Ontology/graph services; embedding strategies; mapping pipelines.

- Assumptions/dependencies: Consensus across orgs; migration of legacy incident data.

- Evaluation infrastructure: Simulated RCA environments and benchmarks

- What: Build controllable testbeds (akin to WebArena/AlfWorld) that emulate diagnostic services, enabling reproducible measurement of agents’ tool-use and planning.

- Workflow/tools: Synthetic incident generators; mock log/metric stores; evaluation suites and leaderboards; safety sandboxes.

- Assumptions/dependencies: Dataset creation cost; partnerships for realistic scenarios.

- Sector adaptations

- Healthcare IT operations: RCA for EHR downtime, PACS failures, or network issues with strict PHI protections.

- Finance/Trading ops: RCA for latency spikes or data-feed anomalies with audit trails.

- Energy/Utilities: Grid/SCADA operations diagnosis with telemetry fusion.

- Manufacturing/Robotics: Downtime RCA integrating PLC logs and sensor data.

- Assumptions/dependencies: Sector-specific compliance (HIPAA, SOX, NERC CIP); on-prem LLMs; domain adapters.

- Proactive operations: From reactive RCA to early warning and preemptive mitigation

- What: Agents monitor signals, detect symptom patterns, auto-collect diagnostics, and propose root-cause hypotheses before widespread impact.

- Workflow/tools: Anomaly detection + agent planning; continuous retrieval; policy-driven alerts.

- Assumptions/dependencies: Reliable anomaly detection; noise management; alert-fatigue safeguards.

- Policy/Compliance: Standards for AI-assisted incident diagnosis and accountability

- What: Define expectations for logging reasoning traces, human oversight points, and change-approval thresholds; certification schemes for RCA agents.

- Workflow/tools: Policy templates; audit tooling; incident review workflows that include AI artifacts.

- Assumptions/dependencies: Cross-functional consensus (security, legal, SRE); regulator engagement.

- Knowledge lifecycle: Continuous improvement loops for agent prompts, tools, and corpora

- What: Post-incident pipelines that curate new incidents into the retrieval corpus, update prompt/toolkits, and correct agent behavior based on errors (e.g., hallucination incidents).

- Workflow/tools: Feedback capture; prompt/tool versioning; evaluation dashboards.

- Assumptions/dependencies: Organizational discipline; telemetry on agent performance; MLOps-like processes for agents.

Key Dependencies and Assumptions Across Applications

- Data: High-quality, labeled historical incidents with concise root-cause summaries outperform long, noisy comment threads; deduplication and taxonomy alignment are critical.

- Security/Privacy: Incident data is highly confidential—prefer enterprise LLMs or on-prem deployments, with strict redaction and access control.

- Tool Integration: APIs for logs/metrics/traces and knowledge bases; robust IAM and rate limiting; audit trails for every agent action.

- Reliability: Guardrails to allow “insufficient evidence,” reduce hallucinations, and log reasoning; clear escalation paths to humans.

- Cost/Latency: Context limits and API costs drive summarization and retrieval budgets; batched retrieval and careful prompt design are essential.

- Evaluation: Move beyond lexical metrics to semantic similarity and human-coded correctness/factuality; track hallucination and refusal rates.

Collections

Sign up for free to add this paper to one or more collections.