- The paper presents a neural variational inference framework for MJPs that leverages NeuralODEs to capture continuous-time dynamics.

- The paper utilizes dual neural networks to parameterize both generative and inference models, enabling efficient state transition estimation on irregular and noisy datasets.

- The paper demonstrates improved accuracy and interpretability in diverse applications, such as ion channels and molecular dynamics, while noting a bias towards shorter time scales.

Neural Markov Jump Processes

Introduction

The paper "Neural Markov Jump Processes" introduces a technique for performing variational inference on Markov jump processes (MJPs) using neural networks and neural ordinary differential equations (NeuralODEs). MJPs are continuous-time stochastic processes on discrete state spaces, and they are used across many scientific fields to model systems that switch between states over time. However, traditional inference methods, such as Monte Carlo and expectation-maximization, scale poorly to large datasets. This paper proposes a new approach to defining, training, and using these processes, aiming to make inference both more efficient and more accurate.

Methodology

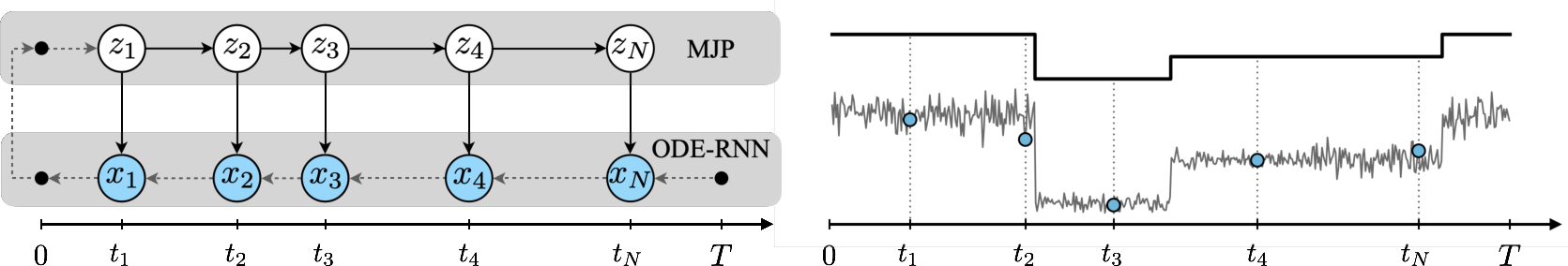

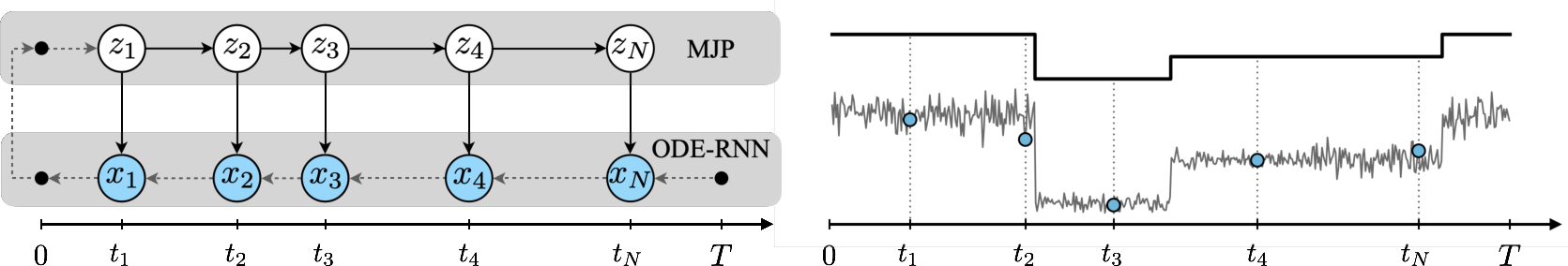

The central idea of the paper is to parameterize the MJP with neural networks: one network defines the transition dynamics of the generative process, and a second, combined with NeuralODEs, tracks the posterior over latent states in continuous time (Figure 1). Both are trained within a variational inference framework that approximates the posterior distribution over the states of the system.
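For orientation, the two ingredients of this framework can be written in generic notation. The symbols below are assumptions made for illustration and may differ from the paper's own notation: $p_t(z)$ is the probability of state $z$ at time $t$, $f(z' \mid z)$ is the rate of jumping from $z$ to $z'$, and $q$ is the approximate posterior over the latent path $z$. The state distribution of an MJP evolves according to the master equation:

$$
\frac{d p_t(z)}{dt} = \sum_{z' \neq z} \left[ f(z \mid z')\, p_t(z') - f(z' \mid z)\, p_t(z) \right]
$$

Variational inference then maximizes the standard evidence lower bound on the marginal likelihood of the observations:

$$
\log p(\mathbf{x}_1, \dots, \mathbf{x}_N) \geq \mathbb{E}_{q}\left[ \log p(\mathbf{x}_1, \dots, \mathbf{x}_N \mid z) \right] - \mathrm{KL}\left( q(z) \,\|\, p(z) \right)
$$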

The methodology involves:

- Generative Model: Defining a generative process where the initial probability distribution and transition rates are parameterized by a neural network, which predicts transitions in the state space over continuous time.

- Inference Model: Employing another neural network to model the posterior distribution over latent states, solved using the NeuralODE framework to achieve a continuous approximation of state transitions.

- Variational Inference: Maximizing a lower bound on the marginal likelihood of the observed data. With respect to the approximate posterior, maximizing this bound is equivalent to minimizing the Kullback-Leibler divergence between the approximate and true posterior distributions, which allows efficient estimation of the model parameters.

- Training Procedure: Implementing a two-step training process where the encoder-decoder parameters and prior parameters are optimized separately, allowing for effective inference and a stable convergence of rate estimates.

- Application and Data Handling: The method is evaluated on irregularly sampled and noisy data from several domains, including switching ion channels and molecular dynamics, which demonstrates its adaptability and performance.
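The generative side of the steps above can be illustrated with a minimal sketch. It is not the paper's implementation: a plain array of unconstrained parameters (`raw`, a hypothetical stand-in for the output of a neural network) is mapped to positive transition rates, and the master equation is integrated with a hand-written RK4 step rather than a NeuralODE solver. All function names are assumptions made for this example.

```python
import numpy as np

def softplus(x):
    # Numerically stable softplus; keeps off-diagonal rates strictly positive.
    return np.logaddexp(0.0, x)

def rate_matrix(raw):
    """Build a generator matrix Q from unconstrained parameters `raw` (n x n).

    Q[i, j] for i != j is the rate of jumping from state i to state j.
    Diagonal entries are set so that every row sums to zero.
    """
    Q = softplus(np.asarray(raw, dtype=float))
    np.fill_diagonal(Q, 0.0)
    Q -= np.diag(Q.sum(axis=1))
    return Q

def solve_master_equation(p0, Q, t_end, n_steps=1000):
    """Integrate dp/dt = p @ Q from 0 to t_end with classical RK4."""
    p = np.asarray(p0, dtype=float)
    h = t_end / n_steps
    for _ in range(n_steps):
        k1 = p @ Q
        k2 = (p + 0.5 * h * k1) @ Q
        k3 = (p + 0.5 * h * k2) @ Q
        k4 = (p + h * k3) @ Q
        p = p + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return p

# Hypothetical 3-state example: equal rates between all pairs of states.
Q = rate_matrix(np.zeros((3, 3)))
p_final = solve_master_equation([1.0, 0.0, 0.0], Q, t_end=20.0)
```

Because the rates in this toy example are symmetric, the state distribution relaxes towards the uniform distribution. In the method summarized above, the rates would instead come from a trained network, and the posterior version of this equation would be conditioned on the encoded observations.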

Figure 1: Neural Markov Jump Process model. Left: Graphical model and encoding-decoding procedure. The observations $\mathbf{x}_1, \dots, \mathbf{x}_N$ are encoded backwards in time with an ODE-RNN model (bottom block). The output ODE-RNN representations are used to condition the posterior master equation, which is solved forward in time (top block).

Experiments

Extensive experiments are conducted across several domains:

- Discrete Flashing Ratchet: This evaluation with synthetic data demonstrates the method's capability to infer underlying MJP parameters even with non-equidistant observation grids, maintaining accuracy across different observation types and noise levels (Figure 2).

- Switching Ion Channel Data: Applied to experimental data on ion permeability, the model shows improved predictive performance and more insightful dynamic modeling than existing variational approaches (Figure 3). Its continuous-time formulation lets it discern subtle switching dynamics more effectively.

- Lotka-Volterra and Alanine Dipeptide: The model is further validated on more complex datasets, covering population dynamics (Lotka-Volterra) and molecular dynamics (alanine dipeptide). It infers interpretable system parameters and state transitions, facilitating a deeper understanding of the underlying processes.
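To make the data setting in these experiments concrete, the sketch below generates the kind of input they describe: a hidden MJP path observed at irregular times with additive noise. It uses the standard Gillespie algorithm and is only an illustration. The two-state rate matrix, the emission means, and the noise level are made-up values, not those of any dataset in the paper.

```python
import numpy as np

def sample_mjp_path(Q, z0, t_end, rng):
    """Gillespie sampling: return jump times and the state entered at each."""
    times, states = [0.0], [z0]
    t, z = 0.0, z0
    while True:
        exit_rate = -Q[z, z]
        if exit_rate <= 0.0:           # absorbing state: no further jumps
            break
        t += rng.exponential(1.0 / exit_rate)   # waiting time in state z
        if t >= t_end:
            break
        probs = Q[z].copy()
        probs[z] = 0.0
        probs /= exit_rate              # jump probabilities to other states
        z = int(rng.choice(len(probs), p=probs))
        times.append(t)
        states.append(z)
    return np.array(times), np.array(states)

def observe(times, states, obs_times, means, noise_std, rng):
    """Read off the state at each observation time and add Gaussian noise."""
    idx = np.searchsorted(times, obs_times, side="right") - 1
    z = states[idx]
    x = means[z] + noise_std * rng.normal(size=len(obs_times))
    return z, x

rng = np.random.default_rng(0)
Q = np.array([[-1.0, 1.0],
              [2.0, -2.0]])             # hypothetical two-state generator
times, states = sample_mjp_path(Q, z0=0, t_end=10.0, rng=rng)

# Irregular observation grid: 50 time points drawn uniformly at random.
obs_times = np.sort(rng.uniform(0.0, 10.0, size=50))
z_obs, x_obs = observe(times, states, obs_times,
                       means=np.array([0.0, 1.0]), noise_std=0.1, rng=rng)
```

An inference method of the kind summarized here would receive only `obs_times` and `x_obs` and would have to recover the rates in `Q` and the hidden path.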

Figure 2: Sample Discrete Flashing Ratchet trajectory. The white region shows greedy samples from the posterior within the observation window. Gray region shows predictions of the model.

Figure 3: Sample trajectory from Switching Ion Channel dataset. The white region shows mean reconstruction values, conditioned on posterior samples within the observation window. Gray region shows predictions of the model.

Implications and Future Work

This work bridges a gap in stochastic process modeling by offering a scalable and robust alternative for MJP inference that builds on modern neural network infrastructure. Its ability to handle irregularly sampled, noisy data makes it well-suited for various scientific applications. The framework also invites future work on more complex systems, on hybrid models, and on integration with other deep learning paradigms.

While the model performs well across the experiments, the authors identify a potential bias towards shorter time scales in the inferred dynamics, suggesting an area for further refinement. Future iterations could focus on improving the training regime and exploring alternative variational objectives so that the model stays accurate across a wider range of time scales.

Conclusion

The paper presents a significant advancement in the field of continuous-time stochastic modeling, offering a neural-based variational inference method that enhances the scalability and accuracy of MJPs. By incorporating neural networks and NeuralODEs, the method achieves efficient and effective inference, making it a valuable tool for researchers handling complex temporal data in natural and social sciences. Its adaptability to various datasets and challenges opens numerous possibilities for future research and applied scenarios.